Evaluating Large and Lightweight Vision Models for Irregular Component Segmentation in E-Waste Disassembly

Precise segmentation of irregular and densely arranged components is essential for robotic disassembly and material recovery in electronic waste (e-waste) recycling. This study evaluates the impact of model architecture and scale on segmentation perf…

Authors: Xinyao Zhang, Chang Liu, Xiao Liang, Minghui Zheng, Sara Behdad

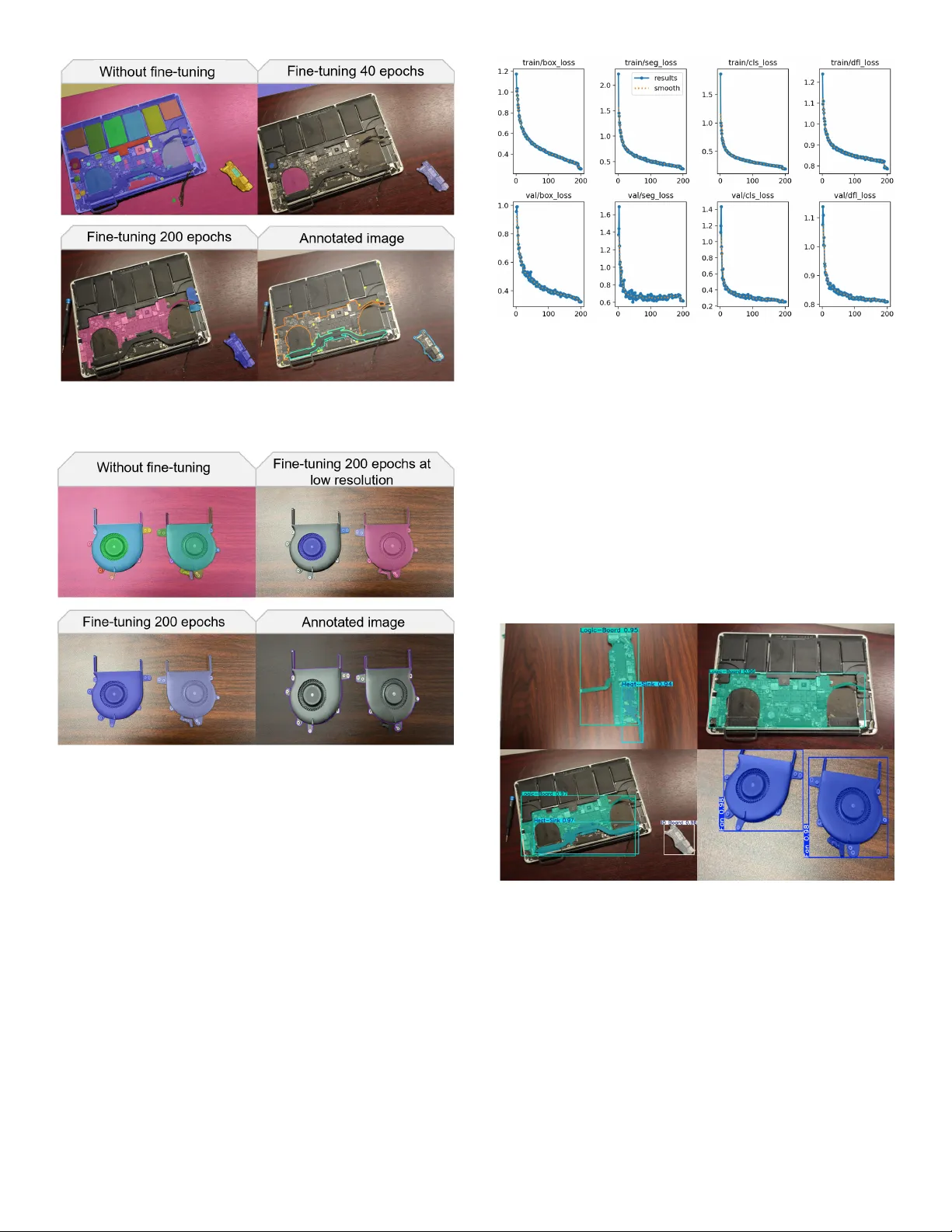

Proceedings of the ASME 2026 21st International Manufacturing Science and Engineering Conference (MSEC2026), June 14-18, 2026, State College, Pennsylvania

MSEC2026-182794

Xinyao Zhang¹, Chang Liu², Xiao Liang², Minghui Zheng², Sara Behdad³,∗

¹ Florida State University, Industrial and Manufacturing Engineering, Tallahassee, FL, USA

² Texas A&M University, Mechanical Engineering, College Station, TX, USA

³ University of Florida, Environmental Engineering Sciences, Gainesville, FL, USA

ABSTRACT

Precise segmentation of irregular and densely arranged components is essential for robotic disassembly and material recovery in electronic waste (e-waste) recycling. This study evaluates the impact of model architecture and scale on segmentation performance by comparing SAM2, a transformer-based vision model, with the lightweight YOLOv8 network. Both models were trained and tested on a newly collected dataset of 1,456 annotated RGB images of laptop components including logic boards, heat sinks, and fans, captured under varying illumination and orientation conditions. Data augmentation techniques, such as random rotation (±15°), flipping, and cropping, were applied to improve model robustness. YOLOv8 achieved higher segmentation accuracy (mAP50 = 98.8%, mAP50-95 = 85%) and stronger boundary precision than SAM2 (mAP50 = 8.4%). SAM2 demonstrated flexibility in representing diverse object structures but often produced overlapping masks and inconsistent contours. These findings show that large pre-trained models require task-specific optimization for industrial applications. The resulting dataset and benchmarking framework provide a foundation for developing scalable vision algorithms for robotic e-waste disassembly and circular manufacturing systems.

Keywords: Electronic Waste, Waste Electrical and Electronic Equipment (WEEE), Semantic Segmentation, Transformer-based Models, Benchmark, Computer Vision for Disassembly

∗ Corresponding author: sarabehdad@ufl.edu

1. INTRODUCTION

Accurate robotic disassembly of electronic waste (e-waste) requires precise identification of irregularly shaped components. Spatial misalignment compromises the ability of robots to determine the position and orientation of parts for practical manipulation. Existing object segmentation approaches struggle to detect non-convex boundaries at the pixel level. This study investigates the applicability of Meta's Segment Anything Model (SAM) for fine boundary detection using an e-waste dataset. To facilitate robust model evaluation and open-source data, the collected dataset combines complex polygonal attachments, such as circuit boards and fans, into a single disassembly layer. A total of 1,456 RGB images are processed by SAM after applying data augmentation strategies, and the boundary precision is compared against the state-of-the-art YOLOv8 (You Only Look Once) model. The YOLOv8 architecture demonstrates superior accuracy in identifying complex object contours, minimizing boundary errors, and improving part localization. The evaluation shows unique advantages of each architecture in handling object boundaries and scale variations. The study establishes a benchmark dataset that supports future work on irregular component segmentation and model optimization for robotic e-waste disassembly.

E-waste component segmentation presents technical difficulties that set it apart from conventional object recognition tasks. Components such as heat sinks and circuit boards exhibit non-convex geometries with irregular boundaries, thin protrusions, and internal cutouts.
These shapes differ from the compact objects commonly found in benchmarks such as COCO and Pascal VOC [1, 2]. Laptop assemblies also contain densely packed components with narrow inter-part gaps, which increases the risk of boundary overlap and occlusion. In addition, components of the same category vary in shape, size, and layout across manufacturers, which produces high intra-class variation. Visual complexity further intensifies the challenge. Metallic and PCB surfaces produce low-contrast appearances with reflective artifacts that reduce the performance of color-based segmentation cues. Practical constraints during disassembly impose additional limitations: components are viewed from limited and fixed angles, which restricts the diversity of training image perspectives. Although similar challenges are shared with related industrial tasks such as PCB inspection [3] and bin picking in manufacturing [4], the combination of irregular geometry, dense spatial arrangement, and cross-brand variability makes e-waste segmentation a unique benchmark problem.

Copyright © 2026 by ASME

2. LITERATURE REVIEW

2.1. Irregular Object Segmentation

As e-waste volumes rise globally, the precise visual identification of electronic components has become a significant bottleneck in automated disassembly and recycling systems. For example, laptop motherboards consist of numerous irregularly shaped components separated by narrow gaps, which complicates robotic segmentation tasks. These components often have fixed orientations, which restrict image capture angles and result in occlusions. Design variations between manufacturers also lead to inconsistencies in component shapes, sizes, and locations. A practical automated solution should detect components of varying sizes and shapes, perform pixel-level segmentation, and delineate clear boundaries even in densely packed arrangements.
Object detection uses bounding boxes to identify objects. Segmentation, by contrast, assigns a label to every pixel in an image and recovers object structure, which gives robots precise spatial awareness [5]. Few studies have addressed the segmentation of irregularly shaped e-waste components. Existing methods often lack validation on non-convex objects and fail to achieve robust pixel-level segmentation.

Recent advancements in deep learning improve segmentation and facilitate detailed feature extraction from raw image data. Convolutional neural networks (CNNs) drive progress in related computer vision tasks, and Mask Region-based Convolutional Neural Networks (Mask R-CNNs) have become a helpful tool for instance segmentation [6]. Chen et al. optimized CNNs to capture dependency features between images and evaluated their applicability to circuit board analysis [3]. Aarthi et al. applied Mask R-CNNs to detect waste objects and guide a robot for grasping [7]. The Mask R-CNN structure was also evaluated to detect metal objects and determine their orientations for dual-arm robot grasping [8]. Jahanian et al. [9] proposed a modified Mask R-CNN model for multi-task instance segmentation, which detected the boundaries of electric components on PCBs. Despite these advancements, CNN-based approaches struggle in unstructured environments with densely packed components, where fine details and overlapping structures demand finer feature extraction.

The YOLO framework has gained attention for fine-grained object detection tasks. It is a single-stage CNN architecture that reformulates object detection as a regression problem. Two-stage CNN methods such as Mask R-CNN first generate region proposals and then classify them. YOLO instead predicts bounding boxes and class probabilities simultaneously in a single forward pass.
This design reduces inference time and makes YOLO suitable for real-time applications. Developments in YOLOv5 and YOLOv8 improved small-object detection in multi-camera vision systems [10]. For example, a YOLOv5 model was designed for image segmentation in scenarios involving single circular boards [11]. Also, Zhang et al. demonstrated its industrial applicability by optimizing YOLOv4 to detect screws in various laptop brands [12]. However, since YOLO focuses on object detection, hybrid approaches that combine detection with fine-grained segmentation are necessary to achieve precise boundary delineation [4].

More recently, Transformer-based architectures have emerged as promising solutions for segmentation tasks. The SEgmentation TRansformer (SETR) replaced traditional CNNs with a Transformer encoder to achieve pixel-wise segmentation [13]. SegLater introduced a lightweight design, making Transformer-based segmentation more practical for real-world applications [14]. In waste recycling, Dong et al. applied a Transformer model to classify construction waste components in complex on-site environments [15]. Despite strong global feature representation performance, the high computational cost of Transformers limits their real-time applicability in robotic systems.

Despite significant progress in segmentation research, robust pixel-level methods for irregular and densely arranged components remain limited. This study aims to address this gap by evaluating and comparing advanced vision architectures for accurate component segmentation in robotic e-waste disassembly.

2.2. E-waste Dataset

Given the importance of datasets in the development of smart e-waste recycling systems, previous studies have collected datasets suitable for object detection and computer vision tasks in this domain. However, most of them focus on detecting e-waste in solid waste streams. Iliev et al.
introduced an e-waste dataset consisting of 19,613 images for 77 different electronic device classes [16]. This publicly available dataset was assembled by combining 91 open-source datasets from the Roboflow Universe, supplemented by manually annotated images from Wikimedia Commons. Its primary purpose was to support e-waste detection. Similarly, Voskergian and Ishaq collected around 4,000 images of four electronic object types from the Open Images Dataset V7 and evaluated YOLO object detection models on this dataset [17]. Zhou et al. developed a dataset with 660 images specifically for screw hole detection on laptops, using a combination of YOLO and Faster R-CNN architectures [10]. TrashNet [18] contains seven categories, including plastic, glass, metal, etc. It provides 2,527 images for training waste sorting and management models [19]. Similarly, Ekundayo et al. [20] proposed the WasteNet dataset, which is a combination of multiple waste datasets with a total of 33,520 images.

Madhav et al. proposed a dataset containing 8,000 images for eight consumer electronic device classes, aimed at classification tasks; however, the dataset is not publicly available [21]. Selvakanmani et al. utilized two public datasets from Kaggle, namely the Starter E-Waste Dataset and the Compressed E-Waste Dataset, which contain 613 and 806 images, respectively. They evaluated deep learning models for classification tasks on these datasets [22]. Liu et al. proposed an automated disassembly system and a customized phone dataset, which contains over 2,300 images of different phone layer components [23]. Baker et al. collected 650 images representing 12 smartphone classes, which were used for waste sorting experiments [24]. Islam et al. presented 1,053 images of eight different electronic devices and implemented Transformer methods for e-waste classification [25]. Rupok et al.
introduced a dataset containing 1,004 images categorized into four classes, though this dataset is not publicly available [26]. Zhou et al. evaluated a dataset with 52,800 multi-view images for 22 classes from a recycling facility and applied a graph transformer model for e-waste classification [27]. Quang et al. collected disassembled images of 10 different cellphones, containing 11 component classes [28]. In total, they annotated 533 images, which were used for instance segmentation and detection tasks. Also, Wu et al. evaluated a dataset with 513 color and depth images of laptops for defect detection and segmentation [29].

2.3. SAM Segmentation

The Segment Anything Model represents a shift toward foundation models for image segmentation [30]. SAM was trained on the SA-1B dataset, which contains over 11 million images and 1 billion masks. The model uses a promptable design that accepts points, boxes, or text as input and produces class-agnostic segmentation masks. SAM demonstrates strong zero-shot generalization across diverse visual domains, including medical imaging [31] and remote sensing [32]. However, SAM faces challenges when applied to specialized industrial datasets without domain-specific fine-tuning. Objects with low contrast, overlapping boundaries, or unusual shapes can degrade its segmentation quality.

SAM2 extends the original SAM to support video segmentation [33]. SAM2 introduces a streaming architecture with a memory attention module that tracks objects over frames. The model was trained on the SA-V dataset, which adds video annotations to the image-based SA-1B data. SAM processes each image independently, whereas SAM2 maintains a memory bank that stores features from previous frames to improve temporal consistency.
When applied to static images, SAM2 retains the encoder-decoder structure of SAM but benefits from a refined training process and an improved mask decoder. In this study, we evaluate SAM2 on static e-waste images to assess whether these architectural updates lead to higher segmentation accuracy for irregular components.

3. METHODOLOGY

3.1. SAM2 Architecture

E-waste disassembly involves handling a diverse array of components, from tiny screws to large circuit boards. These objects often appear in layered configurations, which require fine-grained segmentation of complex shapes and overlapping parts. To facilitate this, we aim to compare the performance of a large vision model with a lightweight state-of-the-art YOLO model. The proposed SAM2 architecture, shown in Figure 1, builds upon the original SAM to better accommodate the complexities of e-waste disassembly. The original SAM model has demonstrated considerable success in fields ranging from autonomous driving to medical imaging; however, it faces challenges when segmenting irregularly shaped and diminutive components. SAM2 addresses these issues by introducing a hierarchical encoder-decoder framework and a generative learning component, both of which facilitate more precise segmentation of e-waste objects that vary greatly in size, shape, and texture.

This hierarchical design addresses the diversity of e-waste disassembly scenarios. At lower encoder levels, the model extracts fine spatial details such as edges and corners. These features are important for delineating small components, including IO boards and connector clips, which occupy only a small portion of the image. At higher levels, the encoder captures broader contextual information, such as the spatial relationships between adjacent parts.
These relational features help distinguish overlapping components in dense assemblies, for example, when a heat sink partially covers a logic board. The decoder combines these multi-scale representations through progressive upsampling. This fusion helps the model handle the large size variation present in e-waste images, where logic boards may span half the image and small connectors cover fewer than 100 pixels.

The goal of SAM2 is to capture hierarchical structures more precisely than traditional dense classification models. SAM2 extends the large-scale pretraining of SAM by using additional hierarchical annotations and a generative decoding strategy, so that it can segment irregular objects while preserving high-level contextual understanding.

Let $x \in \mathbb{R}^{H \times W \times 3}$ denote an input image. SAM2 encodes $x$ at multiple scales to capture both fine-grained details and coarse contextual cues. Formally, we define:

$$E(x) = \{E_1(x), E_2(x), \ldots, E_n(x)\}, \tag{1}$$

where $E_i(x)$ represents the features extracted at the $i$-th level of the encoder. Lower levels ($i$ small) capture high-resolution details, while higher levels ($i$ large) encode more global information. This hierarchical approach is useful for e-waste scenarios, where small objects (e.g., screws) and larger assemblies (e.g., motherboards) coexist in the same scene.

The decoder reconstructs the image representation and produces segmentation masks by combining features from multiple scales:

$$D(E(x)) = \sum_{i=1}^{n} D_i\big(E_i(x)\big). \tag{2}$$

Each decoder block $D_i(\cdot)$ performs upsampling and feature reconstruction from its paired encoder layer. The design retains critical local structures, including edges and corners, and incorporates contextual cues from higher-level representations.
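
The multi-scale pattern in Eqs. (1) and (2) can be illustrated with a toy sketch. It is not SAM2's implementation: it assumes a single-channel image stored as nested lists, uses average pooling as a stand-in for each encoder level $E_i$, uses nearest-neighbour upsampling as a stand-in for each decoder block $D_i$, and assumes the per-level decoder outputs in Eq. (2) are combined by summation. The function names (`avg_pool`, `upsample`, `encode`, `decode`) are hypothetical.

```python
from typing import List

Grid = List[List[float]]  # single-channel image or feature map


def avg_pool(x: Grid, k: int) -> Grid:
    """Downsample by averaging non-overlapping k x k blocks (H and W divisible by k)."""
    h, w = len(x), len(x[0])
    return [
        [sum(x[r + dr][c + dc] for dr in range(k) for dc in range(k)) / (k * k)
         for c in range(0, w, k)]
        for r in range(0, h, k)
    ]


def upsample(x: Grid, k: int) -> Grid:
    """Nearest-neighbour upsampling by an integer factor k."""
    return [[x[r // k][c // k] for c in range(len(x[0]) * k)]
            for r in range(len(x) * k)]


def encode(x: Grid, n_levels: int) -> List[Grid]:
    """Toy E(x) = {E_1(x), ..., E_n(x)}: level i pools the input by 2**(i-1)."""
    return [avg_pool(x, 2 ** i) for i in range(n_levels)]


def decode(features: List[Grid]) -> Grid:
    """Toy D(E(x)): upsample every level to full resolution and sum them."""
    h, w = len(features[0]), len(features[0][0])
    out = [[0.0] * w for _ in range(h)]
    for i, feat in enumerate(features):
        up = upsample(feat, 2 ** i)
        for r in range(h):
            for c in range(w):
                out[r][c] += up[r][c]
    return out


if __name__ == "__main__":
    image = [[0.0] * 4 for _ in range(4)]
    image[0][0] = 16.0                    # one bright "small component" pixel
    fused = decode(encode(image, 3))
    print(fused[0][0], fused[0][1], fused[3][3])  # 21.0 5.0 1.0
```

In the demo, the bright pixel keeps its sharp value from the finest level, while its neighbours and the far corner only receive the coarser, context-like contributions. This is the intuition behind mixing fine and coarse levels.
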

The mask prediction module applies a nonlinear transformation to the decoder output to generate a semantic segmentation mask:

$$M(x) = \sigma\big(D(E(x))\big), \tag{3}$$

where $\sigma(\cdot)$ denotes the sigmoid activation function. This step produces a pixel-wise probability map that indicates the likelihood of each pixel belonging to a specific object class. By learning to segment a wide variety of e-waste components, SAM2 can handle complex boundaries and overlapping parts that often arise in disassembly tasks.

SAM2 incorporates a generative learning paradigm to further refine its segmentation outputs. In scenarios with incomplete or ambiguous annotations, the model uses learned latent representations to infer plausible object shapes and boundaries. This capability is particularly advantageous for e-waste disassembly, where items may be partially occluded or exhibit non-uniform geometries. SAM2 synthesizes high-quality segmentations from dense embeddings and extends large-scale pretraining toward domain-specific e-waste segmentation tasks.

FIGURE 1: SAM2 ARCHITECTURE.

Through its hierarchical encoder-decoder design and generative learning mechanism, SAM2 adjusts to diverse e-waste scenarios. It maintains robust performance on both large, complex assemblies and small, irregular components. Empirical results indicate that SAM2's approach to multi-scale feature extraction and generative inference improves segmentation quality, which makes it a promising solution for automated disassembly systems in industrial settings.

3.2. YOLOv8 Architecture

YOLOv8 provides a unified architecture for segmentation that incorporates feature fusion, anchor-free detection, and multi-scale feature learning. The structure of YOLOv8 can be found in Figure 2.
The framework consists of three principal components: a CSPDarknet53 backbone augmented with a C2f module, an anchor-free decoupled segmentation head, and a hybrid loss function design.

The backbone extracts essential features using a 53-layer deep convolutional neural network with a cross-stage partial network (CSP) architecture. The CSP uses residual connections to the backbone and direct connections to the segmentation head. Another significant improvement in YOLOv8 is the use of a C2f module (cross-stage partial bottleneck with two convolutions) instead of a traditional C3 convolutional module, which enhances accuracy and reduces inference time. The C2f module employs split-and-merge operations to aggregate multi-scale features and improve the capture of fine-grained spatial patterns for irregular shapes. YOLOv8 also replaces the 6x6 convolutional layer in the YOLOv5 backbone with a smaller 3x3 convolutional layer. By integrating shallow-layer features with deeper representations, the C2f module facilitates the recognition of fragmented objects, which are commonly encountered in e-waste collection.

The anchor-free strategy employed by YOLOv8 facilitates the direct regression of bounding box centers and sizes. Instead of relying on predefined anchor boxes, the model directly predicts bounding box coordinates and adjusts to heterogeneous shapes and scales. A decoupled task-specific head segregates detection confidence, classification, and localization into parallel processing streams. This separation minimizes cross-task interference in cluttered scenes where overlapping or fragmented objects are prevalent.

For segmentation, a dual-resolution fusion strategy is employed: high-resolution features from the backbone preserve fine structural details, while upsampled semantic features from the C2f module provide contextual awareness.
These complementary data streams are combined within a lightweight mask head, which synthesizes pixel-accurate boundaries through hierarchical feature aggregation. The result is robust contour delineation, even for objects with discontinuous edges or reflective surfaces in the e-waste dataset.

Segmentation performance is optimized using a hybrid loss function defined as:

$$L_{\text{total}} = \lambda_{\text{CIoU}} L_{\text{CIoU}} + \lambda_{\text{DFL}} L_{\text{DFL}} + \lambda_{\text{BCE}} L_{\text{BCE}}, \tag{4}$$

where $L_{\text{CIoU}}$ (complete intersection over union loss) controls bounding box localization by accounting for overlap, aspect ratio, and center distance between predicted and ground-truth regions. $L_{\text{DFL}}$ (distribution focal loss) sharpens probabilistic predictions by penalizing uncertain classifications in ambiguous regions. $L_{\text{BCE}}$ (binary cross-entropy loss) refines pixel-wise mask boundaries by minimizing errors between predicted and true segmentation maps. The weighting coefficients ($\lambda_{\text{CIoU}}$, $\lambda_{\text{DFL}}$, $\lambda_{\text{BCE}}$) determine the contribution of each term during training. This hybrid formulation addresses challenges in e-waste segmentation, such as irregular shapes, occlusions, and fragmented edges.

3.3. Comparison of Model Approaches

SAM2 and YOLOv8 differ in their design objectives and segmentation strategies. SAM2 is a foundation model trained on large-scale and diverse data to produce class-agnostic masks. It generates multiple candidate segmentation masks per image and relies on external prompts or post-processing to associate masks with specific classes. This design provides flexibility in representing diverse object structures but may produce redundant or overlapping masks when applied to domain-specific tasks without further optimization.

YOLOv8 is a task-specific architecture that performs detection and segmentation in a single forward pass. It is trained end-to-end on a target dataset and predicts class-labeled masks.
The anchor-free detection head and feature fusion module help the model learn domain-specific shape patterns, which benefits the segmentation of irregular components in e-waste images. Table 1 summarizes the main differences between the two models.

FIGURE 2: YOLOV8 ARCHITECTURE. (Block diagram of the backbone and head, built from Input, Conv, C2f, SPPF, Concat, Upsample, and Detect blocks, with insets detailing the C2f, SPPF, Bottleneck, Conv, and Detect blocks.)

TABLE 1: COMPARISON OF SAM2 AND YOLOV8 FOR E-WASTE SEGMENTATION.

| Aspect | SAM2 | YOLOv8 |
|---|---|---|
| Design objective | General-purpose and class-agnostic segmentation | Task-specific detection and segmentation |
| Training strategy | Pre-trained on SA-V dataset, and fine-tuned on target data | Trained from scratch on target dataset |
| Mask generation | Multiple candidate masks per image | One mask per detected object |
| Class assignment | Requires post-processing or prompting | Directly predicts class labels |
| Irregular shapes | Uses learned shape priors from diverse pre-training | Learns domain-specific features |
| Inference speed | Higher computational cost | Lightweight and real-time capable |

4. DATASET

Data were collected during the manual disassembly of end-of-use laptops. After removing the back case, operators disassembled components layer by layer.
Images were captured from multiple angles before and after disassembly, each depicting irregularly shaped e-waste components. Depending on the laptop's condition, images contain either single or multiple components within a layer.

FIGURE 3: MANUAL DISASSEMBLY OF E-WASTE DATASET.

The dataset comprises 560 raw images from 3 Apple MacBook models, cleaned and annotated to include 4 e-waste categories: logic boards, heat sinks, IO boards, and fans. Component counts and distributions are shown in Figure 3. The dataset reflects real-world complexity, with objects ranging from large logic boards to small IO boards. A total of 1,717 segmentation masks were annotated by two experts for precise boundary delineation.

For model training, we apply a set of data augmentation strategies that include horizontal and vertical flips, random cropping (0% minimum zoom and 14% maximum zoom), and rotations ranging from -15° to +15° without reducing image resolution. Each training image generated three variants, expanding the training set from 448 to 1,344 images. This augmentation enriches the variety of object appearances and strengthens a model's learning ability. The validation and testing sets each contain 56 images, which brings the augmented dataset to 1,456 images in total. The dataset provides a benchmark for evaluating segmentation algorithms.

5. RESULTS

5.1. SAM Segmentation Results

The results illustrate SAM2 performance on the dataset described above. Experiments compare models without fine-tuning and those fine-tuned at 40, 100, and 200 epochs. Trials also assess low-resolution versus high-resolution images. The evaluations show the influence of fine-tuning and image quality on segmentation accuracy for complex e-waste components.

For fine-tuning, we froze the image encoder of SAM2 and updated only the mask decoder. This strategy reduces computational cost and concentrates gradient updates on domain-specific segmentation behavior.
We used the AdamW optimizer with a weight decay of 0.01 and a batch size of 4. We saved model checkpoints at 40, 100, and 200 epochs to examine how segmentation quality evolves with training duration. The loss function combined binary cross-entropy and dice loss to balance pixel-level accuracy and region-level overlap. We also compared fine-tuning with low-resolution and high-resolution images to evaluate the effect of input quality on segmentation performance.

The model processes the entire image and assigns a segmentation label to every pixel. The pretrained SAM model uses a Transformer-based encoder-decoder architecture. This design generates dense segmentation masks in the image. The architecture has over 80 million parameters and is trained on large-scale datasets, from which it learns a broad set of visual features and object boundaries. The segmentation map covers every pixel by associating learned representations with spatial regions. The model generalizes from extensive pretraining, where it encountered diverse object shapes, textures, and contexts. Even without task-specific adaptation, the SAM model produces segmentation outputs for the entire input image.

In Figure 4, the model shows improvement after 40 epochs of fine-tuning: it removes large background regions. At 100 epochs, the model delineates logic board outlines with precision and separates the foreground from the background. The progression demonstrates the benefit of extended fine-tuning in distinguishing target components from background features.

Figures 5 and 6 present performance for single-object and multi-object segmentation, respectively. In Figure 5, the model without fine-tuning assigns random segmentation labels. At 40 epochs, the attached logic board remains undetected. At 200 epochs, the segmentation captures the primary object with defined boundaries.
This result suggests that 200 epochs may suffice for single-object segmentation tasks.

On the other hand, Figure 6 shows that at 200 epochs, the model detects two objects but fails to detect the heat sink. For multi-object segmentation, training for 200 epochs does not capture all pre-labeled components. The results suggest that further fine-tuning or architecture adjustment may be required for scenarios with dense objects or varied visual characteristics.

FIGURE 4: SAM2 INSTANCE SEGMENTATION FOR LOGIC BOARD.

FIGURE 5: SAM2 INSTANCE SEGMENTATION FOR LOGIC BOARD ATTACHED AT A LAPTOP.

Figure 7 illustrates the influence of image resolution on segmentation performance. Without fine-tuning, the model produces segmentation masks for both targets and background. Fine-tuning with low-resolution images improves segmentation but still lacks task-specific capture of the target components. Fine-tuning with high-resolution images yields coherent boundaries and improved object separation. The observation underscores the importance of high-quality data for e-waste disassembly tasks that include small and intricate components.

FIGURE 6: SAM2 INSTANCE SEGMENTATION FOR LOGIC BOARD, HEAT SINK AND IO BOARD.

FIGURE 7: SAM2 INSTANCE SEGMENTATION FOR DOUBLE-FAN. ALL IMAGES USE HIGH-RESOLUTION INPUT BY DEFAULT UNLESS OTHERWISE NOTED.

5.2. YOLOv8 Segmentation Results

The results plot (in Figure 8) shows training and validation losses for box regression, segmentation, classification, and distribution focal loss. With increasing epochs, both training and validation losses decrease steadily, which indicates that the model is not overfitting.

Box loss governs the accuracy of predicted bounding box coordinates. Its decreasing trend reflects improved spatial localization of e-waste components. Segmentation loss measures mask boundaries and pixel-level accuracy.
Lower values correspond to more precise delineation of irregular shapes. Classification loss distinguishes foreground from background and reduces false positives. Distribution focal loss sharpens prediction probabilities in ambiguous regions and results in reliable object center predictions. The four loss curves start at different values and converge to different endpoints. In particular, segmentation loss is slightly above the other losses and reflects the challenge of capturing fine-grained boundaries for irregular shapes.

FIGURE 8: YOLOV8 TRAINING AND VALIDATION LOSS.

The YOLOv8 segmentation output in Figure 9 includes instance segmentation with bounding boxes and predicted classes for four e-waste components. The confidence score reflects the model's certainty that each detected object belongs to its assigned class. In YOLOv8, confidence scores derive from two components: the objectness score and the class probability. The objectness score represents the probability that a bounding box contains any object, while the class probability indicates the likelihood that the detected object belongs to a specific class. The final confidence score is calculated as the product of these two values:

$$\text{Confidence} = \text{objectness score} \times \text{class probability} \tag{5}$$

FIGURE 9: YOLOV8 INSTANCE SEGMENTATION FOR FOUR COMPONENTS.

5.3. Segmentation Model Performance Comparison

To compare the models, this study adopts evaluation metrics from the standard COCO segmentation framework [1]. mAP50 calculates average precision at an intersection over union (IoU) threshold of 0.5, while mAP50-95 averages the precision over IoU thresholds from 0.5 to 0.95 in steps of 0.05. mAP50 is adequate for tasks that allow minor inaccuracies, whereas mAP50-95 is critical for scenarios requiring precise object delineation.
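
To make the two metrics concrete, the following is a simplified sketch of how mask IoU and average precision could be computed for a single class in a single image. It is an illustration under stated assumptions, not the COCO reference implementation: masks are represented as sets of pixel coordinates, predictions are matched greedily in descending confidence order, and AP is taken as the area under the precision envelope (COCO itself uses 101-point interpolation and averages over classes and images). The function names `mask_iou`, `average_precision`, and `map_50_95` are hypothetical.

```python
from typing import List, Set, Tuple

Mask = Set[Tuple[int, int]]  # set of (row, col) pixels belonging to one instance


def mask_iou(a: Mask, b: Mask) -> float:
    """Intersection over union of two pixel masks."""
    union = len(a | b)
    return len(a & b) / union if union else 0.0


def average_precision(preds: List[Tuple[float, Mask]], gts: List[Mask], thr: float) -> float:
    """AP for one class at one IoU threshold; preds are (confidence, mask) pairs."""
    if not gts:
        return 0.0
    matched: Set[int] = set()
    hits: List[bool] = []
    for _, mask in sorted(preds, key=lambda p: -p[0]):
        # Find the best still-unmatched ground-truth mask for this prediction.
        best_iou, best_j = 0.0, -1
        for j, gt in enumerate(gts):
            if j in matched:
                continue
            iou = mask_iou(mask, gt)
            if iou > best_iou:
                best_iou, best_j = iou, j
        if best_j >= 0 and best_iou >= thr:
            matched.add(best_j)
            hits.append(True)    # true positive
        else:
            hits.append(False)   # false positive (redundant or misplaced mask)
    # Recall and precision after each ranked prediction.
    tp, points = 0, []
    for k, hit in enumerate(hits, start=1):
        tp += hit
        points.append((tp / len(gts), tp / k))
    # Area under the monotone precision envelope.
    ap, prev_recall = 0.0, 0.0
    for i, (recall, _) in enumerate(points):
        ap += (recall - prev_recall) * max(p for _, p in points[i:])
        prev_recall = recall
    return ap


def map_50_95(preds: List[Tuple[float, Mask]], gts: List[Mask]) -> float:
    """Mean AP over IoU thresholds 0.50, 0.55, ..., 0.95."""
    thresholds = [(50 + 5 * i) / 100 for i in range(10)]
    return sum(average_precision(preds, gts, t) for t in thresholds) / len(thresholds)


if __name__ == "__main__":
    gt = {(0, c) for c in range(9)}
    good = {(0, c) for c in range(7)}   # IoU with gt = 7/9
    junk = {(5, c) for c in range(4)}   # no overlap with gt
    print(average_precision([(0.9, good), (0.4, junk)], [gt], 0.5))  # 1.0
    print(average_precision([(0.9, junk), (0.4, good)], [gt], 0.5))  # 0.5
```

The demo shows two effects. A mask with IoU 7/9 counts as correct at a threshold of 0.5 but not at 0.8, so stricter thresholds penalize loose boundaries. A spurious mask ranked above the correct one halves AP even though the correct mask is present.
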
TABLE 2: SEGMENTATION RESULTS OF TWO MODELS

| Model | mAP50 | mAP50–95 |
|---|---|---|
| SAM2 | 8.4% | 9% |
| YOLOv8 | 98.8% | 85% |

Table 2 presents segmentation performance for SAM2 and YOLOv8. YOLOv8 achieves an mAP50 of 98.8% and an mAP50–95 of 85%, whereas SAM2 achieves an mAP50 of 8.4% and an mAP50–95 of 9%. The large gap in quantitative scores does not indicate that SAM2 fails to segment e-waste components. As shown in Figures 4 to 7, SAM2 produces meaningful segmentation after fine-tuning, including defined logic board contours in Figure 4, primary object capture in single-object scenes in Figure 5, and improved object separation with high-resolution input in Figure 7.

The low mAP scores instead reflect a mismatch between SAM2's class-agnostic mask generation strategy and the COCO evaluation protocol, which requires correct class-mask association. SAM2 generates multiple candidate masks per image without assigning class labels. Many of these masks are counted as false positives even when they correspond to visually meaningful regions. SAM2 also produces overlapping masks for adjacent components in the dense scenes of Figure 6, which further increases false positive counts. In contrast, YOLOv8 directly predicts class-specific masks and applies non-maximum suppression, which eliminates redundant detections.

The similar mAP50 and mAP50–95 values for SAM2 indicate that its performance is consistently low across all IoU thresholds. This suggests that SAM2's primary limitation is not boundary precision but rather the lack of class-specific mask assignment. If boundary quality were the main issue, a larger drop from mAP50 to mAP50–95 would be expected, as observed with YOLOv8 (98.8% to 85%).

The choice of mAP50 and mAP50–95 follows the standard COCO segmentation framework [1], which supports comparison with published results.
However, these metrics are designed for class-aware evaluation and may not fully reflect the quality of a class-agnostic model such as SAM2. We also note that SAM2 and YOLOv8 serve different purposes: SAM2 is a general-purpose foundation model, whereas YOLOv8 is task-specific. The purpose of this comparison is to assess their practical suitability for e-waste segmentation. The results suggest that task-specific models achieve higher scores under standard protocols. Foundation models require additional post-processing or prompt engineering to translate their segmentation capability into class-aware predictions.

The test set contains 56 images from three Apple MacBook models. The limited device diversity and consistent component layout within MacBook models may contribute to YOLOv8's high mAP50 score. We consider the current results a strong baseline for the proposed dataset. Future work will extend the evaluation to additional laptop brands and device categories to assess cross-domain generalization.

6. CONCLUSION

In this study, we evaluated two state-of-the-art segmentation algorithms, SAM2 and YOLOv8, using a newly developed dataset of annotated laptop components from e-waste disassembly. The dataset captures real-world complexity through irregular geometries, overlapping parts, and dense spatial arrangements. It could serve as a benchmark for assessing segmentation performance in industrial recycling environments.

YOLOv8 achieved higher segmentation accuracy (mAP50 = 98.8%, mAP50–95 = 85%) and delivered stable, real-time performance due to its lightweight architecture and feature fusion mechanism. In contrast, SAM2, a transformer-based model with generative and hierarchical encoding, exhibited flexibility in handling diverse object structures but struggled with precise boundary delineation and produced redundant masks in densely packed scenes.
These results show that large, pre-trained models require targeted fine-tuning and task-specific optimization to achieve pixel-level precision in robotic disassembly tasks.

This study establishes a dataset and benchmarking framework to guide future segmentation research in circular manufacturing systems. Limitations include the dataset's focus on laptop components and restricted fine-tuning iterations for SAM2 due to computational constraints. Future work will extend the dataset to additional device categories, incorporate multimodal sensing (depth and infrared), and investigate hybrid transformer-convolutional architectures optimized for precise, real-time robotic disassembly.

ACKNOWLEDGMENTS

This work has been supported by the National Science Foundation - USA under grants CMMI-2349178, CMMI-2026276 and CMMI-2422826. Any opinions, findings, and conclusions or recommendations expressed in this material are those of the authors and do not necessarily reflect the views of the National Science Foundation.

REFERENCES

[1] Lin, Tsung-Yi, Maire, Michael, Belongie, Serge, Hays, James, Perona, Pietro, Ramanan, Deva, Dollár, Piotr and Zitnick, C Lawrence. “Microsoft coco: Common objects in context.” Computer vision–ECCV 2014: 13th European conference, Zurich, Switzerland, September 6-12, 2014, proceedings, part v 13: pp. 740–755. 2014. Springer.

[2] Everingham, Mark, Van Gool, Luc, Williams, Christopher KI, Winn, John and Zisserman, Andrew. “The pascal visual object classes (voc) challenge.” International journal of computer vision Vol. 88 No. 2 (2010): pp. 303–338.

[3] Chen, Wei, Huang, Zhongtian, Mu, Qian and Sun, Yi. “PCB defect detection method based on transformer-YOLO.” IEEE access Vol. 10 (2022): pp. 129480–129489.

[4] Mallick, Arijit, del Pobil, Angel P and Cervera, Enric.
“Deep learning based object recognition for robot picking task.” Proceedings of the 12th international conference on ubiquitous information management and communication: pp. 1–9. 2018.

[5] Minaee, Shervin, Boykov, Yuri, Porikli, Fatih, Plaza, Antonio, Kehtarnavaz, Nasser and Terzopoulos, Demetri. “Image segmentation using deep learning: A survey.” IEEE transactions on pattern analysis and machine intelligence Vol. 44 No. 7 (2021): pp. 3523–3542.

[6] He, Kaiming, Gkioxari, Georgia, Dollár, Piotr and Girshick, Ross. “Mask r-cnn.” Proceedings of the IEEE international conference on computer vision: pp. 2961–2969. 2017.

[7] Aarthi, R and Rishma, G. “A vision based approach to localize waste objects and geometric features exaction for robotic manipulation.” Procedia Computer Science Vol. 218 (2023): pp. 1342–1352.

[8] Kijdech, Dumrongsak and Vongbunyong, Supachai. “Manipulation of a Complex Object Using Dual-Arm Robot with Mask R-CNN and Grasping Strategy.” Journal of Intelligent & Robotic Systems Vol. 110 No. 3 (2024): p. 103.

[9] Jahanian, Ali, Le, Quang H., Youcef-Toumi, Kamal and Tsetserukou, Dzmitry. “See the E-Waste! Training Visual Intelligence to See Dense Circuit Boards for Recycling.” Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR) Workshops. 2019.

[10] Zhou, Chuangchuang, Wu, Yifan, Sterkens, Wouter, Piessens, Mathijs, Vandewalle, Patrick and Peeters, Jef R. “Towards robotic disassembly: A comparison of coarse-to-fine and multimodal fusion screw detection methods.” Journal of Manufacturing Systems Vol. 74 (2024): pp. 633–646.

[11] Glučina, Matko, Anđelić, Nikola, Lorencin, Ivan and Car, Zlatan. “Detection and classification of printed circuit boards using YOLO algorithm.” Electronics Vol. 12 No. 3 (2023): p. 667.

[12] Zhang, Xinyao, Eltouny, Kareem, Liang, Xiao and Behdad, Sara.
“Automatic screw detection and tool recommendation system for robotic disassembly.” Journal of Manufacturing Science and Engineering Vol. 145 No. 3 (2023): p. 031008.

[13] Zheng, Sixiao, Lu, Jiachen, Zhao, Hengshuang, Zhu, Xiatian, Luo, Zekun, Wang, Yabiao, Fu, Yanwei, Feng, Jianfeng, Xiang, Tao, Torr, Philip HS et al. “Rethinking semantic segmentation from a sequence-to-sequence perspective with transformers.” Proceedings of the IEEE/CVF conference on computer vision and pattern recognition: pp. 6881–6890. 2021.

[14] Xie, Enze, Wang, Wenhai, Yu, Zhiding, Anandkumar, Anima, Alvarez, Jose M and Luo, Ping. “SegFormer: Simple and efficient design for semantic segmentation with transformers.” Advances in neural information processing systems Vol. 34 (2021): pp. 12077–12090.

[15] Dong, Zhiming, Chen, Junjie and Lu, Weisheng. “Computer vision to recognize construction waste compositions: A novel boundary-aware transformer (BAT) model.” Journal of environmental management Vol. 305 (2022): p. 114405.

[16] Iliev, Dimitar I, Marinov, Marin B and Ortmeier, Frank. “A Proposal for a New E-Waste Image Dataset Based on the UNU-KEYS Classification.” 2024 23rd International Symposium on Electrical Apparatus and Technologies (SIELA): pp. 1–5. 2024. IEEE.

[17] Voskergian, Daniel and Ishaq, Isam. “Smart e-waste management system utilizing Internet of Things and Deep Learning approaches.” Journal of Smart Cities and Society No. Preprint (2023): pp. 1–22.

[18] Yang, Mindy and Thung, Gary. “Classification of trash for recyclability status.” CS229 project report Vol. 2016 No. 1 (2016): p. 3.

[19] Lu, Weisheng and Chen, Junjie. “Computer vision for solid waste sorting: A critical review of academic research.” Waste Management Vol. 142 (2022): pp. 29–43.

[20] Ekundayo, Oluwatobi, Murphy, Lisa, Pathak, Pramod and Stynes, Paul.
“An on-device deep learning framework to encourage the recycling of waste.” Intelligent Systems and Applications: Proceedings of the 2021 Intelligent Systems Conference (IntelliSys) Volume 3: pp. 405–417. 2022. Springer.

[21] Shreyas Madhav, AV, Rajaraman, Raghav, Harini, S and Kiliroor, Cinu C. “Application of artificial intelligence to enhance collection of E-waste: A potential solution for household WEEE collection and segregation in India.” Waste Management & Research Vol. 40 No. 7 (2022): pp. 1047–1053.

[22] Selvakanmani, S, Rajeswari, P, Krishna, BV and Manikandan, J. “Optimizing E-waste management: Deep learning classifiers for effective planning.” Journal of Cleaner Production Vol. 443 (2024): p. 141021.

[23] Liu, Chang, Balasubramaniam, Badrinath, Yancey, Neal A, Severson, Michael H, Shine, Adam, Bove, Philip, Li, Beiwen, Liang, Xiao and Zheng, Minghui. “Raise: A robot-assisted selective disassembly and sorting system for end-of-life phones.” Resources, Conservation and Recycling Vol. 225 (2026): p. 108609.

[24] Abou Baker, Nermeen, Szabo-Müller, Paul and Handmann, Uwe. “Transfer learning-based method for automated e-waste recycling in smart cities.” EAI endorsed transactions on smart cities Vol. 5 No. 16 (2021): pp. e1–e1.

[25] Islam, Niful, Jony, Md Mehedi Hasan, Hasan, Emam, Sutradhar, Sunny, Rahman, Atikur and Islam, Md Motaharul. “Ewastenet: A two-stream data efficient image transformer approach for e-waste classification.” 2023 IEEE 8th International Conference On Software Engineering and Computer Systems (ICSECS): pp. 435–440. 2023. IEEE.

[26] Rupok, Hasibul Hasan, Sourov, Nahian, Anannaya, Sanjida Jannat, Afroz, Amina, Bipul, Mahmudul Hasan and Islam, Md Motaharul.
“ElectroSortNet: A Novel CNN Approach for E-Waste Classification and IoT-Driven Separation System.” 2024 3rd International Conference on Advancement in Electrical and Electronic Engineering (ICAEEE): pp. 1–6. 2024. IEEE.

[27] Zhou, Chuangchuang, Wu, Yifan, Sterkens, Wouter, Vandewalle, Patrick, Zhang, Jianwei and Peeters, Jef R. “Multi-view graph transformer for waste of electric and electronic equipment classification and retrieval.” Resources, Conservation and Recycling Vol. 215 (2025): p. 108112.

[28] Jahanian, Ali, Le, Quang H, Youcef-Toumi, Kamal and Tsetserukou, Dzmitry. “See the e-waste! training visual intelligence to see dense circuit boards for recycling.” Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition Workshops: pp. 0–0. 2019.

[29] Wu, Yifan, Zhou, Chuangchuang, Sterkens, Wouter, Piessens, Mathijs, De Marelle, Dieter, Dewulf, Wim and Peeters, Jef. “A Color and Depth Image Defect Segmentation Framework for EEE Reuse and Recycling.” 2024 Electronics Goes Green 2024+(EGG): pp. 1–7. 2024. IEEE.

[30] Kirillov, Alexander, Mintun, Eric, Ravi, Nikhila, Mao, Hanzi, Rolland, Chloe, Gustafson, Laura, Xiao, Tete, Whitehead, Spencer, Berg, Alexander C, Lo, Wan-Yen et al. “Segment anything.” Proceedings of the IEEE/CVF international conference on computer vision: pp. 4015–4026. 2023.

[31] Ma, Jun, He, Yuting, Li, Feifei, Han, Lin, You, Chenyu and Wang, Bo. “Segment anything in medical images.” Nature Communications Vol. 15 No. 1 (2024): p. 654.

[32] Chen, Keyan, Liu, Chenyang, Chen, Hao, Zhang, Haotian, Li, Wenyuan, Zou, Zhengxia and Shi, Zhenwei. “RSPrompter: Learning to prompt for remote sensing instance segmentation based on visual foundation model.” IEEE Transactions on Geoscience and Remote Sensing Vol. 62 (2024): pp. 1–17.
[33] Ravi, Nikhila, Gabeur, Valentin, Hu, Yuan-Ting, Hu, Ronghang, Ryali, Chaitanya, Ma, Tengyu, Khedr, Haitham, Rädle, Roman, Rolland, Chloe, Gustafson, Laura et al. “Sam 2: Segment anything in images and videos.” arXiv preprint arXiv:2408.00714 (2024).
