Improving Attributed Long-form Question Answering with Intent Awareness

Large language models (LLMs) are increasingly used to generate comprehensive, knowledge-intensive reports. However, although these models are trained on diverse academic papers and reports, they are not exposed to the reasoning processes and inten…

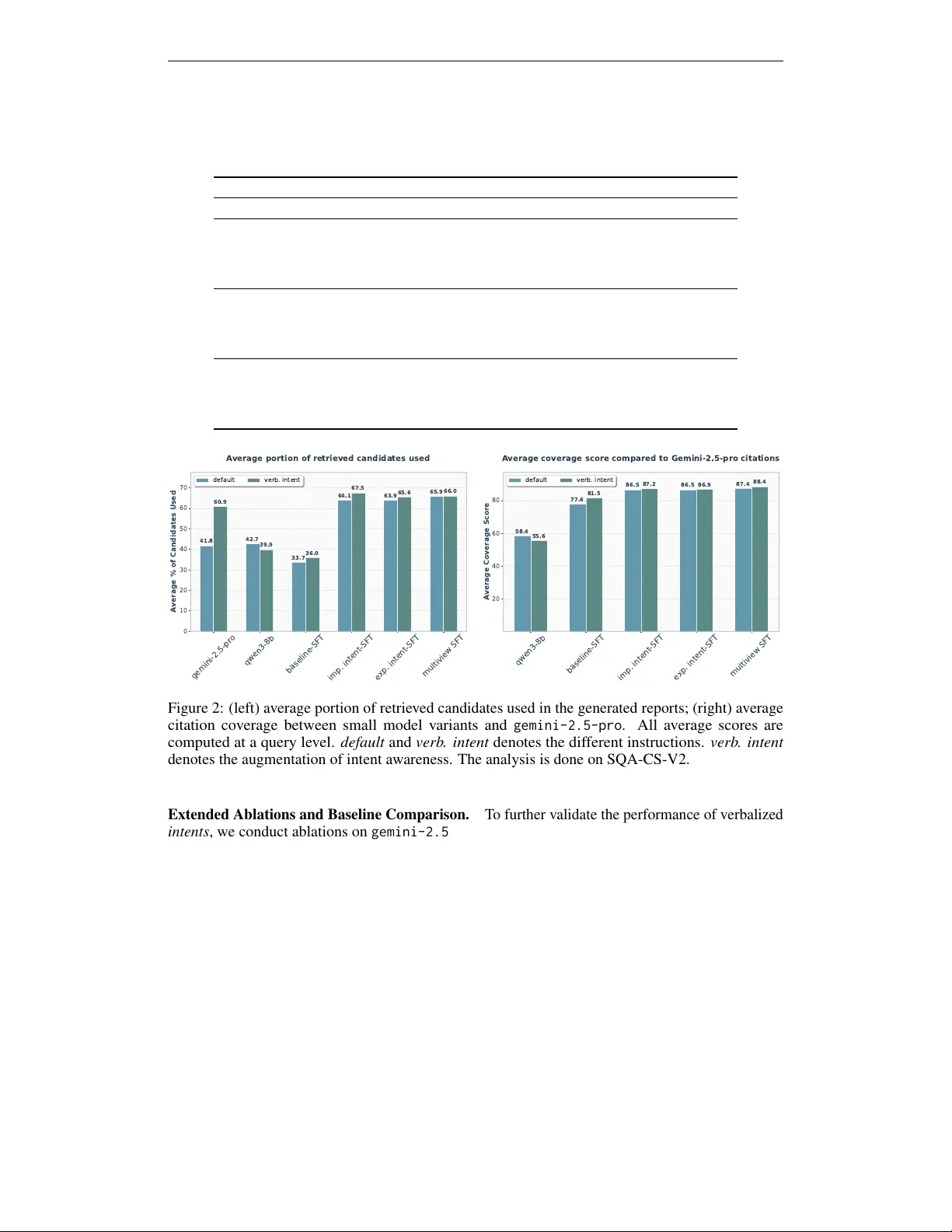

Authors: Xinran Zhao, Aakanksha Naik, Jay DeYoung