Multiple-Prediction-Powered Inference

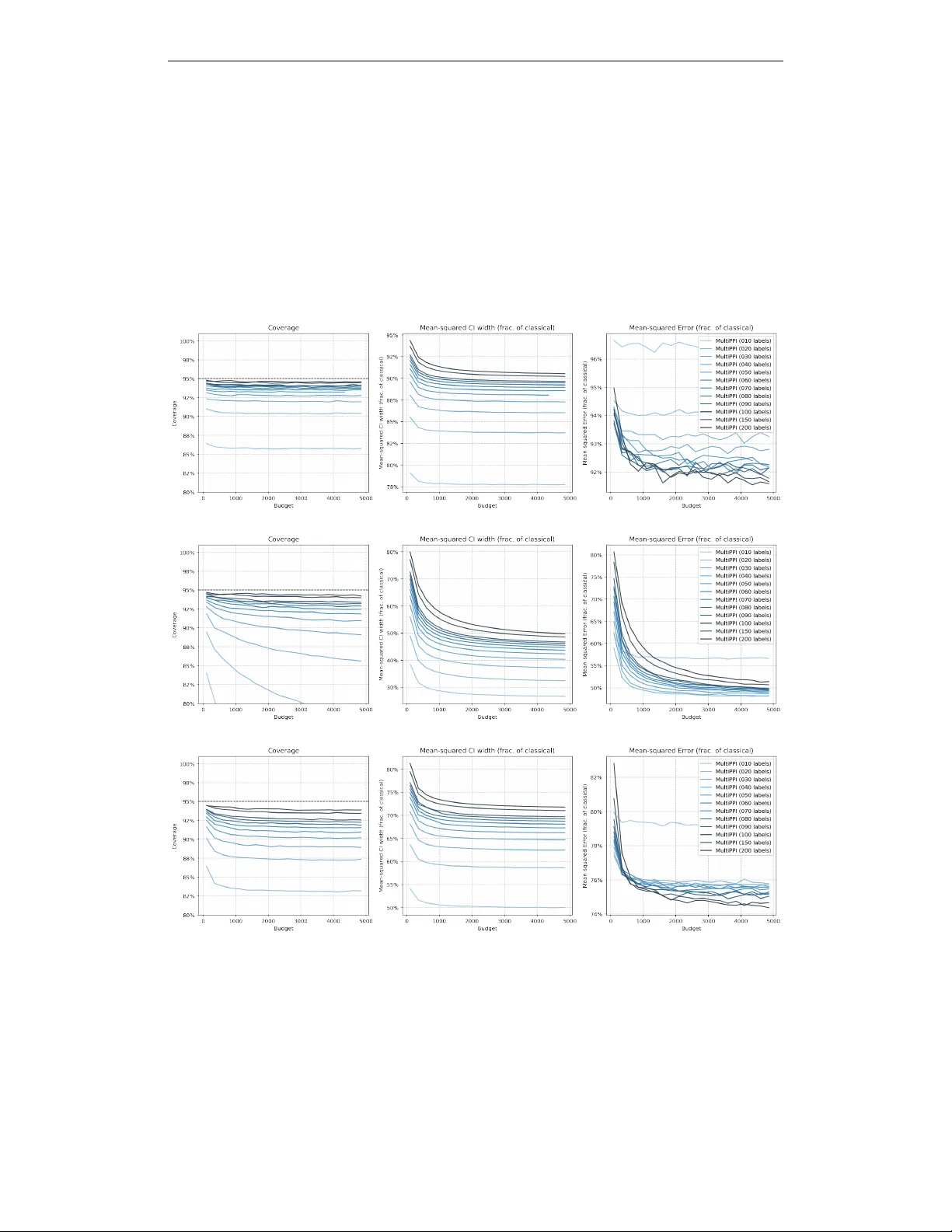

Statistical estimation often involves tradeoffs between expensive, high-quality measurements and a variety of lower-quality proxies. We introduce Multiple-Prediction-Powered Inference (MultiPPI): a general framework for constructing statistically eff…

Authors: Charlie Cowen-Breen, Alekh Agarwal, Stephen Bates