Scaling Sim-to-Real Reinforcement Learning for Robot VLAs with Generative 3D Worlds

The strong performance of large vision-language models (VLMs) trained with reinforcement learning (RL) has motivated similar approaches for fine-tuning vision-language-action (VLA) models in robotics. Many recent works fine-tune VLAs directly in the …

Authors: Andrew Choi, Xinjie Wang, Zhizhong Su, Wei Xu

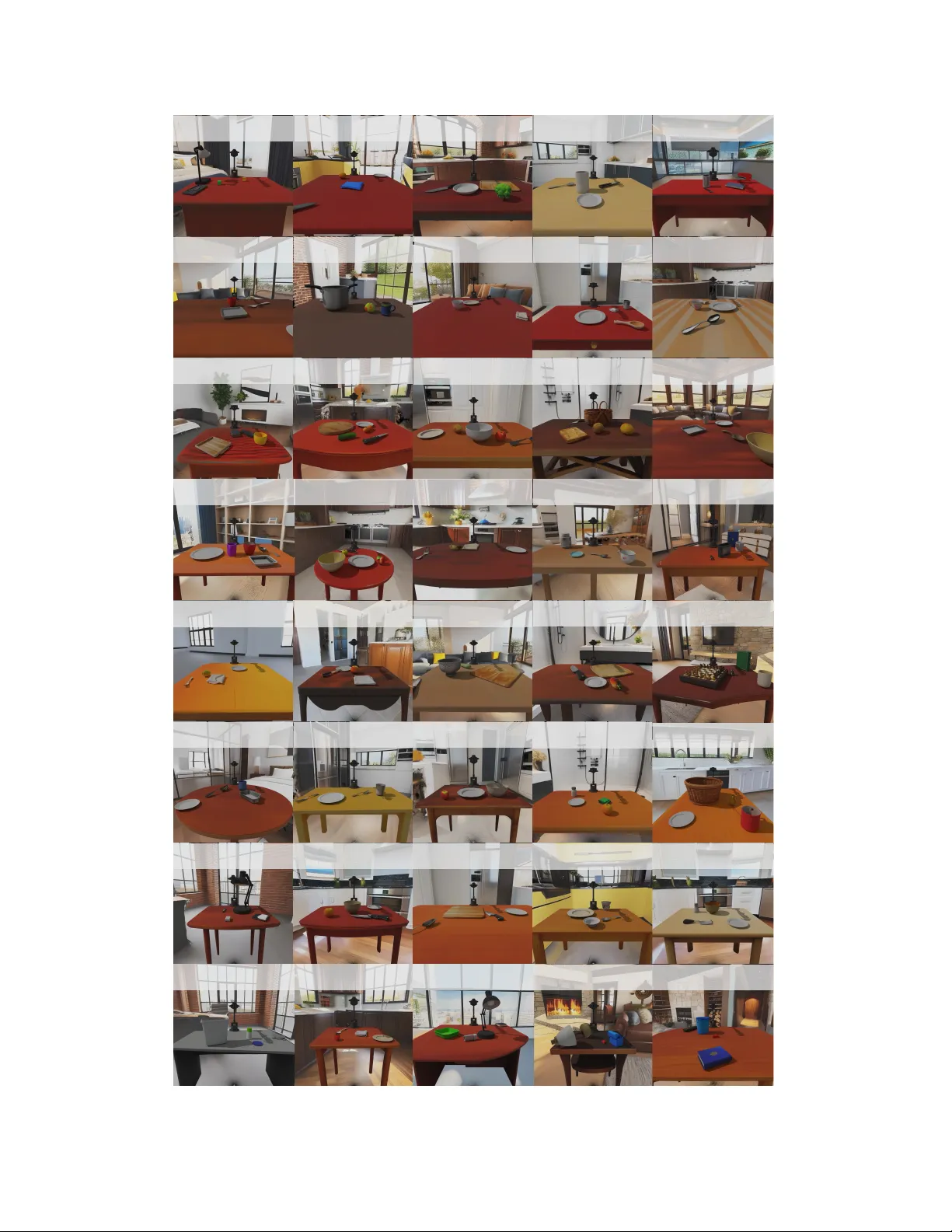

Andrew Choi¹, Xinjie Wang¹, Zhizhong Su¹, and Wei Xu¹,†
{firstname.lastname}@horizon.auto
¹Horizon Robotics, †Corresponding author

[Figure 1 panels: Real-world Data; Pretrained prior (Imitation Policy); Scene Designer; 3D World Generative Model (diverse 3D backgrounds, diverse 3D objects); Generated Scenes; RL Fine-tune in Sim (massive parallelization + domain randomization); Sim-to-real Transfer (in-distribution, out-of-distribution, diverse distractors).]

Figure 1: Overall pipeline diagram. Real-world data trains an imitation policy $\pi_{\text{pre}}$. Task descriptions are fed to a language-driven scene designer, which forwards layouts to a 3D world generative model to produce fully generated scenes. $\pi_\theta$ is trained across these scenes, initialized from $\pi_{\text{pre}}$, with massive parallelization and domain randomization. Finally, the trained $\pi_\theta$ is deployed in the real world.

Abstract: The strong performance of large vision-language models (VLMs) trained with reinforcement learning (RL) has motivated similar approaches for fine-tuning vision-language-action (VLA) models in robotics. Many recent works fine-tune VLAs directly in the real world to avoid addressing the sim-to-real gap.
While real-world RL circumvents sim-to-real issues, it inherently limits the generality of the resulting VLA, as scaling scene and object diversity in the physical world is prohibitively difficult. This leads to the paradoxical outcome of transforming a broadly pretrained model into an overfitted, scene-specific policy. Training in simulation can instead provide access to diverse scenes, but designing those scenes is also costly. In this work, we show that VLAs can be RL fine-tuned without sacrificing generality and with reduced labor by leveraging 3D world generative models. Using these models together with a language-driven scene designer, we generate hundreds of diverse interactive scenes containing unique objects and backgrounds, enabling scalable and highly parallel policy learning. Starting from a pretrained imitation baseline, our approach increases simulation success from 9.7% to 79.8% while achieving a 1.25× speedup in task completion time. We further demonstrate successful sim-to-real transfer enabled by the quality of the generated scenes together with domain randomization, improving real-world success from 21.7% to 75% and achieving a 1.13× speedup. Finally, we highlight the benefits of leveraging the effectively unlimited data from 3D world generative models through an ablation study showing that increasing scene diversity directly improves zero-shot generalization.

Keywords: sim-to-real reinforcement learning, vision-language-action models, robot manipulation, generative simulation

1 Introduction

Though internet-scale training of VLMs has seen explosive success, the performance of VLAs has comparatively lagged behind.
Currently, the dominant pipeline for training robot foundation models leverages all layers of the “data pyramid”: 1) pretraining a VLM backbone on internet-scale data, 2) further pretraining via imitation learning on large-scale robot datasets [1, 2], and 3) task- and embodiment-specific fine-tuning via imitation learning, real-world reinforcement learning, or sim-to-real reinforcement learning. While the first two stages are largely addressed by training on publicly available data, the third stage often requires significant human involvement. For imitation learning and offline RL, this involvement comes in the form of manual real-world data collection. For real-world RL, human-in-the-loop (HIL) supervision is often required to achieve sufficient sample efficiency [3, 4]. Conversely, sim-to-real RL eliminates the need for manual data collection and HIL by instead generating an abundance of data through massive parallelization [5], but introduces new challenges in the form of visual and dynamic sim-to-real gaps. Furthermore, though elements of the learning pipeline such as environment resets and reward detection are significantly easier in simulation, designing the 3D environments themselves (albeit a one-time cost) also requires substantial human effort.

An often-overlooked drawback of real-world fine-tuning is the loss of generality that arises when training on only a small number of scenes. Because scaling scene diversity in the physical world is prohibitively expensive, most prior work fine-tunes VLAs in narrowly scoped settings. As a result, fine-tuning frequently transforms a broadly pretrained VLA into an overfitted, scene-specific policy.
In this paper, we show that generative 3D world models can automatically construct large training distributions of interactive environments for RL fine-tuning of VLAs, enabling scalable sim-to-real learning beyond the small number of hand-designed environments used in prior work. A snapshot of the overall pipeline can be seen in Fig. 1. Overall, our main contributions involve showing that

1. Reinforcement learning and 3D world generative models strongly complement the large capacity of VLAs. We introduce a language-driven scene designer that converts task descriptions into structured scene layouts, which are then used by a 3D world generative model to produce fully interactive environments. Paired with highly parallelized RL, this enables VLAs to be fine-tuned across a wide variety of scenes without requiring manual scene design. In this work, we fine-tune a pretrained VLA in simulation, achieving a 70.1-percentage-point improvement in success rate and a 1.25× task completion speedup across 100 unique generated scenes.

2. Increasing scene diversity substantially improves zero-shot generalization. Through extensive ablations in simulation, we show that zero-shot generalization scales positively with training distribution diversity. Results show that fine-tuning on 50 scenes can increase zero-shot success rate by 24.7 percentage points compared to fine-tuning on a single scene.

3. Techniques for sim-to-real transfer of shallow models carry over to VLAs. We achieve strong sim-to-real deployment results by leveraging 1) high-fidelity generated 3D objects and scenes, 2) simple domain randomization, and 3) PD control with gravity compensation. We achieve a 53.3-percentage-point improvement in success rate and a 1.13× task completion speedup across 12 scenes and 240 real-world experiments.

4. RL fine-tuning flow matching VLAs into Gaussian policies is effective.
We demonstrate that PPOFlow, a PPO-based [6] variant of ReinFlow [7], effectively fine-tunes large pretrained flow matching VLAs. PPOFlow can transform a multi-step flow matching policy into a single-step Gaussian policy, which was previously thought to harm performance [8]. Instead, our findings directly support the recent work of Pan et al. [9] in that the multimodality of diffusion models is not an important factor for having a high-performing robot policy. Furthermore, we demonstrate an inference latency speedup of 2.36× through PPOFlow by removing the need for iterative denoising of actions, similar to consistency policies [10, 11, 3]. More details can be seen in Sec. B.

2 Related Work

VLA models. Motivated by the successes of VLMs in computer vision and NLP, large open-source robot datasets, both physical [12, 1] and synthetic [2, 13], have been created to facilitate supervised pretraining of VLAs. Using such datasets, a series of open-source robot VLAs have been released, such as OpenVLA [14], SpatialVLA [15], GraspVLA [2], $\pi_0$ [16], $\pi_{0.5}$ [17], and many more. A common strategy is then to fine-tune a pretrained model with supervised imitation learning on task-specific demonstration data [18]. Though straightforward, such approaches often suffer in performance when encountering out-of-distribution states [19], and recent work has suggested that dataset fragmentation introduces unwanted shortcut learning in VLAs [20]. Given this, there has been an increasing trend of instead using reinforcement learning to fine-tune VLAs.

Learning in real. One popular strategy for RL fine-tuning VLAs has been to perform RL directly in the real world [3, 21, 4, 22, 23, 24, 25]. Though RL in real circumvents issues arising from the sim-to-real gap, such methods must handle tedious issues that do not arise in simulation. One key consideration is how to administer the reward signal.
For simplicity, most methods rely on a sparse success reward signal, which is then administered by either a trained reward model [21, 23, 3, 26], human-engineered checkers [24], or a human labeler [4]. Some approaches have used denser reward formulations such as steps-to-go [22]. Another consideration is how to perform scene resets, which can be handled by manual resets [3, 4], scripted resets [3, 25], or learning the reset task [21, 23, 24]. Lastly, it has become increasingly evident that a combination of offline RL and human-in-the-loop learning is a key necessity to get reasonable sample efficiency when executing RL on real hardware [23, 3, 4]. Though such methods have shown impressive results for learning tasks on a set scene and objects in a reasonable amount of time, the ability to maintain the generality of such policies in both scene and object space through scaling is a major question, especially given the necessity for human guidance.

Learning in simulation. The other method of RL fine-tuning VLAs is to do so in simulation [27, 28, 5]. Compared to real, training in simulation offers numerous benefits, such as oracle success detection, automatic resets, and access to privileged information for teacher-student training setups [29, 30, 31, 32]. Due to the domain difference, special consideration must be taken to ensure that such policies transfer from sim to real successfully. Several strategies for handling the sim-to-real gap exist, including domain randomization [29, 33], real-to-sim delta action models [34], real-to-sim physical property estimation [35], real-to-sim scene reconstruction [36, 31, 37], and more (albeit many of these methods have been used on smaller models, rather than foundation-scale). Training shallow models from scratch often requires heavily engineered reward functions [29, 34, 33].
With this, fine-tuning VLAs has immense benefits over shallow models, as a well-trained VLA prior only requires a sparse reward and massive parallelization to learn [5]. Recent works for RL fine-tuning VLAs include real-to-sim-to-real robot video synthesis, which leverages both real data and the scalability of simulation [27], as well as learning within learned world models [28]. In contrast to these prior works, our main focus in this manuscript is to explore a different angle: can RL fine-tuning be done for as wide a scene distribution as possible? And if so, how does increasing scene diversity improve zero-shot generalization?

3D world generative models. Constructing large-scale, realistic 3D simulation environments remains a fundamental bottleneck for sim-to-real reinforcement learning. Existing 3D generative paradigms (e.g., TRELLIS [38], WorldGen [39]) primarily focus on producing static visual assets that lack physical interactivity. Although recent works such as Holodeck [40] and RoboGen [41] attempt to procedurally construct interactive environments, end-to-end generation of task-level physical scenes remains challenging. Recently, emerging generative engines, exemplified by EmbodiedGen [42], have enabled natural language-driven generation of interactive 3D scenes. In this work, we explore the integration of generative 3D engines as a low-cost, highly parallelizable pipeline for scalable scene generation, enabling large-scale RL fine-tuning of VLA models in simulation.

Figure 2: Overview of the simulation environment generation pipeline. A GPT-4o-powered scene designer converts task descriptions into structured scene graphs over semantic roles and spatial relations, which are instantiated into fully interactive 3D worlds. A quality assurance loop filters physically implausible configurations before simulation, enabling scalable, on-demand generation of diverse environments for RL training.
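The scene-graph representation named in the Figure 2 caption can be made concrete with a small data-structure sketch. The semantic roles (background, context, distractors, manipulation targets, robot) and the ON/IN relations come from the paper; everything else is assumed for illustration only: the class and field names, the `goal` tuple, the choice of which objects fill each role, and the `is_valid` check. That check is a purely structural stand-in and is not the GPT-4o-driven quality assurance loop, which checks physical plausibility.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import Dict, List, Optional, Tuple


class Role(Enum):
    BACKGROUND = "background"
    CONTEXT = "context"
    DISTRACTOR = "distractor"
    TARGET = "manipulation_target"
    ROBOT = "robot"


class Relation(Enum):
    ON = "ON"
    IN = "IN"


Edge = Tuple[str, Relation, str]  # (child, relation, parent)


@dataclass
class SceneGraph:
    """Hypothetical container for one designed scene layout."""
    task: str
    nodes: Dict[str, Role] = field(default_factory=dict)
    edges: List[Edge] = field(default_factory=list)   # initial layout
    goal: Optional[Edge] = None                       # relation the task asks for

    def add(self, name: str, role: Role) -> None:
        self.nodes[name] = role

    def relate(self, child: str, relation: Relation, parent: str) -> None:
        if child not in self.nodes or parent not in self.nodes:
            raise KeyError("add both objects before relating them")
        self.edges.append((child, relation, parent))

    def is_valid(self) -> bool:
        """Structural check only (assumed): one robot, one background, a target."""
        roles = list(self.nodes.values())
        goal_ok = self.goal is None or (
            self.goal[0] in self.nodes and self.goal[2] in self.nodes
        )
        return (
            roles.count(Role.ROBOT) == 1
            and roles.count(Role.BACKGROUND) == 1
            and Role.TARGET in roles
            and goal_ok
        )


# Example layout for the instruction "put the pen in the pen holder".
scene = SceneGraph(task="put the pen in the pen holder")
scene.add("office", Role.BACKGROUND)
scene.add("desk", Role.CONTEXT)
scene.add("robot", Role.ROBOT)
scene.add("pen", Role.TARGET)
scene.add("pen holder", Role.TARGET)
scene.add("stapler", Role.DISTRACTOR)     # distractor chosen arbitrarily
for obj in ("pen", "pen holder", "stapler"):
    scene.relate(obj, Relation.ON, "desk")
scene.goal = ("pen", Relation.IN, "pen holder")
```

Under this sketch, raising distractor density amounts to adding more `DISTRACTOR` nodes, which is one way to read the compositional control over scene complexity that the paper attributes to the graph representation.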
3 Methodology

Generative simulation. To construct large-scale, physically interactive 3D environments for sim-to-real reinforcement learning, we employ ManiSkill 3 [43] as our GPU-parallelized simulator and extend EmbodiedGen [42] into a comprehensive simulation environment generation backend. As illustrated in Fig. 2, EmbodiedGen takes a structured scene tree layout as input and produces a fully interactive physical 3D world via its text-to-3D asset generation and layout composition interfaces. We develop a scene designer powered by GPT-4o [44] that automatically produces valid scene layouts from task descriptions. Given an instruction such as “put the pen in the pen holder”, the scene designer parses it into a structured scene graph comprising core semantic roles (background, context, distractors, manipulation targets, and the robot) along with their spatial relations (e.g., ON, IN). This graph-based representation enables flexible, compositional control over scene complexity and distractor density, allowing systematic modulation of training difficulty. Each generated scene is then passed through an automated GPT-4o-driven quality assurance loop that checks physical plausibility and geometric consistency, discarding configurations that would cause simulation instability.

Markov decision process. We formulate our learning problem as a finite-horizon partially observable Markov decision process (POMDP) with state space $\mathcal{S}$, action space $\mathcal{A}$, and discount factor $\gamma \in [0, 1]$. At each timestep $t$, the agent receives an observation $o_t$ and selects an action $a_t$ according to a policy $\pi(a_t \mid o_t)$. An observation consists of an RGB image $I \in \mathbb{R}^{H \times W \times 3}$, a language instruction $e \in \mathbb{R}^{L}$, and proprioceptive information in the form of the (xyz, rpy, gripper) end-effector pose $q \in \mathbb{R}^{7}$. The full observation is given by $o_t = [I_t, e_t, q_t]$.
Actions are represented as (end-effector delta-pose, binary gripper) action chunks $\mathbf{A} \in \mathbb{R}^{C \times 7}$, where $C$ denotes the action chunk length. We treat each action chunk as a single decision step, resulting in a continuous action space $\mathcal{A} \subset \mathbb{R}^{C \times 7}$. The objective is to learn a policy that maximizes the expected discounted return $J(\pi) = \mathbb{E}_{\pi}\left[\sum_{t=0}^{T-1} \gamma^{t} r_t\right]$, where $T$ denotes the episode horizon. We use a sparse rule-based success reward computed directly from simulator states and object relations (Eq. A.1).

Flow matching models. For RL fine-tuning to work with solely a sparse reward signal, we use a pretrained robot foundation model to boost sample efficiency [5]. In this work, we use $\pi_0$ [16] as our baseline imitation model and pretrain it on BridgeV2 [12]. The $\pi_0$ model consists of a VLM backbone $E_\theta$ and an action expert head $v_\theta$ (left of Fig. 3). It is trained using a rectified flow-matching objective

$$\mathcal{L}_{\text{flow}}(\theta) = \mathbb{E}_{o_t, \mathbf{A}_t^{1} \sim \mathcal{D},\; \boldsymbol{\epsilon} \sim \mathcal{N}(\mathbf{0}, \mathbf{I}),\; \tau \sim \mathcal{U}(0, 1)} \left\| v_\theta\!\left(\mathbf{A}_t^{\tau}, KV_\theta(o_t), \tau\right) - \left(\mathbf{A}_t^{1} - \boldsymbol{\epsilon}\right) \right\|_2^2, \tag{1}$$

where $\mathcal{D}$ denotes the demonstration dataset, $\tau \in [0, 1]$ is the continuous integration time, $KV_\theta(o_t)$ denotes the key-value tensors of $E_\theta(o_t)$ for cross-attention, and $\mathbf{A}_t^{\tau} = \tau \mathbf{A}_t^{1} + (1 - \tau)\boldsymbol{\epsilon}$. Robot actions are generated by numerically integrating the learned ODE

$$\frac{d\mathbf{A}_t^{\tau}}{d\tau} = v_\theta\!\left(\mathbf{A}_t^{\tau}, KV_\theta(o_t), \tau\right), \tag{2}$$

where $\mathbf{A}_t^{0} \sim \mathcal{N}(\mathbf{0}, \mathbf{I})$. We denote the resulting pretrained imitation policy as $\pi_{\text{pre}}$.

[Figure 3 components: SigLIP (400M), Gemma (2B), and action expert (300M), mapping an image and an instruction such as "put the cucumber in the colander" to an action vector; the RL variant adds a value head and a noise head behind a stop-gradient.]

Figure 3: Architecture diagram of the $\pi_0$ model as a pretrained imitation model $\pi_{\text{pre}}$ (left) and then modified for RL fine-tuning, $\pi_\theta$ (right).

PPOFlow. Because flow matching models generate actions through a deterministic integration process, the importance ratio cannot be computed, preventing standard PPO updates.
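To make the deterministic sampling in Eqs. 1 and 2 concrete, the following NumPy sketch evaluates the rectified flow-matching target for one sample and integrates the ODE with Euler steps. It is a toy under stated assumptions, not the $\pi_0$ implementation: the observation conditioning $KV_\theta(o_t)$ is dropped, and `oracle` is a hypothetical closed-form velocity field that already knows the target chunk, used in place of a learned network.

```python
import numpy as np


def interpolate(a1, eps, tau):
    """Point on the straight path: A^tau = tau * A^1 + (1 - tau) * eps."""
    return tau * a1 + (1.0 - tau) * eps


def flow_matching_loss(velocity, a1, eps, tau):
    """Single-sample version of Eq. 1: squared error to the target A^1 - eps."""
    pred = velocity(interpolate(a1, eps, tau), tau)
    return float(np.sum((pred - (a1 - eps)) ** 2))


def euler_sample(velocity, a0, K):
    """Integrate dA/dtau = v(A, tau) from tau = 0 to 1 in K Euler steps (Eq. 2)."""
    a, dt = a0.copy(), 1.0 / K
    for k in range(K):
        a = a + velocity(a, k * dt) * dt
    return a


rng = np.random.default_rng(0)
a1 = rng.standard_normal((4, 7))    # hypothetical target chunk, C = 4
eps = rng.standard_normal((4, 7))   # initial noise A^0

# Hypothetical oracle field: on the straight path it equals A^1 - eps exactly.
oracle = lambda a, tau: (a1 - a) / (1.0 - tau)

loss = flow_matching_loss(oracle, a1, eps, tau=0.3)   # numerically zero
sample = euler_sample(oracle, eps, K=10)              # recovers a1
```

The only randomness is the initial noise, so the sampled chunk is a deterministic function of $\mathbf{A}_t^{0}$. This is the obstacle to computing a likelihood ratio noted above.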
ReinFlow solves this by injecting learnable noise $\sigma_\phi(\mathbf{A}_t^{\tau}, z_t, \tau)$ into the numerical integration process, where $z_t$ denotes the stop-gradient hidden state $z_t = \text{sg}(E_\theta(o_t))$. This effectively converts each integration step $\mathbf{A}_t^{\tau + \Delta\tau} = \mathbf{A}_t^{\tau} + v_\theta(\mathbf{A}_t^{\tau}, KV_\theta(o_t), \tau)\Delta\tau$ into a Gaussian sample

$$\hat{\mathbf{A}} = \mathbf{A}_t^{\tau} + v_\theta\!\left(\mathbf{A}_t^{\tau}, KV_\theta(o_t), \tau\right)\Delta\tau, \tag{3}$$

$$\mathbf{A}_t^{\tau + \Delta\tau} \sim \mathcal{N}\!\left(\hat{\mathbf{A}},\; \sigma_\phi(\mathbf{A}_t^{\tau}, z_t, \tau)\right). \tag{4}$$

Let us denote the number of numerical integration steps as $K = 1/\Delta\tau$. This then allows us to compute the joint log probability of the denoising process for a particular step $t$ as

$$\log \pi\!\left(\mathbf{A}_t^{0}, \ldots, \mathbf{A}_t^{1} \mid o_t\right) = \log \mathcal{N}(\mathbf{0}, \mathbf{I}) + \sum_{k=0}^{K-1} \log \pi\!\left(\mathbf{A}_t^{(k+1)\Delta\tau} \mid \mathbf{A}_t^{k\Delta\tau}, o_t\right), \tag{5}$$

where the first term is the log-density of the initial noise sample $\mathbf{A}_t^{0}$. In addition to the noise head $\sigma_\phi$, we also add a value head $V_\psi(z_t)$ for estimating the state value. A full diagram of $\pi_\theta$ can be observed on the right side of Fig. 3. We can then directly use the log probability (Eq. 5) to compute the following power-scaled importance ratio, which is then used along with the value from $V_\psi$ in the original PPO clipped objective

$$\hat{r}_t = \left( \frac{\pi_\theta\!\left(\mathbf{A}_t^{0}, \ldots, \mathbf{A}_t^{1} \mid o_t\right)}{\pi_{\theta,\text{old}}\!\left(\mathbf{A}_t^{0}, \ldots, \mathbf{A}_t^{1} \mid o_t\right)} \right)^{s}, \tag{6}$$

$$\mathcal{L}_{\text{PPOFlow}}(\theta, \phi) = \mathbb{E}_t\!\left[ \min\!\left( \hat{r}_t \hat{A}_t,\; \text{clip}\!\left(\hat{r}_t, 1 - \epsilon, 1 + \epsilon\right) \hat{A}_t \right) \right], \tag{7}$$

where $\hat{A}_t$ is the advantage estimate computed with GAE [45], $\epsilon$ is the clipping range, and $s \in (0, 1]$ is a scaling factor that reduces variance and helps keep the importance ratio within a more stable numerical range [46].

4 Experiments

Experiment design. To study the effect of scaling the number of scenes $N$, we generate 100 unique scenes and denote this full set as $\mathcal{W}$ (see Sec. C for details and metrics). In this work, we focus on a pick-and-place task in which, given a language command, the policy must grasp an object and place it on top of another amid several distractors.
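Returning to the PPOFlow update, Eqs. 3 to 7 can be sketched in a few NumPy functions. This is a minimal illustration under stated assumptions rather than the authors' implementation. The velocity fields are hypothetical toy lambdas with no observation conditioning. The noise level is a fixed scalar standing in for the learned head $\sigma_\phi$ and is interpreted as a standard deviation, which the text does not specify. The defaults `s=0.5` and `eps=0.2` are arbitrary.

```python
import numpy as np


def gaussian_logpdf(x, mean, std):
    """Log-density of a diagonal Gaussian, summed over all action dimensions."""
    var = std ** 2
    return float(np.sum(-0.5 * ((x - mean) ** 2 / var + np.log(2.0 * np.pi * var))))


def noisy_rollout(velocity, std, K, shape, rng):
    """Noise-injected Euler integration (Eqs. 3 and 4); returns A^0, ..., A^1."""
    dt = 1.0 / K
    chain = [rng.standard_normal(shape)]                         # A^0 ~ N(0, I)
    for k in range(K):
        mean = chain[-1] + velocity(chain[-1], k * dt) * dt      # Eq. 3
        chain.append(mean + std * rng.standard_normal(shape))    # Eq. 4
    return chain


def chain_logprob(chain, velocity, std):
    """Joint log-probability of a stored denoising chain (Eq. 5)."""
    K = len(chain) - 1
    dt = 1.0 / K
    logp = gaussian_logpdf(chain[0], 0.0, 1.0)                   # initial-noise term
    for k in range(K):
        mean = chain[k] + velocity(chain[k], k * dt) * dt
        logp += gaussian_logpdf(chain[k + 1], mean, std)
    return logp


def ppoflow_surrogate(logp_new, logp_old, adv, s=0.5, eps=0.2):
    """Power-scaled ratio (Eq. 6) and clipped surrogate (Eq. 7), to be maximized."""
    ratio = np.exp(s * (np.asarray(logp_new) - np.asarray(logp_old)))
    adv = np.asarray(adv)
    clipped = np.clip(ratio, 1.0 - eps, 1.0 + eps)
    return ratio, float(np.mean(np.minimum(ratio * adv, clipped * adv)))


rng = np.random.default_rng(0)
old_v = lambda a, tau: -a          # hypothetical behavior-policy velocity field
new_v = lambda a, tau: -0.9 * a    # hypothetical updated velocity field

chain = noisy_rollout(old_v, std=0.1, K=1, shape=(4, 7), rng=rng)
lp_old = chain_logprob(chain, old_v, 0.1)
lp_new = chain_logprob(chain, new_v, 0.1)
ratio, objective = ppoflow_surrogate([lp_new], [lp_old], [1.0])
```

Because the initial-noise term in Eq. 5 does not depend on the policy parameters, it cancels in the ratio. With $K = 1$ the chain contains a single Gaussian transition, which matches the single-step Gaussian policy described in the contributions. The exponent $s$ simply scales the log-ratio before exponentiation.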
To evaluate out-of-distribution (OOD) generalization, we construct three randomly sampled subsets of $\mathcal{W}$, each containing 50 scenes, denoted $\mathcal{H}_i$ for $i \in \{0, 1, 2\}$. For each subset, the corresponding OOD set is defined as $\bar{\mathcal{H}}_i = \mathcal{W} \setminus \mathcal{H}_i$. Using the subsets $\mathcal{H}_i$, we train three independent runs (one per subset) for each $N \in \{1, 3, 10, 25, 50\}$, where training uses the first $N$ scenes under a fixed ordering of $\mathcal{H}_i$. In addition, we train a single run on the full set $\mathcal{W}$.

The initial flow-matching imitation policy $\pi_{\text{pre}}$ serves as a baseline and uses $K = 10$ and action chunk size $C = 4$. $\pi_{\text{pre}}$ is trained on BridgeV2, which is predominantly pick-and-place (over 70%), with the remainder consisting of tasks such as sweeping, towel folding, and drawer opening. This yields a strong initialization that is well aligned with our task. To investigate the benefits of training on generated scenes, we introduce another baseline where we RL fine-tune a policy on three manually designed Bridge tabletop scenes from SimplerEnv [47]. For embodiment, we use an Interbotix WidowX 250S manipulator with a single external Logitech C922 webcam for vision (Fig. 4). Real-world evaluation is conducted on an NVIDIA RTX 4090 GPU.

[Figure 4 labels: Logitech C922 Webcam; Interbotix WidowX 250S; Experiment Area.]

Figure 4: Experiment setup.

Training details. For training, we use 8 NVIDIA RTX 6000 Ada GPUs to LoRA fine-tune the VLM $E_\theta$ and fully fine-tune the action expert $v_\theta$ over 5 days. The value head $V_\psi$ and noise head $\sigma_\phi$ are shallow MLPs and are also fully fine-tuned. Training curves are shown in Fig. 5. To enable successful sim-to-real transfer, we rely on three factors: (1) the high fidelity of generated objects and scenes, (2) domain randomization, and (3) PD control with gravity compensation.
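The paper does not spell out the control law behind the third factor. As a hedged illustration only, a textbook joint-space PD controller with gravity compensation applies $\tau = K_p (q_{\text{des}} - q) - K_d \dot{q} + g(q)$. The sketch below applies that law to a hypothetical 1-DoF pendulum, with made-up mass, length, and gains, to show why the feed-forward gravity term removes steady-state tracking error. It is not a model of the WidowX 250S or of the authors' controller.

```python
import math


def simulate(q_des, gravity_comp, kp=20.0, kd=4.0, m=1.0, l=0.5, g=9.81,
             dt=1e-3, steps=5000):
    """Drive a 1-DoF pendulum toward q_des; return the final tracking error (rad).

    q is the angle from the downward vertical, so gravity exerts a restoring
    torque of m * g * l * sin(q). All parameters are illustrative assumptions.
    """
    q, qd = 0.0, 0.0
    inertia = m * l * l
    for _ in range(steps):
        gravity = m * g * l * math.sin(q)
        tau = kp * (q_des - q) - kd * qd      # PD feedback
        if gravity_comp:
            tau += gravity                    # feed-forward term cancels gravity
        qdd = (tau - gravity) / inertia       # pendulum dynamics
        qd += qdd * dt                        # semi-implicit Euler step
        q += qd * dt
    return abs(q_des - q)


err_with = simulate(1.0, gravity_comp=True)      # converges to the target
err_without = simulate(1.0, gravity_comp=False)  # settles short of the target
```

In this toy, plain PD settles where the proportional torque balances gravity, leaving a residual error that depends on the payload and the gains. With the gravity term added, the closed loop reduces to a damped second-order system in the error, independent of the gravity load.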
With gravity compensation enabled, we found that explicit system identification was largely unnecessary, provided that both simulation and real-world controllers could reach the majority of target poses with sufficiently low tracking error, thereby minimizing the dynamics sim-to-real gap. We use an action chunk size of $C = 4$ and set the number of integration steps to $K = 1$. All other training hyperparameters and domain randomization ranges are provided in Tables A.1 and A.2, respectively.

Figure 5: Training curves for all $N$.

Note that in Fig. 5, the final training success rates decrease monotonically as $N$ increases. This behavior is expected, as we use the same batch size and mini-batch size for all runs; consequently, each scene receives fewer samples, scaling linearly with $1/N$. We hypothesize that scaling the batch size and mini-batch size proportionally with $N$ could mitigate this effect. However, as shown in the next section, higher training success rates with low $N$ do not necessarily translate to improved OOD performance.

Effect of number of scenes $N$. Table 1 reports simulation results as a function of the number of training scenes $N$. We evaluate both average success rate (SR) and average time to finish (TF) across four different sets of evaluation scenes. Results reported with standard deviation are averaged over three independent runs trained on different subsets $\mathcal{H}_i$. Each scene is evaluated over 100 episodes. For policies trained on EmbodiedGen (EG) scenes, increasing $N$ produces a clear trade-off between in-distribution (ID) and out-of-distribution (OOD) performance. As $N$ increases, the success rate on the training scenes $\mathcal{H}_i$ decreases, which is expected given the fixed mini-batch size used during training, while success rates on both EG OOD scenes $\bar{\mathcal{H}}_i$ and the SimplerEnv (SE) scenes increase steadily.
For example, ID success decreases from 94.3% at $N = 1$ to 80.5% at $N = 50$, while EG OOD success improves from 53.2% to 77.9%. Similarly, SE success increases from 36.1% to 68.4%. Increasing $N$ also substantially reduces the gap between ID and OOD performance. At $N = 1$, the gap between EG ID and EG OOD success rates is 41.1 percentage points (94.3% vs. 53.2%). This gap shrinks to 27.4 points at $N = 3$, 14.6 points at $N = 10$, and only 2.6 points at $N = 50$. A similar trend is observed for SE evaluation, where the gap between EG ID and SE success decreases from 58.2 points at $N = 1$ to 12.1 points at $N = 50$. These results indicate that increasing scene diversity reduces over-specialization to training environments and improves cross-scene generalization. They also suggest that scene diversity encourages the policy to learn task-level manipulation strategies rather than scene-specific behaviors. Notably, the $N = 100$ policy achieves the best performance on SE scenes (74.3%) despite never being trained on those environments.

Table 1: Simulation evaluation results. EG: EmbodiedGen. SE: SimplerEnv. SR is success rate in % (higher is better) and TF is time to finish in seconds (lower is better). The number in parentheses after each evaluation set is its scene count. For a given policy (row), the evaluation results are color coded in the original as OOD, ID, and mixture.

| Policy | EG ID $\mathcal{H}_i$ ($N$): SR | EG ID $\mathcal{H}_i$ ($N$): TF | EG OOD $\bar{\mathcal{H}}_i$ (50): SR | EG OOD $\bar{\mathcal{H}}_i$ (50): TF | EG all $\mathcal{W}$ (100): SR | EG all $\mathcal{W}$ (100): TF | SE (3): SR | SE (3): TF |
|---|---|---|---|---|---|---|---|---|
| $N = 0$ ($\pi_{\text{pre}}$) | − | − | 9.6 | 10.3 | 9.7 | 10.0 | 23.7 | 9.6 |
| $N = 3$ (SE) | − | − | 36.5 | 8.5 | 36.0 | 8.6 | 96.7 | 6.7 |
| $N = 1$ | 94.3 ± 2.6 | 7.7 ± 0.9 | 53.2 ± 5.5 | 9.0 ± 0.5 | 51.6 ± 5.3 | 9.0 ± 0.3 | 36.1 ± 10.4 | 9.3 ± 1.7 |
| $N = 3$ | 88.7 ± 4.7 | 7.6 ± 0.5 | 61.3 ± 1.8 | 8.7 ± 0.5 | 60.0 ± 4.6 | 8.8 ± 0.5 | 47.4 ± 2.3 | 9.8 ± 0.6 |
| $N = 10$ | 87.0 ± 3.3 | 7.7 ± 0.4 | 72.4 ± 0.4 | 8.2 ± 0.2 | 72.1 ± 0.9 | 8.2 ± 0.1 | 54.3 ± 9.2 | 9.2 ± 0.4 |
| $N = 25$ | 84.3 ± 2.4 | 7.9 ± 0.2 | 77.6 ± 0.8 | 8.1 ± 0.2 | 78.3 ± 0.5 | 8.1 ± 0.1 | 70.1 ± 5.4 | 8.4 ± 0.1 |
| $N = 50$ | 80.5 ± 0.4 | 7.9 ± 0.1 | 77.9 ± 0.9 | 8.0 ± 0.2 | 79.2 ± 0.3 | 7.9 ± 0.1 | 68.4 ± 5.5 | 8.5 ± 0.6 |
| $N = 100$ | − | − | − | − | 79.8 | 8.0 | 74.3 | 8.4 |

In contrast, the policy trained on the three manually designed SE scenes achieves very high success on those same scenes (96.7%) but exhibits the largest performance drop when evaluated on EG environments (36.0% SR), a 60.7-point gap. This highlights the limited coverage of the SE scenes compared to the greater diversity provided by EG-generated environments.

Finally, training on the full scene set yields the strongest overall performance. Compared to the imitation baseline $\pi_{\text{pre}}$ ($N = 0$), the $N = 100$ policy improves success rate on EG scenes from 9.7% to 79.8%, while reducing average completion time from about 10 s to 8 s. Though some RL-in-real works report success rates exceeding 90%, these results are typically obtained in narrowly defined settings [3, 23]. Our goal is not to saturate such constrained settings, but to demonstrate that generative simulation enables a scalable training distribution for RL fine-tuning of VLAs. Nevertheless, our framework also achieves high success rates in these regimes, despite the added difficulty introduced by domain randomization, with $N = 1$ and $N = 3$ (SE) reaching 94.3% and 96.7%, respectively.

Sim-to-real performance. Table 2 reports sim-to-real evaluation results across 12 scenes and 240 real-world trials. Each experiment consists of 10 trials for both the imitation baseline $\pi_{\text{pre}}$ and the $N = 100$ RL fine-tuned policy. We report partial success rate (PSR), defined as correctly grasping and lifting the target object, overall task success rate (SR), dynamics failure rate (DFR), semantics failure rate (SFR), and time to finish (TF).
DFR measures failures caused by execution errors such as inaccurate grasp attempts or dropped objects, while SFR measures failures caused by incorrect task interpretation (e.g., interacting with the wrong object). These failure categories are not mutually exclusive, as a single failure may involve both semantic and dynamics-related errors.

Overall performance improves substantially after RL fine-tuning. Partial success increases from 45% for $\pi_{\text{pre}}$ to 88.3%, indicating a large improvement in reliable object acquisition. Overall task success improves even more, increasing from 21.7% to 75%. In addition to higher success rates, the RL policy produces more efficient behavior, reducing the average completion time from 11.5 s to 10.2 s.

Examining the failure breakdown reveals that most errors of the imitation baseline arise from dynamics-related issues. Across all trials, the baseline exhibits a DFR of 66.7%, which decreases to 18.3% after RL fine-tuning, indicating a substantial improvement in manipulation robustness. Semantic failures are comparatively less frequent but also decrease from 18.3% to 6.7%, suggesting improved task grounding and object selection.

Performance gains are consistent across nearly all scenes. For example, scene 10 introduces a screwdriver that does not appear in the RL training distribution, yet the RL policy achieves an SR of 50% compared to 0% for the baseline. Similarly, scene 11 evaluates a teacup stacking task involving unseen object instances and task composition, where the RL policy improves SR from 20% to 50% while reaching perfect partial success (100%, up from 50% for the baseline).

Overall, these results demonstrate that policies fine-tuned in large-scale generative simulation transfer effectively to real-world manipulation. RL fine-tuning improves both low-level execution robustness and high-level task success while maintaining generalization to previously unseen objects, attributes, and task variations.
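The headline improvements quoted in the abstract and contributions follow directly from the before and after values reported in the text. The short check below reproduces that arithmetic. The helper names are illustrative, and the inputs are the paper's reported numbers, with the simulation completion times taken from the $N = 0$ and $N = 100$ rows of Table 1.

```python
def point_gain(before_pct, after_pct):
    """Absolute improvement in percentage points, rounded to one decimal."""
    return round(after_pct - before_pct, 1)


def speedup(before_s, after_s):
    """Ratio of completion times; values above 1 mean the new policy is faster."""
    return round(before_s / after_s, 2)


sim_gain = point_gain(9.7, 79.8)       # 70.1 points (EG all scenes, N=0 vs. N=100)
sim_speedup = speedup(10.0, 8.0)       # 1.25x
real_gain = point_gain(21.7, 75.0)     # 53.3 points (real-world SR)
real_speedup = speedup(11.5, 10.2)     # 1.13x
psr_gain = point_gain(45.0, 88.3)      # 43.3 points (real-world PSR)
ood_gain = point_gain(53.2, 77.9)      # 24.7 points (EG OOD, N=1 vs. N=50)
```

Success-rate improvements are reported as differences in percentage points and not as relative changes, so the real-world gain is 53.3 points even though the success rate itself more than triples.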
Qualitative examples of the imitation and RL policy rollouts across different scenes are shown in Sec. H.

Table 2: Sim-to-real evaluation results. Objects or attributes that are OOD during RL training are highlighted in the original. Attributes in parentheses were not included in the language command. Columns are color coded in the original as success, failure, and time metrics.

| Scene | Language Command | Scene Distractors |
|---|---|---|
| 0 | “put the banana on the bowl.” | broccoli, strawberry, plate |
| 1 | “put the broccoli on the mug.” | knife, cutting board |
| 2 | “put the mushroom on the bowl.” | banana, broccoli, plate |
| 3 | “put the spoon on the napkin.” | fork, plate |
| 4 | “put the (pink) eraser on the notebook.” | black pen, mug |
| 5 | “put the (yellow) brush on the bowl.” | banana, yellow marker |
| 6 | “put the white eraser on the mug.” | pink eraser, black pen, blue pen |
| 7 | “put the red knife on the cutting board.” | regular knife |
| 8 | “put the blue pen on the (blue) bowl.” | black pen, white eraser, basket |
| 9 | “put the green marker on the basket.” | red marker, yellow marker, dry erase eraser, cardboard box |
| 10 | “put the screwdriver on the basket.” | knife, black marker |
| 11 | “put the blue teacup on the yellow teacup.” | purple teacup, pink teapot, blue plate, yellow plate |

PSR and SR are higher-is-better; DFR, SFR, and TF (s) are lower-is-better. The first five metric columns are for the imitation baseline $\pi_{\text{pre}}$ and the last five for the $N = 100$ policy.

| Scene | $\pi_{\text{pre}}$ PSR | $\pi_{\text{pre}}$ SR | $\pi_{\text{pre}}$ DFR | $\pi_{\text{pre}}$ SFR | $\pi_{\text{pre}}$ TF (s) | $N{=}100$ PSR | $N{=}100$ SR | $N{=}100$ DFR | $N{=}100$ SFR | $N{=}100$ TF (s) |
|---|---|---|---|---|---|---|---|---|---|---|
| 0 | 0.8 | 0.6 | 0.4 | 0.0 | 10.7 ± 6.3 | 0.9 | 0.9 | 0.1 | 0.0 | 7.4 ± 1.5 |
| 1 | 0.4 | 0.2 | 0.8 | 0.0 | 13.3 ± 2.0 | 1.0 | 0.7 | 0.3 | 0.0 | 10.4 ± 7.5 |
| 2 | 0.3 | 0.1 | 0.9 | 0.0 | 11.5 | 0.9 | 0.9 | 0.1 | 0.0 | 8.4 ± 2.5 |
| 3 | 0.2 | 0.1 | 0.7 | 0.5 | 6.1 | 0.8 | 0.7 | 0.1 | 0.2 | 12.2 ± 5.1 |
| 4 | 0.4 | 0.3 | 0.7 | 0.1 | 11.0 ± 4.9 | 1.0 | 1.0 | − | − | 10.2 ± 4.2 |
| 5 | 0.6 | 0.3 | 0.7 | 0.0 | 10.5 ± 1.1 | 1.0 | 1.0 | − | − | 9.5 ± 3.9 |
| 6 | 0.4 | 0.2 | 0.8 | 0.0 | 7.8 ± 0.1 | 1.0 | 0.7 | 0.3 | 0.0 | 11.1 ± 5.5 |
| 7 | 0.7 | 0.2 | 0.2 | 0.6 | 14.6 ± 0.6 | 0.8 | 0.8 | 0.0 | 0.2 | 12.2 ± 6.5 |
| 8 | 0.3 | 0.1 | 0.9 | 0.0 | 29.6 | 0.9 | 0.7 | 0.2 | 0.1 | 12.2 ± 7.4 |
| 9 | 0.6 | 0.3 | 0.6 | 0.1 | 9.2 ± 1.8 | 0.7 | 0.6 | 0.4 | 0.0 | 8.8 ± 2.0 |
| 10 | 0.2 | 0.0 | 0.8 | 0.3 | − | 0.6 | 0.5 | 0.2 | 0.3 | 10.4 ± 5.5 |
| 11 | 0.5 | 0.2 | 0.5 | 0.6 | 11.6 ± 2.0 | 1.0 | 0.5 | 0.5 | 0.0 | 11.0 ± 2.0 |
| All | 0.45 | 0.217 | 0.667 | 0.183 | 11.5 ± 5.5 | 0.883 | 0.75 | 0.183 | 0.067 | 10.2 ± 5.1 |

5 Conclusion

In this work, we explored the use of 3D world generative models for scaling sim-to-real reinforcement learning of vision-language-action (VLA) policies. By leveraging generative simulation to automatically construct diverse interactive environments, we fine-tuned a pretrained VLA across 100 unique scenes using massively parallel RL while avoiding the cost of manual scene design. Our results show that increasing scene diversity significantly improves zero-shot generalization across multiple OOD evaluation scenes. Furthermore, RL fine-tuning on the generated scenes improves simulation success rates by 70.1 percentage points, while sim-to-real deployment yields a 53.3-percentage-point improvement in real-world task success.

Beyond improved performance, our findings highlight the complementary roles of generative simulation and RL. Generative 3D world models provide a scalable source of diverse training environments, while RL enables efficient adaptation of large pretrained policies using only sparse rewards. Our language-driven scene designer further reduces engineering effort by automatically translating task descriptions into structured environments for simulation. Together, these components form a practical pipeline for scaling robot foundation models while minimizing the need for additional real-world data collection or human-in-the-loop training.

Limitations and future work. We note that the experiments in this work focus on pick-and-place tasks, primarily due to current limitations in the types of objects that EmbodiedGen [42] can reliably generate.
Pick-and-place tasks also constitute a large portion of existing robot datasets, accounting for over 70% of BridgeV2. Looking forward, we aim to extend the current framework to support richer manipulation behaviors such as articulated object manipulation, tool use, and multi-stage tasks. Scaling the distribution of automatically generated scenes and tasks will enable RL fine-tuning of VLAs on a broader range of interaction distributions, further improving generalization and robustness. Nevertheless, we believe this work represents an important first step toward demonstrating the potential of combining generative simulation with RL to scale the fine-tuning of VLA models.

References

[1] O. X.-E. Collaboration. Open X-Embodiment: Robotic learning datasets and RT-X models. https://arxiv.org/abs/2310.08864, 2023.

[2] S. Deng, M. Yan, S. Wei, H. Ma, Y. Yang, J. Chen, Z. Zhang, T. Yang, X. Zhang, W. Zhang, H. Cui, Z. Zhang, and H. Wang. Graspvla: a grasping foundation model pre-trained on billion-scale synthetic action data, 2025. URL .

[3] Y. Chen, S. Tian, S. Liu, Y. Zhou, H. Li, and D. Zhao. Conrft: A reinforced fine-tuning method for vla models via consistency policy. In Proceedings of Robotics: Science and Systems, RSS 2025, Los Angeles, CA, USA, Jun 21-25, 2025, 2025. doi:10.15607/RSS.2025.XXI.019.

[4] P. Intelligence, A. Amin, R. Aniceto, A. Balakrishna, K. Black, K. Conley, G. Connors, J. Darpinian, K. Dhabalia, J. DiCarlo, D. Driess, M. Equi, A. Esmail, Y. Fang, C. Finn, C. Glossop, T. Godden, I. Goryachev, L. Groom, H. Hancock, K. Hausman, G. Hussein, B. Ichter, S. Jakubczak, R. Jen, T. Jones, B. Katz, L. Ke, C. Kuchi, M. Lamb, D. LeBlanc, S. Levine, A. Li-Bell, Y. Lu, V. Mano, M. Mothukuri, S. Nair, K. Pertsch, A. Z. Ren, C. Sharma, L. X. Shi, L. Smith, J. T. Springenberg, K. Stachowicz, W. Stoeckle, A. Swerdlow, J. Tanner, M. Torne, Q. Vuong, A. Walling, H. Wang, B. Williams, S. Yoo, L. Yu, U. Zhilinsky, and Z. Zhou. π*0.6: a vla that learns from experience, 2025. URL .

[5] H. Li, Y. Zuo, J. Yu, Y. Zhang, Z. Yang, K. Zhang, X. Zhu, Y. Zhang, T. Chen, G. Cui, D. Wang, D. Luo, Y. Fan, Y. Sun, J. Zeng, J. Pang, S. Zhang, Y. Wang, Y. Mu, B. Zhou, and N. Ding. Simplevla-rl: Scaling vla training via reinforcement learning, 2025. URL https://arxiv.org/abs/2509.09674.

[6] J. Schulman, F. Wolski, P. Dhariwal, A. Radford, and O. Klimov. Proximal policy optimization algorithms, 2017. URL .

[7] T. Zhang, C. Yu, S. Su, and Y. Wang. Reinflow: Fine-tuning flow matching policy with online reinforcement learning. In The Thirty-ninth Annual Conference on Neural Information Processing Systems, 2025. URL https://openreview.net/forum?id=ACagRwCCqu.

[8] A. Z. Ren, J. Lidard, L. L. Ankile, A. Simeonov, P. Agrawal, A. Majumdar, B. Burchfiel, H. Dai, and M. Simchowitz. Diffusion policy policy optimization. In arXiv preprint arXiv:2409.00588, 2024.

[9] C. Pan, G. Anantharaman, N.-C. Huang, C. Jin, D. Pfrommer, C. Yuan, F. Permenter, G. Qu, N. Boffi, G. Shi, and M. Simchowitz. Much ado about noising: Dispelling the myths of generative robotic control, 2025. URL .

[10] Y. Chen, H. Li, and D. Zhao. Boosting continuous control with consistency policy, 2024. URL https://arxiv.org/abs/2310.06343.

[11] A. Prasad, K. Lin, J. Wu, L. Zhou, and J. Bohg. Consistency policy: Accelerated visuomotor policies via consistency distillation, 2024. URL .

[12] H. Walke, K. Black, A. Lee, M. J. Kim, M. Du, C. Zheng, T. Zhao, P. Hansen-Estruch, Q. Vuong, A. He, V. Myers, K. Fang, C. Finn, and S. Levine. Bridgedata v2: A dataset for robot learning at scale. In Conference on Robot Learning (CoRL), 2023.

[13] T. Chen, Z. Chen, B. Chen, Z. Cai, Y. Liu, Q. Liang, Z. Li, X. Lin, Y. Ge, Z. Gu, et al. Robotwin 2.0: A scalable data generator and benchmark with strong domain randomization for robust bimanual robotic manipulation. arXiv preprint arXiv:2506.18088, 2025.

[14] M. J. Kim, K. Pertsch, S. Karamcheti, T. Xiao, A. Balakrishna, S. Nair, R. Rafailov, E. Foster, G. Lam, P. Sanketi, Q. Vuong, T. Kollar, B. Burchfiel, R. Tedrake, D. Sadigh, S. Levine, P. Liang, and C. Finn. Openvla: An open-source vision-language-action model, 2024. URL https://arxiv.org/abs/2406.09246.

[15] D. Qu, H. Song, Q. Chen, Y. Yao, X. Ye, Y. Ding, Z. Wang, J. Gu, B. Zhao, D. Wang, and X. Li. Spatialvla: Exploring spatial representations for visual-language-action model, 2025. URL https://arxiv.org/abs/2501.15830.

[16] K. Black, N. Brown, D. Driess, A. Esmail, M. Equi, C. Finn, N. Fusai, L. Groom, K. Hausman, B. Ichter, S. Jakubczak, T. Jones, L. Ke, S. Levine, A. Li-Bell, M. Mothukuri, S. Nair, K. Pertsch, L. X. Shi, J. Tanner, Q. Vuong, A. Walling, H. Wang, and U. Zhilinsky. π0: A vision-language-action flow model for general robot control, 2024. URL 24164.

[17] P. Intelligence, K. Black, N. Brown, J. Darpinian, K. Dhabalia, D. Driess, A. Esmail, M. Equi, C. Finn, N. Fusai, M. Y. Galliker, D. Ghosh, L. Groom, K. Hausman, B. Ichter, S. Jakubczak, T. Jones, L. Ke, D. LeBlanc, S. Levine, A. Li-Bell, M. Mothukuri, S. Nair, K. Pertsch, A. Z. Ren, L. X. Shi, L. Smith, J. T. Springenberg, K. Stachowicz, J. Tanner, Q. Vuong, H. Walke, A. Walling, H. Wang, L. Yu, and U. Zhilinsky. π0.5: a vision-language-action model with open-world generalization, 2025. URL .

[18] M. J. Kim, C. Finn, and P. Liang. Fine-tuning vision-language-action models: Optimizing speed and success, 2025. URL .

[19] J. Liu, F. Gao, B. Wei, X. Chen, Q. Liao, Y. Wu, C. Yu, and Y. Wang. What can rl bring to vla generalization? an empirical study, 2026. URL .

[20] Y. Xing, X. Luo, J. Xie, L. Gao, H. Shen, and J. Song. Shortcut learning in generalist robot policies: The role of dataset diversity and fragmentation, 2025. URL abs/2508.06426.

[21] A. Sharma, A. M. Ahmed, R. Ahmad, and C. Finn. Self-improving robots: End-to-end autonomous visuomotor reinforcement learning. In 7th Annual Conference on Robot Learning, 2023. URL https://openreview.net/forum?id=ApxLUk8U-l.

[22] S. K. S. Ghasemipour, A. Wahid, J. Tompson, P. R. Sanketi, and I. Mordatch. Self-improving embodied foundation models. In The Thirty-ninth Annual Conference on Neural Information Processing Systems, 2025. URL https://openreview.net/forum?id=KXMIIVUB9U.

[23] J. Yang, M. S. Mark, B. Vu, A. Sharma, J. Bohg, and C. Finn. Robot fine-tuning made easy: Pre-training rewards and policies for autonomous real-world reinforcement learning. In 2024 IEEE International Conference on Robotics and Automation (ICRA), pages 4804–4811, 2024. doi:10.1109/ICRA57147.2024.10610421.

[24] H. R. Walke, J. H. Yang, A. Yu, A. Kumar, J. Orbik, A. Singh, and S. Levine. Don’t start from scratch: Leveraging prior data to automate robotic reinforcement learning. In K. Liu, D. Kulic, and J. Ichnowski, editors, Proceedings of The 6th Conference on Robot Learning, volume 205 of Proceedings of Machine Learning Research, pages 1652–1662. PMLR, 14–18 Dec 2023. URL https://proceedings.mlr.press/v205/walke23a.html.

[25] R. Mendonca and D. Pathak. Continuously improving mobile manipulation with autonomous real-world RL. In RSS 2024 Workshop: Data Generation for Robotics, 2024. URL https://openreview.net/forum?id=qASoq07bXh.

[26] Y. Du, K. Konyushkova, M. Denil, A. Raju, J. Landon, F. Hill, N. de Freitas, and S. Cabi. Vision-language models as success detectors, 2023. URL .

[27] Y. Fang, Y. Yang, X. Zhu, K. Zheng, G. Bertasius, D. Szafir, and M. Ding. Rebot: Scaling robot learning with real-to-sim-to-real robotic video synthesis, 2025. URL abs/2503.14526.

[28] H. Li, P. Ding, R. Suo, Y. Wang, Z. Ge, D. Zang, K. Yu, M. Sun, H. Zhang, D. Wang, and W. Su. Vla-rft: Vision-language-action reinforcement fine-tuning with verified rewards in world simulators, 2025. URL .
[29] H. Zhang, H. Yu, L. Zhao, A. Choi, Q. Bai, Y. Yang, and W. Xu. Learning multi-stage pick-and-place with a legged mobile manipulator. IEEE Robotics and Automation Letters, 10(11):11419–11426, 2025. doi:10.1109/LRA.2025.3608425.

[30] R. Singh, A. Allshire, A. Handa, N. Ratliff, and K. V. Wyk. Dextrah-rgb: Visuomotor policies to grasp anything with dexterous hands, 2025. URL .

[31] P. Dan, K. Kedia, A. Chao, E. Duan, M. A. Pace, W.-C. Ma, and S. Choudhury. X-sim: Cross-embodiment learning via real-to-sim-to-real. In 9th Annual Conference on Robot Learning, 2025. URL https://openreview.net/forum?id=BO7qo66YJ2.

[32] Z. Chen, Q. Yan, Y. Chen, T. Wu, J. Zhang, Z. Ding, J. Li, Y. Yang, and H. Dong. Clutterdexgrasp: A sim-to-real system for general dexterous grasping in cluttered scenes. In 9th Annual Conference on Robot Learning, 2025. URL https://openreview.net/forum?id=4XKKUifQ9c.

[33] T. He, Z. Wang, H. Xue, Q. Ben, Z. Luo, W. Xiao, Y. Yuan, X. Da, F. Castañeda, S. Sastry, C. Liu, G. Shi, L. Fan, and Y. Zhu. Viral: Visual sim-to-real at scale for humanoid loco-manipulation, 2025. URL .

[34] T. He, J. Gao, W. Xiao, Y. Zhang, Z. Wang, J. Wang, Z. Luo, G. He, N. Sobanbab, C. Pan, Z. Yi, G. Qu, K. Kitani, J. Hodgins, L. J. Fan, Y. Zhu, C. Liu, and G. Shi. Asap: Aligning simulation and real-world physics for learning agile humanoid whole-body skills, 2025. URL https://arxiv.org/abs/2502.01143.

[35] M. Wang, S. Tian, A. Swann, O. Shorinwa, J. Wu, and M. Schwager. Phys2real: Fusing vlm priors with interactive online adaptation for uncertainty-aware sim-to-real manipulation, 2025. URL .

[36] H. Sun, H. Wang, C. Ma, S. Zhang, J. Ye, X. Chen, and X. Lan. Prism: Projection-based reward integration for scene-aware real-to-sim-to-real transfer with few demonstrations, 2025. URL https://arxiv.org/abs/2504.20520.

[37] T. G. W. Lum, O. Y. Lee, K. Liu, and J. Bohg. Crossing the human-robot embodiment gap with sim-to-real RL using one human demonstration. In 9th Annual Conference on Robot Learning, 2025. URL https://openreview.net/forum?id=CgGSFtjplI.

[38] J. Xiang, Z. Lv, S. Xu, Y. Deng, R. Wang, B. Zhang, D. Chen, X. Tong, and J. Yang. Structured 3d latents for scalable and versatile 3d generation, 2025. URL 2412.01506.

[39] Z. Xie. Worldgen: Generate any 3d scene in seconds. https://github.com/ZiYang-xie/WorldGen, 2025.

[40] Y. Yang, F.-Y. Sun, L. Weihs, E. VanderBilt, A. Herrasti, W. Han, J. Wu, N. Haber, R. Krishna, L. Liu, C. Callison-Burch, M. Yatskar, A. Kembhavi, and C. Clark. Holodeck: Language guided generation of 3d embodied ai environments, 2024. URL 09067.

[41] Y. Wang, Z. Xian, F. Chen, T.-H. Wang, Y. Wang, K. Fragkiadaki, Z. Erickson, D. Held, and C. Gan. Robogen: Towards unleashing infinite data for automated robot learning via generative simulation, 2024. URL .

[42] X. Wang, L. Liu, Y. Cao, R. Wu, W. Qin, D. Wang, W. Sui, and Z. Su. Embodiedgen: Towards a generative 3d world engine for embodied intelligence, 2025. URL abs/2506.10600.

[43] S. Tao, F. Xiang, A. Shukla, Y. Qin, X. Hinrichsen, X. Yuan, C. Bao, X. Lin, Y. Liu, T. kai Chan, Y. Gao, X. Li, T. Mu, N. Xiao, A. Gurha, V. N. Rajesh, Y. W. Choi, Y.-R. Chen, Z. Huang, R. Calandra, R. Chen, S. Luo, and H. Su. Maniskill3: Gpu parallelized robotics simulation and rendering for generalizable embodied ai. Robotics: Science and Systems, 2025.

[44] J. Achiam, S. Adler, S. Agarwal, L. Ahmad, I. Akkaya, F. L. Aleman, D. Almeida, J. Altenschmidt, S. Altman, S. Anadkat, et al. Gpt-4 technical report. arXiv preprint arXiv:2303.08774, 2023.

[45] J. Schulman, P. Moritz, S. Levine, M. Jordan, and P. Abbeel. High-dimensional continuous control using generalized advantage estimation, 2018. URL 02438.

[46] C. Zheng, S. Liu, M. Li, X.-H. Chen, B. Yu, C. Gao, K. Dang, Y. Liu, R. Men, A. Yang, J. Zhou, and J. Lin. Group sequence policy optimization, 2025. URL 18071.

[47] X. Li, K. Hsu, J. Gu, K. Pertsch, O. Mees, H. R. Walke, C. Fu, I. Lunawat, I. Sieh, S. Kirmani, S. Levine, J. Wu, C. Finn, H. Su, Q. Vuong, and T. Xiao. Evaluating real-world robot manipulation policies in simulation, 2024. URL .

[48] A. Wagenmaker, M. Nakamoto, Y. Zhang, S. Park, W. Yagoub, A. Nagabandi, A. Gupta, and S. Levine. Steering your diffusion policy with latent space reinforcement learning, 2025. URL https://arxiv.org/abs/2506.15799.

[49] D. McAllister, S. Ge, B. Yi, C. M. Kim, E. Weber, H. Choi, H. Feng, and A. Kanazawa. Flow matching policy gradients, 2025. URL .

A Training Details

In this section, we list the training parameters used for all policies in Table A.1. We also summarize several practical observations encountered during training:

1. Several training strategies were evaluated, including:
(a) Freezing the VLM and LoRA fine-tuning the action head.
(b) Freezing the VLM and fully fine-tuning the action head.
(c) Freezing the SigLip vision encoder, LoRA fine-tuning Gemma, and LoRA fine-tuning the action head.
(d) LoRA fine-tuning the VLM and LoRA fine-tuning the action head.
(e) LoRA fine-tuning the VLM and fully fine-tuning the action head.
Overall, we found that freezing the VLM (a, b) significantly lowers the training ceiling and often leads to model collapse during prolonged training, where task success rates initially peak, then saturate, and eventually decline toward zero. As a result, updating the VLM is crucial for stable learning. Fully fine-tuning the action head consistently outperformed the corresponding configuration that LoRA fine-tunes it. Freezing the SigLip vision encoder reduced success rates monotonically by a few percent compared to LoRA fine-tuning it. Since LoRA fine-tuning the entire VLM did not noticeably affect sim-to-real performance, we adopt option (e) in this work.

2.
Adding the scaling exponent s to the importance ratio (Eq. 6) is crucial for stabilizing training and preventing immediate collapse. Without it, the computed log probabilities can spike to very large values, causing many updates to fall near the clipping threshold and resulting in the majority of gradients being clipped.

Table A.1: Training parameters.

| Parameter | Value | Parameter | Value |
|---|---|---|---|
| number of environments | 192 | action chunk size C | 4 |
| batch size | 19200 | number of integration steps K | 1 |
| mini batch size | 1920 | learning rate | 2e−5 |
| episode length | 25 | gradient global norm clip | 0.5 |
| discount factor γ | 0.99 | clipping ratio ϵ | 0.2 |
| UTD ratio | 1 | importance ratio scale s | 0.2 |
| LoRA rank | 32 | σ_ϕ log min | −2.5 |
| control frequency (Hz) | 5 | σ_ϕ log max | −2.0 |

Domain randomization. Domain randomization ranges can be seen in Table A.2. For lighting, we use the same lighting setup as the one in SimplerEnv’s Bridge setting [47]. For camera position randomization, we first record the intersection point between the camera’s principal ray and the table in the default configuration. We then compute a new camera orientation such that the principal ray of the perturbed camera re-aligns with this original intersection point. We found that control-delay randomization was unnecessary due to the use of action chunks (C = 4), which allow the majority of actions to be executed with minimal latency.

Table A.2: Domain randomization ranges.

| Parameter | Value | Parameter | Value |
|---|---|---|---|
| object x-position (m) | [0.2, 0.4] | robot z-height perturb (m) | [0, 0.05] |
| object y-position (m) | [−0.15, 0.15] | robot joint pos perturb (rad) | [−0.1, 0.1]^6 |
| object yaw orientation (rad) | [0, 2π] | camera xyz-position (m) | [−0.05, 0.05]^3 |
| ambient light RGB color | [0, 0.6]^3 | directional light brightness | [0.5, 1.5] |

Rewards. We employ a sparse success reward, enabled by the strong pre-training of the VLA during the imitation phase.
An episode is considered successful if

$$\text{success} = \text{contact}(A, B) \land \lnot\,\text{contact}(A, \text{table}) \land \lnot\,\text{contact}(A, \text{robot}), \tag{A.1}$$

where A denotes the manipulated object and B denotes the target object onto which A is placed.

B Does Multimodality Matter? Effect of K

In this work, we set K = 1, effectively converting the flow-matching policy π_pre into a single-step Gaussian policy π_θ during RL fine-tuning. While prior work such as Diffusion Steering [48] and Flow Policy Optimization (FPO) [49] demonstrates that flow-matching models can be fine-tuned without collapsing into a Gaussian policy, we found that: (1) PPOFlow led to significantly more stable and repeatable training, and (2) single-step (K = 1) RL fine-tuning exhibited no observable degradation in policy performance compared to maintaining multimodality (K > 1). We validate the latter through an ablation over K ∈ {1, 2, 4} on the entire scene–task set W, where larger K enables greater expressivity by chaining multiple Gaussian transitions, with K = 1 corresponding to a unimodal policy.

From the left side of Table B.1, increasing K does not improve performance; in fact, K = 1 achieves the highest success rate. Although the margin is modest (approximately 2–2.5 percentage points) and based on a single run, these results suggest that multimodality does not meaningfully benefit RL fine-tuning in this setting. Our findings align with recent work by Pan et al. [9], which argues that multimodality is not the primary driver of diffusion-based policy performance in robot manipulation. We hypothesize that multimodality is most beneficial during imitation learning when the demonstration distribution itself is multimodal [8]. In contrast, under reward-guided optimization, unimodal Gaussian policies appear sufficient for effective policy improvement.

Beyond maintaining competitive performance, reducing K substantially improves computational efficiency.
As shown in Table B.1, K = 1 achieves a 1.17× speedup in backward pass time relative to K = 2 and a 1.45× speedup relative to K = 4. Inference gains are even more pronounced. Compared to the original imitation setting of K = 10, K = 1 yields a 2.72× speedup in inference latency under a regular forward pass and a 2.36× speedup when using torch.compile. These improvements stem from eliminating iterative denoising steps, effectively reducing the policy to a single forward pass, similar in spirit to consistency policies [10, 11, 3]. Overall, these results suggest that multimodality offers limited benefit during RL fine-tuning while incurring significant computational overhead.

Table B.1: Effect of K on RL fine-tuning and inference.

| K | RL SR (%) [↑] | RL TF (s) [↓] | Backward Time (s) [↓] | Inference Latency (s) [↓], Reg. | Inference Latency (s) [↓], torch.compile |
|---|---|---|---|---|---|
| K = 10 | N/A | N/A | N/A | 0.267 | 0.172 |
| K = 4 | 77.29 | 7.76 | 108.80 | 0.153 | 0.107 |
| K = 2 | 77.23 | 8.36 | 87.55 | 0.120 | 0.086 |
| K = 1 | 79.75 | 7.94 | 74.74 | 0.098 | 0.073 |

C Generative Simulation Metrics

Table C.1 reports the profiling statistics of the generative simulation pipeline illustrated in Fig. 2. Across 100 generated environments, the system produces 516 unique object assets, averaging 5.16 interactive objects per scene. Because the asset generation pipeline proceeds from text to a background-removed foreground image and then to a 3D asset, we deploy a GPT-4o-based automated quality assurance (QA) loop at multiple stages. The Semantic Appearance checker serves as an early filter on the intermediate foreground image, verifying whether it matches the target object category and key visual attributes. If this check fails, the system immediately resamples the text-to-image seed and retries, preventing semantically incorrect intermediate outputs from propagating into the downstream image-to-3D stage.
The Mesh Geometry checker then evaluates whether the generated 3D mesh is complete and free of major geometric defects. Finally, the Cross-modal Text-to-3D Alignment checker assesses whether the final 3D asset remains semantically consistent with the original text description, thereby capturing semantic drift introduced during 3D generation. Combined with this GPT-4o-based QA-driven rejection-and-retry mechanism, assets require only 1.37 generation attempts on average to satisfy all constraints.

Manual inspection shows that 85% of the generated environments are directly usable for end-to-end reinforcement learning without human intervention. The remaining failures are mainly due to scale mismatches or imperfect initial object placements (see Fig. C.1 for representative failure cases), which are generally minor and can be rectified with minimal manual adjustment. Under fully online sequential generation on a single NVIDIA RTX 4090 GPU, the pipeline requires 46.8 ± 5.0 minutes per scene. When reusable interactive assets are drawn from a pre-built asset library, the scene generation time is reduced to approximately 2 minutes per environment.

Table C.1: Profiling statistics of the generative simulation pipeline (Fig. 2). Results are aggregated over 100 generated scenes under sequential execution on a single NVIDIA RTX 4090 GPU. QA pass rates denote stage-wise single-attempt pass rates, and average attempts are measured per accepted object asset.

| Category | Metric | Value |
|---|---|---|
| Generation Scale | Total generated environments | 100 |
| | Total unique 3D object assets | 516 |
| | Avg. interactive assets per scene | 5.16 |
| | Total background assets | 100 |
| Generation Efficiency | Total time per scene | 46.8 ± 5.0 min |
| | ⌞ Time per background asset | 25.0 ± 3.2 min |
| | ⌞ Time per object asset | 3.9 ± 1.6 min |
| Automated QA Pass Rates | Semantic Appearance | 83.3% |
| | Mesh Geometry | 75.2% |
| | Cross-modal Text-to-3D Alignment | 91.9% |
| | Average attempts per valid asset | 1.37 |
| Manual Inspection | Final environment acceptance rate | 85% |

Auto-scaling of objects. Since the WidowX 250S manipulator (Fig. 4) used in this work has a narrow gripper width of at most 74 mm, many generated objects are too large to be grasped. To address this, we automatically scale down oversized objects (based on their mesh bounding boxes) to ensure they are graspable. We also reduce mesh resolution to simplify contact computation.

[Figure C.1 panels. Semantic Appearance Checker: prompt "matte black ceramic jar with a narrow neck and slightly textured finish"; two objects are present, and the jar lacks the narrow neck and textured finish. Mesh Geometry Checker: prompt "simple round wooden table with a light natural finish"; overlapping and redundant leg geometry. Cross-modal Text-to-3D Alignment Checker: prompt "black plastic remote control with raised tactile buttons"; geometry resembles a handle, not a remote control. Manual inspection, task "Put the carrot on the plate on the table": due to a Scene Designer error, the carrot is initialized directly on the plate. Manual inspection, task "Pick up the mushroom and place it in the saucepan": the mushroom is too large for the robotic arm to grasp. Manual inspection, task "Pick up the carrot and put it in the soup pot": the carrot starts at the table edge and easily rolls off, making it hard to grasp.]

Figure C.1: Representative failure cases in the generative simulation pipeline. Top: failures detected by the automated QA modules, including semantic appearance mismatch, mesh defects, and text-to-3D semantic drift. Bottom: residual failures identified by manual inspection after all automated checks pass, including incorrect initialization, scale mismatch, and unstable object placement.
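The auto-scaling step described in this appendix can be illustrated with a minimal sketch. This is not the authors' implementation: the graspability criterion (the smaller horizontal bounding-box extent must fit within a fraction of the gripper opening), the `SAFETY_MARGIN` value, and all function names are assumptions introduced here for illustration. Only the 74 mm maximum gripper width comes from the text.

```python
from typing import List, Sequence, Tuple

Vertex = Tuple[float, float, float]

GRIPPER_MAX_WIDTH_M = 0.074  # maximum gripper opening stated in the text (74 mm)
SAFETY_MARGIN = 0.8          # hypothetical: use at most 80% of the opening


def bounding_box_extents(vertices: Sequence[Vertex]) -> Tuple[float, float, float]:
    """Axis-aligned bounding-box size (x, y, z) of a mesh given its vertices."""
    xs, ys, zs = zip(*vertices)
    return (max(xs) - min(xs), max(ys) - min(ys), max(zs) - min(zs))


def graspable_scale(vertices: Sequence[Vertex],
                    max_width: float = GRIPPER_MAX_WIDTH_M,
                    margin: float = SAFETY_MARGIN) -> float:
    """Uniform scale factor (<= 1) under the assumed graspability criterion.

    Assumption: the gripper closes along a horizontal axis, so the smaller of
    the x and y extents must fit inside margin * max_width.
    """
    ex, ey, _ = bounding_box_extents(vertices)
    grasp_extent = min(ex, ey)
    limit = margin * max_width
    if grasp_extent <= limit:
        return 1.0  # already graspable; objects are never scaled up
    return limit / grasp_extent


def auto_scale(vertices: Sequence[Vertex]) -> Tuple[List[Vertex], float]:
    """Scale vertices uniformly about the mesh-local origin."""
    s = graspable_scale(vertices)
    return [(s * x, s * y, s * z) for x, y, z in vertices], s
```

Under these assumptions, a 20 cm × 10 cm × 5 cm box would be shrunk until its 10 cm side fits the margin-adjusted opening, while an object that already fits is left unchanged.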
D Foundation Model Candidates

In addition to π0, we also considered OpenVLA [14], but observed a significant real-to-sim performance gap.¹ Beyond this gap, the larger size of OpenVLA (7B parameters) compared to π0 (3B) made the latter a more practical choice. We also evaluated SpatialVLA [15]. Although it exhibited strong real-to-sim transfer, we found its inference latency to be substantially higher than that of π0. Overall, we selected π0 for its smaller model size (3B), strong real-to-sim transfer, and low inference latency.

We note that, rather than using the π0 model released by Physical Intelligence, we adopt a model from a third-party repository (https://github.com/allenzren/open-pi-zero), as it provides a checkpoint pretrained on BridgeV2 (denoted π_pre). In parallel with this paper’s experiments, we also fine-tuned the official π0 and π0.5 [17] models from Physical Intelligence on BridgeV2. We found that while the official π0 achieved comparable task success to π_pre, the newer π0.5 performed worse than both across multiple random seeds. Thus, we use π_pre throughout this work, although we expect RL fine-tuning to yield substantial gains regardless of the initial imitation policy, provided that the starting success rate is sufficiently high.

¹ See https://github.com/openvla/openvla/issues/7#issuecomment-2330572696 for analysis by the authors on the OpenVLA real-to-sim gap.

E SimplerEnv Scenes

Figure E.2: SimplerEnv scenes with domain randomization used for training and evaluation.

We use the three manually designed tabletop scenes from SimplerEnv [47] both to train an N = 3 baseline policy and as OOD evaluation scenes for policies trained on EmbodiedGen scenes. To be able to use the same domain randomization techniques as those in Table A.2, we remove the static PNG background so that camera pose and lighting randomization can properly take effect (Fig. E.2).
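The camera pose randomization that these scenes share with Appendix A (perturb the camera position, then re-aim the principal ray at the original table intersection point) can be sketched as follows. This is an illustrative sketch under stated assumptions, not the authors' code: the table is assumed to be the horizontal plane z = `table_z`, all function names are hypothetical, and only the viewing direction is recomputed (a full camera rotation would additionally need an up-vector convention). The ±0.05 m position range is taken from Table A.2.

```python
import math
import random
from typing import Tuple

Vec3 = Tuple[float, float, float]


def ray_plane_hit(cam_pos: Vec3, forward: Vec3, table_z: float) -> Vec3:
    """Point where the principal ray meets the horizontal plane z = table_z."""
    if forward[2] * (table_z - cam_pos[2]) <= 0.0:
        raise ValueError("principal ray does not intersect the table plane")
    t = (table_z - cam_pos[2]) / forward[2]
    return tuple(p + t * d for p, d in zip(cam_pos, forward))


def look_at_direction(cam_pos: Vec3, target: Vec3) -> Vec3:
    """Unit vector pointing from the camera position to the target point."""
    diff = [t - p for p, t in zip(cam_pos, target)]
    norm = math.sqrt(sum(c * c for c in diff))
    return tuple(c / norm for c in diff)


def randomize_camera(cam_pos: Vec3, forward: Vec3, table_z: float = 0.0,
                     delta: float = 0.05, rng=random):
    """Perturb the camera position and re-aim it at the original table hit point.

    delta = 0.05 m matches the camera xyz-position range in Table A.2.
    Returns (new_position, new_forward, target_point).
    """
    target = ray_plane_hit(cam_pos, forward, table_z)
    new_pos = tuple(p + rng.uniform(-delta, delta) for p in cam_pos)
    return new_pos, look_at_direction(new_pos, target), target
```

Because the new viewing direction is defined by the recorded intersection point, the perturbed camera keeps the same region of the table centered in view regardless of the sampled offset.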
F Simulation Scenes and Per-scene Results

[Figure F.3: two plots against the number of training scenes N ∈ {0, 1, 3, 10, 25, 50, 100}. One panel shows success rate for EG all scenes (100), EG OOD scenes (50), and SE OOD scenes (3); the other shows time to finish (s).]

Figure F.3: Success rate and time to finish as a function of N. EG: EmbodiedGen. SE: SimplerEnv.

In this section, we provide a textual description of each scene in W (see Tables F.1 and F.2). We additionally report the individual success rates for each scene in W for the policies listed in Table 1, shown in Figs. F.4, F.5, F.6, and F.7. Finally, a plot version of Table 1 (partially) is shown in Fig. F.3, which showcases the monotonic increase in average success rate as N increases.

Table F.1: Generated scenes 0-49.

| Scene | Language Command | Scene Distractors |
|---|---|---|
| 0 | green cube → yellow cube | keyboard |
| 1 | carrot → plate | knife, bowl |
| 2 | ceramic teapot → tray | mug, plate |
| 3 | orange → blue napkin | plate |
| 4 | banana → red napkin | mug, plate |
| 5 | broccoli → white dish | cutting board |
| 6 | tomato → saucepan | – |
| 7 | cucumber → metal colander | knife, cutting board |
| 8 | knife → round container | spoon, plate |
| 9 | orange → blue napkin | spoon, plate |
| 10 | red cup → tray | fork, plate |
| 11 | spoon → black jar | plate |
| 12 | pen → round container | book |
| 13 | pear → pot | mug, plate |
| 14 | apple → plate | book |
| 15 | wooden spoon → white plate | glass cup, napkin |
| 16 | teacup → plate | spoon, napkin |
| 17 | orange → bowl | spoon, plate |
| 18 | marker → round container | coffee mug, notebook |
| 19 | yellow cup → wooden tray | remote control, book |
| 20 | green apple → basket | knife, plate |
| 21 | white ping-pong ball → blue cup | remote control, book |
| 22 | cucumber → basket | knife, cutting board |
| 23 | carrot → plate | knife, cutting board |
| 24 | mushroom → pot | knife, cutting board |
| 25 | ceramic teapot → tray | mug, plate |
| 26 | cucumber → metal colander | knife, cutting board |
| 27 | knife → utensil holder | salt shaker, plate |
| 28 | orange → napkin | knife, plate |
| 29 | lemon → plate | fork, napkin |
| 30 | orange → bowl | knife, cutting board |
| 31 | tennis ball → gray basket | coffee mug, book |
| 32 | marker → round container | coffee mug, notebook |
| 33 | yellow cup → wooden tray | fork, napkin |
| 34 | green eraser → red box | pen, notebook |
| 35 | fork → metal tray | napkin, plate |
| 36 | apple → basket | knife, plate |
| 37 | spoon → black jar | napkin, plate |
| 38 | pen → pen holder | notebook |
| 39 | pear → pot | knife, plate |
| 40 | teacup → plate | spoon |
| 41 | banana → napkin | fork, plate |
| 42 | wooden spoon → white plate | salt shaker, napkin |
| 43 | marker → pen holder | coffee mug, notebook |
| 44 | green apple → basket | salt shaker, plate |
| 45 | apple → basket | fork, plate |
| 46 | red cup → tray | salt shaker, napkin |
| 47 | cucumber → basket | knife, cutting board |
| 48 | green apple → fruit bowl | red apple, knife, plate |
| 49 | purple cup → tray | spoon, red cup, plate |

Table F.2: Generated scenes 50-99.

| Scene | Language Command | Distractors |
|---|---|---|
| 50 | white mouse → mouse pad | black mouse, keyboard |
| 51 | water bottle → tray | mug, plate |
| 52 | apple → fruit plate | glass cup |
| 53 | orange → round plate | fork, square plate |
| 54 | spoon → plate | glass, fork, napkin |
| 55 | cup → tray | spoon, plate, bowl |
| 56 | cup → tray | napkin, plate |
| 57 | banana → plate | fork, orange, napkin |
| 58 | potato → basket | knife, onion, cutting board |
| 59 | tomato → bowl | spoon, potato, plate |
| 60 | black pen → round container | stapler, red pencil, notebook |
| 61 | cucumber → plate | knife, carrot, cutting board |
| 62 | blue cup → tray | spoon, plate |
| 63 | spoon → white plate | fork, glass |
| 64 | apple → fruit bowl | knife, plate |
| 65 | orange → green napkin | salt shaker, plate |
| 66 | banana → plate | fork, glass |
| 67 | lemon → metal bowl | knife, plate |
| 68 | tomato → white plate | fork, napkin |
| 69 | pear → basket | mug, plate |
| 70 | red marker → pen holder | mug, notebook |
| 71 | eraser → notebook | pen |
| 72 | green apple → tray | fork, plate |
| 73 | teacup → saucer | spoon, napkin |
| 74 | knife → wooden cutting board | spoon, plate |
| 75 | banana → basket | mug, plate |
| 76 | lemon → napkin | fork, plate |
| 77 | mushroom → saucepan | spoon, plate |
| 78 | potato → plate | fork, glass |
| 79 | yellow cup → basket | spoon, plate |
| 80 | apple → cutting board | knife, plate |
| 81 | spoon → saucer | cup, plate |
| 82 | spatula → plate | salt shaker, bowl |
| 83 | red block → blue box | lamp, book |
| 84 | banana → bowl | spoon, plate |
| 85 | pear → napkin | spoon, plate |
| 86 | red apple → wicker basket | mug, plate |
| 87 | green lime → white bowl | knife, plate |
| 88 | yellow banana → wooden cutting board | knife, bowl |
| 89 | strawberry → small saucer | fork, napkin |
| 90 | carrot → soup pot | knife, cutting board |
| 91 | soup spoon → empty bowl | plate, napkin |
| 92 | pepper grinder → metal tray | salt shaker, napkin |
| 93 | red marker → white mug | mouse, keyboard |
| 94 | eraser → open notebook | pen |
| 95 | glue stick → plastic bin | stapler, mouse pad |
| 96 | pencil sharpener → green tray | book |
| 97 | lego brick → plastic bucket | remote control, book |
| 98 | chess pawn → chessboard | mug, book |
| 99 | sponge → sink basin | towel |

Figure F.4: Success rates for scenes 0-24.

Figure F.5: Success rates for scenes 25-49.

Figure F.6: Success rates for scenes 50-74.

Figure F.7: Success rates for scenes 75-99.

[Figure F.8 shows a grid of 40 generated scenes, each labeled with its task description, e.g., "Move the green cube and put it into the yellow cube" and "Put the chess pawn on the chessboard square".]

Figure F.8: Generated example simulation scenes for RL fine-tuning. Zoom in for details.

G Sim-to-real Scenes

Figure G.9: All objects used in the sim-to-real experiments.

Figure G.10: Example of 10 trial object randomization (scene 1 in Table 2).

H Real-world Rollout Examples

Figure H.11: IL: semantic failure. RL: success. A trial example of the red knife → cutting board task (scene 7 from Table 2). The imitation learning (IL) policy first grasps the red knife but immediately drops it after entering an out-of-distribution state. It then grasps and lifts the knife once more before dropping it again and eventually timing out. In comparison, the reinforcement learning (RL) policy grasps the red knife and deliberately places it on the cutting board.

Figure H.12: IL: dynamics failure. RL: success. A trial example of the green marker → basket task (scene 9 from Table 2). The IL policy initially misses the grasp on the green marker and then pushes the basket away. It makes two regrasp attempts before finally lifting the marker, but places it next to the basket instead and eventually times out. In comparison, the RL policy correctly places the green marker inside the basket.
Figure H.13: IL: dynamics and semantic failure. RL: success. A trial example of the screwdriver → basket task (scene 10 from Table 2). The IL policy attempts to grasp the screwdriver but misses. It then becomes confused and grasps the knife instead, placing it in the basket and failing due to incorrect task execution. In comparison, the RL policy correctly places the screwdriver in the basket.

Figure H.14: IL: dynamics and semantic failure. RL: success. A trial example of the blue teacup → yellow teacup stacking task (scene 11 from Table 2). The IL policy first misses the grasp on the blue teacup and then moves toward the yellow teacup twice. It subsequently becomes confused and attempts to grasp the purple teacup instead, eventually timing out. In comparison, the RL policy quickly grasps the correct teacup and stacks it onto the yellow one.

Figure H.15: IL: dynamics failure. RL: dynamics failure. A trial example of the white eraser → mug task (scene 6 from Table 2). The IL policy misses the grasp on the white eraser several times before ultimately hovering, leading to a timeout. In contrast, the RL policy successfully grasps and lifts the white eraser, but it slips from the grasp and falls onto the black pen, confusing the policy. The policy then grasps and clears the black pen before returning to the white eraser, but still times out.

Figure H.16: IL: dynamics failure. RL: dynamics failure. A trial example of the broccoli → mug task (scene 1 from Table 2). The IL policy repeatedly misses the grasp on the broccoli and eventually times out. In contrast, the RL policy quickly grasps the broccoli and attempts to place it into the mug, but it hits the edge and rolls out of reach of the manipulator. A significant portion of failures was caused by objects moving out of reach, preventing regrasp attempts.
