Shapley meets Rawls: an integrated framework for measuring and explaining unfairness

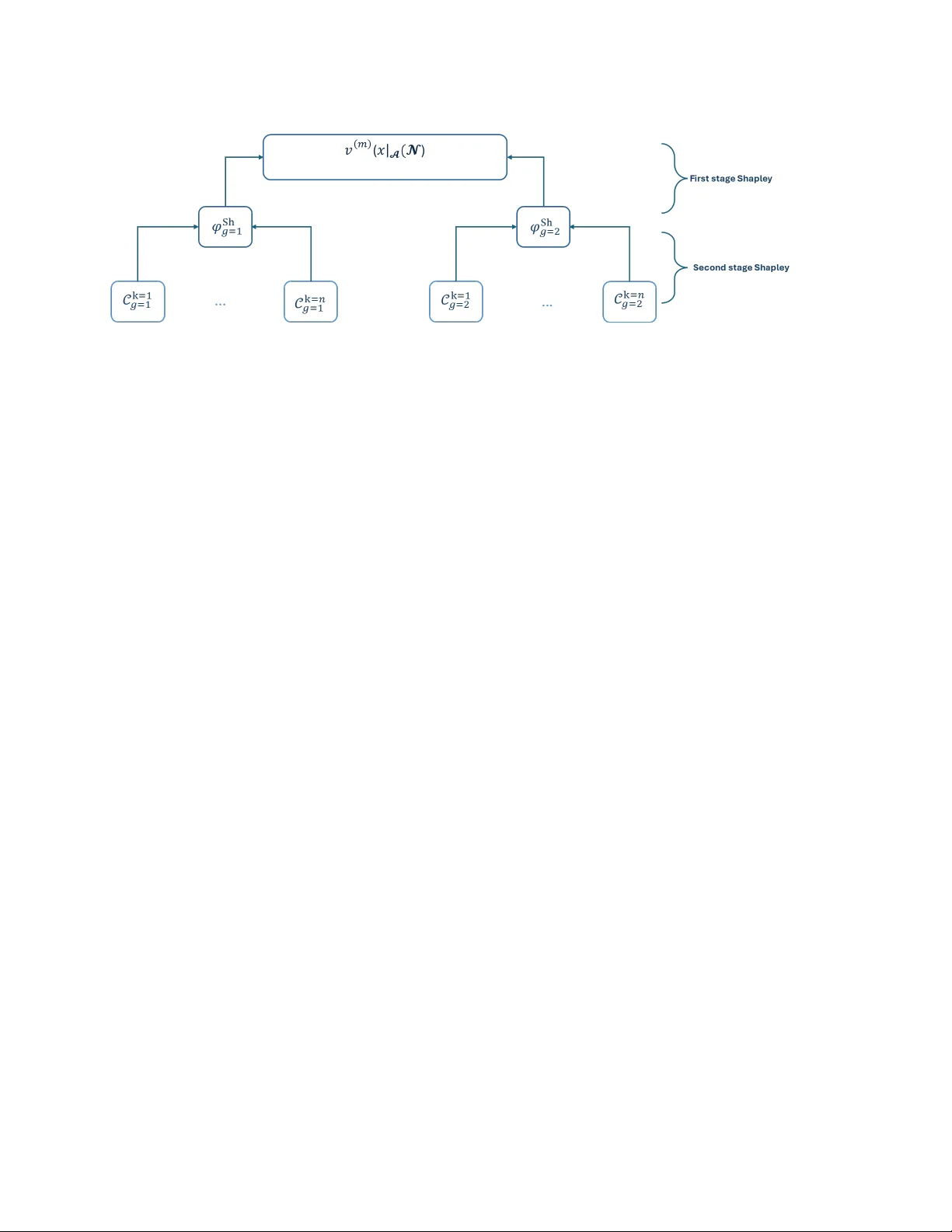

Explainability and fairness have mainly been considered separately, with recent exceptions trying to explain the sources of unfairness. This paper shows that the Shapley value can be used to both define and explain unfairness, under standard group f…

Authors: Fadoua Amri-Jouidel, Emmanuel Kemel, Stéphane Mussard