Steady State Distributed Kalman Filter

This paper addresses the synthesis of an optimal fixed-gain distributed observer for discrete-time linear systems over wireless sensor networks. The proposed approach targets the steady-state estimation regime and computes fixed observer gains offlin…

Authors: Francisco Rego

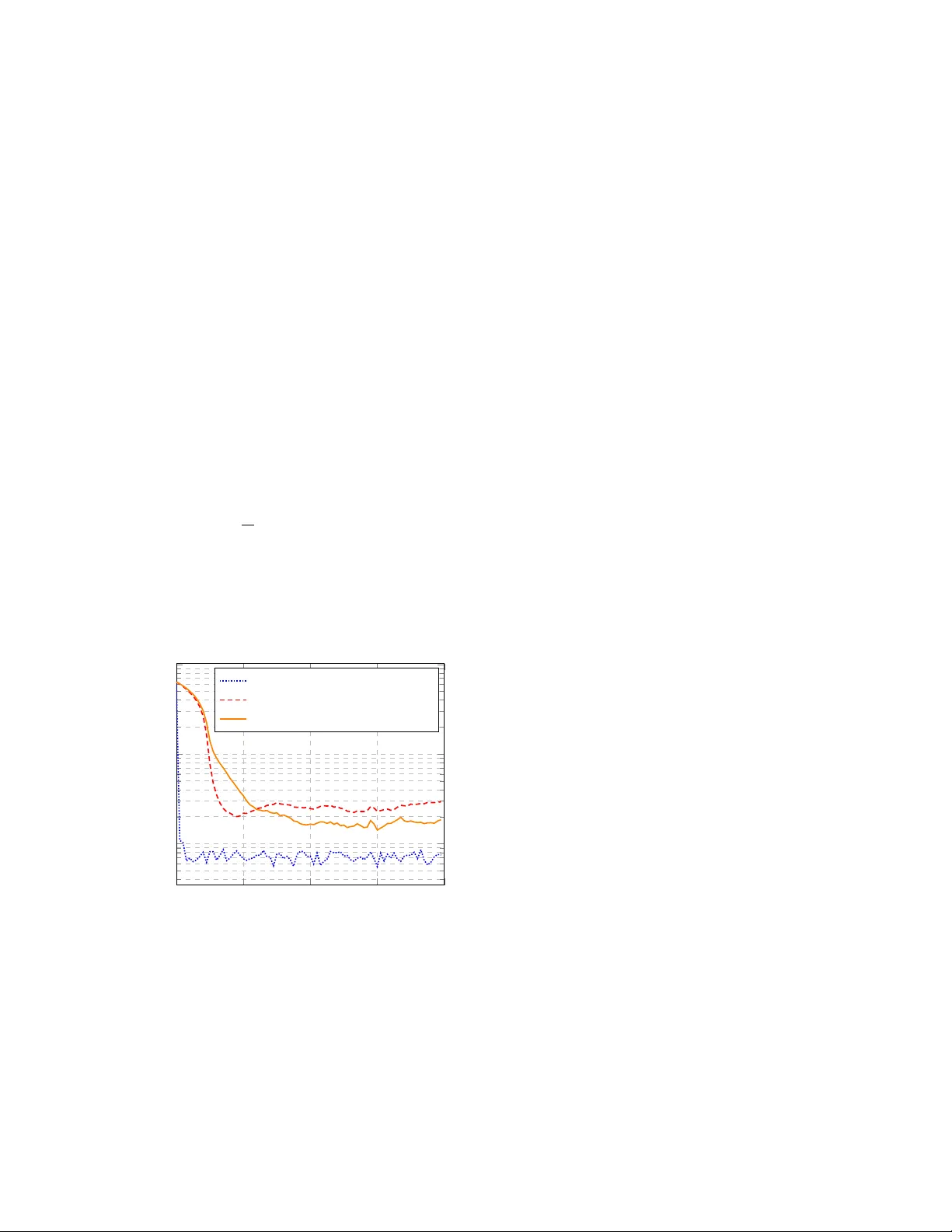

Francisco F. C. Rego¹

¹ Universidade Lusófona, INESC INOV-Lab, Lisboa, Portugal.

Abstract

This paper addresses the synthesis of an optimal fixed-gain distributed observer for discrete-time linear systems over wireless sensor networks. The proposed approach targets the steady-state estimation regime and computes fixed observer gains offline from the asymptotic error covariance of the global distributed BLUE estimator. Each node then runs a local observer that exchanges only state estimates with its neighbors, without propagating error covariances or performing online information fusion. Under collective observability and strong network connectivity, the resulting distributed observer achieves optimal asymptotic performance among fixed-gain schemes. In comparison with covariance intersection-based methods, the proposed design yields strictly lower steady-state estimation error covariance while requiring minimal communication. Numerical simulations illustrate the effectiveness of the approach and its advantages in terms of accuracy and implementation simplicity.

Keywords: Distributed State Estimation, Linear Systems, Wireless Sensor Networks

1 Introduction

In many large-scale sensing applications, the state of a dynamical system must be estimated by a network of spatially distributed sensors connected through a communication graph. In such settings, no single sensor has access to sufficient information to reconstruct the global state, and communication constraints prevent the use of fully centralized architectures. Distributed state estimation aims at enabling each node to compute a local estimate of the global state by combining its own measurements with information received from neighboring nodes.
Distributed estimation arises in applications such as network localization, environmental monitoring, and cooperative tracking under communication constraints (e.g., Akyildiz et al. [2002], Xu [2002], Bahr et al. [2009], Soares et al. [2013] and references therein).

A wide range of distributed estimation algorithms has been proposed in the literature, particularly for linear time-varying and nonlinear systems. Among these, distributed Kalman filtering and covariance intersection (CI)-based methods are widely used due to their robustness to partial information and unknown correlations. CI-based methods cope with unknown correlations by maintaining consistent upper bounds, at the cost of conservatism Julier and Uhlmann [1997]. Alternative approaches based on distributed observer design have also been proposed for linear time-varying and communication-constrained settings, including constructibility Gramian-based methods and quantized observer schemes Rego [2023], Rego et al. [2016]. However, these approaches typically rely on the online propagation and fusion of error covariances or information matrices, which results in significant computational and communication overhead. Importantly, this overhead persists even when the estimation process reaches steady state. Consensus-based distributed Kalman filtering schemes mitigate information imbalance through iterative consensus steps, but typically require additional communication and/or covariance-related computations Olfati-Saber [2005], Carli et al. [2007], Battistelli and Chisci [2014], Battistelli et al. [2015a], Talebi and Werner [2019].

In many practical scenarios, the transient behavior of the estimator is of limited interest, while performance and simplicity in steady state are the primary concerns.
This observation motivates a shift in perspective: rather than designing optimal time-varying distributed filters, one may seek to synthesize distributed observers with fixed gains that are optimal in the steady-state regime. Such observers can be implemented with substantially reduced information exchange, as they no longer require the online propagation of covariance information.

In this paper, we address the synthesis of an optimal fixed-gain distributed observer for discrete-time linear time-invariant systems. The proposed design exploits the asymptotic error covariance of the global distributed BLUE estimator to compute observer gains offline. During operation, each node runs a local observer and exchanges only state estimates with its neighbors. Under collective observability and strong connectivity assumptions, the resulting distributed observer achieves optimal asymptotic performance among fixed-gain schemes. In contrast to CI-based methods, the proposed approach yields strictly lower steady-state estimation error covariance while requiring minimal communication and computational effort.

1.1 Notation

Throughout this paper, we will use the symbol $\otimes$ for the Kronecker product. The notation $|\cdot|$ represents the cardinality of a set. $I_M$ denotes an $M \times M$ identity matrix, and $\mathbf{1}$ represents an $N \times 1$ vector with ones in every entry. When clear from the context, the superscript of a variable, e.g. $x^i$, refers to the node index of that variable, where $i \in \{1, \ldots, N\} =: \mathcal{N}$. The operator $\mathrm{row}(\cdot)$ is defined by $\mathrm{row}(X^i) := [X^1, \ldots, X^N]$, the operator $\mathrm{col}(\cdot)$ represents the column operator, i.e. $\mathrm{col}(X^i) := \mathrm{row}(X^{i\top})^{\top}$, and the operator $\mathrm{diag}(X^i)$ yields a block diagonal matrix whose diagonal elements are $X^1, \ldots, X^N$.
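As a small illustration of the stacking operators, the following worked instance takes $N = 2$ with two generic blocks $X^1$ and $X^2$ of compatible size. The choice $N = 2$ and the block names are assumptions made only for this example.

```latex
\mathrm{row}(X^i) = \begin{bmatrix} X^1 & X^2 \end{bmatrix}, \qquad
\mathrm{col}(X^i) = \begin{bmatrix} X^1 \\ X^2 \end{bmatrix}, \qquad
\mathrm{diag}(X^i) = \begin{bmatrix} X^1 & 0 \\ 0 & X^2 \end{bmatrix}, \qquad
\mathbf{1} \otimes I_n = \begin{bmatrix} I_n \\ I_n \end{bmatrix}.
```

The last identity shows how the Kronecker product with $\mathbf{1}$ replicates the identity block once per node, which is the pattern used later to express that several local estimates refer to the same global state.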
2 Mathematical background

This section briefly recalls the elements of Best Linear Unbiased Estimation (BLUE) and Kalman filtering that are required for the synthesis of fixed-gain distributed observers. The presentation is intentionally concise and focuses on the role of error covariances in the computation of optimal observer gains.

2.1 Best Linear Unbiased Estimation

Consider the estimation of a deterministic variable $x \in \mathbb{R}^n$ from linear noisy measurements

$$y = Fx + \epsilon,$$

where $\epsilon \sim \mathcal{N}(0, P)$ with $P \succ 0$, and $F \in \mathbb{R}^{m \times n}$ has full column rank. The Best Linear Unbiased Estimate (BLUE) of $x$ is given by

$$\hat{x} = \left(F^T P^{-1} F\right)^{-1} F^T P^{-1} y, \tag{1}$$

and minimizes the estimation error covariance among all linear unbiased estimators Verhaegen and Verdult [2007], Kailath et al. [2000], Rao [1973]. This result will be used repeatedly to characterize optimal gains in both centralized and distributed estimation settings.

2.2 Kalman filtering and steady-state covariances

Consider the discrete-time linear system

$$x_{t+1} = A x_t + w_t, \tag{2}$$

with measurements

$$y_t = C x_t + v_t,$$

where $w_t \sim \mathcal{N}(0, Q)$ and $v_t \sim \mathcal{N}(0, R)$, with $Q \succ 0$ and $R \succ 0$.

The Kalman filter recursively computes a minimum-variance state estimate by alternating prediction and correction steps. The associated estimation error covariance $P_t$ evolves according to

$$P_t = \left(C^T R^{-1} C + \bar{P}_t^{-1}\right)^{-1}, \qquad \bar{P}_{t+1} = A P_t A^T + Q. \tag{3}$$

Under standard detectability assumptions on $(A, C)$, the covariance sequence converges to a constant matrix $P$, and the corresponding observer gains converge to fixed values. This steady-state property motivates the synthesis of fixed-gain observers, where optimal gains are computed offline from asymptotic error covariances and remain constant during operation. In the sequel, this principle is exploited to design distributed observers that achieve optimal steady-state performance while requiring reduced online information exchange.
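To make the steady-state property concrete, the sketch below iterates the scalar form of recursion (3) until the predicted covariance stops changing. It is a minimal illustration rather than the paper's implementation: the function name `steady_state_covariance`, the stopping tolerance, and the values $a = c = q = r = 1$ are all assumptions chosen for this example.

```python
def steady_state_covariance(a, c, q, r, p_bar0=1.0, tol=1e-12, max_iter=10_000):
    """Iterate the scalar version of recursion (3) to a fixed point.

    Hypothetical helper for illustration: a, c are the scalar system and
    measurement coefficients, q and r the noise variances.
    Returns (p, p_bar): corrected and predicted steady-state variances.
    """
    p_bar = p_bar0
    for _ in range(max_iter):
        # Correction step: P = (C^T R^{-1} C + P_bar^{-1})^{-1}
        p = 1.0 / (c * c / r + 1.0 / p_bar)
        # Prediction step: P_bar_next = A P A^T + Q
        p_bar_next = a * a * p + q
        if abs(p_bar_next - p_bar) < tol:
            return p, p_bar_next
        p_bar = p_bar_next
    raise RuntimeError("covariance recursion did not converge")


# Illustrative values (assumed, not taken from the paper).
p, p_bar = steady_state_covariance(a=1.0, c=1.0, q=1.0, r=1.0)
# Fixed correction gain K = P C^T R^{-1}, computed once from the limit.
gain = p * 1.0 / 1.0
```

For these values the fixed point satisfies $\bar{P}^2 - \bar{P} - 1 = 0$, so the predicted variance settles at the golden ratio and the gain can be frozen at $P = \bar{P} - 1$. The same offline-then-freeze pattern is what the fixed-gain design applies to the distributed recursion.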
3 Problem Definition

This section formulates the distributed estimation problem addressed in this paper, with emphasis on the steady-state regime and on the synthesis of fixed-gain distributed observers.

3.1 Networked system

We consider a discrete-time linear time-invariant system monitored by a network of sensing nodes. The setup consists of: (i) a discrete-time dynamical system; (ii) a set of nodes $\mathcal{N}$ with cardinality $N := |\mathcal{N}|$, each endowed with local sensing capabilities; and (iii) a communication network described by a directed graph $(\mathcal{N}, \mathcal{A})$.

The system dynamics are given by

$$x_{t+1} = A x_t + w_t, \tag{4}$$

where $x_t \in \mathbb{R}^n$ is the system state and $w_t \sim \mathcal{N}(0, Q)$ is the process noise. Each node $i \in \mathcal{N}$ acquires measurements of the form

$$y_t^i = C^i x_t + v_t^i, \tag{5}$$

where $v_t^i \sim \mathcal{N}(0, R^i)$ denotes the measurement noise.

Assumption A1. The pair $(A, C)$ is detectable, where $C := \mathrm{col}(C^i)$.

Assumption A1 requires only collective detectability and does not impose detectability of $(A, C^i)$ at each individual node.

Assumption A2. The process and measurement noises satisfy $w_t \sim \mathcal{N}(0, Q)$, $v_t^i \sim \mathcal{N}(0, R^i)$, $i \in \mathcal{N}$, with $Q \succ 0$ and $R^i \succ 0$, and are mutually uncorrelated across nodes and with the process noise.

We further assume synchronized operation and ideal communication.

Assumption A3. All nodes are synchronized and operate at the same sampling rate.

Assumption A4. Between two consecutive sampling instants, nodes can broadcast one message according to the communication graph $\mathcal{A}$.

3.2 Steady-state distributed estimation objective

Under Assumptions A1–A4, each node $i \in \mathcal{N}$ computes a local estimate $\hat{x}_t^i$ of the global state $x_t$ using its own measurements and information received from neighboring nodes. In contrast with general distributed filtering problems, the focus of this paper is on the steady-state estimation regime.
Specifically, we seek to synthesize distributed observers with constant gains that achieve optimal asymptotic estimation performance. The objective is to design observer gains such that, in steady state, the local estimation error covariances

$$P_t^i := E\left[e_t^i (e_t^i)^T\right]$$

are minimized, subject to the information exchange constraints imposed by the network.

Throughout the paper, $\hat{x}_t^i$ denotes the state estimate at node $i$, $\bar{x}_t^i$ the corresponding predicted state, and $e_t^i := \hat{x}_t^i - x_t$ the estimation error. Global error and covariance quantities are defined by stacking local variables across nodes. These definitions allow us to characterize optimality in terms of the asymptotic error covariance of the global distributed BLUE estimator, which forms the basis for the fixed-gain observer synthesis developed in Section 4.

4 Distributed observer synthesis based on BLUE

This section addresses the synthesis of distributed observers for linear systems with Gaussian process and measurement noise. Rather than proposing a generic distributed Kalman filtering algorithm, we use the classical BLUE formulation (1) as a design tool to characterize optimal steady-state performance and to derive observer gains. We first recall a time-varying distributed BLUE formulation, which serves as a reference benchmark but requires the online propagation of global covariance information. We then show that, for linear time-invariant systems, the asymptotic error covariance of the global distributed BLUE estimator can be exploited to compute fixed observer gains offline. The resulting scheme is a steady-state distributed observer that exchanges only state estimates among neighboring nodes, achieves optimal asymptotic performance within the class of fixed-gain distributed observers, and significantly reduces communication and computational overhead when compared to consensus-based or covariance-intersection approaches.
4.1 Time-varying distributed BLUE formulation

We begin by recalling a distributed formulation of the BLUE estimator with time-varying gains, which serves as a reference for the development of the proposed fixed-gain observer. This formulation achieves optimal estimation performance in a stochastic sense, but requires the online propagation of global covariance and cross-covariance information, rendering it impractical for large-scale networks.

In detail, the method works as follows. Assume that we begin with an estimate $\bar{x}_t^i$ of the state $x_t$ with a Gaussian distribution and the following characteristics:

$$E\left[\bar{x}_t^i - x_t\right] = 0, \tag{6}$$

$$E\left[\left(\bar{x}_t^i - x_t\right)\left(\bar{x}_t^j - x_t\right)^T\right] =: \bar{P}_t^{ij}. \tag{7}$$

We define the global covariance matrix as $\bar{P}_t := \left[\bar{P}_t^{ij}\right]_{i,j \in \mathcal{N}}$ and the vector $\bar{x}_t := \mathrm{col}(\bar{x}_t^i)$.

The following theorem describes how to compute the BLUE of the state at the next time, $\bar{x}_{t+1}^i$, given the local measurement $y_t^i$ and the estimates of the neighbours $\bar{x}_t^j$, $j \in \mathcal{N}_i$, as well as the global covariance matrix $\bar{P}_{t+1} := E\left[\bar{e}_{t+1} \bar{e}_{t+1}^T\right]$, where $\bar{e}_t := \mathrm{col}(\bar{e}_t^i)$ with $\bar{e}_t^i := \bar{x}_t^i - x_t$.

Theorem 1. Consider the matrices $\eta_i \in \mathbb{R}^{|\mathcal{N}_i| n \times N n}$, defined by $\eta_i := \mathrm{row}(e_j,\ j \in \mathcal{N}_i)^T \otimes I_n$, where $e_i$ is a column vector with all entries equal to 0 except for entry $i$, which is 1; $\mathbf{1}_i \in \mathbb{R}^{|\mathcal{N}_i| n \times n}$, defined by $\mathbf{1}_i := \mathbf{1} \otimes I_n$ with $\mathbf{1}$ here of length $|\mathcal{N}_i|$; and $\Gamma_{ij} \in \mathbb{R}^{|\mathcal{N}_i| n \times n}$, defined by $\Gamma_{ij} := \eta_i (e_j \otimes I_n)$. Define

$$\tilde{\Omega}_t^i := \mathbf{1}_i^T \left(\eta_i \bar{P}_t \eta_i^T\right)^{\dagger} \mathbf{1}_i \quad \text{and} \quad \Omega_t^i := \tilde{\Omega}_t^i + S^i,$$

where $\left(\eta_i \bar{P}_t \eta_i^T\right)^{\dagger}$ is the Moore-Penrose pseudo-inverse of $\eta_i \bar{P}_t \eta_i^T$.
(The pseudo-inverse is equivalent to $\left(\eta_i \bar{P}_t \eta_i^T\right)^{-1}$ if $\eta_i \bar{P}_t \eta_i^T$ is full rank.)

Given the estimates $\bar{x}_t^i$, $i \in \mathcal{N}$, satisfying (6) and the global covariance matrix $\bar{P}_t := \left[\bar{P}_t^{ij}\right]_{i,j \in \mathcal{N}}$, and defining the correction terms $s_t^i := C^{iT} V^i y_t^i$ and $S^i := C^{iT} V^i C^i$, with $V^i := (R^i)^{-1}$ as in the Appendix, the evolution of the BLUE of the state at time $t+1$, at node $i$, given the local measurement $y_t^i$ and the estimates of the neighbours $\bar{x}_t^j$, $j \in \mathcal{N}_i$, is described by

$$\bar{x}_{t+1}^i = A \left(\Omega_t^i\right)^{-1} \left( \sum_{j \in \mathcal{N}_i} \mathbf{1}_i^T \left(\eta_i \bar{P}_t \eta_i^T\right)^{\dagger} \Gamma_{ij} \bar{x}_t^j + s_t^i \right), \tag{8}$$

whereas the global covariance matrix is described by

$$\bar{P}_{t+1} = T_t \bar{P}_t T_t^T + \mathrm{diag}\left(A P_t^i S^i P_t^i A^T\right) + \mathbf{1}_N \mathbf{1}_N^T \otimes Q, \tag{9}$$

where $P_t^i := \left(\Omega_t^i\right)^{-1}$, consistent with the error dynamics (11), and $T_t$ is defined as $T_t := \left[T_t^{ij}\right]$, with

$$T_t^{ij} := \begin{cases} A P_t^i \mathbf{1}_i^T \left(\eta_i \bar{P}_t \eta_i^T\right)^{\dagger} \Gamma_{ij}, & j \in \mathcal{N}_i, \\ 0, & j \notin \mathcal{N}_i. \end{cases}$$

Proof. See the Appendix.

Although this formulation achieves optimal estimation performance, it requires each node to access global covariance and cross-covariance information, whose dimension grows with the network size. Moreover, the gains must be recomputed online and depend on the full covariance matrix $\bar{P}_t$. These requirements make the approach unsuitable for long-term operation and motivate the search for fixed-gain distributed observers.

4.2 Fixed-gain steady-state distributed observer

We now depart from time-varying distributed filtering and address the synthesis of a fixed-gain distributed observer operating in steady state. The objective is no longer to recursively propagate error covariances or to adapt observer gains online, but rather to compute constant gains offline from asymptotic error statistics and to deploy them in a purely state-based distributed observer.

Specifically, we consider linear time-invariant systems for which the global covariance recursion associated with the time-varying distributed BLUE formulation in Section 4.1 converges to a stationary matrix $\bar{P}$.
This asymptotic covariance characterizes the steady-state performance of the global distributed BLUE estimator and serves as the reference object for observer synthesis. Exploiting this property, we show that fixed observer gains can be computed offline and subsequently used by each node to perform distributed state estimation by exchanging only state estimates with neighboring nodes.

4.2.1 Offline gain computation

Assume that the global covariance recursion (9) converges to a steady-state matrix $\bar{P} \succ 0$. In steady state, the information matrices $\Omega_t^i$ become constant and are given by

$$\Omega^i := \mathbf{1}_i^T \left(\eta_i \bar{P} \eta_i^T\right)^{\dagger} \mathbf{1}_i + S^i,$$

where $S^i := (C^i)^T V^i C^i$.

The fixed observer gains are then obtained by freezing the time-varying gains of Section 4.1 at their asymptotic values. For each node $i \in \mathcal{N}$ and each neighbor $j \in \mathcal{N}_i$, define

$$D^{ij} := A (\Omega^i)^{-1} \mathbf{1}_i^T \left(\eta_i \bar{P} \eta_i^T\right)^{\dagger} \Gamma_{ij},$$

and

$$F^i := A (\Omega^i)^{-1} (C^i)^T V^i.$$

These matrices are computed once, offline, using knowledge of the system model, noise statistics, and network topology. No covariance or cross-covariance information is required during online operation.

For clarity, the offline gain computation procedure is summarized in Algorithm 1.

Algorithm 1 Offline computation of fixed distributed observer gains
Input: System matrices $A$, $\{C^i, R^i\}_{i \in \mathcal{N}}$, process noise covariance $Q$, network topology $\mathcal{A}$
Output: Fixed gains $\{D^{ij}, F^i\}$
1: Initialize $\bar{P}_0 \succ 0$
2: repeat
3:   Update $\bar{P}_{k+1}$ using the global covariance recursion (9)
4: until $\|\bar{P}_{k+1} - \bar{P}_k\| < \varepsilon$
5: Set $\bar{P} \leftarrow \bar{P}_{k+1}$
6: for each node $i \in \mathcal{N}$ do
7:   Compute $\Omega^i := \mathbf{1}_i^T (\eta_i \bar{P} \eta_i^T)^{\dagger} \mathbf{1}_i + S^i$
8:   for each neighbor $j \in \mathcal{N}_i$ do
9:     Compute $D^{ij} := A (\Omega^i)^{-1} \mathbf{1}_i^T (\eta_i \bar{P} \eta_i^T)^{\dagger} \Gamma_{ij}$
10:   end for
11:   Compute $F^i := A (\Omega^i)^{-1} (C^i)^T V^i$
12: end for

4.2.2 Online distributed observer

Once the fixed gains $\{D^{ij}, F^i\}$ have been computed offline, each node runs a simple distributed observer.
At each time step, node $i$ receives the current state estimates from its neighbors and combines them with its own measurement to update its estimate according to

$$\hat{x}_{t+1}^i = \sum_{j \in \mathcal{N}_i} D^{ij} \hat{x}_t^j + F^i y_t^i. \tag{10}$$

Importantly, the online implementation does not require the propagation or fusion of covariance matrices, nor any iterative consensus procedure. The only information exchanged between nodes consists of state estimates of dimension $n$.

The online execution at a generic node is summarized in Algorithm 2.

Algorithm 2 Online fixed-gain distributed observer at node $i$
Input: Fixed gains $\{D^{ij}, F^i\}$, neighbor set $\mathcal{N}_i$
1: Initialize $\hat{x}_0^i$
2: for each time step $t$ do
3:   Receive $\hat{x}_t^j$ from neighbors $j \in \mathcal{N}_i$
4:   Acquire local measurement $y_t^i$
5:   Update estimate: $\hat{x}_{t+1}^i = \sum_{j \in \mathcal{N}_i} D^{ij} \hat{x}_t^j + F^i y_t^i$
6: end for

The proposed scheme constitutes a distributed observer with constant gains whose online implementation requires minimal communication and computation. The observer gains are computed offline from the asymptotic error covariance of the global distributed BLUE estimator and remain fixed during operation. As a result, the online execution requires only the exchange of state estimates among neighboring nodes, with no propagation or fusion of covariance information.

The design explicitly targets steady-state operation and provides a clear separation between offline observer synthesis and online state estimation. Within the class of fixed-gain distributed observers compatible with the network topology, the proposed approach achieves the same steady-state performance as the global distributed BLUE estimator whenever convergence is attained, while avoiding the conservatism and communication overhead typically associated with covariance-intersection and consensus-based filtering methods.

Remark (Convergence considerations).
A formal proof of convergence of the global covariance recursion (9) and of the resulting fixed-gain distributed observer is beyond the scope of this paper. Nevertheless, numerical experiments consistently indicate convergence of the covariance iteration and stable steady-state behavior of the proposed observer. These empirical observations suggest that the proposed fixed-gain design is robust in practice, even though a complete theoretical characterization of its convergence properties remains an open problem.

5 Numerical results

In this section, we illustrate the performance of the proposed distributed observer through numerical experiments. The objective is twofold: to empirically assess the steady-state behavior of the fixed-gain design and to compare its estimation performance with that of a consensus-based distributed Kalman filtering method Battistelli et al. [2015b]. In addition, we report empirical convergence properties of the global covariance iteration used for the offline gain computation.

Simulation setup

We consider a distributed system of the form (4)–(5) that is collectively observable but not locally observable. The network consists of $N = 20$ nodes. The system dynamics are defined by the identity matrix $A := I_n$, corresponding to decoupled agent dynamics.

Let $e^i$ denote the canonical row vector with a 1 in position $i$ and zeros elsewhere. The local observation matrices are defined as

$$C^i := \begin{bmatrix} e^{i\top} - e^{(i+1)\top} \\ e^{(i-1)\top} - e^{i\top} \end{bmatrix},$$

except for $i = 1$, where $i - 1$ is replaced by $N$, and for $i = N$, where $C^N := e^{N\top}$.

This sensing configuration introduces coupling through the measurements while preserving decoupled dynamics, resulting in collective but not local observability and therefore requiring distributed state estimation.

Process and measurement noise are modeled as zero-mean Gaussian disturbances with covariances $Q = I_{2N}$ and $R^i = I_{m_i}$, respectively.
The initial state is drawn from a Gaussian distribution with covariance $P_0 = 10^6 I_{2N}$. The communication network is an undirected ring, where each node exchanges information with its two immediate neighbors.

Empirical convergence of the global covariance

The fixed observer gains are computed offline from the steady-state solution of the global covariance recursion. Since a general convergence proof is not available, we assessed convergence empirically through a Monte Carlo study. Using the same baseline configuration defined previously, we performed 10 independent runs with randomized system and measurement matrices. The covariance iteration was deemed converged when the Frobenius norm of the difference between successive iterates fell below $10^{-4}$.

Across all runs, convergence was consistently observed. The number of iterations required to reach convergence ranged from 36 to 124, with a mean of 73.3 iterations and a median of 65.5 iterations. These results indicate that, for the considered class of systems and network topologies, the offline covariance computation converges reliably within a moderate number of iterations, supporting the practical feasibility of the proposed fixed-gain design.

Estimation performance

Figure 1 compares the average estimation error norm

$$\frac{1}{N} \sum_{i \in \mathcal{N}} \left\| \hat{x}_t^i - x_t \right\|$$

obtained with different estimation strategies: a centralized Kalman filter, the consensus-based distributed Kalman filter of Battistelli et al. [2015b], the proposed distributed estimator with time-varying gains, and its steady-state fixed-gain counterpart.

[Figure 1: Average norm of the estimation errors for different estimation strategies. Horizontal axis: $t$ (0 to 80); vertical axis: estimation error norm (ticks at $10^1$, $10^2$, $10^3$). Legend: Centralized Kalman filter, Consensus-based DKF, Proposed fixed-gain observer.]
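The error metric plotted in Figure 1 is simple to evaluate at a single time step. The sketch below is one possible way to do it: the helper name `average_error_norm` and the two-node, two-state toy data are assumptions made for illustration and are not the paper's simulation.

```python
import math


def average_error_norm(estimates, state):
    """Return (1/N) * sum_i ||x_hat_i - x|| over the local estimates.

    Hypothetical helper: `estimates` is a list with one state estimate
    (a list of floats) per node, `state` is the true state vector.
    """
    total = 0.0
    for x_hat in estimates:
        # Euclidean norm of the local estimation error at this node.
        total += math.sqrt(sum((xh - x) ** 2 for xh, x in zip(x_hat, state)))
    return total / len(estimates)


# Toy data (assumed): node 1 is exact, node 2 is off by (3, 4).
state = [1.0, 2.0]
estimates = [[1.0, 2.0], [4.0, 6.0]]
avg = average_error_norm(estimates, state)
```

Here the two local error norms are 0 and 5, so the metric equals 2.5. Evaluating it at every time step of a simulation gives one curve of the kind compared in Figure 1.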
The results show that the proposed fixed-gain distributed observer consistently achieves lower steady-state estimation error norms than the consensus-based distributed Kalman filter. Moreover, its performance closely matches that of the time-varying distributed formulation, while requiring substantially reduced online computation and communication. These observations provide empirical evidence that the proposed fixed-gain design attains the steady-state performance targeted by the offline covariance synthesis, in agreement with the convergence discussion presented earlier.

6 Conclusion

This paper addressed the synthesis of fixed-gain distributed observers for linear time-invariant systems under collective observability and limited communication. Using the BLUE formulation as a design tool, observer gains were computed offline from the steady-state solution of a global covariance recursion, leading to a distributed observer that exchanges only state estimates during online operation.

Numerical results demonstrated that the proposed observer exhibits reliable steady-state behavior and consistently outperforms a consensus-based distributed Kalman filtering approach in terms of estimation accuracy, while significantly reducing online computational and communication requirements. Although a general convergence proof for the covariance recursion remains an open problem, empirical evidence indicates that convergence is achieved in a moderate number of iterations for the considered scenarios. These results highlight the practical effectiveness of fixed-gain observer synthesis as a viable alternative to adaptive distributed filtering in LTI settings.
Acknowledgments

The work of Francisco Rego was funded by national funds through FCT – Fundação para a Ciência e a Tecnologia, I.P., under projects/supports UID/06486/2025 (https://doi.org/10.54499/UID/06486/2025), UID/PRR/06486/2025 (https://doi.org/10.54499/UID/PRR/06486/2025), and UID/PRR2/06486/2025 (https://doi.org/10.54499/UID/PRR2/06486/2025), and by COFAC/ILIND/COPELABS through the Seed Funding Program (7th edition), project LoRaMAR (https://doi.org/10.62658/COFAC/ILIND/COPELABS/1/2025).

A Proof of Theorem 1

Proof. After the agents communicate among themselves, the nodes compute $\tilde{x}_t^i$, the BLUE estimate given the estimates of the neighbours, i.e. given $\bar{x}_t^j$, $j \in \mathcal{N}_i$, where $\mathcal{N}_i$ is the set of neighbours of $i$. The estimate $\tilde{x}_t^i$ is the BLUE of $x_t$ such that

$$\eta_i \bar{x}_t = \mathbf{1}_i x_t + \epsilon_t$$

with $\epsilon_t \sim \mathcal{N}\left(0, \eta_i \bar{P}_t \eta_i^T\right)$. Therefore from (1), if $\bar{P}_t^{ii}$ is full rank, then

$$\tilde{x}_t^i = \left(\tilde{\Omega}_t^i\right)^{-1} \mathbf{1}_i^T \left(\eta_i \bar{P}_t \eta_i^T\right)^{\dagger} \eta_i \bar{x}_t$$

and $\tilde{x}_t^i$ has the following characteristics:

$$E\left[\tilde{x}_t^i - x_t\right] = 0, \qquad E\left[\left(\tilde{x}_t^i - x_t\right)\left(\tilde{x}_t^i - x_t\right)^T\right] = \left(\tilde{\Omega}_t^i\right)^{-1},$$

where $\tilde{\Omega}_t^i := \mathbf{1}_i^T \left(\eta_i \bar{P}_t \eta_i^T\right)^{\dagger} \mathbf{1}_i$. The estimate $\tilde{x}_t^i$ can also be expressed as

$$\tilde{x}_t^i = \left(\tilde{\Omega}_t^i\right)^{-1} \mathbf{1}_i^T \left(\eta_i \bar{P}_t \eta_i^T\right)^{\dagger} \sum_{j \in \mathcal{N}_i} \Gamma_{ij} \bar{x}_t^j.$$

After taking a measurement, the nodes compute $\hat{x}_t^i$, the BLUE of $x_t$ given $\tilde{x}_t^i$ and $y_t^i$. This can be obtained directly as

$$\hat{x}_t^i = \left(\Omega_t^i\right)^{-1} \left( \tilde{\Omega}_t^i \tilde{x}_t^i + C^{iT} (R^i)^{-1} y_t^i \right).$$

Finally, the nodes compute the prediction, i.e. the estimate of $x_{t+1}$ given $\hat{x}_t^i$, which is simply

$$\bar{x}_{t+1}^i = A \hat{x}_t^i.$$

It now remains to compute the next global covariance matrix $\bar{P}_{t+1}$. For this purpose we analyze the error dynamics, i.e. the dynamics of $\bar{e}_t^i := \bar{x}_t^i - x_t$. The dynamics of the estimate $\bar{x}_t^i$ can be written as follows:

$$\bar{x}_{t+1}^i = A \left(\Omega_t^i\right)^{-1} \sum_{j \in \mathcal{N}_i} \mathbf{1}_i^T \left(\eta_i \bar{P}_t \eta_i^T\right)^{\dagger} \Gamma_{ij} \bar{x}_t^j + A \left(\Omega_t^i\right)^{-1} S^i x_t + A \left(\Omega_t^i\right)^{-1} C^{iT} (R^i)^{-1} v_t^i.$$
Given that $\tilde{\Omega}_t^i = \sum_{j \in \mathcal{N}_i} \mathbf{1}_i^T \left(\eta_i \bar{P}_t \eta_i^T\right)^{\dagger} \Gamma_{ij}$ (from the fact that $\sum_{j \in \mathcal{N}_i} \Gamma_{ij} = \mathbf{1}_i$) and that $\Omega_t^i = \tilde{\Omega}_t^i + S^i$, the state dynamics can be expressed as

$$x_{t+1} = A x_t + w_t = A \left(\Omega_t^i\right)^{-1} \sum_{j \in \mathcal{N}_i} \mathbf{1}_i^T \left(\eta_i \bar{P}_t \eta_i^T\right)^{\dagger} \Gamma_{ij} x_t + A \left(\Omega_t^i\right)^{-1} S^i x_t + w_t.$$

The error dynamics can then be written as

$$\bar{e}_{t+1}^i = A \left(\Omega_t^i\right)^{-1} \sum_{j \in \mathcal{N}_i} \mathbf{1}_i^T \left(\eta_i \bar{P}_t \eta_i^T\right)^{\dagger} \Gamma_{ij} \bar{e}_t^j + A \left(\Omega_t^i\right)^{-1} C^{iT} (R^i)^{-1} v_t^i - w_t. \tag{11}$$

Defining $v_t = \mathrm{col}(v_t^i)$ yields

$$\bar{e}_{t+1} = T_t \bar{e}_t + K v_t - \left(\mathbf{1}_N \otimes I_n\right) w_t,$$

with $K := \mathrm{diag}\left(A \left(\Omega_t^i\right)^{-1} C^{iT} (R^i)^{-1}\right)$. Finally, since $v_t$ and $w_t$ are zero-mean and uncorrelated with $\bar{e}_t$ and with each other, taking the covariance of both sides gives the update law for $\bar{P}_t$ as in (9).

References

I. F. Akyildiz, W. Su, Y. Sankarasubramaniam, and E. Cayirci. A survey on sensor networks. IEEE Communications Magazine, 40(8):102–114, 2002.

A. Bahr, J. J. Leonard, and M. F. Fallon. Cooperative localization for autonomous underwater vehicles. The International Journal of Robotics Research, 28(6):714–728, 2009.

Giorgio Battistelli and Luigi Chisci. Kullback–Leibler average, consensus on probability densities, and distributed state estimation with guaranteed stability. Automatica, 50(3):707–718, 2014. doi: 10.1016/j.automatica.2013.11.042.

Giorgio Battistelli, Luigi Chisci, Giovanni Mugnai, Alfonso Farina, and Antonio Graziano. Consensus-based linear and nonlinear filtering. IEEE Transactions on Automatic Control, 60(5):1410–1415, 2015a. doi: 10.1109/TAC.2014.2357135.

Giorgio Battistelli, Luigi Chisci, Giovanni Mugnai, Alfonso Farina, and Antonio Graziano. Consensus-Based Linear and Nonlinear Filtering. IEEE Transactions on Automatic Control, 60(5):1410–1415, May 2015b. ISSN 0018-9286, 1558-2523. doi: 10.1109/TAC.2014.2357135.

Ruggero Carli, Alessandro Chiuso, Luca Schenato, and Sandro Zampieri. Distributed Kalman filtering using consensus strategies. In Proceedings of the 46th IEEE Conference on Decision and Control (CDC), 2007.

Simon J. Julier and Jeffrey K. Uhlmann.
A non-divergent estimation algorithm in the presence of unknown correlations. In Proceedings of the 1997 American Control Conference (ACC), pages 2369–2373, Albuquerque, NM, USA, 1997.

T. Kailath, A. H. Sayed, and B. Hassibi. Linear estimation, volume 1. Prentice Hall, Upper Saddle River, NJ, 2000.

Reza Olfati-Saber. Distributed Kalman filter with embedded consensus filters. In Proceedings of the 44th IEEE Conference on Decision and Control (CDC), pages 8179–8184, 2005. doi: 10.1109/CDC.2005.1583486.

C. R. Rao. Linear statistical inference and its applications. Wiley, New York, 1973.

Francisco FC Rego. Distributed observers for LTV systems: A distributed constructibility Gramian based approach. Automatica, 155:111117, 2023.

Francisco FC Rego, Ye Pu, Andrea Alessandretti, A Pedro Aguiar, Antonio M Pascoal, and Colin N Jones. Design of a distributed quantized Luenberger filter for bounded noise. In 2016 American Control Conference (ACC), pages 6393–6398. IEEE, 2016.

J. M. Soares, A. P. Aguiar, A. M. Pascoal, and A. Martinoli. Joint ASV/AUV range-based formation control: Theory and experimental results. In 2013 IEEE International Conference on Robotics and Automation (ICRA), pages 5579–5585, 2013.

S. P. Talebi and S. Werner. Distributed Kalman filtering and control through embedded average consensus information fusion. IEEE Transactions on Automatic Control, 64(10):4396–4403, 2019. doi: 10.1109/TAC.2019.2897887.

M. Verhaegen and V. Verdult. Filtering and system identification: A least squares approach. Cambridge University Press, 2007.

N. Xu. A survey of sensor network applications. IEEE Communications Magazine, 40(8):102–114, 2002.
