Calorimeter Shower Superresolution with Conditional Normalizing Flows: Implementation and Statistical Evaluation

In High Energy Physics, detailed calorimeter simulations and reconstructions are essential for accurate energy measurements and particle identification, but their high granularity makes them computationally expensive. Developing data-driven technique…

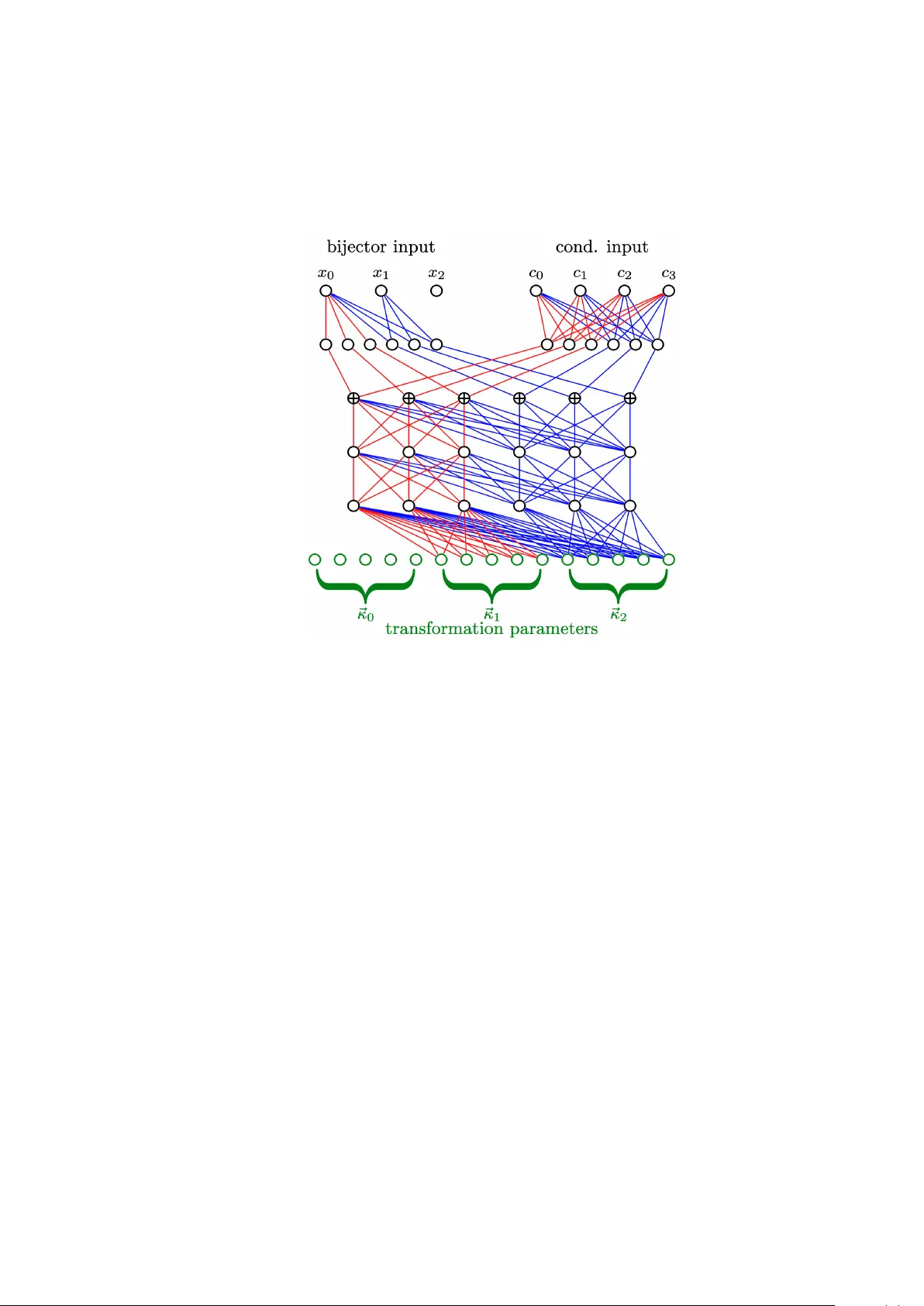

Authors: Andrea Cosso