Deep Learning Aided Vision System for Planetary Rovers

This study presents a vision system for planetary rovers, combining real-time perception with offline terrain reconstruction. The real-time module integrates CLAHE enhanced stereo imagery, YOLOv11n based object detection, and a neural network to esti…

Authors: Lomash Relia, Jai G Singla, Amitabh

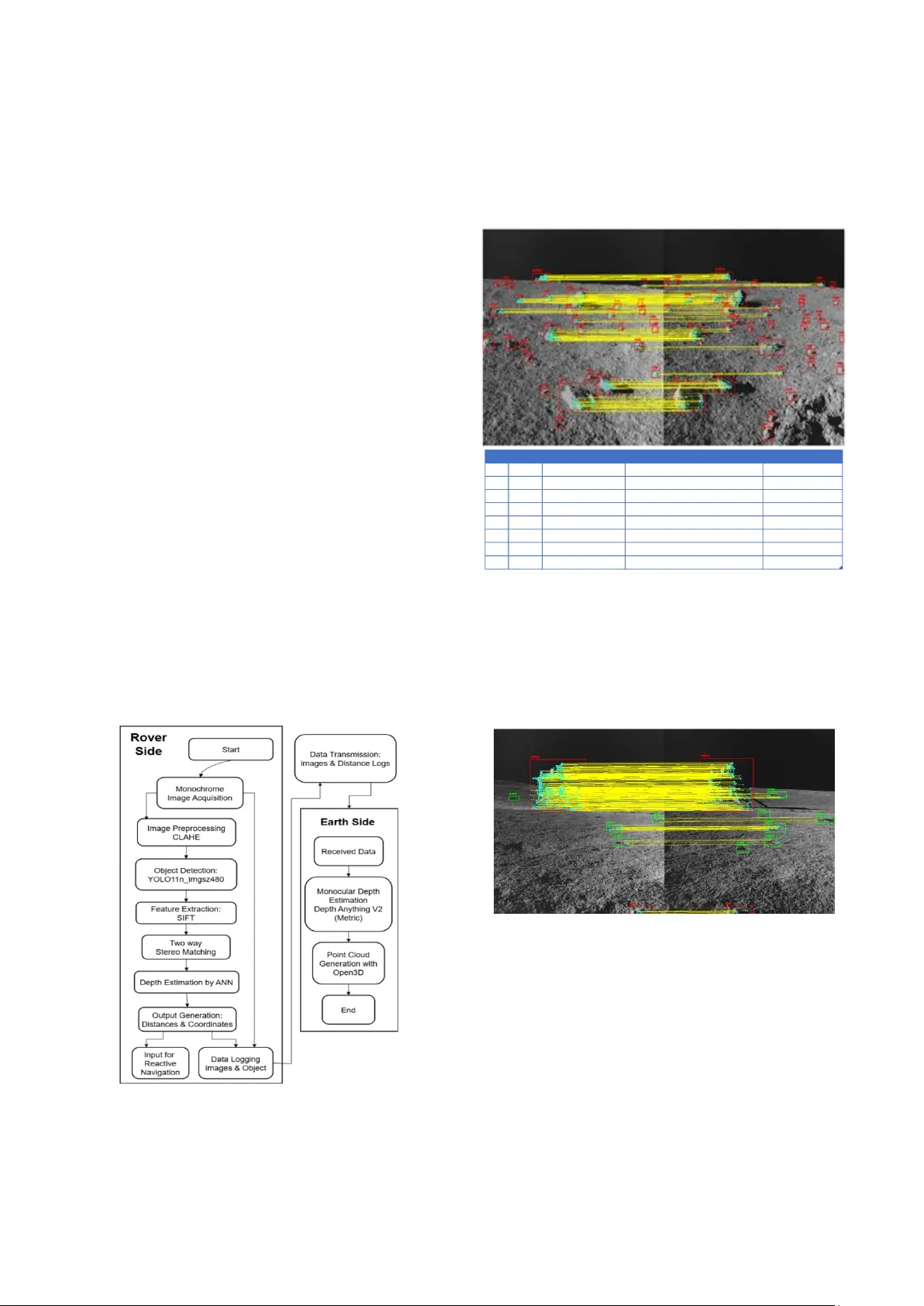

XXX -X-XXXX- XXXX -X/XX/$XX. 00 ©20XX IEEE Deep Learning Aided V ision System for Planetary Rovers Lomash Relia Department of Computer Engineering Devang Patel Institute of Advanced Technology and Research Anand, India lomashrelia@gmail.com Nitant Dube Space Applications Centre Indian Space Research Organization Ahmedabad, India nitant@sac.isro.gov.in Jai G Sin gla Space Applications Centre Indian Space Research Organization Ahmedabad, India jaisingla@sac.isro.gov.in Amitabh Space Applications Centre Indian Space Research Organization Ahmedabad, India amitabh@sac.isro.gov.in Abstract — This study presents a v ision system for planetary rovers, combining real-time perception with offline terrain reconstruction. The real-time module integrates CLAHE- enhanced stereo imagery, YOLOv11n-based object detection, and a neural network to esti mate object distances. The offline module uses the Depth Anything V2 (metric) monocular depth estimation model to generate depth m aps from captured images, which are fused into dense point clouds using Open3D. Real- world distance estima tes from the real-time pipeline provide reliable metric context alongside the qu alitative reconstructions. Evaluation on Chandrayaan-3 NavCam stereo imagery, benchmarked against a CA HV-based utility, shows that th e neural network achieves a medi an depth err or of 2.26 cm w ithin a 1 – 10 meter range. The object detection model maintains a balanced precision – recall trade-off on grayscale lunar scenes. This architecture offers a scalable, compute-efficient vision solution for autonomous planetary exploration. Keywords — planetary rover, stereo vision, ob ject detection, monocular depth estimation, lunar navigation, deep learning I. I NTRODUCTIO N Autonomous navigation presents a sign ificant challenge for planetary e xploration missions, wher e communication delays and unstructured extraterrestrial environments necessitate real-time perce ption and d ecision - making cap abilities. Planetary rover s must be capable of reliably detecting and classifyin g o bstacles, assessing terrain traversability, and supporting scientific operatio ns with minimal g round interven tion. Am ong the sensing mo dalities available, ster eo vision h as emerged as a preferred so lution, offering passive depth p erception with lower power requirements com pared to active sensors such as LiDAR. The successful deployment of stereo vision systems in missions such as NASA’s Mars Exploration Rover s— Sojourner, Curiosity, and Perseverance [1] — as well as ISRO’s Chandrayaan - 3 [2], has demonstrated the maturity of this technology for hazar d detection and terrain mapping [3] . However, the computational l imitations o f rover har dware, combined with the sparse visual features of lu nar and Martian terrains, continue to constrain the effectiveness of real -time, high-f idelity stereo processing. Classical geometric approaches such as CAHVOR modelling with least -squares triangulatio n d eliver high d epth accuracy [3] but are computatio nally intensive, making th em impractical for low-power onboard execution. Similarly, dense stereo match ing algorithms like Semi- Global Matching (SGM) [4] degrade in performance on low -texture regolith surfaces. Recent machine learning -based approaches [5], [6], [7], [8] offer promising alternatives b ut remain largely challenging for real-time use in harsh extraterrestrial environments d ue to their power and m emory requirements. We propo se a stereo vision fram ework tailored to the constraints of planetary rover s. The real - time module performs lightweight ob ject detection using YOLO11n [9] and distance estimatio n v ia a n eural network trained on s tereo keypoints. In p arallel, a secondar y offline pipelin e leverages monocular d epth estimation fo r high -resolu tion terrain reconstruction using captured imagery. The system is evaluated on Chandray aan -3 NavCam stereo imagery, demonstrating both compu tational eff iciency and metric accuracy suitab le for au tonomous navigatio n. By decoup ling immediate percep tion from post -mission analysis, the proposed fram ework enables reliable obstacle detection in th e field while supporting p assive gen eration of high -quality 3D reconstruction s for scientific applications. II. R ELATED W ORK Stereo vision has played a f oundational role in planetary rover autonomy since NAS A’s Mar s Exp loration Rovers (MER), which em ployed stereo camer as for real -time h azard detection and visual odometry [5]. Subsequent missions such as Curiosity and Perseverance advanced these capabilities by enabling enhanced 3D terrain mapping to support long-range navigation . More rec ently, stereo navigation camera (NavCam) imag ery from ISRO’s Chandray aan -3 Prag yan rover has been used to generate digital elevation models (DEMs) for lunar surface analysis [2]. Classical approaches to 3D reconstruction common ly rely on CAHV or CAHVOR camera models. Yak imovsky an d Cunningham [10 ] first introduced CAHV m odelling for stereo vision in spac e applications, using least- squares triangulation to compu te 3D coord inates. This in volves solving an ov erdetermined system of linear eq uations fo r each matched featu re p air from the stereo imag es — specifically using their pixel coordin ates — to estimate the real-world X, Y and Z po sitions with respect to origin of the defined world coordinate system. The CAHVOR extension accounts for le ns distortion and im proves modelling accuracy, but remains computationally expensive for large- scale keypoint matching. Feature -b ased correspondence methods such as SIFT o ffer robust matching under geometric and photometric tra nsformations [11]. De nse s tereo matching algorithms like Sem i-Glob al Matching (SGM) [4] offer full- field depth estimation but suffer from reduced reliability in low-textur e terrains like lunar regolith especially if the images are mo nochrome. To address these lim itations, r ecent studies h ave explored machine learning-based alternatives. Ligh tweight neural networks can approxim ate geom etric trian gulation and reduce computational overhead by o perating on in put features in batch mode. Object detec tion models like YOLO11 Nano [9] h ave shown effectiveness in p erforming fast h azard recognition with limited compute requirements. Likewise, mono cular depth estimation m odels such as De pth Anything V2 [12] off er high-resolutio n reconstru ctions from single grayscale images. This work brid ges the gap by introducing a vision pipeline that lev erages b oth par adigms: efficien t, r eal-time object detection and depth estimation using deep lear ning, coupled with offline monocular 3D reconstruction for comprehensive terrain co nstruction. Th e approach is validated on Chandrayaa n-3 Nav Cam stereo imag ery, demonstrating its feasibility for use in r esource-constrain ed p lanetary missions. III. M ETHODOLOGY The Chandrayaan -3 Pragyan rover was equipped with a calibrated stereo ca mera system th at captured monochrom e image p airs with a fixed b aseline of 0 .24 meter s, facilitating accurate d epth per ception in the lunar en vironment. The stereo setup featured a 39 - degree field of view, enabling coverage o f terrain with significant illumination variability caused by lunar shadows. As shown in Figure 1, Contr ast Limited Adaptive Histog ram Eq ualization (CLAHE) was applied to enhance local co ntrast, using a clip limit of 2.0 an d a tile grid size of 8×8. This preprocessing step signif icantly improved f eature visibility in low -textur e regolith regions, thereby en hancing the robustness of subseq uent detectio n and matching algor ithms. Figure 1 Pipeline Flowchart Figure 1 illustrates the overall flowch art of the propo sed dual- pipeline architectu re. On the rover side, imag es under go CLAHE-based preprocessing and are p assed to th e YOLO11n model for object detection. SIFT features are extracted within detected bounding boxes only and matched bidirectionally to identify corresponding objects. A n eural network then estimates the 3D p osition (X, Y, Z) fr om the matched features but we only u tilize the distance value as t he 2D ob ject po sitions are already defined by the b ou nding box coordinates . Objects lack ing at least 4 correspondence s due to illumination differen ces or limited overlap are excluded to maintain triang ulation reliability. Figure 2 Example detections and output table The output, as shown in Figure 2, includes object class, bounding box, and median distance per object, wh ich is logged and tr ansmitted along with images for Earth -side analysis. Offline, Dep th Anything V2 generates m onocular depth maps from the cap tured left images. Open3D fuses this output with the p reviously estimated 3D p oints to reconstruct a dense po int cloud of the lunar surface. Figure 3 Rocks, craters and artifacts detected in stereo images YOLO11n was selected for object d etection after a comparativ e analysis with YOLOv8 [13] and YOLOv12 [14], due to its lig htweight architecture and fast inference — qualities essential for deploymen t on resource - constrained planetary rovers. The mo del was trained using an open - source dataset of Apollo mission im ages [15], comprising 1,579 RGB images annotated with bo unding bo xes in COCO, YOLO, and Pascal V OC fo rmats. These images were converted to grayscale and labeled for three classes: crater s, rocks, and ar tifacts. An inp ut r esolution of 4 80×480 was chosen, offering an optimal balance b etween precision and recall fo r monoch rome imagery, while red ucing inferen ce time an d memory usage compared to th e stan dard 6 40×640 ID class median distance bbox left coordinates 1 rock 140.55 [341.32, 451.81, 435.37, 532.89] [410.14, 5 19.4] 7 rock 150.18 [539.34, 456.86, 583.08, 494.5] [563.05, 488.61] 6 rock 204.1 [704.77, 392.36, 742.36, 422.62] [725.35, 4 15.79] 4 rock 214.63 [470.27, 371.35, 518.99, 408.24] [505.19, 3 95.45] 2 rock 228.22 [479.11, 289.63, 598.28, 372.94] [562.92, 3 13.59] 9 rock 336.99 [93.39, 3 77.77, 1 22.44, 3 97.98] [120.05, 390.77] 0 rock 349.33 [346.96, 307.84, 402.03, 350.56] [378.67, 3 24.98] 3 artifact 950 [281.13, 208.25, 380.87, 254.53] [349.92, 2 38.2] setting. Most train ing hyperparameters followed Ultralytics' defaults, with adjustments includ ing a batch size of 16, an initial lear ning rate of 0 .001, and the use of aggressive data augmentatio ns to improve g eneralization. Training was conducted over 150 epochs with ear ly stop ping based on validation loss. Model generalization was evaluated usin g stereo image pair s from the Chandray aan- 3 Pragyan rover [16], verifying performance on unseen lunar terrain. Figure 4a. Inverse Proportionality of disparity and distances Figure 4 b. Artificial Neural Network for triangulation The regions within d etected bou nding boxes from both left and right images are designated as reg ions of interest (ROI s). SIFT features are extrac ted only within th ese ROIs, significantly reducing computational overhead and improving fea ture relevance. A two-way brute-force matcher is applied to match SIFT featu res bidirec tionally across the stereo pair. This helps ensure accurate i dentification of corresponding objects — e.g., correctly associating the same rock detected in both images. Objects detected outside the region of stereo overlap or those lacking sufficien t match es (fewer than four) are discarded. For each set of valid matched features, an artificial neural netwo rk (ANN) is used t o triangulate and estimate the 3D coo rdinates (X, Y, Z). The ANN takes a s input four values (x₁, y₁, x₂, y₂) and outputs the corresponding real-wo rld coordinates. Training data was generated using ISRO's CAHV- based triangulation utility on real stereo pairs. A simple analysis of this data revealed that the distance v alue is inver sely prop ortional to the difference in x pixel coordinates of a feature in the stereo image pairs, as shown in Figure 3a . Th e ANN arch itecture (figure 3b) includes three hidden layers with 128, 64, and 16 neurons respectively, each u sing Le aky ReLU activation, and is trained using Mean Absolu te Error (MAE) loss with the NAdam optim izer. An initial learning rate of 0.001 an d early stopping with patien ce of 10 were used. This com bination was ab le to achiev e minimum loss values an d g eneralization without overfitting, focusing on capturing the relation observed in Figure 3a . T he ANN achiev ed a training MAE of 0.15 centimetres. Figure 5 Point cloud of Vikram Lander at Shiv Shakti Point, Moon For offline terrain reconstru ction, the lef t g rayscale image is passed thr ough Dep th Anything V2 (metric var iant), which outputs a r elative depth map aligned with the inpu t resolution. This map is u sed with Open3D to con struct a dense 3D point cloud of the terrain. The fusion lev erages both the per -object metric distances estimated by the ANN and the dense dep th map o btained offline to c reate visually coherent and metrically consistent reconstructions. The final point cloud is color-coded by input image intensity for visualizatio n. IV. RESULTS AND DISCUSS IONS Figure 6 Object Detection on Lunar Terrain A comprehensive compar ison of YOLO model variants was conducted to ev aluate detection accuracy , model size, and deployment suitability on grayscale lunar imag ery. Among them, the YOLOv 11n_imgsz640 configuration exhibited the highest prec ision while maintain ing the smallest mod el footprint, making it ideal for applicatio ns wher e minim izing false positives and resource usage is critical. In contrast, YOLOv12s_img sz640 achieved th e h ighest recall (~0.64), prioritizing broader obstacle detec tion at the expense o f increased computational cost and par ameter co unt. Intermediate configurations such as YOL Ov11s_img sz480 offered a practical b alance betwe en r ecall an d precision, making them suitable for general -purpose scenarios. An increase in input r esolution consistently led to mod est g ains in detection accuracy, although with a significant rise in computation al load. Acr oss all test ed families — YOL Ov8, YOLOv11, and YOLOv12 — the perf ormance m etrics were closely matched; howev er, YOLOv 11 and YOLOv12 variants generally ou tperfor med YOL Ov8 in both accuracy and model compactn ess. Notably, YOLOv1 1n provided the highest p recision, while YOLOv12n delivered better recall, showcasing their complemen tary strengths. Input resolution played a critical role: models with 480×480 input s achiev ed substantially faster inference with min imal performan ce degradation compared to 640×640 variants. Overall, YOLOv11n_ imgsz480 emerged as the most favourab le choice, offering a strong balance between detection accuracy and computation al efficiency — well suited for real -time perception tasks on planetary rovers. Figure 7 Comparison of distance values derived from the image in f ig ure 2 - ANN vs. CAHV tool results Figure 8 True vs. predicted values of 3855 test samples The ANN -based triangulatio n meth od was evaluated against depth values generated by the standard CAHV -based geometric model. On a test set of 3,855 u nseen samples (Figure 8), the ANN achieved a median a bsolute error of 2.26 cm, with an inter quartile ran ge of 0. 91 – 5.58 cm — demonstrating reliab le performance in the critical 1 – 10 meter range relevant for planetary obstacle d etection. The mod el successfully preserved the inverse r elationship between x - coordinate disp arity and depth, en suring geometric consistency with stereo vision p rinciples. Unlike the CAHV pipeline, wh ich performs sequential tr iangulation for each feature pair, the ANN enables scalable, b atched inference through a single forward p ass, offer ing significant computation al efficiency. Notably, the ANN also d elivered stable p rediction s for distant objects: beyond 10 meters, the model consistently capped depth esti mates around this range, maintaining spatial coherence. In co ntrast, the CAHV tool often yielded inconsistent and un reliable depth valu es for different regions of the same ob ject wh en its distance exceeded 10 meters, resulting in spatial inco nsistency. Furthermor e, wh ile the legacy CAHV utility required manual selection of matchin g featu res in stereo pairs, the prop osed pipeline achieves full automation via YOL O -based object detection and SIFT -based keypoint matchin g. This automation streamlines the work flow, reduces manu al effort, and minimizes u ser-indu ced errors. V. C ONCLUSION AND FU TURE WORK The proposed vision system demonstrates an effective combination of lightweig ht real -time object d etection and depth estimation with high-resolution offline terrain reconstruction , making it w ell-su ited for planetary ro ver applications. Th e system achiev es reliable ob stacle detection and distance estimation under resource-co nstrained conditions. However, several components mer it further refinement. Th e ANN -based triang ulation model, wh ile accurate, is purely data -driven. Incorporating physics- informed neural n etworks could embed geometric con straints and enhance generalization. Additionally, the current framework lacks au tonomous control cap abilities. Future work sho uld explor e integrating lig htweight r einforcement learning (RL) policies for onboar d decision -making. Finally, coupling the vision system with visual odo metry, localization, an d mapping framewo rks tailored to lunar terrain would furth er strength en spatial awareness and navigation robustness. A CKNOWLEDGMENT The authors ex tend their since re g ratitude to the team members of Planetar y and Space Data Processing Division (PSPD) for their invaluable vision, continuous support, and insightful gu idance throughout this work . R EFERENCES [1] B. Wilcox and T. Nguyen, “Sojourner on Mars and Lessons Learned for Future Planetary Rovers,” presented at the International Conference On Environmental Systems, Jul. 1998, p. 98169 5. doi: 10.4271/981695. [2] K. V. Iyer, A. K. Prashar, M. S. Alurka r, S. V. Trivedi, A. S. A. Zinjani, and K. Suresh, “Digi tal Elevation Model Generation from Navigation Cameras of Chan drayaan- 3 Rover f or Rover mobili ty.,” 2024. [3] K. Di and R. Li, “CAHVOR camera model and its photogrammetri c conversion for planetary applications,” J. Geophys. Res. Planets , vol. 109, no. E4, p. 2003JE0021 99, Apr. 2004, doi: 10.1029/2003JE00 2199. [4] H. Hirschmull er, “S tereo Pro cessing by Semi global Matching and Mutual Information,” IEEE Trans. Pattern Anal. Mach. Intell. , vol. 3 0, no. 2, pp. 328 – 341, Feb. 2008, doi: 10.1109/TPAMI .2007.1166. [5] Yang Cheng, M. Maim one, and L . Matthi es, “Visual Odometry on the Mars Expl oration Ro vers,” in 2005 IE EE Intern ational C onference o n Systems, Man and Cybernetics , Waikoloa, HI, USA: IEEE, 2005, pp. 903 – 910. doi: 10.1109/ICSMC.20 05.1571261. [6] M. Azkarate, L. Gerdes, L. Joudr ier, and C. J. Pérez -del- Pulgar , “A GNC Architec ture for Planetar y Rovers wit h Autonomo us Navigation Capabilities,” in 2020 IE EE Internatio nal Conference on R obotics and Automation (ICRA) , May 2020, pp. 3003 – 3009. doi: 10.1109/ICRA4 0945.2020.919712 2. [7] D. P. Miller, D. J. At kinson, B. H . Wilcox, and A . H. Mish kin, “Autonomous Navigation and Control o f a Mars Rover,” IFAC Proc. Vol. , vol. 2 2, n o. 7, pp. 1 11 – 114, Jul. 1989, doi: 1 0.1016/S1474- 6670(17)53392- 3. [8] O. Lamarre and J. Kelly, “Overc oming the Challenges of Solar Rover Autonomy: Enabling Long - Duration Planetary Navigation,” 2018, arXiv . doi: 10.48 550/ARXIV .1805.05451. [9] G. J o cher and J. Qiu, U ltralytics YOLO11 . (2024). [Online]. Available: https://github.c om/ultralytics/ultra lytics [10] Y. Yakimovsky and R. Cunningham, “ A system for extracti ng three - dimensional measuremen ts from a st ereo pair of TV cameras,” Comput. Graph. Image Process. , vol. 7, no. 2, pp. 195 – 210, Apr. 1 978, doi: 10.1016/0 146-664X(78)90112- 0. [11] D. G. Lowe, “Distinctive Image Features from Scale -Invariant Keypoints,” Int. J. Comput. Vis. , vol. 60, no. 2, pp. 91 – 110, N ov. 2004, doi: 10.1023/B:VI SI.000002966 4.99615.94. [12] L. Yang et al. , “Depth Anything V2,” Oct. 20, 2024, a rXiv : arXiv:2406.0941 4. doi: 10.48550 /arXiv.2406.094 14. [13] G. Jocher, A. Chaurasia, and J. Qiu, Ultralytics YOLOv8 . (2023). [Online]. Avai lable: https://githu b.com/ultralytics/u ltralytics [14] Y. Tian, Q. Ye, and D. Doerma nn, YOLOv12: Atten tion-Centric Re al- Time Objec t Detec tors . (2025). [Online] . Available : https://github.c om/sunsmarterjie /yolov12 [15] vel tech multi tech , “Lunar Rover( 123) Dataset,” Roboflow U niverse . Roboflow, Nov. 2022. [Online] . Available: https://universe.r oboflow.com/vel-tech-mu lti-tech/lunar-rover- 123 [16] Indian Space Research Organisation (ISRO), “Chandrayaa n -3 Pragyan Rover Navigatio n Camera (NavCam) Level - 1 Image Archive.” 2 024. [Online]. Available: https://pradan.iss dc.gov.in/CH 3/Pragyan/NavCa m/ ID Class Predicted Distance (cm) - ANN Distan ce (cm) - CAHV Absolute Error ( cm) 1 r ock 140.55 141.23 0.68 7 r ock 150.18 154.64 4.46 6 r ock 204.10 203.44 0.66 4 r ock 214.63 213.87 0.76 2 r ock 228.22 231.24 3.03 9 r ock 336.99 340.86 3.87 0 r ock 349.33 351.45 2.12 3 a rtifact 95 0.00 3915.83 2965.83

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment