Sharp Concentration Inequalities: Phase Transition and Mixing of Orlicz Tails with Variance

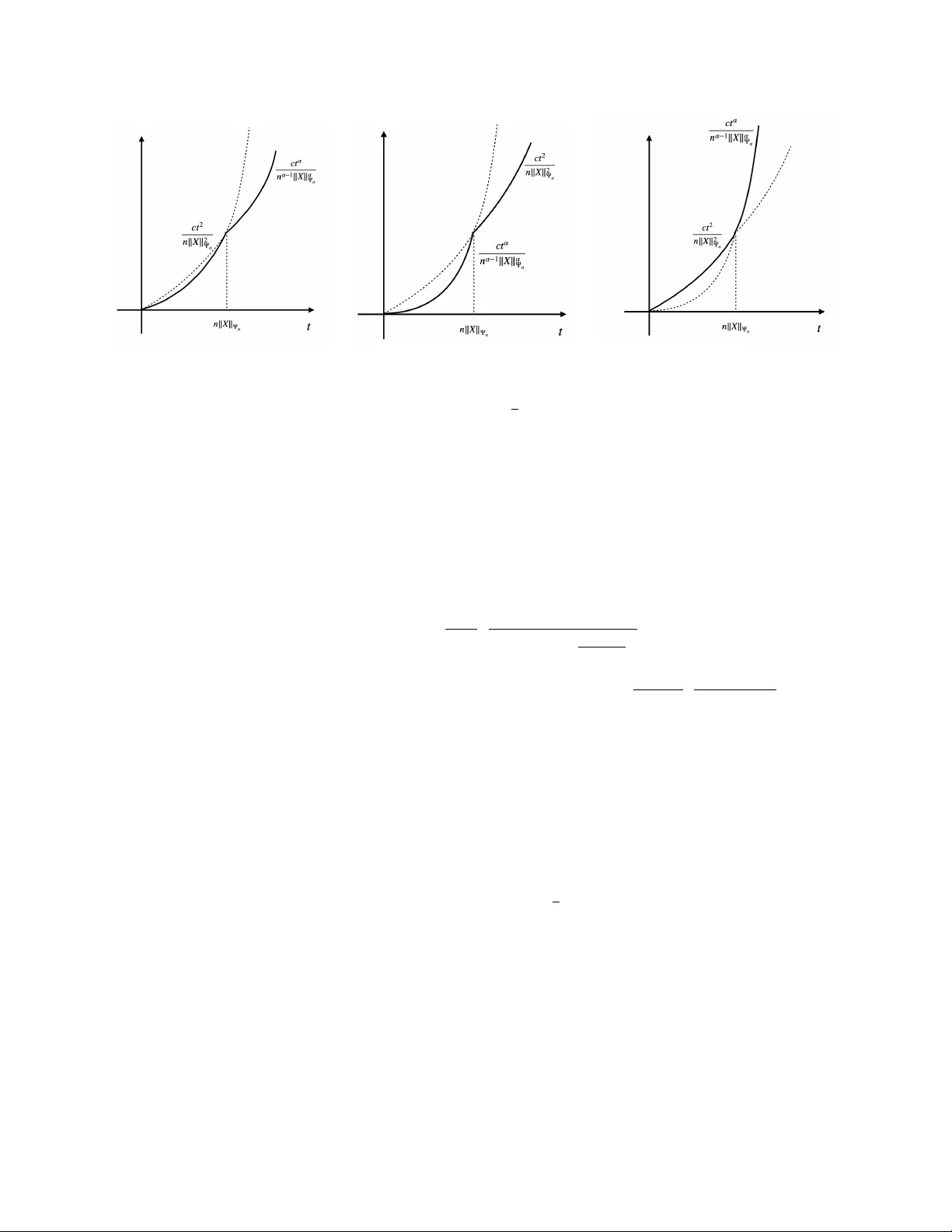

In this work, we investigate how to develop sharp concentration inequalities for sub-Weibull random variables, a class that includes sub-Gaussian and sub-exponential distributions. Although the random variables may not be sub-Gaussian, the tail probability aroun…

Authors: Yinan Shen, Jinchi Lv