On the Expressive Power of Contextual Relations in Transformers

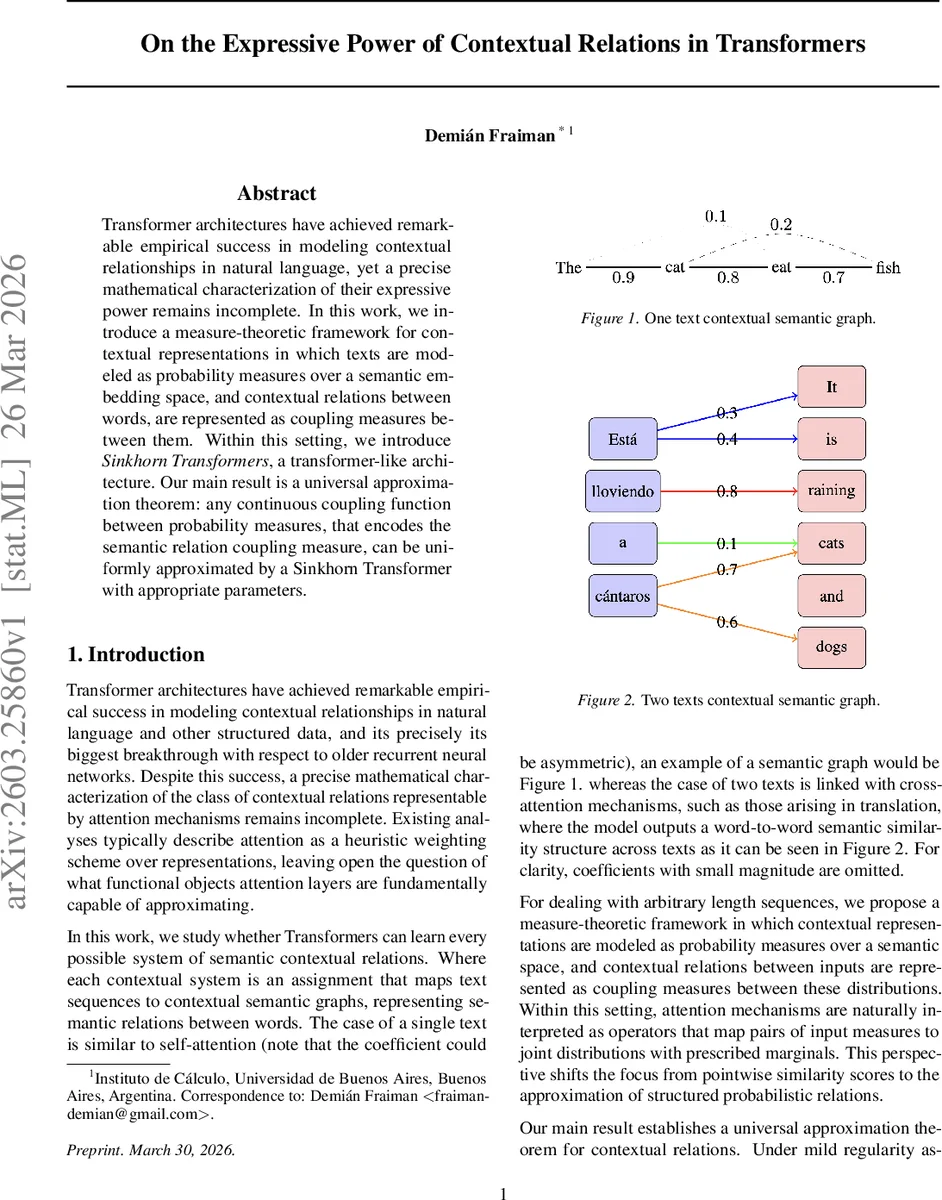

Transformer architectures have achieved remarkable empirical success in modeling contextual relationships in natural language, yet a precise mathematical characterization of their expressive power remains incomplete. In this work, we introduce a measure-theoretic framework for contextual representations in which texts are modeled as probability measures over a semantic embedding space, and contextual relations between words are represented as coupling measures between them. Within this setting, we introduce the Sinkhorn Transformer, a transformer-like architecture. Our main result is a universal approximation theorem: any continuous coupling function between probability measures that encodes a semantic relation as a coupling measure can be uniformly approximated by a Sinkhorn Transformer with appropriate parameters.

💡 Research Summary

The paper tackles a fundamental gap in the theoretical understanding of transformer models: while transformers excel empirically at capturing contextual relationships in language, there is no precise mathematical description of the class of functions they can represent. To fill this gap, the authors propose a measure‑theoretic framework that treats a piece of text as a probability measure over a compact semantic embedding space (X\subset\mathbb{R}^d). Concretely, a text consisting of tokens with embeddings ((x_1,\dots,x_n)) is represented by the empirical measure (\mu = \frac{1}{n}\sum_{i=1}^n \delta_{x_i}). This representation naturally handles variable‑length sequences and enables the use of tools from optimal transport and functional analysis.
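As a minimal sketch of this representation (the helper name `empirical_measure` and the toy 2-dimensional embeddings are illustrative assumptions, not taken from the paper), the empirical measure of a token sequence can be stored as a map from points to masses. The example shows that sequences of different lengths can yield the same measure:

```python
from collections import Counter
from fractions import Fraction

def empirical_measure(embeddings):
    """Return (1/n) * sum_i delta_{x_i} as a dict {point: mass}.

    Repeated embeddings are merged, so their masses add up.
    """
    n = len(embeddings)
    if n == 0:
        raise ValueError("a text must contain at least one token")
    counts = Counter(tuple(x) for x in embeddings)
    return {point: Fraction(c, n) for point, c in counts.items()}

# Two toy "texts" of different lengths (hypothetical embeddings).
short_text = [(0.0, 1.0), (1.0, 0.0)]
long_text = [(0.0, 1.0), (1.0, 0.0), (0.0, 1.0), (1.0, 0.0)]

mu_short = empirical_measure(short_text)
mu_long = empirical_measure(long_text)

print(mu_short == mu_long)      # both put mass 1/2 on each point
print(sum(mu_long.values()))    # total mass is 1
```

Exact fractions are used only to make the equality check free of rounding; a practical implementation would more likely keep arrays of points and weights.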

The core idea is to model the contextual relation between two texts (\mu) and (\nu) not as a pointwise similarity matrix but as a coupling (joint probability measure) (\pi) belonging to the set (\Pi(\mu,\nu)) of all measures on (X\times Y) with prescribed marginals (\mu) and (\nu). The authors define a “coupling system” as a continuous map

(F : \mathcal{P}(X)\times\mathcal{P}(Y) \to \mathcal{P}(X\times Y))

such that (F(\mu,\nu)\in\Pi(\mu,\nu)) for every pair of input measures. Continuity is taken with respect to the weak topology on probability measures, which is natural for probabilistic objects.
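The simplest coupling system is the independent coupling (F(\mu,\nu)=\mu\otimes\nu), which always lies in (\Pi(\mu,\nu)). The sketch below, for discrete measures given as weight lists, checks the marginal constraint numerically; the function names are illustrative assumptions:

```python
def product_coupling(mu, nu):
    """Independent coupling mu (x) nu as a matrix pi[i][j] = mu[i] * nu[j]."""
    return [[a * b for b in nu] for a in mu]

def is_coupling(pi, mu, nu, tol=1e-12):
    """Check that pi is nonnegative with row sums mu and column sums nu."""
    if len(pi) != len(mu) or any(len(row) != len(nu) for row in pi):
        return False
    if any(p < 0 for row in pi for p in row):
        return False
    rows_ok = all(abs(sum(row) - a) <= tol for row, a in zip(pi, mu))
    cols_ok = all(abs(sum(col) - b) <= tol for col, b in zip(zip(*pi), nu))
    return rows_ok and cols_ok

mu = [0.5, 0.25, 0.25]
nu = [0.5, 0.5]

pi = product_coupling(mu, nu)
# A joint measure with the right first marginal but the wrong second one.
not_pi = [[0.5, 0.0], [0.25, 0.0], [0.0, 0.25]]

print(is_coupling(pi, mu, nu))      # True
print(is_coupling(not_pi, mu, nu))  # False
```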

Next, they lift the standard multi‑head attention mechanism to a measure‑valued operator. For a probability measure (\mu) and a query point (x\in X), the attention map (\Gamma_\theta(\mu,x)) is defined as

(x + \sum_{h=1}^H W_h \int_X \text{softmax}^h_\theta(\mu;x,y)\,V_h y\,d\mu(y)),

where each head’s softmax is built from learned query/key matrices (Q_h,K_h) and normalizes over the support of (\mu). When (\mu) is discrete, this reduces exactly to the usual scaled‑dot‑product attention, showing that the definition is a true generalization. The authors also introduce the notions of “context maps” and “context operators” to formalize how a map (\Gamma) pushes forward an input measure to an output measure.
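The reduction to ordinary attention can be illustrated with a single-head sketch for a weighted discrete measure. The `1/sqrt(k)` scaling, the matrix shapes, and the name `measure_attention` are assumptions chosen to match the usual scaled dot-product convention; the paper's exact parametrization may differ. With uniform weights `1/n` the weights cancel in the normalization, which leaves the standard softmax over tokens:

```python
import math

def matvec(M, v):
    return [sum(m * a for m, a in zip(row, v)) for row in M]

def dot(u, v):
    return sum(a * b for a, b in zip(u, v))

def measure_attention(x, points, weights, Q, K, V, W):
    """Single-head x + W * integral softmax(mu; x, y) V y dmu(y)
    for the discrete measure mu = sum_j weights[j] * delta_{points[j]}."""
    q = matvec(Q, x)
    scale = math.sqrt(len(q))
    scores = [dot(q, matvec(K, y)) / scale for y in points]
    top = max(scores)  # subtract the max for numerical stability
    kernel = [w * math.exp(s - top) for w, s in zip(weights, scores)]
    total = sum(kernel)  # normalization over the support of mu
    attn = [k / total for k in kernel]
    values = [matvec(V, y) for y in points]
    context = [sum(a * v[i] for a, v in zip(attn, values))
               for i in range(len(values[0]))]
    return [xi + ui for xi, ui in zip(x, matvec(W, context))]

I2 = [[1.0, 0.0], [0.0, 1.0]]
x = [1.0, 0.0]

# A 3-token sequence with a repeated token, as a uniform empirical measure ...
out_tokens = measure_attention(
    x, [[1.0, 0.0], [0.0, 1.0], [0.0, 1.0]], [1 / 3] * 3, I2, I2, I2, I2)
# ... and the same measure written with merged points and weights.
out_merged = measure_attention(
    x, [[1.0, 0.0], [0.0, 1.0]], [1 / 3, 2 / 3], I2, I2, I2, I2)

print(out_tokens, out_merged)
```

The two outputs agree up to floating-point rounding because the operator depends only on the measure, not on how its atoms are listed.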

A deep transformer is then described as a composition of such context maps (the attention layers) interleaved with pointwise feed‑forward networks (MLPs). This mirrors the architecture of standard transformers but now operates on the space of probability measures.

The central theoretical contribution is a universal approximation theorem for coupling systems. The authors first characterize the functional space

(C = C(\mathcal{P}(X)\times\mathcal{P}(Y), \mathcal{M}(X\times Y)))

of continuous maps from pairs of measures to signed Radon measures, equipped with the uniform convergence topology. Within this space they identify the affine subspace (A) of maps whose values have the prescribed marginals (\mu) and (\nu), and the convex closed set (C_P) of maps taking values in probability measures. Their intersection (\mathcal{A}_\mathcal{P}=A\cap C_P) is precisely the set of admissible coupling systems; it is convex and closed.

To prove density of a concrete class of models, the paper leverages entropy‑regularized optimal transport (the Sinkhorn algorithm). For a continuous cost function (c) and regularization parameter (\varepsilon>0), the Sinkhorn operator (S_{c,\varepsilon}) maps a pair ((\mu,\nu)) to the unique minimizer (\pi_\varepsilon) of

(\min_{\pi\in\Pi(\mu,\nu)} \int c\,d\pi + \varepsilon\,\mathrm{KL}(\pi\,\|\,\mu\otimes\nu)).

Key properties (Lipschitz continuity in the cost, stability under weak convergence of the marginals) are cited from recent work by Nutz & Wiesel (2022).
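For discrete measures the minimizer has a well-known product form, and the Sinkhorn algorithm alternately rescales it to match each marginal. The block below writes this out; the symbols (a_i), (b_j), and (C_{ij}) are notation introduced here for illustration and do not appear in the summary above.

```latex
% Discrete case: \mu = \sum_i \mu_i \delta_{x_i}, \quad
%                \nu = \sum_j \nu_j \delta_{y_j}, \quad C_{ij} = c(x_i, y_j).
\begin{align*}
\pi_\varepsilon(x_i, y_j) &= a_i \, e^{-C_{ij}/\varepsilon} \, b_j \, \mu_i \nu_j, \\
a_i &\leftarrow \Big( \sum_j e^{-C_{ij}/\varepsilon} \, b_j \, \nu_j \Big)^{-1}
      && \text{(enforces the first marginal } \mu\text{)}, \\
b_j &\leftarrow \Big( \sum_i e^{-C_{ij}/\varepsilon} \, a_i \, \mu_i \Big)^{-1}
      && \text{(enforces the second marginal } \nu\text{)}.
\end{align*}
```

Iterating the two updates converges to the scalings of the unique minimizer (\pi_\varepsilon).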

Lemma 5.4 shows that any coupling (\pi) can be approximated arbitrarily well (in the weak topology) by a sequence of Sinkhorn plans with suitably chosen continuous costs. The proof proceeds by first approximating (\pi) with an absolutely continuous plan (\tilde\pi) and then smoothing its density to obtain a continuous function. Theorem 5.5 then uses an approximate selection theorem to construct, for any target coupling system (F), a family of continuous costs whose Sinkhorn plans converge uniformly to (F). Consequently, the family (\{S_{c}\}_{c\in C(X\times Y)}) is dense in (\mathcal{A}_\mathcal{P}).
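One way to see why a plan with a positive continuous density is within reach of Sinkhorn plans is the following standard identity, which is not necessarily the argument used in the paper: if (\pi) has density (p>0) with respect to (\mu\otimes\nu), then the cost (c=-\varepsilon\log p) turns the regularized objective into (\varepsilon\,\mathrm{KL}(\cdot\,\|\,\pi)), whose minimizer is (\pi) itself. The discrete sketch below checks this numerically; the function name `sinkhorn` and the toy plan are assumptions for illustration:

```python
import math

def sinkhorn(cost, mu, nu, eps=1.0, n_iter=100):
    """Entropic OT plan for discrete marginals, with reference measure mu (x) nu."""
    n, m = len(mu), len(nu)
    K = [[math.exp(-cost[i][j] / eps) for j in range(m)] for i in range(n)]
    a, b = [1.0] * n, [1.0] * m
    for _ in range(n_iter):
        a = [1.0 / sum(K[i][j] * b[j] * nu[j] for j in range(m)) for i in range(n)]
        b = [1.0 / sum(K[i][j] * a[i] * mu[i] for i in range(n)) for j in range(m)]
    return [[a[i] * K[i][j] * b[j] * mu[i] * nu[j] for j in range(m)]
            for i in range(n)]

# A strictly positive target plan and its marginals (toy numbers).
target = [[0.3, 0.1],
          [0.2, 0.4]]
mu = [sum(row) for row in target]
nu = [sum(col) for col in zip(*target)]

eps = 1.0
# Cost built from the density of the target with respect to mu (x) nu.
cost = [[-eps * math.log(target[i][j] / (mu[i] * nu[j])) for j in range(2)]
        for i in range(2)]

recovered = sinkhorn(cost, mu, nu, eps=eps)
max_err = max(abs(recovered[i][j] - target[i][j])
              for i in range(2) for j in range(2))
print(max_err)  # tiny: the Sinkhorn plan reproduces the target up to rounding
```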

Armed with this universality result, the authors propose the Sinkhorn Transformer. The architecture retains standard transformer layers (self‑attention, feed‑forward, layer norm) for all but the final interaction stage. At the top layer, instead of a softmax‑based attention matrix, the model computes a cost matrix from the learned representations, applies the Sinkhorn algorithm (with a fixed regularization (\varepsilon=1)), and outputs the resulting coupling (\pi). This coupling directly encodes the semantic relation between the two input texts and can be used for downstream tasks such as cross‑lingual alignment, document‑document similarity, or construction of semantic graphs.
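A rough sketch of such a final interaction stage is given below. The summary does not specify how the cost matrix is computed from the representations, so the squared Euclidean cost, the uniform marginals, and the name `sinkhorn_head` are all assumptions; only the fixed regularization (\varepsilon=1) comes from the description above.

```python
import math

def sq_dist(u, v):
    return sum((a - b) ** 2 for a, b in zip(u, v))

def sinkhorn_head(reps_x, reps_y, eps=1.0, n_iter=500):
    """Coupling between two token sequences from their final representations.

    Assumptions: cost = squared Euclidean distance, uniform token weights.
    """
    n, m = len(reps_x), len(reps_y)
    mu, nu = [1.0 / n] * n, [1.0 / m] * m
    K = [[math.exp(-sq_dist(x, y) / eps) for y in reps_y] for x in reps_x]
    a, b = [1.0] * n, [1.0] * m
    for _ in range(n_iter):
        a = [1.0 / sum(K[i][j] * b[j] * nu[j] for j in range(m)) for i in range(n)]
        b = [1.0 / sum(K[i][j] * a[i] * mu[i] for i in range(n)) for j in range(m)]
    return [[a[i] * K[i][j] * b[j] * mu[i] * nu[j] for j in range(m)]
            for i in range(n)]

# Hypothetical top-layer representations of two short texts.
reps_x = [[0.0, 0.0], [2.0, 0.0]]
reps_y = [[2.0, 0.1], [0.1, 0.0], [1.0, 0.0]]

pi = sinkhorn_head(reps_x, reps_y)

# For each token of the first text, the token of the second text
# that receives the most coupling mass.
best = [max(range(len(row)), key=row.__getitem__) for row in pi]
print(best)
```

Unlike a row-wise softmax, the output is constrained in both directions: each row of `pi` sums to `1/n` and each column to `1/m`, so no token of either text can be ignored.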

In summary, the paper provides a rigorous functional‑analytic foundation for transformer expressivity, shifting the focus from pointwise vector mappings to the approximation of joint probability measures. By proving that Sinkhorn‑augmented transformers are universal approximators of continuous coupling systems, it bridges the gap between deep learning practice and optimal transport theory, offering a principled way to interpret and design models that learn rich, structured semantic relations. The work opens avenues for future research on training dynamics, regularization, and extensions to multimodal or graph‑structured data where coupling‑based representations are natural.
