A generalized Bayesian approach to multiple changepoint analysis

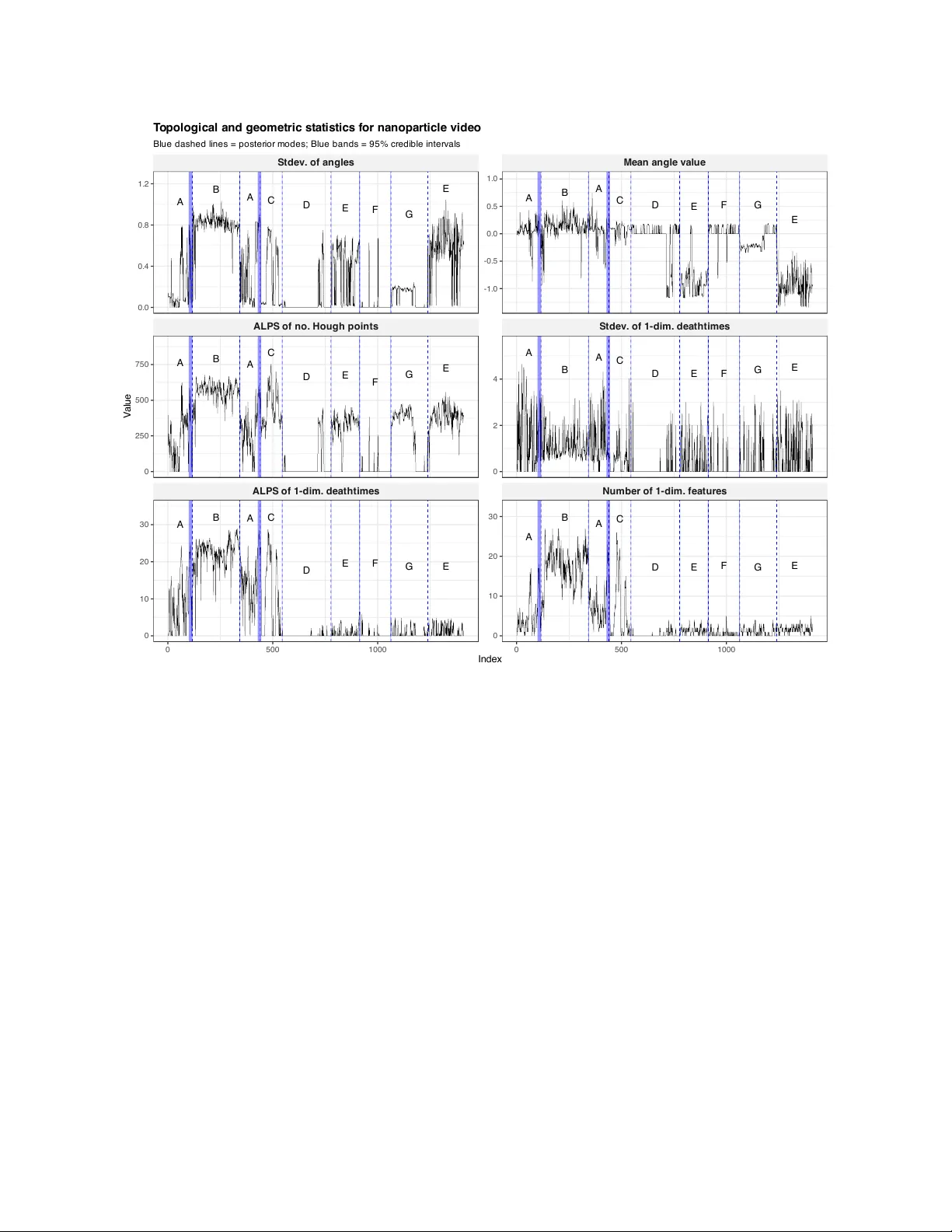

We introduce a generalized Bayesian method for multiple changepoint analysis with a loss function inspired by multinomial logistic regression. The method does not require a specification of the data-generating process and avoids restrictive assumptio…
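
As general background, and not as a statement of the authors' exact construction: generalized Bayesian methods typically replace the likelihood with a loss function, which yields what is often called a Gibbs posterior. The notation below is assumed for illustration and is not taken from the paper: $\theta$ stands for the unknown quantities (for example, changepoint locations and segment parameters), $\ell$ for the loss, $\omega > 0$ for a learning-rate weight, and $\pi$ for the prior.

$$
\pi_\omega(\theta \mid x_{1:n}) \;\propto\; \exp\{-\omega\, \ell(\theta; x_{1:n})\}\, \pi(\theta)
$$

When $\ell$ is a negative log-likelihood and $\omega = 1$, this reduces to the ordinary Bayesian posterior, which is why a loss-based formulation can avoid specifying the data-generating process.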

Authors: Yuhui Wang, Andrew M. Thomas, Michael Jauch