Instance-optimal stochastic convex optimization: Can we improve upon sample-average and robust stochastic approximation?

We study the unconstrained minimization of a smooth and strongly convex population loss function under a stochastic oracle that introduces both additive and multiplicative noise; this is a canonical and widely studied setting that arises across opera…

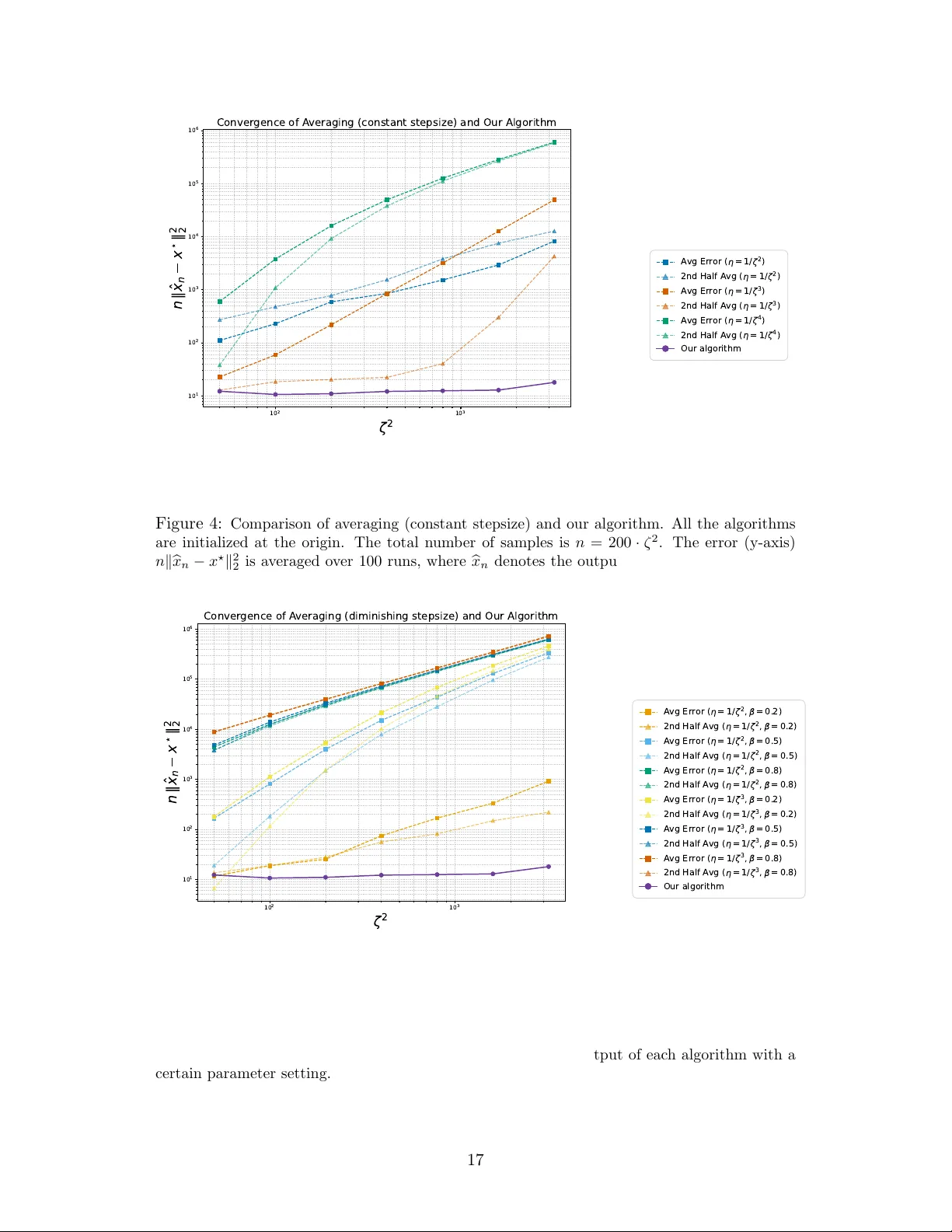

Authors: Liwei Jiang, Ashwin Pananjady