Spatiotemporal System Forecasting with Irregular Time Steps via Masked Autoencoder

Predicting high-dimensional dynamical systems with irregular time steps presents significant challenges for current data-driven algorithms. These irregularities arise from missing data, sparse observations, or adaptive computational techniques, reduc…

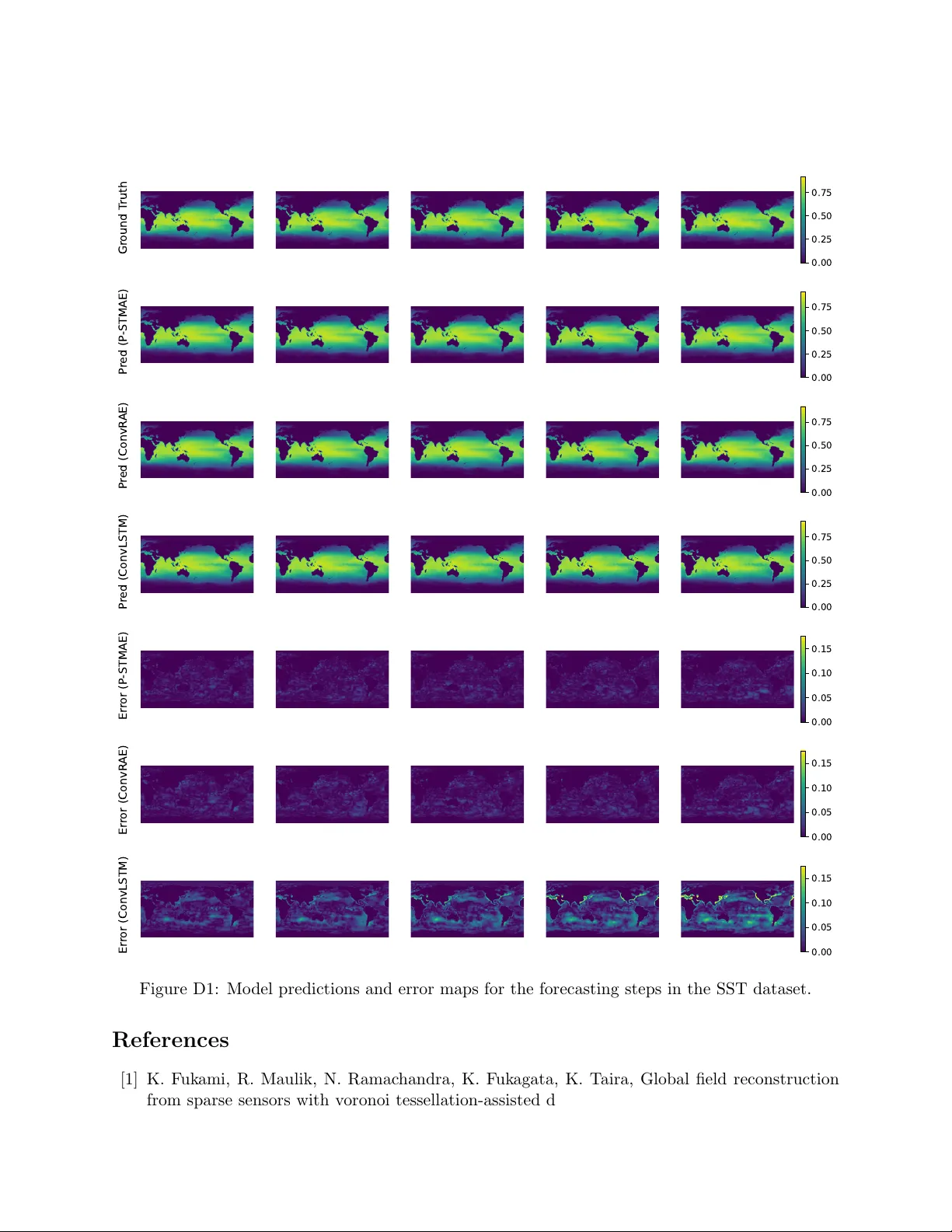

Authors: Kewei Zhu, Yanze Xin, Jinwei Hu