Voxtral TTS

We introduce Voxtral TTS, an expressive multilingual text-to-speech model that generates natural speech from as little as 3 seconds of reference audio. Voxtral TTS adopts a hybrid architecture that combines auto-regressive generation of semantic spee…

Authors: Alexander H. Liu, Alexis Tacnet

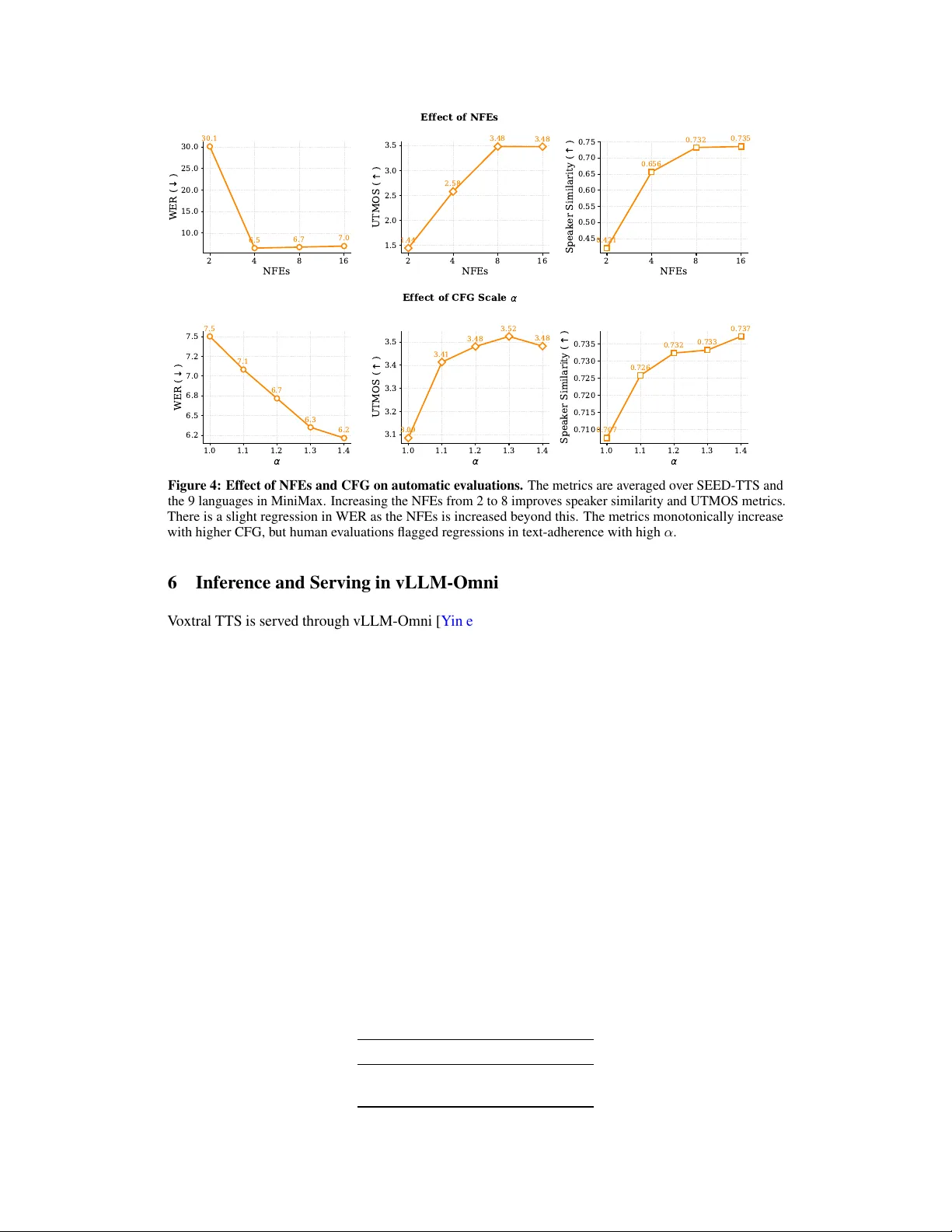

Abstract

We introduce Voxtral TTS, an expressive multilingual text-to-speech model that generates natural speech from as little as 3 seconds of reference audio. Voxtral TTS adopts a hybrid architecture that combines auto-regressive generation of semantic speech tokens with flow-matching for acoustic tokens. These tokens are encoded and decoded with Voxtral Codec, a speech tokenizer trained from scratch with a hybrid VQ-FSQ quantization scheme. In human evaluations conducted by native speakers, Voxtral TTS is preferred for multilingual voice cloning due to its naturalness and expressivity, achieving a 68.4% win rate over ElevenLabs Flash v2.5. We release the model weights under a CC BY-NC license.

Webpage: https://mistral.ai/news/voxtral-tts
Model weights: https://huggingface.co/mistralai/Voxtral-4B-TTS-2603

[Figure 1: bar chart of average listener preference (%) for Voxtral TTS vs. ElevenLabs Flash v2.5, for voice cloning and for flagship voices.]

Figure 1: Voxtral TTS is preferred to ElevenLabs Flash v2.5 in human evaluations. We plot the win rate for Voxtral TTS against ElevenLabs Flash v2.5 in human evaluations across two categories. For flagship voices, we use the default voices for each model and 77 unique text examples. In the voice cloning set-up, we provide a short audio reference clip and 60 text prompts. In both categories, human annotators blindly rate which audio is better between the two models. Voxtral TTS is preferred in 58.3% (flagship voices) and 68.4% (voice cloning) of instances.

1 Introduction

Natural and expressive text-to-speech (TTS) remains a cornerstone of flexible human-computer interactions, with applications spanning virtual assistants, audiobooks, and accessibility tools. While recent neural TTS models achieve strong intelligibility, capturing the nuances and expressivity of human speech remains an open challenge, particularly in the zero-shot voice setting.
Recent zero-shot TTS systems typically condition generation on discrete speech tokens extracted from a short voice prompt, enabling generalization to unseen speakers and natural synthesis across long sequences [Borsos et al., 2023, Wang et al., 2023]. In parallel, diffusion and flow-based models are effective for modeling rich acoustic variation in speech generation [Popov et al., 2021, Le et al., 2023]. Recent speech codecs demonstrate that speech can be factorized into a low-rate semantic stream and a higher-rate acoustic stream [Défossez et al., 2024]. Hierarchical generators such as Moshi already exploit this structure using a temporal transformer over timesteps and a depth transformer over codec levels. However, acoustic generation in these systems remains depth-wise autoregressive. For TTS, this raises the question whether the dense acoustic component must be modeled auto-regressively at all, or whether it can instead be generated more effectively with a conditional continuous model.

In this work, we introduce Voxtral TTS, a multilingual zero-shot TTS system built around a representation-aware hybrid architecture. A voice prompt is tokenized through Voxtral Codec, a low-bitrate speech tokenizer with an ASR-distilled semantic token and finite scalar quantized (FSQ) acoustic tokens [Mentzer et al., 2023]. Given this factorized representation, a decoder-only transformer auto-regressively predicts the semantic token sequence, while a lightweight flow-matching model predicts the acoustic tokens conditioned on the decoder states. This design combines the strengths of auto-regressive modeling for long-range consistency with continuous flow-matching for rich acoustic detail. We adapt Direct Preference Optimization (DPO) [Rafailov et al., 2023] to this hybrid discrete-continuous setting by combining a standard preference objective over semantic token generation with a flow-based preference objective for acoustic prediction [Ziv et al., 2025].

Voxtral TTS supports 9 languages, supports voice prompts as short as 3 seconds, and is designed for low-latency streaming inference. Across automatic evaluations on SEED-TTS [Anastassiou et al., 2024] and MiniMax-TTS [Zhang et al., 2025], it achieves strong intelligibility and naturalness, beating ElevenLabs v3 on speaker similarity scores. In human evaluation for multilingual zero-shot voice cloning, it is preferred over ElevenLabs Flash v2.5 with a 68.4% win rate, while remaining competitive with strong proprietary systems on expressive flagship-voice evaluations.

2 Modeling

Figure 2 highlights the architecture of Voxtral TTS. It consists of a novel audio codec, Voxtral Codec, which encodes a reference voice sample into audio tokens consisting of semantic and acoustic tokens. The audio tokens are combined with text tokens to form the input to the LM decoder backbone. To generate speech, the decoder backbone auto-regressively generates semantic token outputs. A flow-matching transformer generates the acoustic tokens. The codec decoder maps the output tokens to the corresponding audio waveform.

2.1 Voxtral Codec

Voxtral Codec is a convolutional-transformer autoencoder [Défossez et al., 2022] that compresses raw 24 kHz mono waveforms into 12.5 Hz frames of 37 discrete tokens (1 semantic + 36 acoustic), achieving a total bitrate of 2.14 kbps. These tokens serve as the input audio representation to Voxtral TTS. Through a novel combination of architectural and training objective improvements, Voxtral Codec outperforms existing baselines such as Mimi [Défossez et al., 2024], with results presented in Section 4.1.

Waveform Autoencoder.
Inspired by prior works on transformer-based audio codecs [Parker et al., 2024, Wu et al., 2024], our audio tokenizer operates on "patchified" waveforms. A 24 kHz mono input waveform is chunked into non-overlapping patches of 240 samples, yielding a 100 Hz input to the encoder. The 100 Hz input frames are first projected to 1024-dimensional embeddings via a causal convolution with kernel size 7. The embeddings are then forwarded through 4 encoder blocks, each comprising:

• A 2-layer causal self-attention transformer with sliding window attention (window sizes 16 → 8 → 4 → 2, halved at each downsampling stage), ALiBi positional bias [Press et al., 2021], QK-norm, and LayerScale [Touvron et al., 2021] initialized at 0.01.
• A causal CNN layer. In the first three blocks, the CNN downsamples by 2× (stride 2), yielding a cumulative 8× reduction from 100 Hz to 12.5 Hz. In the fourth block, the CNN has stride 1 and projects the 1024-dimensional representation to a 292-dimensional latent space.

[Figure 2: architecture diagram showing the voice reference and text tokens entering the autoregressive decoder backbone, a linear head producing semantic tokens, and the flow-matching transformer producing acoustic tokens; each audio frame corresponds to 80 ms of audio.]

Figure 2: Architecture overview of Voxtral TTS. A voice reference ranging from 3 s to 30 s is fed to the Voxtral Codec encoder to obtain audio tokens at a frame rate of 12.5 Hz. Each audio frame (labeled A) consists of a semantic token and acoustic tokens. The voice reference audio tokens along with the text prompt tokens (labeled T) are fed to the decoder backbone. The decoder auto-regressively generates a sequence of semantic tokens until it reaches a special End of Audio token (<EOA>). At each timestep, the semantic token from the decoder backbone is fed to a flow-matching transformer, which is run multiple times to predict the acoustic tokens. The semantic and acoustic tokens are fed to the Voxtral Codec decoder to obtain the generated waveform.
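The patching and downsampling arithmetic described for the encoder can be checked with a few lines of Python. This is a minimal sketch, not the codec's implementation: the constant and function names are illustrative, and dropping trailing samples that do not fill a whole patch is an assumption, since the text does not say how partial patches are handled.

```python
SAMPLE_RATE = 24_000    # Hz, mono input waveform
PATCH_SIZE = 240        # samples per non-overlapping patch
STRIDES = (2, 2, 2, 1)  # strides of the CNN layer in the four encoder blocks


def patchify(waveform):
    """Split a waveform (list of floats) into non-overlapping patches.

    Assumption: samples that do not fill a whole patch are dropped.
    """
    n_patches = len(waveform) // PATCH_SIZE
    return [waveform[i * PATCH_SIZE:(i + 1) * PATCH_SIZE] for i in range(n_patches)]


def frame_rates():
    """Frame rate (Hz) after patching and after each of the four encoder blocks."""
    rate = SAMPLE_RATE / PATCH_SIZE
    rates = [rate]
    for stride in STRIDES:
        rate /= stride
        rates.append(rate)
    return rates


one_second = [0.0] * SAMPLE_RATE
patches = patchify(one_second)
rates = frame_rates()
ms_per_frame = 1000 / rates[-1]

print(len(patches))   # patches per second of audio
print(rates)          # rate after patching, then after each block
print(ms_per_frame)   # audio duration covered by one codec frame
```

One second of audio yields 100 patches, the three stride-2 blocks bring the rate down to 12.5 Hz, and each codec frame covers 80 ms of audio.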
[Figure 3: block diagram of Voxtral Codec. Encoder: patching of the 24 kHz waveform, a causal convolution, then transformer blocks and a strided causal convolution (repeated 4×). Quantization: linear projections to a VQ code (d=256) and FSQ codes (d=36, 21 levels each). Decoder: transposed causal convolution and transformer blocks (repeated 4×), a causal convolution, and unpatching. Training signals: adversarial and reconstruction losses, plus a soft-aligned ASR distillation loss from an ASR model.]

Figure 3: Architecture overview and training of Voxtral Codec. It consists of a split semantic VQ codebook and acoustic FSQ codebooks. Both semantic and acoustic tokens are combined for reconstruction. The semantic token has an additional distillation loss from a supervised ASR model.

The 292-dimensional latent is subsequently quantized to audio tokens (detailed below). The decoder mirrors the encoder in reverse: a causal CNN first projects the 292-dimensional latent back to 1024 dimensions, followed by 4 blocks each containing a transposed CNN (for 2× upsampling) and a 2-layer causal self-attention transformer, gradually restoring the 12.5 Hz latent to 100 Hz. A final causal convolution with kernel size 7 maps from 1024 dimensions back to the patch size of 240 samples to reconstruct the waveform.

Representation Quantization.
The 292-dimensional latent is split into a 256-dimensional semantic component and a 36-dimensional acoustic component, which are quantized independently:

• The semantic component is quantized through a learned vector quantizer (VQ; [Van Den Oord et al., 2017]) with a codebook of size 8192. During training, VQ is applied with 50% probability; the remaining samples pass through unquantized.
• Each of the 36 acoustic dimensions is passed through a tanh activation and independently quantized to 21 uniform levels via finite scalar quantization (FSQ; [Mentzer et al., 2023]). During training, we apply dither-style FSQ [Parker et al., 2024]: 50% of samples are quantized with FSQ, 25% receive uniform noise of magnitude $1/L$ (where $L = 21$ is the number of levels), and 25% pass through unquantized.

The total bitrate is $12.5 \times (\log_2 8192 + 36 \times \log_2 21) \approx 2.14$ kbps.

Semantic Token Learning.
To better incorporate the semantic content of speech into the semantic tokens, we adopt an auxiliary ASR distillation loss. Unlike prior works that learn "semantic" tokens by distilling self-supervised speech representations [Zhang et al., 2023, Défossez et al., 2024], which are more phonetic than semantic [Liu et al., 2024], we distill from a supervised ASR model. This has been shown to produce more effective semantic representations [Vashishth et al., 2024]. A frozen Whisper [Radford et al., 2023] model is run auto-regressively on the input audio to generate decoder hidden states and cross-attention weights. The post-VQ semantic embeddings are linearly projected to match the Whisper hidden dimension and then aligned to the decoder hidden states from the last decoder layer using a cosine distance loss:

$$\mathcal{L}_{\text{ASR}} = 1 - \frac{1}{L} \sum_{l=1}^{L} \frac{\tilde{z}_l \cdot h_l}{\lVert \tilde{z}_l \rVert \, \lVert h_l \rVert}, \qquad \tilde{z}_l = \sum_{f=1}^{F} A_{l,f} \, z_f \tag{1}$$

where $z_f$ are the projected post-VQ semantic embeddings at codec frame $f$, $h_l$ are the last-layer decoder hidden states from Whisper at token position $l$, and $A \in \mathbb{R}^{L \times F}$ is a soft alignment matrix derived from a subset of Whisper's cross-attention heads identified as best correlating with word-level timestamps via dynamic time warping (DTW) [Berndt and Clifford, 1994]. To compute $A$, the cross-attention weights from these heads are normalized across the decoder token dimension, median-filtered, and averaged over heads. The resulting matrix is linearly interpolated along the encoder frame axis to match the codec frame rate (12.5 Hz), so that $\tilde{z}_l$ is the attention-weighted sum of codec embeddings aligned to the $l$-th decoder token.
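Equation (1) can be written out directly. The sketch below is a toy illustration in plain Python, assuming the soft alignment matrix `A`, the projected post-VQ embeddings `z`, and the Whisper hidden states `h` are already available as nested lists. The function names and the small epsilon guarding against zero norms are illustrative choices, not details from the paper.

```python
import math


def soft_align(A, z):
    """z_tilde[l] = sum_f A[l][f] * z[f]: attention-weighted sum of codec embeddings."""
    dim = len(z[0])
    return [
        [sum(A[l][f] * z[f][d] for f in range(len(z))) for d in range(dim)]
        for l in range(len(A))
    ]


def cosine(u, v, eps=1e-8):
    """Cosine similarity; eps (an assumption) avoids division by zero."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v + eps)


def asr_distillation_loss(A, z, h):
    """One minus the mean cosine similarity between aligned embeddings and h."""
    z_tilde = soft_align(A, z)
    return 1.0 - sum(cosine(zt, hl) for zt, hl in zip(z_tilde, h)) / len(h)


# Toy example: F = 3 codec frames, L = 2 decoder tokens, 2-dimensional embeddings.
z = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
A = [[1.0, 0.0, 0.0],   # token 0 attends to frame 0 only
     [0.0, 0.5, 0.5]]   # token 1 attends equally to frames 1 and 2
h_aligned = [[2.0, 0.0], [1.0, 2.0]]     # parallel to the aligned embeddings
h_opposed = [[-2.0, 0.0], [-1.0, -2.0]]  # anti-parallel to them

loss_aligned = asr_distillation_loss(A, z, h_aligned)
loss_opposed = asr_distillation_loss(A, z, h_opposed)
print(loss_aligned, loss_opposed)
```

The loss is close to 0 when the aligned embeddings point in the same direction as the hidden states and close to 2 when they point in the opposite direction.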
This design allows the tokenizer to learn text-aligned semantic tokens without requiring an external forced aligner or paired transcripts, since the alignment is derived implicitly from Whisper's cross-attention weights. Distilling from continuous hidden states rather than hard transcript labels provides richer supervision, including model confidence and phonetic similarities.

Adversarial Training.
A multi-resolution discriminator with 8 STFT sizes (2296, 1418, 876, 542, 334, 206, 126, 76) is trained along with the codec. Each discriminator is trained as a binary classifier between real audios $x$ and reconstructed audios $\hat{x}$ using a hinge loss. An $L_1$-based feature-matching loss is computed on the activations of every layer of each discriminator:

$$\mathcal{L}_{\text{feature}}(x, \hat{x}) = \frac{1}{MN} \sum_{m=1}^{M} \sum_{n=1}^{N} \lVert D_n^m(x) - D_n^m(\hat{x}) \rVert_1 \tag{2}$$

Here, $D_n^m$ denotes the $m$-th layer of the $n$-th discriminator, where each of the $N$ discriminators has $M$ layers. Following Défossez et al. [2024], Parker et al. [2024], we use this feature-matching loss in place of the standard GAN generator loss, as the evolving discriminator features provide an increasingly discriminative reconstruction signal throughout training.

Table 1: Key hyperparameters of the Voxtral Codec.

| Parameter | Value |
| --- | --- |
| Input / Preprocessing | |
| Sampling rate | 24000 |
| Patch size | 240 |
| AutoEncoder | |
| Encoder patch projection kernel size | 7 |
| Encoder patch projection dimension | 1024 |
| Encoder transformer layers ¹ | 2 → 2 → 2 → 2 |
| Encoder sliding window size | 16 → 8 → 4 → 2 |
| Encoder conv kernels | 4 → 4 → 4 → 3 |
| Encoder conv strides | 2 → 2 → 2 → 1 |
| (Decoder flips all → to ← and uses transposed convolutions) | |
| Discrete bottleneck | |
| Semantic VQ ² codebook size | 8192 |
| Acoustic FSQ ³ codebook count × size | 36 × 21 |
| Discriminator | |
| FFT sizes | 2296, 1418, 876, 542, 334, 206, 126, 76 |
| Channels | 256 |

¹ For training stability, we use LayerScale with initial scale of 0.01 and QK normalization with $\epsilon = 10^{-6}$.
² During training, VQ is applied with 50% probability.
³ During training: 50% quantized with FSQ, 25% dithered (uniform noise of magnitude $1/L$), 25% unquantized.

Training Objective.
Voxtral Codec is trained end-to-end with the following losses:

$$\alpha \, \mathcal{L}_{\text{feature}} + \beta \, \mathcal{L}_{\text{ASR}} + \gamma \, \mathcal{L}_{\text{L1}} + \gamma \, \mathcal{L}_{\text{STFT}} + \delta \, \mathcal{L}_{\text{commit}} \tag{3}$$

where $\alpha = 1.0$, $\beta = 1.0$, $\gamma = 0.9999^{t}$ (with $t$ the current training step), and $\delta = 0.1$. $\mathcal{L}_{\text{L1}}$ is the $L_1$ distance between the original and reconstructed waveforms, and $\mathcal{L}_{\text{STFT}}$ is an $L_1$ loss on their STFT magnitudes. Both reconstruction losses share the same exponential decay schedule $\gamma$, which bootstraps learning early in training and diminishes their influence as the adversarial signal strengthens [Parker et al., 2024]. $\mathcal{L}_{\text{commit}} = \lVert z_e - \mathrm{sg}(z_q) \rVert_2^2$ is the VQ commitment loss [Van Den Oord et al., 2017], where sg denotes the stop-gradient operator.

Table 1 presents a summary of the Voxtral Codec configuration. The full model has approximately 300M parameters. All design decisions were ablated, and the final configuration achieves stable optimization with the best audio quality.

2.2 Decoder Backbone

The decoder backbone of Voxtral TTS follows the architecture of Ministral 3B [Liu et al., 2026], an auto-regressive decoder-only transformer. The input sequence consists of voice reference audio tokens followed by text tokens, from which the output audio tokens are auto-regressively generated.

Each audio frame is represented by 37 discrete tokens (1 semantic, 36 acoustic). Each codebook has its own embedding lookup table (8192 entries for semantic and 21 for each acoustic), which are summed to produce a single embedding per audio frame.

The decoder backbone generates a sequence of hidden states. A linear head projects each hidden state $h$ to logits over the semantic codebook vocabulary (8192 entries plus a special End of Audio (<EOA>) token), trained with a standard cross-entropy loss.
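One way to picture the per-frame input embedding described above is as a sum of 37 table lookups. The sketch below is illustrative only: the embedding width of 8, the random initialization, and all names are hypothetical stand-ins, while the codebook sizes (8192 semantic entries, 36 acoustic codebooks of 21 entries) come from the text.

```python
import random

SEMANTIC_VOCAB = 8192   # semantic codebook size (from the text)
ACOUSTIC_LEVELS = 21    # entries per acoustic codebook (from the text)
NUM_ACOUSTIC = 36       # acoustic codebooks per frame (from the text)
DIM = 8                 # toy embedding width; the real width is the backbone's

rng = random.Random(0)


def make_table(rows, dim):
    """Randomly initialized lookup table (hypothetical initialization)."""
    return [[rng.gauss(0.0, 0.02) for _ in range(dim)] for _ in range(rows)]


semantic_table = make_table(SEMANTIC_VOCAB, DIM)
acoustic_tables = [make_table(ACOUSTIC_LEVELS, DIM) for _ in range(NUM_ACOUSTIC)]


def embed_frame(semantic_id, acoustic_ids):
    """Sum one semantic and 36 acoustic embeddings into a single frame embedding."""
    if len(acoustic_ids) != NUM_ACOUSTIC:
        raise ValueError("expected one index per acoustic codebook")
    out = list(semantic_table[semantic_id])
    for table, index in zip(acoustic_tables, acoustic_ids):
        row = table[index]
        for d in range(DIM):
            out[d] += row[d]
    return out


frame = embed_frame(5, [0] * NUM_ACOUSTIC)
print(len(frame))  # one vector of width DIM per audio frame
```

Summing rather than concatenating keeps the backbone's sequence length at one position per 80 ms frame regardless of how many codebooks a frame carries.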
To predict the acoustic tokens, $h$ is fed to a flow-matching transformer, described in Section 2.3. The float-valued outputs of the flow-matching transformer are discretized before the next AR step to maintain a fully discrete token interface.

2.3 Flow-Matching Transformer

To predict the acoustic tokens, a flow-matching (FM) transformer operates independently on the hidden state $h$ from each generation step in the decoder backbone. We model acoustic tokens in continuous space to leverage the smooth velocity field of FM, and discretize only at the output to interface with the AR backbone's discrete token vocabulary.

The FM transformer consists of a bidirectional 3-layer transformer with the same width as the decoder backbone. It models the velocity field that transports Gaussian noise ($x_0$) to acoustic embedding ($x_1$) over a series of function evaluation steps $0 \le t \le 1$. It receives as input $h$, the current function evaluation step $t$ encoded as a sinusoidal embedding, and the current acoustic embedding $x_t \in \mathbb{R}^{36}$. We use a separate projection layer for each of the inputs $h$, $t$ and $x_t$, because their activation scales differ. We also ablated providing conditioning using DiT-style adaptive LayerNorm (AdaLN) layers [Peebles and Xie, 2023], but found our approach superior. During training, the hidden state is dropped out 10% of the time for "unconditional" modeling.

For inference, we use the Euler method to integrate the velocity vector field $v_t$ using 8 function evaluations (NFEs) and classifier-free guidance (CFG) [Ho and Salimans, 2022]. Concretely, the form of $v_t$ and $x_t$ is:

$$v_t = \alpha \, v_\theta(x_t, t, h) + (1 - \alpha) \, v_\theta(x_t, t, \varnothing) \tag{4}$$

$$x_{t - \Delta t} = x_t - v_t \cdot \Delta t \tag{5}$$

where $h$ is the hidden state from the decoder backbone and $\varnothing$ is the unconditional case where we pass a vector of zeros with the same shape as $h$. $v_\theta(x_t, t, h)$ is the predicted velocity field at time step $t$, sample $x_t$ and conditioning input $h$.
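Equations (4) and (5) amount to a short Euler loop. The following is a minimal sketch under several assumptions: `toy_velocity` is a hypothetical stand-in for the FM transformer (it simply treats its conditioning vector as the point the flow should reach), integration runs from t = 1 down to t = 0 as Eq. (5) is written, and `fsq_index` assumes the outputs live in the tanh range [-1, 1] and are mapped to 21 uniformly spaced levels. None of the helper names come from the paper.

```python
import math
import random

NUM_ACOUSTIC = 36
LEVELS = 21


def cfg_velocity(v_theta, x, t, h, alpha):
    """Eq. (4): blend conditional and unconditional velocities."""
    zeros = [0.0] * len(h)             # unconditional input: zeros shaped like h
    v_cond = v_theta(x, t, h)
    v_uncond = v_theta(x, t, zeros)
    return [alpha * c + (1.0 - alpha) * u for c, u in zip(v_cond, v_uncond)]


def euler_sample(v_theta, h, x_start, nfe=8, alpha=1.2):
    """Eq. (5): x_{t - dt} = x_t - v_t * dt, starting from noise at t = 1."""
    dt = 1.0 / nfe
    x = list(x_start)
    for k in range(nfe):
        t = 1.0 - k * dt
        v = cfg_velocity(v_theta, x, t, h, alpha)
        x = [xi - vi * dt for xi, vi in zip(x, v)]
    return x


def fsq_index(value, levels=LEVELS):
    """Map a float to one of `levels` uniform bins over [-1, 1] (assumed range)."""
    clipped = max(-1.0, min(1.0, value))
    return int(math.floor((clipped + 1.0) / 2.0 * (levels - 1) + 0.5))


def toy_velocity(x, t, h):
    """Hypothetical velocity field whose flow ends exactly at h when t reaches 0."""
    return [(xi - hi) / t for xi, hi in zip(x, h)]


rng = random.Random(0)
noise = [rng.gauss(0.0, 1.0) for _ in range(NUM_ACOUSTIC)]
h = [0.5] * NUM_ACOUSTIC

sample = euler_sample(toy_velocity, h, noise, nfe=8, alpha=1.0)
guided = euler_sample(toy_velocity, h, noise, nfe=8, alpha=1.2)
tokens = [fsq_index(v) for v in sample]
print(sample[0], guided[0], tokens[0])
```

In this toy setting, guidance with a scale above 1 extrapolates past the conditional endpoint, away from the unconditional one. Each Euler step costs two evaluations of the velocity model, one conditional and one unconditional.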
We set $\Delta t = 1/8$ and $\alpha = 1.2$ based on the analysis in Section 5.2. Note that in our architecture, CFG is applied independently at every frame in the FM transformer. Hence, it requires an extra forward pass of the FM transformer only, and is thus significantly cheaper than applying CFG in the decoder backbone.

The float values predicted by the FM transformer are converted to discrete integer values by quantizing to the 21 FSQ levels. These discretized tokens are provided as input to the decoder backbone in the next decoding step.

Given that the inputs to the decoder backbone are discrete tokens with embedding lookup, we also considered alternative architectures inspired by MaskGIT [Chang et al., 2022] and Depth Transformer [Défossez et al., 2024]. Both approaches performed reasonably well, but were inferior to FM in human evaluations, especially on expressivity. In addition, MaskGIT requires attending over all 36 acoustic codebook positions and conditioning tokens, resulting in a per-frame sequence length of 38, compared to just 3 in the FM transformer ($h$, $t$, $x_t$). Similarly, the Depth Transformer requires 36 auto-regressive decoding steps, compared to 8 NFEs for FM. Thus, FM is superior in quality, compute and latency.

3 Training

3.1 Pretraining

We train the model using paired audio and transcripts pseudo-labelled with Voxtral Mini Transcribe [Liu et al., 2025]. Each training sample consists of a tuple $(A_1, T_2, A_2)$ where $A_1$ is a voice reference and $T_2$ is the transcript for $A_2$, which is our target for generation. Similar to Voxtral, we interleave these segments with a special token between $A_1$ and $T_2$, and a <repeat> special token between $T_2$ and $A_2$. We ensure that $A_1$ and $A_2$ are single-speaker segments from the same speaker, but not necessarily temporally adjacent. The maximum duration of $A_1$ and $A_2$ is 180 seconds, and we ensure $A_1$ is at least 1 second long.
Due to the long-tailed nature of natural conversational human speech duration, we find the model works best on voice prompts ($A_1$) between 3 and 25 seconds. The loss is computed only on the tokens of $A_2$.

We optimize the model using a two-part loss function consisting of a cross-entropy loss on the semantic token, $\mathcal{L}_{\text{semantic}}$, and a flow-matching loss, $\mathcal{L}_{\text{acoustic}}$, on the acoustic tokens. We use the simple conditional flow-matching objective as shown below:

$$\mathcal{L}_{\text{acoustic}} = \mathbb{E}_{t \sim \mathcal{U}[0,1],\; x_0 \sim \mathcal{D},\; x_1 \sim \mathcal{N}(0,1)} \, \lVert v_\theta(x_t, t) - u_t(x_t \mid x_1, x_0) \rVert_2^2 \tag{6}$$

$$u_t(x_t \mid x_1, x_0) = x_1 - x_0 \tag{7}$$

where $u_t$ is the conditional velocity target, $v_\theta$ is the velocity predicted by the FM transformer, $x_1$ is sampled from a normal distribution, and $x_0$ from the data distribution $\mathcal{D}$.

We initialize the decoder backbone with Ministral 3B. Newly introduced modules, such as the FM transformer, audio codebook embedding lookup tables and output projection layers, are randomly initialized. During training, we freeze the text-embedding layers in the decoder backbone to improve robustness to text tokens that appear with low frequency in the Voxtral Mini Transcribe transcriptions. To avoid overfitting to silence, we also use a lower loss weight for frames that have no speech as determined by a voice-activity-detection (VAD) model and set the loss weight to 0 for extremely long silences. We also perform simple LLM-based rewrites of the transcripts to introduce robustness to normalized vs. un-normalized text (e.g. "5 - 4" vs. "five minus four").

3.2 Direct Preference Optimization

We use Direct Preference Optimization (DPO) [Rafailov et al., 2023] to post-train the model, focusing on improving word error rate (WER) and speaker similarity. For the semantic codebook, we use the standard DPO objective. Given that the acoustic codebooks are predicted with flow-matching, we adapt the objective from Ziv et al. [2025]:

$$\mathcal{L}(\theta) = -\mathbb{E}_{t \sim \mathcal{U}(0,1),\; x^w, x^l} \, \log \sigma \Big( -\beta \big[ \Delta_\theta(x^w, x^l, t) - \Delta_{\theta_{\text{ref}}}(x^w, x^l, t) \big] \Big), \tag{8}$$

where

$$\Delta_\theta(x^w, x^l, t) = \lVert v_\theta(x^w_t, t) - u_t(x^w_t \mid x^w) \rVert_2^2 - \lVert v_\theta(x^l_t, t) - u_t(x^l_t \mid x^l) \rVert_2^2. \tag{9}$$

We make the objective suitable for our auto-regressive setup (note the bold $\mathbf{t}$, showing that each token has a differently sampled $t$) by computing:

$$\Delta_\theta(x^w, x^l, \mathbf{t}) = \sum_{i=1}^{N_w} \lVert v_\theta(x^w_{i,t_i}, t_i) - u^w_{i,t_i} \rVert_2^2 - \sum_{i=1}^{N_l} \lVert v_\theta(x^l_{i,t_i}, t_i) - u^l_{i,t_i} \rVert_2^2 \tag{10}$$

and find that length normalization (dividing by the length of the winner) causes instability. We ensure that the $t$ and $x_0$ sampled for each location in the sequence are consistent for the policy model $\theta$ and reference model $\theta_{\text{ref}}$. The two DPO losses are added with uniform weights, but we use $\beta_{\text{semantic}} = 0.1$ and $\beta_{\text{acoustic}} = 0.5$ as training is sensitive to the flow-DPO loss. A low learning rate of 8e−8 is used for training stability.

The data for DPO is gathered using a rejection-sampling pipeline that takes as input a set of voice samples from a held-out set of single-speaker voice samples and diverse synthetically generated text prompts. We prompt Mistral Small Creative¹ with the transcript of the voice prompt and randomly chosen personas to synthesize a diverse array of texts which continue or reply to the conversational context. The pretrained checkpoint then takes as input the voice and text prompts and generates multiple samples from each input, from which winner and loser pairs can be constructed. Winners and losers are determined from WER, speaker similarity, loudness consistency, UTMOS-v2 [Baba et al., 2024] and other LM judge metrics.

We optimize the model using the combined DPO loss along with the pretraining objective on high-quality speech for 1 epoch, as we found that training longer on synthetic data led to more robotic speech.
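The sequence-level quantity in Eq. (10) and the loss in Eq. (8) can be sketched as follows. This is a toy illustration rather than the training code: velocity models are plain Python callables `v(x_t, t)` with the conditioning on decoder states omitted, the straight-line interpolation between data and noise is an assumption consistent with the target in Eq. (7), the expectation is replaced by a single fixed draw, and all names are hypothetical. The same per-token times and noise are reused for the policy and the reference, as the text requires.

```python
import math


def log_sigmoid(m):
    """Numerically stable log(sigmoid(m))."""
    if m >= 0:
        return -math.log1p(math.exp(-m))
    return m - math.log1p(math.exp(m))


def fm_error(v_theta, data_seq, noise_seq, t_seq):
    """Sum over tokens of ||v_theta(x_t, t_i) - (noise - data)||^2, one t_i per token."""
    total = 0.0
    for data, noise, t in zip(data_seq, noise_seq, t_seq):
        # Assumed linear path: data at t = 0, noise at t = 1.
        x_t = [(1.0 - t) * d + t * n for d, n in zip(data, noise)]
        target = [n - d for d, n in zip(data, noise)]
        pred = v_theta(x_t, t)
        total += sum((p - u) ** 2 for p, u in zip(pred, target))
    return total


def delta(v_theta, win, lose):
    """Eq. (10): winner error minus loser error, without length normalization."""
    return fm_error(v_theta, *win) - fm_error(v_theta, *lose)


def flow_dpo_loss(policy, reference, win, lose, beta=0.5):
    """Eq. (8) for one (winner, loser) pair and one draw of times and noise."""
    margin = -beta * (delta(policy, win, lose) - delta(reference, win, lose))
    return -log_sigmoid(margin)


# Each sequence is (data frames, noise frames, per-token times); lengths may differ.
winner = ([[1.0], [1.0]], [[0.3], [-0.2]], [0.5, 0.8])
loser = ([[-1.0], [-1.0], [-1.0]], [[0.1], [0.4], [-0.5]], [0.25, 0.5, 0.75])


def reference(x_t, t):
    """Toy reference model that always predicts zero velocity."""
    return [0.0 for _ in x_t]


def policy(x_t, t):
    """Toy policy that is exact for frames whose clean value is 1.0 (the winner)."""
    return [(xi - 1.0) / t for xi in x_t]


loss_same = flow_dpo_loss(reference, reference, winner, loser)
loss_better = flow_dpo_loss(policy, reference, winner, loser)
print(loss_same, loss_better)
```

When the policy equals the reference the loss is log 2. It falls below that value once the policy fits the winner better, relative to the loser, than the reference does.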
¹ https://docs.mistral.ai/models/mistral-small-creative-25-12

4 Results

4.1 Voxtral Codec

Table 2 shows a comparison between Voxtral Codec and Mimi on the Expresso dataset [Nguyen et al., 2023]. We evaluate on the following objective metrics: Mel distance, STFT distance, perceptual evaluation of speech quality (PESQ), extended short-time objective intelligibility (ESTOI), word error rate between transcriptions of the source and the reconstruction generated using an ASR model (ASR-WER), and a speaker similarity score computed using a speaker embedding model. We also report the bitrates and frames per second (fps), which are relevant as these codecs are used in the context of auto-regressive decoder models. Given that Mimi uses an RVQ design for acoustic codebooks, it has the flexibility to choose a subset of codebooks to trade off bitrate and quality. When Voxtral Codec is compared to Mimi in a 16-codebook configuration, such that the bitrates are similar, Voxtral Codec outperforms on all the objective metrics. On an internal subjective assessment, we found Voxtral Codec to be comparable to or better than Mimi at 16 codebooks on speech audio, which is our main focus.

Table 2: Comparison of Voxtral Codec and Mimi on the Expresso dataset. Mel and STFT are reconstruction metrics (↓), PESQ and ESTOI are intrusive metrics (↑), and ASR-WER and Speaker Sim are perceptual metrics.

| Model | fps | tokens/frame × vocab. size | bitrate (kbps) | Mel ↓ | STFT ↓ | PESQ ↑ | ESTOI ↑ | ASR-WER (%) ↓ | Speaker Sim ↑ |
| --- | --- | --- | --- | --- | --- | --- | --- | --- | --- |
| Mimi – 8cb (Moshi) | 12.5 | 8 × (2048) | 1.1 | 0.702 | 1.177 | 2.07 | 0.803 | 11.75 | 0.672 |
| Mimi – 16cb | 12.5 | 16 × (2048) | 2.2 | 0.618 | 1.100 | 2.67 | 0.865 | 11.01 | 0.829 |
| Mimi – full 32cb | 12.5 | 32 × (2048) | 4.4 | 0.552 | 1.040 | 3.18 | 0.910 | 10.25 | 0.902 |
| Voxtral Codec | 12.5 | 1 × (8192) + 36 × (21) | 2.1 | 0.545 | 0.982 | 3.05 | 0.882 | 10.66 | 0.843 |

4.2 Automatic Evaluations

We evaluate Voxtral TTS, ElevenLabs v3 and ElevenLabs Flash v2.5 on SEED-TTS [Anastassiou et al., 2024] and the nine supported languages in MiniMax-TTS [Zhang et al., 2025] using automated metrics:

1. Word Error Rate (WER): Measured by Voxtral Mini Transcribe v2 to capture the intelligibility of speech.
2. UTMOS-v2 [Baba et al., 2024]: Predicts the Mean Opinion Score (MOS) of generated speech.
3. Speaker Similarity: Speaker embeddings are predicted using the ECAPA-TDNN model [Desplanques et al., 2020] and the cosine similarity is computed against the reference embedding. This evaluates how closely generated speech emulates the provided voice reference.

The results for the three models are presented in Table 3. While both ElevenLabs models achieve low WERs across languages, Voxtral TTS significantly outperforms ElevenLabs on the speaker similarity metrics. Surprisingly, we find that ElevenLabs Flash v2.5 performs better than ElevenLabs v3 on most automated metrics, whereas ElevenLabs v3 performs better in human evaluations, particularly with emotion steering. This highlights the importance of performing human evaluations in conjunction with automatic evaluations.

Table 3: WER, UTMOS, and Speaker Similarity scores for Voxtral TTS, ElevenLabs v3, and ElevenLabs Flash v2.5.

| Task | WER (%) ↓ Voxtral | WER (%) ↓ ElevenLabs v3 | WER (%) ↓ ElevenLabs Flash | UTMOS ↑ Voxtral | UTMOS ↑ ElevenLabs v3 | UTMOS ↑ ElevenLabs Flash | Speaker Sim ↑ Voxtral | Speaker Sim ↑ ElevenLabs v3 | Speaker Sim ↑ ElevenLabs Flash |
| --- | --- | --- | --- | --- | --- | --- | --- | --- | --- |
| MiniMax: Arabic | 2.68 | 3.67 | 2.86 | 3.07 | 2.50 | 2.89 | 0.746 | 0.546 | 0.539 |
| MiniMax: German | 0.83 | 0.45 | 1.08 | 3.12 | 2.90 | 3.27 | 0.721 | 0.457 | 0.489 |
| MiniMax: English | 0.63 | 0.48 | 0.33 | 4.30 | 4.27 | 4.27 | 0.786 | 0.484 | 0.489 |
| MiniMax: Spanish | 0.51 | 0.87 | 0.49 | 3.41 | 3.18 | 2.99 | 0.762 | 0.443 | 0.541 |
| MiniMax: French | 3.22 | 2.34 | 2.26 | 2.83 | 2.90 | 2.94 | 0.587 | 0.339 | 0.378 |
| MiniMax: Hindi | 4.99 | 8.71 | 5.08 | 3.56 | 3.56 | 3.35 | 0.839 | 0.707 | 0.679 |
| MiniMax: Italian | 1.32 | 0.58 | 0.55 | 3.43 | 3.08 | 3.09 | 0.739 | 0.527 | 0.485 |
| MiniMax: Dutch | 1.99 | 1.52 | 0.83 | 3.89 | 3.53 | 3.68 | 0.720 | 0.397 | 0.598 |
| MiniMax: Portuguese | 1.02 | 0.92 | 1.15 | 3.66 | 3.41 | 3.41 | 0.785 | 0.571 | 0.642 |
| SEED-TTS | 1.23 | 1.26 | 0.86 | 4.11 | 3.92 | 4.09 | 0.628 | 0.392 | 0.413 |

4.3 Human Evaluations

Automated metrics cannot measure the naturalness and expressivity of a TTS model, especially the ability of the model to speak with a specific emotion.
We find that UTMOS is only a loose proxy, not well calibrated across languages and only weakly correlated with human preference. Hence, we perform two sets of human evaluations in which annotators compare generations between two models without knowing their identities. The evaluation consists of 77 prompts, with 11 of them neutral while 66 of them have an associated expected emotion. For all evaluations, annotators are instructed to choose whether one of the generations is "slightly better", "much better" or if they are "both good" or "both bad". During labeling, all audio samples are resampled to 24 kHz WAV format (even the reference samples) to ensure there is no bias due to audio quality.

4.3.1 Flagship voices

First, we compare our flagship voices (British-Female, British-Male, American-Male, French-Female) against the flagship voices of same gender and accent provided by competitors. We run two sub-evaluations:

1. Explicit steering: We test the ability to bias a TTS model's generation toward a specific emotion. The TTS prompts which have an associated emotion (not Neutral) are provided as free-form instruction to Gemini 2.5 Flash TTS as it supports free-form instructions such as "Speak in an angry tone.". For ElevenLabs v3 we provide emotion tags enclosed in brackets². While Voxtral TTS does not support emotion tags/text-instructions, we steer the generation by leveraging a different voice prompt provided from the same speaker which embodies the requested emotion.
2. Implicit steering: We test the model's capabilities to infer emotion from provided text (e.g. "This is the best day of my life!"). No emotion label or instruction is provided to the model. For Voxtral TTS, we use a neutral voice prompt.

We use three annotators who are native speakers of the same dialect for each language per pair. The win rates of Voxtral TTS (excluding ties) are presented in Table 4.
Gemini 2.5 Flash TTS is the strongest model, and Voxtral TTS is competitive against ElevenLabs v3. In the implicit steering setting, Voxtral TTS consistently outperforms both ElevenLabs models.

Table 4: Voxtral TTS win rates by steering type. In the explicit steering setting, Voxtral TTS is competitive with ElevenLabs v3, while having a higher win rate compared to both ElevenLabs models in the implicit steering setting.

| Emotion steering | Opponent Model | Voxtral TTS Win Rate (%) |
| --- | --- | --- |
| Explicit | ElevenLabs v3 | 51.0 |
| Explicit | Gemini 2.5 Flash TTS | 35.4 |
| Implicit | ElevenLabs Flash v2.5 | 58.3 |
| Implicit | ElevenLabs v3 | 55.4 |
| Implicit | Gemini 2.5 Flash TTS | 37.1 |

² https://elevenlabs.io/blog/eleven-v3-audio-tags-expressing-emotional-context-in-speech

4.3.2 Zero-Shot Voice Cloning

To evaluate voice cloning capabilities, we source high quality audios from two recognized speakers in each language. We generate speech from each model in a zero-shot setting, and instruct annotators to rate the generations based on (a) likeness of the generated audio to the voice prompt and (b) naturalness of speech and expressivity.

Table 5 shows the Voxtral TTS win rates against ElevenLabs Flash v2.5 across languages. Overall, Voxtral TTS has a win rate of 68.4%, with significantly better results across both high and low-resource languages (such as Arabic and Hindi). Notably, the Voxtral TTS win rate is much higher in the zero-shot setting (68.4%) compared with flagship voices (58.3%), highlighting that Voxtral TTS is a far more generalizable model, capturing a diverse range of user voices.

Table 5: Voxtral TTS win rate against ElevenLabs Flash v2.5 across languages. Voxtral TTS matches or outperforms ElevenLabs Flash v2.5 on every language, and has an overall micro-average win rate of 68.4%.

| Language | Voxtral TTS Win Rate (%) |
| --- | --- |
| Arabic | 72.9 |
| Dutch | 49.4 |
| English | 60.8 |
| French | 54.4 |
| German | 72.0 |
| Hindi | 79.8 |
| Italian | 57.1 |
| Portuguese | 74.4 |
| Spanish | 87.8 |
| Overall | 68.4 |

5 Analysis

In this section, we provide a comparison between the pretrained and DPO checkpoints and ablate the pertinent inference parameters.

5.1 DPO improvements

Table 6 shows the WER and UTMOS metrics for the pretrained and DPO checkpoints. Overall, DPO improves on both metrics, with the largest WER gains in German and French and a WER regression only on Hindi. Qualitatively, we find that the DPO model hallucinates less and skips fewer words. DPO also ameliorates the pretrained model's occasional tendency to significantly taper in volume throughout the audio. Interestingly, DPO has minimal effect on speaker similarity, which is within ±0.01 of the pretrained checkpoint (not presented here for brevity).

Table 6: DPO improves WER and UTMOS across languages.

| Task | WER (%) ↓ Pretrain | WER (%) ↓ DPO | UTMOS ↑ Pretrain | UTMOS ↑ DPO |
| --- | --- | --- | --- | --- |
| MiniMax: Arabic | 2.80 | 2.68 (-0.12) | 3.01 | 3.07 (+0.06) |
| MiniMax: German | 4.08 | 0.83 (-3.25) | 3.05 | 3.12 (+0.07) |
| MiniMax: English | 0.84 | 0.63 (-0.21) | 4.25 | 4.30 (+0.05) |
| MiniMax: Spanish | 0.56 | 0.51 (-0.06) | 3.38 | 3.41 (+0.04) |
| MiniMax: French | 5.01 | 3.22 (-1.79) | 2.76 | 2.83 (+0.07) |
| MiniMax: Hindi | 3.39 | 4.99 (+1.61) | 3.43 | 3.56 (+0.13) |
| MiniMax: Italian | 2.18 | 1.32 (-0.85) | 3.36 | 3.43 (+0.07) |
| MiniMax: Dutch | 3.10 | 1.99 (-1.11) | 3.85 | 3.89 (+0.04) |
| MiniMax: Portuguese | 1.17 | 1.02 (-0.15) | 3.60 | 3.66 (+0.06) |
| SEED-TTS | 1.58 | 1.23 (-0.35) | 4.07 | 4.11 (+0.04) |

5.2 Inference Parameters

Figure 4 demonstrates the effect on the automatic evaluation metrics as the number of function evaluations (NFEs) and choice of CFG α are varied. There are marked improvements across metrics as the number of NFEs is increased from 2 to 8. We find that increasing the number of NFEs beyond 8 yields marginal improvement in speaker similarity and minor degradations in WER. Thus, we chose 8 NFEs as our default inference setting.

Increasing the value of CFG α, we find that there is a nearly monotonic improvement in all metrics except UTMOS-v2.
However, internal human evaluations found that a higher α led to over-adherence to the provided voice prompt, and the model failed to bias towards emotions that are implicit in the text prompt. We also find that a lower α = 1.2 works best for higher-quality audio (e.g. professional recordings), while in-the-wild recordings might benefit from a higher α.

[Figure 4: bar charts in two rows. Top row, "Effect of NFEs" (NFEs = 2, 4, 8, 16): WER 30.1, 6.5, 6.7, 7.0; UTMOS 1.44, 2.58, 3.48, 3.48; speaker similarity 0.421, 0.656, 0.732, 0.735. Bottom row, "Effect of CFG Scale" (α = 1.0, 1.1, 1.2, 1.3, 1.4): WER 7.5, 7.1, 6.7, 6.3, 6.2; UTMOS 3.09, 3.41, 3.48, 3.52, 3.48; speaker similarity 0.707, 0.726, 0.732, 0.733, 0.737.]

Figure 4: Effect of NFEs and CFG on automatic evaluations. The metrics are averaged over SEED-TTS and the 9 languages in MiniMax. Increasing the NFEs from 2 to 8 improves the speaker similarity and UTMOS metrics. There is a slight regression in WER as the NFEs are increased beyond this. The metrics improve almost monotonically with higher CFG α, but human evaluations flagged regressions in text adherence with high α.

6 Inference and Serving in vLLM-Omni

Voxtral TTS is served through vLLM-Omni [Yin et al., 2026], an extension of vLLM [Kwon et al., 2023] for multi-stage multimodal models. Voxtral TTS is decomposed into a two-stage pipeline: a generation stage that predicts the audio tokens (semantic and acoustic), followed by a codec decoding stage that converts the tokens into a waveform. The two stages communicate through an asynchronous chunked streaming protocol over shared memory, so the first audio can be delivered well before the full waveform has been generated.
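The two-stage decomposition described above can be illustrated with a minimal producer/consumer sketch. This is not the vLLM-Omni implementation: the function names, the `CHUNK_FRAMES` constant, and the use of an `asyncio.Queue` in place of shared memory are all assumptions made for illustration. It only shows how a generation loop and a decoding loop can run concurrently, so that decoded audio for early chunks is available before generation has finished.

```python
import asyncio

CHUNK_FRAMES = 4  # hypothetical chunk size, in frames


async def generation_stage(num_frames: int, queue: asyncio.Queue) -> None:
    """Stand-in for the token generator: produces one frame per step."""
    buffer = []
    for step in range(num_frames):
        await asyncio.sleep(0)  # yield control, as a real decoding step would
        buffer.append(step)  # placeholder for one frame of audio tokens
        if len(buffer) == CHUNK_FRAMES:
            await queue.put(buffer)  # hand a full chunk to the decoder
            buffer = []
    if buffer:
        await queue.put(buffer)  # flush the final partial chunk
    await queue.put(None)  # sentinel: generation has finished


async def codec_stage(queue: asyncio.Queue) -> list:
    """Stand-in for the codec decoder: turns each chunk into 'audio'."""
    audio = []
    while (chunk := await queue.get()) is not None:
        audio.append([f"wave({frame})" for frame in chunk])
    return audio


async def run_pipeline(num_frames: int) -> list:
    queue = asyncio.Queue()
    # Both stages run concurrently in the same event loop.
    _, audio = await asyncio.gather(
        generation_stage(num_frames, queue), codec_stage(queue)
    )
    return audio


def synthesize(num_frames: int) -> list:
    return asyncio.run(run_pipeline(num_frames))
```

Calling `synthesize(10)` returns three decoded chunks covering 4, 4, and 2 frames. In a real deployment the stages would be separate scheduling loops exchanging tensors rather than coroutines exchanging Python lists.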
6.1 CUDA Graph Acceleration for Flow-Matching Transformer

The flow-matching transformer is the computational bottleneck of the generation stage. Each decoding step requires N function evaluations with CFG, which amounts to 2 × N forward passes per generated frame. To eliminate Python-level overhead and kernel-launch latency, the entire ODE solver is captured into CUDA graphs. At startup, an eager warmup pass is performed for each batch-size bucket, and the corresponding CUDA graph is then captured. During inference, the actual batch size is rounded up to the nearest bucket by padding the input with zeros. Next, the CUDA graph is replayed and the outputs are sliced back to the actual batch size. If the batch size exceeds the largest captured bucket, the model falls back to eager execution.

To evaluate the effect of CUDA graph acceleration, we compare the latency and real-time factor (RTF) when decoding with eager mode and with CUDA graphs. Table 7 reports results for a 500-character text input, a 10-second audio reference, and concurrency 1 on a single H200. Enabling CUDA graphs reduces latency by 47% (133 ms to 70 ms) and the RTF by a factor of 2.5 (0.258 to 0.103).

Table 7: Effect of CUDA graph acceleration on the flow-matching transformer.

| Configuration | Latency | RTF |
| --- | --- | --- |
| Eager mode | 133 ms | 0.258 |
| CUDA graph | 70 ms | 0.103 |

6.2 Asynchronous Chunked Streaming

The two pipeline stages run in separate scheduling loops. To overlap the autoregressive decoding of the generation stage with the waveform synthesis of the codec decoding stage, an asynchronous chunked streaming protocol is introduced. After each generation step, the vLLM-Omni transfer manager stores the audio tokens in a per-request buffer. Once the buffer reaches a pre-defined length, a chunk of tokens is emitted to the codec decoding stage. To ensure coherence between chunks, each emitted chunk includes a slice of previous frames in addition to the new frames.
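The chunk emission rule just described can be sketched as follows. This is a minimal illustration under stated assumptions, not the vLLM-Omni transfer manager: the function name `emit_chunks` and the parameters `chunk_size` and `overlap` are hypothetical, and frames are represented as plain integers rather than token tensors.

```python
from typing import Iterator, List


def emit_chunks(
    frames: List[int], chunk_size: int, overlap: int
) -> Iterator[List[int]]:
    """Yield chunks of new frames, each prefixed by up to `overlap` earlier frames."""
    buffer: List[int] = []  # per-request buffer of all frames seen so far
    emitted = 0  # number of frames already sent downstream
    for frame in frames:
        buffer.append(frame)
        if len(buffer) - emitted == chunk_size:
            # Include a slice of previously emitted frames as left context.
            start = max(0, emitted - overlap)
            yield buffer[start:]
            emitted = len(buffer)
    if len(buffer) > emitted:
        # Flush the remaining frames as a final, shorter chunk.
        start = max(0, emitted - overlap)
        yield buffer[start:]
```

With ten frames, `chunk_size=4`, and `overlap=2`, the chunks are `[0, 1, 2, 3]`, `[2, 3, 4, 5, 6, 7]`, and `[6, 7, 8, 9]`: every chunk after the first repeats the last two frames of its predecessor.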
This overlap enables the codec decoder's causal sliding-window attention to maintain temporal coherence across chunk boundaries.

6.3 Inference Throughput

With the techniques introduced in this section, Voxtral TTS achieves low-latency, high-throughput inference. Table 8 shows the serving performance on a single NVIDIA H200 from concurrency 1 to 32 with 500-character text inputs and 10-second audio references. As the concurrency is increased from 1 to 32, the throughput scales from 119 to 1,431 characters per second per GPU, a 12x increase, while latency remains sub-second. The wait rate, defined as the fraction of audio chunks for which the client must stall while waiting for outputs, remains zero across all concurrency levels. As concurrency grows, per-request RTF increases modestly to 0.302 at concurrency 32, still well within the real-time boundary. These results demonstrate that Voxtral TTS is suitable for production deployment: a single H200 can serve over 30 concurrent users with uninterrupted streaming output and sub-second time to first audio.

Table 8: Serving performance of Voxtral TTS on a single H200.

| Concurrency | Latency | RTF | Throughput (char/s/GPU) | Wait rate |
| --- | --- | --- | --- | --- |
| 1 | 70 ms | 0.103 | 119.14 | 0% |
| 16 | 331 ms | 0.237 | 879.11 | 0% |
| 32 | 552 ms | 0.302 | 1430.78 | 0% |

7 Conclusion

We introduced Voxtral TTS, a multilingual TTS model that leverages a hybrid architecture, combining auto-regressive generation of semantic tokens with flow-matching for acoustic tokens. The tokens correspond to those from Voxtral Codec, a speech tokenizer that combines ASR-distilled semantic tokens with FSQ acoustic tokens. Voxtral TTS is able to generate expressive, voice-cloned speech from as little as 3 seconds of reference audio, and is preferred to API baselines in human evaluations. We release Voxtral TTS as open weights under the CC BY-NC license to support further research and development of expressive TTS systems.

Core contributors

Alexander H.
Liu, Alexis Tacnet, Andy Ehrenberg, Andy Lo, Chen-Yo Sun, Guillaume Lample, Henry Lagarde, Jean-Malo Delignon, Jaeyoung Kim, John Harvill, Khyathi Raghavi Chandu, Lorenzo Signoretti, Margaret Jennings, Patrick von Platen, Pavankumar Reddy Muddireddy, Rohin Arora, Sanchit Gandhi, Samuel Humeau, Soham Ghosh, Srijan Mishra, Van Phung.

Contributors

Abdelaziz Bounhar, Abhinav Rastogi, Adrien Sadé, Alan Jeffares, Albert Jiang, Alexandre Cahill, Alexandre Gavaudan, Alexandre Sablayrolles, Amélie Héliou, Amos You, Andrew Bai, Andrew Zhao, Angele Lenglemetz, Anmol Agarwal, Anton Eliseev, Antonia Calvi, Arjun Majumdar, Arthur Fournier, Artjom Joosen, Avi Sooriyarachchi, Aysenur Karaduman Utkur, Baptiste Bout, Baptiste Rozière, Baudouin De Monicault, Benjamin Tibi, Bowen Yang, Charlotte Cronjäger, Clémence Lanfranchi, Connor Chen, Corentin Barreau, Corentin Sautier, Cyprien Courtot, Darius Dabert, Diego de las Casas, Elizaveta Demyanenko, Elliot Chane-Sane, Emmanuel Gottlob, Enguerrand Paquin, Etienne Goffinet, Fabien Niel, Faruk Ahmed, Federico Baldassarre, Gabrielle Berrada, Gaëtan Ecrepont, Gauthier Guinet, Genevieve Hayes, Georgii Novikov, Giada Pistilli, Guillaume Kunsch, Guillaume Martin, Guillaume Raille, Gunjan Dhanuka, Gunshi Gupta, Han Zhou, Harshil Shah, Hope McGovern, Hugo Thimonier, Indraneel Mukherjee, Irene Zhang, Jacques Sun, Jan Ludziejewski, Jason Rute, Jérémie Dentan, Joachim Studnia, Jonas Amar, Joséphine Delas, Josselin Somerville Roberts, Julien Tauran, Karmesh Yadav, Kartik Khandelwal, Kilian Tep, Kush Jain, Laurence Aitchison, Laurent Fainsin, Léonard Blier, Lingxiao Zhao, Louis Martin, Lucile Saulnier, Luyu Gao, Maarten Buyl, Manan Sharma, Marie Pellat, Mark Prins, Martin Alexandre, Mathieu Poirée, Mathieu Schmitt, Mathilde Guillaumin, Matthieu Dinot, Matthieu Futeral, Maxime Darrin, Maximilian Augustin, Mert Unsal, Mia Chiquier, Mikhail Biriuchinskii, Minh-Quang Pham, Mircea Lica, Morgane Rivière, Nathan Grinsztajn,
Neha Gupta, Olivier Bousquet, Olivier Duchenne, Patricia Wang, Paul Jacob, Paul Wambergue, Paula Kurylowicz, Philippe Pinel, Philomène Chagniot, Pierre Stock, Piotr Miłoś, Prateek Gupta, Pravesh Agrawal, Quentin Torroba, Ram Ramrakhya, Randall Isenhour, Rishi Shah, Romain Sauvestre, Roman Soletskyi, Rosalie Millner, Rupert Menneer, Sagar Vaze, Samuel Barry, Samuel Belkadi, Sandeep Subramanian, Sean Cha, Shashwat Verma, Siddhant Waghjale, Siddharth Gandhi, Simon Lepage, Sumukh Aithal, Szymon Antoniak, Tarun Kumar Vangani, Teven Le Scao, Théo Cachet, Theo Simon Sorg, Thibaut Lavril, Thomas Chabal, Thomas Foubert, Thomas Robert, Thomas Wang, Tim Lawson, Tom Bewley, Tom Edwards, Tyler Wang, Umar Jamil, Umberto Tomasini, Valeriia Nemychnikova, Vedant Nanda, Victor Jouault, Vincent Maladière, Vincent Pfister, Virgile Richard, Vladislav Bataev, Wassim Bouaziz, Wen-Ding Li, William Havard, William Marshall, Xinghui Li, Xingran Guo, Xinyu Yang, Yannic Neuhaus, Yassine El Ouahidi, Yassir Bendou, Yihan Wang, Yimu Pan, Zaccharie Ramzi, Zhenlin Xu.

7.1 Acknowledgements

We would like to thank Han Gao, Hongsheng Liu, Roger Wang, and Yueqian Lin from the vLLM-Omni team for their support and contributions in integrating Voxtral TTS into the vLLM-Omni framework.

References

Philip Anastassiou, Jiawei Chen, Jitong Chen, Yuanzhe Chen, Zhuo Chen, Ziyi Chen, Jian Cong, Lelai Deng, Chuang Ding, Lu Gao, Mingqing Gong, Peisong Huang, Qingqing Huang, Zhiying Huang, Yuanyuan Huo, Dongya Jia, Chumin Li, Feiya Li, Hui Li, Jiaxin Li, Xiaoyang Li, Xingxing Li, Lin Liu, Shouda Liu, Sichao Liu, Xudong Liu, Yuchen Liu, Zhengxi Liu, Lu Lu, Junjie Pan, Xin Wang, Yuping Wang, Yuxuan Wang, Zhengnan Wei, Jian Wu, Chao Yao, Yifeng Yang, Yuanhao Yi, Junteng Zhang, Qidi Zhang, Shuo Zhang, Wenjie Zhang, Yang Zhang, Zilin Zhao, Dejian Zhong, and Xiaobin Zhuang.
Seed-tts: A family of high-quality versatile speech generation models, 2024.

Kaito Baba, Wataru Nakata, Yuki Saito, and Hiroshi Saruwatari. The t05 system for the VoiceMOS Challenge 2024: Transfer learning from deep image classifier to naturalness MOS prediction of high-quality synthetic speech. In IEEE Spoken Language Technology Workshop (SLT), pages 818–824, 2024. doi: 10.1109/SLT61566.2024.10832315.

Donald J. Berndt and James Clifford. Using dynamic time warping to find patterns in time series. In Proceedings of the 3rd International Conference on Knowledge Discovery and Data Mining, AAAIWS'94, page 359–370. AAAI Press, 1994.

Zalán Borsos, Raphaël Marinier, Damien Vincent, Eugene Kharitonov, Olivier Pietquin, Matt Sharifi, Dominik Roblek, Olivier Teboul, David Grangier, Marco Tagliasacchi, and Neil Zeghidour. Audiolm: A language modeling approach to audio generation. IEEE/ACM Transactions on Audio, Speech, and Language Processing, 31:2523–2533, 2023. doi: 10.1109/TASLP.2023.3288409.

Huiwen Chang, Han Zhang, Lu Jiang, Ce Liu, and William T. Freeman. Maskgit: Masked generative image transformer. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), pages 11315–11325, June 2022.

Alexandre Défossez, Jade Copet, Gabriel Synnaeve, and Yossi Adi. High fidelity neural audio compression. arXiv preprint, 2022.

Alexandre Défossez, Laurent Mazaré, Manu Orsini, Amélie Royer, Patrick Pérez, Hervé Jégou, Edouard Grave, and Neil Zeghidour. Moshi: a speech-text foundation model for real-time dialogue. arXiv preprint arXiv:2410.00037, 2024.

Brecht Desplanques, Jenthe Thienpondt, and Kris Demuynck. ECAPA-TDNN: Emphasized channel attention, propagation and aggregation in TDNN based speaker verification. In Interspeech 2020, pages 3830–3834, 2020. doi: 10.21437/Interspeech.2020-2650.

Jonathan Ho and Tim Salimans. Classifier-free diffusion guidance. arXiv preprint, 2022.
Woosuk Kwon, Zhuohan Li, Siyuan Zhuang, Ying Sheng, Lianmin Zheng, Cody Hao Yu, Joseph E. Gonzalez, Hao Zhang, and Ion Stoica. Efficient memory management for large language model serving with PagedAttention. In Proceedings of the ACM SIGOPS 29th Symposium on Operating Systems Principles, 2023. doi: 10.1145/3600006.3613165.

Matt Le, Apoorv Vyas, Bowen Shi, Brian Karrer, Leda Sari, Rashel Moritz, Mary Williamson, Vimal Manohar, Yossi Adi, Jay Mahadeokar, and Wei-Ning Hsu. Voicebox: Text-guided multilingual universal speech generation at scale. In Advances in Neural Information Processing Systems, volume 36, 2023. URL https://proceedings.neurips.cc/paper_files/paper/2023/hash/2d8911db9ecedf866015091b28946e15-Abstract-Conference.html.

Alexander H Liu, Sung-Lin Yeh, and James R Glass. Revisiting self-supervised learning of speech representation from a mutual information perspective. In ICASSP 2024-2024 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), pages 12051–12055. IEEE, 2024.

Alexander H. Liu, Andy Ehrenberg, Andy Lo, Clément Denoix, Corentin Barreau, Guillaume Lample, Jean-Malo Delignon, Khyathi Raghavi Chandu, Patrick von Platen, Pavankumar Reddy Muddireddy, Sanchit Gandhi, Soham Ghosh, Srijan Mishra, and Thomas Foubert. Voxtral, 2025.

Alexander H Liu, Kartik Khandelwal, Sandeep Subramanian, Victor Jouault, Abhinav Rastogi, Adrien Sadé, Alan Jeffares, Albert Jiang, Alexandre Cahill, Alexandre Gavaudan, et al. Ministral 3. arXiv preprint arXiv:2601.08584, 2026.

Fabian Mentzer, David Minnen, Eirikur Agustsson, and Michael Tschannen. Finite scalar quantization: VQ-VAE made simple, 2023.

Tu Anh Nguyen, Wei-Ning Hsu, Antony d'Avirro, Bowen Shi, Itai Gat, Maryam Fazel-Zarani, Tal Remez, Jade Copet, Gabriel Synnaeve, Michael Hassid, et al. Expresso: A benchmark and analysis of discrete expressive speech resynthesis. arXiv preprint, 2023.
Julian D Parker, Anton Smirnov, Jordi Pons, CJ Carr, Zack Zukowski, Zach Evans, and Xubo Liu. Scaling transformers for low-bitrate high-quality speech coding. arXiv preprint arXiv:2411.19842, 2024.

William Peebles and Saining Xie. Scalable diffusion models with transformers. In Proceedings of the IEEE/CVF International Conference on Computer Vision (ICCV), pages 4195–4205, October 2023.

Vadim Popov, Ivan Vovk, Vladimir Gogoryan, Tasnima Sadekova, and Mikhail Kudinov. Grad-TTS: A diffusion probabilistic model for text-to-speech. In Proceedings of the 38th International Conference on Machine Learning, volume 139 of Proceedings of Machine Learning Research, pages 8599–8608. PMLR, 2021. URL https://proceedings.mlr.press/v139/popov21a.html.

Ofir Press, Noah A Smith, and Mike Lewis. Train short, test long: Attention with linear biases enables input length extrapolation. arXiv preprint arXiv:2108.12409, 2021.

Alec Radford, Jong Wook Kim, Tao Xu, Greg Brockman, Christine McLeavey, and Ilya Sutskever. Robust speech recognition via large-scale weak supervision. In International conference on machine learning, pages 28492–28518. PMLR, 2023.

Rafael Rafailov, Archit Sharma, Eric Mitchell, Stefano Ermon, Christopher D. Manning, and Chelsea Finn. Direct preference optimization: Your language model is secretly a reward model. In Advances in Neural Information Processing Systems, 2023.

Hugo Touvron, Matthieu Cord, Alexandre Sablayrolles, Gabriel Synnaeve, and Hervé Jégou. Going deeper with image transformers. In Proceedings of the IEEE/CVF international conference on computer vision, pages 32–42, 2021.

Aaron Van Den Oord, Oriol Vinyals, et al. Neural discrete representation learning. Advances in neural information processing systems, 30, 2017.

Shikhar Vashishth, Harman Singh, Shikhar Bharadwaj, Sriram Ganapathy, Chulayuth Asawaroengchai, Kartik Audhkhasi, Andrew Rosenberg, Ankur Bapna, and Bhuvana Ramabhadran.
Stab: Speech tokenizer assessment benchmark. arXiv preprint, 2024.

Chengyi Wang, Sanyuan Chen, Yu Wu, Ziqiang Zhang, Long Zhou, Shujie Liu, Zhuo Chen, Yanqing Liu, Huaming Wang, Jinyu Li, Lei He, Sheng Zhao, and Furu Wei. Neural codec language models are zero-shot text to speech synthesizers. arXiv preprint arXiv:2301.02111, 2023. URL https://arxiv.org/abs/2301.02111.

Haibin Wu, Naoyuki Kanda, Sefik Emre Eskimez, and Jinyu Li. Ts3-codec: Transformer-based simple streaming single codec. arXiv preprint, 2024.

Peiqi Yin, Jiangyun Zhu, Han Gao, Chenguang Zheng, Yongxiang Huang, Taichang Zhou, Ruirui Yang, Weizhi Liu, Weiqing Chen, Canlin Guo, Didan Deng, Zifeng Mo, Cong Wang, James Cheng, Roger Wang, and Hongsheng Liu. vllm-omni: Fully disaggregated serving for any-to-any multimodal models, 2026.

Bowen Zhang, Congchao Guo, Geng Yang, Hang Yu, Haozhe Zhang, Heidi Lei, Jialong Mai, Junjie Yan, Kaiyue Yang, Mingqi Yang, Peikai Huang, Ruiyang Jin, Sitan Jiang, Weihua Cheng, Yawei Li, Yichen Xiao, Yiying Zhou, Yongmao Zhang, Yuan Lu, and Yucen He. Minimax-speech: Intrinsic zero-shot text-to-speech with a learnable speaker encoder, 2025. URL https://arxiv.org/abs/2505.07916.

Xin Zhang, Dong Zhang, Shimin Li, Yaqian Zhou, and Xipeng Qiu. Speechtokenizer: Unified speech tokenizer for speech large language models. arXiv preprint, 2023.

Alon Ziv, Sanyuan Chen, Andros Tjandra, Yossi Adi, Wei-Ning Hsu, and Bowen Shi. Mr-flowdpo: Multi-reward direct preference optimization for flow-matching text-to-music generation, 2025.
