CHIRP dataset: towards long-term, individual-level, behavioral monitoring of bird populations in the wild

Long-term behavioral monitoring of individual animals is crucial for studying behavioral changes that occur over different time scales, especially for conservation and evolutionary biology. Computer vision methods have proven to benefit biodiversity …

Authors: Alex Hoi Hang Chan, Neha Singhal, Onur Kocahan

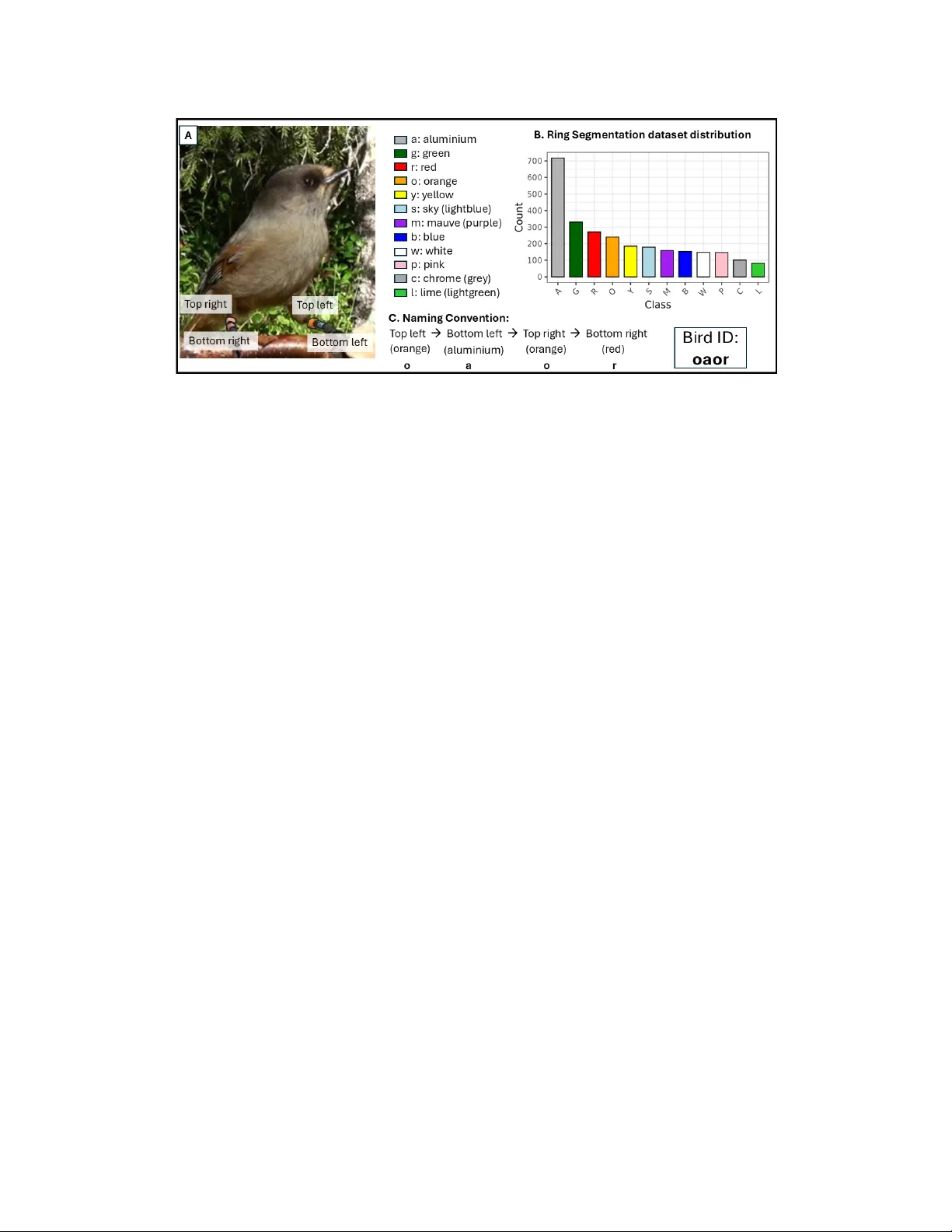

Alex Hoi Hang Chan (1,2,3), Neha Singhal (4), Onur Kocahan (4), Andrea Meltzer (1,2,3,5), Saverio Lubrano (1,2,3,5), Miyako H. Warrington (5,6), Michael Griesser (1,2,3,5,7)*, Fumihiro Kano (1,2,3)*, Hemal Naik (1,3,8)*

1 Centre for the Advanced Study of Collective Behaviour, University of Konstanz
2 Dept. of Collective Behavior, Max Planck Institute of Animal Behavior
3 Dept. of Biology, University of Konstanz
4 Dept. of Computer and Information Science, University of Konstanz
5 Luondua Boreal Field Station
6 School of Biological and Medical Sciences, Oxford Brookes University
7 Dept. of Zoology, Stockholm University
8 Dept. of Ecology of Animal Societies, Max Planck Institute of Animal Behavior
* Shared senior authorship.

{hoi-hang.chan, andrea.meltzer, saverio.lubrano, michael.griesser, fumihiro.kano}@uni-konstanz.de, {onurkocahan, neha.singhal.blr}@gmail.com, mwarrington@brookes.ac.uk, hnaik@ab.mpg.de

Abstract

Long-term behavioral monitoring of individual animals is crucial for studying behavioral changes that occur over different time scales, especially for conservation and evolutionary biology. Computer vision methods have proven to benefit biodiversity monitoring, but automated behavior monitoring in wild populations remains challenging. This stems from the lack of datasets that cover the range of computer vision tasks necessary to extract biologically meaningful measurements of individual animals. Here, we introduce such a dataset (CHIRP) with a new method (CORVID) for individual re-identification of wild birds. The CHIRP (Combining beHaviour, Individual Re-identification and Postures) dataset is curated from a long-term population of wild Siberian jays studied in Swedish Lapland, supporting re-identification (re-id), action recognition, 2D keypoint estimation, object detection, and instance segmentation.
In addition to traditional task-specific benchmarking, we introduce application-specific benchmarking with biologically relevant metrics (feeding rates, co-occurrence rates) to evaluate the performance of models in real-world use cases. Finally, we present CORVID (COlouR-based Video re-ID), a novel pipeline for individual identification of birds based on the segmentation and classification of colored leg rings, a widespread approach for visual identification of individual birds. CORVID offers a probability-based ID tracking method by matching the detected combination of color rings with a database. We use application-specific benchmarking to show that CORVID outperforms state-of-the-art re-id methods. We hope this work offers the community a blueprint for curating real-world datasets from ethically approved biological studies to bridge the gap between computer vision research and biological applications.

1 Introduction

Behavior is often the first response of animals when adapting to environmental changes [53, 55]. Measuring changes in behavior over time is hence crucial for research in behavioral ecology and conservation. In recent years, rapid technological developments have opened new avenues for measuring the behavior of wild animals, one of which is the application of computer vision to replace manual observation from images and videos.

Recent developments in computer vision have shown impressive applications for collecting behavior data in field (outdoor) conditions, from 2D [16, 24, 32, 60, 63] and 3D posture estimation [12, 30, 57] to action recognition [3, 9, 22, 56] and individual re-identification [20, 33, 52]. However, large-scale or long-term deployment of these applications is still limited, mainly due to two problems.
Firstly, computer vision research focuses on solving specific tasks such as object detection, re-id or keypoint estimation, whereas long-term monitoring often requires multiple computer vision tasks to be solved simultaneously. In long-term monitoring, biologists are generally interested in identifying who did what behavior. To achieve automated monitoring, re-identification and behavioral recognition tasks have to be solved together, either directly or using supporting sub-tasks like detection, tracking and keypoint estimation. Therefore, the classic strategy of developing task-specific methods is not suitable for behavioral monitoring, because it is often difficult to readily combine novel algorithms and deploy them directly for data collection. This challenge can be alleviated by curating large datasets with annotations for multiple tasks on the same real-world data.

Figure 1. CHIRP dataset summary. A) Solving the problem of who, with a video re-identification dataset of individuals. B) Solving the problem of what, with an action recognition dataset and 2D keypoint estimation. C) Additional annotations to support the main tasks, including segmentation (yellow) of color rings, and bounding boxes (green) and segmentation (yellow) of birds. D) Application-specific benchmark: 12 independent test videos with per-frame annotations of bounding boxes, identities and behaviors, with novel metrics on errors related to biological measures like individual feeding rates and paired co-occurrence rates.

Secondly, traditional benchmarking processes lack additional metrics to support deployment for biological applications. While some recently published datasets provide annotations for a larger range of computer vision tasks (see supplementary; [17, 35, 36, 45-47]), benchmarking is often done for each individual task in isolation.
This creates ambiguity for users when selecting methods for deployment, because task-specific benchmarking does not reflect the impact of choosing a specific method on the final biological measurements. The unpredictable nature of error propagation has been demonstrated in recent case studies [8, 48], and a possible solution is to include a benchmarking mechanism that allows tasks to be combined and tested.

In this paper, we address these problems and introduce computer vision solutions for long-term, individual-level behavioral monitoring in the wild. Firstly, we present the CHIRP dataset (Combining beHaviour, Individual Re-identification and Postures), the first dataset focusing on social behaviors in a wild bird population, the Siberian jay (Perisoreus infaustus), a group-living corvid. Secondly, we present the application-specific benchmark, a novel evaluation paradigm that makes use of application-specific metrics like the feeding rates and co-occurrence rates of individuals. Our benchmarking allows novel methods to be tested directly for their impact on the final biological measurement, encouraging methods to be optimized for downstream applications instead of individual tasks. Thirdly, we introduce CORVID (COlouR-based Video re-IDentification), a novel automatic re-id framework that identifies birds via their individual color rings, as a baseline for the application-specific benchmark. While color leg rings are widely used to mark individuals in wild bird populations [2, 7], to the best of our knowledge, we present the first computer vision framework to automatically identify individual birds using color rings. We hope this work illustrates how datasets and evaluation procedures can be designed with clear deployment objectives, so that novel computer vision methods can be better applied to downstream biological understanding.
2 Related Works

Over the last decade, the popularity of animal-specific datasets has increased significantly. Initial datasets included task-specific data to tackle computer vision problems such as detection and tracking [58, 65], 2D and 3D keypoint estimation [16, 30, 34, 38, 64], action recognition [4, 5, 10, 23, 31] and re-identification (see [66]). These datasets have contributed to bringing computer vision innovations to animal studies, allowing biodiversity monitoring to be scaled and automated [54]. However, it is widely acknowledged that long-term studies involve solving multiple computer vision tasks together. In response, recent datasets are moving towards more holistic, real-world datasets that contain multiple computer vision tasks within the same study system (see supplementary).

Datasets such as Animal Kingdom [47] primarily focus on animal behavior recognition across 850 animal species, with ground-truth labels for action recognition, but also for object detection and keypoint estimation. However, the dataset was collated from internet-sourced YouTube videos, and the direct applicability of methods developed with it to biological studies remains unvalidated. Datasets like LoTE-animal [35] or Baboonland [17] use data directly sourced from biological studies and focus on action recognition, with support for a wide range of computer vision tasks, but do not provide re-id. Similarly, datasets like 3D-POP [45] and Bucktales [46] focus on tracking large groups for collective behavior and provide ground truth for bounding boxes, re-id and 2D/3D postures, but no annotations for individual activity. These datasets allow novel algorithms to be better bridged to downstream biological applications because they are curated from data collected for biological studies. In the CHIRP dataset, we follow the same blueprint to ensure that both behavior and identity annotations are supported for downstream applications.

Figure 2. Summary of leg color ring definitions and naming convention of the Siberian jays. A) Sample image of an individual, and a list of the different color rings. Ring positions are defined as top/bottom left/right from the bird's perspective. B) Class distribution of ring masks provided in the ring segmentation dataset. C) Naming convention: each bird is named in the order of top left, bottom left, top right, bottom right ring. The color combination of the bird in the picture is oaor: orange (o), aluminium (a), orange (o), red (r).

The WILD dataset [62] comes from a social behavior study and offers multi-view 3D tracking and re-id of cowbirds in a semi-wild environment. While not focusing on action recognition, that dataset addresses bird re-identification, with the bird subjects also fitted with color leg rings. However, the authors did not explicitly make use of color ring information for re-id, instead using an image classifier on image crops of the birds. Similarly, IndividualBirdID [20] uses cropped images of the back patterns of birds for re-id, showing that CNNs can reliably identify individuals across 3 bird species. Here, we approach the bird re-id problem from an additional perspective, using color information from the leg rings with CORVID.

Finally, ChimpACT [36] is a dataset on semi-captive chimpanzees in Leipzig Zoo, with ground-truth labels on identities, bounding boxes, postures and behaviors, focusing on longitudinal (long-term) monitoring of chimpanzee groups. ChimpACT provides only task-specific benchmarking results [36, 37], which makes it hard to evaluate whether individual model performances are sufficient to achieve accurate longitudinal individual-level behavioral monitoring.
In contrast, the CHIRP dataset includes application-specific benchmarks, with new metrics designed to evaluate the performance of algorithms directly in the context of the final use case.

3 The CHIRP Dataset

To facilitate long-term, individual-level behavioral monitoring, it is critical to record who does what (Figure 1). Thus, we first provide a large-scale video re-identification dataset to determine who is present in the video frame. Then, to classify what the individuals are doing, we provide annotations for action recognition of behavioral classes, and 2D keypoints for fine-scale kinematics. Below, we first describe the study system and data collection, then the provided annotations in detail, and finally the application-specific benchmark that complements the dataset. All data samples in the dataset are provided with a date of data acquisition, to allow for time-aware splits. We also note that except for the video re-id dataset, all datasets adopt an 80/20 train/test split. The complete dataset, code and metadata are available here: https://github.com/alexhang212/CHIRP_Dataset

3.1 Data collection

The CHIRP dataset was collected in a long-term study population of Siberian jays (Perisoreus infaustus) in Swedish Lapland (65°40' N, 19°0' E) between 2014 and 2022. Siberian jays are group-living birds (group size range: 2-7); groups typically consist of a breeding pair and non-breeders, which are either retained offspring or unrelated immigrants [18]. Groups are territorial year-round and can be found in predictable locations, allowing reliable monitoring. Each bird is fitted with an aluminum ring and a unique combination of 2 to 3 colored plastic rings of 11 unique colors (permit via Umeå Animal Ethics Board, A23-20 and A26-13, under the license of the Swedish Museum of Natural History).
This provides up to 1331 (11^3) possible unique combinations, allowing researchers to visually identify individuals in the field (Figure 2A). Color rings ensure monitoring accuracy across an individual's lifetime and are not affected by the changing plumage of birds. Due to the unique social structure of Siberian jays, the long-term study strives to answer questions related to the evolution of cooperative behavior and social buffering effects against environmental changes [28, 59].

In this study, videos are taken as part of a standardized behavioral recording protocol, where 15-30 min long videos (25 fps, 1920 x 1080) are recorded in each group using a standardized feeding perch. Researchers then manually annotate identity and behavior from the videos using the BORIS annotation software [21], e.g. feeding bouts, submissive behaviors, and displacements for each individual (see supplementary for the complete ethogram). BORIS [21] is widely used in animal behavior studies to manually code the timing of behavior bouts, and we used these existing annotations to reduce annotation effort. Overall, 443 unique videos (across 9 years, 2014-2022) were used to prepare the CHIRP dataset, and the date of each data sample is always provided for time-aware splits. We also ensured that, for all subsequent datasets, samples from the same behavioral video are never distributed across different splits. In addition, we collected multi-view data on jays foraging on the ground, which only contributes to the 2D keypoint dataset.

3.2 Problem 1: Who is present?

3.2.1 Video re-identification dataset

The video re-identification (re-id) dataset consists of 16,190 video clips (25 frames, 1 second each) of 183 unique individuals (Figure 1). Each individual has on average 89 samples, and 32.42% of birds (N=59) have samples across multiple years.
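Because identities in this dataset are ultimately color-ring combinations, the naming convention of Figure 2C can be made concrete with a small parser. This is a hypothetical helper, not part of the released CHIRP code: it assumes every ID is a four-letter string ordered top left, bottom left, top right, bottom right, and it uses the twelve single-letter color codes that appear in Figure 4 (only a = aluminium, o = orange and r = red are spelled out in the paper).

```python
# Hypothetical helper illustrating the ring naming convention (Figure 2C);
# assumes four-letter IDs and the 12 color codes shown in Figure 4.
COLOR_CODES = frozenset("abcglmoprswy")
POSITIONS = ("top_left", "bottom_left", "top_right", "bottom_right")


def parse_ring_id(bird_id: str) -> dict[str, str]:
    """Map a ring ID such as 'oaor' to the color at each ring position."""
    if len(bird_id) != len(POSITIONS) or not set(bird_id) <= COLOR_CODES:
        raise ValueError(f"unexpected ring ID: {bird_id!r}")
    return dict(zip(POSITIONS, bird_id))


def leg_pairs(bird_id: str) -> tuple[tuple[str, str], tuple[str, str]]:
    """Return the (top, bottom) color pair on the left leg and on the right leg."""
    rings = parse_ring_id(bird_id)
    return (
        (rings["top_left"], rings["bottom_left"]),
        (rings["top_right"], rings["bottom_right"]),
    )


print(parse_ring_id("oaor"))
# {'top_left': 'o', 'bottom_left': 'a', 'top_right': 'o', 'bottom_right': 'r'}
print(leg_pairs("oaor"))
# (('o', 'a'), ('o', 'r'))
```

Splitting an ID into per-leg pairs mirrors how CORVID groups detected rings into pairs before matching them against known birds (Section 4).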
We first train a YOLOv8 object detection model [29] (see Section 3.4) to detect birds within each 15-30 minute behavioral video. Then, using the manual BORIS annotations, 1-second cropped video clips (25 frames) are extracted from video segments where only one bird was present according to both BORIS and YOLO, allowing automatic matching of identities. We manually check the video clips to ensure the automatic ID assignment is correct, filtering out 8.3% of clips as false positives caused by manual mislabelling and false-positive YOLO detections. We ensure that all short clips from the same 15-30 minute behavioral video are always in the same data split, so that no data leakage from background information is possible.

Using the clips of individuals, we prepared a closed-set split, a disjointed split and an open-set split, based on the definitions formalized by Wildlife Datasets [66]. The closed-set split is closest to the final use case for the Siberian jay system, as researchers are always present when recording new videos, allowing new individuals to be acknowledged and added to the gallery. In the closed-set split, all individuals are in both the train and test sets (80/20 ratio), and the task is to assign individuals in the test set to individuals in the training set. Next, the disjointed split is created to test for generalization across systems. We assign approximately half of the individuals to the train set and half to the test set. Within the test set, we further assign all clips from a single behavioral video as the gallery, and the rest as queries. The re-identification task here is to match query clips to an individual within the gallery database.

Finally, the open-set split is prepared to test for robustness against new individuals, for alternative deployments that may not involve researchers on site (e.g. passive camera traps). We assign 20% and 80% of individuals as "unknown" and "known", respectively.
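The video grouping constraint and the known/unknown assignment can be sketched as follows. This is a minimal illustration under stated assumptions, not the procedure used to build the released splits: each clip is a dict with hypothetical keys `bird_id` and `video_id`, whole behavioral videos (rather than clips) are shuffled and split 80/20 so that no video contributes to both sets, and 20% of individuals are drawn at random as "unknown".

```python
import random


def split_by_video(clips, test_frac=0.2, seed=0):
    """Split clips ~80/20 by behavioral video, so no video is in both train and test."""
    videos = sorted({c["video_id"] for c in clips})
    random.Random(seed).shuffle(videos)
    test_videos = set(videos[: max(1, round(test_frac * len(videos)))])
    train = [c for c in clips if c["video_id"] not in test_videos]
    test = [c for c in clips if c["video_id"] in test_videos]
    return train, test


def open_set_split(clips, unknown_frac=0.2, test_frac=0.2, seed=0):
    """Hold out ~20% of individuals as 'unknown'; their clips only appear in test."""
    birds = sorted({c["bird_id"] for c in clips})
    random.Random(seed).shuffle(birds)
    unknown = set(birds[: max(1, round(unknown_frac * len(birds)))])
    known = [c for c in clips if c["bird_id"] not in unknown]
    train, test = split_by_video(known, test_frac, seed)
    # Unknown individuals are relabelled, so the task is "known or unknown, then which ID".
    test += [dict(c, bird_id="unknown") for c in clips if c["bird_id"] in unknown]
    return train, test


# Toy data: 10 birds, each seen in 3 of 12 videos (hypothetical IDs).
clips = [{"bird_id": f"b{i}", "video_id": f"v{(i + k) % 12}"} for i in range(10) for k in range(3)]
train, test = open_set_split(clips)
print(len(train), len(test))
```

A closed-set split would additionally need every individual to appear on both sides, which this simple video shuffle does not guarantee; a real implementation would have to check or enforce that.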
Within the known individuals, data samples are split into train and test sets (80/20 ratio), with data samples of the unknown individuals added to the test set. The re-identification task is to determine whether a data sample is "known" or "unknown" and, if known, to assign the correct ID.

Siberian jays are territorial and group members remain fairly consistent within each location, with occasional encounters with neighbouring territories [27]. Taking advantage of this biological feature, we offer two levels of metadata for each video: first, a short-list of individuals within the territory (N=2-4, mean 2.92), and second, a list of individuals that are also likely to be seen in that territory (neighbours; N=9-25, mean 14.58). This adds a layer of complexity and varying difficulty to the dataset, allowing domain-specific knowledge to be built into potential computer vision solutions for the re-id problem.

3.3 Problem 2: What are they doing?

3.3.1 Action recognition dataset

The action recognition dataset includes manual annotations of 1387 short video crops of birds (min 25 frames, max 74 frames, mean 66.73 frames) performing each behavior (Figure 1). We code "eat" as a bird pecking at the food, "submissive" as an individual displaying stereotyped wing-flapping behavior [27], and "others" as any other behavior, including vigilance, resting and flying (see Figure 3 for the class distribution). To speed up annotation, we first train a YOLOv8 model [29] (see Section 3.4) to extract bird tracks from each video, then use BORIS annotations to identify and extract video segments that contain behaviors of interest. Finally, we manually review and annotate each short clip with the appropriate behavior.

3.3.2 2D Keypoint estimation dataset

We provide a comprehensive 2D keypoint dataset of 1176 individual bird instances across 879 images (Figure 1), with manually annotated ground truth for 13 unique keypoints.
36% of frames are taken from standardized feeding videos, while 64% are taken from videos of another feeding context, where birds forage on the ground. Each instance also includes a corresponding bounding box annotation for the individual bird.

Figure 3. Class distribution for the action recognition dataset.

3.4 Additional annotations

In addition to the main tasks described above, we provide additional annotations to support them. Firstly, we provide annotations for object detection and instance segmentation: 1156 frames with 1669 bird instances, annotated with bounding boxes and segmentation masks of the birds and the feeding stick, as well as bounding boxes around the food. To reduce manual annotation time, we use SAM2 [49] to automatically generate bird masks from bounding box prompts, which we validate against 688 annotations, obtaining a mean intersection over union (IoU) of 0.84. Secondly, as the color rings are the most visually salient features that directly encode individual identities in images of Siberian jays, we also provide bounding boxes and segmentation masks of individual color rings. We provide annotations across 944 frames of cropped bird images, with 2713 unique ring instances of 12 unique color classes (Figure 2B).

Finally, to allow for training end-to-end or multi-modal methods, we also provide model-annotated datasets, using the current best models (see Section 5) to produce 2D keypoints and segmentation for the video re-id and action recognition datasets.

3.5 Application-specific benchmark

We provide 12 independent test videos of 35 individual birds with frame-by-frame annotations of eating behavior, identities and bounding boxes for the application-specific benchmark. These videos are not present in any of the other annotations provided, so no data leakage is possible.
We first use YOLOv8 trained on the bounding box annotations above to obtain 2D tracks, then manually assign a bird ID to each track. We match these tracks to additional BORIS annotations, in which an observer marks every instance where the beak of an individual touches the food when feeding. Since the tracks obtained from YOLO are fragmented (e.g., when an individual jumps from one side to the other), we use linear interpolation to join two tracks that were marked as the same individual. The resulting dataset consists of frame-by-frame bounding boxes with coded IDs and all feeding instances of each individual. In addition to acting as an application-specific benchmark, this dataset also serves as a multi-object tracking (MOT) benchmark, since it provides frame-by-frame bounding box annotations with identities.

Since we designed the application-specific benchmark specifically for the CHIRP dataset, we also introduce novel metrics to evaluate and compare the performance of models in application-relevant use cases (see supplementary methods for detailed descriptions). First, we evaluate two lower-level metrics: 1) the proportion of correct frame assignments, defined as the proportion of ground-truth tracks and frames that are assigned to the correct individual, to evaluate tracking and individual identification performance; and 2) the mean precision, recall and F1-score for individual feeding events, computed by splitting each video into 1 s time windows, with a true positive defined as pecking by the given individual detected within the same time window, averaged across all individuals. Next, we calculate two higher-level biological measures: 1) individual-level feeding rates (pecks/minute) and 2) co-occurrence rates, defined as the time spent together by each pair of individuals divided by the total video length.
For both biological measures, we computed the mean, median and standard deviation of the absolute errors, and Pearson's correlation between the predicted and ground-truth values.

4 CORVID: COlouR-based Video re-ID

To set an initial benchmark for the re-identification task, we propose a novel pipeline that detects individual color rings for individual identification. Attaching unique combinations of color rings to wild birds is common practice for population monitoring (861 "combination of uncoded color leg rings" projects covered by the cr-birds database [19]) and long-term demographic studies (105 of the 175 populations covered by the SPI-bird database are color ringed [14]), so a method for individual identification based on color rings is widely applicable. However, to the best of our knowledge, we are the first to directly leverage this feature of bird study systems to achieve individual identification through the detection of color ring patterns. Previous work uses computer vision methods to automatically detect tags placed on animals, including color barcodes [40, 43, 44, 51] and fiducial markers (e.g., QR codes or ArUco tags; [1, 13, 61]). Other work has also explored deep-learning-based classifiers to recognize individual birds, both in captivity and in the wild [20, 62]. However, unlike existing re-id approaches, our approach does not rely on any of the training data provided in the video re-id dataset, and relies only on the detection of color rings. This allows the method to generalize to any new individual, provided its ring combination is known and no new colors are introduced. The pipeline has three main components (Figure 4).
[Figure 4 schematic: Input clips → Mask2Former instance segmentation → Identify ring pairs (distance threshold) → Resize and convert to HSV space → Random forest color identification → Ring pair color probabilities pooled across frames → Possible birds list → Select most likely individual]

Figure 4. CORVID pipeline. Schematic of the color-based re-ID pipeline. 1-second clips from CHIRP are fed into a Mask2Former instance segmentation model to extract masks of rings. The rings are grouped into ring pairs based on a distance threshold, then resized and converted into HSV space. The images are fed into a random forest model to predict probabilities of each color, which are combined with the associated ring pair to create a probability matrix over every color pair, then pooled across frames. Finally, the most probable bird is selected from the possible birds that could be present in a given video.

Firstly, we detect individual rings using a Mask2Former instance segmentation model [11] trained on the ring segmentation dataset; the detected rings are then cropped, transformed into HSV space, and resized to 20x20 images. In the second step, we feed color histograms of these images into a random forest model trained on the ring segmentation dataset, which outputs confidence scores for each color. We formalize the problem as multi-class classification so that the model predicts a confidence score for every color class, since some color classes are similar to each other.
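The feature extraction and color classifier in the first two steps can be sketched as below. This is an illustration under assumptions, not the CORVID implementation: it uses Python's `colorsys` (HSV scaled to [0, 1], unlike OpenCV's 0-179 hue range), nearest-neighbour resizing (which selects the same pixels whether it is applied before or after the HSV conversion), 8 histogram bins per channel, and scikit-learn's `RandomForestClassifier`; the synthetic crops stand in for Mask2Former ring crops.

```python
import colorsys

import numpy as np
from sklearn.ensemble import RandomForestClassifier

N_BINS = 8  # histogram bins per HSV channel (assumed; not specified in the text)


def resize_nearest(img, size=20):
    """Nearest-neighbour resize of an H x W x 3 array to size x size."""
    h, w = img.shape[:2]
    rows = np.arange(size) * h // size
    cols = np.arange(size) * w // size
    return img[rows[:, None], cols[None, :]]


def hsv_histogram(crop_rgb):
    """Concatenated, normalised H, S and V histograms of a uint8 RGB ring crop."""
    small = resize_nearest(crop_rgb).astype(float) / 255.0
    hsv = np.array([colorsys.rgb_to_hsv(*px) for px in small.reshape(-1, 3)])
    hists = [np.histogram(hsv[:, ch], bins=N_BINS, range=(0.0, 1.0))[0] for ch in range(3)]
    return np.concatenate(hists) / len(hsv)


rng = np.random.default_rng(0)


def fake_crop(rgb, shape=(30, 24)):
    """Synthetic single-color crop with noise, standing in for a segmented ring."""
    noise = rng.integers(-20, 21, size=(*shape, 3))
    return np.clip(np.array(rgb) + noise, 0, 255).astype(np.uint8)


# Two similar colors, orange ("o") and red ("r"), 20 synthetic crops each.
ORANGE, RED = (230, 130, 30), (200, 30, 30)
X = np.array([hsv_histogram(fake_crop(c)) for c in [ORANGE] * 20 + [RED] * 20])
y = np.array(["o"] * 20 + ["r"] * 20)
clf = RandomForestClassifier(n_estimators=100, random_state=0).fit(X, y)

# Per-color confidence scores for a new crop, in the order of clf.classes_.
probs = clf.predict_proba([hsv_histogram(fake_crop(RED))])[0]
print(dict(zip(clf.classes_, probs)))
```

Because `predict_proba` returns a score for every class, an ambiguous crop keeps probability mass on several similar colors instead of committing to one, which is the motivation the text gives for the multi-class formulation.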
As the final step, we implement a matching algorithm that first identifies ring pairs using a distance threshold on the centroids of the ring detections, then sums the probabilities of every paired ring color combination, based on the outputs of the random forest classifier, across the 25 video frames. We then match the final scores against the metadata of possible IDs for the data sample, and identify the most likely ID for the given video clip. We refer to the supplementary material for more details on the pipeline and an exploration of the CHIRP ring segmentation dataset.

5 Benchmarking

To explore the performance of state-of-the-art models on the CHIRP dataset, we provide task-specific benchmarks for each of the main tasks proposed. Next, we apply a simple pipeline to the application-specific benchmark, to provide a baseline for how the best algorithms perform when combined for automated data extraction.

5.1 Video re-identification

For video re-id, we compare our proposed CORVID pipeline to MegaDescriptor, a foundation model for animal re-identification [66]. We compare CORVID with MegaDescriptor pre-trained on other animal datasets, and with MegaDescriptor fine-tuned on CHIRP (Table 1). For MegaDescriptor, we pooled frame-wise probabilities within a tracklet and assigned the ID with the highest average score. We only benchmarked the closed and disjointed splits, as we do not have a reliable way of distinguishing between known and unknown individuals with our proposed CORVID method. For each data split, we test three gallery conditions: the possible birds for the given data sample ("within territory"), the possible birds plus neighbours ("within territory + neighbours"), and all birds in the re-id dataset ("All").
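The matching step and the gallery conditions can be sketched together. This is a hypothetical reconstruction from the description above, not the authors' code: ring pairs are formed greedily from centroids within a distance threshold (15 px here, an assumed value), the upper ring of a pair is taken as the top ring, each pair contributes the outer product of its two color-probability vectors, and a candidate ID is scored by the pooled values of its two leg pairs. Because detected pairs are not assigned to a leg, IDs that differ only by swapping legs tie in this sketch. Passing the territory short-list, the short-list plus neighbours, or all birds as `gallery` corresponds to the three evaluation conditions.

```python
import numpy as np

COLORS = list("abcglmoprswy")  # the 12 ring color codes shown in Figure 4
IDX = {c: i for i, c in enumerate(COLORS)}


def pair_rings(centroids, max_dist=15.0):
    """Greedily pair ring detections whose centroids are within max_dist pixels.

    Returns (top, bottom) index pairs; image y grows downward, so top has smaller y.
    """
    pts = np.asarray(centroids, dtype=float)
    candidates = sorted(
        (float(np.linalg.norm(pts[i] - pts[j])), i, j)
        for i in range(len(pts))
        for j in range(i + 1, len(pts))
    )
    used, pairs = set(), []
    for dist, i, j in candidates:
        if dist <= max_dist and i not in used and j not in used:
            pairs.append((i, j) if pts[i, 1] < pts[j, 1] else (j, i))
            used.update((i, j))
    return pairs


def pooled_pair_scores(frames, max_dist=15.0):
    """Sum outer(p_top, p_bottom) over every ring pair in every frame of a clip.

    frames: list of (centroids, probs), probs being an (n_rings, 12) array of
    per-ring color probabilities from the color classifier.
    """
    scores = np.zeros((len(COLORS), len(COLORS)))
    for centroids, probs in frames:
        for top, bottom in pair_rings(centroids, max_dist):
            scores += np.outer(probs[top], probs[bottom])
    return scores


def rank_candidates(scores, gallery):
    """Order candidate IDs (e.g. 'oaor') by the pooled scores of their two leg pairs."""
    def score(bird_id):
        tl, bl, tr, br = (IDX[c] for c in bird_id)
        return scores[tl, bl] + scores[tr, br]
    return sorted(gallery, key=score, reverse=True)


def top_k_accuracy(rankings, true_ids, k):
    """Fraction of clips whose true ID is among the first k ranked candidates."""
    return sum(t in r[:k] for r, t in zip(rankings, true_ids)) / len(true_ids)


def soft(color, p=0.8):
    """Toy probability vector with mass p on one color (stands in for classifier output)."""
    v = np.full(len(COLORS), (1 - p) / (len(COLORS) - 1))
    v[IDX[color]] = p
    return v


# One bird 'oaor' seen in all 25 frames: left leg o over a, right leg o over r.
centroids = [(10, 10), (10, 20), (40, 10), (40, 22)]
probs = np.stack([soft("o"), soft("a"), soft("o"), soft("r")])
scores = pooled_pair_scores([(centroids, probs)] * 25)
ranking = rank_candidates(scores, gallery=["roao", "oaor", "mapg"])  # e.g. a territory short-list
print(ranking[0], top_k_accuracy([ranking], ["oaor"], k=1))  # oaor 1.0
```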
We found that CORVID outperforms MegaDescriptor under the within-territory and within-territory + neighbours constraints, but the contrary is true in the closed set when the gallery includes all individuals (Table 1). This suggests that explicitly detecting and using ring color information, as in the CORVID pipeline, can outperform state-of-the-art deep metric learning techniques, but only when the within-territory constraint is available.

5.2 Action Recognition

Next, we train a series of models for video action recognition using the MMAction2 library [41]. We benchmark video-based methods here, but posture-based methods can also be used. We train each model for 100 epochs and choose the best epoch in terms of top-1 accuracy on the test set (Table 2). Overall, C3D performs the best, with an accuracy of 0.72.

5.3 2D Keypoint Estimation

For keypoint estimation, we train models using the MMPose library [42]. We compute the mean and median Euclidean error, root mean squared error (RMSE) and percentage of correct keypoints (PCK@5, PCK@10), where a keypoint estimate counts as correct if it lies within 5% or 10% of the largest dimension of the ground-truth bounding box. We train all models for 100 epochs and select the best epoch based on test PCK. Table 3 shows the benchmarking results: ViTPose-large is the best-performing model, and all architectures perform well, as evident from the high PCK values. This indicates that the provided keypoint annotations allow accurate keypoint estimation models to be trained for further tasks like action recognition.

Table 1. Video re-ID benchmarks. We compare CORVID, pre-trained and fine-tuned MegaDescriptor across different evaluation settings. WT = within territory; WT+N = within territory + neighbours. Bold denotes the best-performing model for each metric.

| Method | Closed WT Top-1 | Closed WT Top-3 | Closed WT+N Top-1 | Closed WT+N Top-3 | Closed All Top-1 | Closed All Top-3 | Disjointed WT Top-1 | Disjointed WT Top-3 | Disjointed WT+N Top-1 | Disjointed WT+N Top-3 | Disjointed All Top-1 | Disjointed All Top-3 |
|---|---|---|---|---|---|---|---|---|---|---|---|---|
| CORVID | **0.66** | **0.96** | **0.29** | **0.49** | 0.05 | 0.07 | **0.69** | **0.97** | **0.31** | **0.53** | **0.06** | **0.13** |
| Pre-trained Mega | 0.28 | 0.67 | 0.19 | 0.41 | **0.10** | **0.19** | 0.31 | 0.62 | 0.14 | 0.32 | 0.05 | 0.10 |
| Fine-tuned Mega | 0.27 | 0.56 | 0.17 | 0.35 | **0.10** | 0.17 | 0.41 | 0.71 | 0.13 | 0.27 | 0.05 | 0.09 |

Table 2. Action recognition benchmarks. We compute precision, recall, F1-score and top-1 accuracy for each model. Bold denotes the best-performing model for each metric.

| Model | Precision | Recall | F1 | Accuracy |
|---|---|---|---|---|
| SlowFast | 0.460 | 0.678 | 0.548 | 0.678 |
| C3D | **0.675** | **0.715** | **0.684** | **0.715** |
| X3D | 0.460 | 0.678 | 0.548 | 0.678 |

Table 3. 2D keypoint estimation benchmarks. Mean, median and root mean squared error (RMSE) were computed using Euclidean distances between predicted and ground-truth keypoints. PCK@10 and PCK@5 stand for the percentage of correct keypoints within 10% and 5% of the ground truth, scaled by the largest dimension of the bounding box. Bold denotes the best-performing model for each metric.

| Model | Mean error (px) | Median error (px) | RMSE (px) | PCK@10 | PCK@5 |
|---|---|---|---|---|---|
| ResNet-50 | 12.03 | 7.568 | 20.65 | 0.940 | 0.832 |
| ResNet-101 | 12.47 | 7.491 | 21.24 | 0.940 | 0.830 |
| ResNet-152 | 10.91 | 7.245 | 17.94 | 0.961 | 0.848 |
| HRNet | 10.86 | 6.463 | 19.42 | 0.951 | 0.862 |
| ViTPose-small | 10.32 | 6.872 | 16.70 | 0.957 | 0.859 |
| ViTPose-large | **7.773** | **5.194** | **12.64** | **0.978** | **0.915** |

5.4 Application-specific benchmark

We implement a simple pipeline that combines different models and algorithms with the aim of extracting individual co-occurrence and feeding rates. The pipeline includes 1) object detection to identify bounding boxes of birds, 2) a tracking algorithm to combine detections into tracklets, 3) individual identification for each tracklet and 4) action recognition within each tracklet.
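How these stages fit together can be sketched as a skeleton. Detection and tracking (YOLOv8 and BoTSORT in the experiments described next) are assumed to have already produced tracklets; `Tracklet`, `identify` and `classify_segment` are hypothetical names standing in for the tracker output, the re-id method and the action recognition model, and tracklets are cut into consecutive 25-frame (1 s at 25 fps) segments. The per-bird frame sets it returns are the inputs that feeding-rate and co-occurrence computations need.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Callable, Sequence

SEGMENT_LEN = 25  # 1 s at 25 fps


@dataclass
class Tracklet:
    """Output of detection + tracking: one track ID and the frames it covers."""
    track_id: int
    frame_ids: list[int]


def run_pipeline(
    tracklets: Sequence[Tracklet],
    identify: Callable[[Tracklet], str],
    classify_segment: Callable[[Tracklet, list[int]], str],
) -> dict[str, dict[str, set[int]]]:
    """Stages 3 and 4: assign an ID per tracklet, then label its 1 s segments.

    Returns, per bird ID, the frames where it is present and the frames of
    segments classified as "eat".
    """
    per_bird = defaultdict(lambda: {"present": set(), "eat": set()})
    for trk in tracklets:
        bird = identify(trk)
        per_bird[bird]["present"].update(trk.frame_ids)
        for start in range(0, len(trk.frame_ids), SEGMENT_LEN):
            segment = trk.frame_ids[start:start + SEGMENT_LEN]
            if classify_segment(trk, segment) == "eat":
                per_bird[bird]["eat"].update(segment)
    return dict(per_bird)


# Toy usage with stand-in models (hypothetical IDs and labels).
tracks = [Tracklet(1, list(range(0, 60))), Tracklet(2, list(range(30, 80)))]
result = run_pipeline(
    tracks,
    identify=lambda t: "oaor" if t.track_id == 1 else "mapg",
    classify_segment=lambda t, seg: "eat" if seg[0] == 0 else "other",
)
print(sorted(result), len(result["oaor"]["eat"]))  # ['mapg', 'oaor'] 25
```

Keeping the ID and action models as plain callables makes it easy to swap one re-id method for another (or for random assignment) while holding the rest of the pipeline fixed, which is the comparison the benchmark is built around.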
As an initial experiment to determine how accuracy in task-specific metrics propagates to application-specific metrics, we compared three methods for ID assignment: CORVID, MegaDescriptor fine-tuned on the disjointed set, and random assignment (repeated 100 times to obtain mean estimates). For all three methods, we use the list of birds known to occupy the territory to constrain the candidates to 2–5 individuals. As solutions improve, this candidate list can be expanded to include neighbours and, eventually, the whole population. For the rest of the pipeline, we use YOLOv8 for object detection and BoTSORT (via the boxmot library [6]) for tracking. For action recognition, we use the best-performing C3D model, applied to tracklets split into 1 s segments. We also provide a human benchmark: an independent human coder annotated the first 5 minutes of each video, giving a baseline value for future models to reach.

We find that the performance differences between CORVID and MegaDescriptor on task-specific metrics (Table 1) carry over to the application-specific metrics, as evident from the higher performance when CORVID is used in the pipeline (Tables 4 and 5). Surprisingly, random assignment performs best on some metrics (Table 5), showing that the proposed methods still have room for improvement. We also find that MegaDescriptor performs worse than random assignment on most higher-level metrics (Figure 5), highlighting that the model is not suitable for deployment. Finally, errors from all pipelines remain high compared with the human benchmark for both individual feeding rates and co-occurrence rates (Figure 5, Table 5), so further improvements are needed, which highlights the value of the CHIRP dataset.

Table 4. Lower-level baseline metrics for the application-specific benchmark. Metrics evaluate tracking, ID assignment accuracy and behavior recognition performance. Bold denotes the best-performing model for each metric.

| Individual identification | Prop. correct frames | Peck precision | Peck recall | Peck F1 |
|---|---|---|---|---|
| CORVID | **0.647** | **0.485** | **0.725** | **0.537** |
| MegaDescriptor | 0.617 | 0.410 | 0.550 | 0.408 |
| Random | 0.331 | 0.303 | 0.436 | 0.327 |

Table 5. Higher-level, biologically relevant metrics. Comparison of individual feeding rates and co-occurrence rates across different pipeline configurations. Bold denotes the best-performing method for each metric, and arrows indicate whether a higher or lower value is better.

| Individual ID | Feeding rate: Mean ↓ | Feeding rate: Median ↓ | Feeding rate: SD ↓ | Feeding rate: r ↑ | Co-occurrence rate: Mean ↓ | Co-occurrence rate: Median ↓ | Co-occurrence rate: SD ↓ | Co-occurrence rate: r ↑ |
|---|---|---|---|---|---|---|---|---|
| CORVID | **9.000** | **8.131** | 7.342 | **0.582** | **0.041** | **0.019** | 0.057 | 0.654 |
| MegaDesc. | 13.14 | 8.604 | 13.08 | 0.505 | 0.056 | 0.028 | 0.063 | 0.557 |
| Random | 9.349 | 9.287 | **5.667** | 0.437 | **0.041** | 0.028 | **0.046** | **0.799** |
| Human | 1.88 | 0.80 | 3.47 | 0.910 | 0.028 | 0.007 | 0.053 | 0.913 |

6 Discussion and Limitations

Application-driven ML is increasingly important, prompting recent discussion of how computer vision innovations can be bridged to real-world applications [8, 50]. The CHIRP dataset is a task-diverse dataset consisting of existing data collected from an ongoing long-term study system. This ensures that the dataset is directly relevant for automated individual-level behavioral monitoring in the wild.

Figure 5. Application-specific benchmark results. Ground-truth measurements are compared with pipeline predictions to test how different components affect biological measurements. We compared the proposed CORVID pipeline, fine-tuned MegaDescriptor and random assignment for individual recognition. All pipelines used YOLOv8 for object detection, BoTSORT for tracking and C3D for action recognition. Absolute errors of A) individual-level feeding rates and B) co-occurrence rates, and correlations of C) individual-level feeding rates and D) co-occurrence rates. Individual feeding rates are defined as the number of times an individual pecks at the food (pecks/min), and co-occurrence rates are defined as the proportion of time two individuals were detected together, scaled by video length.

To further the goal of bridging computer vision and the application domain, we also introduce application-specific benchmarks: a collection of independent test videos with novel metrics that evaluate the ability of computer vision algorithms to extract biologically relevant measures. This mechanism acts as an independent "system test" for computer vision algorithms, as errors in different parts of a pipeline can propagate in unpredictable ways [8, 48]. While these newly proposed metrics are not meant to replace traditional task-specific benchmarking, they act as a bridge that allows biologists to directly evaluate whether computer vision solutions are sufficient for their application, and to apply architectures appropriately.

Another feature of application-driven ML is the availability of domain-specific knowledge when designing computer vision solutions [50]. We incorporate this concept throughout the design and benchmarking of the CHIRP dataset by providing color ring segmentations and metadata on the probable birds present, which constrain the re-ID problem. This added layer of complexity encourages future computer vision solutions to make use of the constraints of the study system, as we demonstrate with CORVID, which, contrary to traditional re-ID approaches, identifies individuals purely by detecting the presence of color rings.

The CORVID pipeline is more flexible than traditional approaches, as it does not rely on a gallery to match against and only requires a list of possible color combinations.
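To illustrate how a list of possible color combinations can stand in for a gallery, the minimal sketch below ranks candidate birds by how well their ring colors explain the rings detected on one bird. It is not the CORVID implementation: the scoring rule (matched minus unmatched detections, computed as a multiset overlap) and the bird IDs and color names are hypothetical. The second call shows how adding a bird from outside the territory with an overlapping combination produces a tie, which mirrors the dependence on the within-territory constraint.

```python
from collections import Counter


def rank_candidates(detected_colors, candidates):
    """Rank candidate birds by how well their color-ring combination fits the detections.

    detected_colors: ring colors detected on one bird, e.g. ["red", "blue"].
    candidates: bird ID -> list of that bird's ring colors (hypothetical format).
    Score = detections explained by the combination minus detections it cannot
    explain (a hypothetical rule). Ties keep the order of `candidates`.
    """
    detected = Counter(detected_colors)
    scores = {}
    for bird, rings in candidates.items():
        matched = sum((detected & Counter(rings)).values())  # multiset overlap
        unmatched = sum(detected.values()) - matched
        scores[bird] = matched - unmatched
    ranking = sorted(scores, key=scores.get, reverse=True)  # stable for ties
    return ranking, scores


if __name__ == "__main__":
    territory = {
        "J1": ["red", "blue", "white"],
        "J2": ["green", "yellow", "white"],
        "J3": ["red", "green", "metal"],
    }
    print(rank_candidates(["red", "blue"], territory))
    # (['J1', 'J3', 'J2'], {'J1': 2, 'J2': -2, 'J3': 0})

    # A neighbouring bird with an overlapping combination makes the top rank ambiguous.
    population = {**territory, "N7": ["red", "blue", "yellow"]}
    ranking, scores = rank_candidates(["red", "blue"], population)
    print(ranking[:2], scores["J1"] == scores["N7"])  # ['J1', 'N7'] True
```

With only a few candidates, a partial detection of two rings can be enough to single out a bird; as the candidate list grows, more combinations share the detected colors and ties become more likely.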
However, benchmarks on CHIRP showed that the accuracy of the framework depends on the constraint that only a limited set of birds can be present in a video (Table 1), which makes the method difficult to scale to larger bird populations. Future work could combine it with image-based methods that compute appearance similarity, as demonstrated in other bird species [20, 62]. This will be important because many birds change appearance over time or across seasons.

We now discuss some limitations of the CHIRP dataset. Firstly, in contrast to the ChimpACT dataset [36], the annotations in the current dataset were made on different data subsets. This stems from our annotation strategy, which focused manual annotation efforts on diverse frames instead of video sequences as in ChimpACT [36]. To mitigate this, we provide model-annotated 2D keypoints and segmentations for the re-ID and action recognition datasets.

Secondly, compared with other action recognition datasets, the number of annotations and behavioral classes in the dataset is limited and highly skewed towards feeding behavior (Figure 3). This primarily reflects the distribution of behaviors that jays perform on the feeder, where they spend most of their time feeding. The specific behaviors required depend on the final use case; however, quantifying feeding and individual presence/co-presence is valuable for long-term behavioral monitoring, in relation to food acquisition and to understanding the evolution of social behaviors [15, 27, 39]. In addition, other behaviors such as vigilance on the feeder (head held upright to identify predators [25, 26]) can be reliably extracted from body postures if 2D keypoint estimates are accurate; thus, the types of behaviors that can be extracted using this dataset are not limited by the action recognition dataset.
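As a rough illustration of how a posture-defined behavior such as vigilance (head held upright) could be derived from 2D keypoint estimates, the sketch below flags frames where the body-to-head vector points close to image "up" and groups consecutive flagged frames into bouts. The keypoint names `body_center` and `head`, the 30° threshold and the 5-frame minimum bout length are hypothetical choices, not CHIRP's keypoint definitions or a validated vigilance criterion; in practice such thresholds would need to be checked against human-coded vigilance.

```python
import math


def head_angle_deg(kps):
    """Angle in degrees between the body->head vector and image 'up'.

    kps: keypoint name -> (x, y) in pixels, with y increasing downwards.
    'body_center' and 'head' are hypothetical keypoint names.
    0 deg means the head is directly above the body; > 90 deg means below it.
    """
    bx, by = kps["body_center"]
    hx, hy = kps["head"]
    dx, dy_up = hx - bx, by - hy  # flip y so that positive means 'up'
    return math.degrees(math.atan2(abs(dx), dy_up))


def vigilance_bouts(frames, max_angle=30.0, min_frames=5):
    """Return (start, end) frame ranges, end exclusive, where the head stays upright.

    frames: one keypoint dict per frame, or None when the bird was not detected.
    """
    bouts, start = [], None
    for i, kps in enumerate(frames):
        upright = kps is not None and head_angle_deg(kps) <= max_angle
        if upright and start is None:
            start = i
        elif not upright and start is not None:
            if i - start >= min_frames:
                bouts.append((start, i))
            start = None
    if start is not None and len(frames) - start >= min_frames:
        bouts.append((start, len(frames)))
    return bouts


if __name__ == "__main__":
    up = {"body_center": (100, 100), "head": (100, 60)}     # head straight above body
    down = {"body_center": (100, 100), "head": (140, 110)}  # head forward and lowered
    frames = [down] * 2 + [up] * 6 + [down] * 3 + [up] * 2
    print(vigilance_bouts(frames))  # [(2, 8)]
```

The minimum bout length is there so that single-frame keypoint jitter is not counted as vigilance; the trailing two upright frames in the example are dropped for that reason.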
Finally, the CHIRP dataset only includes data from a single species and study system, making it hard to evaluate whether the developed algorithms generalize. However, CHIRP is a first-of-its-kind dataset from a wild, long-term bird population, a type of resource limited by the years of effort required to establish the study system and collect the data. We hope that the approach used to prepare the CHIRP dataset, together with the novel application-specific benchmarking, can act as a blueprint for future work on how to design datasets carefully so that computer vision algorithms can be readily applied in the application domain. With well-designed datasets and algorithms, computer vision could revolutionize individual-level behavior monitoring for the study of animal behavior, conservation and beyond.

7 Acknowledgments

This work is funded by the Deutsche Forschungsgemeinschaft (DFG, German Research Foundation) under Germany's Excellence Strategy – EXC 2117 – 422037984, DFG project 15990824, DFG Heisenberg Grant no. GR 4650/21 and DFG project Grant no. FP589/20. MHW is supported by an Oxford Brookes University Emerging Leaders Research Fellowship. We thank Francesca Frisoni and Jyotsna Bellary for additional annotations for the application-specific benchmark. We thank all the researchers and field workers who have worked at the Luondua Boreal Field Station over the years for their contributions to the long-term dataset.

References

[1] Gustavo Alarcón-Nieto, Jacob M Graving, James A Klarevas-Irby, Adriana A Maldonado-Chaparro, Inge Mueller, and Damien R Farine. An automated barcode tracking system for behavioural studies in birds. Methods in Ecology and Evolution, 9(6):1536–1547, 2018.
[2] Guy Q.A. Anderson and Rhys E. Green. The value of ringing for bird conservation. Ringing & Migration, 24(3):205–212, 2009.
[3] Théo Ardoin and Cédric Sueur.
Automatic Identification of Stone-Handling Behaviour in Japanese Macaques Using LabGym Artificial Intelligence, 2023. [cs].
[4] Otto Brookes, Majid Mirmehdi, Colleen Stephens, Samuel Angedakin, Katherine Corogenes, Dervla Dowd, Paula Dieguez, Thurston C. Hicks, Sorrel Jones, Kevin Lee, Vera Leinert, Juan Lapuente, Maureen S. McCarthy, Amelia Meier, Mizuki Murai, Emmanuelle Normand, Virginie Vergnes, Erin G. Wessling, Roman M. Wittig, Kevin Langergraber, Nuria Maldonado, Xinyu Yang, Klaus Zuberbühler, Christophe Boesch, Mimi Arandjelovic, Hjalmar Kühl, and Tilo Burghardt. PanAf20K: A large video dataset for wild ape detection and behaviour recognition. International Journal of Computer Vision, 132(8):3086–3102, 2024.
[5] Otto Brookes, Maksim Kukushkin, Majid Mirmehdi, Colleen Stephens, Paula Dieguez, Thurston C. Hicks, Sorrel Jones, Kevin Lee, Maureen S. McCarthy, Amelia Meier, Emmanuelle Normand, Erin G. Wessling, Roman M. Wittig, Kevin Langergraber, Klaus Zuberbühler, Lukas Boesch, Thomas Schmid, Mimi Arandjelovic, Hjalmar Kühl, and Tilo Burghardt. The PanAf-FGBG Dataset: Understanding the Impact of Backgrounds in Wildlife Behaviour Recognition. pages 5433–5443, 2025.
[6] Mikel Broström. BoxMOT: pluggable SOTA tracking modules for object detection, segmentation and pose estimation models.
[7] B Calvo and R W Furness. A review of the use and the effects of marks and devices on birds. Ringing & Migration, 13(3):129–151, 1992.
[8] Alex Hoi Hang Chan, Otto Brookes, Urs Waldmann, Hemal Naik, Iain D. Couzin, Majid Mirmehdi, Noël Adiko Houa, Emmanuelle Normand, Christophe Boesch, Lukas Boesch, Mimi Arandjelovic, Hjalmar Kühl, Tilo Burghardt, and Fumihiro Kano. Towards Application-Specific Evaluation of Vision Models: Case Studies in Ecology and Biology, 2025.
[9] Hoi Hang Chan, Prasetia Putra, Harald Schupp, Johanna Köchling, Jana Straßheim, Britta Renner, Julia Schroeder, William D Pearse, Shinichi Nakagawa, Terry Burke, Michael Griesser, Andrea Meltzer, Saverio Lubrano, and Fumihiro Kano. YOLO-Behaviour: A simple, flexible framework to automatically quantify animal behaviours from videos. Methods in Ecology and Evolution, 16(4):760–774, 2025.
[10] Jun Chen, Ming Hu, Darren J Coker, Michael L Berumen, Blair Costelloe, Sara Beery, Anna Rohrbach, and Mohamed Elhoseiny. MammalNet: A large-scale video benchmark for mammal recognition and behavior understanding. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, pages 13052–13061, 2023.
[11] Bowen Cheng, Ishan Misra, Alexander G. Schwing, Alexander Kirillov, and Rohit Girdhar. Masked-attention Mask Transformer for Universal Image Segmentation, 2022. arXiv:2112.01527 [cs].
[12] Michael Chimento, Alex Hoi Hang Chan, Lucy M Aplin, and Fumihiro Kano. Peering into the world of wild passerines with 3D-SOCS: Synchronized video capture and posture estimation. Methods in Ecology and Evolution, 2025.
[13] James D. Crall, Nick Gravish, Andrew M. Mountcastle, and Stacey A. Combes. BEEtag: A Low-Cost, Image-Based Tracking System for the Study of Animal Behavior and Locomotion. PLOS ONE, 10(9):e0136487, 2015.
[14] Antica Culina, Frank Adriaensen, Liam D Bailey, Malcolm D Burgess, Anne Charmantier, Ella F Cole, Tapio Eeva, Erik Matthysen, Chloé R Nater, Ben C Sheldon, et al. Connecting the data landscape of long-term ecological studies: The SPI-Birds data hub. Journal of Animal Ecology, 90(9):2147–2160, 2021.
[15] Filipe CR Cunha and Michael Griesser. Who do you trust? Wild birds use social knowledge to avoid being deceived. Science Advances, 7(22):eaba2862, 2021.
[16] Nisarg Desai, Praneet Bala, Rebecca Richardson, Jessica Raper, Jan Zimmermann, and Benjamin Hayden. OpenApePose, a database of annotated ape photographs for pose estimation. eLife, 12:RP86873, 2023.
[17] Isla Duporge, Maksim Kholiavchenko, Roi Harel, Scott Wolf, Daniel I Rubenstein, Margaret C Crofoot, Tanya Berger-Wolf, Stephen J Lee, Julie Barreau, Jenna Kline, et al. BaboonLand dataset: Tracking primates in the wild and automating behaviour recognition from drone videos. International Journal of Computer Vision, pages 1–12, 2025.
[18] Jan Ekman and Michael Griesser. Siberian jays: Delayed dispersal in the absence of cooperative breeding. In Cooperative Breeding in Vertebrates: Studies of Ecology, Evolution, and Behavior, pages 6–18. Cambridge University Press, Cambridge, 2016.
[19] European colour-ring Birding. European colour-ring birding. https://cr-birding.org/, 1995–. Accessed: 2026-03-03.
[20] André C Ferreira, Liliana R Silva, Francesco Renna, Hanja B Brandl, Julien P Renoult, Damien R Farine, Rita Covas, and Claire Doutrelant. Deep learning-based methods for individual recognition in small birds. Methods in Ecology and Evolution, 11(9):1072–1085, 2020.
[21] Olivier Friard and Marco Gamba. BORIS: a free, versatile open-source event-logging software for video/audio coding and live observations. Methods in Ecology and Evolution, 7(11):1325–1330, 2016.
[22] Michael Fuchs, Emilie Genty, Klaus Zuberbühler, and Paul Cotofrei. ASBAR: an Animal Skeleton-Based Action Recognition framework. Recognizing great ape behaviors in the wild using pose estimation with domain adaptation. eLife, 13, 2024.
[23] Valentin Gabeff, Haozhe Qi, Brendan Flaherty, Gencer Sumbul, Alexander Mathis, and Devis Tuia.
MammAlps: A Multi-view Video Behavior Monitoring Dataset of Wild Mammals in the Swiss Alps. pages 13854–13864, 2025.
[24] Jacob M Graving, Daniel Chae, Hemal Naik, Liang Li, Benjamin Koger, Blair R Costelloe, and Iain D Couzin. DeepPoseKit, a software toolkit for fast and robust animal pose estimation using deep learning. eLife, 8:e47994, 2019.
[25] Michael Griesser. Nepotistic vigilance behavior in Siberian jay parents. Behavioral Ecology, 14(2):246–250, 2003.
[26] Michael Griesser and Magdalena Nystrand. Vigilance and predation of a forest-living bird species depend on large-scale habitat structure. Behavioral Ecology, 20(4):709–715, 2009.
[27] Michael Griesser, Peter Halvarsson, Szymon M. Drobniak, and Carles Vilà. Fine-scale kin recognition in the absence of social familiarity in the Siberian jay, a monogamous bird species. Molecular Ecology, 24(22):5726–5738, 2015.
[28] Michael Griesser, Szymon M Drobniak, Shinichi Nakagawa, and Carlos A Botero. Family living sets the stage for cooperative breeding and ecological resilience in birds. PLoS Biology, 15(6):e2000483, 2017.
[29] Glenn Jocher, Ayush Chaurasia, and Jing Qiu. Ultralytics YOLO, 2023.
[30] Daniel Joska, Liam Clark, Naoya Muramatsu, Ricardo Jericevich, Fred Nicolls, Alexander Mathis, Mackenzie W Mathis, and Amir Patel. AcinoSet: a 3D pose estimation dataset and baseline models for cheetahs in the wild. In 2021 IEEE International Conference on Robotics and Automation (ICRA), pages 13901–13908. IEEE, 2021.
[31] Maksim Kholiavchenko, Jenna Kline, Michelle Ramirez, Sam Stevens, Alec Sheets, Reshma Babu, Namrata Banerji, Elizabeth Campolongo, Matthew Thompson, Nina Van Tiel, Jackson Miliko, Eduardo Bessa, Isla Duporge, Tanya Berger-Wolf, Daniel Rubenstein, and Charles Stewart. KABR: In-Situ Dataset for Kenyan Animal Behavior Recognition from Drone Videos.
In 2024 IEEE/CVF Winter Conference on Applications of Computer Vision Workshops (WACVW), pages 31–40, Waikoloa, HI, USA, 2024. IEEE.
[32] Benjamin Koger, Edward Hurme, Blair R. Costelloe, M. Teague O'Mara, Martin Wikelski, Roland Kays, and Dina K. N. Dechmann. An automated approach for counting groups of flying animals applied to one of the world's largest bat colonies. Ecosphere, 14(6):e4590, 2023.
[33] Hjalmar S. Kühl and Tilo Burghardt. Animal biometrics: quantifying and detecting phenotypic appearance. Trends in Ecology & Evolution, 28(7):432–441, 2013.
[34] Ci Li, Ylva Mellbin, Johanna Krogager, Senya Polikovsky, Martin Holmberg, Nima Ghorbani, Michael J. Black, Hedvig Kjellström, Silvia Zuffi, and Elin Hernlund. The Poses for Equine Research Dataset (PFERD). Scientific Data, 11(1):497, 2024.
[35] Dan Liu, Jin Hou, Shaoli Huang, Jing Liu, Yuxin He, Bochuan Zheng, Jifeng Ning, and Jingdong Zhang. LoTE-Animal: A long time-span dataset for endangered animal behavior understanding. In Proceedings of the IEEE/CVF International Conference on Computer Vision, pages 20064–20075, 2023.
[36] Xiaoxuan Ma, Stephan Kaufhold, Jiajun Su, Wentao Zhu, Jack Terwilliger, Andres Meza, Yixin Zhu, Federico Rossano, and Yizhou Wang. ChimpACT: A longitudinal dataset for understanding chimpanzee behaviors. Advances in Neural Information Processing Systems, 36:27501–27531, 2023.
[37] Xiaoxuan Ma, Yutang Lin, Yuan Xu, Stephan P. Kaufhold, Jack Terwilliger, Andres Meza, Yixin Zhu, Federico Rossano, and Yizhou Wang. AlphaChimp: Tracking and Behavior Recognition of Chimpanzees, 2024. arXiv:2410.17136 [cs].
[38] Jesse D Marshall, Ugne Klibaite, Amanda Gellis, Diego E Aldarondo, Bence P Ölveczky, and Timothy W Dunn. The PAIR-R24M dataset for multi-animal 3D pose estimation. bioRxiv, pages 2021–11, 2021.
[39] Andrea Meltzer.
The ecological and social drivers of cooperation: Experimental field studies in a group-living bird. 2025.
[40] Luke Meyers, Josué Rodríguez Cordero, Carlos Corrada Bravo, Fanfan Noel, José Agosto-Rivera, Tugrul Giray, and Rémi Mégret. Towards automatic honey bee flower-patch assays with paint marking re-identification. arXiv preprint arXiv:2311.07407, 2023.
[41] MMAction2 Contributors. OpenMMLab's Next Generation Video Understanding Toolbox and Benchmark, 2020.
[42] MMPose Contributors. OpenMMLab Pose Estimation Toolbox and Benchmark, 2020.
[43] Máté Nagy, Gábor Vásárhelyi, Benjamin Pettit, Isabella Roberts-Mariani, Tamás Vicsek, and Dora Biro. Context-dependent hierarchies in pigeons. Proceedings of the National Academy of Sciences, 110(32):13049–13054, 2013.
[44] Máté Nagy, Jacob D Davidson, Gábor Vásárhelyi, Dániel Ábel, Enikő Kubinyi, Ahmed El Hady, and Tamás Vicsek. Long-term tracking of social structure in groups of rats. Scientific Reports, 14(1):22857, 2024.
[45] Hemal Naik, Alex Hoi Hang Chan, Junran Yang, Mathilde Delacoux, Iain D Couzin, Fumihiro Kano, and Máté Nagy. 3D-POP: An automated annotation approach to facilitate markerless 2D-3D tracking of freely moving birds with marker-based motion capture. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, pages 21274–21284, 2023.
[46] Hemal Naik, Junran Yang, Dipin Das, Margaret Crofoot, Akanksha Rathore, and Vivek Hari Sridhar. BuckTales: A multi-UAV dataset for multi-object tracking and re-identification of wild antelopes. Advances in Neural Information Processing Systems, 37:81992–82009, 2024.
[47] Xun Long Ng, Kian Eng Ong, Qichen Zheng, Yun Ni, Si Yong Yeo, and Jun Liu. Animal Kingdom: A large and diverse dataset for animal behavior understanding.
In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, pages 19023–19034, 2022.
[48] Omiros Pantazis, Peggy Bevan, Holly Pringle, Guilherme Braga Ferreira, Daniel J Ingram, Emily Madsen, Liam Thomas, Dol Raj Thanet, Thakur Silwal, Santosh Rayamajhi, et al. Deep learning-based ecological analysis of camera trap images is impacted by training data quality and size. arXiv preprint arXiv:2408.14348, 2024.
[49] Nikhila Ravi, Valentin Gabeur, Yuan-Ting Hu, Ronghang Hu, Chaitanya Ryali, Tengyu Ma, Haitham Khedr, Roman Rädle, Chloe Rolland, Laura Gustafson, Eric Mintun, Junting Pan, Kalyan Vasudev Alwala, Nicolas Carion, Chao-Yuan Wu, Ross Girshick, Piotr Dollár, and Christoph Feichtenhofer. SAM 2: Segment Anything in Images and Videos, 2024. arXiv:2408.00714 [cs].
[50] David Rolnick, Alan Aspuru-Guzik, Sara Beery, Bistra Dilkina, Priya L. Donti, Marzyeh Ghassemi, Hannah Kerner, Claire Monteleoni, Esther Rolf, Milind Tambe, and Adam White. Position: Application-Driven Innovation in Machine Learning. In Proceedings of the 41st International Conference on Machine Learning, pages 42707–42718. PMLR, 2024. ISSN: 2640-3498.
[51] Gabriel Santiago-Plaza, Luke Meyers, Andrea Gomez-Jaime, Rafael Meléndez-Ríos, Fanfan Noel, Jose Agosto, Tugrul Giray, Josué Rodríguez-Cordero, and Rémi Mégret. Identification of honeybees with paint codes using convolutional neural networks. Proceedings of the 19th International Joint Conference on Computer Vision, Imaging and Computer Graphics Theory and Applications, 772:779, 2024.
[52] Stefan Schneider, Graham W. Taylor, Stefan Linquist, and Stefan C. Kremer. Past, present and future approaches using computer vision for animal re-identification from camera trap data. Methods in Ecology and Evolution, 10(4):461–470, 2019.
[53] Andrew Sih, Maud CO Ferrari, and David J Harris.
Evolution and behavioural responses to human-induced rapid environmental change. Evolutionary Applications, 4(2):367–387, 2011.
[54] Devis Tuia, Benjamin Kellenberger, Sara Beery, Blair R Costelloe, Silvia Zuffi, Benjamin Risse, Alexander Mathis, Mackenzie W Mathis, Frank van Langevelde, Tilo Burghardt, et al. Perspectives in machine learning for wildlife conservation. Nature Communications, 13(1):792, 2022.
[55] Ulla Tuomainen and Ulrika Candolin. Behavioural responses to human-induced environmental change. Biological Reviews, 86(3):640–657, 2011.
[56] Yana van de Sande, Wim Pouw, and Lara M. Southern. Automated Recognition of Grooming Behavior in Wild Chimpanzees. Proceedings of the Annual Meeting of the Cognitive Science Society, 46(0), 2024.
[57] Urs Waldmann, Alex Hoi Hang Chan, Hemal Naik, Máté Nagy, Iain D. Couzin, Oliver Deussen, Bastian Goldluecke, and Fumihiro Kano. 3D-MuPPET: 3D Multi-Pigeon Pose Estimation and Tracking. International Journal of Computer Vision, 2024.
[58] Fasheng Wang, Ping Cao, Fu Li, Xing Wang, Bing He, and Fuming Sun. WATB: Wild Animal Tracking Benchmark. International Journal of Computer Vision, 131(4):899–917, 2023.
[59] Miyako H Warrington, David N Fisher, Jan Komdeur, Natalie Pilakouta, and Michael Griesser. Stronger together? A framework for studying population resilience to climate change impacts via social shielding. 2024.
[60] Charlotte Wiltshire, James Lewis-Cheetham, Viola Komedová, Tetsuro Matsuzawa, Kirsty E. Graham, and Catherine Hobaiter. DeepWild: Application of the pose estimation tool DeepLabCut for behaviour tracking in wild chimpanzees and bonobos. Journal of Animal Ecology, 92(8):1560–1574, 2023.
[61] Scott W. Wolf, Dee M. Ruttenberg, Daniel Y. Knapp, Andrew E. Webb, Ian M. Traniello, Grace C. McKenzie-Smith, Sophie A. Leheny, Joshua W. Shaevitz, and Sarah D. Kocher.
NAPS: Integrating pose estimation and tag-based tracking. Methods in Ecology and Evolution, 14(10):2541–2548, 2023.
[62] Shiting Xiao, Yufu Wang, Ammon Perkes, Bernd Pfrommer, Marc Schmidt, Kostas Daniilidis, and Marc Badger. Multi-view tracking, re-id, and social network analysis of a flock of visually similar birds in an outdoor aviary. International Journal of Computer Vision, 131(6):1532–1549, 2023.
[63] Shaokai Ye, Anastasiia Filippova, Jessy Lauer, Steffen Schneider, Maxime Vidal, Tian Qiu, Alexander Mathis, and Mackenzie Weygandt Mathis. SuperAnimal pretrained pose estimation models for behavioral analysis. Nature Communications, 15(1):5165, 2024.
[64] Hang Yu, Yufei Xu, Jing Zhang, Wei Zhao, Ziyu Guan, and Dacheng Tao. AP-10K: A benchmark for animal pose estimation in the wild. In Thirty-fifth Conference on Neural Information Processing Systems Datasets and Benchmarks Track (Round 2), 2021.
[65] Libo Zhang, Junyuan Gao, Zhen Xiao, and Heng Fan. AnimalTrack: A Benchmark for Multi-Animal Tracking in the Wild. International Journal of Computer Vision, 131(2):496–513, 2023.
[66] Vojtěch Čermák, Lukas Picek, Lukáš Adam, and Kostas Papafitsoros. WildlifeDatasets: An Open-Source Toolkit for Animal Re-Identification. In Proceedings of the IEEE/CVF Winter Conference on Applications of Computer Vision, pages 5953–5963, 2024.
