High-Resolution Inertial Dynamics with Time-Rescaled Gradients for Nonsmooth Convex Optimization

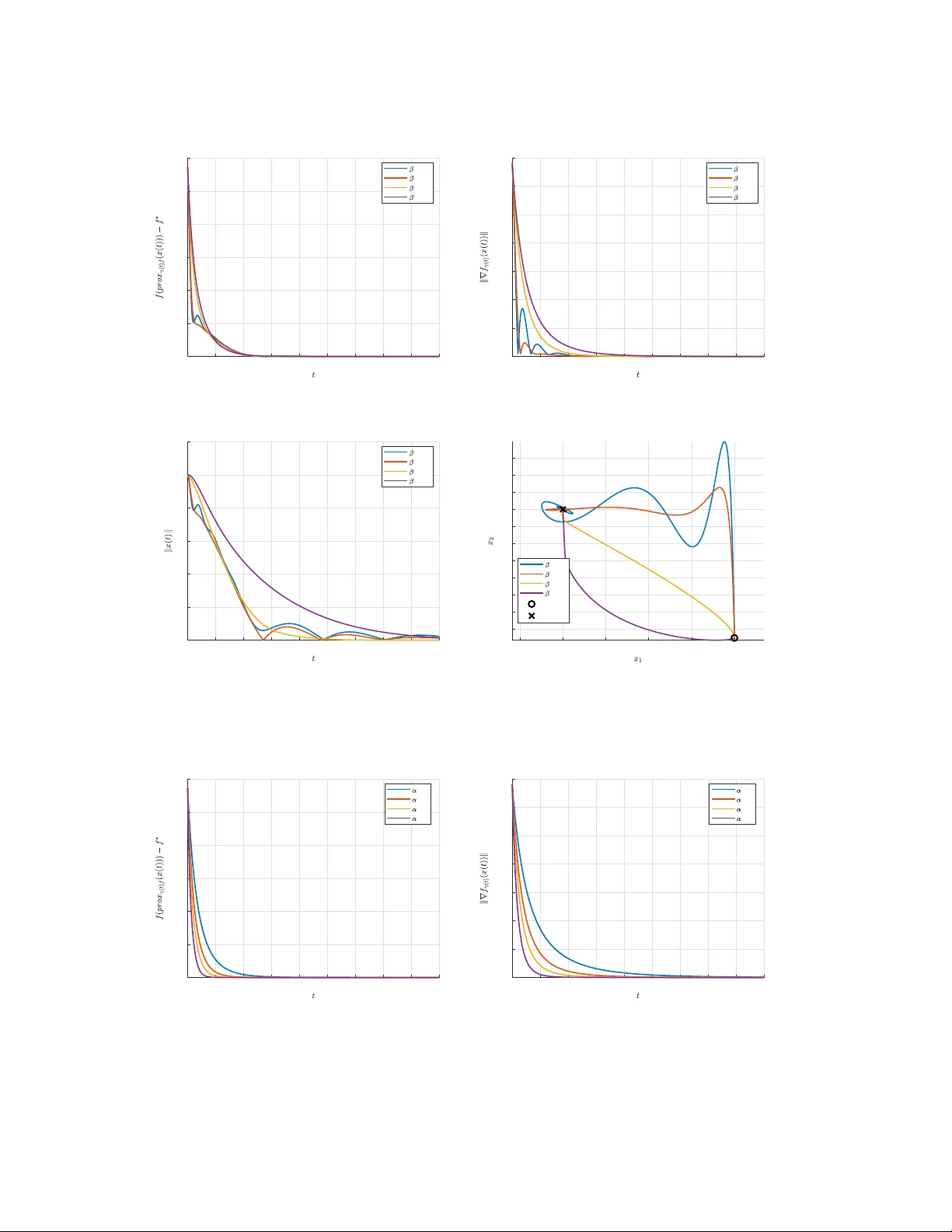

We study nonsmooth convex minimization through a continuous-time dynamical system that can be viewed as a high-resolution ODE for the Nesterov Accelerated Gradient (NAG) method, adapted to the nonsmooth case. We apply a time-varying Moreau envelope smoothing to a p…
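To make the Moreau envelope smoothing mentioned in the abstract concrete, here is a minimal sketch for a single illustrative function, f(x) = |x|. The choice of f and the helper names `prox_abs`, `moreau_env_abs`, and `grad_moreau_env_abs` are assumptions made for illustration and are not taken from the paper. The sketch relies only on the standard definitions: the envelope with parameter lam is the minimum over y of f(y) + (x - y)^2 / (2 * lam), it is attained at the proximal point, and its gradient is (x - prox(x)) / lam.

```python
def prox_abs(x: float, lam: float) -> float:
    """Proximal map of lam * |.| (soft-thresholding); illustrative choice of f."""
    if x > lam:
        return x - lam
    if x < -lam:
        return x + lam
    return 0.0


def moreau_env_abs(x: float, lam: float) -> float:
    """Moreau envelope of |.| with parameter lam, evaluated at the prox point."""
    p = prox_abs(x, lam)
    return abs(p) + (x - p) ** 2 / (2.0 * lam)


def grad_moreau_env_abs(x: float, lam: float) -> float:
    """Gradient of the envelope, (x - prox(x)) / lam; defined even at x = 0."""
    return (x - prox_abs(x, lam)) / lam


if __name__ == "__main__":
    # A smaller lam gives a tighter smooth approximation of |x|.
    for lam in (1.0, 0.5, 0.1):
        print(lam, moreau_env_abs(2.0, lam), grad_moreau_env_abs(0.05, lam))
```

In a time-varying scheme, lam would be a function of time; the schedule the authors use is not visible in this excerpt, so none is assumed here.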

Authors: Manh Hung Le, Andrea Simonetto