Optimization on Weak Riemannian Manifolds

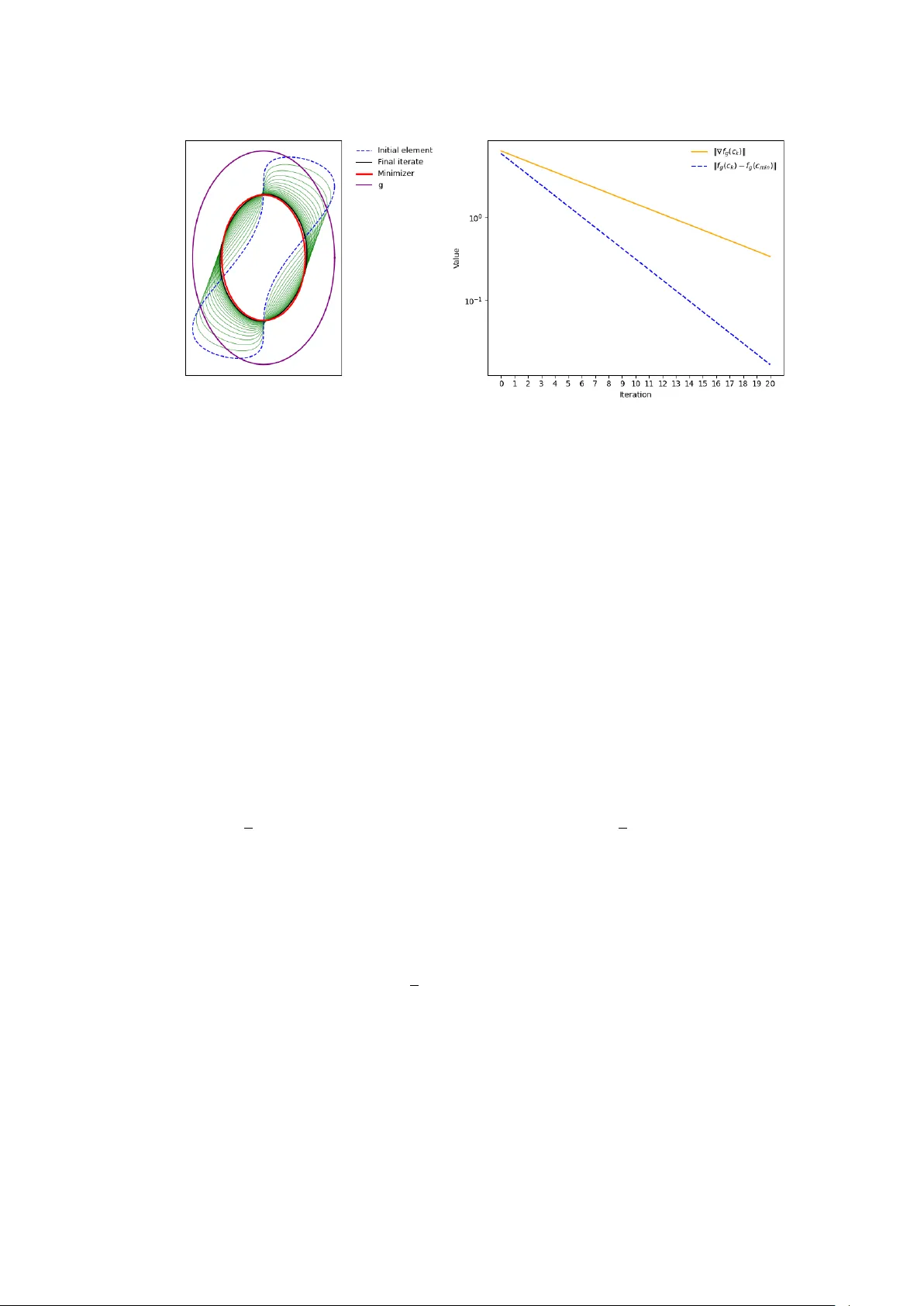

Riemannian structures on infinite-dimensional manifolds arise naturally in shape analysis and shape optimization. These applications lead to optimization problems on manifolds which are not modeled on Banach spaces. The present article develops the b…

Authors: Valentina Zalbertus, Max Pfeffer, Alex