A Wireless World Model for AI-Native 6G Networks

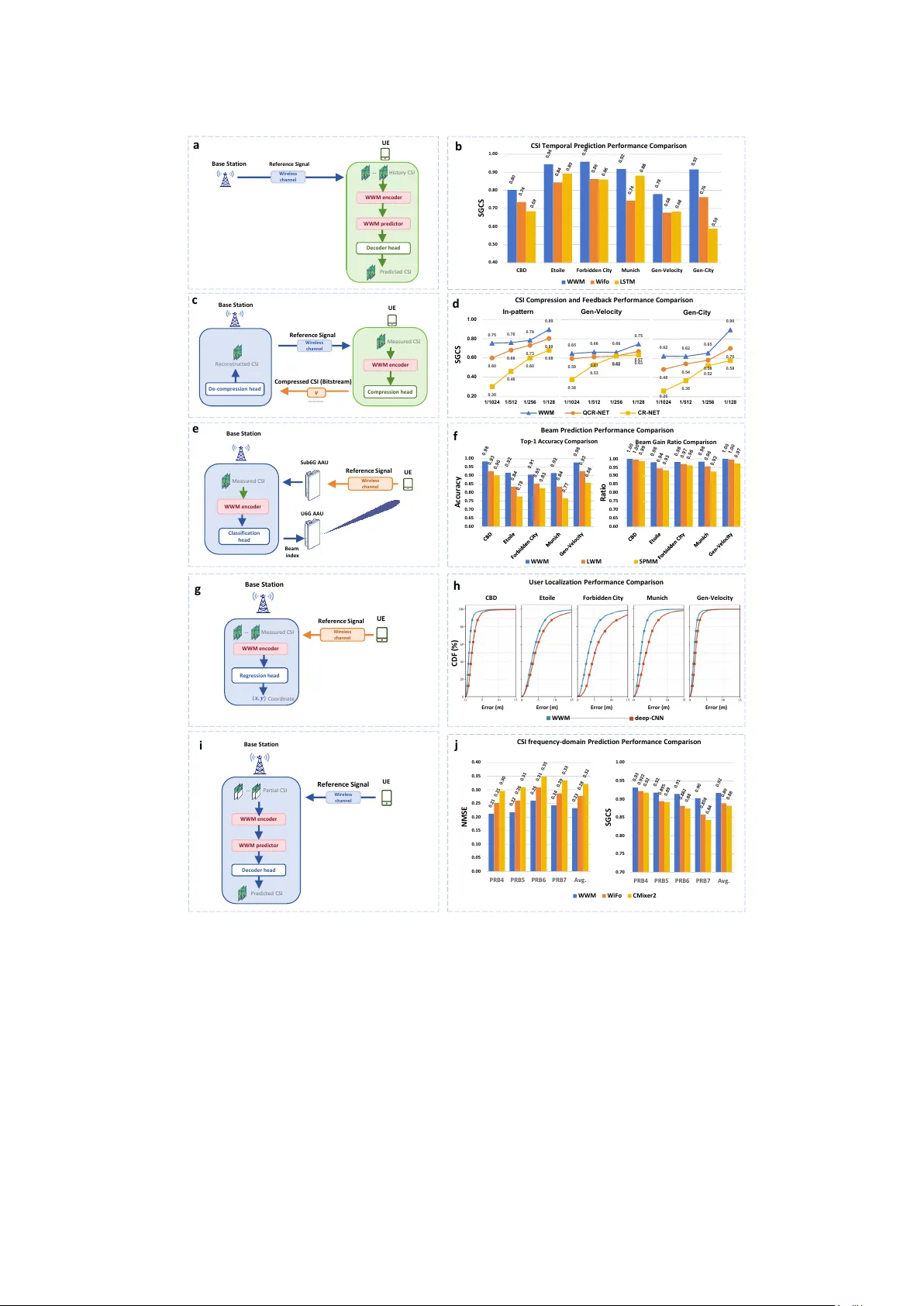

Integrating AI into the physical layer is a cornerstone of 6G networks. However, current data-driven approaches struggle to generalize across dynamic environments because they lack an intrinsic understanding of electromagnetic wave propagation. We in…

Authors: Ziqi Chen, Yi Ren, Yixuan Huang