Reinforcement learning for quantum processes with memory

In reinforcement learning, an agent interacts sequentially with an environment to maximize a reward, receiving only partial, probabilistic feedback. This creates a fundamental exploration-exploitation trade-off: the agent must explore to learn the hi…
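To make the exploration-exploitation trade-off concrete, here is a minimal sketch of the classical UCB1 rule on a Bernoulli multi-armed bandit. This is a standard textbook technique chosen for illustration. It is not the quantum, memory-dependent setting or method of this work. The function name `ucb1`, the arm means, and the horizon are hypothetical. At each round the agent picks the arm with the largest empirical mean (exploitation) plus a bonus that shrinks the more often an arm has been tried (exploration). Reward feedback arrives only for the pulled arm, and it is random.

```python
import math
import random


def ucb1(arm_means, horizon, seed=0):
    """Run UCB1 on a hypothetical Bernoulli bandit.

    Returns the number of pulls per arm and the total reward collected.
    Each arm a pays 1 with probability arm_means[a], else 0.
    """
    rng = random.Random(seed)  # seeded so runs are reproducible
    k = len(arm_means)
    counts = [0] * k           # how many times each arm was pulled
    sums = [0.0] * k           # cumulative reward observed per arm
    total = 0.0
    for t in range(1, horizon + 1):
        if t <= k:
            # Initialisation: pull every arm once so each estimate is defined.
            arm = t - 1
        else:
            # Empirical mean (exploit) + confidence bonus (explore).
            arm = max(
                range(k),
                key=lambda a: sums[a] / counts[a]
                + math.sqrt(2 * math.log(t) / counts[a]),
            )
        # Partial, probabilistic feedback: only the chosen arm's reward is seen.
        reward = 1.0 if rng.random() < arm_means[arm] else 0.0
        counts[arm] += 1
        sums[arm] += reward
        total += reward
    return counts, total


if __name__ == "__main__":
    counts, total = ucb1([0.2, 0.5, 0.8], horizon=2000)
    print("pulls per arm:", counts, "total reward:", total)
```

Over a long horizon most pulls should concentrate on the best arm. The weaker arms are still sampled occasionally, because their exploration bonus grows as `log(t)` while they sit idle.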

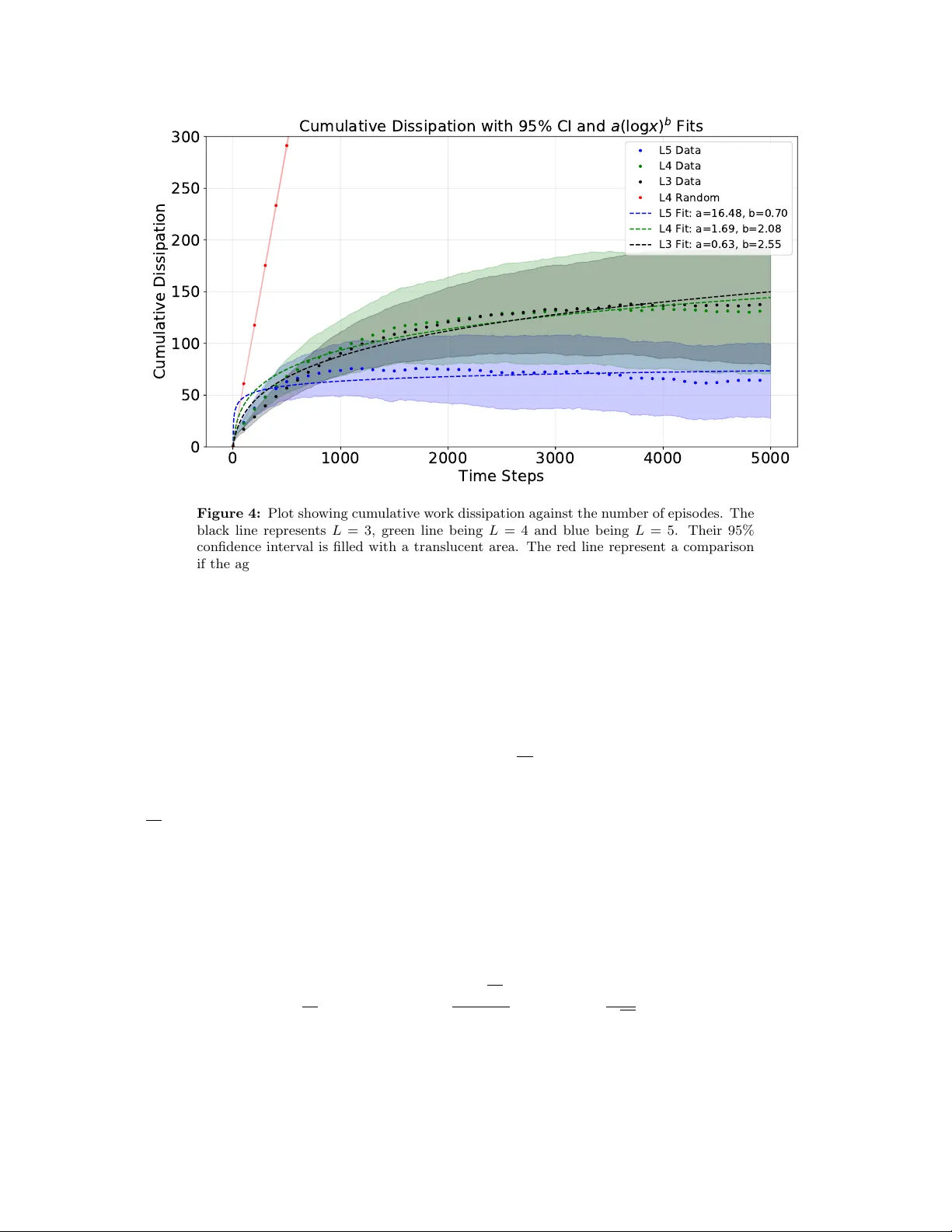

Authors: Josep Lumbreras, Ruo Cheng Huang, Yanglin Hu