The Value of Information in Resource-Constrained Pricing

Firms that price perishable resources -- airline seats, hotel rooms, seasonal inventory -- now routinely use demand predictions, but these predictions vary widely in quality. Under hard capacity constraints, acting on an inaccurate prediction can irr…

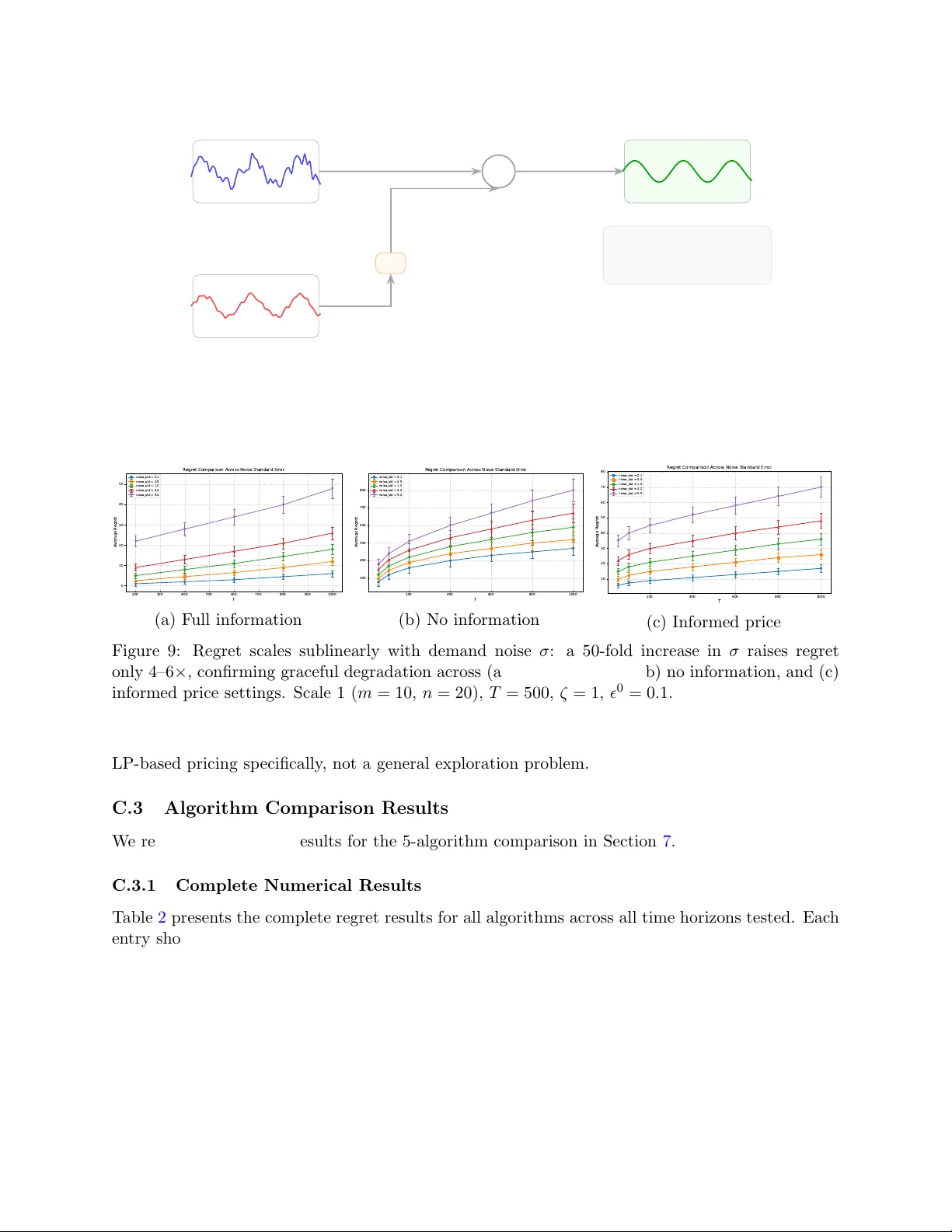

Authors: Ruicheng Ao, Jiashuo Jiang, David Simchi-Levi