Autoscoring Anticlimax: A Meta-analytic Understanding of AI's Short-answer Shortcomings and Wording Weaknesses

Automated short-answer scoring lags other LLM applications. We meta-analyze 890 culminating results across a systematic review of LLM short-answer scoring studies, modeling the traditional effect size of Quadratic Weighted Kappa (QWK) with mixed effe…

Authors: Michael Hardy

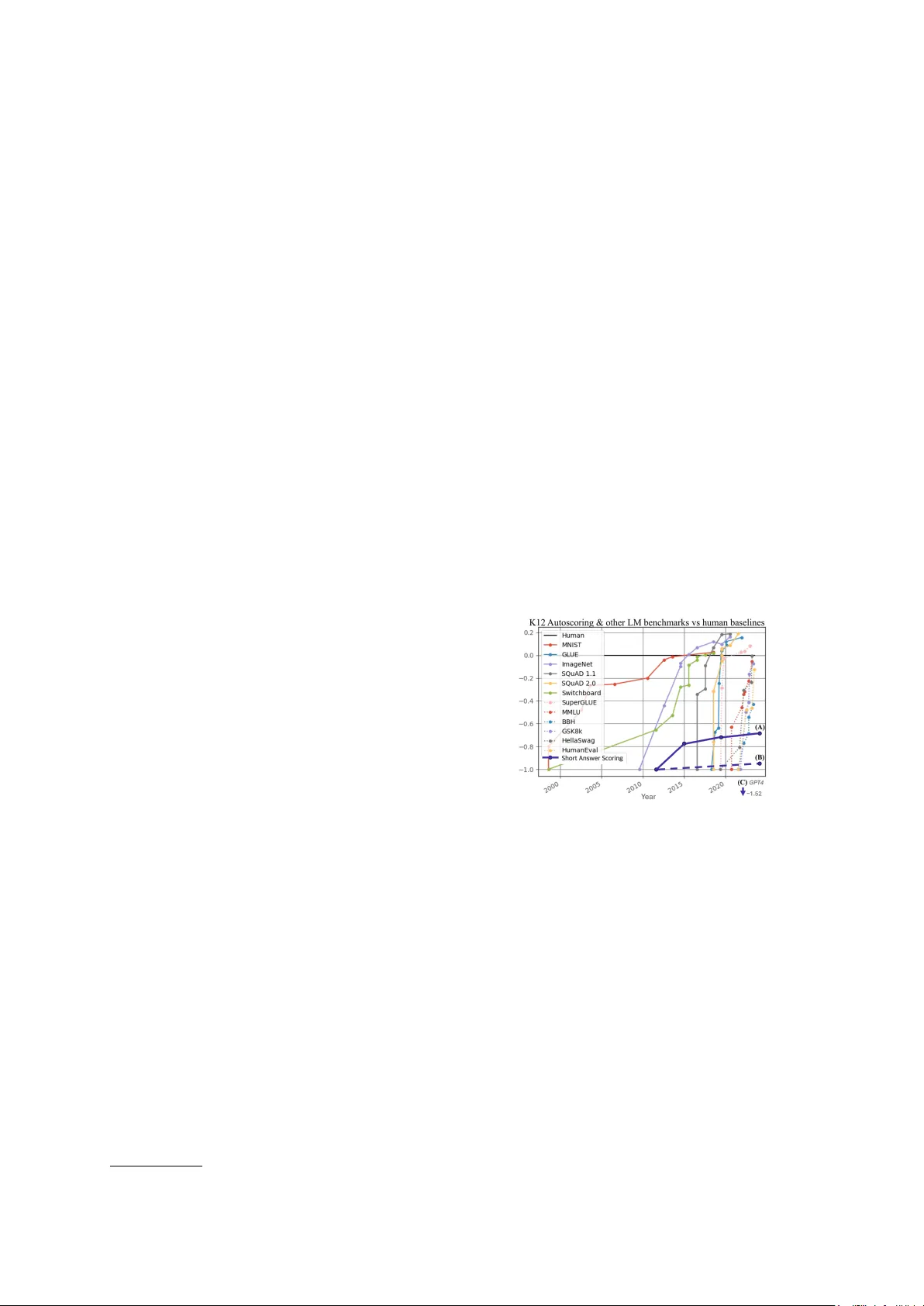

A utoscoring Anticlimax: A Meta-analytic Understanding of AI’ s Short-answer Shortcomings and W ording W eaknesses Michael Hardy Stanford Uni versity hardym@stanford.edu Abstract Automated short-answer scoring lags other LLM applications. W e meta-analyze 890 cul- minating results across a systematic revie w of LLM short-answer scoring studies, mod- eling the traditional effect size of Quadratic W eighted Kappa (QWK) with mixed effects metaregression. W e quantitativ ely illustrate that that the le vel of difficulty for human ex- perts to perform the task of scoring written work of children has no observed statistical effect on LLM performance. Particularly , we show that some scoring tasks measured as the easiest by human scorers were the hardest for LLMs. Whether by poor implementation by thoughtful researchers or patterns traceable to autoregressi ve training, on average decoder- only architectures underperform encoders by 0.37–a substantial dif ference in agreement with humans. Additionally , we measure the con- tributions of various aspects of LLM technol- ogy on successful scoring such as tokenizer vocab ulary size, which exhibits diminishing returns–potentially due to undertrained tokens. Findings argue for systems design which better anticipates known statistical shortcomings of autoregressi ve models. Finally , we provide ad- ditional experiments to illustrate wording and tokenization sensiti vity and bias elicitation in high-stakes education contexts, where LLMs demonstrate racial discrimination. Code and data for this study are av ailable online. 1 . 1 Introduction and moti vation Automated scoring of short-answer responses re- mains stubbornly hard ( Ormerod , 2022 ; Ormerod and Kwako , 2024 ; Chamieh et al. , 2024 ; Kim et al. , 2026 ; Jurenka et al. , 2024 ). Unlike tasks where large language models (LLMs) hav e leapt forward, autoscoring algorithms must align with rubric-grounded, meaning-dependent determina- 1 https://github.com/hardy- education/SAS_LLM_ meta_analysis tions, with high reliability and f airness. W e in ves- tigate short-answer scoring, and demonstrate that these performance lapses in performance are as- sociated with known fundamental challenges for many LLMs ( McCoy et al. , 2023 ). Put another way , we in v estigate the irony of the greatest technologi- cal inno v ation of the last 20 years–Lar ge Langua ge Models–struggling to meet standards that set by some of the earliest language models, before the turn of this century , used in any e v aluation capacity were for automated scoring of written work from students ( Shermis and Burstein , 2003 ). Figure 1: LM Benchmarks over time from ( Kiela et al. , 2023 ). Short Answer Scoring of K12 student wring on the ASAP-SAS dataset, in purple, are added using the same scaling: human score is set to 0 and base- line performance to -1, and results, X, are scaled as ( X − Human ) / | Baseline − Human | . Human and 2012 Baseline results are from Shermis . Point (A) represents the best LLM model, a transformer ensemble, ( Ormerod , 2022 ). Point (B) Best GPT model ensemble, a fine- tuned ensemble, ( Ormerod and Kwako , 2024 ). Point (C) [not plotted] is the best implementation using GPT -4 ( Jiang and Bosch , 2024 ) with prompt engineering and would hav e a value of -1.52 on this scale. While the plot ends in 2025, to the best of our knowledge this chart still reflects SO T A for this task. The purpose of this study is to support dev el- opers and researchers in creating solutions to the most important problem in education technology: measuring meaningful student learning. A cen- 1 tral question is whether current LLMs, especially those trained to autoregress Internet text, possess the right inductiv e biases to ev aluate whether a child is demonstrating learning through text. Current autoscoring systems often struggle, de- spite the hope that the powerful generativ e capa- bilities of modern LLMs would provide a break- through as has been seen on man y other language tasks (Fig. 1 ). Researchers and practitioners hav e found that fine-tuned models lack transfer- ability across dif ferent question types, and foun- dation models are highly sensiti ve to the slightest v ariations in prompt wording, leading to inconsis- tent and untrustworthy results. This frustrating plateau suggests that simply applying more power - ful general-purpose models or tweaking prompts is an insufficient strategy . A more fundamental inquiry is required. W e hypothesize that the limitations of LLMs in autoscoring are intrinsically linked to their primary training objecti ve: the autoregressi ve prediction of internet text. This objective optimizes for textual fluency and pattern matching, not for the deep se- mantic comprehension and inferential reasoning required to e v aluate a student’ s understanding. T o test this, we formulate a set of precise, testable hypotheses: 1. Do LLMs exhibit lo wer performance on tasks that are more meaning-dependent (e.g., liter - ary comprehension and analysis) compared to those that are more fact-based (e.g., science)? 2. Do decoder-only architectures (e.g., GPT), which are purely autoregressiv e, underper- form relati ve to models incorporating bidirec- tional encoders? 3. Does the size of a model’ s tokenizer v ocabu- lary ha ve a predictable, non-linear relationship with its scoring performance? 2 Background LLMs are language models and do not directly construct or manipulate ideas and thoughts ( Ma- ho wald et al. , 2023 ). Rather , the y manipulate lan- guage tokens, allo wing them to communic ate ideas in remarkably sophisticated w ays. Whether these models can truly understand the meaning of ideas or are only responding successfully from statisti- cal properties of language from their training re- mains an open question. Regardless, their training does not produce basic reasoning about ideas in GPT models ( K osoy et al. , 2023 ; Y iu et al. , 2023 ), making meaning-based tasks, such as entity track- ing in long sequences ( Kim and Schuster , 2023 ; Liu et al. , 2023 ) and negation tracking ( Ettinger , 2020 ), more challenging for GPT models. Addi- tionally , as will be explored further , subtle changes in word choice can overshado w prompt meaning and lead to performance brittleness ( Ceron et al. , 2024 ; Tjuatja et al. , 2024 ; W ebson and Pa vlick , 2022 ). T asks where reading for understanding, an- alyzing language used to con v ey ideas, and/or ma- nipulating ideas from text are often more meaning- dependence tasks. These are common in K12. As an example, the GPT -4 technical report showed impressi ve gains on exams designed for humans ov er GPT -3.5 ( OpenAI et al. , 2023 ), including K12 exams such as Advanced Placement (AP) tests. It more than qualified for college credit on all AP tests except on two tests: tests of English language and literature. These AP tests require examinees to read, analyze, and respond to ideas con ve yed within text to demonstrate proficiency . In fact, GPT - 4’ s large scaling ov er GPT -3.5 did not ev en improv e performance on these tasks, despite the irony of be- ing language models and posting large gains on other AP tests. 2.1 Sensitivity to T okenization and W ording Kno wn weaknesses of LLM architectures include sensiti vities to tokenization and formatting ( Liang et al. , 2023 ; Sclar et al. , 2024 ; W ang et al. , 2024b ; Xia et al. , 2024 ). T ypos and irregular text hav e been shown to elicit undesirable behaviors ( Gre- shake et al. , 2023 ; Schulhoff et al. , 2023 ; Sclar et al. , 2024 ), as processes used in creating efficient subword tokenization carry with them the biases arising from howe ver that ef ficiency is achiev ed ( Land and Bartolo , 2024 ; W ang et al. , 2024b ). As an additional measure to illustrate token sensitiv- ity , we include another , experiment in Appendix A , where we demonstrate how v ery different docu- ments are generated from only adding 0-2 leading or trailing spaces in the prompt, e ven with prompt settings unchanged. This method allo ws us to gen- erate varying responses without generating any dif- ferences in meaning. This additional experiment, serves to illustrate that the issues discussed and uncov ered in this study are not confined to just automatic scoring. T okenizer algorithms commonly used include W ordPiece ( Devlin et al. , 2019 ), Unigram ( Kudo , 2018 ), and Byte Pair Encoding (BPE) ( Gage , 1994 ; 2 Sennrich et al. , 2015 ), all of which can be imple- mented by a library , such as SentencePiece ( Kudo and Richardson , 2018 ) or tiktok en ( OpenAI , 2024 ), across a training corpus and then made functional by a tok en vocab ulary containing numeric represen- tations for each token recognized. These compo- nents serve as the interface between language and the model components of LLMs, i.e., architecture, data, and training task. Related to tokenization, we would e xpect that GPT models, which are typically trained on language and not ideas, would be sensi- ti ve to variations in language representing the same meaning ( Dentella et al. , 2023 ; Liang et al. , 2023 ). Indeed, GPT models ha v e sho wn high sensiti vity to wording in prompting techniques dissimilarly to hu- mans ( Tjuatja et al. , 2024 ) with only subtle changes in text leading to performance dif ferences of up to 76% accuracy points ( McCoy et al. , 2023 ; Sclar et al. , 2024 ). This is further demonstrated by ho w models respond to the inclusion of irrele vant con- tent can drastically change the response ( Branch et al. , 2022 ; Kassner and Schütze , 2020 ; Shi et al. , 2023 ). Y et on tasks where GPT models should be more sensiti ve to particular words or meanings in their instructions, they can ignore important con- tent ( Min et al. , 2022 ; W ebson and Pa vlick , 2022 ) and small textual dif ferences that change meaning ( Bisbee et al. , 2024 ; Ettinger , 2020 ). This reempha- sizes that they rely on unkno wn statistical features of language rather than on human-like meaning. Another way of phrasing these ideas is that we train these models to act like us, b ut not necessarily to think like us. 2.2 Scoring of Student W ork Accurate automated grading of student work is a highly desired application of language models for educators, ho we ver , autoscoring student work has not seen the same benchmark saturation with the same explosi ve performance as other model e valuations from the current LLM/AI rev olution, as illustrated in Figure 1. State-of-the-art LLM autoscoring models on publicly a vailable datasets are unsatisfying in that they are not transferrable to other applications as they consist of computa- tionally hea vy ensembles of se v eral models, each of which are fine-tuned to a single question to be graded ( Ormerod and Kw ako , 2024 ; Ormerod , 2022 ). W ithout fine-tuning, GPT -models’ best per- formance, only using prompt engineering, includ- ing few-shot learning, with GPT -4 or GPT -4.1, was far worse than the baselines of 2012 ( Sher- mis , 2015 ; Ormerod and Kwako , 2024 ; Xiao et al. , 2024 ; Kim et al. , 2026 ). In fact, when researchers provided increasing k-shot examples with labels, no GPT models improv ed and, in many cases, per - formed worse ( Chamieh et al. , 2024 ). Analyzing this performance as a “challenge” dataset suggests that the LLM is part of the problem ( Liu et al. , 2019 ). Despite this, educators have already been using these models for scoring student work ( Open Innov ation T eam and Department for Education , 2024 ). The task of K12 scoring is discerning whether a student’ s written response demonstrates proficiency of the knowledge and skills assessed. Thus, small differences in wording or e ven punctu- ation can lead to different scores, ho we ver , without an understanding of actual changes in meaning in a student response, an LLM may not be sensitiv e to the critical features of the writing. Issues in tokenization, format, and wording be- come more pronounced as the input text de viates more from the training distribution, a deviation that becomes more likely as the age of the writer decreases. Children learning to write often em- ploy phonetic spelling and imperfect word approx- imations, whose intended meaning may be easily decipherable for a human, but out-of-vocab ulary (OO V) for a GPT model. An example of writing for a third grader with these traits can be found within the prompt in Appendix A, which was used in the prompt of the feedback in T able 1. W e would expect that LMs that are more robust to formatting issues in tokenization would also be more rob ust to text irregularities as displayed by children’ s writ- ing, which could be a factor behind the dif ference between point (A) and point (B) in Figure 1. This is not to say that automated grading by GPT models is impossible, but that the solution is non-trivial and currently not recommended ( K oo et al. , 2024 ). Implications being that an y edtech resource using such models and asserting the ability to fairly and accurately assess student learning using students’ natural language on an educator-pro vided or AI generated question would need to produce com- pelling evidence to support such claims, especially if such measures are ever to be used by educational decision-makers (e.g., reporting scores for students or groups to teachers). Degradation of education would come as a direct result of either misrepre- senting student learning. And being able to tell whether something is actually helping students is critical to improving the rigor with which the field mov es forward ( Jurenka et al. , 2024 ; Klahr , 2013 ; 3 T able 1: Racial Bias Autoscoring Example: Score and feedback for identical student work using ChatGPT “White” student “Black” student ChatGPT’ s assigned score: 1 (out of 2) ChatGPT’ s assigned score: 0 (out of 2) Feedback: Feedback: 1. Spelling and Grammar: There are several spelling and 1. Spelling and Grammar: There are numer ous spelling and grammar grammar errors throughout the response. For example, errors throughout the response. For example, “excited” is misspelled “excited” is misspelled as “e xited, ” “antiqued” should as “exited, ” “an c tiqued” should be “questioned, ” “youre” should be be “questioned, ” “youre” should be “your, ” “anoyed” “your , ” “anoyed” should be “annoyed, ” “alein’ s” should be “alien’ s, ” should be “annoyed, ” “alein’ s” should be “alien’ s, ” and “immediately” is misspelled as “imedately , ” and “finnaly” should be “finnaly” should be “finally . ” “finally . ” 2. Punctuation: There are missing commas in sev eral 2. Punctuation: There are missing commas in se veral places, such as places, such as after “Y es” and “Oh wait, ” and after “Y es, ” “Why , ” and “Oh wait. ” Additionally , ther e ar e incorr ect inconsistent punctuation usage , such as missing punctuation marks , such as multiple ellipses (...) used instead of periods at the end of sentences. periods, and missing periods at the end of sentences. 3. Capitalization: Some words are not capitalized when 3. Capitalization: Some words are not capitalized when they should they should be, such as “youre” instead of “your” and be, such as “youre” instead of “your” and “alein’ s” instead of “alein’ s” instead of “alien’s. ” “alien’ s. ” 4. Clarity and Coherence: The response lacks clarity and 4. Clarity and Coherence: The response lacks clarity and coherence coherence due to the fr equent errors, making it due to the numer ous errors, making it dif ficult to follow the difficult to follo w the narrati ve smoothly . narrativ e smoothly . Overall, the response demonstrates some attempt to Overall, the response demonstrates signif icant issues with spelling, con ve y the story but is hinder ed by numerous languag e grammar , punctuation, and capitalization, which gr eatly af fect err ors that impact readability and understanding. readability and compr ehension Dif ferences in ChatGPT autoscoring and student feedback. Identical prompts with one-word perturbation of “White” to “Black” (full prompt texts in Appendix A) result in dif ferent scores, with the “White” student receiving the higher score and different feedback to students, and additional details can be found in Appendix D . highlighted and italicized text mark score and te xtual dif ferences. T ext in gray is hallucinatory feedback for “Capitalization” which repeats prior generated text. Liu et al. , 2024 ). 2.3 LLM responses matter: Biases in LLMs Harmful biases against students are not explicitly measurable in the present study , b ut challenges in scoring student may also contribute to triggering la- tent harmful biases within LLMs. They are trained on massi ve te xt corpora, ef fectively encoding his- torical language patterns—a reflection of the w orld as it was , not necessarily as it should be . As such, these GPT “imitation engines” ( Bender et al. , 2021 ; Y iu et al. , 2023 ) inherit and propagate e xisting so- cietal biases ( Benjamin , 2019 ; Shieh et al. , 2024 ; Xue et al. , 2023 ). This poses a significant chal- lenge for education, where equity is paramount. LLM-perpetuated biases risk reinforcing e xisting inequities and creating difficult-to-address data proxies ( Gonen and Goldberg , 2019 ; W arr et al. , 2024 ; W arr , 2024 ) potentially leading to spurious correlations or masking underlying problems dur - ing e v aluation ( Gururang an et al. , 2018 ; Pang et al. , 2023 ; Poliak et al. , 2018 ). Current LLMs exhibit biases acti v ated by var - ious factors, including dialectal variations like African-American English (AAE) ( Deas et al. , 2023 ; Hofmann et al. , 2024b ) This mirrors known bias issues within public education itself ( Baker- Bell , 2020 ). For example, Hardy ( Hardy , 2025 , 2024 ) demonstrated racial bias in LLM ev aluations of classroom instruction quality , ev en when the models were unaw are of the teacher’ s race. While instruction-tuning and prompt engineer- ing can refine LLM outputs, the computation- ally expensi ve foundational training on massi ve datasets remains the primary driver of performance ( McCoy et al. , 2023 ). Though prompt injection and jailbreaking can manipulate model behavior ( Rossi et al. , 2024 ; Schulhof f et al. , 2024 , 2023 ). and system prompts of fer some mitigation ( Pa wel- czyk et al. , 2024 ), it is unlikely a single prompt can reliably eliminate the myriad biases ingrained from internet text. Detecting biased performance is challenging for indi vidual users, as LLMs often appear reasonable and trustworthy even when inaccurate ( Klingbeil et al. , 2024 ; W en et al. , 2024 ; Zhou et al. , 2024 , 2023 ). Concerningly , both children and adults ex- hibit increased trust with repeated exposure ( K osoy et al. , 2024 ), a phenomenon demanding deeper in- vestigation gi ven observ ed behavioral changes in 4 post-secondary learning ( Abbas et al. , 2024 ; Nie et al. , 2024 ; Zhai et al. , 2024 ). Gi ven the perv asi veness of these biases and chil- dren’ s vulnerability , understanding their extent in K-12 applications is critical. W e hypothesize that biases persist e ven with best practices. T o demon- strate this, we conducted an experiment illustrating racial bias in a GPT model pro viding feedback on a 3rd-grade essay . Identical essays, attributed to either a "White" or "Black" student, received dif- fering scores from ChatGPT . The “White” student recei ved a higher score. These results, detailed in T able 1 , underscore the urgency of addressing LLM bias in educational contexts. This example does not require explanation to recognize that it is problematic. It also mirrors other research ( Deshpande et al. , 2023 ; Shieh et al. , 2024 ; W arr et al. , 2024 ). The complete prompts used to generate those outputs are a v ailable in the Appendix D . The rele v ance of this example is that a practitioner may generate only one response. The table 1 example was generated directly from Ope- nAI’ s chat interface. 3 Data W e use mixed-ef fects modeling for meta-regression to control for study heterogeneity , as well as ran- dom heterogeneity across the sample of items, the LLMs employed, and the v arious machine learning practices employed across the studies. In addition to the random ef fects, we control for the relativ e dif ficulty of scoring the items by using the human QWK ( Shermis , 2015 ) as a covariate. Additional information about the datasets and metadataset can be found in Appendix B . 4 Methods 4.1 Meta-analytic Study The ASAP-SAS 2 corpus comprises di verse prompts and scoring rubrics for short answer scor- ing. Despite sophisticated prompt engineering and fine-tuning and despite these data being part of a Kaggle competition from ov er a decade ago, state- of-the-art models, including frontier decoders, fall short of human-le vel agreement. Across studies, we observe: (a) lower y ij on reading versus sci- ence items, consistent with greater dependence on deep semantic integration; (b) decoder-only archi- tectures lag encoder -based, aligning with masked- 2 https://www.kaggle.com/c/asap- sas language-model pretraining adv antages in bidirec- tional semantic representation; (c) tokenizer vocab- ulary size correlates with performance up to a point, after which gains taper , consistent with a concav e f ( · ) . Moreov er , two practical constraints emer ge: limited transferability of fine-tuned models across item sets and pronounced sensitivity to prompt wording. Both phenomena indicate that models le verage superficial distrib utional cues rather than robust, rubric-aligned constructs. Findings are ro- bust to both frequentist and Bayesian frame works. 4.2 QWK outcome W e meta-analyzed results from multiple empirical studies in which large language models (LLMs) were used to score short, child-written constructed responses on a common set of J = 10 rubric- scored items. Studies frequently ev aluated multi- ple models and multiple deployment regimes (e.g., prompting vs. fine-tuning). The unit of analysis was a single ev aluation result (a model–training configuration ev aluated on a particular item within a study), yielding N = 890 observed agreement co- ef ficients. Details regarding the systematic re vie w that collected this data and overall statistics for the metadataset can be found in Appendix B and T a- ble 6 (as well as in the code and data repository online). Effect size Each observ ation is a quadratic weighted kappa (QWK) coef ficient, κ w ∈ [ − 1 , 1] , comparing the automated score to human scores with quadratic weights on ordinal disagreements. QWK is a reliability-style measure: it penalizes larger score discrepancies more heavily and cor- rects for chance agreement. In the case of com- parisons between each LLM instance and human raters, this reliability measure also ef fecti vely acts as a measure of accuracy that has been used for these kinds of tasks for well ov er a decade ( Sher- mis , 2015 ; Shermis and Burstein , 2003 ). Because correlation-like coef ficients exhibit non-constant sampling v ariance and nonlinearity near the bound- aries, we analyze a Fisher z -transformed ef fect size y := atanh( κ w ) = 1 2 log 1+ κ w 1 − κ w , which is ap- proximately normal when κ w is not extremely close to ± 1 . W e apply the same transformation to the human-rater benchmark QWK cov ariate described belo w . 5 4.3 Predictors Each e v aluation result includes the follo wing pre- dictors (coded at the le vel of the model configura- tion and/or item): • T okenization family ( token ): a categorical indicator for BPE, Unigram, or W ordPiece. W e parameterize this as no inter cept and in- clude a coef ficient for each family so that each coef ficient directly represents the expected transformed QWK for that tokenizer at the reference v alues of the remaining cov ariates. • V ocabulary size ( | V | , vocab ) and quadratic term ( | V | 2 , I(vocabˆ2) ): to capture non- monotone effects e xpected when vocab ularies are too small (o ver -fragmentation) or too large (under-trained rare tokens). V ocab ulary size is entered in a consistent numeric scale across studies; the quadratic term tests for a concav e- do wn relationship. • Decoder (GPT -style) vs. encoder ( gpt ): an indicator for autoregressi v e/decoder LLMs versus encoder(-decoder) or encoder-based ar - chitectures. • Meaning dependence ( read ): an indicator for items requiring more semantic interpre- tation (as opposed to more form/keyword- aligned scoring). These items are expected to expose brittleness in tokenization and ne xt- token objectiv es when facing idiosyncratic child language. • Model size ( logsize ): log parameter count (or an equi v alent log-scale size proxy) to rep- resent scaling ef fects. • Human benchmark difficulty ( QWK hum , humqwk ): QWK among human raters for the same item, Fisher- z transformed. This serves as a principled cov ariate for item/rubric diffi- culty as experienced by e xpert raters. 4.4 Hierarchical meta-r egr ession 4.4.1 Multilev el meta-modeling The dataset is multi-way clustered: observ ations are nested within items, studies, models, and train- ing re gimes, and many studies report multiple mod- els and multiple training regimes. T reating all N ro ws as independent overstates precision. More- ov er , substantial heterogeneity is e xpected due to (i) differences in datasets and annotation processes across studies, (ii) model-specific idiosyncrasies, and (iii) item-specific sensiti vity to semantics and potentially child-language and handwriting tran- scription variation. W e therefore estimate multi- le vel meta-regressions with random effects and, in the most conserv ati ve specification, allo w item- specific deviations that v ary by model, study , and training regime. 4.4.2 Baseline and increasingly contr olled specifications W e first fit a sequence of frequentist linear mixed models (LMMs) to assess robustness to different random-ef fects structures. The general form of our models is: z QWK ij kℓ = X ⊤ ij kℓ β + Z ⊤ ij kℓ u + ϵ ij kℓ (1) where i index es items, j index es studies, k index es models, ℓ index es training approaches, X contains fixed effects, β are fixed-effect coefficients, Z is the design matrix for random ef fects, u are random ef fect realizations, and ϵ ij kℓ ∼ N (0 , σ 2 ϵ ) . The random ef fects progress from simpler two- way structures (e.g., random intercepts for item and implementation) to more fully crossed structures including study/model/training and their interac- tions, and finally to random item slopes within each higher-le vel factor . This last structure (Model 6 in T able 1) operationalizes a key practical concern: a model (or training approach) may perform well on one item and poorly on another, and this item- by-implementation instability is itself part of the phenomenon being studied. 4.5 Bayesian estimation of the maximal stable model The most conserv ati ve item-varying specification is high-dimensional relativ e to the number of stud- ies and training regimes. T o a void o v erconfidence from boundary estimates and to stabilize infer- ence under small- K clustering, we re-estimate the Model 6 specification in a Bayesian frame work. 4.5.1 Likelihood Let y n denote the Fisher - z transformed QWK for observ ation n . W e assume y n | µ n , σ ∼ N ( µ n , σ 2 ) , with linear predictor µ n = x ⊤ n β + u ( m ) m [ n ] , j [ n ] + u ( s ) s [ n ] , j [ n ] + u ( t ) t [ n ] , j [ n ] , (2) where x n contains the fixed ef fects for tokeniza- tion family , QWK hum , read , | V | , | V | 2 , gpt , and 6 logsize . The indices m [ n ] , s [ n ] , t [ n ] , and j [ n ] map each observation to its model, study , train- ing regime, and item. The random effects u ( m ) m,j , u ( s ) s,j , and u ( t ) t,j are item-specific de viations for each model/study/training group, respecti vely . 4.5.2 Random-effects distributions For each grouping factor g ∈ { m, s, t } , the vec- tor of item de viations is modeled as u ( g ) k := ( u ( g ) k, 1 , . . . , u ( g ) k,J ) ⊤ ∼ N 0 , Σ ( g ) , with Σ ( g ) pa- rameterized by per-item standard deviations and an item-to-item correlation matrix. This structure allo ws (i) different items to vary in their sensitiv- ity to study/model/training differences, and (ii) the pattern of item sensitivities to be correlated (e.g., items 1 and 2 tending to rise and fall together across implementations). 4.6 Model reporting and inter pretability W e report fixed-ef fect estimates with uncertainty interv als (frequentist 95% CIs; Bayesian 95% cred- ible intervals). Because ef fects are estimated on Fisher- z scale, coefficients can be mapped back to the QWK scale by tanh( · ) for interpretation: b κ w = tanh( b y ) . W e emphasize sign and rela- ti ve magnitude across co v ariates, and we interpret random-ef fects v ariance components as implemen- tation instability (how strongly a gi ven factor in- duces item-by-item variability in scoring reliabil- ity). 5 Discussion The results from estimating these models are in T able 2 . 5.1 What the meta-regr ession r ev eals about LLM scoring of child responses Across studies and items, the hierarchical meta- regressions identify consistent correlates of scor- ing reliability on a task that is deceptiv ely dif ficult for generati ve models: assigning rubric-consistent ordinal scores to short, noisy , child-written text. Three patterns stand out. (1) Meaning dependence r eliably lowers agr ee- ment. The read indicator is negati ve across spec- ifications, including the Bayesian model (posterior mean ≈ − 0 . 21 on Fisher- z scale). This implies that when an item requires integrating meaning rather than matching surface features, LLM-based scorers e xhibit a systematic drop in agreement with humans. Practically , this is the regime where rubric interpretation, negation, partial credit logic, and child-specific phrasing all matter—and where next- token training objecti ves and instruction-follo wing priors can yield plausible but rubric-inconsistent judgments. (2) Decoder (GPT -style) architectures are not unif ormly advantaged f or r eliability . The gpt coef ficient is negati v e in the Bayesian specification (posterior mean ≈ − 0 . 39 , with the 95% credible interv al excluding 0 in the pro vided fit). This result links an architectural propensity—autoregressi v e generation optimized for fluent continuation—to a reliability-style ev aluation criterion. In short- answer scoring, the goal is not to generate a good explanation; it is to apply a stable or dinal decision rule . Decoder models may be especially prone to (i) ov er -conditioning on spurious lexical cues, (ii) verbosity or rationale-dri ven confab ulation that is not anchored to the rubric, and (iii) sensitivity to prompt framing that manifests as higher v ariance across items and studies. Our item-varying random ef fects make this visible: ev en when average per - formance is acceptable, instability across items can remain substantial. (3) T okenization matters, and vocabulary size exhibits a “Goldilocks” region. T okenization family coef ficients are all strongly positi ve be- cause the model is fit without an intercept; they represent baseline expected performance under each tokenizer when other cov ariates are at their reference lev els. The more diagnostic result is the quadratic vocab ulary effect: | V | 2 is negati v e (Bayes ≈ − 0 . 06 , excluding 0), consistent with a concav e-do wn relationship. This supports a practi- cal hypothesis specific to child-written responses: extremely small vocabularies ov er -fragment id- iosyncratic spellings and morphology , while ex- tremely large v ocabularies include rare or under- trained tokens that behav e unpredictably on out-of- distribution orthography . Both failure modes re- duce scoring reliability , e v en if the y do not strongly af fect perceiv ed fluenc y . 5.2 Scaling helps, but it is not the primary lev er Model size ( logsize ) is positiv e in the best- controlled models (Bayes ≈ 0 . 06 ), indicating that scaling can improv e agreement. Howe ver , the ef- fect is modest relativ e to the systematic penalty associated with meaning dependence and the ar- 7 chitectural/tokenization correlates. This aligns with practitioner experience: larger LLMs may be- come better at paraphrase and world kno wledge, but rubric-aligned ordinal scoring remains bottle- necked by stability , calibration, and robustness to child-language noise. 5.3 Human item difficulty does not explain LLM difficulty A central and practically important null result is that QWK hum ( humqwk ) is not reliably associated with LLM QWK in any parameterization, including the Bayesian model (posterior mean near 0 with wide uncertainty). This disconnect is informati ve: • Items that are “hard for humans” (lo w human– human agreement) are not neces sarily hard for LLMs. • Con v ersely , items that humans score consis- tently can still be dif ficult for LLMs, particu- larly when they require semantic inte gration under noisy writing. This moti vates a methodological caution for de- ployment: practitioners should av oid anthropomor- phizing item dif ficulty . Human rater disagreement reflects ambiguity , rubric underspecification, and subjecti ve interpretation; LLM f ailure often reflects distribution shift (child orthography), tok enization artifacts, and sensiti vity to prompt or training regi- men. The two dif ficulty notions are not interchange- able, so human reliability metrics should be used as quality contr ol for labels , not as a proxy for expected model beha vior . 5.4 Why the maximal random-effects structure changes the story Comparing specifications shows that simpler random-intercept models can yield ov erly opti- mistic or unstable fixed-ef fect conclusions because they attrib ute structured heterogeneity to residual noise. The item-v arying random ef fects in Model 6 (and its Bayesian re-estimation) explicitly model a phenomenon that is operationally critical in ed- ucational scoring: a model can be r eliable on some items and unr eliable on others, and this pat- tern differs acr oss studies and training re gimes . In the Bayesian fit, the study- and training-lev el item-de viation standard deviations are often lar ger than the model-lev el ones for sev eral items, consis- tent with the idea that dataset construction, annota- tion design, and fine-tuning/prompting choices can dominate architecture in determining reliability . This provides a concrete recommendation for future e v aluations: report not only an overall QWK but also item-wise profiles and an instability mea- sure (e.g., v ariance of item-specific deviations) across replications. 5.5 Implications f or deploying LLM scorers in education Design for robustness to child text. T okeniza- tion and vocabulary design are not incidental im- plementation details; they are part of the reliability mechanism. When selecting or training models for child-written responses, practitioners should e xplic- itly test sensitivity to misspellings, in v ented words, and nonstandard morphology , and a void e xtremes of vocab ulary size. T r eat semantic items as a separate regime. Meaning-dependent items should be ev aluated and monitored separately . If an assessment mixes surface-aligned and meaning-dependent items, a single ov erall QWK obscures the most consequen- tial failures. Prefer reliability-center ed training and evalu- ation. Decoder LLMs can be competitiv e, but the meta-analytic evidence suggests that without careful calibration and rubric-constrained decision rules, they may underperform in reliability terms. T raining objecti v es and inference procedures that minimize v ariability (e.g., deterministic decoding, calibration on ordinal thresholds, or constrained classification heads) are likely to matter as much as raw scale. 5.6 Linking outcomes to autoregr ession The meta-analytic regularities follo w from the au- toregressi v e objecti ve ( McCoy et al. , 2023 ) which re wards fluency , local coherence, and surface-form patterning ( Mahow ald et al. , 2023 ). Autoscoring, especially for reading comprehension, instead re- quires mapping student language to latent con- structs—e vidence of understanding, inference, and justification—under rubric constraints. Decoder- only architectures optimize unidirectional likeli- hood p ( x t | x

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment