Structure, Analysis, and Synthesis of First-Order Algorithms

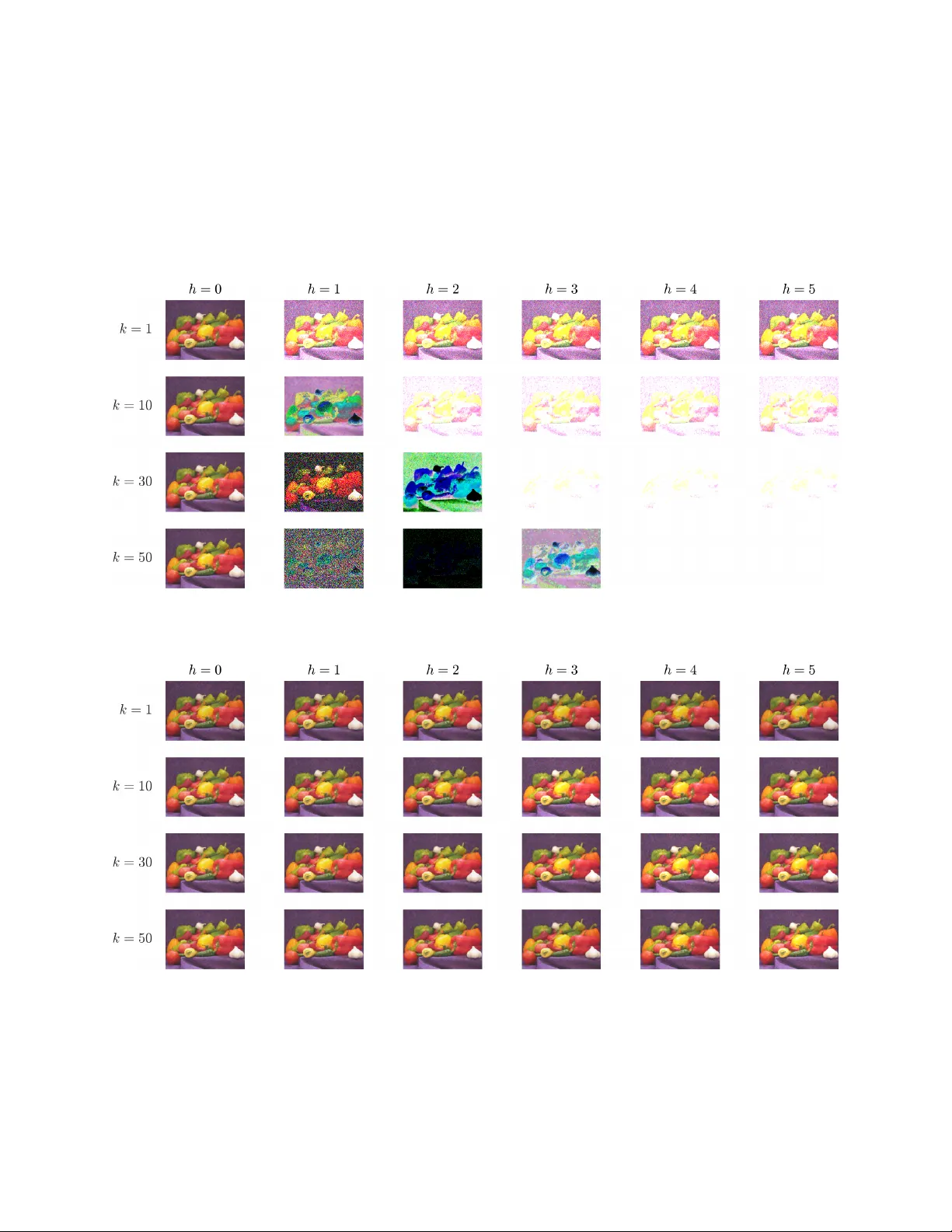

Optimization algorithms can be interpreted through the lens of dynamical systems as the interconnection of linear systems and a set of subgradient nonlinearities. This dynamical systems formulation allows for the analysis and synthesis of optimizatio…
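As a minimal sketch of the interconnection described above, the heavy-ball method can be written as a linear system `(A, B, C)` in feedback with a gradient map. The heavy-ball method, the smooth quadratic objective, and the names `heavy_ball_system` and `run_feedback_loop` are assumptions chosen for illustration and are not taken from the source. A smooth gradient is used here as a special case of the subgradient nonlinearities.

```python
import numpy as np


def heavy_ball_system(alpha, beta):
    """Hypothetical helper: state-space matrices of the heavy-ball method.

    The state stacks (x_k, x_{k-1}); beta = 0 recovers gradient descent.
    """
    A = np.array([[1.0 + beta, -beta],
                  [1.0, 0.0]])
    B = np.array([[-alpha],
                  [0.0]])
    C = np.array([[1.0, 0.0]])
    return A, B, C


def run_feedback_loop(A, B, C, grad, x0, steps):
    """Simulate the linear system (A, B, C) in feedback with u = grad(y)."""
    n = x0.size
    eye = np.eye(n)
    # Lift the scalar-coefficient matrices to act on n-dimensional iterates.
    A_n, B_n, C_n = np.kron(A, eye), np.kron(B, eye), np.kron(C, eye)
    # Initial state: every stacked copy of the iterate starts at x0.
    xi = np.tile(x0, A.shape[0])
    for _ in range(steps):
        y = C_n @ xi              # linear system output: point to evaluate
        u = grad(y)               # nonlinearity: gradient of the objective
        xi = A_n @ xi + B_n @ u   # linear state update
    return C_n @ xi


# Illustrative objective (an assumption): f(x) = 0.5 * x^T Q x.
Q = np.diag([1.0, 10.0])


def grad_f(y):
    return Q @ y


alpha, beta = 0.1, 0.5
x0 = np.array([1.0, -2.0])
A, B, C = heavy_ball_system(alpha, beta)
x_final = run_feedback_loop(A, B, C, grad_f, x0, steps=200)
```

In this form the algorithm's parameters appear only in `(A, B, C)`, while the objective enters only through `grad`. That separation is what lets the loop be treated as a linear system interconnected with a nonlinearity.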

Authors: Jared Miller, Carsten Scherer, Fabian Jakob