Conformal Selective Prediction with General Risk Control

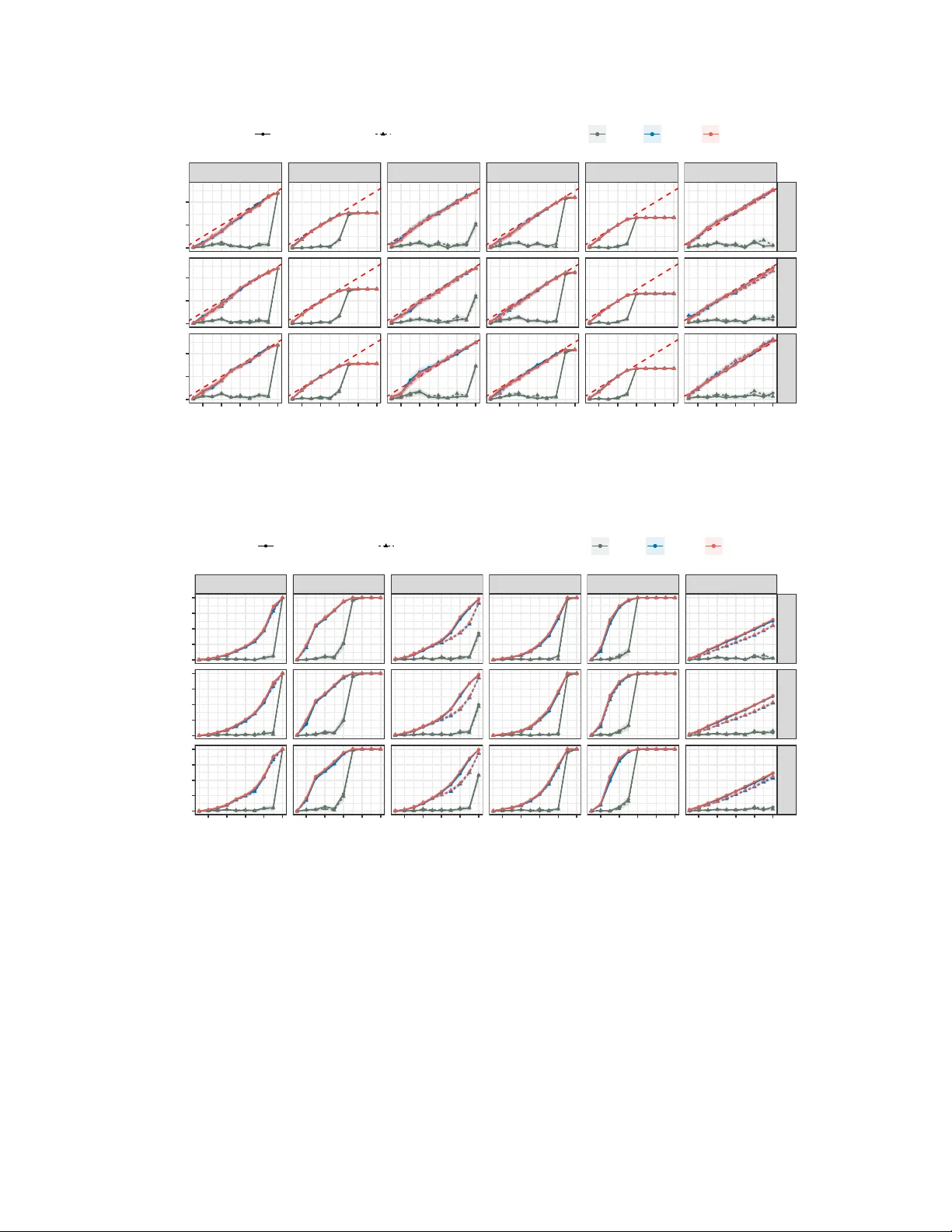

In deploying artificial intelligence (AI) models, selective prediction offers the option to abstain from making a prediction when uncertain about model quality. To fulfill its promise, it is crucial to enforce strict and precise error control over ca…

Authors: Tian Bai, Ying Jin