IndustriConnect: MCP Adapters and Mock-First Evaluation for AI-Assisted Industrial Operations

AI assistants can decompose multi-step workflows, but they do not natively speak industrial protocols such as Modbus, MQTT/Sparkplug B, or OPC UA, so this paper presents INDUSTRICONNECT, a prototype suite of Model Context Protocol (MCP) adapters that…

Authors: Melwin Xavier, Melveena Jolly, Vaisakh M A

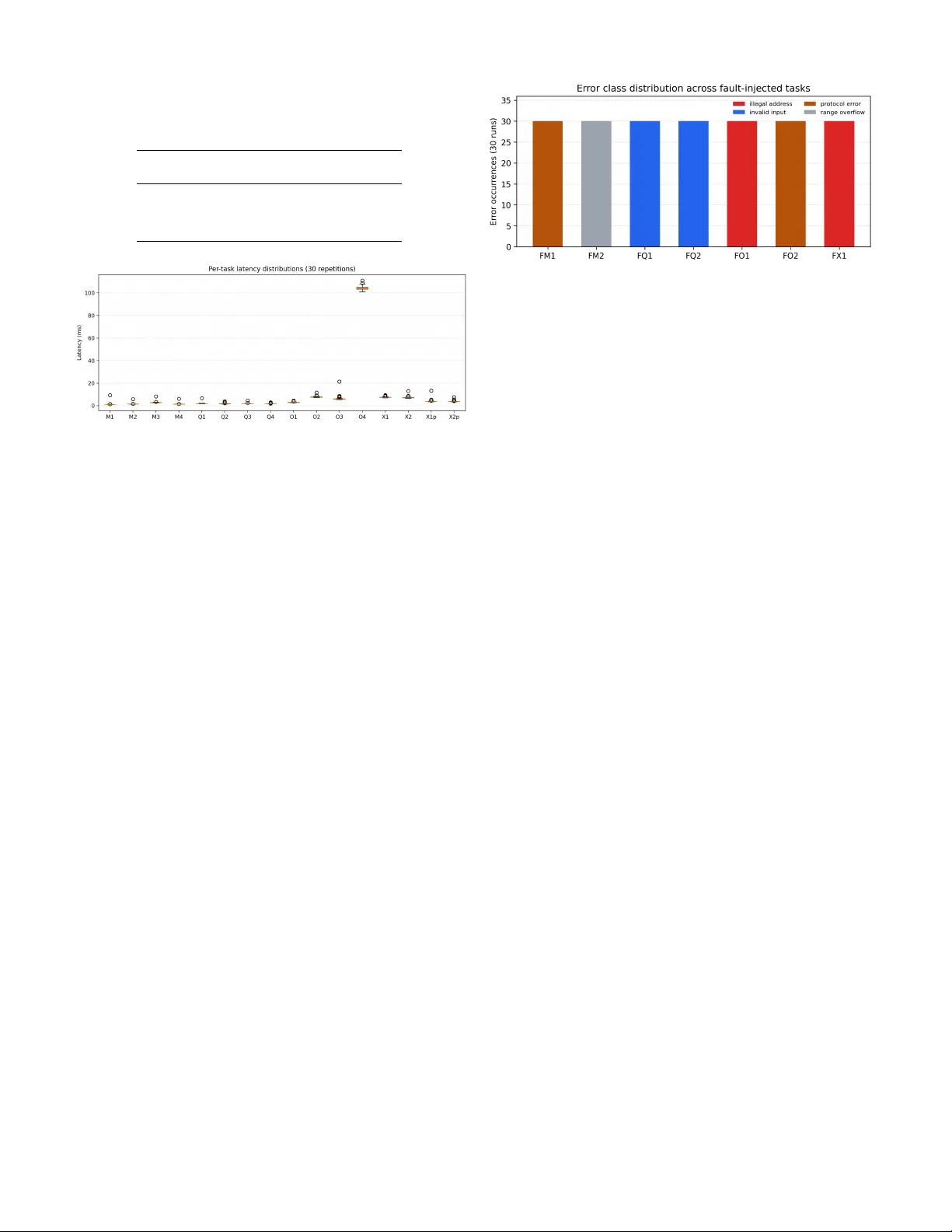

Melwin Xavier, Luleå tekniska universitet, Sweden, melwin.xavier@ltu.se

Melveena Jolly, Independent Researcher, melveenajollyk@gmail.com

Vaisakh M A, Independent Researcher, 997vaisakh@gmail.com

Midhun Xavier, Independent Researcher, midhun@industriagents.com

Abstract: AI assistants can decompose multi-step workflows, but they do not natively speak industrial protocols such as Modbus, MQTT/Sparkplug B, or OPC UA. This paper presents INDUSTRICONNECT, a prototype suite of Model Context Protocol (MCP) adapters that expose industrial operations as schema-discoverable AI tools while preserving protocol-specific connectivity and safety controls. The system uses a common response envelope and a mock-first workflow so adapter behavior can be exercised locally before connecting to plant equipment. A deterministic benchmark covering normal, fault-injected, stress, and recovery scenarios evaluates the flagship adapters. The benchmark comprises 870 runs (480 normal, 210 fault-injected, 120 stress, 60 recovery trials) and 2820 tool calls across 7 fault scenarios and 12 stress scenarios. The normal suite achieved full success, the fault suite confirmed structured error handling with adapter-level uint16 range validation, and the stress suite identified concurrency boundaries. Same-session recovery after endpoint restart is demonstrated for all three protocols. The results provide evidence spanning adapter correctness, concurrency behavior, and structured error handling for AI-assisted industrial operations.

I. INTRODUCTION

Consider a simple operator request: check a temperature excursion on an OPC UA namespace, confirm a related Modbus register block, and publish a Sparkplug B status update for downstream systems.
Each subtask is routine in isolation, but the overall workflow crosses incompatible operational technology (OT) protocols whose interfaces were not designed for modern AI assistants. This protocol gap remains a practical barrier to AI-assisted industrial automation even as Industry 4.0 programs push tighter coupling between information technology and plant-floor systems [1], [2].

Large language model (LLM) assistants work best with typed, discoverable tool interfaces rather than binary protocol frames or vendor SDKs. MCP provides a standard way for assistants to list tools, inspect schemas, and invoke operations through a common call interface [3]. MCP alone, however, does not address OT connectivity or endpoint health, nor does it say how to represent blocked writes and transient failures so that an agent can reason about them safely.

That gap is especially visible in brownfield environments. Real plants often begin with partial register maps, vendor-specific addressing rules, or limited access windows on production equipment. A practical integration layer therefore needs more than tool schemas: it also needs protocol adapters, explicit safety conventions, and a way to validate workflows before touching live controllers.

This paper reframes INDUSTRICONNECT as a bounded systems contribution: a prototype MCP-to-OT adapter architecture with a mock-first evaluation workflow. The paper does not claim a complete industrial platform, a new LLM reasoning method, or production deployment evidence. Instead, it focuses on the flagship adapters for Modbus, MQTT/Sparkplug B, and OPC UA while retaining seven additional protocol modules as ecosystem breadth.

The manuscript makes three contributions:

1) A practical MCP-to-OT adapter architecture that standardizes tool discovery and response handling across heterogeneous industrial protocols.
2) A mock-first methodology for validating adapter behavior, write guards, and restart recovery, extended with fault injection and stress testing to expose error-handling and concurrency boundaries without requiring physical devices.

3) A reproducible deterministic evaluation on Modbus, MQTT/Sparkplug B, and OPC UA comprising normal, fault-injected, stress, and recovery suites using deterministic workloads with statistical variance reporting.

The evaluation uses a deterministic benchmark that exercises adapter behavior under normal operation, deliberate fault conditions, stress scenarios, and endpoint restart.

II. DESIGN GOALS AND ARCHITECTURE

Heterogeneity. Industrial protocols expose different interaction models: register maps for Modbus, broker topics for MQTT, and browsable information models for OPC UA [4], [5]. The adapter layer must translate these differences into tool schemas that remain understandable to an AI client.

Schema discoverability. Each adapter registers MCP tools so a client can enumerate available operations and call them without bespoke protocol code. This keeps the assistant-facing interface uniform even when the underlying transport or addressing model differs.

Write safety. The adapters expose explicit write operations, but writes remain bounded by per-server configuration such as disable flags, address validation, and structured error returns. These controls matter because an AI-facing API must represent both successful writes and intended denials in a machine-readable way.

Mockability. Every flagship adapter is paired with a local mock so the full tool path can be exercised offline. This enables reproducible evaluation, debugging, and regression checks before any real controller or fieldbus is involved.

[Figure 1 diagram, top to bottom: MCP Client / AI Assistant (Claude Desktop, mcp-manager-ui, custom LLM app); stdio / JSON-RPC 2.0 (MCP); INDUSTRICONNECT Protocol MCP Server (FastMCP, asyncio, write guards, { success, data, error, meta }); protocol adapters (Modbus, MQTT/SB, OPC UA, BACnet, DNP3, EtherCAT, EtherNet/IP, S7comm, ...); targets: Mock Simulator (offline testing, mock) or Real PLC / RTU (plant deployment, live).]

Fig. 1. INDUSTRICONNECT adapter architecture. An AI assistant issues MCP tool calls through a common JSON-RPC interface. The adapter layer handles protocol translation and safety guards, returning a shared response envelope. Each protocol adapter targets either a local mock (dashed) or real plant equipment.

Figure 1 shows the core pattern. An MCP-compatible assistant issues tool calls to an INDUSTRICONNECT adapter. The adapter handles protocol translation, connection management, and safety guards, then talks to either a mock endpoint or plant equipment. All benchmarked adapters return the same response envelope, `{ success, data, error, meta }`. The `success` flag indicates whether the requested action achieved the expected protocol outcome, `data` holds the protocol-specific payload, `error` carries a structured failure reason, and `meta` records endpoint details, timings, and retry counts. This shared envelope does not erase protocol semantics; it defines a common measurement boundary and lets cross-protocol orchestration observe healthy reads, blocked writes, and reconnect behavior with the same outer structure.

Table I is operationally important because it defines how the benchmark judges behavior. A blocked write is not treated as a generic failure if the task oracle expects a guarded denial, while a reconnect path is evaluated through the same outer structure as a healthy read. This is the main reason the paper can compare three different protocol families without pretending they expose the same payload model.

The mock-first workflow complements the same architecture.
Each flagship adapter is exercised against a local endpoint first, but the MCP layer remains identical when the endpoint changes. That lets an engineer develop prompts, schemas, and safety checks against a deterministic mock while preserving the same tool surface for later hardware validation.

TABLE I. Shared response-envelope fields used across the benchmarked adapters.

| Field | Role in the adapter contract |
|---|---|
| success | Reports whether the requested protocol action reached the expected outcome. |
| data | Carries the protocol-specific payload such as registers, node values, or publish metadata. |
| error | Preserves a machine-readable failure reason, including guarded denials and transient endpoint failures. |
| meta | Records wall-clock latency, endpoint details, attempts, and protocol-specific trace context. |

TABLE II. Repository protocol inventory. Tool counts come from registered handlers in the benchmark pass (see footnote 1).

| Protocol | Tools | Mock | Status | Rep. operation |
|---|---|---|---|---|
| Modbus | 17 | TCP device | flagship | register/coil I/O |
| MQTT + SB | 15 | broker sim. | flagship | pub/sub + DDATA |
| OPC UA | 7 | UA server | flagship | browse + node R/W |
| BACnet/IP | 7 | BA device | scaffold | object properties |
| DNP3 | 8 | outstation | scaffold | point poll/control |
| EtherCAT | 14 | slave | scaffold | PDO/SDO access |
| EtherNet/IP | 17 | PLC | scaffold | controller tags |
| PROFIBUS DP/PA | 11 | slave | scaffold | bus scan + cyclic I/O |
| PROFINET | 14 | IO device | scaffold | discovery + IO exchange |
| Siemens S7comm | 20 | PLC | scaffold | DB/I/O diagnostics |

III. PROTOTYPE IMPLEMENTATION

Every protocol module follows the same skeleton: an MCP server registers tools and schemas, a protocol client wrapper translates tool calls into native operations, and a mock endpoint provides a safe development target. The benchmarked Modbus, MQTT/Sparkplug B, and OPC UA stacks all use this pattern, including the shared response envelope and environment-driven write gating.
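To make the envelope and write-gating conventions concrete, the following is a minimal sketch, not the repository's implementation. It assumes a hypothetical `guarded_write` tool backed by an in-memory register dictionary, a hypothetical `write_disabled` error class, and an assumed set of truthy strings for the `MODBUS_WRITES_ENABLED` flag; only the envelope field names, the flag name, the uint16 bound, and the `range_overflow` class come from the paper.

```python
import os
import time
from dataclasses import dataclass, field, asdict
from typing import Optional

UINT16_MAX = 65535  # upper bound of a 16-bit Modbus register value


@dataclass
class Envelope:
    """Shared response shape: { success, data, error, meta }."""
    success: bool
    data: Optional[dict] = None
    error: Optional[dict] = None
    meta: dict = field(default_factory=dict)


def writes_enabled(env=os.environ) -> bool:
    # Environment-driven write gate; the accepted truthy strings are an assumption.
    return env.get("MODBUS_WRITES_ENABLED", "false").lower() in {"1", "true", "yes"}


def guarded_write(address: int, value: int, registers: dict, env=os.environ) -> dict:
    """Hypothetical write tool: check the gate, validate the range, then write."""
    start = time.perf_counter()

    def finish(success, data=None, error=None):
        meta = {"endpoint": "mock", "latency_ms": (time.perf_counter() - start) * 1000.0}
        return asdict(Envelope(success, data, error, meta))

    if not writes_enabled(env):
        # "write_disabled" is an illustrative class name, not taken from the paper.
        return finish(False, error={"class": "write_disabled", "message": "writes are disabled"})
    if not 0 <= value <= UINT16_MAX:
        return finish(False, error={"class": "range_overflow",
                                    "message": f"{value} is outside 0..{UINT16_MAX}"})
    registers[address] = value
    # Read the value back from the mock so the caller can verify the write.
    return finish(True, data={"address": address, "value": registers[address]})
```

In this sketch a denied write still returns a well-formed envelope, so a task oracle that expects a guarded denial can treat it as the correct outcome rather than as a generic failure.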
This shared skeleton is not a universal industrial abstraction layer; it is a consistent adapter contract around heterogeneous protocols.

At implementation level, the common skeleton yields four reusable behaviors. First, each server exposes discoverable MCP tools with bounded JSON input shapes. Second, each adapter keeps transport- and protocol-specific state in its own client wrapper rather than leaking it into the LLM-facing layer. Third, every tool normalizes timing and error details into the shared response envelope. Fourth, each protocol stack ships with a mock so smoke tests, prompt experiments, and regression checks can run without physical hardware.

Table II keeps the breadth claim honest. The repository contains ten protocol modules, but only three are benchmarked in this paper. The Modbus adapter exposes 17 tools; the benchmark evaluates a representative subset covering connectivity, reads, writes, guarded denials, and fault paths. The remaining seven modules remain useful context because they show that the MCP adapter pattern generalizes across fieldbus, Ethernet, and broker-centric industrial stacks even when several implementations are still roadmap-driven.

Footnote 1: Protocols below the mid-rule (the scaffold rows of Table II) are not evaluated in this paper.

That breadth-versus-evidence split is deliberate. The three flagship stacks were chosen because they represent different industrial interaction styles and because their current mocks are stable enough for repeated quantitative runs. Modbus covers direct register-style access, MQTT/Sparkplug B covers broker-mediated messaging, and OPC UA covers hierarchical information-model access. The remaining stacks are better understood as evidence that the repository architecture generalizes, not as equally validated research claims.

Flagship adapters. The Modbus adapter centers on register and coil operations, connection health checks, guarded writes, and uint16 range validation.
The MQTT adapter exposes broker inspection, subscriptions, generic publishes, and Sparkplug B device-data publishes. The OPC UA adapter focuses on node browsing, node reads, writes, multi-node access, and reconnection logic that re-establishes connections after endpoint restarts. Method invocation exists in the current OPC UA prototype but was excluded from the benchmark because the current mock method path is not yet stable enough for repeatable evaluation.

Modbus details. The current Modbus surface contains 17 tools, including register and coil reads, bulk writes, typed holding-register access, masked register writes, device-information queries, and alias-based access paths. The adapter validates uint16 range boundaries (0–65535) before forwarding write operations to the protocol layer, ensuring overflow values are rejected with a structured error rather than silently truncated. The benchmark intentionally concentrates on the core cases most likely to appear in assistant workflows: connectivity checks, block reads, readback verification after a write, and a write-disabled safety path.

MQTT/Sparkplug B details. The MQTT adapter exposes 15 tools spanning broker status, topic subscriptions, generic publish/unsubscribe operations, and Sparkplug-specific birth, death, data, and command flows. In practice this makes the adapter suitable both for ordinary pub/sub workflows and for structured industrial telemetry updates. The benchmark therefore mixes a broker inspection task, a wildcard subscription task, a plain control publish, and a Sparkplug B DDATA publish rather than evaluating only one messaging style.

OPC UA details. The OPC UA surface is smaller at 7 tools, but the semantics are richer because browsing and variable enumeration traverse an information model rather than a flat address map.
The adapter includes reconnection logic: a liveness probe checks the ServerStatus node before each operation, and on failure the stale client is disconnected and a fresh connection is established. The benchmark covers point reads, browsing, writes with readback confirmation, and full variable discovery over the mock plant.

Mock endpoint fidelity. Each flagship mock simulates enough plant-floor state for the benchmark workload. The Modbus mock provisions 100 holding registers (valve position, heater power, fan speed, conveyor speed, command words), 100 input registers (temperature, pressure, flow rate, tank level, vibration, pH, humidity, motor speed, production counters), 100 coils, and 100 discrete inputs, with a 1 Hz simulation loop that models inter-variable influence (heater power affects temperature, pump state affects pressure and flow). The MQTT mock combines an aedes broker with a Sparkplug B edge-node simulator that publishes NBIRTH, DBIRTH, and periodic DDATA messages for two devices; device metrics follow sinusoidal variation and all Sparkplug payloads use protobuf encoding. The OPC UA mock exposes 8 read-only sensor variables, 6 read-write actuator variables, system-status variables, and 5 callable methods under a single namespace, updated every second with state-driven dynamics mirroring the Modbus model.

Supporting console. The repository also includes a browser-based MCP Manager UI for registering protocol adapters and issuing operator-style prompts. Figure 2 shows the corrected ten-protocol landscape, and Figure 3 shows the MCP Manager UI. Both figures are supporting context only; they are not part of the benchmark and are not counted as separate research contributions.

Fig. 2. Repository protocol landscape with the three evaluated flagship adapters highlighted. This figure is supporting context, not evaluation evidence.

Fig. 3. MCP Manager UI used to register adapters and issue operator-style prompts. This figure is supporting context, not benchmark evidence.

IV. EXPERIMENTAL SETUP

The evaluation targets adapter behavior rather than end-to-end LLM planning. To keep the workload reproducible, the authors fixed representative assistant intents and executed them as deterministic MCP tool workflows against local mocks. This isolates connection handling, tool schemas, response normalization, and safety behavior from prompt variability [6]. All measurements were taken on localhost against mock endpoints; latencies therefore reflect adapter overhead, not network round-trip times.

TABLE III. Benchmark matrix for the deterministic evaluation.

| Suite | Family | Task set |
|---|---|---|
| Normal | Modbus | Ping; four-register read; register write/readback; guarded write denial |
| Normal | MQTT/SB | Broker info; subscribe to `sensors/#`; generic publish; Sparkplug B DDATA publish |
| Normal | OPC UA | Temperature read; actuator browse; valve write/readback; full variable enumeration |
| Normal | Cross | Sequential snapshot (X1); sequential control (X2); parallel snapshot (X1p); parallel control (X2p) |
| Fault | Modbus | Read invalid register (FM1); write overflow value, adapter rejects (FM2) |
| Fault | MQTT/SB | Publish to empty topic (FQ1); subscribe with invalid QoS (FQ2) |
| Fault | OPC UA | Read non-existent node (FO1); write wrong type to float node (FO2) |
| Fault | Cross | 3-step sequence with one deliberate bad input (FX1) |
| Stress | Per-adapter | 4 concurrent reads (S1–S3); concurrent read+write (S4–S6) |
| Stress | Per-adapter | 50 sequential rapid-fire reads (S7–S9) |
| Stress | Per-adapter | Mid-operation mock restart (S10–S12) |

Table III summarizes the workload. The normal suite contains 16 tasks (four Modbus, four MQTT/Sparkplug B, four OPC UA, four cross-protocol including parallel variants), each repeated 30 times for 480 runs. The fault-injection suite adds 7 tasks repeated 30 times each for 210 runs. The stress suite adds 12 tasks repeated 10 times each for 120 runs.
The recovery suite injects one transient outage per flagship stack, repeated 20 times each for 60 restart trials. The full benchmark thus comprises 870 runs (480 + 210 + 120 + 60) and 2820 tool calls. All aggregation includes mean, standard deviation, and 95% confidence intervals (t-distribution).

The fault-injection philosophy follows established practice [7]: correctness under fault conditions means returning `success=false` with a meaningful, structured error envelope, rather than silently succeeding or crashing. A fault task passes when the adapter produces a well-formed error response that an assistant could interpret and act on. FM2 (Modbus overflow) now tests a deterministic boundary: the adapter validates the uint16 range and must reject the value with `success=false`, so the check is falsifiable.

The recovery suite uses same-session semantics: after restarting the mock endpoint, the harness first attempts recovery through the original MCP session, then falls back to a fresh session. This dual-mode approach distinguishes adapters that support transparent reconnection (e.g., pymodbus auto-reconnect, OPC UA liveness probe) from those requiring a new session.

TABLE IV. Benchmark runtime configuration used in the paper.

| Family | Mock endpoint | Recovery semantics |
|---|---|---|
| Modbus | TCP mock at 127.0.0.1:1502 | Same-session via pymodbus auto-reconnect, then fresh session |
| MQTT/SB | Local broker at 127.0.0.1:1883 | Same-session via paho `reconnect_delay_set`, then fresh session |
| OPC UA | Local OPC UA endpoint | Same-session via `_ensure_connected()` liveness probe, then fresh session |

All benchmarked adapters were launched as local stdio MCP servers. The Modbus stack targeted the TCP mock at `127.0.0.1:1502`, the MQTT adapter targeted the local broker at `mqtt://127.0.0.1:1883`, and the OPC UA adapter targeted the local mock endpoint at `opc.tcp://127.0.0.1:4840`.
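The aggregation step mentioned above (mean, standard deviation, and a 95% confidence interval from the t-distribution) can be sketched as follows. This is an illustrative helper, not the paper's `generate_paper_tables.py`; the function name `summarize` is hypothetical, and the critical values are hardcoded from standard t tables for the three repetition counts used in the benchmark (10, 20, and 30).

```python
import math
import statistics

# Two-sided 95% critical values of Student's t, keyed by degrees of freedom
# (n - 1) for the repetition counts 10, 20, and 30. Other sample sizes would
# need additional table entries or a statistics library.
T_CRIT_95 = {9: 2.262, 19: 2.093, 29: 2.045}


def summarize(latencies_ms):
    """Return the sample size, mean, sample standard deviation, and 95% t-interval."""
    n = len(latencies_ms)
    mean = statistics.fmean(latencies_ms)
    std = statistics.stdev(latencies_ms)  # sample (n - 1) standard deviation
    half_width = T_CRIT_95[n - 1] * std / math.sqrt(n)
    return {"n": n, "mean": mean, "std": std, "ci95": (mean - half_width, mean + half_width)}
```

Using the t-distribution rather than a normal approximation matters most for the 10-repetition stress tasks, where the critical value (2.262) is noticeably larger than 1.96.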
The benchmark harness opened dedicated sessions for the read-write Modbus configuration, the read-only Modbus configuration, MQTT, and OPC UA, then executed the normal, fault, stress, and recovery suites in a fixed order.

The normal runs also use fixed value patterns so task validation is deterministic. For example, the Modbus write/readback task writes `40 + repetition`, the Sparkplug task publishes a single temperature metric with `20.0 + repetition`, and the OPC UA valve task writes `25.0 + repetition`. The cross-protocol control task uses a fixed Modbus write, an OPC UA boolean write, and a control-topic publish, making the end-to-end sequence stable enough for repeated latency summaries.

The harness records raw tool responses and then derives family-level, task-level, and recovery-phase summaries from the same JSON artifacts. The tables in the paper are generated from these JSON results through a single-source-of-truth pipeline (`generate_paper_tables.py`), eliminating hand-copied numbers.

Workflow example (X1). An operator asks for a fast cross-system snapshot before escalating a maintenance event. The assistant issues three fixed tool calls: a Modbus sensor-block read, an OPC UA multi-node read, and an MQTT broker inspection. The resulting state bundle confirms that the transport links are healthy and that the key process values can be read through all three adapters in one short sequence. X1p exercises the same workflow with parallel tool calls via `asyncio.gather()`.

V. RESULTS

A. Normal Suite Results

Table V shows clean success rates across the normal suite: all 480 task executions succeeded, and all 780 tool calls matched their expected outcomes. Modbus and MQTT/Sparkplug B remained in the low single-digit millisecond range. OPC UA shows wider spread due to variable enumeration, which at a 103.9 ms median is roughly 35 times slower than a single-node read (3.0 ms; Table VI). Cross-protocol tasks remain close to 7 ms median, suggesting the response-envelope approach does not impose noticeable overhead for short local workflows. All latencies reported are localhost measurements; network round-trip would dominate in a deployed setting.

TABLE V. Family-level results from the normal benchmark suite (30 repetitions). Cross-protocol rows aggregate the four multi-adapter tasks.

| Family | Tasks | Task (%) | Tool (%) | Med. (ms) | p95 (ms) | Rec. (%) |
|---|---|---|---|---|---|---|
| Modbus | 4 | 100.0 | 100.0 | 1.4 | 3.1 | 100.0 |
| MQTT | 4 | 100.0 | 100.0 | 1.7 | 2.5 | 100.0 |
| OPC UA | 4 | 100.0 | 100.0 | 7.3 | 105.2 | 100.0 |
| Cross-protocol | 4 | 100.0 | 100.0 | 6.7 | 8.7 | n/a |

Fig. 4. Per-task latency distributions across 30 repetitions. Box plots show median, interquartile range, and outliers.

Table VI makes the tail behavior clearer. Modbus remains tightly clustered even when readback verification or guarded-write rejection is required. OPC UA variable enumeration dominates the family p95, consistent with information-model breadth rather than a generalized slowdown. The parallel cross-protocol variants (X1p, X2p) show reduced latency compared to their sequential counterparts (X1, X2), confirming that concurrent adapter invocation works correctly.

Figure 4 shows the per-task latency distributions. The visualization reveals that most tasks have tight distributions with few outliers, except OPC UA variable enumeration (O4), which shows inherently higher variance due to the recursive tree traversal.

Overhead decomposition. The adapter overhead is dominated by three components: (1) stdio transport: each tool call requires process-level IPC through stdin/stdout pipes; (2) JSON serialization: request encoding and response parsing of the envelope structure; (3) protocol translation: the actual client library call. For local mock targets, the protocol call itself is sub-millisecond, making the stdio+JSON path the dominant cost.
This decomposition suggests that switching to an HTTP or SSE transport could reduce overhead for production deployments, although that was not measured here.

B. Fault Injection Results

Table VII reports the per-task fault-injection results. The key metric is the error-handling rate (EH%): the fraction of runs where the adapter returned a structured `success=false` response with a classifiable error, rather than crashing, hanging, or returning an unstructured message. All seven fault tasks achieved 100% error handling across 210 runs, with 270 tool calls in total.

FM2 (Modbus overflow) now produces a deterministic result: the adapter validates that the write value falls within the uint16 range (0–65535) and returns `success=false` with error class `range_overflow` for the value 70000. This replaces the earlier disjunctive check that accepted either rejection or truncation, making the test falsifiable: an adapter that silently passed the overflow value through would now fail the benchmark.

Fig. 5. Error class distribution across the seven fault-injected tasks (30 runs each). Each color represents a distinct error category returned by the adapter.

Figure 5 shows the error class distribution as a stacked bar chart. The distribution confirms that each adapter maps its fault conditions to semantically appropriate error categories rather than collapsing all failures into a single generic class. This is important for downstream LLM consumption: an assistant that receives `illegal_address` can suggest corrective action differently from one that receives `range_overflow` or `type_mismatch`.

C. Stress Suite Results

The stress suite is designed to produce non-zero failure rates, unlike the normal and fault suites, which target 100% pass rates. Three categories of stress are tested:

Concurrent access (S1–S6). Four parallel reads per adapter and concurrent read+write operations test whether the MCP adapter handles overlapping requests correctly. These tasks are expected to succeed under normal conditions.
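The concurrent-access pattern can be sketched with `asyncio.gather()`, which the paper names for the parallel cross-protocol tasks. The sketch below is a stand-in, not the benchmark harness: `mock_read` and `concurrent_reads` are hypothetical names, and the sleep merely models endpoint latency in place of a real MCP tool call.

```python
import asyncio
import time


async def mock_read(adapter: str, address: int, delay_s: float = 0.01) -> dict:
    """Stand-in for one MCP read tool call; the sleep models endpoint latency."""
    start = time.perf_counter()
    await asyncio.sleep(delay_s)
    return {
        "success": True,
        "data": {"adapter": adapter, "address": address, "value": address * 2},
        "error": None,
        "meta": {"latency_ms": (time.perf_counter() - start) * 1000.0},
    }


async def concurrent_reads(adapter: str, addresses) -> dict:
    """Issue all reads at once and count how many envelopes report success."""
    start = time.perf_counter()
    # return_exceptions=True keeps one failing call from cancelling the others.
    results = await asyncio.gather(
        *(mock_read(adapter, a) for a in addresses), return_exceptions=True
    )
    ok = [r for r in results if isinstance(r, dict) and r["success"]]
    return {
        "calls": len(results),
        "succeeded": len(ok),
        "wall_ms": (time.perf_counter() - start) * 1000.0,
        "results": results,
    }


if __name__ == "__main__":
    summary = asyncio.run(concurrent_reads("modbus", [0, 1, 2, 3]))
    print(summary["calls"], summary["succeeded"])
```

`asyncio.gather()` returns results in the order the awaitables were passed, so each envelope can be matched back to its request even when calls complete out of order.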
Rapid-fire reads (S7–S9). Fifty sequential calls in a tight loop per adapter test sustained throughput. Boundary latencies reveal whether the adapter or mock server introduces queuing under load.

Mid-operation restart (S10–S12). These tasks fire a read, stop the mock, fire another read (expected to fail), restart the mock, and fire a final read (expected to succeed). The three-phase check verifies that adapter state does not become permanently corrupted after a transient endpoint failure. Partial success (2/3 calls correct) is the expected outcome.

TABLE VI. Per-task latency breakdown from the normal benchmark suite (30 repetitions).

| ID | Family | Operation | Median (ms) | p95 (ms) |
|---|---|---|---|---|
| M1 | Modbus | Ping the adapter and confirm the TCP mock is reachable. | 1.1 | 1.4 |
| M2 | Modbus | Read a four-register sensor block from the Modbus mock. | 1.5 | 1.7 |
| M3 | Modbus | Write a holding register and read it back for verification. | 2.7 | 3.3 |
| M4 | Modbus | Attempt a write with writes disabled and expect a guarded rejection. | 1.3 | 1.6 |
| Q1 | MQTT | Inspect broker connectivity through the MCP adapter. | 1.8 | 2.1 |
| Q2 | MQTT | Subscribe to the mock sensor topic namespace. | 1.7 | 2.7 |
| Q3 | MQTT | Publish a standard MQTT control payload. | 1.7 | 2.2 |
| Q4 | MQTT | Publish a Sparkplug B DDATA update for the mock device. | 1.6 | 2.3 |
| O1 | OPC UA | Read the temperature sensor variable from the OPC UA mock plant. | 3.0 | 4.2 |
| O2 | OPC UA | Browse the actuator subtree in the mock plant. | 7.6 | 9.0 |
| O3 | OPC UA | Write the valve-position variable and confirm the new value. | 5.9 | 8.4 |
| O4 | OPC UA | Enumerate the mock plant variables through the adapter. | 103.9 | 108.2 |
| X1 | Cross-protocol | Collect a multi-adapter state snapshot across Modbus, OPC UA, and MQTT. | 7.4 | 8.8 |
| X2 | Cross-protocol | Execute a coordinated multi-adapter control sequence. | 7.1 | 8.7 |
| X1p | Cross-protocol | Parallel multi-adapter state snapshot across Modbus, OPC UA, and MQTT. | 3.8 | 5.3 |
| X2p | Cross-protocol | Parallel coordinated multi-adapter control sequence. | 3.7 | 5.0 |

TABLE VII. Fault-injection task results (30 repetitions). Error-handling rate (EH%) measures how often the adapter returned a well-formed structured error.

| ID | Family | Fault scenario | EH% | Med. (ms) | Error class |
|---|---|---|---|---|---|
| FM1 | Modbus | Read invalid register address (9999) and expect a structured protocol error. | 100.0 | 1.3 | protocol_error |
| FM2 | Modbus | Write overflow value (70000 > uint16 max) and expect adapter rejection. | 100.0 | 1.0 | range_overflow |
| FQ1 | MQTT | Publish to empty topic string and expect a structured invalid-input error. | 100.0 | 1.6 | invalid_input |
| FQ2 | MQTT | Subscribe with invalid QoS (5) and expect a structured invalid-input error. | 100.0 | 1.6 | invalid_input |
| FO1 | OPC UA | Read non-existent node (ns=2;i=99999) and expect a structured read-failed error. | 100.0 | 2.7 | illegal_address |
| FO2 | OPC UA | Write wrong type (string to float node) and expect a structured write-failed error. | 100.0 | 2.5 | protocol_error |
| FX1 | Cross-protocol | 3-step cross-protocol sequence with one deliberate bad OPC UA read; expect 2/3 succeed. | 100.0 | 5.7 | illegal_address |

S11 (MQTT mid-restart) failure analysis. The MQTT mid-operation restart task (S11) is the only stress scenario that reports a 0% success rate, and this result warrants detailed discussion. After the broker is stopped and restarted, the paho MQTT client's internal reconnect timer does not fire within the 1-second post-restart window used by the stress harness. In contrast, Modbus (S10) recovers because pymodbus auto-reconnect retries on the next call, and OPC UA (S12) recovers because the adapter's liveness probe forces a fresh connection attempt. The MQTT adapter relies on paho's `reconnect_delay_set` background loop, which uses exponential backoff starting at 1 s, so the first reconnect attempt may not land within the tight stress window.
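The timing argument can be illustrated with a simplified model of doubling backoff. This is a sketch under stated assumptions, not paho's internal scheduler: the function names are hypothetical, attempts are assumed to fire at cumulative delays of 1 s, 2 s, 4 s, and so on after the disconnect, connection setup time is ignored, and the 4.5 s outage in the usage note is an invented figure chosen only to show how the window length changes the outcome.

```python
def reconnect_attempt_times(min_delay_s=1.0, max_delay_s=120.0, horizon_s=30.0):
    """Attempt times in seconds after the disconnect, under doubling backoff."""
    times, t, delay = [], 0.0, min_delay_s
    while t + delay <= horizon_s:
        t += delay
        times.append(t)
        delay = min(delay * 2, max_delay_s)  # double the wait, capped at the maximum
    return times


def recovers_within_window(outage_s, window_s, **backoff):
    """True if some attempt lands after the broker is back and before the next read."""
    broker_up = outage_s
    deadline = outage_s + window_s
    return any(broker_up <= t <= deadline for t in reconnect_attempt_times(**backoff))
```

With the default parameters the modeled attempts fall at 1, 3, 7, and 15 seconds. For a hypothetical 4.5 s outage, `recovers_within_window(4.5, 1.0)` is false because no attempt falls between 4.5 s and 5.5 s, while `recovers_within_window(4.5, 3.0)` is true because the 7 s attempt falls inside the longer window.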
The S11 failure is a genuine protocol-specific limitation, not a test artifact. The recovery suite (Section V-D) uses a 3-second post-restart window and dual-mode reporting, which gives paho enough time to reconnect, explaining why MQTT achieves 100% recovery there. The practical implication is that MQTT adapter deployments should tune the reconnect delay for their expected outage profile, or add an explicit reconnect-on-failure path analogous to the OPC UA liveness probe.

D. Recovery Results

The recovery experiments use dual-mode reporting over 60 restart trials (20 per flagship adapter). After restarting the mock endpoint, the harness first attempts a same-session call (reusing the original MCP session) and then a fresh-session call (opening a new session). This distinguishes three behaviors:

Modbus: pymodbus supports auto-reconnect, so same-session recovery succeeds when the mock becomes available again within the 3-second post-restart window. Fresh-session recovery always succeeds.

MQTT: paho's `reconnect_delay_set` handles broker reconnection. Same-session recovery depends on whether the reconnect timer fires within the window. Fresh-session recovery provides a reliable fallback.

OPC UA: The new `_ensure_connected()` liveness probe checks the ServerStatus node and reconnects if stale. This enables same-session recovery by transparently creating a fresh OPC UA client within the existing MCP session.

Fig. 6. Recovery success rates over 60 restart trials (20 per flagship adapter), comparing same-session recovery (adapter reconnects transparently) versus fresh-session recovery (new MCP session).

Fig. 7. Recovery-phase breakdown per flagship adapter.

Figure 7 shows the phase-level breakdown. MQTT detects outages almost immediately. Modbus detects within its retry window. OPC UA spends the most time in the failure-detection phase due to its connection-oriented transport.
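The probe-then-reconnect behavior described for the OPC UA adapter can be sketched in a protocol-neutral way. The code below is an assumption-laden illustration rather than the adapter's source: `FakeClient` is an in-memory stand-in, not a real OPC UA library, the `Adapter` class and the `connection_error` class name are hypothetical, and only the method name `_ensure_connected` and the overall pattern come from the paper.

```python
class FakeClient:
    """In-memory stand-in for a protocol client; not a real OPC UA library."""

    def __init__(self, endpoint):
        self.endpoint = endpoint
        self.alive = True

    def read_status(self):
        # Plays the role of reading the ServerStatus node.
        if not self.alive:
            raise ConnectionError("endpoint unreachable")
        return "running"

    def read(self, node_id):
        self.read_status()
        return 21.5  # fixed illustrative value

    def disconnect(self):
        self.alive = False


class Adapter:
    def __init__(self, endpoint, client_factory=FakeClient):
        self.endpoint = endpoint
        self.client_factory = client_factory
        self.client = client_factory(endpoint)
        self.reconnects = 0

    def _ensure_connected(self):
        """Probe liveness; on failure drop the stale client and build a fresh one."""
        try:
            self.client.read_status()
        except ConnectionError:
            try:
                self.client.disconnect()
            except Exception:
                pass  # the stale client may already be unusable
            self.client = self.client_factory(self.endpoint)
            self.reconnects += 1

    def read_node(self, node_id):
        meta = {"endpoint": self.endpoint}
        try:
            self._ensure_connected()
            value = self.client.read(node_id)
        except ConnectionError as exc:
            meta["reconnects"] = self.reconnects
            error = {"class": "connection_error", "message": str(exc)}
            return {"success": False, "data": None, "error": error, "meta": meta}
        meta["reconnects"] = self.reconnects
        return {"success": True, "data": {"node": node_id, "value": value},
                "error": None, "meta": meta}
```

Because the fresh client is created inside the adapter, the MCP session that issued the call never has to be reopened, which is what same-session recovery means in this paper.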
Future hardening work should tune timeout and reconnect policy per protocol rather than enforce one global default.

E. Security Considerations

The current adapter architecture provides functional write guards via environment-variable booleans (e.g., MODBUS_WRITES_ENABLED), but these are configuration controls, not authentication. Several security gaps warrant discussion:

No per-user authorization. All tool calls are executed with the same privilege level as the adapter process. There is no role-based access control (RBAC) or per-operation approval workflow.

No audit logging. Tool invocations and their outcomes are not logged to a tamper-evident audit trail. For production deployments, every write operation should produce an auditable record.

Attack surface of LLM tool exposure. Exposing write-capable industrial tools to an AI assistant creates a novel attack surface: prompt injection or adversarial inputs could potentially trigger unintended write operations.

Recommendations. Production deployments should add: (1) RBAC with per-tool authorization, (2) audit logging for all write operations, (3) mTLS or equivalent transport security between adapter and endpoint, and (4) human-in-the-loop approval for safety-critical writes.

F. Limitations

The evidence is intentionally bounded. This is a mock-only, localhost-only study. All latencies reflect local process overhead, not network round-trip. The benchmark omits human baselines and excludes OPC UA method calls because the current prototype mock does not yet support them reliably enough for repeatable measurement. Validating assistant-level usability through a quantitative LLM assessment across multiple model families is a clear next step. The stress suite identifies concurrency boundaries but does not systematically explore all possible failure modes under load. The mid-operation restart scenario (S10–S12) depends on timing and may produce variable results across runs.

VI. RELATED WORK

Industry 4.0 and cyber-physical manufacturing literature has consistently treated heterogeneous connectivity as a foundational systems problem rather than a peripheral implementation detail [1], [2], [8]–[12]. Those works motivate why assistant-facing industrial tooling cannot assume one canonical protocol stack or one clean greenfield deployment path.

Within industrial communication research, OPC UA and MQTT are widely used as normalization layers, but the interoperability story remains incomplete in brownfield settings. Prior work has examined OPC UA performance, MQTT adoption in IoT middleware, gateway-level interoperability, and field-level plug-and-work integration [4], [5], [13], [14]. These studies improve system-to-system interoperability, yet they typically stop at middleware, gateway, or device integration boundaries rather than exposing operations as schema-discoverable tool contracts for an AI assistant.

Industrial middleware, cyber-physical manufacturing systems, and digital-twin work address adjacent concerns such as service composition, plant modeling, and deployment-time validation [12], [15], [16]. INDUSTRICONNECT does not compete with those layers. It sits closer to the assistant and packages protocol actions into a common tool surface while leaving historian, SCADA, and plant-model responsibilities outside the paper's scope. The mock-first workflow is closest to digital-twin and virtual validation practices, but the goal here is narrower: verify adapter semantics, guarded failures, and restart behavior before introducing real equipment.

Software fault injection has a long history in dependability assessment. Natella et al. [7] survey the field comprehensively, establishing that injecting faults at the API or protocol boundary is an effective way to assess error-handling quality without requiring hardware failures.
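As an illustration of fault injection at the tool boundary in this setting, the sketch below feeds malformed register writes to a stand-in tool and checks that each one yields a structured error rather than an exception. It is a sketch under stated assumptions, not the paper's implementation: the envelope fields (success, data, error), the error codes, and the names write_register and run_fault_suite are hypothetical. Only the uint16 range check and the idea of a write guard come from the text, and the guard is modeled here as a plain parameter instead of the MODBUS_WRITES_ENABLED environment variable.

```python
from typing import Any, Dict, Optional

UINT16_MAX = 0xFFFF  # largest value a 16-bit register can hold


def envelope_ok(data: Any) -> Dict[str, Any]:
    # Hypothetical success envelope; field names are assumptions.
    return {"success": True, "data": data, "error": None}


def envelope_err(code: str, message: str) -> Dict[str, Any]:
    # Hypothetical error envelope with a machine-readable code.
    return {"success": False, "data": None,
            "error": {"code": code, "message": message}}


def write_register(address: Any, value: Any, writes_enabled: bool = False,
                   store: Optional[Dict[int, int]] = None) -> Dict[str, Any]:
    """Validate a register write at the adapter level before touching the protocol."""
    if not writes_enabled:
        return envelope_err("WRITES_DISABLED", "write guard is off")
    for name, field in (("address", address), ("value", value)):
        # bool is a subclass of int in Python, so reject it explicitly.
        if isinstance(field, bool) or not isinstance(field, int):
            return envelope_err("INVALID_TYPE", f"{name} must be an integer")
        if not 0 <= field <= UINT16_MAX:
            return envelope_err("OUT_OF_RANGE", f"{name} must be in 0..{UINT16_MAX}")
    if store is not None:
        store[address] = value  # stand-in for the real protocol write
    return envelope_ok({"address": address, "value": value})


# Deliberately malformed inputs paired with the error code each should produce.
FAULT_CASES = [
    ((0, 70000), "OUT_OF_RANGE"),
    ((0, -1), "OUT_OF_RANGE"),
    ((0, "12"), "INVALID_TYPE"),
    ((None, 5), "INVALID_TYPE"),
]


def run_fault_suite() -> Dict[str, bool]:
    """Return pass/fail per fault case; a pass means a structured, expected error."""
    verdicts = {}
    for (address, value), expected in FAULT_CASES:
        result = write_register(address, value, writes_enabled=True)
        passed = (result["success"] is False
                  and result["error"]["code"] == expected)
        verdicts[f"{address!r},{value!r}"] = passed
    return verdicts
```

Judging each case by its error code rather than by message text keeps the verdicts deterministic, which matters when the same suite is rerun across adapters.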
The fault-injection suite in this paper follows that tradition: it sends deliberately malformed inputs through the MCP tool interface and judges whether the adapter returns structured, interpretable errors.

Recent LLM-agent research has moved from reasoning-action loops toward explicit tool learning over large API surfaces [6], [17]–[19]. MCP contributes a practical schema for tool discovery and invocation [3], but it does not prescribe how industrial protocols should be translated, guarded, or benchmarked. The contribution is therefore not a new tool-use framework. It is an MCP-to-OT adapter pattern with shared safety conventions and a deterministic evaluation model for a narrowly defined industrial setting.

This comparison clarifies the contribution boundary. A full digital-twin paper would claim model fidelity or synchronization strategy; a generic agent paper would emphasize tool selection at scale; an industrial middleware paper would prioritize interoperability between production systems. INDUSTRICONNECT instead focuses on a smaller but necessary substrate: deterministic, assistant-facing protocol adapters whose behavior can be measured before any real deployment study begins.

VII. CONCLUSION

The deterministic benchmark exercises 870 runs and 2820 tool calls across normal, fault-injection, stress, and recovery suites, showing that the Modbus, MQTT/Sparkplug B, and OPC UA adapters handle normal operations, deliberate faults, concurrency stress, and endpoint restarts with consistent response semantics and structured error handling. Same-session recovery after endpoint restart is demonstrated for all three protocols. The broader repository provides ten protocol modules, but only three are evaluated in this paper.
The next steps are: validate the same workflows on real hardware, add RBAC and audit logging around write-capable tools, conduct a quantitative LLM assessment across multiple model families with live MCP integration, and expand the evaluation beyond mock connectivity into operator-in-the-loop scenarios with quantitative usability metrics.

REFERENCES

[1] L. D. Xu, E. L. Xu, and L. Li, "Industry 4.0: state of the art and future trends," International Journal of Production Research, vol. 56, no. 8, pp. 2941–2962, 2018.
[2] M. Wollschlaeger, T. Sauter, and J. Jasperneite, "The future of industrial communication: Automation networks in the era of the internet of things and industry 4.0," IEEE Industrial Electronics Magazine, vol. 11, no. 1, pp. 17–27, 2017.
[3] Anthropic, "Model context protocol specification," https://modelcontextprotocol.io, 2024, accessed 2026-03-12.
[4] S. Cavalieri and F. Chiacchio, "Analysis of OPC UA performances," Computer Standards & Interfaces, vol. 36, no. 1, pp. 165–177, 2013.
[5] B. Mishra and A. Kertesz, "The use of MQTT in M2M and IoT systems: A survey," IEEE Access, vol. 8, pp. 201 071–201 086, 2020.
[6] S. Yao, J. Zhao, D. Yu, N. Du, I. Shafran, K. Narasimhan, and Y. Cao, "ReAct: Synergizing reasoning and acting in language models," arXiv preprint arXiv:2210.03629, 2022. [Online]. Available: https://arxiv.org/abs/2210.03629
[7] R. Natella, D. Cotroneo, and H. S. Madeira, "Assessing dependability with software fault injection: A survey," ACM Computing Surveys, vol. 48, no. 3, pp. 1–55, 2016.
[8] H. Lasi, P. Fettke, H.-G. Kemper, T. Feld, and M. Hoffmann, "Industry 4.0," Business & Information Systems Engineering, vol. 6, no. 4, pp. 239–242, 2014.
[9] J. Lee, B. Bagheri, and H.-A. Kao, "A cyber-physical systems architecture for industry 4.0-based manufacturing systems," Manufacturing Letters, vol. 3, pp. 18–23, 2015.
[10] L. Monostori, B. Kádár, T. Bauernhansl, S. Kondoh, S. Kumara, G. Reinhart, O. Sauer, G. Schuh, W. Sihn, and K. Ueda, "Cyber-physical systems in manufacturing," CIRP Annals, vol. 65, no. 2, pp. 621–641, 2016.
[11] R. Y. Zhong, X. Xu, E. Klotz, and S. T. Newman, "Intelligent manufacturing in the context of industry 4.0: A review," Engineering, vol. 3, no. 5, pp. 616–630, 2017.
[12] D. M. Tilbury, "Cyber-physical manufacturing systems," Annual Review of Control, Robotics, and Autonomous Systems, vol. 2, no. 1, pp. 427–443, 2019.
[13] S. Cavalieri and S. Mulè, "Interoperability between OPC UA and oneM2M," Journal of Internet Services and Applications, vol. 12, no. 1, 2021.
[14] M. Büchter and S. Wolf, Plug and Work with OPC UA at the Field Level: Integration of Low-Level Devices. Springer Berlin Heidelberg, 2022, pp. 63–75.
[15] A. Fuller, Z. Fan, C. Day, and C. Barlow, "Digital twin: Enabling technologies, challenges and open research," IEEE Access, vol. 8, pp. 108 952–108 971, 2020.
[16] Y. Lu, C. Liu, K. I.-K. Wang, H. Huang, and X. Xu, "Digital twin-driven smart manufacturing: Connotation, reference model, applications and research issues," Robotics and Computer-Integrated Manufacturing, vol. 61, p. 101837, 2020.
[17] T. Schick, J. Dwivedi-Yu, R. Dessi, R. Raileanu, M. Lomeli, L. Zettlemoyer, N. Cancedda, and T. Scialom, "Toolformer: Language models can teach themselves to use tools," arXiv preprint arXiv:2302.04761, 2023. [Online]. Available: https://arxiv.org/abs/2302.04761
[18] S. G. Patil, T. Zhang, X. Wang, and J. E. Gonzalez, "Gorilla: Large language model connected with massive APIs," arXiv preprint arXiv:2305.15334, 2023. [Online]. Available: https://arxiv.org/abs/2305.15334
[19] S. Qiao, H. Gui, C. Lv, Q. Jia, H. Chen, and N. Zhang, "Making language models better tool learners with execution feedback," in Proceedings of the 2024 Conference of the North American Chapter of the Association for Computational Linguistics: Human Language Technologies (Volume 1: Long Papers), 2024, pp. 3550–3568.
