Spectral methods: crucial for machine learning, natural for quantum computers?

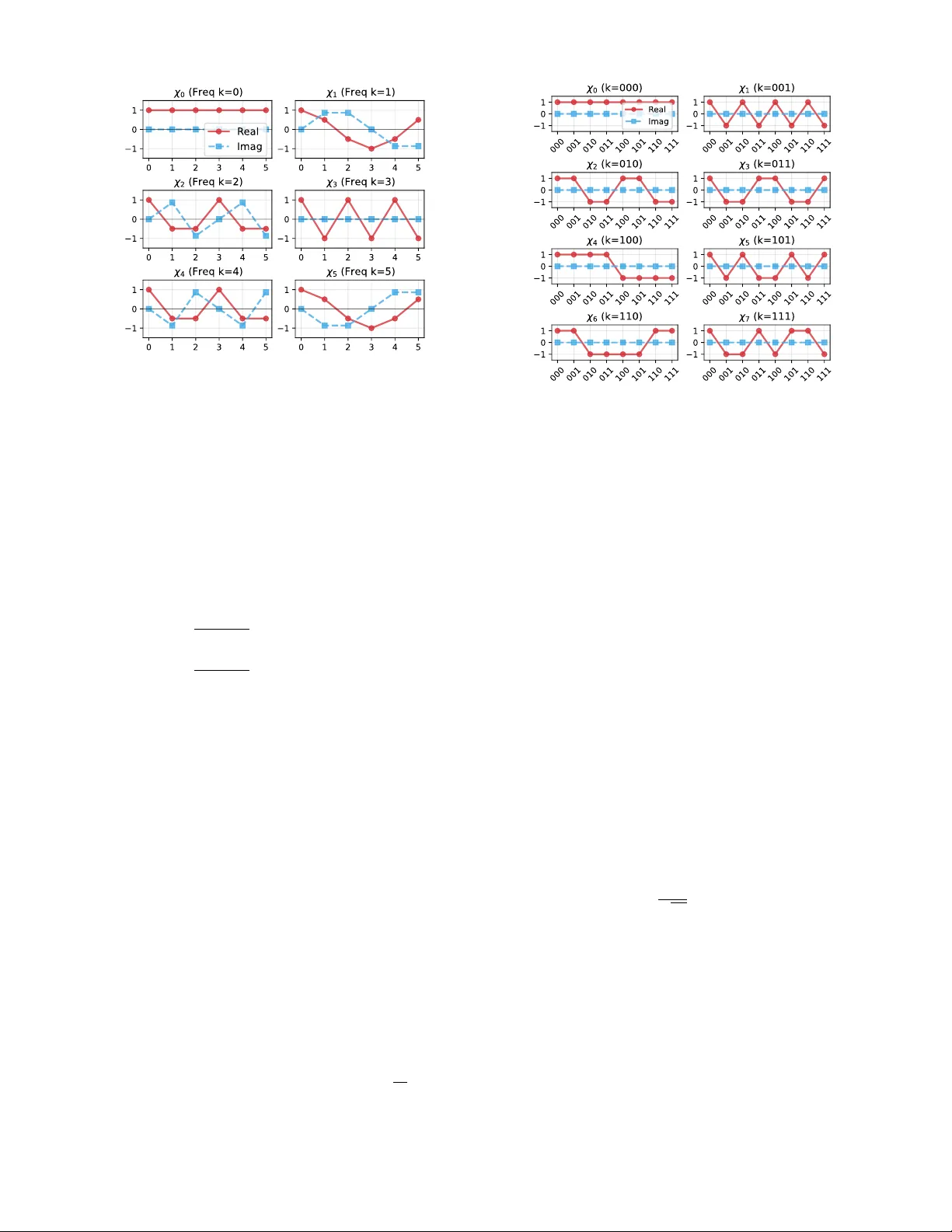

This article makes the case that quantum computers could unlock new methods for machine learning. We argue that spectral methods, in particular those that learn, regularise, or otherwise manipulate the Fourier spectrum of a machine learning …

Authors: Vasilis Belis, Joseph Bowles, Rishabh Gupta