When Consistency Becomes Bias: Interviewer Effects in Semi-Structured Clinical Interviews


Authors: Hasindri Watawana, Sergio Burdisso, Diego A. Moreno-Galván

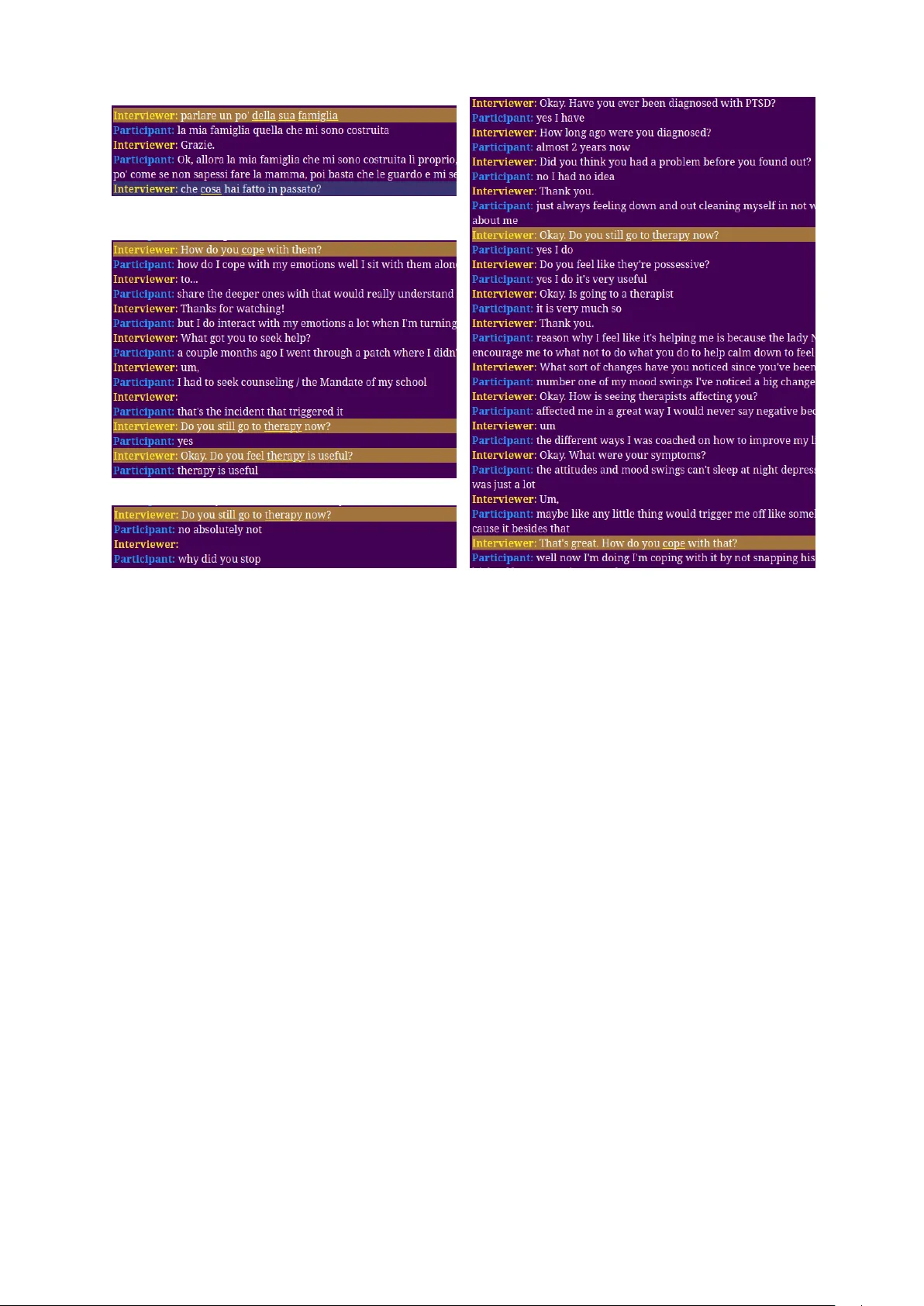

Hasindri Watawana (1, 2), Sergio Burdisso (1), Diego A. Moreno-Galván (3), Fernando Sánchez-Vega (3), A. Pastor López-Monroy (3), Petr Motlicek (1, 4), Esaú Villatoro-Tello (1)

(1) Idiap Research Institute, Switzerland {hwatawana,sburdisso,pmotlicek,evillatoro}@idiap.ch
(2) EPFL, Switzerland
(3) Centro de Investigación en Matemáticas (CIMAT), Mexico {diego.moreno,fernando.sanchez,pastor.lopez}@cimat.mx
(4) Brno University of Technology, Czech Republic

Abstract

Automatic depression detection from doctor–patient conversations has gained momentum thanks to the availability of public corpora and advances in language modeling. However, interpretability remains limited: strong performance is often reported without revealing what drives predictions. We analyze three datasets (ANDROIDS, DAIC-WOZ, and E-DAIC) and identify a systematic bias from interviewer prompts in semi-structured interviews. Models trained on interviewer turns exploit fixed prompts and positions to distinguish depressed from control subjects, often achieving high classification scores without using participant language. Restricting models to participant utterances distributes decision evidence more broadly and reflects genuine linguistic cues. While semi-structured protocols ensure consistency, including interviewer prompts inflates performance by leveraging script artifacts. Our results highlight a cross-dataset, architecture-agnostic bias and emphasize the need for analyses that localize decision evidence by time and speaker to ensure models learn from participants' language.

Keywords: Depression Corpora, Clinical Interviews, Graph Convolutional Network (GCN)

1. Introduction

Language has long been established as a powerful indicator of personality, socio-emotional state, and mental health (Pennebaker et al., 2003; Tackman et al., 2019).
This insight has spurred a rich body of work at the intersection of AI, natural language processing, and clinical psychology, showing that structured interviews and written responses can reveal important aspects of cognitive and behavioral functioning, particularly for automatic depression detection (Malandrakis and Narayanan, 2015; Villatoro-Tello et al., 2021a,b).

While a growing body of recent work trains automatic depression detection models on both participant responses and interviewer prompts (Zhuang et al., 2024; Agarwal and Dias, 2024; Milintsevich et al., 2023; Shen et al., 2022), it remains unclear how much each source contributes to model performance.

This study asks: to what extent can a model classify a participant as depressed using only the interviewer's questions? Surprisingly, across three datasets (ANDROIDS, DAIC-WOZ, E-DAIC) and two model families, interviewer-only (I) models often match or outperform participant-only (P) models. This does not imply that interviewer questions are clinically more informative; instead, qualitative analyses show that I-models exploit systematic shortcuts tied to the interview script, focusing on recurring prompts and question positions, a phenomenon we term prompt-induced bias.

Our main contributions are threefold: (1) we quantify prompt-induced bias across multiple datasets, showing that I-models can outperform P-models; (2) we show that this effect is model-agnostic by reproducing it with both graph convolutional networks and transformers; and (3) we provide qualitative analyses that reveal how I-models concentrate on narrow interview segments while P-models distribute evidence more broadly.

These findings highlight the importance of carefully handling interviewer prompts and verifying that models truly leverage participant language rather than spurious cues.

2. Datasets

Within clinical practice, the initial assessment of mental illness is a semi-structured interview in which clinicians pose a standardized yet open-ended sequence of questions to elicit symptoms, history, and functioning. This protocol balances consistency with flexibility, enabling reliable assessment while allowing patients to elaborate in their own words. The depression corpora we study are designed to simulate such standardized screening protocols for identifying people at risk: an interviewer (human or virtual) delivers a controlled set of prompts to ensure replicability and coverage of diagnostic cues, while participants respond freely, producing language that can be analyzed for clinical indicators.

2.1. DAIC-WOZ

The Distress Analysis Interview Corpus – Wizard of Oz (DAIC-WOZ) dataset (Gratch et al., 2014) contains semi-structured clinical interviews in North American English conducted by "Ellie", an animated virtual interviewer controlled by a human in another room (a "Wizard-of-Oz" setup, in which a user interacts with a mock interface controlled, to some degree, by a person). DAIC-WOZ is multimodal (audio, video, and manual transcripts) and includes PHQ-8 depression ratings for each participant (Kroenke et al., 2009). Ellie's design emphasizes replicability and consistency: she draws from a finite repertoire of 191 prompts spanning general questions (e.g., lifestyle and sleep), neutral backchannels, positive/negative empathic responses, surprise tokens, continuation prompts (e.g., "could you tell me more?"), and miscellaneous control items. This controlled prompting is intended to elicit behaviors associated with depression and related symptoms while reducing variability attributable to the interviewer. DAIC-WOZ comprises 189 subjects split into 107 train, 35 development, and 47 test interviews.

2.2. E-DAIC

E-DAIC (DeVault et al., 2014) is an extension of DAIC-WOZ that preserves the same interview format while scaling up the collection. The key difference is that the virtual interviewer in E-DAIC is fully automatic, in contrast to the human-controlled Ellie in DAIC-WOZ. While DAIC-WOZ distributes complete two-speaker transcripts (interviewer and participant), E-DAIC provides only the participant side. Therefore, for our experiments, we prepared automatic transcripts for E-DAIC using the WhisperX pipeline (Bain et al., 2023); the process is explained in Section 3. We corrected several mislabeled subjects after verifying inconsistencies between PHQ scores and the provided binary labels, an issue previously noted by others (Ali et al., 2025). The official split sizes are 163 train, 56 development, and 56 test interviews.

2.3. ANDROIDS

ANDROIDS (Tao et al., 2023) is an Italian speech corpus for depression detection collected "in the wild" using laptop microphones. Each participant was recorded in two tasks: a Reading Task (RT), in which everyone reads the same short, simple story to reduce literacy/education effects, and an Interview Task (IT) with spontaneous speech, where the interviewer is instructed to ask only minimal prompts, yielding semi-structured but low-intervention interactions. In this work, we focus only on data from the IT task. It comprises 116 native-Italian participants (64 depressed, 52 controls), with controls matched to the depressed cohort by gender, age, and education to minimize demographic confounds. Interviews are manually segmented into turns; diagnostic labels ("depressed" vs. "control") come from clinicians following DSM-5 criteria.¹ The release includes audio and acoustic features, but no ground-truth transcripts. Therefore, we generated WhisperX transcripts for ANDROIDS (see Section 3).

3. Methodology

Data Preparation. Our study is based on the text modality.
DAIC-WOZ provides complete transcripts including both interviewer and participant utterances. ANDROIDS does not provide ground-truth transcripts, and E-DAIC releases transcripts for the participant side only. For ANDROIDS and E-DAIC, we therefore built complete textual data as follows: using the participant utterance timestamps provided in the metadata, we derived complementary (non-overlapping) interviewer timestamps, extracted interviewer and participant audio clips separately using these timestamps, and generated automatic transcripts with the WhisperX ASR pipeline (Bain et al., 2023) (Whisper large-v3 (Radford et al., 2022) with a faster-whisper backend).² To have a fair comparison between the impact of using interviewer vs. participant utterances on automatic depression detection, we do not use the E-DAIC participant gold transcripts and instead rely on matched ASR transcripts for both speakers.

Models. We evaluate two architectures to test whether prompt effects are model-agnostic: (i) Longformer (Beltagy et al., 2020), a transformer with sparse attention suitable for long documents such as interviews, and (ii) GCN (Burdisso et al., 2023), a graph-based model with word and document nodes. Using these two types of models allows us to analyze results from both a contextualized, semantic transformer and a keyword-focused GCN.

• Longformer: A linear classification head is added on top of the pre-trained Longformer-BERT to classify using the [CLS] token; both the encoder and the head are fine-tuned on the training split. Experiments are conducted with both longformer-mini-1024 and longformer-base-4096, but only the best-performing configuration is reported.
• GCN: We use the two-layer ω-GCN of Burdisso et al. (2023), which represents each corpus as a graph with word and document (interview) nodes. Representations evolve through three stages: (i) an initial one-hot layer, (ii) a latent embedding after the first convolution, and (iii) a two-dimensional output after the second convolution, corresponding to depressed and control probabilities. Because words and documents share the same embedding space, the final layer provides class probabilities for both interviews and individual words, offering an interpretability handle that identifies which words (and interviewer prompts) serve as discriminative evidence (see Section 5).

¹ Diagnostic and Statistical Manual of Mental Disorders, Fifth Edition (American Psychiatric Association, 2013).
² This pipeline yields a WER of 15.3% on the E-DAIC participant side, evaluated against the originally provided transcripts as ground truth.

| Model | Source | ANDROIDS Avg | ANDROIDS D | ANDROIDS C | DAIC-WOZ Avg | DAIC-WOZ D | DAIC-WOZ C | E-DAIC† Avg | E-DAIC† D | E-DAIC† C |
|---|---|---|---|---|---|---|---|---|---|---|
| (Burdisso et al., 2023) | P | – | – | – | 0.84 | 0.80 | 0.89 | 0.80 | 0.67 | 0.94 |
| (Ilias and Askounis, 2024) | P + I | 0.93 | – | – | – | – | – | – | – | – |
| (Borraccino, 2025) | P + I | 0.92 | 0.93 | 0.90 | – | – | – | – | – | – |
| (Milintsevich et al., 2023) | P + I | – | – | – | 0.81 | – | – | – | – | – |
| P-Longformer | P | 0.79 | 0.82 | 0.76 | 0.71 | 0.61 | 0.81 | 0.67 | 0.56 | 0.79 |
| I-Longformer | I | 0.98 | 0.98 | 0.98 | 0.73 | 0.64 | 0.83 | 0.65 | 0.50 | 0.80 |
| P-GCN | P | 0.93 | 0.95 | 0.92 | 0.85 | 0.81 | 0.88 | 0.70 | 0.54 | 0.86 |
| I-GCN | I | 0.97 | 0.97 | 0.98 | 0.88 | 0.85 | 0.91 | 0.74 | 0.57 | 0.90 |

Table 1: Development-set F1 scores on ANDROIDS, DAIC-WOZ, and E-DAIC. For each dataset, we report macro-average (Avg), Depressed (D), and Control (C) F1. ANDROIDS scores are reported as 5-fold averages; DAIC-WOZ and E-DAIC use the official development split. Sources: participant (P) and interviewer (I) text. Upper block (first four rows): text-only baselines from prior work. Bold = best in group; underlined = best overall text-only result. †: E-DAIC prior-work results use gold participant transcripts and are not directly comparable.

Quantifying Interviewer Bias. Our approach builds on Burdisso et al. (2024), who analyzed prompt-induced bias within the DAIC-WOZ corpus. We extend this line of work in three ways.
First, we generalize the analysis across multiple clinical interview corpora (ANDROIDS and E-DAIC) to test whether the interviewer-related bias persists beyond a single dataset. Second, while Burdisso et al. (2024) focused on development splits, we also quantify the impact of interviewer bias on held-out test data, providing a clearer estimate of its effect on reported model performance. Third, we evaluate the bias using automatically generated transcriptions, as manual transcripts are unavailable for ANDROIDS and cover only the participant side for E-DAIC. This setup allows us to assess whether the bias remains detectable under more realistic, automatically transcribed conditions.

For each architecture, we train and evaluate two model variants:
• participant-only (P): trained and evaluated using only the participant's responses
• interviewer-only (I): trained and evaluated using only the interviewer's prompts

These two variants allow us to directly investigate our main research question by comparing performance when models have access only to participant responses (P) versus only to interviewer prompts (I). This comparison quantifies the extent to which interviewer questions carry implicit diagnostic information, revealing potential bias in automatic depression detection models.

4. Experiments and Results

Main results are reported in Table 1. For ANDROIDS, five-fold averages are reported; for DAIC-WOZ and E-DAIC, the official dev split is used. Across all three corpora, we train two variants per architecture: participant-only (P-GCN and P-Longformer) and interviewer-only (I-GCN and I-Longformer). For each GCN model variant, we optimize and evaluate results using multiple setups (e.g., using the full vocabulary or only the top vocabulary) and record the best performance on the dev split.
Similarly, for Longformer, the best dev performance among longformer-mini-1024 and longformer-base-4096 is reported.

On DAIC-WOZ, the interviewer side is consistently stronger than the participant side for both model families (P-Longformer 0.71 → I-Longformer 0.73; P-GCN 0.85 → I-GCN 0.88). This reproduces prior findings reported by Burdisso et al. (2024) that Ellie's prompts provide highly discriminative shortcuts, and shows that the effect is not tied to a specific architecture.

Figure 1 (panels: (a) ANDROIDS; (b) E-DAIC): Temporal heatmaps comparing keyword evidence learned by interviewer-only (I, top) vs. participant-only (P, bottom) models across interviews in the ANDROIDS and E-DAIC datasets. Each column represents one interview. The y-axis corresponds to the normalized interview timeline, where 0% marks the beginning of the interview and 100% marks its end. White vertical lines denote split boundaries (train/dev/test for E-DAIC; train/dev only for ANDROIDS). The ANDROIDS plot is shown for Fold 1.

| Model | DAIC-WOZ Avg | DAIC-WOZ D | DAIC-WOZ C | E-DAIC Avg | E-DAIC D | E-DAIC C |
|---|---|---|---|---|---|---|
| P-Longformer | 0.68 | 0.54 | 0.82 | 0.40 | 0.17 | 0.62 |
| I-Longformer | 0.53 | 0.27 | 0.78 | 0.56 | 0.44 | 0.68 |
| P-GCN | 0.59 | 0.56 | 0.63 | 0.54 | 0.47 | 0.62 |
| I-GCN | 0.62 | 0.54 | 0.70 | 0.54 | 0.33 | 0.76 |

Table 2: Test-set F1 scores (macro-average Avg, Depressed D, Control C) on DAIC-WOZ and E-DAIC for participant-only (P) and interviewer-only (I) variants of Longformer and GCN. For each model, the test result corresponds to the same configuration that achieved the best dev performance in Table 1.

On ANDROIDS, the bias is even more pronounced: I-Longformer achieves 0.98 macro-F1 on dev, a 19-percentage-point advantage over P-Longformer (0.79), and I-GCN outperforms P-GCN by 4 points (0.97 vs. 0.93). Despite the different language and collection setting, interviewer prompts again dominate, reinforcing that the advantage arises from interview structure.
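The Avg, D, and C columns in Tables 1 and 2 are per-class F1 scores and their unweighted mean, and the ANDROIDS numbers are additionally averaged over five folds; the 19- and 4-point ANDROIDS gaps discussed above are differences of these scores in percentage points. The following is a minimal pure-Python sketch of that bookkeeping, not the authors' evaluation code. It assumes binary labels encoded as 1 = depressed and 0 = control, and it assumes the 5-fold figure is a mean of per-fold macro-F1 values; the function names (`class_f1`, `report`, `cross_validated_macro_f1`, `gap_in_points`) are hypothetical.

```python
from statistics import mean

# Hypothetical label encoding used only for this sketch.
DEPRESSED, CONTROL = 1, 0


def class_f1(y_true, y_pred, positive):
    """F1 for one class, treating `positive` as the positive label."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(t == positive and p == positive for t, p in pairs)
    fp = sum(t != positive and p == positive for t, p in pairs)
    fn = sum(t == positive and p != positive for t, p in pairs)
    if tp == 0:
        return 0.0  # avoids division by zero when the class is never hit
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    return 2 * precision * recall / (precision + recall)


def report(y_true, y_pred):
    """Return (macro-average, Depressed F1, Control F1), the Avg/D/C columns."""
    f1_d = class_f1(y_true, y_pred, DEPRESSED)
    f1_c = class_f1(y_true, y_pred, CONTROL)
    return (f1_d + f1_c) / 2, f1_d, f1_c


def cross_validated_macro_f1(folds):
    """Mean of per-fold macro-F1 over (y_true, y_pred) pairs, e.g. five folds."""
    return mean(report(y_true, y_pred)[0] for y_true, y_pred in folds)


def gap_in_points(score_a, score_b):
    """Difference between two F1 scores expressed in percentage points."""
    return round((score_a - score_b) * 100, 2)
```

For example, `gap_in_points(0.98, 0.79)` returns `19.0`, the I-Longformer vs. P-Longformer difference on ANDROIDS in Table 1. Averaging per-fold macro-F1 and computing macro-F1 on pooled out-of-fold predictions generally give slightly different numbers, so the convention matters when comparing against prior work.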
The prompt-induced bias is also evident on E-DAIC with the GCN models (P-GCN 0.70 → I-GCN 0.74); with Longformer, the P and I variants perform comparably (0.67 vs. 0.65).

In Table 2, we also report results on the official test sets of DAIC-WOZ and E-DAIC to assess whether the interviewer bias carries over to unseen data. On E-DAIC, interviewer-only variants outperform (Longformer) or match (GCN) participant-only variants, confirming that the bias persists beyond the dev split. On DAIC-WOZ, the effect is architecture-dependent: keyword-based GCNs still benefit from interviewer turns (I-GCN 0.62 vs. P-GCN 0.59), whereas the context-based Longformer does not (P-Longformer 0.68 vs. I-Longformer 0.53), suggesting that semantic modeling could partially mitigate prompt-driven shortcuts in some cases.

Overall, these results show that interviewer prompts can inflate performance in semi-structured interviews, regardless of architecture. This cross-dataset contrast underscores our central point: gains attributed to "using interviewer questions as context" (Zhuang et al., 2024; Agarwal and Dias, 2024; Milintsevich et al., 2023; Shen et al., 2022) may simply reflect prompt-driven bias rather than improved modeling of the participant's language.

5. Analysis and Discussion

The heatmaps in Figure 1 visualize where each model finds decision evidence over time and by speaker; we refer to the words the model uses to identify the depressed group as "keywords". For every interview (x-axis), we aggregate the model's learned keywords along the normalized interview timeline (y-axis, 0–100%) and plot keyword-density heatmaps separately for interviewer-only (I-GCN) and participant-only (P-GCN) models, and for Depressed vs. Control cohorts.

By analyzing the heatmaps across different datasets, we consistently found that patterns diverge sharply by speaker stream.
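The aggregation behind Figure 1 can be sketched as follows: keywords are the words a model associates with the depressed class, and each interview contributes one column of keyword density over a normalized 0–100% timeline. This is an illustrative pure-Python sketch, not the authors' plotting code. It assumes keywords are chosen by thresholding a per-word depressed-class probability (such as the word-node outputs of the GCN) and that the timeline is normalized by token position rather than by wall-clock time; the threshold, bin count, and function names (`select_keywords`, `keyword_density`, `heatmap`) are hypothetical. One such matrix would be built per speaker stream (I or P) and per cohort (Depressed or Control).

```python
def select_keywords(word_probs, threshold=0.5):
    """Hypothetical rule: keep words whose depressed-class probability exceeds threshold."""
    return {word for word, p in word_probs.items() if p > threshold}


def keyword_density(tokens, keywords, n_bins=10):
    """Share of an interview's keyword hits that falls into each timeline slice.

    Position is normalized by token index (an assumption of this sketch):
    0.0 is the first token (0%) and 1.0 is the last token (100%).
    """
    counts = [0] * n_bins
    last = max(len(tokens) - 1, 1)
    for i, token in enumerate(tokens):
        if token in keywords:
            position = i / last
            # Clamp so that position 1.0 lands in the final bin.
            counts[min(int(position * n_bins), n_bins - 1)] += 1
    total = sum(counts)
    if total == 0:
        return [0.0] * n_bins
    return [c / total for c in counts]


def heatmap(interviews, keywords, n_bins=10):
    """One density column per interview (each a list of tokens), as in Figure 1."""
    return [keyword_density(tokens, keywords, n_bins) for tokens in interviews]
```

With `kw = select_keywords({"therapy": 0.91, "cope": 0.77, "family": 0.12})`, the call `keyword_density("do you still go to therapy".split(), kw, n_bins=2)` returns `[0.0, 1.0]`: the single keyword hit sits at the end of the excerpt. A narrow, high-contrast band in such a matrix corresponds to keyword hits clustered in the same bins across many columns.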
I-GCN exhibits narrow, high-contrast bands, indicating that the model relies on specific interviewer turns to make its decision (concentrated keywords). In contrast, P-GCN shows low-contrast activity spread across most of the timeline, consistent with drawing evidence from many participant utterances rather than a few fixed positions.

Figure 2: Color-coded interview excerpts in which prompts identified by the I-model as bias-carrying are highlighted. Underlined words denote the model's learned keywords, corresponding to the high-contrast narrow bands in Figure 1. Panels: (a) example interview from ANDROIDS (the first utterance translates to "talk about your family"); (b) example of a depressed interview from E-DAIC; (c) example of a control interview from E-DAIC; (d) example of a depressed interview from E-DAIC.

The qualitative content of those interviewer bands differs by dataset:

ANDROIDS: I-GCN repeatedly focuses on prompts that probe family context, how the last week was spent, and work status (see Fig. 2a). These focused regions recur across interviews even though their absolute timing varies, suggesting that the model has learned the type of prompt.

E-DAIC and DAIC-WOZ: I-GCN mainly concentrates on three prompts ("How do you cope with that?", "Do you still go to therapy?", followed by "Do you feel therapy is useful?") while largely ignoring other clinically relevant prompts (e.g., PTSD screening, reasons for seeking help, symptoms, and effects of therapy). This again reflects selectivity for a small subset of interviewer turns. The depressed interviews in Fig. 2b and Fig. 2d clearly illustrate this shortcut behavior. Fig. 2c shows a control subject: after the highlighted bias prompt, the follow-up questioning pattern shifts, providing enough signal for the model to distinguish control from depressed interviews.

6. Conclusion

Our analysis shows that interviewer prompts enable models to distinguish between depressed and control participants across DAIC-WOZ, ANDROIDS, and E-DAIC, with interviewer models focusing on specific, localized questions whereas participant models distribute cues broadly across the conversation. These results highlight a key methodological issue: including interviewer turns introduces unintended biases, as models may exploit scripted prompts as shortcuts rather than learning genuine linguistic or behavioral markers of depression. Importantly, this bias is consistent across datasets and model architectures, underscoring its relevance as a general methodological concern rather than an artifact of any particular experimental setup. Future work should carefully account for the interviewer's influence when designing and evaluating conversational mental health assessment systems, for instance by isolating participant-only turns or developing bias-aware evaluation protocols.

7. Acknowledgments

This work was partially funded by the SNSF through the SPIRIT project ORIENTER: tOwards undeRstanding and modelIng the language of mENTal health disordERs (grant no. IZSTZ0_223488).

8. Ethics Statement

Data privacy, consent, and dataset use. Our study relies on publicly distributed clinical-interview corpora with documented consent and privacy safeguards. For DAIC-WOZ and E-DAIC, participants completed informed consent prior to interviews; consent materials included an option permitting data sharing for research. The released transcriptions underwent systematic de-identification (e.g., removal of names, specific dates, addresses), and identifying utterances are withheld; only appropriately anonymized audio/video features and transcripts are distributed under institutional ethical guidelines, with broader raw data shared case-by-case.
For ANDROIDS, data were collected in mental-health centers under institutional and national ethical regulations, with all participation voluntary and every participant signing an informed-consent letter; psychiatrists provided diagnostic labels (DSM-5 framework), and the release includes turn segmentation and person-independent protocols while protecting identity. In our work, we use text only (including ASR transcripts where ground truth is unavailable), do not attempt re-identification, and adhere to all dataset usage terms.

Role of AI in Healthcare. Our experiments are intended to underscore the value of interpretable, AI-assisted methods as decision support, not as replacements for clinicians. Diagnostic authority must remain with qualified professionals; delegating clinical decisions to an algorithm introduces unacceptable risk in high-stakes healthcare settings. By exposing shortcut learning and prompt-induced biases in interview data, our work contributes to the development of bias-aware models and evaluation practices for clinical interview analysis.

9. Limitations

Our study has several limitations.

Transcript Quality and Ground Truth. ANDROIDS provides no manual transcripts and E-DAIC releases only participant transcripts; complete transcripts for both datasets were therefore generated with ASR. Consequently, comparisons between P and I models can be affected by ASR errors and speaker segmentation, and any advantage or disadvantage may partly reflect transcription noise rather than the underlying language alone. Ground-truth two-speaker transcripts for E-DAIC and ANDROIDS would enable a more precise estimate of prompt-induced bias.

Modality Restriction. Our analysis is text-only across ANDROIDS, DAIC-WOZ, and E-DAIC. While this isolates the linguistic contribution, it does not capture acoustic and visual features, where interviewer structure and participant signals may manifest differently.
As future work, we plan to extend the study to multimodal settings, running the same P vs. I ablations to verify whether prompt-induced bias persists, weakens, or strengthens when non-text modalities are included.

10. Bibliographical References

Navneet Agarwal and Gaël Dias. 2024. Analysing Relevance of Discourse Structure for Improved Mental Health Estimation. In 9th Workshop on Computational Linguistics and Clinical Psychology (CLPsych) associated to 18th Conference of the European Chapter of the Association for Computational Linguistics (EACL), Saint Julian, Malta.

Abdelrahman A. Ali, Aya E. Fouda, Radwa J. Hanafy, and Mohammed E. Fouda. 2025. Leveraging audio and text modalities in mental health: A study of LLMs performance.

American Psychiatric Association. 2013. Diagnostic and Statistical Manual of Mental Disorders (DSM-5). American Psychiatric Publishing.

Max Bain, Jaesung Huh, Tengda Han, and Andrew Zisserman. 2023. WhisperX: Time-accurate speech transcription of long-form audio.

Iz Beltagy, Matthew E. Peters, and Arman Cohan. 2020. Longformer: The long-document transformer.

Annapia Borraccino. 2025. Modeling depressive patterns in Italian discourse: Insights from natural language processing. Master's thesis, The Graduate Center, City University of New York (CUNY), New York, NY.

Sergio Burdisso, Ernesto Reyes-Ramírez, Esaú Villatoro-Tello, Fernando Sánchez-Vega, Pastor López-Monroy, and Petr Motlicek. 2024. DAIC-WOZ: On the validity of using the therapist's prompts in automatic depression detection from clinical interviews. arXiv preprint arXiv:2404.14463.

Sergio Burdisso, Esaú Villatoro-Tello, Srikanth Madikeri, and Petr Motlicek. 2023. Node-weighted Graph Convolutional Network for Depression Detection in Transcribed Clinical Interviews. In Proc. INTERSPEECH 2023, pages 3617–3621.

Loukas Ilias and Dimitris Askounis. 2024. A Cross-Attention Layer coupled with Multimodal Fusion Methods for Recognizing Depression from Spontaneous Speech. In Interspeech 2024, pages 912–916.

Kurt Kroenke, Tara W Strine, Robert L Spitzer, Janet BW Williams, Joyce T Berry, and Ali H Mokdad. 2009. The PHQ-8 as a measure of current depression in the general population. Journal of Affective Disorders, 114(1-3):163–173.

Nikolaos Malandrakis and Shrikanth S Narayanan. 2015. Therapy language analysis using automatically generated psycholinguistic norms. In Proc. Interspeech 2015.

Kirill Milintsevich, Kairit Sirts, and Gaël Dias. 2023. Towards automatic text-based estimation of depression through symptom prediction. Brain Informatics, 10(1):4.

James W Pennebaker, Matthias R Mehl, and Kate G Niederhoffer. 2003. Psychological aspects of natural language use: Our words, our selves. Annual Review of Psychology, 54(1):547–577.

Alec Radford, Jong Wook Kim, Tao Xu, Greg Brockman, Christine McLeavey, and Ilya Sutskever. 2022. Robust speech recognition via large-scale weak supervision.

Ying Shen, Huiyu Yang, and Lin Lin. 2022. Automatic depression detection: An emotional audio-textual corpus and a GRU/BiLSTM-based model. In ICASSP 2022-2022 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), pages 6247–6251. IEEE.

Allison M Tackman, David A Sbarra, Angela L Carey, M Brent Donnellan, Andrea B Horn, Nicholas S Holtzman, To'Meisha S Edwards, James W Pennebaker, and Matthias R Mehl. 2019. Depression, negative emotionality, and self-referential language: A multi-lab, multi-measure, and multi-language-task research synthesis. Journal of Personality and Social Psychology, 116(5):817.

Esaú Villatoro-Tello, Gabriela Ramírez-de-la Rosa, Daniel Gática-Pérez, Mathew Magimai.-Doss, and Héctor Jiménez-Salazar. 2021a. Approximating the mental lexicon from clinical interviews as a support tool for depression detection. In Proc. ICMI'21, pages 557–566.

Esaú Villatoro-Tello, S. Pavankumar Dubagunta, Gabriela Ramírez-de-la-Rosa, Julian Fritsch, Petr Motlicek, and Mathew Magimai-Doss. 2021b. Late Fusion of the Available Lexicon and Raw Waveform-Based Acoustic Modeling for Depression and Dementia Recognition. In Proc. Interspeech 2021, pages 1927–1931.

Chen Zhuang, Deng Jiawen, Zhou Jinfeng, Wu Jincenzi, Qian Tieyun, and Minlie Huang. 2024. Depression detection in clinical interviews with LLM-empowered structural element graph. In Proceedings of the 2024 Conference of the North American Chapter of the Association for Computational Linguistics: Human Language Technologies. Association for Computational Linguistics.

11. Language Resource References

David DeVault, Ron Artstein, Grace Benn, Teresa Dey, Ed Fast, Alesia Gainer, Kallirroi Georgila, Jon Gratch, Arno Hartholt, Margaux Lhommet, et al. 2014. SimSensei Kiosk: A virtual human interviewer for healthcare decision support. In Proceedings of the 2014 International Conference on Autonomous Agents and Multi-Agent Systems, pages 1061–1068.

Jonathan Gratch, Ron Artstein, Gale M Lucas, Giota Stratou, Stefan Scherer, Angela Nazarian, Rachel Wood, Jill Boberg, David DeVault, Stacy Marsella, et al. 2014. The distress analysis interview corpus of human and computer interviews. In LREC, volume 14, pages 3123–3128. Reykjavik.

Fuxiang Tao, Anna Esposito, and Alessandro Vinciarelli. 2023. The ANDROIDS corpus: A new publicly available benchmark for speech based depression detection. In Interspeech 2023, pages 4149–4153.
