Trust Region Constrained Bayesian Optimization with Penalized Constraint Handling


Authors: Raju Chowdhury, Tanmay Sen, Prajamitra Bhuyan

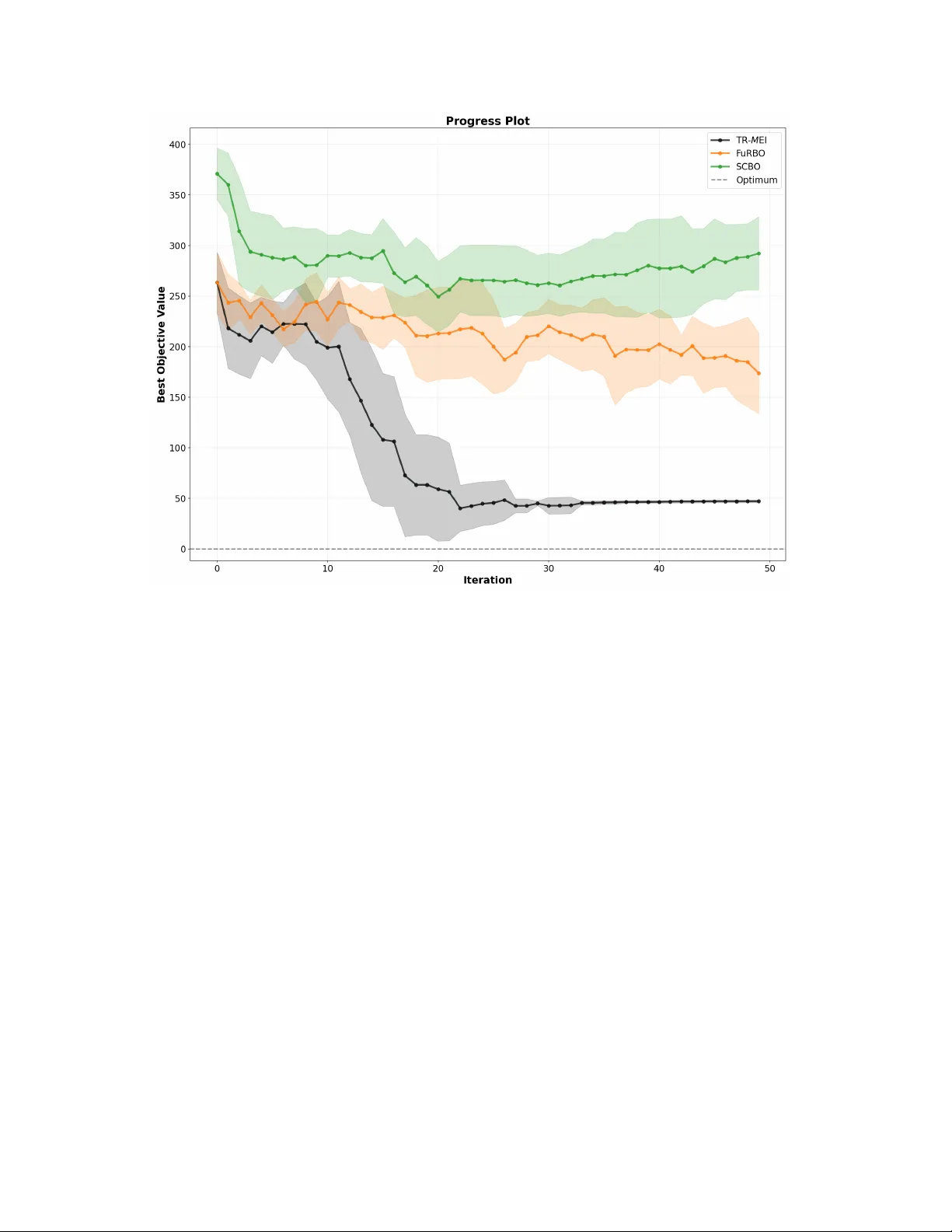

Raju Chowdhury (a), Tanmay Sen (b), Prajamitra Bhuyan (c), Biswabrata Pradhan (d)

(a, b, d) Statistical Quality Control and Operations Research Unit, Indian Statistical Institute, Kolkata, India.
(c) Operations Management Group, Indian Institute of Management Calcutta, Kolkata, India.

Abstract

Constrained optimization in high-dimensional black-box settings is difficult due to expensive evaluations, the lack of gradient information, and complex feasibility regions. In this work, we propose a Bayesian optimization method that combines a penalty formulation, a surrogate model, and a trust region strategy. The constrained problem is converted to an unconstrained form by penalizing constraint violations, which provides a unified modeling framework. A trust region restricts the search to a local region around the current best solution, which improves stability and efficiency in high dimensions. Within this region, we use the Expected Improvement acquisition function to select evaluation points by balancing improvement and uncertainty. The proposed Trust Region method integrates penalty-based constraint handling with local surrogate modeling. This combination enables efficient exploration of feasible regions while maintaining sample efficiency. We compare the proposed method with state-of-the-art methods on synthetic and real-world high-dimensional constrained optimization problems. The results show that the method identifies high-quality feasible solutions with fewer evaluations and maintains stable performance across different settings.

Keywords: black-box function, Expected Improvement, Gaussian Process, Penalty function, Hybrid optimization.

1 Introduction

In many real-world settings, optimization problems arise without an explicit mathematical form.
These problems are treated as black-box optimization problems, where the objective and constraint functions can only be evaluated at selected input points. Such problems appear in engineering design, model tuning, and scientific simulations. The difficulty becomes more pronounced in high-dimensional spaces due to the curse of dimensionality (Powell, 2019). As the number of variables grows, the search space becomes large and harder to explore with limited evaluations, so promising regions are difficult to identify efficiently. The presence of constraints further reduces the feasible region, which is often small and irregular, making the search for feasible and optimal solutions more challenging.

Bayesian optimization (BO) (Shahriari et al., 2016; Gramacy, 2020; Garnett, 2023) provides a principled approach for solving black-box optimization problems when function evaluations are expensive. It builds a probabilistic surrogate model of the unknown objective, often a Gaussian Process, which gives both a prediction of the function value and a measure of uncertainty in unexplored regions. An acquisition function then selects new evaluation points by balancing improvement in the objective against uncertainty in the predictions. The method proceeds sequentially, with each new observation updating the surrogate model and improving future decisions. This approach is sample efficient and well suited to settings where evaluations are costly and the feasible region may be limited. However, in high-dimensional settings (Greenhill et al., 2020; Papenmeier et al., 2026), standard BO often performs poorly because surrogate models become less accurate over the full domain and optimizing the acquisition function becomes difficult. This leads to inefficient exploration and unstable search behavior.
As the dimension increases, the search space grows rapidly, making it harder to identify promising regions with limited evaluations. The presence of constraints further increases the difficulty (Amini et al., 2025), as the feasible region may be small, irregular, and hard to locate. Together, these challenges make it difficult to maintain reliable performance and to efficiently identify feasible and optimal solutions.

The classical optimization literature has long studied methods for handling constraints, including penalty functions, augmented Lagrangian methods, and barrier functions (Nocedal and Wright, 2006). These approaches transform constrained problems into unconstrained ones or decompose them into simpler subproblems that can be solved iteratively. Building on these ideas, Gramacy et al. (2016) combined classical optimization with BO and proposed an Augmented Lagrangian-based BO framework, where the acquisition function is defined on the predictive mean surface. Inspired by this approach, Ariafar et al. (2019) introduced a framework, ADMMBO, that uses the big-M penalty and the Alternating Direction Method of Multipliers together with the Expected Improvement acquisition function. In a similar direction, Pourmohamad and Lee (2022) developed a log-barrier-based BO framework and constructed the acquisition function using the predictive mean. Recently, Upadhye and Chowdhury (2024) proposed a big-M penalty-based approach, known as M-LCBBO, in which a constrained version of the Lower Confidence Bound acquisition function is derived.

High-dimensional constrained optimization is challenging due to the large search space and the difficulty of identifying feasible regions. Global surrogate models often become unreliable, which motivates local modeling strategies. Trust region based methods restrict the search to local regions and adapt their size based on progress.
TuRBO (Eriksson et al., 2019) demonstrates improved performance in high dimensions, while SCBO (Eriksson and Poloczek, 2021) extends this idea to constrained settings using a hyperrectangular trust region. FuRBO (Ascia et al., 2025) further modifies the trust region by using a hyperspherical domain to improve exploration. These approaches demonstrate that local trust-region strategies are effective for high-dimensional constrained optimization.

We propose a trust region based BO framework, referred to as TR-MEI, to address high-dimensional constrained optimization problems. The framework integrates the big-M penalty approach with surrogate modeling and a trust region strategy. In this setting, we use a modified Expected Improvement acquisition function defined over the penalized objective, balancing exploration and exploitation within the local trust region.

The article is organized as follows. Section 2 presents the relevant background and existing trust region based BO methods. Section 3 describes the proposed methodology along with the trust region strategy. Section 4 presents the empirical comparison with existing methods on synthetic and real-world problems. Section 5 concludes the article with a discussion and directions for future research.

2 Background

In this Section, we briefly describe Bayesian Optimization and its main components, including surrogate modeling and the Expected Improvement acquisition function for selecting evaluation points. We also outline trust region based Bayesian optimization approaches for high-dimensional settings.

2.1 Bayesian Optimization

Bayesian Optimization (BO) (Shahriari et al., 2016; Frazier, 2018) is an effective method for optimizing black-box functions. It is a derivative-free, sequential approach that aims to find the optimum with a small number of evaluations. BO has two main components.
The first is a surrogate model that approximates the original function. The second is an acquisition function that guides the selection of the next sample point. A widely used surrogate is the Gaussian Process (GP) (Rasmussen and Williams, 2006), defined by its mean and covariance (kernel) function. Among acquisition functions, Expected Improvement (EI) (Garnett, 2023) is well structured, easy to compute, and widely used. Below, we give a brief mathematical description of GP surrogate modeling and EI.

Surrogate Modeling: Let $D_n = \{x_i, f(x_i)\}_{i=1}^{n}$ denote the observed input-output pairs. We model these data using a GP $Y(\cdot)$ with mean function $m(\cdot)$ and covariance function $k(\cdot,\cdot)$, whose parameters are estimated from $D_n$. For a new input $x$, the trained model gives a posterior prediction that follows a normal distribution with mean $\mu(x)$ and variance $\sigma^2(x)$ (Rasmussen and Williams, 2006). With a zero mean function, $m(\cdot) \equiv 0$ (the choice used in Section 4), these are

$$
Y(x) \mid D_n \sim \mathcal{N}\big(\mu(x), \sigma^2(x)\big), \qquad
\mu(x) = k(x, X)\, K^{-1} y, \qquad
\sigma^2(x) = k(x, x) - k(x, X)\, K^{-1} k(X, x),
$$

where $X = [x_1, \ldots, x_n]$, $y = [f(x_1), \ldots, f(x_n)]^\top$, and $K$ is the covariance matrix with entries $K_{ij} = k(x_i, x_j)$. These posterior predictive quantities, $\mu(x)$ and $\sigma^2(x)$, are used in the EI acquisition function.

Expected Improvement (EI): The idea of EI is based on the improvement statistic $I(x) = \max\{0, f^* - Y(x)\}$, which measures the improvement over the current best observed value $f^* = \min_i f(x_i)$. Based on this, Jones et al. (1998) define the EI acquisition function as the expectation of $I(x)$:

$$
\mathrm{EI}(x) = \big(f^* - \mu(x)\big)\, \Phi\!\left(\frac{f^* - \mu(x)}{\sigma(x)}\right) + \sigma(x)\, \phi\!\left(\frac{f^* - \mu(x)}{\sigma(x)}\right), \tag{1}
$$

where $\mu(x)$ and $\sigma(x)$ are the posterior predictive mean and standard deviation of $Y(x)$ at a point $x$, and $\Phi(\cdot)$ and $\phi(\cdot)$ denote the cumulative distribution function and probability density function of the standard normal distribution. EI balances the trade-off between exploration and exploitation during the search process. The first term emphasizes exploitation by favoring points whose predicted mean $\mu(x)$ is low relative to $f^*$. The second term emphasizes exploration by favoring points with high uncertainty $\sigma(x)$. The next evaluation point is obtained by maximizing EI, that is, $x^* = \arg\max_x \mathrm{EI}(x)$, which is then used for the next function evaluation and model update.

2.2 Trust Region CBO Approaches

Solving a constrained optimization problem in a high-dimensional space using BO is challenging due to the large search space and the influence of the constraints. To handle high dimensionality, the trust region method restricts the search to a local region around the current best solution. This region adapts during the optimization process: it expands when improvements are observed and shrinks when progress slows. This local search improves efficiency and stability in high-dimensional settings. Here, we discuss two recently proposed trust-region based BO approaches.

2.2.1 Scalable Constrained Bayesian Optimization (SCBO)

Building on the trust-region framework (Yuan, 2015), Eriksson et al. (2019) proposed the trust region BO (TuRBO) algorithm for solving unconstrained black-box optimization problems in high-dimensional settings. Later, Eriksson and Poloczek (2021) extended TuRBO to Scalable Constrained Bayesian Optimization (SCBO) to handle high-dimensional black-box optimization problems with constraints. SCBO initializes a trust region, that is, a hyperrectangle, around the best feasible solution found so far.
If no feasible point is available, it is centered at the point with the smallest constraint violation. At each iteration, SCBO generates candidate points within the current trust region and uses Thompson sampling (TS) (Thompson, 1933) to select the next function evaluation. TS balances improvement over the current best value against the feasibility of the constraints. The size of the trust region is also adjusted during the optimization process: it expands when successful improvements are observed and shrinks when the search fails to make progress. This adaptive strategy helps maintain efficient, stable performance in high-dimensional constrained settings.

2.2.2 Feasibility-Driven Trust Region Bayesian Optimization (FuRBO)

The FuRBO framework (Ascia et al., 2025) builds on the TuRBO algorithm (Eriksson et al., 2019) but differs in the way the trust region is defined. Similar to SCBO, the trust region is centered at the best feasible point, or at the point with the smallest constraint violation when no feasible solution is available. However, FuRBO defines the trust region as a hypersphere instead of a hyperrectangle. This spherical region provides an isotropic search space around the center, which avoids bias toward coordinate directions. Candidate points are sampled within this hypersphere, which leads to a more uniform exploration of the local region. The size of the trust region is adapted during the optimization process: it expands when improvements are observed and shrinks when progress slows down. This design improves the robustness of the search in high-dimensional constrained settings.

3 Methodology

This Section discusses the methodology, which combines the big-M penalty approach for constraint handling with a trust region strategy to address the high dimensionality of the optimization problem.
3.1 Formulation

The general constrained optimization problem is defined as

$$
\min_{x \in \mathcal{B}} f(x) \quad \text{subject to} \quad g_j(x) \le 0, \quad j = 1, 2, \ldots, J, \tag{2}
$$

where $\mathcal{B}$ is a high-dimensional search space, typically a bounded domain in $\mathbb{R}^D$. In this formulation, $f(x)$ denotes the objective function and $g_j(x)$, $j = 1, \ldots, J$, denote the constraint functions. All functions in (2) are treated as black boxes: their analytical forms are unknown, and gradient information is not available. Only point-wise evaluations of $f(x)$ and $g_j(x)$ can be obtained, and these are often expensive to compute. The goal is to identify a feasible solution that satisfies all constraints while minimizing the objective function using a limited number of evaluations.

In recent years, converting a constrained problem into an unconstrained one has become common in the BO literature (Gramacy et al., 2016; Ariafar et al., 2019; Upadhye and Chowdhury, 2024; Zhao and Xu, 2024; Chowdhury and Upadhye, 2025). These approaches rely on penalty-based methods from classical optimization (Nocedal and Wright, 2006), where constraint violations are incorporated into the objective function.

In this article, we adopt the big-M penalty approach, which imposes a penalty when constraints are violated. The constrained problem is reformulated as the following unconstrained problem (Ariafar et al., 2019; Upadhye and Chowdhury, 2024; Chowdhury and Upadhye, 2025):

$$
\min_{x \in \mathcal{B}} F(x) = f(x) + M \sum_{j=1}^{J} \mathbb{I}\big(g_j(x) > 0\big), \tag{3}
$$

where $\mathbb{I}(\cdot)$ is the indicator function that returns 1 if the constraint is violated and 0 otherwise, and $M$ is a large positive constant that penalizes infeasible solutions. This formulation assigns an additional cost to any point that violates one or more constraints. As a result, feasible solutions are always preferred over infeasible ones when $M$ is sufficiently large, for instance when $M$ exceeds the range of $f$ over $\mathcal{B}$.
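As a small illustration of (3), the sketch below evaluates the big-M penalized objective for the constrained Ackley problem (A1) of Appendix A. The value M = 1e3 is a hypothetical choice (the text only requires M to be large), and the function names are illustrative, not part of any library.

```python
import numpy as np

# Hypothetical penalty constant: the text only requires M to be "large".
M = 1e3


def ackley(x, a=20.0, b=0.2, c=2 * np.pi):
    """Ackley function with the standard parameters from Appendix A."""
    x = np.asarray(x, dtype=float)
    d = x.size
    term1 = -a * np.exp(-b * np.sqrt(np.sum(x**2) / d))
    term2 = -np.exp(np.sum(np.cos(c * x)) / d)
    return term1 + term2 + a + np.e


def constraints_a1(x):
    """Constraints of problem (A1), written as g_j(x) <= 0."""
    x = np.asarray(x, dtype=float)
    return np.array([
        np.sum(x),                  # g_1: sum_i x_i <= 0
        np.linalg.norm(x) - 5.0,    # g_2: ||x||_2 <= 5
    ])


def penalized_objective(x, f=ackley, g=constraints_a1, M=M):
    """Big-M penalized objective of eq. (3): f(x) + M * (number of violated constraints)."""
    n_violated = int(np.sum(g(x) > 0))
    return f(x) + M * n_violated
```

Each violated constraint adds exactly M to F, regardless of how far the point lies outside the feasible region. For example, with d = 20 the point x = (1, ..., 1) violates only the sum constraint (its norm is √20 ≈ 4.47 ≤ 5), so F(x) − f(x) = M, while 3·(1, ..., 1) violates both constraints and receives 2M.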
The transformed objective $F(x)$ can then be optimized using standard BO methods without explicitly handling constraints.

Now, we model the unconstrained objective in (3) using independent GPs for the objective and each constraint. Let $Y_f(x)$ denote the GP model for the objective and $Y_{g_j}(x)$ denote the GP model for the $j$-th constraint. The resulting surrogate for the penalized objective is given by

$$
Y_F(x) = Y_f(x) + M \sum_{j=1}^{J} \mathbb{I}\big(Y_{g_j}(x) > 0\big). \tag{4}
$$

This formulation combines the predictive distributions of the objective and constraints. Since each component is modeled independently, the predictive mean and variance of $Y_F(x)$ at a new point $x$ are

$$
\mathbb{E}\big[Y_F(x)\big] = \mu_F(x) = \mu_f(x) + M \sum_{j=1}^{J} p\big(Y_{g_j}(x) > 0\big), \tag{5}
$$

$$
\mathbb{V}\big[Y_F(x)\big] = \sigma_F^2(x) = \sigma_f^2(x) + M^2 \sum_{j=1}^{J} p\big(Y_{g_j}(x) > 0\big)\Big(1 - p\big(Y_{g_j}(x) > 0\big)\Big), \tag{6}
$$

where $p\big(Y_{g_j}(x) > 0\big) = 1 - \Phi\big(-\mu_{g_j}(x)/\sigma_{g_j}(x)\big)$ denotes the probability of violating the $j$-th constraint. The mean $\mu_F(x)$ increases with the probability of infeasibility, which penalizes regions that are likely to violate constraints. The variance $\sigma_F^2(x)$ reflects both the uncertainty in the objective and the uncertainty in constraint satisfaction. Using these quantities, we define the penalized EI acquisition function (Chowdhury and Upadhye, 2025), which takes the form of (1) with $\mu_F$ and $\sigma_F$ in place of $\mu$ and $\sigma$:

$$
M\mathrm{EI}(x) = \big(F^* - \mu_F(x)\big)\, \Phi\!\left(\frac{F^* - \mu_F(x)}{\sigma_F(x)}\right) + \sigma_F(x)\, \phi\!\left(\frac{F^* - \mu_F(x)}{\sigma_F(x)}\right), \tag{7}
$$

where $F^* = \min_i F(x_i)$ is the best penalized value observed so far. This acquisition function guides the search toward regions with low objective values while accounting for the likelihood of constraint satisfaction.

Next, we integrate the MEI acquisition function with a trust region strategy to obtain Trust Region MEI (TR-MEI). Instead of optimizing MEI over the entire search space $\mathcal{B}$, the optimization is restricted to a local trust region centered at the current best solution.
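The moments (5)–(6) and the acquisition (7) only require the posterior means and standard deviations of the independent GPs, so MEI can be computed in closed form. The following is a minimal NumPy/SciPy sketch that assumes these moments have already been obtained from fitted models (for example, with GPyTorch); the function name `m_ei` and its argument layout are hypothetical.

```python
import numpy as np
from scipy.stats import norm


def m_ei(mu_f, sigma_f, mu_g, sigma_g, F_best, M):
    """Penalized Expected Improvement (MEI), following eqs. (5)-(7).

    mu_f, sigma_f : shape (n,)   posterior mean / std of the objective GP
    mu_g, sigma_g : shape (n, J) posterior mean / std of the J constraint GPs
    F_best        : best penalized value F* observed so far
    M             : big-M penalty constant
    """
    mu_f = np.asarray(mu_f, dtype=float)
    sigma_f = np.asarray(sigma_f, dtype=float)
    mu_g = np.asarray(mu_g, dtype=float)
    sigma_g = np.asarray(sigma_g, dtype=float)

    # p(Y_gj(x) > 0) = 1 - Phi(-mu/sigma) = Phi(mu/sigma)
    p_viol = norm.cdf(mu_g / sigma_g)

    # Moments of the penalized surrogate Y_F(x), eqs. (5) and (6)
    mu_F = mu_f + M * p_viol.sum(axis=1)
    sigma_F = np.sqrt(sigma_f**2 + M**2 * (p_viol * (1.0 - p_viol)).sum(axis=1))

    # Closed-form EI of eq. (7); where sigma_F == 0, EI reduces to max(F* - mu_F, 0)
    improvement = F_best - mu_F
    with np.errstate(divide="ignore", invalid="ignore"):
        z = improvement / sigma_F
        ei = improvement * norm.cdf(z) + sigma_F * norm.pdf(z)
    return np.where(sigma_F > 0, ei, np.maximum(improvement, 0.0))
```

When every constraint is almost surely satisfied (all violation probabilities near 0), $\mu_F = \mu_f$ and $\sigma_F = \sigma_f$, so MEI reduces to the standard EI in (1); with $F^* = \mu_f$ it equals $\sigma_f\,\phi(0) = \sigma_f/\sqrt{2\pi}$. A likely violated constraint inflates both $\mu_F$ and $\sigma_F$, which in practice drives MEI toward zero.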
3.2 Trust-Region MEI

In a high-dimensional constrained optimization setting, the trust region approach is effective for locating feasible and optimal solutions. In the literature, SCBO and FuRBO are two trust region based methods that differ in how the trust region is shaped and, consequently, in how the next sample point is selected during the BO procedure. As discussed in Section 2.2, SCBO defines the trust region over a hyperrectangular domain, while FuRBO defines it over a hyperspherical domain.

In this article, we adopt the hyperrectangular domain and use coordinate-wise perturbation to generate candidate points within the trust region. We also employ the MEI acquisition function within this local region. At each iteration, a trust region is defined around the best observed point. Candidate points are generated within this region and evaluated using the MEI criterion. The size of the trust region is updated during the optimization process: it expands when improvements are observed and shrinks when no progress is made. This adaptive mechanism focuses the search on promising regions while maintaining stability.

By combining the big-M penalty-based formulation with a trust region strategy, TR-MEI improves efficiency in high-dimensional constrained problems. It reduces the effect of the large search space and guides the optimization toward feasible and optimal regions.

Further, we compare the proposed TR-MEI method with SCBO and FuRBO to highlight the differences:

• TR-MEI solves an unconstrained version of the constrained problem, while SCBO and FuRBO handle the constrained problem explicitly.
• TR-MEI and SCBO define a hyperrectangular trust region, while FuRBO uses a hyperspherical one.
• TR-MEI uses an EI-type acquisition function (MEI) with a closed-form expression, while SCBO and FuRBO rely on Thompson sampling strategies.

The advantages of the proposed methodology are as follows. First, the TR-MEI acquisition function admits an explicit mathematical expression.
This allows direct computation and avoids the need for approximation or sampling-based estimates. As a result, the method remains simple to implement and computationally efficient.

Second, the method can start from infeasible initial points. The big-M penalty formulation assigns higher objective values to constraint violations, which guides the search toward feasible regions even when no feasible solution is available at the beginning. This makes the approach robust in practical scenarios where feasible initialization is difficult.

Finally, the integration of the trust region strategy enables the method to handle high-dimensional constrained optimization problems effectively. By restricting the search to a local region and adapting its size based on observed progress, the method improves stability and reduces the effect of the large search space while maintaining convergence toward feasible and optimal solutions.

4 Experiments

This section presents the comparison results of the proposed algorithm on a range of synthetic and real-world high-dimensional constrained optimization problems. The goal is to evaluate the method's performance in terms of solution quality, constraint satisfaction, and computational efficiency.

Algorithm 1: Trust Region MEI
1: Evaluate initial design X and train surrogate models (f, g_j)
2: x_best ← arg min_{x ∈ B} S(X; f, g_j)
3: while optimization budget not exhausted do
4:     TR ← define TR
5:     x_next ← TR-MEI((f, g_j), TR)
6:     f_next ← f(x_next)
7:     g_j^next ← g_j(x_next)
8:     S ← S ∪ {(x_next, f_next, g_j^next)}
9:     Fit surrogate models over S
10:    Update n_s and n_f
11:    if n_s = τ_s or n_f = τ_f then
12:        L ← adjust(L)
13:    end if
14:    x_best ← arg min_{x ∈ X} S(X; f, g_j)
15: end while
16: return x_best

4.1 Experimental setup

We evaluate the proposed TR-MEI algorithm against SCBO and FuRBO.
The comparison focuses on their performance in high-dimensional constrained optimization problems, where both feasibility and sample efficiency are important.

For evaluation, we consider three representative test functions, namely Ackley, Levy, and Rastrigin. These functions capture different landscape characteristics, such as separable, ill-conditioned, and multimodal behavior. Each function is defined in 20 dimensions with moderate constraint difficulty. The same experimental setting is used for all methods to ensure a fair comparison. Each algorithm begins with an initial design of 2D points and is allowed a total evaluation budget of 50 function evaluations. All experiments are repeated multiple times with different initial designs and random seeds to account for variability in performance. For a fair comparison across all frameworks, the number of initial random points is kept the same: we set n = 2 × d, where d is the dimension of the problem. We use a zero mean function and a Matérn 5/2 kernel for fitting the GP surrogate model. The results are averaged over 30 runs and reported with one standard error to reflect variability.

All implementations of TR-MEI, FuRBO, and SCBO are carried out in Python using the GPyTorch (Gardner et al., 2018) and BoTorch (Balandat et al., 2020) libraries. A common implementation framework is used for all methods to ensure consistency in modeling and evaluation. This setup allows a fair comparison across methods while maintaining computational efficiency. Algorithm 1 summarizes the TR-MEI procedure.

Feasible solutions are always preferred over infeasible ones. Any infeasible point is assigned the worst objective value observed for the corresponding problem across all methods. This ensures that the evaluation properly reflects both optimality and constraint satisfaction.
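To make the loop of Algorithm 1 concrete, the sketch below implements a minimal TR-MEI iteration in plain NumPy/SciPy. It is not the authors' GPyTorch/BoTorch implementation, and several details are assumptions made for illustration: the domain is the unit cube, GP hyperparameters are fixed rather than fitted (zero-mean Matérn 5/2 on standardized data, lengthscale 0.5), the trust-region constants (initial side 0.8, bounds 0.5^7 and 1.6, τ_s = 3, τ_f = max(4, D)) are chosen in the spirit of TuRBO, candidates are drawn uniformly rather than from a Sobol sequence, M = 1e3, and the trust region is not restarted when it collapses. The function `tr_mei` and its helpers are hypothetical names.

```python
import numpy as np
from scipy.stats import norm


def matern52(A, B, lengthscale=0.5):
    """Matern-5/2 kernel with unit output scale (hyperparameters fixed, not fitted)."""
    r = np.sqrt(((A[:, None, :] - B[None, :, :]) ** 2).sum(-1)) / lengthscale
    return (1.0 + np.sqrt(5.0) * r + 5.0 / 3.0 * r**2) * np.exp(-np.sqrt(5.0) * r)


def gp_posterior(X, y, Xq, noise=1e-6):
    """Zero-mean GP posterior mean and std at Xq, fitted on standardized targets."""
    y_mean, y_std = y.mean(), y.std() + 1e-12
    ys = (y - y_mean) / y_std
    chol = np.linalg.cholesky(matern52(X, X) + noise * np.eye(len(X)))
    Kq = matern52(Xq, X)
    alpha = np.linalg.solve(chol.T, np.linalg.solve(chol, ys))   # K^{-1} y
    v = np.linalg.solve(chol, Kq.T)
    var = np.maximum(1.0 - (v**2).sum(axis=0), 1e-12)            # k(x, x) = 1
    return y_mean + y_std * (Kq @ alpha), y_std * np.sqrt(var)


def m_ei(mu_f, sd_f, mu_g, sd_g, F_best, M):
    """Penalized EI, eqs. (5)-(7); mu_g and sd_g have shape (n_candidates, J)."""
    p = norm.cdf(mu_g / sd_g)                                    # P(Y_gj(x) > 0)
    mu_F = mu_f + M * p.sum(axis=1)                              # eq. (5)
    sd_F = np.sqrt(sd_f**2 + M**2 * (p * (1 - p)).sum(axis=1))   # eq. (6)
    z = (F_best - mu_F) / sd_F
    return (F_best - mu_F) * norm.cdf(z) + sd_F * norm.pdf(z)    # eq. (7)


def tr_mei(f, gs, dim, budget, M=1e3, n_cand=500, seed=0):
    """Minimal TR-MEI loop on [0, 1]^dim following the structure of Algorithm 1."""
    rng = np.random.default_rng(seed)
    L, L_min, L_max = 0.8, 0.5**7, 1.6     # trust-region side lengths (assumed)
    tau_s, tau_f = 3, max(4, dim)          # success / failure tolerances (assumed)
    n_s = n_f = 0

    X = rng.random((2 * dim, dim))         # initial design of 2D points
    fv = np.array([f(x) for x in X])
    gv = np.array([[g(x) for g in gs] for x in X])
    Fv = fv + M * (gv > 0).sum(axis=1)     # penalized objective, eq. (3)

    while len(X) < budget:
        x_best = X[np.argmin(Fv)]
        # Hyperrectangular trust region centered at x_best, clipped to the domain
        lb = np.clip(x_best - L / 2, 0.0, 1.0)
        ub = np.clip(x_best + L / 2, 0.0, 1.0)
        # Coordinate-wise perturbation of x_best (at least one coordinate changed)
        pert = lb + (ub - lb) * rng.random((n_cand, dim))
        mask = rng.random((n_cand, dim)) < min(1.0, 20.0 / dim)
        mask[np.arange(n_cand), rng.integers(0, dim, n_cand)] = True
        cand = np.where(mask, pert, x_best)

        # Independent GPs for f and each g_j, then pick the MEI maximizer
        mu_f, sd_f = gp_posterior(X, fv, cand)
        post = [gp_posterior(X, gv[:, j], cand) for j in range(gv.shape[1])]
        mu_g = np.column_stack([m for m, _ in post])
        sd_g = np.column_stack([s for _, s in post])
        x_next = cand[np.argmax(m_ei(mu_f, sd_f, mu_g, sd_g, Fv.min(), M))]

        f_next = f(x_next)
        g_next = np.array([g(x_next) for g in gs])
        F_next = f_next + M * np.sum(g_next > 0)

        # Success/failure counters drive the trust-region size (Algorithm 1, lines 10-13)
        if F_next < Fv.min() - 1e-3 * abs(Fv.min()):
            n_s, n_f = n_s + 1, 0
        else:
            n_s, n_f = 0, n_f + 1
        if n_s == tau_s:
            L, n_s = min(2.0 * L, L_max), 0
        elif n_f == tau_f:
            L, n_f = max(L / 2.0, L_min), 0   # no restart in this sketch

        X = np.vstack([X, x_next])
        fv, Fv = np.append(fv, f_next), np.append(Fv, F_next)
        gv = np.vstack([gv, g_next])

    i = np.argmin(Fv)
    return X[i], fv[i], gv[i]
```

For example, `tr_mei(f, [g], dim=4, budget=20)` with a hypothetical quadratic objective `f` and a single linear constraint `g` returns the point with the lowest penalized value found, together with its objective and constraint values. Modeling f and each g_j separately, rather than fitting one GP to the discontinuous F, mirrors the surrogate in (4).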
4.2 Results

In this subsection, we compare TR-MEI, FuRBO, and SCBO on the Ackley, Levy, and Rastrigin synthetic problems in a 20-dimensional constrained setting. These benchmark functions are widely used to evaluate optimization algorithms due to their complex landscapes. The Ackley function is characterized by a multimodal surface with many local minima, while the Levy function presents a challenging structure with strong nonlinearity. The mathematical formulations of the problems are given in (A1), (A2), and (A3). In Figures 1, 2, and 3, we visualize the performance of each method across the different benchmark problems.

Figure 1: Ackley

For the Ackley problem, the results show the ability of each method to handle a multimodal landscape and identify feasible solutions. In Figure 1, TR-MEI converges to a feasible optimal solution faster than FuRBO and SCBO, which continue to improve over iterations. Table 1 shows that TR-MEI attains the lowest mean best value among the three methods, and its small standard deviation indicates consistent performance across runs.

Table 1: Best feasible solution value, reported as mean ± standard deviation.

| Problem | TR-MEI | FuRBO | SCBO |
|---|---|---|---|
| Ackley | 0.0085 ± 0.0102 | 4.4120 ± 0.3733 | 7.3662 ± 1.0541 |
| Levy | 0.0071 ± 0.0002 | 4.8199 ± 0.5856 | 5.4482 ± 0.3754 |
| Rastrigin | 47.0507 ± 1.1983 | 173.7190 ± 40.0435 | 292.2801 ± 36.344 |

For the Levy problem, the comparison shows how the methods handle strong nonlinearity and complex constraint structures. Figure 2 shows that TR-MEI reaches a feasible optimal solution after around 15 function evaluations, while FuRBO and SCBO approach the optimum only after about 50 evaluations. Table 1 confirms the consistency of this result in terms of average performance.

Figure 2: Levy

For the Rastrigin problem, the results show the performance of each method in a setting with many regularly distributed local minima, which makes global optimization difficult.
Figure 3 shows that TR-MEI achieves better performance than FuRBO and SCBO. This observation is also supported by Table 1.

Figure 3: Rastrigin

4.3 Application to portfolio optimization

To be written.

5 Discussion

Identifying a feasible global optimum of a constrained optimization problem in a high-dimensional search space is challenging. In this article, we propose a hybrid BO methodology that combines the classical big-M penalty, GP surrogate modeling, and a trust region algorithm. The proposed TR-MEI method uses an EI-based acquisition function, which balances exploration and exploitation within a local trust region. Integrating this acquisition function within the trust region enables the method to select informative points based on the predicted mean and uncertainty. As a result, the proposed TR-MEI approach provides an effective balance between feasibility and optimality while maintaining sample efficiency in high-dimensional constrained optimization problems.

Finally, the comparison of TR-MEI with SCBO and FuRBO on both synthetic and real-world problems demonstrates its efficiency and effectiveness. The results show that TR-MEI is able to identify high-quality feasible solutions with fewer function evaluations. It maintains stable performance across different problem settings and scales well with increasing dimensionality. These observations highlight the advantage of combining the penalty-based formulation with a trust region strategy in constrained BO.

Appendix A

The mathematical descriptions of the synthetic constrained optimization problems are given below.

Constrained Ackley Problem

Let $x = (x_1, \ldots, x_d) \in \mathbb{R}^d$. The Ackley function is defined as

$$
f(x) = -a \exp\!\left(-b \sqrt{\frac{1}{d} \sum_{i=1}^{d} x_i^2}\right) - \exp\!\left(\frac{1}{d} \sum_{i=1}^{d} \cos(c\, x_i)\right) + a + e,
$$

where the standard parameter values are $a = 20$, $b = 0.2$, and $c = 2\pi$.
The constrained optimization problem is

$$
\min_{x \in \mathbb{R}^d} f(x) \quad \text{s.t.} \quad \sum_{i=1}^{d} x_i \le 0, \quad \|x\|_2 \le 5. \tag{A1}
$$

Constrained Levy Problem

Let $x = (x_1, \ldots, x_d) \in \mathbb{R}^d$. Define

$$
w_i = 1 + \frac{x_i - 1}{4}, \qquad i = 1, \ldots, d.
$$

The optimization problem is

$$
\min_{x \in \mathbb{R}^d} \; \sin^2(\pi w_1) + \sum_{i=1}^{d-1} (w_i - 1)^2 \big[1 + 10 \sin^2(\pi w_i + 1)\big] + (w_d - 1)^2 \big[1 + \sin^2(2\pi w_d)\big]
\quad \text{s.t.} \quad \sum_{i=1}^{d} x_i \le 0, \quad \|x\|_2 \le 5. \tag{A2}
$$

Constrained Rastrigin Problem

Let $x = (x_1, \ldots, x_d) \in \mathbb{R}^d$. The Rastrigin function is defined as

$$
f(x) = A d + \sum_{i=1}^{d} \big[x_i^2 - A \cos(2\pi x_i)\big],
$$

where the standard parameter value is $A = 10$. The constrained optimization problem is

$$
\min_{x \in \mathbb{R}^d} f(x) \quad \text{s.t.} \quad \sum_{i=1}^{d} x_i \le 0, \quad \|x\|_2 \le 5. \tag{A3}
$$

Declaration of Interest

The authors declare no conflicts of interest. All authors contributed equally.

References

Amini, S., Vannieuwenhuyse, I., and Morales-Hernández, A. (2025). Constrained Bayesian Optimization: A Review. IEEE Access, 13:1581–1593.

Ariafar, S., Coll-Font, J., Brooks, D., and Dy, J. (2019). ADMMBO: Bayesian optimization with unknown constraints using ADMM. Journal of Machine Learning Research, 20(123):1–26.

Ascia, P., Raponi, E., Bäck, T., and Duddeck, F. (2025). Feasibility-Driven Trust Region Bayesian Optimization. In Akoglu, L., Doerr, C., van Rijn, J. N., Garnett, R., and Gardner, J. R., editors, Proceedings of the Fourth International Conference on Automated Machine Learning, volume 293 of Proceedings of Machine Learning Research, pages 2/1–28. PMLR.

Balandat, M., Karrer, B., Jiang, D. R., Daulton, S., Letham, B., Wilson, A. G., and Bakshy, E. (2020). BoTorch: A Framework for Efficient Monte-Carlo Bayesian Optimization. In Advances in Neural Information Processing Systems 33.

Chowdhury, R. and Upadhye, N. S. (2025). Addressing mixed constraints: an improved framework for black-box optimization. Journal of Global Optimization, 92:889–908.
Eriksson, D., Pearce, M., Gardner, J., Turner, R. D., and Poloczek, M. (2019). Scalable Global Optimization via Local Bayesian Optimization. Advances in Neural Information Processing Systems, 32.

Eriksson, D. and Poloczek, M. (2021). Scalable Constrained Bayesian Optimization. In International Conference on Artificial Intelligence and Statistics, pages 730–738. PMLR.

Frazier, P. I. (2018). Bayesian Optimization. In Recent Advances in Optimization and Modeling of Contemporary Problems, pages 255–278. INFORMS.

Gardner, J. R., Pleiss, G., Bindel, D., Weinberger, K. Q., and Wilson, A. G. (2018). GPyTorch: Blackbox Matrix-Matrix Gaussian Process Inference with GPU Acceleration. In Advances in Neural Information Processing Systems.

Garnett, R. (2023). Bayesian Optimization. Cambridge University Press.

Gramacy, R. B. (2020). Surrogates. Chapman and Hall/CRC.

Gramacy, R. B., Gray, G. A., Le Digabel, S., Lee, H. K., Ranjan, P., Wells, G., and Wild, S. M. (2016). Modeling an augmented Lagrangian for blackbox constrained optimization. Technometrics, 58(1):1–11.

Greenhill, S., Rana, S., Gupta, S., Vellanki, P., and Venkatesh, S. (2020). Bayesian Optimization for Adaptive Experimental Design: A Review. IEEE Access, 8:13937–13948.

Jones, D. R., Schonlau, M., and Welch, W. J. (1998). Efficient Global Optimization of Expensive Black-Box Functions. Journal of Global Optimization, 13(4):455–492.

Nocedal, J. and Wright, S. J. (2006). Numerical Optimization. Springer, 2nd edition.

Papenmeier, L., Poloczek, M., and Nardi, L. (2026). Understanding high-dimensional Bayesian optimization. In Proceedings of the 42nd International Conference on Machine Learning, ICML'25. JMLR.org.

Pourmohamad, T. and Lee, H. K. (2022). Bayesian optimization via barrier functions. Journal of Computational and Graphical Statistics, 31(1):74–83.

Powell, W. B. (2019). A unified framework for stochastic optimization. European Journal of Operational Research, 275(3):795–821.

Rasmussen, C. E. and Williams, C. K. (2006). Gaussian Processes for Machine Learning, volume 2. MIT Press, Cambridge, MA.

Shahriari, B., Swersky, K., Wang, Z., Adams, R. P., and de Freitas, N. (2016). Taking the Human Out of the Loop: A Review of Bayesian Optimization. Proceedings of the IEEE, 104(1):148–175.

Thompson, W. R. (1933). On the Likelihood That One Unknown Probability Exceeds Another in View of the Evidence of Two Samples. Biometrika, 25(3/4):285–294.

Upadhye, N. S. and Chowdhury, R. (2024). Constrained Bayesian optimization with lower confidence bound. Technometrics, 66(4):561–574.

Yuan, Y.-X. (2015). Recent advances in trust region algorithms. Mathematical Programming, 151(1):249–281.

Zhao, J. and Xu, J. (2024). Bayesian Optimization via Exact Penalty. Technometrics.
