Project and Generate: Divergence-Free Neural Operators for Incompressible Flows

Learning-based models for fluid dynamics often operate in unconstrained function spaces, leading to physically inadmissible, unstable simulations. While penalty-based methods offer soft regularization, they provide no structural guarantees, resulting…

Authors: Xigui Li, Hongwei Zhang, Ruoxi Jiang

Pr oject and Generate: Div ergence-Fr ee Neural Operators f or Incompr essible Flows Xigui Li * 1 2 Hongwei Zhang * 1 2 Ruoxi Jiang † 1 2 Deshu Chen 1 2 Chensen Lin 1 2 Limei Han 1 2 Y uan Qi 1 2 Xin Guo † 1 2 Y uan Cheng † 1 2 Abstract Learning-based models for fluid dynamics often operate in unconstrained function spaces, leading to physically inadmissible, unstable simulations. While penalty-based methods offer soft regulariza- tion, they provide no structural guarantees, result- ing in spurious di vergence and long-term collapse. In this work, we introduce a unified framew ork that enforces the incompressible continuity equa- tion as a hard, intrinsic constraint for both deter- ministic and generativ e modeling. First, to project deterministic models onto the diver gence-free sub- space, we integrate a differentiable spectral Leray projection grounded in the Helmholtz–Hodge de- composition, which restricts the regression hy- pothesis space to physically admissible velocity fields. Second, to generate physically consis- tent distributions, we show that simply project- ing model outputs is insufficient when the prior is incompatible. T o address this, we construct a div ergence-free Gaussian reference measure via a curl-based pushforward, ensuring the entire probability flo w remains subspace-consistent by construction. Experiments on 2D Navier–Stokes equations demonstrate exact incompressibility up to discretization error and substantially improv ed stability and physical consistency . 1. Introduction Learning-based models ha ve sho wn remarkable success in predicting and generating fluid flows, driven by adv ances in neural operators ( Li et al. , 2021 ; Lu et al. , 2021 ; Adrian * Equal contribution 1 Artificial Intelligence Innov ation and Incubation Institute, Fudan Univ ersity , Shanghai, China 2 Shanghai Academy of Artificial Intelligence for Sci- ence, Shanghai, China. Correspondence to: Ruoxi Jiang < roxie jiang@fudan.edu.cn > , Xin Guo < guoxin@sais.org.cn > , Y uan Cheng < cheng yuan@fudan.edu.cn > . Pr eprint. Marc h 26, 2026. et al. , 2025 ; Sanz-Alonso & W aniorek , 2025 ) and modern generativ e modeling frame works ( Ho et al. , 2020 ; Song et al. , 2021 ; Lipman et al. , 2023 ; Liu et al. , 2023 ; Chen et al. , 2024 ). These methods of fer substantial computational speedups ov er classical solvers and enable data-driv en emu- lation of complex dynamical systems. Howe ver , most e xist- ing approaches operate in unconstrained function spaces and do not explicitly encode the physical structures that go vern fluid dynamics. As a result, ev en models that achie ve low short-term prediction error may produce velocity fields that violate fundamental physical principles, exhibiting spurious div ergence, distorted ener gy spectra, or other nonphysical artifacts ( Richter-Po well et al. , 2022 ; Hansen et al. , 2023 ; Krishnapriyan et al. , 2021 ; W ang et al. , 2022 ; Shu et al. , 2023 ). This issue is particularly pronounced for incompressible flows, where physical admissibility is determined by an exact conserv ation law rather than an empirical regularity . In incompressible fluid dynamics, the velocity field u is required to satisfy the continuity equation ∇ · u = 0 , (1) which expresses mass conserv ation and restricts solutions to a closed linear subspace of div ergence-free vector fields. Unlike approximate physical priors, this constraint does not admit small, localized violations: an y de viation nec- essarily alters the global flow structure. As a result, even weak di vergence errors can lead to artificial sources or sinks and qualitati vely incorrect dynamics ( Mohan et al. , 2019 ; Richter-Po well et al. , 2022 ). From a learning perspectiv e, howe ver , most data-driv en models are formulated and trained in unconstrained function spaces, where the div ergence-free structure is not preserved by construction. Even when training data satisfy ∇ · u = 0 , approximation errors, finite model capacity , and optimiza- tion artifacts can introduce spurious di vergence into predic- tions. Because incompressibility defines a global subspace rather than a pointwise condition, such violations cannot be eliminated by local corrections and may accumulate over time, degrading long-term stability . In contrast, classical numerical solvers enforce incompressibility exactly through 1 Project and Generate: Diver gence-Free Neural Operators f or Incompressible Flows (a) Qualitative Comparison of Physica l Consistency (b) Comparison of Computational P aradigms Baseline (Unconstrained) Input Base Predictor Output V iolates Continuity Leray Projection (Constrained) Input Base Predictor Leray Projector Output Intermediate ! " Strictly Conservative Numerical Solver (CFD) Input Advection-Diffusion Pressure Poisson Solver Output T entative (! ∗ ) Computationally Expensive F igure 1. Motivation f or the P RO J E C T & G E N E R ATE framework illustrated using numerical solvers. (a) V isual comparison. V elocity streamlines (top, colored by error magnitude), di ver gence fields (middle), and recovered physical pressure (bottom). The Baseline approach (middle), which enforces only the momentum equation, exhibits se vere violations of mass conservation with nonzero di vergence and noisy pressure fields. A Leray-pr ojected solution (right) enforces incompressibility via a spectral Leray projection, yielding a div ergence-free velocity field ( ∇ · u = 0 ) with machine precision and recov ering a smooth, physically consistent pressure field. The result is visually indistinguishable from the Gr ound T ruth numerical solution (left). (b) Computational paradigms. Left: Classical numerical solvers (CFD) enforce incompressibility through iterativ e Poisson solves, which require accessing the pressure v ariable and are computationally expensi ve. Middle: Unconstrained end-to-end learning approaches typically violate the continuity constraint. Right: W e lev erage Leray projection to decouple dynamics from constraint enforcement, enabling hard physical constraints to be imposed via a fast, differentiable spectral operator . projection-based schemes, typically by introducing an aux- iliary pressure variable and solving a Poisson equation at each time step. While effecti ve, this approach is dif ficult to integrate into modern learning pipelines due to its implicit coupling and iterativ e nature. Motiv ated by this classical perspectiv e, we identify the Leray projection as the essen- tial structural component that enforces incompressibility , without explicitly introducing pressure. A common strategy to mitigate this issue is to enforce incom- pressibility through soft penalties, as in physics-informed neural networks (PINNs) and related approaches ( Raissi et al. , 2019 ). While such methods can reduce diver gence in practice, they do not guarantee exact satisfaction of the con- straint and often require careful tuning of penalty weights. More fundamentally , soft constraints treat incompressibil- ity as an auxiliary optimization objectiv e rather than as a defining property of the solution space, causing instability in challenging regimes such as high Re ynolds numbers or long-term rollouts. In this work, we adopt a different perspective and treat incompressibility as a har d structural constraint on both model outputs and generati ve processes. As shown in Fig- ure 1 , this approach effecti vely eliminates the non-physical artifacts observed in baselines and reco vers strict mass con- servation. Our approach is based on the Leray projection arising from the Helmholtz–Hodge decomposition ( Chorin , 1967 ; 1968 ), which maps arbitrary vector fields onto the div ergence-free subspace by removing the irrotational com- ponents. W e integrate this projection as a modular operator that can be applied consistently across both learning and in- ference paradigms, without modifying the underlying model architecture. This operator-le vel enforcement ensures that all predicted velocity fields lie exactly in the physically admissible function space. For regression tasks, the proposed projection guarantees incompressibility of model predictions by construction. For generativ e modeling, howe ver , enforcing di ver gence-free structure poses additional challenges. In generati ve frame- works such as flow matching, not only the generated sam- ples but also the reference measure, interpolation paths, and learned dynamics must remain compatible with the under - lying physical constraint. Naiv ely projecting samples or velocities can lead to inconsistencies between probability measures and may render the probability path ill-defined, particularly in infinite-dimensional settings where mutual 2 Project and Generate: Diver gence-Free Neural Operators f or Incompressible Flows absolute continuity is not guaranteed. Building on the func- tional flo w matching perspecti ve ( Kerrigan et al. , 2024 ), we extend our framework to generativ e modeling by con- structing probability paths that ev olve entirely within the div ergence-free subspace. T o this end, we design the refer- ence measure to be compatible with the projection operator, so that the induced probability path is well-defined on the space of incompressible velocity fields. This alignment ensures that all intermediate states and learned dynamics remain physically admissible throughout the generation. While all models are implemented on finite-resolution grids, our methodology is motiv ated by a function-space perspec- tiv e, in which both regression and generation are viewed as approximations to operators acting on spaces of diver gence- free velocity fields. From this vie wpoint, the Leray projec- tion and the design of the reference measure are structural components of the modeling pipeline, rather than auxil- iary regularization mechanisms. Empirical results on two- dimensional Navier –Stokes equations demonstrate that en- forcing incompressibility as a hard constraint leads to im- prov ed physical fidelity , enhanced stability under long-term rollout, and superior performance. Our main contributions are summarized as follo ws: • W e propose a unified framew ork that treats incompress- ibility as an intrinsic geometric property of the function space, enabling exact physical enforcement across both regression and generati ve modeling tasks. • W e introduce a modular Leray projection and a div ergence-free Gaussian reference measure based on stream functions, yielding well-defined flo w matching probability paths in function space. • W e demonstrate through extensi ve experiments on two-dimensional Navier –Stokes equations that the pro- posed approach significantly improv es physical fidelity and long-term stability . 2. Related W ork Data-Driven Neural Operators. Neural operators ha ve emerged as efficient surrogates for PDE solvers by learn- ing mappings between infinite-dimensional function spaces. Methods such as the Fourier Neural Operator ( Li et al. , 2021 ) and DeepONet ( Lu et al. , 2021 ) achiev e substantial speedups by predicting flow e volution in a single forward pass, and hav e been applied to a wide range of fluid problems ( Pathak et al. , 2022 ; Price et al. , 2023 ; Lippe et al. , 2023 ; Azizzade- nesheli et al. , 2024 ; Jiang et al. , 2025 ; Barthel Sorensen et al. , 2026 ). Howe ver , deterministic operators often ex- hibit spectral bias, leading to ov er-smoothed predictions that under-represent high-frequenc y turbulent structures ( Jiang et al. , 2023 ; Falasca , 2025 ). T o address this limitation, re- cent work has explored stochastic and generativ e models for fluid dynamics, including dif fusion and flow-matching- based approaches ( Molinaro et al. , 2024 ; K ohl et al. , 2024 ). Despite their improv ed expressi vity , these models typically operate in unconstrained vector spaces and may violate fun- damental physical constraints such as incompressibility . Physics-Inf ormed Constraints in Learning . Physical constraints are commonly incorporated into learning frame- works through either soft penalties or inference-time cor- rections. PINNs ( Raissi et al. , 2019 ) and related extensions penalize PDE residuals during training, an approach that has also been adopted in generativ e models ( Ben-Hamu et al. , 2024 ; Shu et al. , 2023 ; Huang et al. , 2024 ; Bastek et al. , 2025 ). Despite their flexibility , such soft constraints provide no guarantee of exact physical validity and often lead to ill-conditioned optimization landscapes ( W ang et al. , 2022 ). Related approaches in differentiable ph ysics, such as Hamiltonian ( Greydanus et al. , 2019 ) and Lagrangian neural networks ( Cranmer et al. , 2020 ), encode physical structure directly into the model to preserve in variants. Alternativ ely , some methods enforce constraints only at inference time via projection or correction steps ( Sojitra & San , 2025 ; Utkarsh et al. , 2025 ; Cheng et al. , 2025 ), which, while effecti ve at the output lev el, introduce additional computational ov er- head and do not structurally constrain the learned model or the underlying probability flow . 3. Preliminaries 3.1. Notations and Functional Spaces Let R and Z denote the sets of real numbers and integers, respectiv ely , and define the unit torus T 2 := R 2 / Z 2 . W e denote by ∇ and ∆ the spatial gradient and Laplacian oper- ators. For a v ector field u = ( u 1 , u 2 ) ⊤ on T 2 , we define the scalar curl as ∇ × u := ∂ 1 u 2 − ∂ 2 u 1 and the vector curl as ∇ ⊥ ψ := ( ∂ 2 ψ , − ∂ 1 ψ ) ⊤ . Let L 2 per ( T 2 ; R 2 ) denote the Hilbert space of square- integrable periodic vector fields, and H r per the periodic Sobolev spaces of order r ≥ 0 . Unless otherwise speci- fied, all norms and inner products are taken in L 2 . 3.2. Na vier –Stokes Equations and Hodge Decomposition Incompressible Dynamics. W e consider the 2D incom- pressible Navier –Stokes equations on the torus T 2 : ( ∂ t u + ( u · ∇ ) u = ν ∆ u + f − ∇ p, ∇ · u = 0 , (2) where u is the v elocity field and ν > 0 the viscosity . Hodge Decomposition and the Subspace V . T o handle the incompressibility constraint ∇ · u = 0 , we inv oke the 3 Project and Generate: Diver gence-Free Neural Operators f or Incompressible Flows (a) Projective Regr ession (b) Projective Gen erative Model Input ! Unconstrained prediction Prediction w/ divergence T arget output Divergence-Free Neural Operator Initial Condition ! Divergence- free noise Divergence-Free Neural Operator Unconstrained Prediction T arget output F igure 2. Schematic of the proposed Pr ojective Framew ork. The grey surface represents the div ergence-free subspace V (the physical manifold). (a) Projecti ve Regression: The framework takes an input and uses a base neural operator f θ to produce an intermediate prediction in the ambient space (shown ho vering above the manifold). This unconstrained prediction is then strictly mapped onto V via the spectral Leray projection P . The composite system constitutes a Diver gence-F ree Neur al Operator , ensuring the final output is physically consistent. (b) Projective Generati ve Model: The generation process is initialized with a sample from a divergence-fr ee noise distribution (bottom left) constrained to V . During the probability flow e volution ( τ ∈ [0 , 1] ), the vector field predicted by f θ is continuously projected via P (red arrows) back onto the manifold. This enforces hard constraints at ev ery step of the flow matching process, ensuring the entire trajectory stays within the physically admissible subspace. Hodge decomposition. Throughout this work, we restrict attention to zero-mean velocity fields, thereby excluding the harmonic (constant) component. Under this con vention, L 2 per admits the orthogonal decomposition L 2 per = V ⊕ V ⊥ , (3) where V is the subspace of div ergence-free v ector fields with zero mean (solenoidal fields), and V ⊥ is the subspace of gradient fields (irrotational fields): V := v ∈ L 2 per ∇ · v = 0 , Z T 2 v dx = 0 , V ⊥ := ∇ q q ∈ H 1 per ( T 2 ; R ) . (4) 4. Method In this section, focusing on 2D incompressible flows, we present a unified frame work for learning incompressible fluid dynamics with hard diver gence-free constraints. By embedding incompressibility directly into the operator struc- ture, all model states including intermediate dynamics, final outputs, and stochastic perturbations, are restricted to the div ergence-free subspace by construction. As illustrated in Figure 2 , this is realized through two complementary mech- anisms: (i) a Leray projection enforcing incompressibility , and (ii) a di ver gence-free noise construction for generative modeling. 4.1. Enfor cing Incompressibility via Leray Pr ojection T o ensure that learned velocity fields lie strictly in the div ergence-free subspace, we embed the Leray projection P as a structural component of the neural operator . Unlike soft- penalty methods (e.g., PINNs), this construction restricts the hypothesis space to V , ensuring physical consistency regardless of the optimization state. Our approach builds on the Helmholtz–Hodge decompo- sition. Under periodic boundary conditions, the space L 2 per ( T 2 ; R 2 ) admits an orthogonal decomposition into div ergence-free and irrotational components. W e denote by P : L 2 per → V the associated Leray projection onto the div ergence-free subspace. For any w ∈ L 2 per , the operator P denotes the L 2 - orthogonal projection onto the diver gence-free subspace V , which remov es the gradient component and yields the closest diver gence-free field in the L 2 sense, equiv alently characterized by P w = argmin u ∈V ∥ w − u ∥ L 2 . (5) By composing the neural operator output with P , incom- pressibility is enforced by construction, without auxiliary loss terms or post-hoc corrections. Spectral Implementation. Under periodic boundary con- ditions, the Leray projector admits an ef ficient Fourier -space implementation. Let ˆ w ( k ) denote the Fourier coef ficient of w at w av e vector k = ( k x , k y ) ∈ Z 2 . The incompressibility constraint ∇ · u = 0 is then characterized by k · ˆ u ( k ) = 0 . For k = 0 , the Leray projection is giv en by d P w ( k ) = I − k ⊗ k ∥ k ∥ 2 2 ˆ w ( k ) , (6) where I denotes the 2 × 2 identity matrix. The zero mode is set to zero to enforce a zero-mean condition, thereby ensuring the uniqueness of the Helmholtz–Hodge decom- 4 Project and Generate: Diver gence-Free Neural Operators f or Incompressible Flows Algorithm 1 Leray Projection in Fourier Space Require: V ector field v ∈ L 2 per ( T 2 ; R 2 ) Ensure: Diver gence-free field P v ∈ V 1: Compute Fourier transform: ˆ v ← F [ v ] 2: for each w ave v ector k = 0 do 3: α ← ( k · ˆ v ( k )) / ∥ k ∥ 2 2 4: c P v ( k ) ← ˆ v ( k ) − α k 5: end for 6: Set c P v ( 0 ) ← 0 7: P v ← ℜ ( F − 1 [ c P v ]) 8: retur n P v position under periodic boundaries (see Appendix F for the corresponding code implementation). 4.2. Learning Div ergence-Free V ector Fields W e parametrize time-dependent velocity fields v θ ( · , t ) using neural operators. T o enforce incompressibility , the Leray projection is composed with the model output, ensuring that all learned dynamics ev olve in V . Regression. F or deterministic prediction tasks, a neural operator f θ produces an unconstrained velocity field predic- tion f θ ( · , t ) ∈ L 2 per ( T 2 ; R 2 ) . W e enforce incompressibility by applying the Leray projection pointwise in time, ˆ u ( · , t ) = P f θ ( · , t ) , (7) ensuring the resulting prediction remains div ergence-free by construction. This projection-based formulation contrasts with PINNs, where incompressibility is typically imposed via soft penalty terms in the loss function. Such approaches require balanc- ing multiple objecti ves (including data fidelity , diver gence penalties, and boundary conditions), often necessitating problem-specific tuning of loss weights. In contrast, com- posing the neural operator with the Leray projection en- forces incompressibility at the level of the hypothesis space, eliminating auxiliary div ergence losses and ensuring physi- cal admissibility by design. Generative Modeling via Flow Matching . Standard flow matching is formulated in the ambient L 2 space and does not explicitly account for incompressibility constraints. W e instead restrict the learned probability flow to the diver gence- free subspace V . Flow Matching performs regression on a tar get v elocity field defined along an interpolation between data samples and reference samples. W e therefore enforce incompressibility by ensuring that this regression target lies in V . T o this end, we parametrize an unconstrained vector field v θ : L 2 per × [0 , 1] → L 2 per , and enforce incompressibility by composing it with the Leray projection. Let ν denote the data distribution supported on V , and let µ 0 be a reference distribution. Giv en u 1 ∼ ν and u 0 ∼ µ 0 , define the linear interpolation u τ = (1 − τ ) u 0 + τ u 1 , τ ∼ U (0 , 1) , (8) with target v elocity v τ = u 1 − u 0 . When both endpoints lie in V , v τ is div ergence-free due to linearity . Under this condition, the resulting di vergence-free flow matching objecti ve is L ( θ ) = E τ , u 0 , u 1 | c h P v θ ( u τ , τ ; c ) − v τ 2 L 2 i . (9) Here c denotes conditioning information, such as observed states at earlier time instances or physical initial conditions. This objectiv e enforces incompressibility at the level of the learned velocity field. Howe ver , consistency of the induced probability flow additionally requires the reference distrib u- tion µ 0 to be supported on V , posing a nontri vial technical challenge, particularly in infinite-dimensional settings. W e address this issue in the following subsection. 4.3. Diver gence-Free Gaussian Noise via Curl Pushforward While the Leray projection guarantees that the learned v e- locity field is div ergence-free, it is not suf ficient to ensure a well-defined flo w matching formulation. If the reference noise distribution is not supported on V , the regression tar - get generally contains compressible components, whereas the model output is constrained to be di vergence-free after projection, resulting in a structurally inconsistent objectiv e and ill-posed regression problem. Moreover , probability mass may leav e the physically admissible space, and the in- duced continuity equation may become ill-defined in infinite- dimensional settings. T o ensure consistency between the regression target, the learned dynamics, and the underly- ing probability measures, the reference noise must itself be supported on V , motiv ating the diver gence-free Gaussian construction introduced below . Curl-Based Gaussian Reference Measure on V . W e con- struct a di ver gence-free Gaussian reference measure using the stream function formulation of 2D incompressible flo ws. Let X := H 1 per ( T 2 ; R ) ∩ ψ : R T 2 ψ = 0 denote the space of zero-mean stream functions, and define the operator ∇ ⊥ : X → L 2 per ( T 2 ; R 2 ) by ∇ ⊥ ψ = ( ∂ 2 ψ , − ∂ 1 ψ ) ⊤ . 5 Project and Generate: Diver gence-Free Neural Operators f or Incompressible Flows Lemma 4.1. The operator ∇ ⊥ is a bounded linear map whose image is exactly the closed subspace of diver gence- fr ee, zer o-mean vector fields in L 2 . This property allo ws us to lift Gaussian measures from the scalar space X to the div ergence-free velocity space V . Let γ ψ = N (0 , C ψ ) be a centered Gaussian measure on X , and define the reference measure µ 0 := ( ∇ ⊥ ) # γ ψ . Then µ 0 is a centered Gaussian measure supported on V , with co v ariance operator C u = ∇ ⊥ C ψ ( ∇ ⊥ ) ∗ and Cameron– Martin space H µ 0 = ∇ ⊥ ( H γ ψ ) . Conditional Probability P aths. Conditioned on a fixed data sample y ∈ V , we define a family of time-indexed ran- dom fields ( u y τ ) τ ∈ [0 , 1] via an affine interpolation in function space, u y τ = σ τ u 0 + τ y , u 0 ∼ µ 0 , (10) where σ τ = 1 − (1 − σ min ) τ is a variance schedule with σ min > 0 . For each fixed τ ∈ [0 , 1) , the random field u y τ follows a Gaussian distribution on V with mean τ y and covariance operator C τ = σ 2 τ C u . In particular , all such conditional distributions share the same co variance structure and dif fer only in their means. Under the assumption that the data distrib ution ν is sup- ported on the Cameron–Martin space H µ 0 of the reference Gaussian measure, these conditional Gaussian distributions are mutually absolutely continuous for ν -almost e very y . Existence of a Divergence-Free Flow Matching V ector Field. A key dif ficulty in infinite-dimensional flow match- ing is the potential mutual singularity of probability mea- sures, which can obstruct the definition of a global velocity field. T o ensure well-posedness, we construct probability paths that remain absolutely continuous by design. Let ν ∈ P ( V ) denote the data distribution. For each y ∼ ν , the conditional path ( µ y τ ) τ ∈ [0 , 1] is defined as in Eq. ( 10 ) , with u 0 ∼ µ 0 . The corresponding marginal path µ τ := Z µ y τ d ν ( y ) is therefore supported on V . Under the assumption that all conditional measures share the same cov ariance operator and differ only by admissible Cameron–Martin shifts, the measures µ y τ are mutually absolutely continuous. Theorem 4.2 (Existence of a Div ergence-Free Flow Match- ing V ector Field) . Let ( µ τ ) τ ∈ [0 , 1] be the mar ginal path on V constructed above. Under the absolute continuity condition µ y τ ≪ µ τ , the Radon–Nikodym derivative w τ ( x , y ) := d µ y τ d µ τ ( x ) is well-defined for µ τ -almost every x . The vector field v τ ( x ) = Z V v y τ ( x ) w τ ( x , y ) d ν ( y ) (11) generates the path ( µ τ ) τ ∈ [0 , 1] , wher e v y τ denotes the veloc- ity field associated with the conditional path µ y τ . The proof is provided in Appendix D . In theory , the exis- tence result relies on the assumption that the data distri- bution ν is supported on the Cameron–Martin space asso- ciated with the reference covariance operator . V erifying this condition directly is generally intractable, particularly in infinite-dimensional settings. In practice, howe ver , our models operate on finite-resolution discretizations, where all probability measures are supported on finite-dimensional subspaces and the abov e absolute continuity condition is automatically satisfied. Consequently , follo wing prior work on functional flo w matching ( Kerrigan et al. , 2024 ), we do not explicitly enforce or verify this assumption in our e xperi- ments. A rigorous characterization of when such conditions hold in the infinite-dimensional limit is left for future work. 5. Experiments In this section, we validate the effectiv eness of our pro- posed framew ork on two-dimensional incompressible turbu- lent flows. W e compare our method against state-of-the-art regression and generativ e baselines, focusing on physical consistency (di vergence, spectrum) and long-term stability . Specifically , the ev aluation centers on two primary objec- tiv es: (1) demonstrating that enforcing incompressibility as a hard constraint improves prediction accuracy and stability compared to unconstrained models; and (2) establishing that our generati ve framew ork produces div erse, high-fidelity rollouts that rigorously respect conservation laws without sacrificing sample quality . 5.1. Experimental Setup Dataset and Implementation Details. Experiments focus on the 2D incompressible Navier–Stok es equations with pe- riodic boundary conditions at Re = 1000 . The dataset, gen- erated via a pseudo-spectral solver , contains 10,000 training trajectories ( 64 × 64 , 50 timesteps). Follo wing benchmarks ( Li et al. , 2021 ; Cheng et al. , 2025 ), the task inv olves pre- dicting 35-frames from a 15-frame history , with long-term rollouts (300 steps) to assess stability . W e e valuate per- formance using mean squared error (MSE) and di vergence ( |∇ · u | 2 ). Details are provided in Appendices G and H . 6 Project and Generate: Diver gence-Free Neural Operators f or Incompressible Flows Te s t R ollout (a) u velocity Te s t R ollout (b) v velocity Te s t R ollout (c) Streamlines Te s t R ollout (d) Reconstructed Pressure F igure 3. Flow Field V isualization. T op Row: Snapshot of velocity components ( u, v ). Bottom Row: Streamlines and Pressure field. The pressure is reconstructed from the predicted v elocity field via the pressure Poisson equation, serving as a proxy for the dynamical consistency of the flo w structure. Benchmarking utilizes the FNO backbone across regression and generati ve tasks. W e compare unconstrained baselines ( Reg. / Gen. Base ) against our proposed methods ( Reg. / Gen. Ours ), which enforce physical constraints via Leray projection and curl-based priors (Figure 2 ). Flow matching paths are sampled using the dopri5 solver . For generativ e ev aluation, metrics are reported as the ensemble mean and standard deviation o ver 20 independent realizations. 5.2. Results and Analysis Quantitative Perf ormance. T able 1 summarizes the quan- titativ e results across prediction (training horizon), short- term rollout, and long-term extrapolation. In the regression setting, Reg . (Base) achie ves reasonable initial accuracy but degrades drastically during extrapolation, e ventually div erg- ing due to rapid div ergence error accumulation. In contrast, Reg. (Ours) maintains structural stability throughout the rollout by enforcing e xact incompressibility up to discretiza- tion error , pre venting the growth of non-physical modes. A similar trend is observed in the generati ve comparison. Gen. (Base) produces plausible short-term samples b ut fails catastrophically in the long run (“Inf ” indicates numerical ov erflow). Our method, Gen. (Ours) , achiev es the best per- formance across all metrics. Notably , it outperforms even the regression models in MSE. This can be attributed to the model’ s ability to capture the correct statistical distribution of the flow , rather than conv erging to a smoothed mean prediction that dampens physically rele vant fluctuations. 7 Project and Generate: Diver gence-Free Neural Operators f or Incompressible Flows T able 1. Quantitative Comparison on Re = 1000. W e report Mean ± Std over the test set. Bold indicates best performance. Gray values indicate model di vergence. Prediction and Short-term metrics are scaled by 10 − 3 and 10 − 2 respectiv ely . Model Prediction ( T 15 - 49 ) Short-term ( T 50 - 100 ) Long-term ( T 101 - 300 ) U-MSE V -MSE Div U-MSE V -MSE Div U-MSE V -MSE Div Regression Models Base 4 . 0 ± 3 . 6 5 . 6 ± 5 . 4 625 . 0 ± 576 . 0 16 . 6 ± 17 . 5 17 . 5 ± 15 . 5 21 . 7 ± 18 . 6 4 . 3 e 3 ± 5 . 1 e 3 4 . 4 e 3 ± 5 . 2 e 3 1 . 5 e 5 ± 1 . 9 e 5 Ours 3 . 4 ± 3 . 0 3 . 2 ± 2 . 9 0 . 1 ± 0 . 1 5 . 9 ± 4 . 3 6 . 9 ± 5 . 4 0 . 0 ± 0 . 0 227 . 0 ± 335 . 0 210 . 0 ± 328 . 0 118 . 0 ± 160 . 0 Generative Models Base 4 . 3 ± 3 . 6 4 . 6 ± 4 . 3 1 . 7 ± 1 . 6 1 . 0 ± 0 . 7 1 . 2 ± 1 . 4 0 . 6 ± 2 . 5 Inf Inf Inf Ours 1 . 0 ± 1 . 2 1 . 1 ± 1 . 3 4 . 0 e- 4 ± 2 . 0 e- 4 0 . 5 ± 0 . 3 0 . 6 ± 0 . 4 2 . 0 e- 5 ± 1 . 0 e- 5 7 . 0 e- 3 ± 4 . 0 e- 3 7 . 0 e- 3 ± 4 . 0 e- 3 1 . 6 e- 7 ± 4 . 1 e- 8 1 0 0 1 0 1 k ( W a v e n u m b e r ) 1 0 8 1 0 6 1 0 4 1 0 2 1 0 0 Enstr ophy A v g : t [ 1 5 , 4 9 ] 1 0 0 1 0 1 k ( W a v e n u m b e r ) 1 0 8 1 0 6 1 0 4 1 0 2 1 0 0 A v g : t [ 5 0 , 2 0 0 ] 1 0 0 1 0 1 k ( W a v e n u m b e r ) 1 0 8 1 0 6 1 0 4 1 0 2 1 0 0 A v g : t [ 2 0 1 , 3 0 0 ] Ground T ruth Reg. (Base) Reg. (Ours) Gen. (Base) Gen. (Ours) F igure 4. Enstroph y Spectrum Analysis. Comparison of energy spectra. Our method accurately recov ers the inertial range scalings, preserving the correct energy cascade across scales. Physical Consistency and Spectral Fidelity . The “Div” column in T able 1 highlights the critical role of the hard constraint. The baselines exhibit significant di vergence vio- lations ( O (10 2 ) to O (10 5 ) ), whereas our methods maintain div ergence errors near machine precision (e.g., 2 × 10 − 7 for Gen. Ours). This structural advantage is further e videnced by the pressure reconstruction (Figure 3d ). Since pressure is gov erned by the Poisson equation − ∆ p = ∇ · ( u · ∇ u ) , its accurate recovery depends on the correct computation of velocity gradients, which is only possible if the field is smooth and div ergence-free. Furthermore, the streamline visualization (Figure 3c ) demonstrates that our method gen- erates coherent v ortex structures without the non-physical sources or sinks often observed in unconstrained baselines. Figure 4 presents the enstrophy spectrum analysis. The energy cascade in turbulence follows a specific po wer law . Unconstrained models t end to de viate from this physical la w , either by dissipating energy too quickly (spectral damping) or by accumulating spurious energy at high frequencies (spectral blocking). Our method closely matches the ground truth spectrum, indicating that the predicted flo ws not only satisfy kinematic constraints but also preserve the correct multi-scale energy distrib ution of the turbulent system. Regression vs. Generative Modeling W e observe that Gen. (Ours) yields superior long-term stability ( MSE ≈ 0 . 007 ) compared to Reg. (Ours) ( MSE ≈ 210 ). This significant gap suggests that for chaotic dynamical systems, learning the probability flow of the trajectory (Generati ve) is more robust than minimizing pointwise error (Re gression), provided that the generati ve process is constrained to the physical manifold. By confining the probability path to the diver gence-free subspace, our framew ork maximizes the benefits of generativ e modeling while ensuring physical validity . 6. Conclusion In this work, we presented a unified framew ork that en- forces incompressibility as an intrinsic constraint within neural operator architectures. By lev eraging the Helmholtz– Hodge decomposition, we restrict the hypothesis space via a dif ferentiable spectral Leray projection, ensuring that both deterministic predictions and generati ve dynamics remain div ergence-free. T o achie ve consistency in generati ve mod- eling, we construct a diver gence-free Gaussian reference measure using a curl-based pushforw ard, which preserves subspace consistency of the induced probability flo w . Our ev aluation on 2D Navier–Stok es equations confirms that 8 Project and Generate: Diver gence-Free Neural Operators f or Incompressible Flows embedding physical constraints directly into the operator structure enhances the stability and physical fidelity of the simulations. The results demonstrate that the proposed framew ork achieves e xact incompressibility and accurately captures the statistical characteristics of the flow . While focusing on periodic domains, the principles estab- lished here provide a foundation for future extensions to complex boundary conditions and 3D fluid systems. Impact Statement This paper presents work whose goal is to advance the field of Machine Learning. There are many potential societal consequences of our work, none which we feel must be specifically highlighted here. References Adrian, M., Sanz-Alonso, D., and Willett, R. Data assim- ilation with machine learning surrogate models: A case study with fourcastnet. Artificial Intelligence for the Earth Systems , 4(3):e240050, 2025. Azizzadenesheli, K., K ovachki, N., Li, Z., Liu-Schiaffini, M., Kossaifi, J., and Anandkumar, A. Neural operators for accelerating scientific simulations and design. Natur e Revie ws Physics , 6(5):320–328, 2024. Barthel Sorensen, B., Zepeda-N ´ u ˜ nez, L., Lopez-Gomez, I., W an, Z. Y ., Carv er, R., Sha, F ., and Sapsis, T . P . A proba- bilistic framew ork for learning non-intrusive corrections to long-time climate simulations from short-time training data. Journal of Advances in Modeling Earth Systems , 18 (1):e2024MS004755, 2026. Bastek, J.-H., Sun, W ., and K ochmann, D. Physics-informed diffusion models. In The Thirteenth International Con- fer ence on Learning Representations , 2025. Ben-Hamu, H., Puny , O., Gat, I., Karrer, B., Singer , U., and Lipman, Y . D-flow: Dif ferentiating through flo ws for controlled generation. In International Confer ence on Machine Learning , pp. 3462–3483. PMLR, 2024. Chen, Y ., Goldstein, M., Hua, M., Albergo, M. S., Bof fi, N. M., and V anden-Eijnden, E. Probabilistic forecast- ing with stochastic interpolants and f \ ” ollmer processes. arXiv pr eprint arXiv:2403.13724 , 2024. Cheng, C., Han, B., Maddix, D. C., Ansari, A. F ., Stuart, A., Mahoney , M. W ., and W ang, B. Gradient-free gen- eration for hard-constrained systems. In The Thirteenth International Confer ence on Learning Representations , 2025. Chorin, A. J. A numerical method for solving incompress- ible viscous flow problems. Journal of computational physics , 2(1):12–26, 1967. Chorin, A. J. Numerical solution of the navier -stokes equa- tions. Mathematics of computation , 22(104):745–762, 1968. Cranmer , M., Greydanus, S., Hoyer , S., Battaglia, P ., Spergel, D., and Ho, S. Lagrangian neural networks. arXiv pr eprint arXiv:2003.04630 , 2020. Falasca, F . Probing forced responses and causality in data- driv en climate emulators: Conceptual limitations and the role of reduced-order models. Physical Review Resear ch , 7(4):043314, 2025. Greydanus, S., Dzamba, M., and Y osinski, J. Hamiltonian neural netw orks. Advances in neural information pr ocess- ing systems , 32, 2019. Hansen, D., Maddix, D. C., Alizadeh, S., Gupta, G., and Mahoney , M. W . Learning physical models that can respect conservation la ws. In International Confer ence on Machine Learning , pp. 12469–12510. PMLR, 2023. Ho, J., Jain, A., and Abbeel, P . Denoising dif fusion proba- bilistic models. In Advances in Neur al Information Pr o- cessing Systems (NeurIPS) , v olume 33, pp. 6840–6851, 2020. Huang, J., Y ang, G., W ang, Z., and Park, J. J. Diffusion- pde: Generative pde-solving under partial observation. Advances in Neural Information Pr ocessing Systems , 37: 130291–130323, 2024. Jiang, R., Lu, P . Y ., Orlov a, E., and W illett, R. Training neural operators to preserve in v ariant measures of chaotic attractors. Advances in Neural Information Processing Systems , 36:27645–27669, 2023. Jiang, R., Zhang, X., Jakhar , K., Lu, P . Y ., Hassanzadeh, P ., Maire, M., and Willett, R. Hierarchical implicit neural emulators. arXiv pr eprint arXiv:2506.04528 , 2025. Kerrig an, G., Migliorini, G., and Smyth, P . Functional flow matching. In International Conference on Artificial Intelligence and Statistics , pp. 3934–3942. PMLR, 2024. K ohl, G., Chen, L.-W ., and Thuerey , N. T urbulent flo w sim- ulation using autoregressi ve conditional dif fusion models. arXiv pr eprint arXiv:2309.01745 , 2024. Krishnapriyan, A., Gholami, A., Zhe, S., Kirby , R., and Mahoney , M. W . Characterizing possible failure modes in physics-informed neural netw orks. Advances in neural information pr ocessing systems , 34:26548–26560, 2021. 9 Project and Generate: Diver gence-Free Neural Operators f or Incompressible Flows Li, Z., K ov achki, N., Azizzadenesheli, K., Liu, B., Bhat- tacharya, K., Stuart, A., and Anandkumar , A. Fourier neu- ral operator for parametric partial dif ferential equations. In International Confer ence on Learning Repr esentations (ICLR) , 2021. Lipman, Y ., Chen, R. T ., Ben-Hamu, H., Nickel, M., and Le, M. Flo w matching for generativ e modeling. In Interna- tional Confer ence on Learning Representations , 2023. Lippe, P ., V eeling, B., Perdikaris, P ., T urner , R., and Brand- stetter , J. Pde-refiner: Achieving accurate long rollouts with neural pde solvers. Advances in Neural Information Pr ocessing Systems , 36:67398–67433, 2023. Liu, X., Gong, C., et al. Flow straight and fast: Learning to generate and transfer data with rectified flow . In Interna- tional Confer ence on Learning Repr esentations (ICLR) , 2023. Lu, L., Jin, P ., Pang, G., Zhang, Z., and Karniadakis, G. E. Learning nonlinear operators via deeponet based on the univ ersal approximation theorem of operators. Natur e machine intelligence , 3(3):218–229, 2021. Mohan, A. T ., Lubbers, N., Liv escu, D., and Chertkov , M. Embedding hard physical constraints in conv olutional neural networks for 3d turbulence. In ICLR 2020 W ork- shop on Inte gration of Deep Neural Models and Differ en- tial Equations , 2019. Molinaro, R., Lanthaler , S., Raoni ´ c, B., Rohner , T ., Arme- gioiu, V ., Simonis, S., Grund, D., Ramic, Y ., W an, Z. Y ., Sha, F ., et al. Generati ve ai for fast and accurate statistical computation of fluids. arXiv pr eprint arXiv:2409.18359 , 2024. Pathak, J., Subramanian, S., Harrington, P ., Raja, S., Chattopadhyay , A., Mardani, M., Kurth, T ., Hall, D., Li, Z., Azizzadenesheli, K., et al. Fourcastnet: A global data-driven high-resolution weather model us- ing adaptiv e fourier neural operators. arXiv preprint arXiv:2202.11214 , 2022. Price, I., Sanchez-Gonzalez, A., Alet, F ., Andersson, T . R., El-Kadi, A., Masters, D., Ewalds, T ., Stott, J., Mohamed, S., Battaglia, P ., et al. Gencast: Dif fusion-based ensemble forecasting for medium-range weather . arXiv pr eprint arXiv:2312.15796 , 2023. Raissi, M., Perdikaris, P ., and Karniadakis, G. E. Physics- informed neural networks: A deep learning framework for solving forward and in verse problems inv olving nonlinear partial dif ferential equations. J ournal of Computational physics , 378:686–707, 2019. Richter-Po well, J., Lipman, Y ., and Chen, R. T . Neural conservation laws: A diver gence-free perspecti ve. Ad- vances in Neural Information Pr ocessing Systems , 35: 38075–38088, 2022. Sanz-Alonso, D. and W aniorek, N. Long-time accuracy of ensemble kalman filters for chaotic dynamical systems and machine-learned dynamical systems. SIAM Journal on Applied Dynamical Systems , 24(3):2246–2286, 2025. Shu, D., Li, Z., and Farimani, A. B. A physics-informed dif fusion model for high-fidelity flow field reconstruction. Journal of Computational Physics , 478:111972, 2023. Sojitra, A. and San, O. Method of manufactured learning for solver-free training of neural operators. arXiv pr eprint arXiv:2511.12890 , 2025. Song, Y ., Sohl-Dickstein, J., Kingma, D. P ., K umar , A., Er- mon, S., and Poole, B. Score-based generative modeling through stochastic differential equations. 2021. Utkarsh, U., Cai, P ., Edelman, A., Gomez-Bombarelli, R., and Rackauckas, C. V . Physics-constrained flo w match- ing: Sampling generativ e models with hard constraints. In The Thirty-ninth Annual Confer ence on Neural Infor - mation Pr ocessing Systems , 2025. W ang, S., Y u, X., and Perdikaris, P . When and why pinns fail to train: A neural tangent kernel perspectiv e. J ournal of Computational Physics , 449:110768, 2022. 10 Project and Generate: Diver gence-Free Neural Operators f or Incompressible Flows A. Incompressibility and Pr ojection-Based Methods In this section, we elaborate on the motivation behind adopting the Spectral Leray Projection. W e contrast our approach with classical pressure-correction schemes and soft-penalty methods, specifically addressing the computational bottlenecks of traditional solvers within deep learning graphs and the theoretical inconsistencies they introduce in generati ve modeling. Classical Pressur e-Correction and Its Limitations in Deep Learning. In classical Computational Fluid Dynamics (CFD), particularly in fractional-step methods, incompressibility ( ∇ · u = 0 ) is strictly enforced by solving a pressure Poisson equation: ∇ 2 p = ∇ · u ∗ ∆ t , (12) where u ∗ is a tentati ve velocity field. While this method effecti vely handles comple x boundaries ( Chorin , 1968 ), it relies on iterati ve linear solv ers (e.g., Conjugate Gradient) that are computationally intensi ve. Integrating such iterati ve solvers directly into a neural network training loop presents fundamental optimization challenges. T raining a neural operator requires differentiating through the entire model; embedding an iterativ e solver necessitates implicit differentiation or unrolling solver steps during backpropagation. This process is not only computationally expensiv e but also numerically unstable, often leading to vanishing or e xploding gradients that hinder con ver gence. Manifold Consistency in Generati ve Modeling. Beyond computational costs, relying on post-hoc Poisson corrections introduces theoretical inconsistencies in generativ e frameworks like Flo w Matching. The goal of these models is to learn a vector field that generates a continuous probability path from noise to data. If a model operates in an unconstrained space and applies a Poisson correction only at discrete steps, the underlying probability flow becomes ill-defined. The vector field attempting to bridge the Gaussian noise and the incompressible data would continuously drift of f the diver gence-free subspace V , resulting in a “broken” generati ve process where the learned dynamics contradict physical constraints ( K errigan et al. , 2024 ; Lipman et al. , 2023 ). Methodological Advantages. T o address these issues, our frame work integrates the Spectral Leray Projection directly into the architecture. This approach offers distinct adv antages: • Exact Differ entiability and Stability: Unlike iterativ e solvers, the Spectral Leray Projection is a closed-form, linear operator based on Fast Fourier T ransforms (FFT). It enables exact, stable, and ef ficient backpropagation, allowing the network to learn the projection mechanism as an intrinsic geometric constraint. • Decoupling fr om Pr essure: This approach eliminates the need to explicitly model the pressure field p , simplifying the learning task by focusing purely on the kinematic velocity field. • Har d Constraints with Machine Pr ecision: Unlike soft-penalty methods (e.g., PINNs ( Raissi et al. , 2019 )) that treat div ergence as an optimization objectiv e, which often results in “spectral creep” and high-frequency errors ( Jiang et al. , 2023 ), our method enforces ∇ · u = 0 to machine precision by construction. • Generalizability: While implemented spectrally for efficienc y on periodic domains, the principle of differentiable projection is generalizable to complex geometries via other dif ferentiable bases or sparse solvers in future work. B. Gaussian Measures in Hilbert Spaces The push-forward measure is characterized by: ( F # γ )( B ) = γ ( { x ∈ H : F ( x ) ∈ B } ) for ev ery Borel set B ∈ B ( R ) . The existence and uniqueness of m and C are guaranteed by the Riesz Representation Theorem, which ensures that the bounded linear and bilinear forms appearing on the right-hand sides of the abov e equations correspond to unique elements and operators in H . Specifically , the mean m satisfies ⟨ m, h ⟩ = Z H ⟨ x, h ⟩ γ (d x ) , ∀ h ∈ H , (13) 11 Project and Generate: Diver gence-Free Neural Operators f or Incompressible Flows T able 2. Summary of notation. W e distinguish between data samples, probability measures, and vector fields used in div ergence-free flow matching. Symbol Category Meaning y ∈ V data sample div ergence-free velocity field from the dataset ν data distribution probability distribution of data samples on V µ 0 reference distribution di vergence-free Gaussian noise on V u 0 ∼ µ 0 noise sample reference div ergence-free random field u y τ interpolated sample noisy interpolation between u 0 and data sample y µ y τ conditional distribution distribution induced by u y τ µ τ marginal distrib ution mixture of µ y τ ov er y ∼ ν v y τ conditional vector field velocity field generating the conditional path v τ vector field velocity field generating the mar ginal probability path v θ ( · , τ ) neural operator learned (unconstrained) velocity field P projection operator Leray projection onto the div ergence-free subspace V and the cov ariance operator C , which is symmetric, non-negati ve, and trace-class, is defined by ⟨ C h, k ⟩ = Z H ⟨ x − m, h ⟩⟨ x − m, k ⟩ γ (d x ) , ∀ h, k ∈ H . (14) The regularity of samples from γ is gov erned by the Cameron–Martin space H γ . Since C is self-adjoint, non-negati ve and trace-class, it admits a unique square root C 1 / 2 . W e define H γ := Im( C 1 / 2 ) , equipped with the inner product ⟨ h 1 , h 2 ⟩ H γ = ⟨ C − 1 / 2 h 1 , C − 1 / 2 h 2 ⟩ H , where C − 1 / 2 denotes the pseudo-in verse of C 1 / 2 . C. Proof of Lemma 4.1 Lemma C.1 (Properties of the Curl Push-forward) . The operator ∇ ⊥ is a bounded linear map whose image is e xactly the closed subspace of diver gence-fr ee, zer o-mean vector fields in L 2 . Pr oof. W e verify the linearity , boundedness, and the properties of the image sequentially . 1. Linearity . The linearity of ∇ ⊥ follows directly from the linearity of the weak dif ferentiation operators. W e verify this by checking additivity and homogeneity e xplicitly . Additivity: Let ψ 1 , ψ 2 ∈ X be arbitrary stream functions. By the distributi ve property of partial deriv ativ es, we have: ∇ ⊥ ( ψ 1 + ψ 2 ) = ∂ 2 ( ψ 1 + ψ 2 ) − ∂ 1 ( ψ 1 + ψ 2 ) = ∂ 2 ψ 1 + ∂ 2 ψ 2 − ∂ 1 ψ 1 − ∂ 1 ψ 2 = ∂ 2 ψ 1 − ∂ 1 ψ 1 + ∂ 2 ψ 2 − ∂ 1 ψ 2 = ∇ ⊥ ψ 1 + ∇ ⊥ ψ 2 . (15) Homogeneity: Let c ∈ R be an arbitrary scalar and ψ ∈ X . By the commutativity of differentiation with scalar multiplication: ∇ ⊥ ( cψ ) = ∂ 2 ( cψ ) − ∂ 1 ( cψ ) = c∂ 2 ψ − c∂ 1 ψ & = c ∂ 2 ψ − ∂ 1 ψ = c ∇ ⊥ ψ . (16) Thus, ∇ ⊥ is a linear operator . 2. Boundedness and Closed Image. For an y ψ ∈ X ⊂ H 1 per , the L 2 -norm of the vector field is: ∥∇ ⊥ ψ ∥ 2 L 2 = ∥ ∂ 2 ψ ∥ 2 L 2 + ∥ − ∂ 1 ψ ∥ 2 L 2 = ∥∇ ψ ∥ 2 L 2 ≤ ∥ ψ ∥ 2 H 1 . (17) This implies that ∇ ⊥ is a bounded operator with operator norm at most 1 . Furthermore, since X consists of zero-mean functions, the Poincar ´ e inequality holds: ∥ ψ ∥ L 2 ≤ C ∥∇ ψ ∥ L 2 . Consequently , the map is an isomorphism onto its image (specifically , it is bounded from belo w), which implies that the image is a closed subspace in L 2 per ( T 2 ; R 2 ) . 12 Project and Generate: Diver gence-Free Neural Operators f or Incompressible Flows 3. Divergence-Free and Zer o-Mean Property . The resulting vector field u = ( u 1 , u 2 ) ⊤ = ( ∂ 2 ψ , − ∂ 1 ψ ) ⊤ is div ergence- free in the distributional sense. For smooth approximations, Schwarz’ s theorem giv es: ∇ · u = ∂ 1 u 1 + ∂ 2 u 2 = ∂ 1 ( ∂ 2 ψ ) + ∂ 2 ( − ∂ 1 ψ ) = ∂ 12 ψ − ∂ 21 ψ = 0 . (18) Additionally , by the periodicity of the domain T 2 , the integral of any partial deri vati ve of a periodic function vanishes (e.g., R T 2 ∂ 2 ψ = 0 ). Thus, the vector field has zero mean. D. Pr oof of Theorem D .1 Theorem D .1 (Existence of a Di vergence-Free Flo w Matching V ector Field) . Let ( µ τ ) τ ∈ [0 , 1] be the mar ginal path on V constructed above. Under the absolute continuity condition µ y τ ≪ µ τ , the Radon–Nikodym derivative w τ ( x , y ) := d µ y τ d µ τ ( x ) is well-defined for µ τ -almost every x . The vector field v τ ( x ) = Z V v y τ ( x ) w τ ( x , y ) d ν ( y ) (19) generates the path ( µ τ ) τ ∈ [0 , 1] , wher e v y τ denotes the velocity field associated with the conditional path µ y τ . Pr oof. W e follo w the argument of Theorem 1 in ( K errigan et al. , 2024 ). Let φ ∈ C ∞ c ( V × [0 , 1]) be an arbitrary smooth test function. W e aim to sho w that ( µ τ ) τ ∈ [0 , 1] and the vector field v τ defined in ( 19 ) satisfy the continuity equation in weak form, namely Z 1 0 Z V ∂ τ φ ( x , τ ) d µ τ ( x ) d τ = − Z 1 0 Z V ⟨ v τ ( x ) , ∇ φ ( x , τ ) ⟩ d µ τ ( x ) d τ . (20) By definition of the marginal measure µ τ = R µ y τ d ν ( y ) and by Fubini–T onelli, the left-hand side can be written as Z 1 0 Z V ∂ t φ ( x , τ ) d µ t ( x ) d τ = Z 1 0 Z V Z V ∂ t φ ( x , τ ) d µ y t ( x ) d ν ( y ) d τ . (21) Since, for ν -almost ev ery y , the conditional path ( µ y τ ) τ ∈ [0 , 1] is generated by the velocity field v y τ through the continuity equation, we obtain = − Z 1 0 Z V Z V ⟨ v y τ ( x ) , ∇ φ ( x , τ ) ⟩ d µ y τ ( x ) d ν ( y ) d τ . (22) Under the absolute continuity assumption µ y τ ≪ µ t , we may change measure and write = − Z 1 0 Z V Z V ⟨ v y τ ( x ) , ∇ φ ( x , τ ) ⟩ w t ( x , y ) d µ τ ( x ) d ν ( y ) d τ , (23) where w τ ( x , y ) = d µ y τ / d µ τ ( x ) . Since Bochner integrals commute with inner products, another application of Fubini–T onelli yields = − Z 1 0 Z V Z V v y τ ( x ) w τ ( x , y ) d ν ( y ) , ∇ φ ( x , τ ) d µ τ ( x ) d τ . (24) By definition of v τ in ( 19 ) , this coincides with the right-hand side of ( 20 ) . Therefore, ( µ τ ) τ ∈ [0 , 1] and v τ solve the continuity equation in weak form, and v τ generates the marginal probability path ( µ τ ) τ ∈ [0 , 1] . Finally , since each conditional velocity field v y τ takes v alues in the div ergence-free subspace V and V is linear , the averaged field v τ also belongs to V . 13 Project and Generate: Diver gence-Free Neural Operators f or Incompressible Flows E. Continuity Equation and Probability Flo ws Probability paths ( µ τ ) τ ∈ [0 , 1] on V are generated by time-dependent v ector fields ( v τ ) τ ∈ [0 , 1] through the continuity equation ∂ τ µ τ + div( v τ µ τ ) = 0 . (25) Equation ( 25 ) is interpreted in the weak sense: for all test functions φ : V × [0 , 1] → R , Z 1 0 Z V ∂ τ φ ( x , τ ) + ⟨ v τ ( x ) , ∇ x φ ( x , τ ) ⟩ d µ τ ( x ) d τ = 0 . (26) F . Implementation Details and Algorithmic Realization Algorithm 2 Gaussian Div ergence-Free Noise (stream function). from torchvision.transforms.functional import gaussian_blur def sample_div_free_noise(B, H, W, T, sigma, device): # dims: (B, H, W, T) spatial-temporal dimensions # sigma: smoothing standard deviation # output: divergence-free velocity field u # 1. Sample scalar stream function psi = torch.randn(B, H, W, T, device=device) psi = gaussian_blur(psi, sigma) # smooth in spatial dimensions # 2. Spatial gradients dpsi_dy, dpsi_dx = torch.gradient(psi, dim=(1, 2)) # 3. Curl construction (2D incompressible) u = torch.stack([dpsi_dy, -dpsi_dx], dim=-1) return u Algorithm 3 Spectral Leray Projection (Div ergence-Free V elocity). def leray_projection(uv_raw): # uv_raw: Tensor of shape (B, 2, H, W, T) u, v = uv_raw[:, 0], uv_raw[:, 1] u_hat = fft2(u) v_hat = fft2(v) # Wave numbers H, W = u.shape[-2], u.shape[-1] kx, ky = make_wavenumbers(H, W) k2 = kx ** 2 + ky ** 2 proj = (kx * u_hat + ky * v_hat) / k2 proj = torch.where(k2 == 0, torch.zeros_like(proj), proj) u_hat = u_hat - kx * proj v_hat = v_hat - ky * proj u = ifft2(u_hat) v = ifft2(v_hat) return torch.stack([u, v], dim=1) 14 Project and Generate: Diver gence-Free Neural Operators f or Incompressible Flows G. Experimental Implementation Details W e ev aluate our proposed frame work on the task of turbulent flow prediction, formulated as a sequence-to-sequence learning problem follo wing standard benchmarks ( Li et al. , 2021 ). The model observes a historical velocity conte xt of 15 frames ( u 0:14 ) to predict the subsequent 35 frames ( u 15:49 ). For the r egression task , the model learns a direct deterministic mapping from the history to the future sequence. For the generative modeling task , the historical conte xt u 0:14 serves as the condition c ; the model generates the tar get sequence u 15:49 by transforming an initial div ergence-free noise distribution conditioned on c . T o strictly assess long-term stability and physical conserv ation be yond the standard training horizon, we additionally perform autoregressi ve rollouts (extrapolation) up to T = 300 steps during e valuation. Model Architectur es and Baselines. T o strictly isolate the impact of our proposed geometric constraints, we maintain identical backbone architectures across all comparisons. W e utilize the Fourier Neural Operator (FNO) ( Li et al. , 2021 ) as the core parameterized mapping. The baseline models ( Reg. Base and Gen. Base ) map directly to the ambient space L 2 per ( T 2 ; R 2 ) without structural constraints. In contrast, our proposed methods ( Reg. Ours and Gen. Ours ) integrate the dif ferentiable spectral Leray projection as a final layer . This design ensures that all model outputs, covering both deterministic predictions and generativ e samples, lie exactly on the di ver gence-free manifold ∇ · u = 0 . Flow Matching Configuration. For generati ve modeling, we employ the Flo w Matching framework ( Lipman et al. , 2023 ) to learn the probability flo w of the turbulent trajectory . The baseline uses a standard Gaussian prior in the ambient space, which is incompatible with the incompressible subspace. Our method constructs a specific div ergence-free Gaussian process via a stream-function pushforward, ensuring that the entire probability path is subspace-consistent. Sampling is performed using the dopri5 (Dormand-Prince) adaptiv e ODE solver with strict absolute and relative tolerances set to 10 − 5 . This high-precision inte gration ensures that v alid samples are not corrupted by numerical drift. W e use a Neural ODE formulation with 50 function ev aluations (NFE) per step to balance computational cost and sample fidelity . H. Data Generation and Numerical Methods W e detail the rigorous procedure used to generate the high-fidelity turbulent flo w dataset. The data is produced by solving the 2D Navier –Stokes equations in the vorticity-streamfunction formulation using a pseudo-spectral method. H.1. Gover ning Equations and Solver The underlying dynamics are governed by the vorticity transport equation with a viscous damping term and an external forcing component: ∂ t ω ( x , t ) + ( u · ∇ ) ω ( x , t ) = ν ∆ ω ( x , t ) + f ( x ) , (27) where ω = ∇ × u is the vorticity , ν = 10 − 3 is the kinematic viscosity (Reynolds number Re = 1000 ), and f is a deterministic forcing term. The system is ev olved in the spectral domain. W e apply the Crank–Nicolson scheme for the linear viscous term and an explicit forward step for the non-linear advection and forcing terms. The discrete update rule in Fourier space ( ˆ ω ) with time step ∆ t = 10 − 3 is giv en by: (1 + 0 . 5 ν k 2 ∆ t ) ˆ ω t +1 = (1 − 0 . 5 ν k 2 ∆ t ) ˆ ω t + ∆ t ˆ f − \ u · ∇ ω t , (28) where k 2 = 4 π 2 ( k 2 x + k 2 y ) . Dealiasing is performed using the 2 / 3 rule to suppress spectral blocking artifacts. H.2. V elocity Extraction and Incompr essibility Since our machine learning models operate directly on velocity fields, we strictly recov er u from the ev olved vorticity ω at each storage step. This process ensures the data is diver gence-free by construction. First, the stream function ψ is solved via the Poisson equation in the spectral domain: − ∆ ψ = ω = ⇒ ˆ ψ ( k ) = ˆ ω ( k ) 4 π 2 | k | 2 , for k = 0 . (29) 15 Project and Generate: Diver gence-Free Neural Operators f or Incompressible Flows The velocity components u = ( u, v ) are then deri ved as: u = ∂ ψ ∂ y , v = − ∂ ψ ∂ x . (30) Numerically , this corresponds to ˆ u = i 2 π k y ˆ ψ and ˆ v = − i 2 π k x ˆ ψ . This exact spectral relationship guarantees that ∇ · u = ∂ x u + ∂ y v = 0 is satisfied to machine precision in the generated dataset. H.3. Specific Dataset Configurations The dataset is generated on a spatial grid of resolution 64 × 64 o ver a unit domain Ω = [0 , 1] 2 . • Initialization : Initial vorticity fields ω 0 are sampled from a Gaussian Random Field (GRF) ω 0 ∼ N (0 , C ) . The cov ariance operator C is defined diagonally in the Fourier domain with a power -law decay , ensuring smoothness of the initial condition. Specifically , the Fourier coef ficients ˆ ω 0 ( k ) are independent Gaussian v ariables with variance: E [ | ˆ ω 0 ( k ) | 2 ] ∝ 4 π 2 | k | 2 + τ 2 − α , (31) where we set α = 2 . 5 and τ = 7 . 0 . This parameter choice balances the energy spectrum to produce physically realistic turbulent structures at t = 0 . • For cing : W e effecti vely use a K olmogorov-style forcing defined as f ( x, y ) = 0 . 1 √ 2 sin(2 π ( x + y ) + ϕ i ) , where the phase ϕ i varies across trajectories to induce di verse flo w patterns. • T emporal Resolution : The solver runs with an internal step of δ t = 10 − 3 . W e record snapshots ev ery ∆ T = 1 . 0 physical time units (e very 1000 solver steps). • T rajectory Length : Each training trajectory contains 50 snapshots ( T total = 50 ). For testing, we generate extended trajectories of 300 snapshots ( T test = 300 ) to ev aluate long-term stability . • Scale : The final dataset comprises 10,000 trajectories for training and 100 for testing/v alidation. I. Generative Model V ariance Analysis This section pro vides a detailed variance analysis of the generativ e models to explicitly quantify the stability and consistency of the generated ensembles. Unlike deterministic regression approaches such as the standard Fourier Neural Operator ( Li et al. , 2021 ), generativ e models like Flow Matching ( Lipman et al. , 2023 ; Liu et al. , 2023 ) produce a distribution of trajectories for a gi ven initial condition. Capturing the variability of this distrib ution is essential for assessing whether the model learns the true physical statistics of the turbulent flo w or merely memorizes a mean path. T o ev aluate this, we generated R = 20 independent realizations for each test window in the dataset. W e measured performance using the Mean Squared Error (MSE) for velocity components ( u, v ) and the div ergence error (Di v), aggreg ating these metrics over three distinct temporal stages: (1) Prediction ( T = 15 . . . 50 ), corresponding to the training horizon; (2) Short-term Extrapolation ( T = 51 . . . 150 ); and (3) Long-term Extrapolation ( T = 151 . . . 300 ). The statistics reported in T able 3 represent the global mean and standard deviation computed ov er the pooled population of all realizations across all test samples. For the baseline Conditional Flow Matching model, we note that the reported statistics include trajectories that numerically div erged, effecti vely highlighting the catastrophic failure modes inherent in unconstrained generativ e modeling ( Kerrigan et al. , 2024 ). Instability of Unconstrained Baselines. The results in T able 3 reveal a critical instability in the standard Flow Matching baseline. While the baseline performs reasonably well within the training distribution (Prediction stage), it exhibits catastrophic div ergence during the Short-term Extrapolation phase ( T = 51 . . . 150 ), with errors exploding to astronomical values ( MSE ∼ 10 28 ). This demonstrates that without explicit physical constraints, the generated trajectories rapidly drift off the v alid data manifold, leading to numerical blow-up. In strong contrast, our proposed Diver gence-Free Flow Matching maintains numerical stability and physical consistency throughout the entire rollout. Even in the extended extrapolation regime ( T = 151 . . . 300 ), our method maintains a low MSE ( ≈ 0 . 007 ) and machine-precision di ver gence ( O (10 − 7 )), confirming that enforcing the diver gence-free constraint via the Leray projection is essential for robust long-term generation of turbulent flo ws. 16 Project and Generate: Diver gence-Free Neural Operators f or Incompressible Flows T able 3. Quantitative Comparison on Re = 1000 (Generati ve Models). W e report the Mean ± Std computed across all windows and realizations ( 20 × ). Bold indicates best performance. Note the astronomical error values for the Base model in Short-term Extrapolation, indicating model blow-up. Model Stage T otal MSE U-MSE V -MSE Div-MSE Gen. (Base) Prediction ( T 15 - 50 ) 0 . 0046 ± 0 . 0035 0 . 0044 ± 0 . 0033 0 . 0047 ± 0 . 0038 0 . 0075 ± 0 . 0120 Short-term Ext. ( T 51 - 150 ) 2 . 40 e 28 ± NaN 9 . 22 e 30 ± NaN 2 . 60 e 28 ± NaN 2 . 64 e 26 ± NaN Long-term Ext. ( T 151 -End ) 0 . 0076 ± 0 . 0009 0 . 0079 ± 0 . 0009 0 . 0072 ± 0 . 0012 0 . 0024 ± 0 . 0046 Gen. (Ours) Prediction ( T 15 - 50 ) 0 . 0011 ± 0 . 0010 0 . 0011 ± 0 . 0009 0 . 0011 ± 0 . 0011 9 . 3 e- 7 ± 1 . 2 e- 7 Short-term Ext. ( T 51 - 150 ) 0 . 0073 ± 0 . 0024 0 . 0071 ± 0 . 0025 0 . 0074 ± 0 . 0023 7 . 5 e- 7 ± 4 . 0 e- 8 Long-term Ext. ( T 151 -End ) 0 . 0074 ± 0 . 0013 0 . 0073 ± 0 . 0013 0 . 0076 ± 0 . 0012 7 . 4 e- 7 ± 2 . 7 e- 8 J. Extended V isual Analysis In this section, we present a comprehensi ve fine-grained visual and spectral assessment of the performance of the models. W e inspect primitiv e velocity variables, derived physical quantities including vorticity and pressure, and spectral error distributions across three distinct temporal regimes. The ev aluation strictly compares our proposed diver gence-free framew ork against the unconstrained baseline to highlight the benefits of enforcing hard physical constraints. All models are trained to observe a historical context of 15 frames ( u 0:14 ) to predict the future evolution. For the e xtrapolation regimes where T > 49 , we employ a recursiv e rolling window strategy . In this setup, the predictions from the model are fed back as input for the next step, extending the rollout up to T = 300 to rigorously assess long-term stability and conservation properties. J.1. Regime I: Standard Pr ediction Horizon ( T 15 - 49 ) This regime serves to v alidate the ability of the model to reconstruct fine-scale turbulent features within the training distribution. Figure 5 presents a multi-panel visualization of the flow state which juxtaposes kinematic fidelity with physical consistency checks. Kinematic Reconstruction. The top row , showing v elocity components u and v , demonstrates that our constrained model faithfully captures the instantaneous velocity distrib ution. The contour lines are sharp and the extrema magnitudes match the Ground T ruth. This indicates that the model successfully learns the fine-grained advection dynamics without suffering from the ov er-smoothing often seen in regression-based approaches. Conservation and Pr essure Recovery . The middle row highlights the critical structural advantage of our frame work. The unconstrained baseline exhibits significant “checkerboard” artifacts in the di vergence field. This is a characteristic symptom of spectral leakage where high-frequency errors alias into the grid scale. This violation has a catastrophic downstream effect on the pressure field p . Since physical pressure is governed by the Poisson equation − ∆ p = ∇ · ( u · ∇ u ) , the nonzero di ver gence in the baseline acts as a corrupted source term. Consequently , the recovered pressure de generates into non-physical white noise. In contrast, our method enforces ∇ · u ≈ 0 to machine precision by construction. This allows for the recov ery of smooth and structurally coherent pressure fields that accurately reflect the internal stress of the fluid. T opological Realism and Spectral Fidelity . The bottom row , displaying streamlines and vorticity ω , confirms the topological realism of our approach. Our method recov ers filamentary vorticity structures and coherent vortex loops effecti vely . This prev ents the spectral blocking observed in simpler baselines where energy piles up at the cutoff frequenc y . This observation is quantitatively corroborated by the Enstrophy spectrum in Figure 6 (T op). Our method matches the inertial range ener gy cascade where E ( k ) ∝ k − 3 up to the Nyquist frequenc y , whereas the baseline suf fers from spectral damping. J.2. Regime II: Short-term Extrapolation ( T 50 - 100 ) This regime marks the onset of out-of-distrib ution recursive prediction where prediction errors typically accumulate. Drift and Spectral Bias. Figure 7 illustrates the flo w state at T = 100 . The baseline model begins to drift off the ph ysical 17 Project and Generate: Diver gence-Free Neural Operators f or Incompressible Flows manifold. The vorticity field ω loses its sharp gradients and becomes amorphous and ov erly smooth. This represents a common failure mode in MSE-trained neural operators kno wn as spectral bias. In this mode, the model preferentially fits low-frequenc y components while discarding the high-frequency turbulent details that are essential for accurate e volution. Mechanism of Diver gence Explosion. Crucially , the middle row rev eals the mechanism of failure for the baseline. Div ergence errors do not simply persist but amplify through the recurrent process. In the absence of a correction step, small non-zero di ver gence acts as a spurious source term in the transport equations. This leads to a numerical “blow-up” where the pressure field collapses into high-magnitude noise as shown in Fig. 7 d. This indicates that the trajectory has left the v alid solution manifold. Stabilization via Projection. In contrast, our framework maintains tight structural coherence. By strictly enforcing the solenoidal constraint at e very timestep, the spectral Leray projection acts as an inducti ve bias that stabilizes the recurrent dynamics. The generated flows remain on the attracti ve manifold of the Navier -Stokes equations. This prevents the “spectral creep” observed in unconstrained models and ensures the simulation remains physically plausible e ven outside the training horizon. J.3. Regime III: Long-term Extrapolation ( T 101 - 300 ) This final regime e valuates the er godicity and asymptotic stability of the generativ e process. W e assess whether the model can remain on the physical attractor indefinitely . Catastrophic Decoher ence of Baseline. As sho wn in Figure 9 , the baseline model completely loses physical inte grity by T = 300 . The velocity field de grades into smooth and wa ve-lik e patterns that bear no resemblance to chaotic turb ulence. The div ergence errors saturate at a high le vel which renders both the pressure in the middle ro w and vorticity in the bottom row ph ysically meaningless. The energy spectrum in Figure 10 (T op) confirms this with a severe drop-of f in high-frequency energy . This indicates the model has ceased to simulate turbulence ef fectiv ely . In variant Measure Sampling. Con versely , our proposed generativ e method continues to generate sharp and rich turbulent features indefinitely . Crucially , the statistics remain stationary . The Enstrophy spectrum in Figure 10 (T op) o verlaps almost perfectly with the ground truth. This demonstrates that our model is effecti vely sampling from the inv ariant measure of the system. It implies that the framework does not merely memorize trajectories b ut has effecti vely learned the underlying conservation la ws. This ensures that the probability mass stays within the v alid configuration space and pre vents leakages that would otherwise destroy the long-term statistics. 18 Project and Generate: Diver gence-Free Neural Operators f or Incompressible Flows Gr ound T ruth t=15 t=25 t=30 t=35 t=40 t=49 Reg.(Base) Reg.(Ours) Gen.(Base) Gen.(Ours) 0.1695 0.0000 0.1695 u (a) Zonal V elocity u Gr ound T ruth t=15 t=25 t=30 t=35 t=40 t=49 Reg.(Base) Reg.(Ours) Gen.(Base) Gen.(Ours) 0.1875 0.0000 0.1875 v (b) Meridional V elocity v Gr ound T ruth t=15 t=25 t=30 t=35 t=40 t=49 Reg.(Base) Reg.(Ours) Gen.(Base) Gen.(Ours) 0.5 0.0 0.5 Diver gence (FD) (c) Div ergence Error Gr ound T ruth t=15 t=25 t=30 t=35 t=40 t=49 Reg.(Base) Reg.(Ours) Gen.(Base) Gen.(Ours) 0.01585 0.00000 0.01585 Pressure (Reconstructed) (d) Pressure GT (Str eamlines) t=15 t=25 t=30 t=35 t=40 t=49 Reg.(Base) Reg.(Ours) Gen.(Base) Gen.(Ours) 0.0000 0.2387 Speed magnitude (e) Streamlines Gr ound T ruth t=15 t=25 t=30 t=35 t=40 t=49 Reg.(Base) Reg.(Ours) Gen.(Base) Gen.(Ours) 1.723 0.000 1.723 w (f) V orticity ω F igure 5. Physical Field Reconstruction (Prediction). A unified view of the kinematic and topological state. T op: V elocity fields. Middle: Conservation metrics sho wing baseline failure. Bottom: T opology . 19 Project and Generate: Diver gence-Free Neural Operators f or Incompressible Flows 1 0 0 1 0 1 k ( W a v e n u m b e r ) 1 0 8 1 0 6 1 0 4 1 0 2 1 0 0 Enstr ophy A v g : t [ 1 5 , 2 4 ] 1 0 0 1 0 1 k ( W a v e n u m b e r ) 1 0 8 1 0 6 1 0 4 1 0 2 1 0 0 A v g : t [ 2 5 , 3 4 ] 1 0 0 1 0 1 k ( W a v e n u m b e r ) 1 0 8 1 0 6 1 0 4 1 0 2 1 0 0 A v g : t [ 3 5 , 4 9 ] Ground T ruth Reg.(Base) Reg.(Ours) Gen.(Base) Gen.(Ours) (a) Enstrophy Spectrum Reg.(Base) t=15 t=25 t=30 t=35 t=40 t=49 Reg.(Ours) Gen.(Base) Gen.(Ours) 0.000000 0.003152 MSE: u (b) MSE u Reg.(Base) t=15 t=25 t=30 t=35 t=40 t=49 Reg.(Ours) Gen.(Base) Gen.(Ours) 0.000000 0.003025 MSE: v (c) MSE v Reg.(Base) t=15 t=25 t=30 t=35 t=40 t=49 Reg.(Ours) Gen.(Base) Gen.(Ours) 0.000000 0.008502 Diver gence (FD) (d) MSE Div ergence Reg.(Base) t=15 t=25 t=30 t=35 t=40 t=49 Reg.(Ours) Gen.(Base) Gen.(Ours) 0.000 4.922 Pressure (Reconstructed) 1e 5 (e) MSE Pressure Reg.(Base) t=15 t=25 t=30 t=35 t=40 t=49 Reg.(Ours) Gen.(Base) Gen.(Ours) 0.000000 0.002944 MSE: speed (f) MSE Speed Reg.(Base) t=15 t=25 t=30 t=35 t=40 t=49 Reg.(Ours) Gen.(Base) Gen.(Ours) 0.0000 0.1058 MSE: w (g) MSE ω F igure 6. Spectral Fidelity and Err or Distributions (Prediction). T op: Enstrophy Spectrum showing partial alignment with ground truth. Rows 2-4: Squared error distributions for primiti ve and deriv ed variables. 20 Project and Generate: Diver gence-Free Neural Operators f or Incompressible Flows Gr ound T ruth t=50 t=55 t=60 t=65 t=70 t=80 Reg.(Base) Reg.(Ours) Gen.(Base) Gen.(Ours) 0.1896 0.0000 0.1896 u (a) Zonal V elocity u Gr ound T ruth t=50 t=55 t=60 t=65 t=70 t=80 Reg.(Base) Reg.(Ours) Gen.(Base) Gen.(Ours) 0.187 0.000 0.187 v (b) Meridional V elocity v Gr ound T ruth t=50 t=55 t=60 t=65 t=70 t=80 Reg.(Base) Reg.(Ours) Gen.(Base) Gen.(Ours) 0.5 0.0 0.5 Diver gence (FD) (c) Div ergence Accumulation Gr ound T ruth t=50 t=55 t=60 t=65 t=70 t=80 Reg.(Base) Reg.(Ours) Gen.(Base) Gen.(Ours) 0.01661 0.00000 0.01661 Pressure (Reconstructed) (d) Pressure Collapse GT (Str eamlines) t=50 t=55 t=60 t=65 t=70 t=80 Reg.(Base) Reg.(Ours) Gen.(Base) Gen.(Ours) 0.000 0.248 Speed magnitude (e) Streamlines Gr ound T ruth t=50 t=55 t=60 t=65 t=70 t=80 Reg.(Base) Reg.(Ours) Gen.(Base) Gen.(Ours) 1.325 0.000 1.325 w (f) V orticity ω F igure 7. Short-term Stability (Extrapolation). Snapshots at T = 100 . T op: V elocity drift in baseline. Middle: Conservation failure. Bottom: T opological degradation. 21 Project and Generate: Diver gence-Free Neural Operators f or Incompressible Flows 1 0 0 1 0 1 k ( W a v e n u m b e r ) 1 0 8 1 0 6 1 0 4 1 0 2 1 0 0 Enstr ophy A v g : t [ 5 0 , 6 0 ] 1 0 0 1 0 1 k ( W a v e n u m b e r ) 1 0 8 1 0 6 1 0 4 1 0 2 1 0 0 A v g : t [ 6 1 , 7 0 ] 1 0 0 1 0 1 k ( W a v e n u m b e r ) 1 0 8 1 0 6 1 0 4 1 0 2 1 0 0 A v g : t [ 7 1 , 8 0 ] Ground T ruth Reg.(Base) Reg.(Ours) Gen.(Base) Gen.(Ours) (a) Enstrophy Spectrum Reg.(Base) t=50 t=55 t=60 t=65 t=70 t=80 Reg.(Ours) Gen.(Base) Gen.(Ours) 0.00000 0.02513 MSE: u (b) MSE u Reg.(Base) t=50 t=55 t=60 t=65 t=70 t=80 Reg.(Ours) Gen.(Base) Gen.(Ours) 0.00000 0.03197 MSE: v (c) MSE v Reg.(Base) t=50 t=55 t=60 t=65 t=70 t=80 Reg.(Ours) Gen.(Base) Gen.(Ours) 0.000 2.469 Diver gence (FD) (d) MSE Div ergence Reg.(Base) t=50 t=55 t=60 t=65 t=70 t=80 Reg.(Ours) Gen.(Base) Gen.(Ours) 0.000000 0.001585 Pressure (Reconstructed) (e) MSE Pressure Reg.(Base) t=50 t=55 t=60 t=65 t=70 t=80 Reg.(Ours) Gen.(Base) Gen.(Ours) 0.00000 0.03066 MSE: speed (f) MSE Speed Reg.(Base) t=50 t=55 t=60 t=65 t=70 t=80 Reg.(Ours) Gen.(Base) Gen.(Ours) 0.000 5.184 MSE: w (g) MSE ω F igure 8. Stationarity and Error Gr owth (Short-term). T op: Enstrophy spectrum remains stable. Ro ws 2-4: Significant di vergence errors appear in the baseline. 22 Project and Generate: Diver gence-Free Neural Operators f or Incompressible Flows Gr ound T ruth t=200 t=210 t=220 t=250 t=260 t=270 Reg.(Base) Reg.(Ours) Gen.(Base) Gen.(Ours) 0.1909 0.0000 0.1909 u (a) Zonal V elocity u Gr ound T ruth t=200 t=210 t=220 t=250 t=260 t=270 Reg.(Base) Reg.(Ours) Gen.(Base) Gen.(Ours) 0.1907 0.0000 0.1907 v (b) Meridional V elocity v Gr ound T ruth t=200 t=210 t=220 t=250 t=260 t=270 Reg.(Base) Reg.(Ours) Gen.(Base) Gen.(Ours) 0.5 0.0 0.5 Diver gence (FD) (c) Div ergence Accumulation Gr ound T ruth t=200 t=210 t=220 t=250 t=260 t=270 Reg.(Base) Reg.(Ours) Gen.(Base) Gen.(Ours) 0.01802 0.00000 0.01802 Pressure (Reconstructed) (d) Pressure Collapse GT (Str eamlines) t=200 t=210 t=220 t=250 t=260 t=270 Reg.(Base) Reg.(Ours) Gen.(Base) Gen.(Ours) 0.0000 0.2686 Speed magnitude (e) Streamlines Gr ound T ruth t=200 t=210 t=220 t=250 t=260 t=270 Reg.(Base) Reg.(Ours) Gen.(Base) Gen.(Ours) 1.257 0.000 1.257 w (f) V orticity ω F igure 9. Long-term Stability (Extrapolation). Snapshots at T = 300 . T op: Complete decoherence in baseline. Middle: Continued physical v alidity in ours. Bottom: Stationarity of topological features. 23 Project and Generate: Diver gence-Free Neural Operators f or Incompressible Flows 1 0 0 1 0 1 k ( W a v e n u m b e r ) 1 0 8 1 0 6 1 0 4 1 0 2 1 0 0 Enstr ophy A v g : t [ 2 0 0 , 2 2 0 ] 1 0 0 1 0 1 k ( W a v e n u m b e r ) 1 0 8 1 0 6 1 0 4 1 0 2 1 0 0 A v g : t [ 2 2 1 , 2 6 0 ] 1 0 0 1 0 1 k ( W a v e n u m b e r ) 1 0 8 1 0 6 1 0 4 1 0 2 1 0 0 A v g : t [ 2 6 1 , 3 0 0 ] Ground T ruth Reg.(Base) Reg.(Ours) Gen.(Base) Gen.(Ours) (a) Enstrophy Spectrum Reg.(Base) t=200 t=210 t=220 t=250 t=260 t=270 Reg.(Ours) Gen.(Base) Gen.(Ours) 0.00 0.05 MSE: u (b) MSE u Reg.(Base) t=200 t=210 t=220 t=250 t=260 t=270 Reg.(Ours) Gen.(Base) Gen.(Ours) 0.00 0.05 MSE: v (c) MSE v Reg.(Base) t=200 t=210 t=220 t=250 t=260 t=270 Reg.(Ours) Gen.(Base) Gen.(Ours) 0.00 0.01 Diver gence (FD) (d) MSE Div ergence Reg.(Base) t=200 t=210 t=220 t=250 t=260 t=270 Reg.(Ours) Gen.(Base) Gen.(Ours) 0.000 0.001 Pressure (Reconstructed) (e) MSE Pressure Reg.(Base) t=200 t=210 t=220 t=250 t=260 t=270 Reg.(Ours) Gen.(Base) Gen.(Ours) 0.0 0.1 MSE: speed (f) MSE Speed Reg.(Base) t=200 t=210 t=220 t=250 t=260 t=270 Reg.(Ours) Gen.(Base) Gen.(Ours) 0 1 MSE: w (g) MSE ω F igure 10. Long-term Stability (Extrapolation). T op: Enstrophy spectrum. Rows 2-4: Baseline errors saturate, while ours remain bounded. 24

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

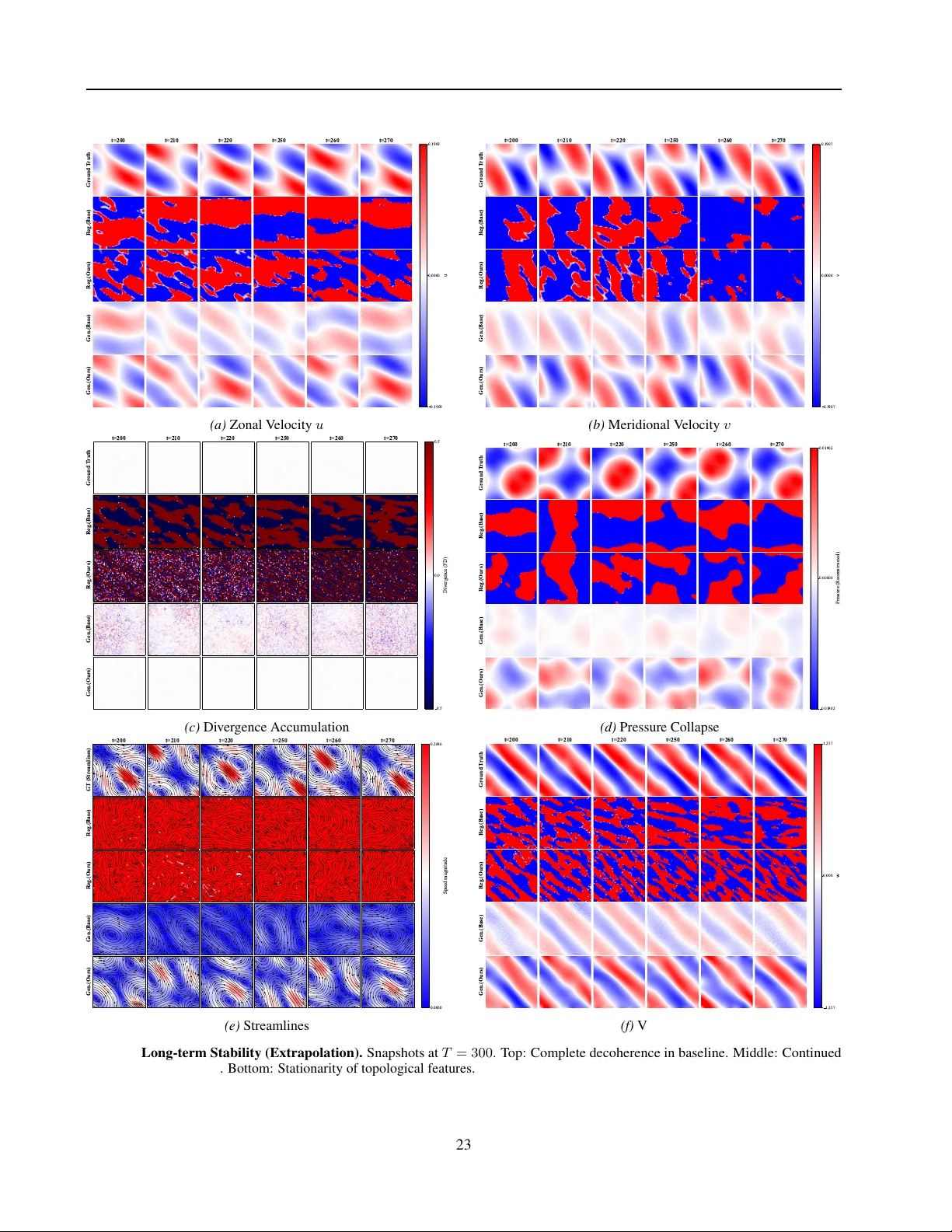

Leave a Comment