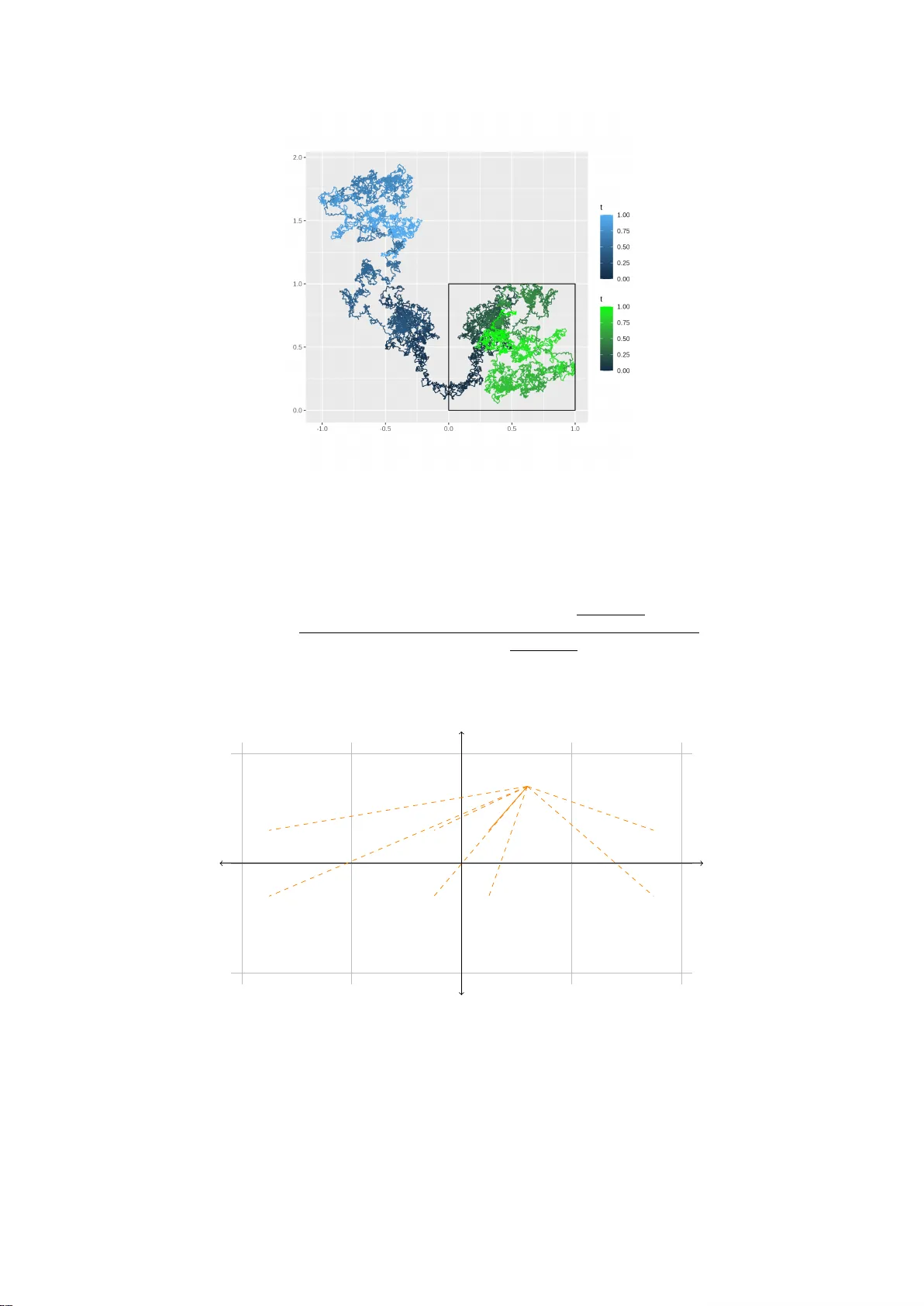

Reflected diffusion models adapt to low-dimensional data

While the mathematical foundations of score-based generative models are increasingly well understood for unconstrained Euclidean spaces, many practical applications involve data restricted to bounded domains. This paper provides a statistical analysi…

Authors: Asbjørn Holk, Claudia Strauch, Lukas Trottner