Language-Guided Structure-Aware Network for Camouflaged Object Detection

Camouflaged Object Detection (COD) aims to segment objects that are highly integrated with the background in terms of color, texture, and structure, making it a highly challenging task in computer vision. Although existing methods introduce multi-sca…

Authors: Min Zhang

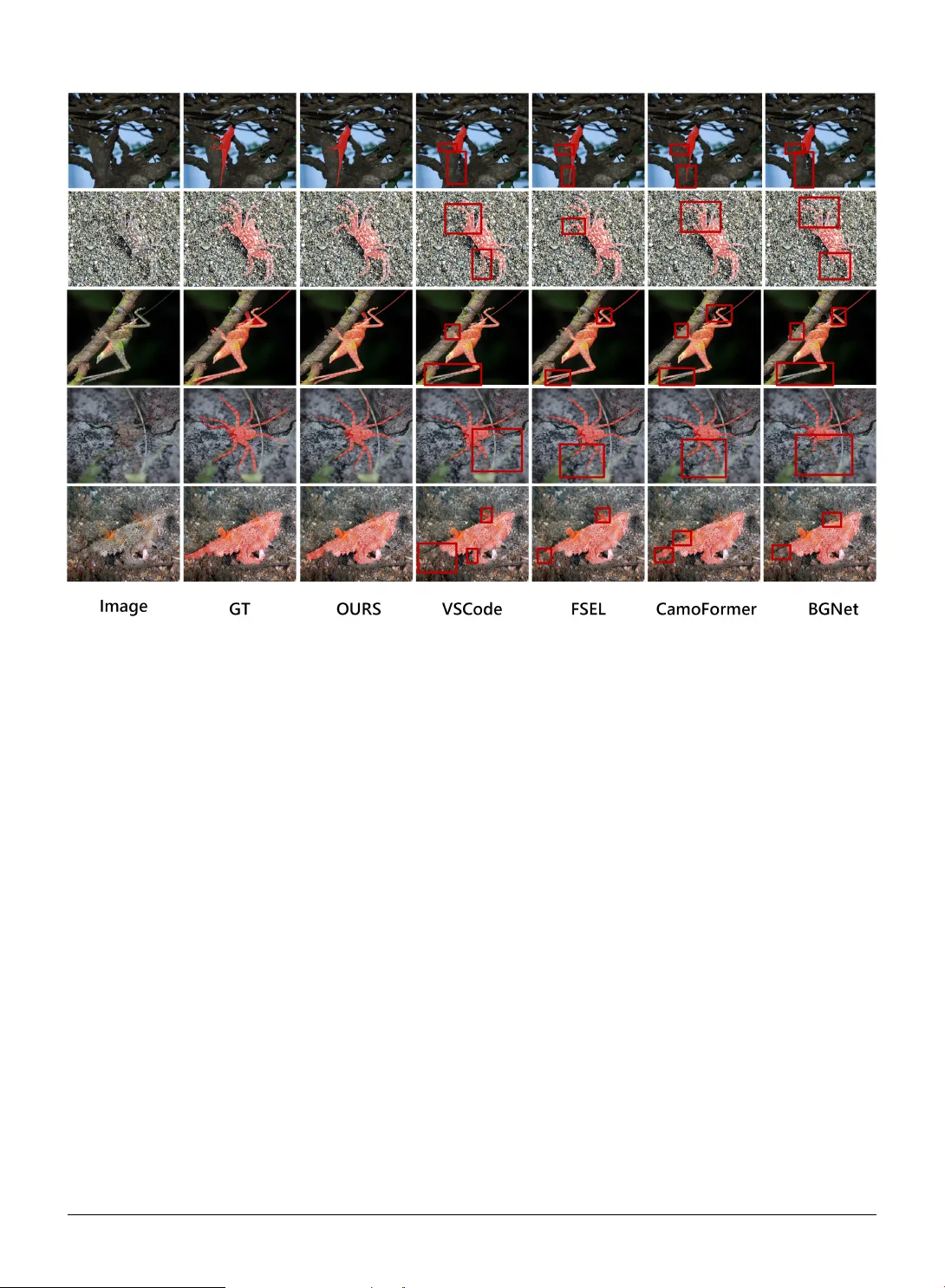

Min Zhang, School of Artificial Intelligence, Chongqing University of Technology, Chongqing, 401120, China

Keywords: Camouflaged Object Detection; Language-Guided; Edge Enhancement; Structure-Aware Attention

Abstract

Camouflaged Object Detection (COD) aims to segment objects that are highly integrated with the background in terms of color, texture, and structure, making it a highly challenging task in computer vision. Although existing methods introduce multi-scale fusion and attention mechanisms to alleviate these issues, they generally lack the guidance of textual semantic priors, which limits the model's ability to focus on camouflaged regions in complex scenes. To address this issue, this paper proposes a Language-Guided Structure-Aware Network (LGSAN). Specifically, based on the visual backbone PVT-v2, we introduce CLIP to generate masks from text prompts and RGB images, thereby guiding the multi-scale features extracted by PVT-v2 to focus on potential target regions. On this foundation, we further design a Fourier Edge Enhancement Module (FEEM), which integrates multi-scale features with high-frequency information in the frequency domain to extract edge enhancement features. Furthermore, we propose a Structure-Aware Attention Module (SAAM) to effectively enhance the model's perception of object structures and boundaries. Finally, we introduce a Coarse-Guided Local Refinement Module (CGLRM) to enhance fine-grained reconstruction and boundary integrity of camouflaged object regions. Extensive experiments demonstrate that our method consistently achieves highly competitive performance across multiple COD datasets, validating its effectiveness and robustness. Our code has been made publicly available: https://github.com/tc-fro/LGSAN

1. Introduction

COD is an important yet challenging task in computer vision, whose core objective is to identify objects that closely resemble their surroundings in terms of color, texture, and shape within complex backgrounds. Due to the inherent lack of saliency in camouflaged objects, they often exhibit blurred boundaries, weakened contours, and extremely low semantic responses, making it difficult for traditional semantic segmentation methods to achieve satisfactory performance on such tasks. COD [1], [2] has significant application value in various fields, such as natural ecological monitoring (e.g., wildlife protection), medical image analysis (e.g., lesion detection), industrial defect inspection, and military concealed target detection. Therefore, developing efficient and robust camouflaged object detection methods holds significant theoretical importance and practical value.

Compared with Generic Object Detection (GOD) [3], [4] and Salient Object Detection (SOD) [5], [6], the main challenge of COD lies in the high similarity of visual features between the objects and the background, which limits the effectiveness of traditional methods based on saliency or contrast.

In view of the challenge posed by the high similarity between camouflaged objects and the background, recent deep learning-based COD research can be broadly distilled into four categories: multi-scale context modeling, bio-inspired mechanism simulation, multi-source information fusion, and multi-task learning. Among them, multi-scale context modeling aims to exploit rich contextual information to capture the diversity of camouflaged objects in appearance and scale, while aggregating cross-layer features and progressively refining representations.
Representative examples include HCM [7], CamoFormer [8], and FSPNet [9]. Bio-inspired mechanism simulation strategies draw inspiration from the behavioral patterns of natural predators or the human visual detection system, as in SINet [2], ZoomNet [10], and MFFN [11]. Multi-source information fusion strategies introduce external information in addition to RGB cues, such as frequency-domain features, depth, and prompts, as in FEMNet [12], DCE [13], and CGCOD [14]. Multi-task learning aims to jointly optimize multiple related tasks by leveraging shared information and complementary features across tasks, as in MGL [15], FindNet [16], and ASBI [17].

Email: zm321098@163.com (M. Zhang). Preprint submitted to Elsevier.

Despite this progress, COD still faces several challenges. First, COD models usually rely on a single visual backbone and lack explicit guidance from textual semantic priors, making it difficult to focus effectively on camouflaged regions. Second, camouflaged objects generally suffer from weak boundary information and non-salient structural cues, which complicates precise edge localization and detail restoration. Third, the internal structures of camouflaged regions are complex yet subtle, making fine-grained modeling of local areas difficult, which in turn affects the regional consistency and structural integrity of segmentation results. Considering that the detection targets in this study are all known species and that the target categories are available as prior knowledge, text prompts can be introduced to provide category-related semantic information and thus offer the model a clearer focus of attention. Therefore, to address the above issues, this paper proposes a language semantics-guided structure-aware network for camouflaged object detection.
First, we input text prompts and RGB images into CLIP to obtain the textual and visual features of camouflaged objects, and employ a text-guided decoder to generate object masks, which guide the multi-scale features output by the PVT-v2 backbone [18] to focus on potential target regions. Subsequently, we design the FEEM, which integrates multi-scale semantic features and extracts high-frequency information in the frequency domain for edge enhancement. The resulting edge enhancement features provide explicit boundary cues for the subsequent modules. On this basis, we propose the SAAM to enhance the model's perception of object structures and boundaries. However, relying solely on this module still makes it difficult to ensure the coherence and local consistency of target regions. To this end, we further propose the CGLRM, which adopts a dual-branch structure: one branch incorporates channel attention and spatial attention to perform global perception modeling and generate global attention guidance; the other branch divides the input features into four local regions and performs local structural refinement under the guidance of global attention, thereby effectively improving the boundary integrity and structural consistency of camouflaged object segmentation results.

In summary, our contributions are as follows:

• We propose the LGSAN for camouflaged object segmentation, which introduces CLIP to generate region-guided masks, effectively improving the model's ability to focus on target regions.
• We propose the FEEM, which integrates multi-scale semantic information with a frequency-domain modeling strategy to generate edge enhancement features, providing boundary cues for subsequent modules.
• We design the SAAM, which integrates structural features of camouflaged objects with edge features to enhance the model's perception of object structures and boundaries.
• We propose the CGLRM, which enhances the structural consistency of target regions by combining spatial partitioning, global guidance fusion, and local refinement.

2. Related Work

Early COD research relied on traditional image-level methods, which used manually designed low-level features (such as texture, intensity, and color) to capture subtle differences between the foreground and background, thereby laying the foundation for the field. Although traditional methods achieve a certain effectiveness in low-complexity scenarios, they often fail in cases of low resolution or high similarity between foreground and background, and their feature representation capability is limited. With the development of deep learning, end-to-end approaches for learning complex representations have gradually become mainstream.

Recent deep learning-based COD methods can be broadly categorized into four representative strategies: multi-scale context modeling, bio-inspired mechanism simulation, multi-source information fusion, and multi-task learning. Among them, multi-scale context modeling aims to exploit rich contextual information to capture the diversity of camouflaged objects in appearance and scale, while aggregating cross-layer features and progressively refining representations. For example, HCM [7] focuses on low-confidence regions through a reversible recalibration mechanism, thereby detecting parts that are initially overlooked; CamoFormer [8] introduces masked separable attention to achieve top-down multi-level feature refinement; FSPNet [9] designs a non-local token enhancement mechanism to improve feature interaction capability, and incorporates a feature shrinkage decoder to optimize the results; OWinCANet [19] introduces cross-layer overlapping window attention based on a shifted window strategy, achieving a balance between local and global information through sliding-aligned windows.
Bio-inspired mechanism simulation strategies draw inspiration from the behavioral patterns of natural predators or the human visual detection system, typically adopting a multi-stage, coarse-to-fine process to progressively improve the localization and segmentation accuracy of camouflaged objects. SINet [2] is inspired by the first two stages of hunting and consists of two main modules: the Search Module, which searches for camouflaged objects, and the Identification Module, which precisely detects them. ZoomNet [10] mimics how humans observe vague images by zooming in and out, and uses this zoom strategy, together with a scale integration unit and a hierarchical mixed-scale unit, to learn discriminative mixed-scale semantics. MFFN [11] acquires complementary information from multiple views (different angles, distances, and perspectives), thereby effectively handling complex scenarios involving camouflaged objects.

Multi-source information fusion strategies, in addition to RGB cues, introduce external information such as frequency-domain features, depth, and prompts to enhance the discriminative power and robustness of COD. Frequency-domain methods (e.g., FEMNet [12]) use frequency-domain information as a supplementary cue to improve the detection of camouflaged objects. Depth-based methods, such as DCE [13], introduce auxiliary depth estimation and a GAN-based multi-modal confidence loss. Prompt-based methods, such as CGCOD [14], combine visual and textual prompts to enhance the perception of camouflaged scenes.
Multi-task learning in camouflaged object detection aims to jointly optimize multiple related tasks, leveraging shared information and complementary features across tasks to enhance the model's discriminative capability and generalization performance. Within this framework, boundary-supervised methods (e.g., MGL [15], FindNet [16], ASBI [17]) introduce edge-detection branches, enabling the model to better capture the edge details of camouflaged objects, thereby improving boundary accuracy and the representation of object details.

Figure 1: The architecture of LGSAN. The overall framework of the model consists of five key components: the PVT-v2 backbone, the CLIP backbone, the FEEM, the SAAM, and the CGLRM. Refer to Section 3 for details.

3. METHODOLOGY

3.1. Network Overview

As shown in Fig. 1, we propose a Language-Guided Structure-Aware Network (LGSAN) for Camouflaged Object Detection. The overall framework of the model consists of five key components: the PVT-v2-b3 backbone, the CLIP backbone, the FEEM, the SAAM, and the CGLRM, each of which is described in the following subsections. For the visual backbone, we adopt PVT-v2-b3 as the image feature extractor to obtain four-scale feature maps from the input image $I \in \mathbb{R}^{H \times W \times 3}$, denoted as $\{F_i\}_{i=1}^{4}$. To enhance the semantic information of camouflaged objects, we further introduce a frozen CLIP model: the text encoder extracts textual features from text prompts (e.g., "a photo of the camouflaged owl"), while the visual encoder extracts multi-scale visual features from its 8th, 16th, and 24th layers. Subsequently, cross-scale alignment and fusion are performed via an FPN [20] to obtain the CLIP visual features $\mathit{Attn}$, which are combined with the textual features and fed into the text-guided decoder to generate the object mask $M_1$.
The mask is applied to $\{F_i\}_{i=1}^{4}$ and $\mathit{Attn}$ through the MGFA operation, as shown in Fig. 1, to achieve explicit feature enhancement, resulting in the enhanced $\{F_i\}_{i=1}^{4}$ and $\mathit{Attn}_1$, which enable the model to focus on potential camouflaged regions. The enhanced features $\mathit{Attn}_1$ are first fed into a transformer to obtain the $\mathit{attn}$ features. Subsequently, the Fourier Edge Enhancement Module (FEEM) takes $\{F_i\}_{i=1}^{4}$ and $\mathit{attn}$ as inputs to generate the edge enhancement features $E$.

In the next stage, the highest-level feature $f_4$ is concatenated with $\mathit{attn}$ and passed through the CGLRM to obtain coarse-grained structural features. These features, together with $E$ and $\mathit{attn}$, are then input into the SAAM to produce structure-enhanced features, which are subsequently refined by the CGLRM to generate $\mathit{cam}_4$. For the remaining scales $i = 3, 2, 1$, the refinement process begins by upsampling the previous output $\mathit{cam}_{i+1}$ and concatenating it with the corresponding backbone feature $f_i$. A convolution block is applied to compress the result into $f_i$, which, along with the edge guidance $E$ and $\mathit{cam}_{i+1}$, is fed into the SAAM. Finally, local refinement is performed via the CGLRM, yielding the output $\mathit{cam}_i$.

In the prediction stage, the network outputs segmentation results at multiple scales through convolution: intermediate prediction maps $O_3$, $O_2$, $O_1$ are obtained from $\mathit{cam}_3$, $\mathit{cam}_2$, $\mathit{cam}_1$, respectively, and are upsampled to the original resolution via interpolation; $O_4$ is generated from $\mathit{attn}$ as the semantic-guided prediction, $O_e$ is derived from $E$ as the edge prediction, and the mask $M_1$ is also included. The final output set is $\{O_1, O_2, O_3, O_4, M_1, O_e\}$.

Figure 2: The architecture of the FEEM. The FEEM generates edge enhancement features through multi-scale fusion, edge enhancement, and high-frequency modeling in the frequency domain.

3.2. Fourier Edge Enhancement Module

As shown in Fig. 2, the FEEM takes the features $\{F_i\}_{i=1}^{4}$ and $\mathit{attn}$ as inputs. First, the highest-level feature $f_4$ is concatenated with $\mathit{attn}$ and passed through a channel compression convolution to obtain the high-level fused features:

$$f'_4 = \mathrm{Conv}\big(\mathrm{C}(\mathrm{Up}(f_4), \mathit{attn})\big). \tag{1}$$

The feature is then progressively upsampled and concatenated with the lower-level features, followed by convolutional fusion and enhancement of boundary representations through the EdgeEnhancer [21] module:

$$f'_3 = \mathrm{Enh}\big(\mathrm{Conv}(\mathrm{C}(\mathrm{Up}(f'_4), f_3))\big), \tag{2}$$

$$f'_2 = \mathrm{Enh}\big(\mathrm{Conv}(\mathrm{C}(\mathrm{Up}(f'_3), f_2))\big), \tag{3}$$

$$f'_1 = \mathrm{Enh}\big(\mathrm{Conv}(\mathrm{C}(\mathrm{Up}(f'_2), f_1))\big). \tag{4}$$

Here, $\mathrm{C}$ denotes feature concatenation, $\mathrm{Up}$ denotes feature upsampling, $\mathrm{Enh}$ denotes the EdgeEnhancer module, and $\mathrm{Conv}$ denotes convolution. The EdgeEnhancer is based on the idea of an average pooling difference: input features are smoothed, and the difference between the input and its smoothed version is computed to extract high-gradient regions (i.e., edges). An edge weight map is then generated and fused with the original features in a residual manner, thereby explicitly enhancing boundary contrast in the spatial domain. Its mathematical formulation can be expressed as:

$$E_{\text{diff}} = X - \mathrm{Pool}(X), \tag{5}$$

$$W_{\text{edge}} = \sigma\big(\mathrm{BN}(\mathrm{Conv}_{1\times 1}(E_{\text{diff}}))\big), \tag{6}$$

$$X_{\text{enh}} = X + W_{\text{edge}}. \tag{7}$$

Here, $\mathrm{Pool}(\cdot)$ denotes a $3 \times 3$ average pooling operation, $\sigma(\cdot)$ represents the Sigmoid function, and $W_{\text{edge}}$ indicates the edge weight map. To further extract high-frequency detail information of object boundaries, we apply a Fourier Transform to the fused low-level feature $f'_1$, explicitly capturing high-frequency responses:

$$E_{\text{high}} = \mathrm{ReLU}\big(\mathrm{FFT}_{\text{high}}(f'_1)\big). \tag{8}$$

Finally, the edge enhancement features are output:

$$E = \mathrm{Reshape}(E_{\text{high}}). \tag{9}$$

3.3. Structure-Aware Attention Module

As shown in Fig. 3, to enhance the model's ability to discriminate boundary details and object structures of camouflaged targets, we propose the SAAM.
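Looking back at Section 3.2, the EdgeEnhancer and high-frequency steps of Eqs. (5) to (8) can be illustrated with a small NumPy sketch on a single `[C, H, W]` feature map. This is not the released implementation: the zero padding in the pooling, the omission of batch normalization, the circular high-pass mask with its `radius` cut-off, and all function names are assumptions made only for this example.

```python
import numpy as np

def avg_pool3x3(x):
    """3x3 average pooling, stride 1, zero padding (assumed), on a [C, H, W] array."""
    _, h, w = x.shape
    padded = np.pad(x, ((0, 0), (1, 1), (1, 1)))
    out = np.zeros_like(x, dtype=float)
    for dy in range(3):
        for dx in range(3):
            out += padded[:, dy:dy + h, dx:dx + w]
    return out / 9.0

def edge_enhancer(x, conv_weight):
    """Eqs. (5)-(7). BatchNorm is treated as identity here (assumption).
    conv_weight is a hypothetical [C_out, C_in] matrix acting as a 1x1 convolution."""
    e_diff = x - avg_pool3x3(x)                             # Eq. (5): pooling difference
    mixed = np.einsum("oc,chw->ohw", conv_weight, e_diff)   # 1x1 convolution
    w_edge = 1.0 / (1.0 + np.exp(-mixed))                   # Eq. (6): sigmoid edge weights
    return x + w_edge                                       # Eq. (7): residual fusion

def fft_high(x, radius):
    """High-pass filter: zero all 2D frequencies within `radius` of DC (assumed mask shape)."""
    h, w = x.shape[-2:]
    spec = np.fft.fftshift(np.fft.fft2(x), axes=(-2, -1))
    yy, xx = np.ogrid[:h, :w]
    dist = np.hypot(yy - h // 2, xx - w // 2)
    spec = spec * (dist > radius)
    return np.fft.ifft2(np.fft.ifftshift(spec, axes=(-2, -1))).real

def feem_high_frequency(f1, radius=2):
    """Eq. (8): ReLU applied to the high-frequency part of the fused low-level feature."""
    return np.maximum(fft_high(f1, radius), 0.0)
```

With this mask, a spatially constant feature map has no high-frequency content and yields an all-zero response, while a step edge produces positive responses near the transition, which is the behavior the FEEM relies on for boundary cues.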
Unlike traditional self-attention mechanisms that equally consider all spatial positions, the SAAM incorporates the semantic information $M$ of camouflaged objects (i.e., $\mathit{cam}_i$ in Fig. 1, which is rich in semantic cues of camouflaged targets) and the edge-guided features $E$ (i.e., $E$ in Fig. 1, which contains boundary information) to achieve task-specific guided attention modeling. Specifically, given an input feature map $x \in \mathbb{R}^{B \times C \times H \times W}$ (i.e., the $f_i$ in Section 3.1), we first apply convolutions to extract the query (Q), key (K), and value (V) features, thereby reducing computational cost. Then, we incorporate external guidance into the attention modeling: the semantic information $M$ of camouflaged objects is applied to the query vectors (emphasizing the structure of camouflaged targets), while the edge enhancement features $E$ are applied to the key vectors (highlighting boundary information), yielding:

$$Q = \mathrm{Norm}\big(\mathrm{CNN}(x) \otimes M\big), \tag{10}$$

$$K = \mathrm{Norm}\big(\mathrm{CNN}(x) \otimes E\big), \tag{11}$$

$$V = \mathrm{CNN}(x). \tag{12}$$

Here, $\mathrm{CNN}$ denotes convolution, $\otimes$ denotes element-wise multiplication, and $\mathrm{Norm}$ denotes vector normalization. Subsequently, $Q$, $K$, and $V$ are reshaped from $[B, C, H, W]$ to the token representation $[B, HW, C]$. To further reduce memory consumption, we adopt an approximate attention computation strategy: the transposed key features are first processed with softmax, then multiplied by the value vectors, and finally multiplied by the query vectors. The computation process is as follows:

$$A = \mathrm{Softmax}(K^{\top}), \tag{13}$$

$$AV = A \cdot V, \tag{14}$$

$$\mathrm{Output} = Q \cdot AV. \tag{15}$$

Here, $K^{\top}$ denotes the transpose over the last two axes and $\cdot$ denotes batched matrix multiplication; in Fig. 3, $\oplus$ denotes element-wise addition. With these shapes, $A$ is $[B, C, HW]$ and $AV$ is only $[B, C, C]$, so no $HW \times HW$ attention map is ever formed and the cost grows linearly with the number of tokens $HW$. The output features are reshaped to restore the spatial structure and fused with the original input features through a residual connection.

Figure 3: The SAAM introduces semantic information of camouflaged objects into a lightweight attention framework to highlight camouflaged regions, while incorporating edge enhancement features to emphasize boundary information, thereby enabling the model to focus on structural and boundary details of camouflaged objects at high resolution.

3.4. Coarse-Guided Local Refinement Module

As shown in Fig. 4, we propose the CGLRM. Given an input feature map $x \in \mathbb{R}^{B \times C \times H \times W}$, we first apply Channel Attention (CA) and Spatial Attention (SA) to extract attention-enhanced features:

$$x_{\text{ca}} = \mathrm{CA}(x) \otimes x, \tag{16}$$

$$g = \mathrm{SA}(x_{\text{ca}}) \otimes x_{\text{ca}}. \tag{17}$$

Here, $\otimes$ denotes element-wise multiplication. The attention-enhanced features $g$ provide coarse-grained spatial guidance for the target. Next, we spatially divide the input feature $x$ into four non-overlapping sub-regions:

$$\{x_i\}_{i=1}^{4} = \mathrm{SpatialSplit}(x). \tag{18}$$

Figure 4: The CGLRM employs channel and spatial attention to obtain global guidance and performs local refinement through $2 \times 2$ spatial partitioning, thereby ensuring structural consistency and boundary integrity.

The attention-enhanced features $g$ are divided in the same manner into $\{g_i\}_{i=1}^{4}$, ensuring that each local region is equipped with the corresponding global guidance information. This spatial partitioning strategy suppresses irrelevant interference by introducing global guidance into the corresponding regions; meanwhile, it maintains the independence of each region while enhancing overall structural coherence and the ability to restore fine details. Each sub-region is refined as follows, where $x'_i$ denotes the refined sub-region:

$$x'_i = \mathrm{LocalRefine}\big(x_i \otimes \sigma(g_i)\big), \quad i \in \{1, 2, 3, 4\}. \tag{19}$$

Here, $\sigma(\cdot)$ denotes the Sigmoid activation used to generate smooth guidance weights, and $\mathrm{LocalRefine}$ denotes a convolution operation. All local refinement results are concatenated into a complete feature map:

$$x_{\text{local}} = \mathrm{C}\big(\{x'_i\}_{i=1}^{4}\big). \tag{20}$$

Subsequently, it is fused with the attention-enhanced features:

$$x_{\text{fuse}} = \mathrm{CNN}\big(\mathrm{C}(x_{\text{local}}, g)\big), \tag{21}$$

where $\mathrm{CNN}$ denotes convolution and $\mathrm{C}$ denotes feature concatenation. The fused features are finally passed through the Output Enhancement Module to generate the final output:

$$\mathrm{Output} = \mathrm{CNN}(x_{\text{fuse}}) \oplus x, \tag{22}$$

where $\mathrm{CNN}$ denotes convolution and $\oplus$ denotes element-wise addition. This design explicitly models local structures under global guidance, improving boundary integrity and structural consistency.

3.5. Loss Function

In this model, the loss function from the boundary-guided camouflaged object detection network (BGNet) proposed by Sun et al. [22] is adopted to improve COD performance. The total loss function consists of two parts, supervised by the camouflaged object mask ($G_o$) and the camouflaged object boundary ($G_e$), respectively. For the camouflaged object mask, the weighted binary cross-entropy loss ($L_{\text{WBCE}}$) and the weighted IoU loss ($L_{\text{WIoU}}$) are combined, following the approach of Wei et al. [23]. For boundary prediction, the Dice loss $L_{\text{dice}}$ [24] is used as the boundary supervision signal. Therefore, the total loss function is defined as follows:

$$L_{\text{total}} = \sum_{i=1}^{4}\big[L_{\text{WBCE}}(O_i, G_o) + L_{\text{WIoU}}(O_i, G_o)\big] + L_{\text{WBCE}}(M_1, G_o) + L_{\text{WIoU}}(M_1, G_o) + \lambda L_{\text{dice}}(O_e, G_e), \tag{23}$$

where $\lambda$ is a weighting parameter used to balance mask supervision and edge supervision. In the experiments, $\lambda$ is set to 5.

4. EXPERIMENT

4.1. Implementation Details

In this study, the model is implemented in the PyTorch framework and trained and tested on an NVIDIA GeForce RTX 5000 GPU. During training, all input images are uniformly resized to a resolution of 521 × 521. The Adam optimizer [25] is employed, with training run for 25 epochs and a batch size of 4.
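Returning to the training objective of Section 3.5, Eq. (23) might be sketched as follows in NumPy for a single image. The paper fixes only the overall structure and $\lambda = 5$; the boundary-aware pixel weighting below (a 31 × 31 mean filter scaled by 5, the form commonly associated with Wei et al. [23]), the Dice smoothing term, and all function names are assumptions for illustration rather than the authors' code.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def box_mean(mask, k=31):
    """k x k mean filter, stride 1, zero padding, on an [H, W] array (integral image)."""
    h, w = mask.shape
    padded = np.pad(mask, k // 2)
    ii = np.pad(padded.cumsum(0).cumsum(1), ((1, 0), (1, 0)))
    total = ii[k:k + h, k:k + w] - ii[:h, k:k + w] - ii[k:k + h, :w] + ii[:h, :w]
    return total / (k * k)

def structure_loss(logits, mask, k=31, alpha=5.0):
    """Weighted BCE + weighted IoU; the weighting scheme (k, alpha) is an assumption."""
    weight = 1.0 + alpha * np.abs(box_mean(mask, k) - mask)  # larger near boundaries
    # Numerically stable binary cross-entropy on logits.
    bce = np.maximum(logits, 0) - logits * mask + np.log1p(np.exp(-np.abs(logits)))
    wbce = (weight * bce).sum() / weight.sum()
    prob = sigmoid(logits)
    inter = (prob * mask * weight).sum()
    union = ((prob + mask) * weight).sum()
    wiou = 1.0 - (inter + 1.0) / (union - inter + 1.0)
    return wbce + wiou

def dice_loss(prob, target, eps=1.0):
    """A common Dice form; the smoothing term eps is an assumption."""
    inter = (prob * target).sum()
    return 1.0 - (2.0 * inter + eps) / (prob.sum() + target.sum() + eps)

def total_loss(outputs, m1, edge_logits, g_o, g_e, lam=5.0):
    """Eq. (23): four decoder outputs O_1..O_4, the CLIP mask M_1, and the edge map O_e."""
    loss = sum(structure_loss(o, g_o) for o in outputs)
    loss += structure_loss(m1, g_o)
    loss += lam * dice_loss(sigmoid(edge_logits), g_e)
    return loss
```

For example, calling `total_loss([o1, o2, o3, o4], m1, oe, g_o, g_e)` with logit maps that agree with the ground truth gives a value close to zero, and the value grows as the predictions diverge from $G_o$ and $G_e$.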
The initial learning rate is set to 0.0001, and a poly learning rate decay strategy is adopted with the decay power set to 0.9 to dynamically adjust the learning rate. During testing, the input images are also resized to 521 × 521, and after inference, the outputs are restored to their original resolution for model performance evaluation.

4.2. Datasets

To assess the effectiveness of the proposed approach, we evaluate it on three standard camouflaged object detection benchmarks: CAMO [26], COD10K [2], and NC4K [27]. LGSAN is trained on the training splits of CAMO and COD10K, and evaluated on their official test sets as well as the held-out NC4K test set, providing a comprehensive assessment of both detection performance and generalization.

4.3. Evaluation Metrics

In image-level camouflaged object detection tasks, the commonly used evaluation metrics include Mean Absolute Error (MAE, $M$) [28], weighted F-measure ($F_\beta^w$) [29], Structural Similarity Index ($S_\alpha$) [30], and mean E-measure ($E_\phi$) [31].

4.4. Comparison with State-of-the-Art Methods

To thoroughly assess the effectiveness of the proposed LGSAN, we perform extensive comparisons with representative recent methods, including BGNet [22], ZoomNet [10], EVP [32], EAMNet [33], FSPNet [9], FEDER [1], DCNet [34], SARNet [35], DINet [36], SDRNet [37], VSCode [38], FSEL [39], CamoFormer [8], IPNet [40], CODdiff [41], ESNet [42], SENet [43], KCNet [44], UGDNet [45], BDCLNet [46], and CGCOD [14], on three mainstream COD benchmarks (CAMO, COD10K, and NC4K).

4.4.1. Quantitative Evaluation

Table 1 presents the quantitative comparison between LGSAN and existing methods in terms of four commonly used metrics: $S_\alpha$, $E_\phi$, $F_\beta^w$, and $M$. It can be observed that LGSAN achieves consistently competitive performance across almost all metrics on the three datasets.
(1) On CAMO, LGSAN ranks first on $E_\phi$, $F_\beta^w$, and $M$, and achieves a score of 0.896 on $S_\alpha$, tying with CGCOD for the best result.
(2) On COD10K, LGSAN achieves the best performance across all four metrics, with $S_\alpha$ = 0.894, $F_\beta^w$ = 0.833, and $M$ = 0.018.
(3) On NC4K, LGSAN ties with CGCOD for the best results on $E_\phi$, $F_\beta^w$, and $M$, while showing only a marginal gap of 0.001 on $S_\alpha$.

Overall, LGSAN not only maintains structural consistency but also significantly enhances boundary details, demonstrating strong generalization and robustness.

4.4.2. Qualitative Evaluation

To provide a more intuitive validation of the segmentation performance of the proposed LGSAN, qualitative visual analyses are conducted from two perspectives:

(1) Evolution of outputs across decoding stages. Fig. 5 illustrates the predictions and attention distributions of LGSAN at different decoding stages. Specifically, $O_1$ to $O_4$ denote the outputs from the four decoding stages, $M_1$ is the semantic mask generated by CLIP, and $O_e$ is the edge prediction from FEEM. As shown, the shallow outputs ($O_4$, $O_3$) can roughly localize targets but still suffer from blurry boundaries. With progressive decoding ($O_2$, $O_1$), the network gradually suppresses background noise and refines object contours, enabling a transition from coarse localization to structurally detailed, high-quality predictions. In this process, $M_1$ provides stable global attention, while $O_e$ delivers explicit boundary cues; their synergy enhances the structural consistency and boundary integrity of the final results.

Figure 5: The heatmaps of LGSAN from $O_4$ to $O_1$ illustrate the progressive refinement process, while the heatmaps of $M_1$ and $O_e$ are also presented for comparison.

(2) Comparison with representative SOTA methods. Fig. 6 presents comparisons between LGSAN and representative methods, including VSCode, FSEL, CamoFormer, and BGNet, under diverse challenging scenarios. It can be observed that LGSAN consistently produces masks with clear boundaries, coherent structures, and rich details across various target categories (e.g., insects, crustaceans, camouflaged animals). Even in cases where target and background textures are highly similar, LGSAN accurately separates the target regions. In contrast, other methods often suffer from fractures, boundary omissions, or excessive smoothing in fine parts such as limbs and antennae. These visual results demonstrate that LGSAN maintains superior target consistency and detail fidelity under complex backgrounds and low-contrast conditions, yielding predictions closest to the ground truth (GT).

4.5. Ablation Study

To comprehensively validate the contributions of each key module to camouflaged object segmentation, we conducted step-by-step ablation studies on the COD10K dataset (see Table 2). Starting from the backbone network as the baseline, we progressively introduced CLIP, FEEM, and the jointly designed SAAM+CGLRM module, thereby systematically analyzing the performance improvements and underlying mechanisms of each component.

4.5.1. Baseline (B)

The baseline model adopts the PVT-v2-b3 backbone coupled with a simple CNN decoder (from SARNet-H [35]), relying solely on visual features for segmentation without explicit semantic guidance or structural enhancement. On the COD10K dataset, this model achieves an $S_\alpha$ of 0.831, with limited boundary accuracy and object consistency. This indicates that in camouflaged object scenarios characterized by complex backgrounds and extremely weak saliency, relying solely on the visual backbone leads to attention drift and boundary blurring issues.
Table 1: Quantitative comparison with state-of-the-art methods for COD on 3 benchmarks using 4 widely used evaluation metrics ($S_\alpha$, $E_\phi$, $F_\beta^w$, $M$). "↑" / "↓" indicates that larger/smaller is better. The best results are in bold; **** means the model was not tested on this dataset.

| Method | Pub./Year | CAMO $S_\alpha$↑ | CAMO $E_\phi$↑ | CAMO $F_\beta^w$↑ | CAMO $M$↓ | COD10K $S_\alpha$↑ | COD10K $E_\phi$↑ | COD10K $F_\beta^w$↑ | COD10K $M$↓ | NC4K $S_\alpha$↑ | NC4K $E_\phi$↑ | NC4K $F_\beta^w$↑ | NC4K $M$↓ |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| BGNet | IJCAI'22 | 0.812 | 0.870 | 0.749 | 0.073 | 0.831 | 0.901 | 0.722 | 0.033 | 0.851 | 0.907 | 0.788 | 0.044 |
| ZoomNet | CVPR'22 | 0.820 | 0.892 | 0.752 | 0.066 | 0.838 | 0.911 | 0.729 | 0.029 | 0.853 | 0.912 | 0.784 | 0.059 |
| EVP | CVPR'23 | 0.846 | 0.895 | 0.777 | 0.059 | 0.843 | 0.907 | 0.742 | 0.029 | **** | **** | **** | **** |
| EAMNet | ICME'23 | 0.831 | 0.890 | 0.763 | 0.064 | 0.839 | 0.907 | 0.733 | 0.029 | 0.862 | 0.916 | 0.801 | 0.040 |
| FSPNet | CVPR'23 | 0.856 | 0.899 | 0.799 | 0.050 | 0.851 | 0.895 | 0.735 | 0.026 | 0.879 | 0.915 | 0.816 | 0.035 |
| FEDER | CVPR'23 | 0.822 | 0.886 | 0.809 | 0.067 | 0.851 | 0.917 | 0.752 | 0.028 | 0.863 | 0.917 | 0.827 | 0.042 |
| DCNet | TCSVT'23 | 0.870 | 0.922 | 0.831 | 0.050 | 0.873 | 0.934 | 0.810 | 0.022 | **** | **** | **** | **** |
| SARNet | TCSVT'23 | 0.868 | 0.927 | 0.828 | 0.047 | 0.864 | 0.931 | 0.777 | 0.024 | 0.886 | 0.937 | 0.842 | 0.032 |
| DINet | TMM'24 | 0.821 | 0.874 | 0.790 | 0.068 | 0.832 | 0.903 | 0.761 | 0.031 | 0.856 | 0.909 | 0.824 | 0.043 |
| SDRNet | KBS'24 | 0.872 | 0.924 | 0.826 | 0.049 | 0.871 | 0.924 | 0.785 | 0.023 | 0.889 | 0.934 | 0.842 | 0.032 |
| VSCode | CVPR'24 | 0.873 | 0.925 | 0.844 | 0.046 | 0.869 | 0.931 | 0.806 | 0.023 | 0.891 | 0.935 | 0.863 | 0.032 |
| FSEL | ECCV'24 | 0.885 | 0.942 | 0.857 | 0.040 | 0.877 | 0.937 | 0.799 | 0.021 | 0.892 | 0.941 | 0.852 | 0.030 |
| CamoFormer | TPAMI'24 | 0.876 | 0.930 | 0.856 | 0.043 | 0.838 | 0.916 | 0.753 | 0.029 | 0.888 | 0.937 | 0.863 | 0.031 |
| IPNet | EAAI'24 | 0.864 | 0.924 | 0.836 | 0.047 | 0.850 | 0.922 | 0.785 | 0.026 | **** | **** | **** | **** |
| CODdiff | KBS'25 | 0.839 | 0.911 | 0.802 | 0.054 | 0.837 | 0.919 | 0.759 | 0.026 | 0.865 | 0.926 | 0.827 | 0.036 |
| ESNet | IVC'25 | 0.860 | 0.918 | 0.846 | 0.050 | 0.864 | 0.933 | 0.803 | 0.024 | 0.880 | 0.931 | 0.856 | 0.035 |
| SENet | TIP'25 | 0.888 | 0.932 | 0.847 | 0.039 | 0.865 | 0.925 | 0.780 | 0.024 | 0.889 | 0.933 | 0.843 | 0.032 |
| KCNet | EAAI'25 | 0.882 | 0.934 | 0.847 | 0.039 | 0.865 | 0.925 | 0.780 | 0.024 | 0.889 | 0.933 | 0.843 | 0.032 |
| UGDNet | TMM'25 | 0.888 | 0.942 | 0.865 | 0.038 | 0.885 | 0.947 | 0.822 | 0.019 | 0.895 | 0.943 | 0.862 | 0.028 |
| BDCLNet | KBS'25 | 0.881 | 0.929 | 0.845 | 0.039 | 0.869 | 0.935 | 0.790 | 0.022 | 0.888 | 0.932 | 0.844 | 0.032 |
| CGCOD | ACMMM'25 | **0.896** | 0.947 | 0.864 | 0.036 | 0.890 | 0.948 | 0.824 | **0.018** | **0.904** | **0.949** | **0.869** | **0.026** |
| LGSAN | Ours | **0.896** | **0.949** | **0.870** | **0.034** | **0.894** | **0.950** | **0.833** | **0.018** | 0.903 | **0.949** | **0.869** | **0.026** |

Table 2: Ablation study of LGSAN on COD10K. "B" denotes the baseline composed of PVT-v2-b3 and a simple CNN, "C" indicates the use of CLIP for generating the $M_1$ mask, "E" represents the FEEM module, and "SC" refers to the SAAM and CGLRM.

| Model | Method | $S_\alpha$↑ | $E_\phi$↑ | $F_\beta^w$↑ | $M$↓ |
|---|---|---|---|---|---|
| a | B | 0.831 | 0.905 | 0.706 | 0.032 |
| b | B + C | 0.890 | 0.947 | 0.825 | 0.019 |
| c | B + C + E | 0.893 | 0.948 | 0.830 | 0.018 |
| d | B + C + E + SC | 0.894 | 0.950 | 0.833 | 0.018 |

4.5.2. Effect of CLIP (B → B+C)

By incorporating the semantic mask $M_1$ generated by CLIP into the baseline (model b), the network is endowed with task-relevant linguistic priors during the feature extraction stage, thereby guiding attention to focus on potential camouflaged regions. This guidance effectively reduces interference from irrelevant background, leading to an improvement of $S_\alpha$ to 0.890, $E_\phi$ to 0.947, and an increase of 0.119 in $F_\beta^w$ (from 0.706 to 0.825), indicating a significant enhancement in the model's ability to capture the overall object structure.

4.5.3. Effect of Fourier Edge Enhancement Module (B+C → B+C+E)

By further introducing the FEEM (model c), edge enhancement features are explicitly extracted through multi-scale feature fusion and frequency-domain high-frequency enhancement. With FEEM incorporated, $F_\beta^w$ increases from 0.825 to 0.830, and $M$ decreases from 0.019 to 0.018, demonstrating the effectiveness of FEEM in enhancing boundary detail quality.
Figure 6: Compared with existing methods, the proposed approach achieves superior performance in terms of localization accuracy, structural integrity, and boundary details across multiple categories of camouflaged objects.

4.5.4. Effect of Structure-Aware Attention Module and Coarse-Guided Local Refinement Module (B+C+E → B+C+E+SC)

Finally, the SAAM and the CGLRM are introduced on top of model c (model d). Specifically, the SAAM, guided by semantic and edge information, enhances cross-scale structural and boundary modeling, but on its own it struggles to ensure overall regional consistency. The CGLRM generates coarse global guidance through global attention and performs local refinement via spatial partitioning, thereby improving boundary integrity and regional coherence. Since the two modules are complementary in design, they are introduced as a whole in the ablation study. Experimental results show that this combination improves $S_\alpha$ from 0.893 to 0.894, $E_\phi$ from 0.948 to 0.950, and $F_\beta^w$ from 0.830 to 0.833, while $M$ remains at 0.018. Model d therefore matches or exceeds every other variant on all four metrics, further validating the effectiveness of this module combination.

5. Conclusion

This paper proposes a Language-Guided Structure-Aware Network (LGSAN), which integrates CLIP, the FEEM, the SAAM, and the CGLRM. The proposed framework effectively addresses the challenges of camouflaged object detection, including high similarity between objects and backgrounds and missing local structures, achieving highly competitive performance on multiple benchmark datasets. However, the method still has certain limitations: the overall network structure is relatively complex, leading to high computational overhead. Future work may focus on designing more lightweight semantic guidance and boundary modeling mechanisms to further reduce inference costs and improve practical applicability.

6. Declaration of competing interest

The authors declare that they have no competing financial interests or personal relationships that could influence the work reported in this paper.

7. Data Availability

The data supporting the findings of this study are available online.

8. Acknowledgements

This work was supported by the National Natural Science Foundation of China (Grants 61976158 and 61673301) and the Chongqing Municipal Science and Technology Bureau, China (Grant No. CSTB2025TIAD-qykjggX0189).
