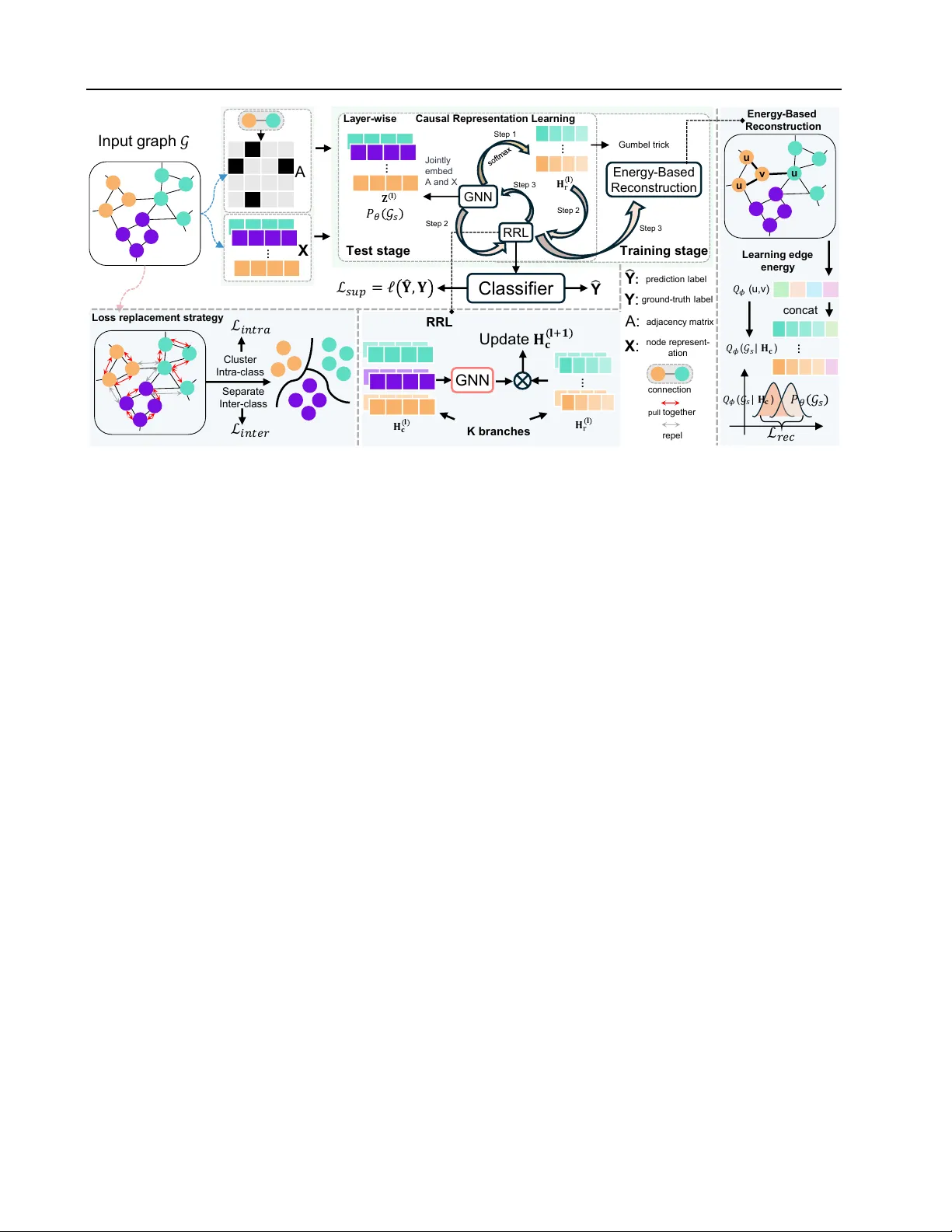

CGRL: Causal-Guided Representation Learning for Graph Out-of-Distribution Generalization

Graph Neural Networks (GNNs) have achieved impressive performance in graph-related tasks. However, they suffer from poor generalization on out-of-distribution (OOD) data, as they tend to learn spurious correlations. Such correlations present a phenom…

Authors: Bowen Lu, Liangqiang Yang, Teng Li