Accelerating Diffusion-based Video Editing via Heterogeneous Caching: Beyond Full Computing at Sampled Denoising Timestep

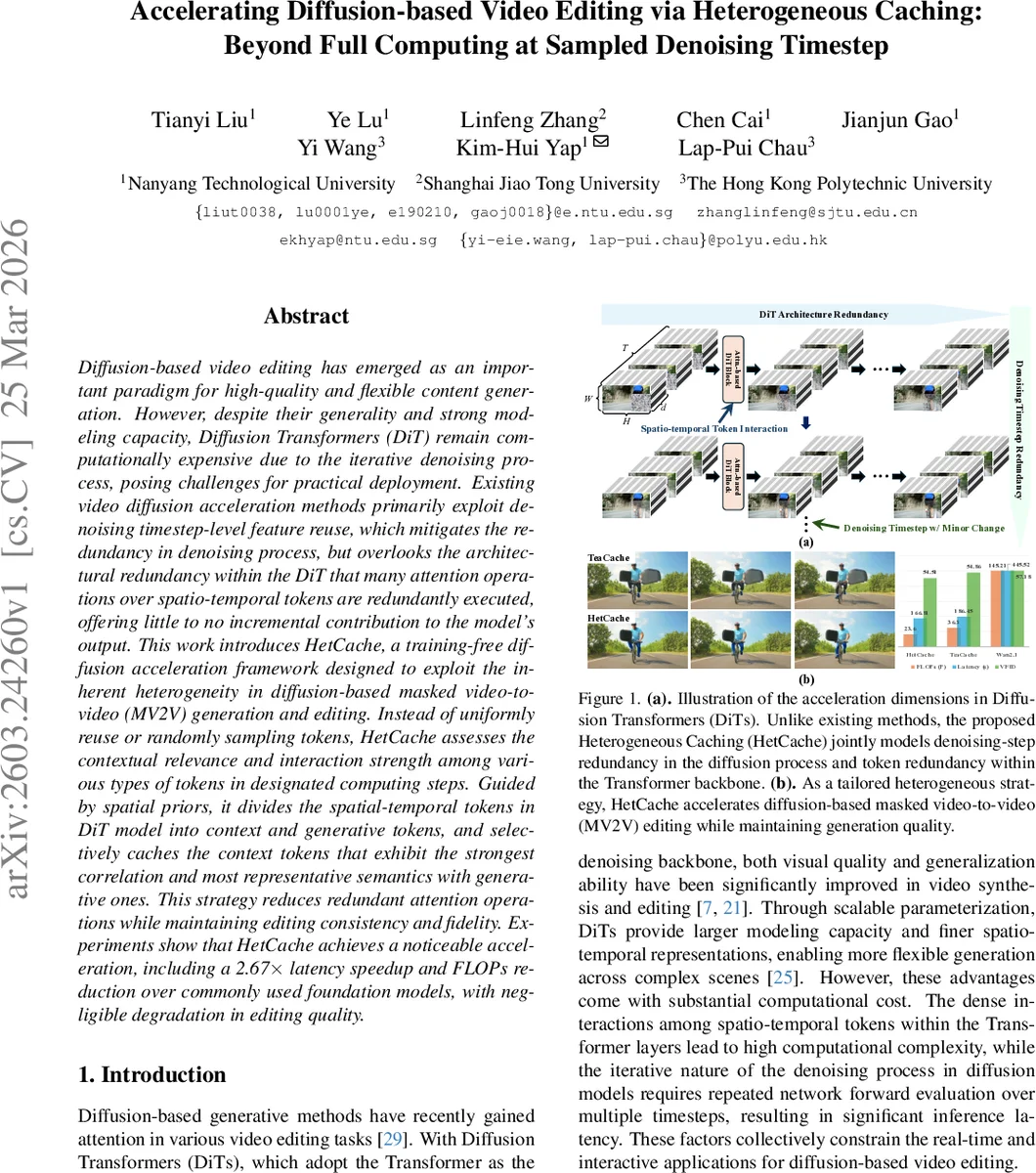

Diffusion-based video editing has emerged as an important paradigm for high-quality and flexible content generation. However, despite their generality and strong modeling capacity, Diffusion Transformers (DiT) remain computationally expensive due to the iterative denoising process, posing challenges for practical deployment. Existing video diffusion acceleration methods primarily exploit denoising timestep-level feature reuse, which mitigates the redundancy in denoising process, but overlooks the architectural redundancy within the DiT that many attention operations over spatio-temporal tokens are redundantly executed, offering little to no incremental contribution to the model output. This work introduces HetCache, a training-free diffusion acceleration framework designed to exploit the inherent heterogeneity in diffusion-based masked video-to-video (MV2V) generation and editing. Instead of uniformly reuse or randomly sampling tokens, HetCache assesses the contextual relevance and interaction strength among various types of tokens in designated computing steps. Guided by spatial priors, it divides the spatial-temporal tokens in DiT model into context and generative tokens, and selectively caches the context tokens that exhibit the strongest correlation and most representative semantics with generative ones. This strategy reduces redundant attention operations while maintaining editing consistency and fidelity. Experiments show that HetCache achieves a noticeable acceleration, including a 2.67$\times$ latency speedup and FLOPs reduction over commonly used foundation models, with negligible degradation in editing quality.

💡 Research Summary

The paper addresses the computational inefficiency of diffusion‑based video editing, particularly when using Diffusion Transformers (DiT) for masked video‑to‑video (MV2V) tasks. While prior acceleration methods focus on reusing features across denoising timesteps, they ignore the redundancy that exists inside the transformer’s attention layers, where many spatio‑temporal tokens contribute little to the final output. To tackle both sources of redundancy, the authors propose HetCache, a training‑free caching framework that exploits heterogeneity across two dimensions: (1) timestep‑level redundancy and (2) token‑level redundancy.

First, HetCache estimates how much the model’s output is expected to change between consecutive timesteps by computing a lightweight L1 distance between timestep‑embedding‑modulated inputs. By accumulating this distance, each timestep is classified as a “Full‑Compute” step (large change, requires full forward pass and cache refresh), a “Partial‑Compute” step (moderate change, allows a lightweight refresh), or a “Reuse” step (tiny change, simply reuses previously cached results).

During a Full‑Compute step, the method uses the editing mask to split all tokens into three groups: Context tokens (unmasked background), Margin tokens (tokens around the mask boundary), and Generative tokens (masked region that must be synthesized). Context tokens are the main source of redundant computation. HetCache clusters these tokens in semantic space, selects the most representative token from each cluster, and evaluates the attention interaction strength between these representatives and the Generative tokens. Only the most informative Context tokens are cached; Margin and Generative tokens are always processed fully to preserve boundary fidelity and content quality.

In subsequent Partial‑Compute steps, the cached representative Context tokens replace the full set of Context tokens, dramatically reducing the number of self‑attention operations while still providing sufficient semantic guidance for the Generative tokens. An exponential moving average updates the cache gradually, allowing it to adapt to slow changes over time.

Experiments are conducted on popular DiT backbones (e.g., DiT‑B/2, DiT‑L) using the VACE‑Benchmark and VP‑Bench datasets, covering a variety of MV2V scenarios such as text‑guided inpainting, object removal, and style transfer. HetCache achieves an average 2.67× reduction in inference latency and roughly 30% fewer FLOPs, with negligible impact on visual quality: VFID, PSNR, and SSIM differences are all below 0.01 compared to the full‑compute baseline. The method is robust across different mask shapes, prompt complexities, and video lengths.

Ablation studies explore the effect of the cache‑update threshold Δ and the number of semantic clusters K, finding that moderate Δ values and K≈8 provide the best trade‑off between speed and quality. The authors also discuss limitations, such as handling multiple or ambiguous ROIs and the modest overhead of clustering, and suggest future directions like online clustering or meta‑learning‑based token importance predictors.

In summary, HetCache introduces a principled way to quantify and exploit both temporal and token heterogeneity in diffusion‑based video editing, delivering substantial speed‑ups without any additional training. This makes real‑time, interactive video editing with high‑quality diffusion models far more practical for deployment on consumer hardware and edge devices.

Comments & Academic Discussion

Loading comments...

Leave a Comment