Embracing Heteroscedasticity for Probabilistic Time Series Forecasting

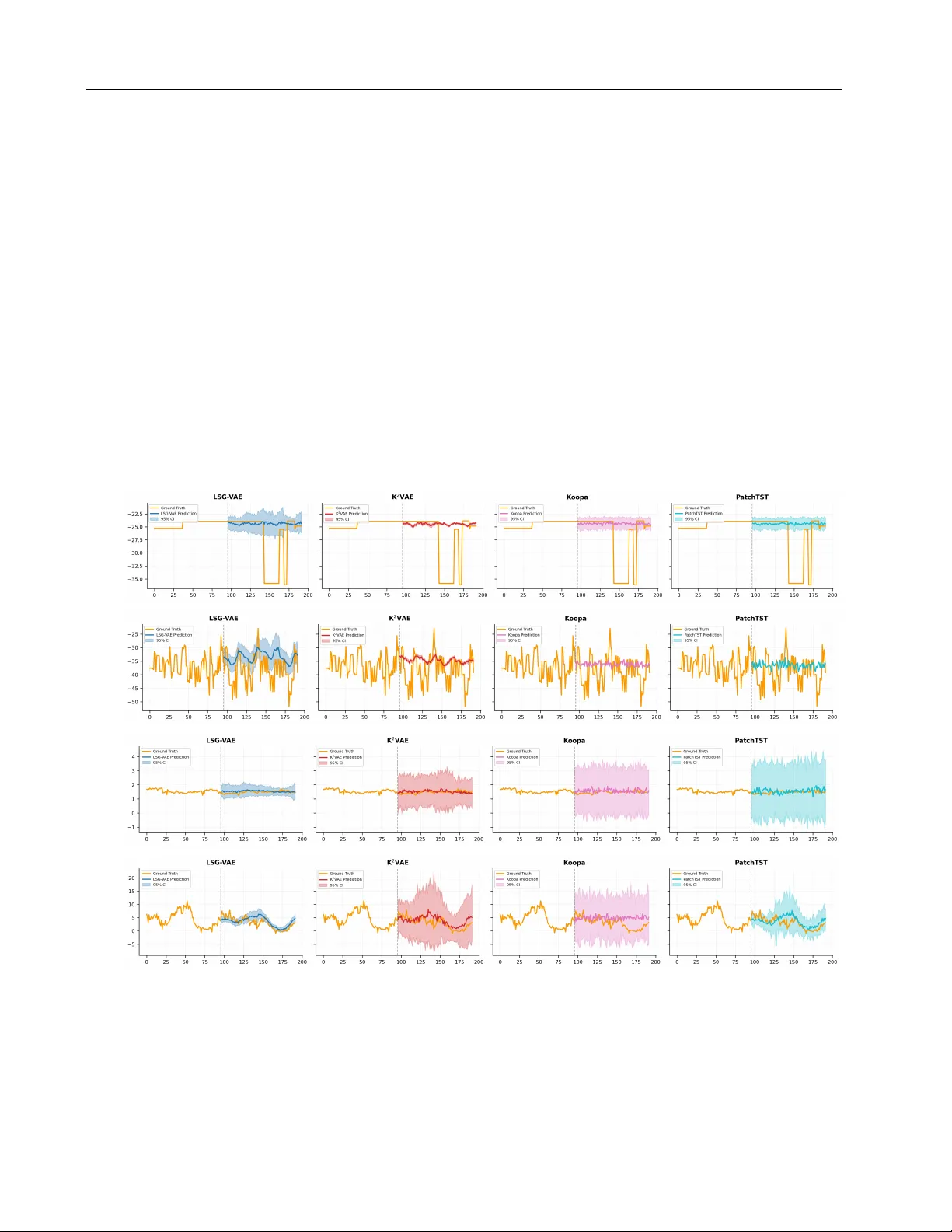

Probabilistic time series forecasting (PTSF) aims to model the full predictive distribution of future observations, enabling both accurate forecasting and principled uncertainty quantification. A central requirement of PTSF is to embrace heteroscedas…
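To make the term concrete: a series is heteroscedastic when its noise variance changes over time, so a single shared variance misstates the uncertainty at most time steps. The sketch below is not the authors' method. It assumes a Gaussian predictive distribution and uses hypothetical toy values (`y`, `mu`, `sigma_homo`, `sigma_hetero`) only to show why a time-varying scale can yield a lower negative log-likelihood than a constant one.

```python
import math


def gaussian_nll(y, mu, sigma):
    """Average negative log-likelihood of y_t under N(mu_t, sigma_t^2)."""
    total = 0.0
    for y_t, mu_t, s_t in zip(y, mu, sigma):
        total += 0.5 * math.log(2 * math.pi * s_t ** 2)
        total += (y_t - mu_t) ** 2 / (2 * s_t ** 2)
    return total / len(y)


# Hypothetical toy series: small residuals early, large residuals late.
y = [0.1, -0.1, 0.1, -0.1, 2.0, -2.0, 2.0, -2.0]
mu = [0.0] * len(y)  # assumed point forecast, identical in both cases

# Homoscedastic assumption: one shared scale (RMS residual over the series).
shared = math.sqrt(sum((a - b) ** 2 for a, b in zip(y, mu)) / len(y))
sigma_homo = [shared] * len(y)

# Heteroscedastic assumption: the scale follows the local noise level.
sigma_hetero = [0.1] * 4 + [2.0] * 4

nll_homo = gaussian_nll(y, mu, sigma_homo)
nll_hetero = gaussian_nll(y, mu, sigma_hetero)

print(f"homoscedastic NLL:   {nll_homo:.4f}")
print(f"heteroscedastic NLL: {nll_hetero:.4f}")
```

With the same point forecast in both cases, the only difference is the scale, so the gap in likelihood comes entirely from how well the predicted uncertainty tracks the changing noise level.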

Authors: Yijun Wang, Qiyuan Zhuang, Xiu-Shen Wei