Modeling Spatiotemporal Neural Frames for High Resolution Brain Dynamics

Capturing dynamic spatiotemporal neural activity is essential for understanding large-scale brain mechanisms. Functional magnetic resonance imaging (fMRI) provides high-resolution cortical representations that form a strong basis for characterizing f…

Authors: Wanying Qu, Jianxiong Gao, Wei Wang

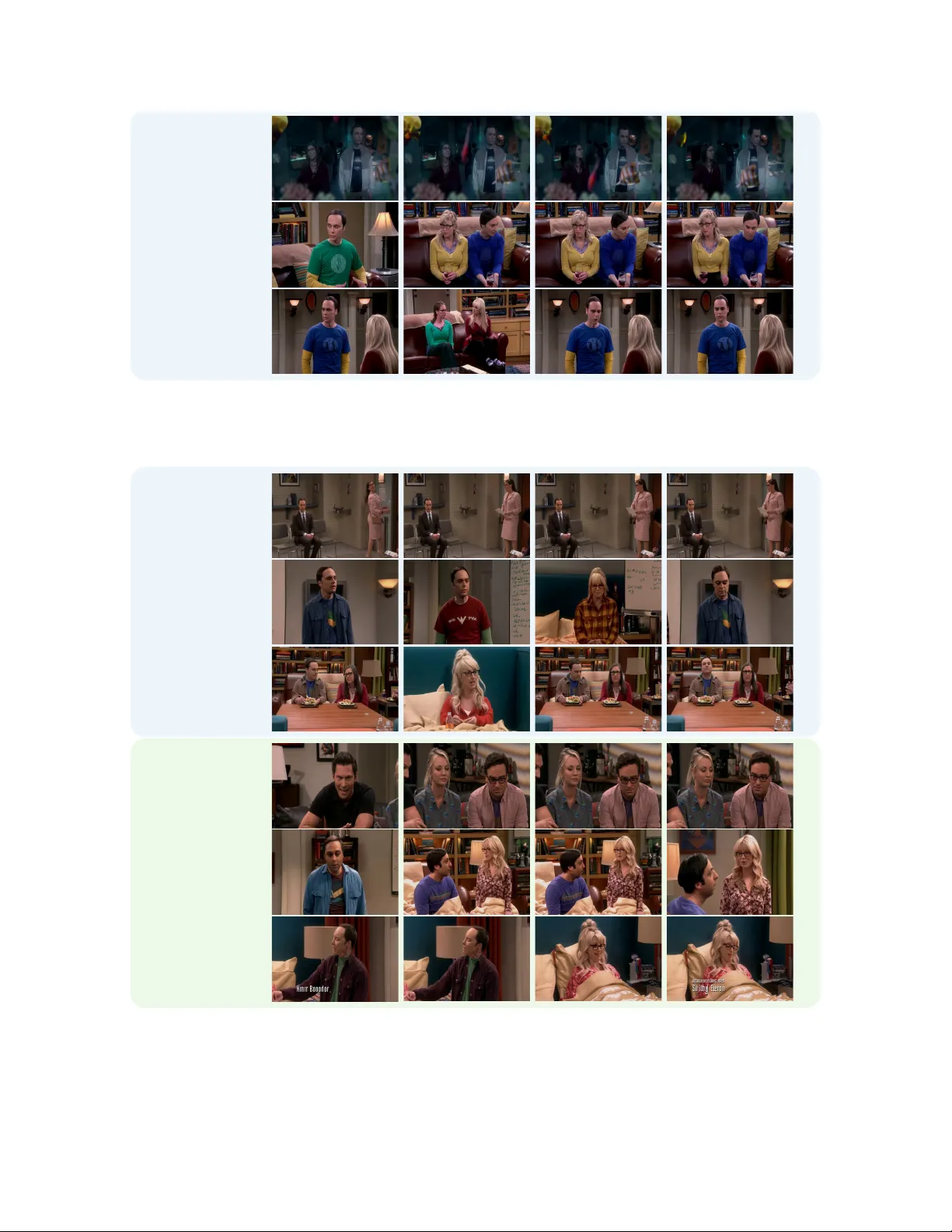

Wanying Qu¹, Jianxiong Gao¹, Wei Wang²,³, Yanwei Fu¹,³,✉
¹Fudan University, ²Southern University of Science and Technology, ³Shanghai Innovation Institute
{wyqu24,jxgao22,yanweifu}@fudan.edu.cn, 12445027@mail.sustech.edu.cn

[Figure 1 panel labels: Vision Stimuli, Video Recon., EEG, Collect, fMRI Translation, fMRI InterRecon, time]

Figure 1. This paper reconstructs dynamic fMRI frames with high spatial detail and temporal coherence from EEG. We further introduce intermediate frame reconstruction (InterRecon), and use visual decoding as a downstream task to evaluate high-level semantic preservation.

Abstract

Capturing dynamic spatiotemporal neural activity is essential for understanding large-scale brain mechanisms. Functional magnetic resonance imaging (fMRI) provides high-resolution cortical representations that form a strong basis for characterizing fine-grained brain activity patterns. The high acquisition cost of fMRI limits large-scale applications, therefore making high-quality fMRI reconstruction a crucial task. Electroencephalography (EEG) offers millisecond-level temporal cues that complement fMRI. Leveraging this complementarity, we present an EEG-conditioned framework for reconstructing dynamic fMRI as continuous neural sequences with high spatial fidelity and strong temporal coherence at the cortical-vertex level. To address sampling irregularities common in real fMRI acquisitions, we incorporate a null-space intermediate-frame reconstruction, enabling measurement-consistent completion of arbitrary intermediate frames and improving sequence continuity and practical applicability. Experiments on the CineBrain dataset demonstrate superior vertex-wise reconstruction quality and robust temporal consistency across whole-brain and functionally specific regions.
The reconstructed fMRI also preserves essential functional information, supporting downstream visual decoding tasks. This work provides a new pathway for estimating high-resolution fMRI dynamics from EEG and advances multimodal neuroimaging toward more dynamic brain activity modeling.

✉ Corresponding author.

1. Introduction

Understanding brain dynamics requires capturing both high spatial detail and temporal continuity [1]. Functional magnetic resonance imaging (fMRI) measures neural activity through blood-oxygen-level–dependent (BOLD) signals and provides fine-grained spatial resolution for studying cognition and brain function [2, 3]. Each fMRI frame reflects an ongoing state of brain activity, and together these frames form a continuous temporal sequence. The high acquisition cost of fMRI limits large-scale applications, therefore making high-quality fMRI reconstruction a crucial task. However, conventional analyses and many reconstruction methods treat frames independently [4–6], overlooking the rich temporal dependencies that govern how neural patterns emerge, evolve, and interact. Modeling these dynamics is inherently challenging, as it requires reconstructing high-dimensional spatiotemporal sequences that remain both temporally coherent and spatially detailed.

This raises a central question: how can we reconstruct fMRI frames that are both temporally coherent and spatially detailed, capturing the brain's continuous dynamics rather than isolated snapshots? To address this challenge, we turn to electroencephalography (EEG), which captures neural activity with millisecond temporal resolution [7], complementing the spatial precision of fMRI. By leveraging EEG as a conditioning signal, we can guide the reconstruction of dynamic fMRI frames that preserve temporal continuity across frames.
Beyond reconstruction fidelity, a second critical question arises: how to evaluate the reconstructed fMRI, and more importantly, whether it can support meaningful downstream applications.

Existing approaches only partially address these challenges. Methods focusing on temporal resolution, such as ROI-based models [8], capture dynamic trajectories but operate at coarse spatial granularity, limiting interpretability for cortical mapping. Methods focusing on spatial resolution, including voxel-level reconstruction [5, 9, 10] and vertex-based fMRI translation models [6], preserve spatial detail but lack temporal coherence, leading to frame-wise artifacts and biologically implausible inconsistencies. Moreover, conventional evaluation paradigms rely on voxel- or vertex-level metrics such as MSE, SSIM [11], and PSNR [12], which cannot assess whether the reconstructed fMRI encodes meaningful neural representations usable for higher-level tasks.

To overcome these limitations, we propose a diffusion-transformer-based framework for EEG-conditioned fMRI reconstruction that addresses both temporal coherence and spatial resolution, as shown in Figure 1. Our key insight is to model brain activity as evolving spatiotemporal neural frames rather than independent snapshots, jointly capturing vertex-level spatial detail and temporal continuity within a diffusion-based generative process. Critically, we introduce a null-space constrained sampling mechanism that enables intermediate frame reconstruction (InterRecon) without retraining. InterRecon serves two important purposes.
First, it provides an intrinsic evaluation of temporal consistency, allowing us to assess whether the model can generate physiologically plausible transitions between known fMRI frames. Second, it addresses practical needs in real fMRI data processing, where missing or corrupted frames often occur during acquisition and interpolation is routinely required during preprocessing [13]. Beyond reconstruction metrics, we validate functional plausibility through a downstream visual decoding task, ensuring the reconstructed fMRI preserves task-relevant neural representations.

We demonstrate that this framework achieves both goals: reconstructing temporally coherent fMRI frames with high spatial fidelity while preserving functional information validated through downstream decoding tasks. Our contributions are threefold:

• We formulate EEG-to-fMRI reconstruction as a spatiotemporal pattern recognition and generation task, where the goal is to identify task-relevant neural dynamics from EEG and reconstruct corresponding fMRI frames. We introduce a diffusion transformer framework that reconstructs full fMRI sequences at vertex-level cortical resolution while explicitly modeling temporal dependencies and cortical spatial geometry to produce coherent and physiologically consistent representations.

• We develop a null-space constrained sampling mechanism to reconstruct intermediate frames at arbitrary temporal positions without retraining, offering practical utility in real fMRI processing scenarios.

• We conduct a comprehensive evaluation of the reconstructed fMRI, assessing quantitative metrics such as vertex-level fidelity, temporal coherence, and structural correspondence, as well as functional validity through a downstream visual decoding task.

2. Related Work

2.1. EEG-to-fMRI Translation

EEG-to-fMRI translation methods can be divided into ROI-level sequence modeling and voxel- or surface-level reconstruction.
ROI-based methods such as NeuroBOLT [8] reconstruct fMRI sequences at the parcel level. These methods naturally capture temporal continuity across frames but lose spatial precision because parcellation removes fine cortical detail. Voxel-level or cortical-level approaches, such as CNN-TC [9], CNN-TAG [5], adversarial models including E2fGAN and E2fNet [10], and CATD [6], operate in volumetric or cortical space. They achieve higher spatial fidelity but typically reconstruct each fMRI frame independently. As a result, these methods lack explicit modeling of temporal coherence across the sequence.

Evaluation protocols follow the same pattern. ROI-level methods mainly report correlation-based metrics between predicted and ground-truth time series. Voxel-level methods commonly rely on image reconstruction metrics such as MSE, correlation, SSIM, and PSNR. These metrics reflect reconstruction fidelity but do not reveal whether the reconstructed fMRI contains functional information that can support downstream applications such as intermediate-frame completion or neural decoding.

Our work addresses these gaps. We introduce an EEG-conditioned diffusion framework that models spatial detail and temporal consistency jointly at vertex-level resolution. We further incorporate a null-space-based strategy that enables measurement-consistent reconstruction of intermediate frames without retraining. More importantly, we evaluate whether the reconstructed fMRI preserves task-relevant neural representations that support downstream functional applications.

2.2. Diffusion Models

Denoising Diffusion Probabilistic Models (DDPMs) [14, 15] have demonstrated strong capabilities in modeling complex data distributions, achieving remarkable success in image generation [16].
Over time, diffusion models have evolved into a flexible probabilistic modeling framework whose iterative denoising process naturally captures multi-scale spatial structure and complex data manifolds while offering stable training dynamics. Subsequent works, such as Diffusion Transformers (DiT), have further scaled up diffusion architectures, enabling a new wave of powerful generative vision models [17–20]. This expansion has revealed diffusion's strong capacity for high-dimensional sequence modeling, controllable generation, and modality-conditioned synthesis. Beyond visual synthesis, diffusion models have also been extended to cross-modal distribution modeling, including brain-to-vision generation from fMRI signals [21–24], reflecting their suitability for learning structured relationships across heterogeneous data domains.

3. Method

3.1. Task Formulation

In this study, we aim to construct a generative framework that learns to reconstruct fMRI frames from EEG measurements. Let $X = \{x_1, x_2, \ldots, x_K\}$ denote the target fMRI sequence represented on the cortical surface (fsLR), with $X \in \mathbb{R}^{K \times N_v}$, where $K$ is the number of fMRI frames and each frame $x_k \in \mathbb{R}^{N_v}$ captures cortical activation across $N_v$ vertices. Let $S \in \mathbb{R}^{C \times T_s}$ denote the temporally aligned EEG recording, where $C$ is the number of electrodes and $T_s$ is the total number of EEG samples. Temporal alignment accounts for hemodynamic response delays following standard preprocessing protocols.

Sequence-Level fMRI Organization. We denote a continuous fMRI window of length $K_w$ as

$$X_{k_0:k_0+K_w-1} \in \mathbb{R}^{K_w \times N_v},$$

where each $x_k$ is a vertex-level cortical map. Unlike prior frame-by-frame approaches, we jointly model the entire $K_w$-frame sequence to capture temporal continuity.

Sequence-Level EEG Conditioning. We condition the generator on a sequence of EEG windows

$$S_{k_0:k_0+K_w-1} = \{s_{k_0}, \ldots, s_{k_0+K_w-1}\},$$

where each fMRI frame $x_k$ is temporally aligned with a corresponding EEG window $s_k \in \mathbb{R}^{C \times W}$, and $W$ is the window length in EEG samples.

Our goal is to learn a conditional generative prior $p_\theta(X \mid S)$ that supports two tasks under a unified formulation:

1. EEG-to-fMRI translation. Given an aligned EEG segment $\{s_{k_0}, \ldots, s_{k_0+K_w-1}\}$, reconstruct the corresponding vertex-level fMRI sub-sequence:

$$\hat{X}_{k_0:k_0+K_w-1} \sim p_\theta\big(X_{k_0:k_0+K_w-1} \mid s_{k_0:k_0+K_w-1}\big).$$

2. EEG-guided intermediate fMRI frame reconstruction (InterRecon). For temporal consistency evaluation, we use sparse anchor frames $y = \{x_{k-\Delta}, x_{k+\Delta}\}$ ($\Delta \geq 1$) along with their corresponding EEG windows $\{s_{k-\Delta}, \ldots, s_{k+\Delta}\}$ to constrain the reconstruction of intermediate frames at arbitrary query indices $\mathcal{K}^{*} = \{k^{(1)}, \ldots, k^{(M)}\} \subset (k-\Delta, k+\Delta)$:

$$\hat{X}_{\mathcal{K}^{*}} \sim p_\theta\big(X_{\mathcal{K}^{*}} \mid \{s_{k-\Delta}, \ldots, s_{k+\Delta}\},\, y\big).$$

3.2. Denoising Null-Space Diffusion Transformer

We formulate EEG-to-fMRI reconstruction as a conditional denoising diffusion transformer process that jointly models spatiotemporal fMRI sequences. Our framework builds upon the diffusion transformer architecture (Figure 2a), adapted to handle vertex-level cortical representations with sequence-level EEG conditioning, as shown in Figure 2.

Spatiotemporal Tokenization and EEG Conditioning. To jointly model the $K_w$-frame fMRI sequence, we tokenize the spatiotemporal volume into $(K_w \times N_v)$ vertex-level tokens, where each vertex at each time frame constitutes an individual token with temporal positional encoding to distinguish frames. EEG features are extracted for the entire $K_w$-frame window using a temporal encoder $f_\phi$:

$$h_{\mathrm{EEG}} = f_\phi\big(S_{k_0:k_0+K_w-1}\big),$$

producing a sequence-level representation.
During reverse diffusion, each vertex token is conditioned on $h_{\mathrm{EEG}}$ through cross-attention mechanisms at every transformer layer, enabling the model to capture temporal dependencies guided by the EEG signal.

Diffusion Forward Process. The forward diffusion process gradually perturbs fMRI frames $x^{(0)}$ over $N$ timesteps by injecting Gaussian noise according to a variance schedule $\{\beta_n\}_{n=1}^{N}$:

$$q\big(x^{(n)} \mid x^{(n-1)}\big) = \mathcal{N}\big(x^{(n)};\ \sqrt{1-\beta_n}\,x^{(n-1)},\ \beta_n I\big), \tag{1}$$

which equivalently samples $x^{(n)} = \sqrt{1-\beta_n}\,x^{(n-1)} + \sqrt{\beta_n}\,\epsilon$ with $\epsilon \sim \mathcal{N}(0, I)$. By the reparameterization property, we can directly sample noisy states from the clean data at any diffusion step $n$:

$$q\big(x^{(n)} \mid x^{(0)}\big) = \mathcal{N}\big(x^{(n)};\ \sqrt{\bar{\alpha}_n}\,x^{(0)},\ (1-\bar{\alpha}_n) I\big), \tag{2}$$

where $\alpha_n = 1 - \beta_n$ and $\bar{\alpha}_n = \prod_{i=1}^{n} \alpha_i$.

Conditional Diffusion Sampling and Training for Translation. The reverse process learns to denoise by training a neural network $\epsilon_\theta(x^{(n)}, n, h_{\mathrm{EEG}})$ to predict the injected noise, conditioned on EEG features. The model is optimized via the denoising score matching objective:

$$\mathcal{L}_{\mathrm{diff}} = \mathbb{E}_{n,\,x,\,\epsilon}\Big[\big\|\epsilon - \epsilon_\theta\big(x^{(n)}, n, h_{\mathrm{EEG}}\big)\big\|^2\Big], \tag{3}$$

where $x^{(n)} = \sqrt{\bar{\alpha}_n}\,x^{(0)} + \sqrt{1-\bar{\alpha}_n}\,\epsilon$ with $\epsilon \sim \mathcal{N}(0, I)$.
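The closed-form noising in Eq. (2) and the noise-prediction loss in Eq. (3) can be sketched numerically as follows. This is a minimal NumPy illustration, not the authors' implementation: the linear $\beta$ schedule, the tensor sizes, and the placeholder `predict_noise` (standing in for $\epsilon_\theta$) are all assumptions made for the example.

```python
import numpy as np

rng = np.random.default_rng(0)

# Assumed linear variance schedule; the text does not state which schedule is used.
N = 1000
betas = np.linspace(1e-4, 2e-2, N)
alphas = 1.0 - betas
alpha_bars = np.cumprod(alphas)  # alpha_bar_n = prod_{i<=n} alpha_i


def q_sample(x0, n, eps):
    """Draw x^(n) from q(x^(n) | x^(0)) as in Eq. (2); n is 1-indexed."""
    a_bar = alpha_bars[n - 1]
    return np.sqrt(a_bar) * x0 + np.sqrt(1.0 - a_bar) * eps


def predict_noise(x_n, n, h_eeg):
    """Hypothetical stand-in for eps_theta; a real model would be a trained network."""
    return np.zeros_like(x_n)


def diffusion_loss(x0, h_eeg):
    """One Monte Carlo sample of the objective in Eq. (3).

    Uses the mean over elements instead of the squared norm,
    which only rescales the loss by a constant.
    """
    n = int(rng.integers(1, N + 1))        # uniform step in {1, ..., N}
    eps = rng.standard_normal(x0.shape)    # injected Gaussian noise
    x_n = q_sample(x0, n, eps)
    return float(np.mean((eps - predict_noise(x_n, n, h_eeg)) ** 2))


# Toy window: K_w = 3 frames with 8 features each (illustrative sizes only).
x0 = rng.standard_normal((3, 8))
h_eeg = rng.standard_normal((3, 16))
loss = diffusion_loss(x0, h_eeg)
```

Sampling $x^{(n)}$ directly from $x^{(0)}$ avoids simulating the chain in Eq. (1) step by step, which is what makes training with a randomly drawn $n$ per example practical.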
During inference, the reverse process recovers $x^{(n-1)}$ from $x^{(n)}$ using the predicted noise to estimate the posterior mean.

[Figure 2 panel labels: (a) Diffusion Transformer with Cross-Attention: EEG Encoder, Input Tokens, Layer Norm, Multi-Head Self-Attention, Multi-Head Cross-Attention, Pointwise Feedforward, N× Blocks, Linear & Reshape; (b) Null-space Sampling: Translation, InterRecon, Decompose, Diffusion Sampling, Null-space Sampling, replace]

Figure 2. Architecture and inference workflow of our framework. Top: End-to-end inference pipeline, where EEG features condition a diffusion-transformer-based reconstruction process to produce spatiotemporal fMRI sequences. Bottom: (a) Transformer-based denoising network that jointly models vertex-level tokens with sequence-level EEG cross-attention to reconstruct coherent fMRI trajectories. (b) Null-space sampling module (InterRecon) that enforces measurement consistency during reverse diffusion, enabling intermediate frame reconstruction without retraining.

Null-Space Sampling for InterRecon. To enable intermediate fMRI frame reconstruction from sparse anchor frames without additional training, we employ null-space constrained sampling, as shown in Figure 2b. We model the sparse observation as a linear measurement $y = A X_{1:K_w}$, where $A = \mathrm{diag}(m_1, \ldots, m_{K_w})$ with $m_k = 1$ for observed anchor frames and $m_k = 0$ for missing frames. The solution is decomposed into range space (enforcing measurement consistency) and null space (capturing residual variations):

$$x \equiv \underbrace{A^{\dagger} A\,x}_{\text{range-space}} + \underbrace{(I - A^{\dagger} A)\,x}_{\text{null-space}}.$$

At each reverse diffusion step $n$, we first obtain the clean-data estimate using the noise predictor:

$$x_{0|n} = \frac{1}{\sqrt{\bar{\alpha}_n}}\Big(x^{(n)} - \sqrt{1-\bar{\alpha}_n}\,\epsilon_\theta\big(x^{(n)}, n, h_{\mathrm{EEG}}\big)\Big),$$

then apply range-null projection to enforce anchor consistency:

$$\hat{x}_{0|n} = A^{\dagger} y + (I - A^{\dagger} A)\,x_{0|n},$$

which guarantees $A \hat{x}_{0|n} \equiv y$. Finally, we update the diffusion state using the corrected estimate:

$$x^{(n-1)} = \frac{\sqrt{\bar{\alpha}_{n-1}}\,\beta_n}{1-\bar{\alpha}_n}\,\hat{x}_{0|n} + \frac{\sqrt{\alpha_n}\,(1-\bar{\alpha}_{n-1})}{1-\bar{\alpha}_n}\,x^{(n)} + \sigma_n \epsilon',$$

where $\epsilon' \sim \mathcal{N}(0, I)$. Through this EEG-guided null-space refinement, intermediate frames are reconstructed to be temporally consistent with anchor frames while preserving functional information encoded in the EEG signal.
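One reverse step of the range-null projection described above can be sketched as follows. This is a NumPy illustration under stated assumptions, not the authors' code: `eps_pred` stands in for the output of $\epsilon_\theta$, the schedule values are made up, and $\sigma_n$ is set to the standard DDPM posterior standard deviation, which the text does not specify. Because $A$ is a diagonal 0/1 frame mask, its pseudo-inverse equals $A$ itself, so the projection reduces to copying the anchor frames into the clean estimate.

```python
import numpy as np


def interrecon_step(x_n, eps_pred, y, mask, n, betas, rng):
    """One reverse step n -> n-1 with range-null projection (n is 1-indexed).

    x_n, eps_pred, y: arrays of shape (K_w, d); y holds anchors and zeros elsewhere.
    mask: shape (K_w,), 1 for anchor frames and 0 for frames to reconstruct.
    """
    alphas = 1.0 - betas
    alpha_bars = np.cumprod(alphas)
    a_bar = alpha_bars[n - 1]
    a_bar_prev = alpha_bars[n - 2] if n > 1 else 1.0
    beta_n, alpha_n = betas[n - 1], alphas[n - 1]

    # Clean-data estimate x_{0|n} from the predicted noise.
    x0_est = (x_n - np.sqrt(1.0 - a_bar) * eps_pred) / np.sqrt(a_bar)

    # Range-null projection: A = diag(mask), so A^+ = A and
    # A^+ y + (I - A^+ A) x_{0|n} keeps anchors and fills the rest from the model.
    A = mask[:, None].astype(x_n.dtype)
    x0_hat = A * y + (1.0 - A) * x0_est

    # Posterior mean built from the corrected estimate and the current state.
    coef_x0 = np.sqrt(a_bar_prev) * beta_n / (1.0 - a_bar)
    coef_xn = np.sqrt(alpha_n) * (1.0 - a_bar_prev) / (1.0 - a_bar)
    mean = coef_x0 * x0_hat + coef_xn * x_n

    # Assumed sigma_n: DDPM posterior std; no noise is added at the final step.
    sigma = np.sqrt((1.0 - a_bar_prev) / (1.0 - a_bar) * beta_n) if n > 1 else 0.0
    x_prev = mean + sigma * rng.standard_normal(x_n.shape)
    return x_prev, x0_hat


rng = np.random.default_rng(0)
betas = np.linspace(1e-4, 2e-2, 50)          # illustrative schedule
K_w, d = 5, 4
mask = np.array([1, 0, 0, 0, 1])             # first and last frames are anchors
x_true = rng.standard_normal((K_w, d))
y = mask[:, None] * x_true                   # y = A X
x_n = rng.standard_normal((K_w, d))
eps_pred = rng.standard_normal((K_w, d))     # placeholder for the eps_theta output
x_prev, x0_hat = interrecon_step(x_n, eps_pred, y, mask, 50, betas, rng)
```

In this sketch the corrected estimate always reproduces the anchors exactly, while the non-anchor frames remain whatever the (EEG-conditioned) denoiser proposes; the anchors influence those frames only through the shared diffusion state in later steps.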
Importantly, the null-space projection is applied only during sampling, requiring no retraining or architectural modification.

3.3. Model Architecture

EEG Encoder. We employ a temporal convolutional encoder to extract discriminative features from EEG windows. Given an input EEG window $s_k \in \mathbb{R}^{C \times W}$, the encoder processes it through $L$ stacked 1D convolutional blocks with stride-2 downsampling, progressively increasing feature channels while reducing temporal resolution. Each block applies convolution, batch normalization, and GELU activation. After global average pooling over the temporal dimension, a two-layer MLP projects to a $d_e$-dimensional embedding, yielding $f_\phi(s_k) \in \mathbb{R}^{d_e}$ that encodes the temporal patterns within the EEG window.

Linear fMRI autoencoder. To enable efficient diffusion modeling while preserving the range-null decomposition property required by null-space sampling, we employ a linear MLP-based autoencoder as an implementation trick. The encoder maps each fMRI frame $x_k \in \mathbb{R}^{N_v}$ to a lower-dimensional representation $z_k \in \mathbb{R}^{d}$ ($d \ll N_v$) via a linear projection, and the decoder symmetrically reconstructs the fMRI frame via the inverse linear mapping. Critically, the linearity ensures that the null-space projection $(I - A^{\dagger} A)$ remains well-defined and the range-null decomposition holds exactly in the compressed space. Both encoder and decoder are trained end-to-end with the diffusion transformer model to minimize reconstruction error, allowing the model to operate in a compact representation without sacrificing the mathematical structure required for null-space sampling.

3.4. Training & Inference

Training. We train the model end-to-end on paired EEG-fMRI sequences using the denoising score matching objective (Eq. 3). The linear fMRI autoencoder is jointly optimized to minimize reconstruction error while preserving the range-null decomposition structure.
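How a frame-wise mask interacts with a linear per-frame encoder can be checked numerically. The sketch below is an illustration under assumptions rather than the authors' autoencoder: it uses a random bias-free matrix `E` as a hypothetical encoder applied independently to each frame, with toy sizes. Since $A$ acts on the frame axis and `E` acts on the vertex axis, encoding the masked frames gives the same result as masking the encoded frames, so anchor constraints stated in vertex space carry over unchanged to the latent sequence.

```python
import numpy as np

rng = np.random.default_rng(0)
K_w, N_v, d = 5, 64, 8                            # toy sizes; the real N_v is far larger

E = rng.standard_normal((N_v, d)) / np.sqrt(N_v)  # hypothetical bias-free linear encoder
X = rng.standard_normal((K_w, N_v))               # fMRI window, one row per frame
mask = np.array([1.0, 0.0, 0.0, 0.0, 1.0])        # diagonal of A (anchor frames)
A = np.diag(mask)

Z = X @ E                                         # latent frames z_k = E^T x_k
Y = A @ X                                         # measurement y = A X in vertex space

# Encoding the measurement equals measuring the encoding: (A X) E = A (X E).
commutes = np.allclose(Y @ E, A @ Z)

# Range-null split in latent space; for a 0/1 diagonal A the pseudo-inverse is A.
A_pinv = np.linalg.pinv(A)
range_part = A_pinv @ A @ Z
null_part = (np.eye(K_w) - A_pinv @ A) @ Z
exact_split = np.allclose(range_part + null_part, Z)
```

Here `range_part` contains only the anchor frames and `null_part` only the frames to be reconstructed, which is the structure the sampler relies on when it overwrites anchors and leaves the remaining frames to the diffusion model.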
Training details, including hyperparameters, are provided in the supplementary material.

Inference for Reconstruction. Given a test EEG segment $\{s_{k_0}, \ldots, s_{k_0+K_w-1}\}$, we initialize $x^{(N)} \sim \mathcal{N}(0, I)$ and iteratively denoise using the learned reverse process with EEG conditioning to obtain the reconstructed fMRI sequence $\hat{X}_{k_0:k_0+K_w-1}$.

Inference for InterRecon. Given anchor frames $y = \{x_{k-\Delta}, x_{k+\Delta}\}$ and corresponding EEG windows, we perform constrained sampling with null-space projection at each denoising step (as described above) to reconstruct intermediate frames at arbitrary query indices $\mathcal{K}^{*} \subset (k-\Delta, k+\Delta)$, enabling EEG-guided frame reconstruction at any temporal position without retraining.

The complete inference procedure is summarized in Algorithm 1, which unifies both reconstruction tasks through conditional null-space projection.

Algorithm 1. Unified EEG-Conditioned fMRI Reconstruction

Require: EEG sequence $S$, diffusion steps $N$, [optional] anchor frames $y$, measurement operator $A$
Ensure: Reconstructed fMRI sequence $\hat{X}$

1. Encode EEG sequence: $h_{\mathrm{EEG}} \leftarrow f_\phi(S)$
2. Initialize noise: $x^{(N)} \sim \mathcal{N}(0, I)$
3. for $n = N$ to $1$ do
4. Estimate clean data: $x_{0|n} \leftarrow \frac{1}{\sqrt{\bar{\alpha}_n}}\big(x^{(n)} - \sqrt{1-\bar{\alpha}_n}\,\epsilon_\theta(x^{(n)}, n, h_{\mathrm{EEG}})\big)$
5. if InterRecon mode then
6. $\hat{x}_{0|n} \leftarrow A^{\dagger} y + (I - A^{\dagger} A)\,x_{0|n}$
7. else
8. $\hat{x}_{0|n} \leftarrow x_{0|n}$ (translation mode)
9. end if
10. $x^{(n-1)} \leftarrow \frac{\sqrt{\bar{\alpha}_{n-1}}\,\beta_n}{1-\bar{\alpha}_n}\,\hat{x}_{0|n} + \frac{\sqrt{\alpha_n}\,(1-\bar{\alpha}_{n-1})}{1-\bar{\alpha}_n}\,x^{(n)} + \sigma_n \epsilon'$, where $\epsilon' \sim \mathcal{N}(0, I)$
11. end for
12. return $\hat{X} \leftarrow x^{(0)}$

4. Experiments

4.1. Setting

Dataset. We evaluate our method on the CineBrain [25] dataset, which contains time-synchronized EEG-fMRI recordings from six subjects during naturalistic movie watching. For each subject, the data are split into non-overlapping training and testing sets with an 80%/20% split. Importantly, for each subject, the testing set contains unseen video clips that are not present in the training set, ensuring within-subject evaluation. The training data for each subject has a duration of approximately 288 minutes, and the testing data covers about 72 minutes.

Data Preprocessing. EEG signals are recorded from 64 channels at 1000 Hz. Following standard practice, EEG signals are normalized channel-wise to the range (-1, 1). Due to the hemodynamic response delay relative to the underlying neural activity, we apply a temporal shift of 4 s between each EEG window and its corresponding fMRI frame, consistent with the delay established in CineBrain [25]. The fMRI data have a temporal resolution of 0.8 s (TR = 0.8 s). Preprocessing follows the official CineBrain protocol, implemented using the standard fMRIPrep pipeline. The steps include motion correction, susceptibility distortion correction, slice-timing correction, co-registration to the structural T1-weighted image, and normalization to the HCP fsLR-32k grayordinate space. After preprocessing, each fMRI frame is represented by 91282 grayordinates, consisting of 32492 cortical vertices per hemisphere and 26298 subcortical vertices covering the thalamus, striatum, hippocampus, and other deep gray-matter structures.

Metrics.
Beyond measuring reconstruction fidelity with Mean Squared Error (MSE), we further employ neural-level quantitative metrics, including the Pearson correlation coefficient (r) and cosine similarity. These metrics capture spatial correspondence between predicted and ground-truth fMRI responses, providing biologically meaningful insight into neural representation quality.

Baselines. For dynamic fMRI frame reconstruction, we compare our method with the following representative volumetric baselines: (1) CNN-TC [9], a convolutional transcoder. (2) CNN-TAG [5], a graph-attention network. (3) E2fNet [10], a U-Net based encoder-decoder framework. (4) E2fGAN [10], an adversarial variant of E2fNet with a discriminator. For the intermediate fMRI frame reconstruction (InterRecon) setting, where the middle frames are reconstructed, we compare our method against classical, widely used linear interpolation.

Table 1. Comparison of fMRI Reconstruction Performance. We compare our method against baseline approaches across different frame lengths and spatial regions (whole brain, visual cortex, and visual–audio cortex). Results are averaged over six subjects.

| Frames | Method | Whole Brain MSE ↓ | Whole Brain r ↑ | Whole Brain cos ↑ | Visual MSE ↓ | Visual r ↑ | Visual cos ↑ | Visual+Audio MSE ↓ | Visual+Audio r ↑ | Visual+Audio cos ↑ |
|---|---|---|---|---|---|---|---|---|---|---|
| 3 | CNN-TC [9] | 0.302 | 0.808 | 0.832 | 0.212 | 0.823 | 0.873 | 0.217 | 0.836 | 0.875 |
| 3 | CNN-TAG [5] | 0.297 | 0.812 | 0.835 | 0.207 | 0.828 | 0.879 | 0.212 | 0.840 | 0.878 |
| 3 | E2fNet [10] | 0.288 | 0.818 | 0.833 | 0.204 | 0.831 | 0.880 | 0.205 | 0.844 | 0.882 |
| 3 | E2fGAN [10] | 0.280 | 0.819 | 0.840 | 0.196 | 0.832 | 0.882 | 0.203 | 0.846 | 0.884 |
| 3 | Ours | 0.282 | 0.822 | 0.847 | 0.196 | 0.834 | 0.886 | 0.200 | 0.848 | 0.887 |
| 10 | CNN-TC [9] | 0.315 | 0.804 | 0.824 | 0.230 | 0.822 | 0.861 | 0.204 | 0.830 | 0.867 |
| 10 | CNN-TAG [5] | 0.309 | 0.810 | 0.829 | 0.225 | 0.826 | 0.865 | 0.199 | 0.835 | 0.871 |
| 10 | E2fNet [10] | 0.297 | 0.819 | 0.836 | 0.215 | 0.833 | 0.872 | 0.192 | 0.842 | 0.878 |
| 10 | E2fGAN [10] | 0.290 | 0.822 | 0.839 | 0.210 | 0.836 | 0.875 | 0.188 | 0.845 | 0.881 |
| 10 | Ours | 0.277 | 0.824 | 0.849 | 0.193 | 0.834 | 0.887 | 0.197 | 0.848 | 0.889 |
| 30 | CNN-TC [9] | 0.322 | 0.800 | 0.820 | 0.232 | 0.818 | 0.858 | 0.208 | 0.828 | 0.867 |
| 30 | CNN-TAG [5] | 0.319 | 0.803 | 0.823 | 0.228 | 0.820 | 0.860 | 0.204 | 0.831 | 0.869 |
| 30 | E2fNet [10] | 0.316 | 0.806 | 0.826 | 0.225 | 0.823 | 0.863 | 0.201 | 0.833 | 0.872 |
| 30 | E2fGAN [10] | 0.314 | 0.808 | 0.828 | 0.222 | 0.825 | 0.865 | 0.199 | 0.835 | 0.874 |
| 30 | Ours | 0.281 | 0.819 | 0.845 | 0.195 | 0.829 | 0.884 | 0.200 | 0.844 | 0.885 |

Implementation Details. The EEG input is first embedded into a 512-dimensional latent space, while each fMRI frame is projected to a 1024-dimensional representation. The diffusion backbone is implemented as a Transformer consisting of 6 layers with 8 attention heads and a hidden dimension of 1024. During training, we adopt 1000 timesteps with a linear noise schedule following standard diffusion settings. For inference, we employ DDIM [26] for efficient sampling with 50 denoising steps. The model is trained for 200 epochs using the AdamW optimizer ($\beta_1 = 0.9$, $\beta_2 = 0.999$), with a learning rate of $1 \times 10^{-4}$ and a batch size of 32. For visual decoding, the reconstructed fMRI representations are further processed by CineSync-f [25] to generate corresponding videos. All experiments are conducted on a single NVIDIA A100 GPU.

4.2. Results and Analysis

In this section, we quantitatively examine the model's spatiotemporal pattern recognition capability and evaluate its performance under various reconstruction scenarios.

Dynamic fMRI Frames Reconstruction. For spatial modeling, we conduct evaluations on three spatial regions of the fMRI representation: the whole brain (91282 vertices), the primary visual cortex (V1; 8405 vertices), and the combined visual and auditory cortex (V1 + A1; 18946 vertices). For temporal modeling, we evaluate reconstruction under frame lengths of 3, 10, and 30 to assess the model's ability to recognize and reconstruct temporal patterns.

Table 2. Comparison of interpolation and null-space strategies. We compare linear interpolation, our model without null-space sampling, and our full model that incorporates null-space sampling for enhanced temporal and structural consistency.

| Method | MSE ↓ | r ↑ | Cos ↑ |
|---|---|---|---|
| Linear | 0.280 | 0.830 | 0.851 |
| Ours w/o null space | 0.272 | 0.839 | 0.852 |
| Ours w/ null space | 0.250 | 0.852 | 0.865 |

Figure 3. Comparison of intermediate fMRI frame reconstruction (InterRecon) performance across different frame lengths and methods. [Three panels plot cosine similarity, r, and MSE against frame length (2 to 8) for linear interpolation, ours w/o null space, and ours w/ null space.]

Figure 4. Vertex-level cortical surface visualization of reconstructed fMRI alongside ground truth. The top row shows our reconstructed fMRI (Recon.); the middle row shows the ground-truth fMRI (GT); and the bottom row displays the reconstruction error map. The color scale indicates BOLD signal intensity.

As shown in Table 1, our method attains the best cosine similarity in every region and frame length, and the best or near-best MSE and r in most settings, demonstrating strong spatiotemporal modeling capabilities. (1) Temporal robustness. When increasing the length from 3 to 10 and 30 frames, competing methods such as CNN-TC, CNN-TAG, and the E2f-based models degrade on most metrics (for example, the whole-brain MSE of CNN-TC rises from 0.302 to 0.322), whereas our model maintains highly stable performance across the whole brain, V1, and V1+A1 regions. For example, in the whole-brain region, our method preserves both low MSE (0.282 → 0.277 → 0.281) and high correlation (r: 0.822 → 0.824 → 0.819), indicating that temporal dependencies are effectively modeled even as temporal horizons increase. This stability highlights the model's ability to recognize and propagate temporal patterns over short-, mid-, and long-range frame segments. (2) Spatial robustness and whole-brain generalization.
Across the 91282-vertex whole-brain representation, our approach achieves the best cosine similarity for all frame lengths (0.847 / 0.849 / 0.845), demonstrating strong spatial consistency and global neural representation modeling. The ability to handle a high-dimensional cortical mesh while maintaining superior performance indicates that the model captures stable and distributed cortical dynamics, rather than relying on localized patterns alone. (3) Enhanced performance in task-relevant visual and audiovisual cortices. Performance in the visual (V1) and audiovisual (V1+A1) cortices is better than at the whole-brain level on every metric (lower MSE, higher r and cosine similarity). Such region-specific improvements are expected, as visual and audiovisual cortices are strongly driven by the movie-watching task. The superior performance in these biologically relevant areas indicates that the model captures sensory-driven neural dynamics in a manner that aligns well with established neuroscience principles.

Overall, the results demonstrate that our framework delivers both temporal stability and spatial fidelity, scaling from short to long temporal windows while preserving accuracy across whole-brain and task-relevant cortical areas. This provides strong evidence that our model not only reconstructs spatially plausible fMRI patterns but also effectively models the temporal evolution of neural activity.

Intermediate fMRI Frames Reconstruction. Table 2 shows the performance on subject 1 for generating the missing intermediate frame given its preceding and following fMRI frames. We compare three settings: a simple linear interpolation baseline, our model without null-space sampling, and our full model with null-space sampling. As shown, incorporating the null-space constraint yields consistent improvements across all metrics. Figure 3 further examines the InterRecon setting across different frame lengths for subject 1.
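To make the InterRecon variants compared in Table 2 concrete, the sketch below applies the projection from line 6 of Algorithm 1 to a toy sequence and also builds the linear-interpolation baseline. It assumes, for illustration only, that the measurement operator A is a row-selection matrix picking the first and last frames of a window and that each frame is stored as a row vector. The array sizes, the random stand-in for the denoiser output, and the helper names (`selection_operator`, `null_space_project`, `linear_interpolate`) are hypothetical and not taken from the paper.

```python
import numpy as np

def selection_operator(num_frames, anchor_idx):
    """Row-selection matrix A of shape (num_anchors, num_frames) (assumed form of A)."""
    A = np.zeros((len(anchor_idx), num_frames))
    A[np.arange(len(anchor_idx)), anchor_idx] = 1.0
    return A

def null_space_project(x0, y, A):
    """Compute A^+ y + (I - A^+ A) x0 along the frame axis.

    x0: (num_frames, dim) current clean-data estimate from the denoiser
    y:  (num_anchors, dim) observed anchor frames
    """
    A_pinv = np.linalg.pinv(A)
    identity = np.eye(A.shape[1])
    return A_pinv @ y + (identity - A_pinv @ A) @ x0

def linear_interpolate(y_first, y_last, num_frames):
    """Linear-interpolation baseline between two anchor frames."""
    w = np.linspace(0.0, 1.0, num_frames)[:, None]
    return (1.0 - w) * y_first + w * y_last

rng = np.random.default_rng(0)
T, D = 5, 8                       # toy window length and frame dimension
x0 = rng.normal(size=(T, D))      # stand-in for the denoiser's estimate
y = rng.normal(size=(2, D))       # stand-in for the two observed anchor frames
A = selection_operator(T, [0, T - 1])

x_hat = null_space_project(x0, y, A)
lin = linear_interpolate(y[0], y[1], T)
```

With a selection operator, the projection reduces to overwriting the anchor rows with the observations while leaving the intermediate rows as the model predicted them. The pseudoinverse form is kept so that the same function would also apply to other linear measurement operators.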
The linear interpolation baseline degrades noticeably as the temporal gap increases, whereas our null-space variant maintains much more stable performance and remains superior across all sequence lengths. Both the "w/o null space" and "w/ null space" results are produced without any additional training, using exactly the same checkpoint learned from the 10-frame dynamic reconstruction task. The comparison therefore reflects solely the effect of enabling null-space sampling at inference. This demonstrates the flexibility of our diffusion-based framework, where the same model can be adapted to different reconstruction scenarios through sampling strategies alone. The superior performance of null-space sampling indicates that explicitly constraining the solution space to respect boundary conditions leads to more biologically plausible intermediate frames that capture the smooth temporal evolution of neural activity.

Visualization. To assess the spatial accuracy of the reconstructed fMRI frames and evaluate their biological plausibility, we visualize the reconstructed cortical surface alongside the ground truth. The visualizations present lateral views of both hemispheres as well as a superior view, allowing inspection of large-scale spatial patterns across the cortex. As shown in Figure 4, the reconstructed fMRI closely matches the ground truth in both intensity distribution and spatial topology, demonstrating high spatial fidelity with minimal reconstruction error across the entire cortical surface. These results indicate that our model captures coherent and anatomically consistent activation patterns, further supporting the biological plausibility of the generated fMRI signals.

Downstream Visual Decoding Task.
Beyond vertex-level reconstruction metrics, we validate the functional plausibility of reconstructed fMRI by evaluating whether it preserves task-relevant neural information for a downstream visual decoding task. We employ CineSync-f [25], a pretrained fMRI-to-video decoder, to reconstruct videos from our reconstructed fMRI frames. This provides a direct test of whether the reconstructed fMRI encodes biologically meaningful representations that support high-level cognitive tasks.

Qualitative results (Figure 5; see also Figure S1) show that videos decoded from our reconstructed fMRI recover the coarse semantic structure of the original scenes. In the first case, the model correctly decodes a living-room setting with two characters engaged in conversation and captures salient elements such as the purple clothing. In the second case, the ground-truth frames (GT) depict a scene in which two male characters are seated on a couch and talking. The frames decoded from our reconstructed fMRI (Pred.) recover the overall scene layout, capturing the presence of the two male characters, their approximate poses, and the general conversational setting. Although finer details such as precise facial identity or exact clothing appearance are not perfectly reproduced, the reconstructions preserve the overall scene context and high-level visual semantics. Moreover, the decoded frames form a temporally coherent sequence, reflecting the continuous nature of the underlying neural dynamics. These observations indicate that our reconstructed fMRI retains functionally meaningful information that supports downstream visual decoding, going beyond mere vertex-level similarity.

Figure 5. Functional validation through visual decoding. Comparison between video frames decoded from our reconstructed fMRI (Pred.) and the corresponding ground-truth video frames (GT) using the CineSync-f decoder.

5. Conclusion and Discussion

Conclusion. In this work, we formulate EEG-to-fMRI reconstruction simultaneously as a spatiotemporal pattern recognition problem, where the model extracts neurally meaningful dynamic features from EEG, and as a generative modeling task, where it reconstructs dynamic fMRI frames at high spatial resolution. Within a subject-specific setting, our diffusion-transformer-based framework reconstructs fMRI trajectories that are spatially detailed, temporally coherent, and consistent with known physiological dynamics. Our model achieves better performance than previous representative baselines on most metrics. To support intermediate frame reconstruction, we incorporate a null-space constrained sampling strategy that enforces consistency with observed frames during the generative process. This enables the model to infer missing temporal frames without retraining and provides a principled way to assess temporal consistency. Functional validation through task-driven visual decoding shows that the reconstructed fMRI frames preserve discriminative neural representations rather than only matching low-level similarity metrics. Overall, this study demonstrates a feasible path to unify the temporal precision of EEG with the high spatial resolution of fMRI for dynamic brain activity modeling, cross-modal neural reconstruction, and future applications in neural decoding.

Discussion. While this framework achieves strong performance, two challenges remain. (1) fMRI responses during complex naturalistic tasks exhibit substantial cross-subject variability, making cross-subject modeling highly challenging. Our model is therefore trained within each subject, and extending it to subject-independent settings will require stronger anatomical or functional alignment. (2) We adopt a fixed EEG–fMRI delay following standard practice, yet the true hemodynamic latency is region-dependent and time-varying.
Although our dynamic sequence modeling partially alleviates this mismatch, future work is needed to integrate adaptive or learnable alignment.

Acknowledgments

This project is supported by the Grant (2022YFC2405102).

References

[1] Russell A Poldrack. The future of fmri in cognitive neuroscience. Neuroimage, 62(2):1216–1220, 2012.

[2] Nikos K Logothetis, Jon Pauls, Mark Augath, Torsten Trinath, and Axel Oeltermann. Neurophysiological investigation of the basis of the fmri signal. Nature, 412(6843):150–157, 2001.

[3] Gary H Glover. Overview of functional magnetic resonance imaging. Neurosurgery Clinics, 22(2):133–139, 2011.

[4] Martin A Lindquist. The statistical analysis of fmri data. Statistical science, 23(4):439–464, 2008.

[5] David Calhas and Rui Henriques. Eeg to fmri synthesis benefits from attentional graphs of electrode relationships. arXiv preprint arXiv:2203.03481, 2022.

[6] Weiheng Yao, Zhihan Lyu, Mufti Mahmud, Ning Zhong, Baiying Lei, and Shuqiang Wang. Catd: Unified representation learning for eeg-to-fmri cross-modal generation. IEEE Transactions on Medical Imaging, 44(7):2757–2767, 2025.

[7] Christoph M Michel, Micah M Murray, Goran Lantz, Sara Gonzalez, Laurent Spinelli, and Rolando Grave de Peralta. Towards the utilization of eeg as a brain imaging tool. Neuroimage, 61(2):371–385, 2012.

[8] Yamin Li, Ange Lou, Ziyuan Xu, Shengchao Zhang, Shiyu Wang, Dario Englot, Soheil Kolouri, Daniel Moyer, Roza Bayrak, and Catie Chang. Neurobolt: Resting-state eeg-to-fmri synthesis with multi-dimensional feature mapping. Advances in neural information processing systems, 37:23378–23405, 2024.

[9] Xueqing Liu and Paul Sajda. A convolutional neural network for transcoding simultaneously acquired eeg-fmri data. In 2019 9th International IEEE/EMBS Conference on Neural Engineering (NER), pages 477–482. IEEE, 2019.
[10] Kristofer Grover Roos, Atsushi Fukuda, and Quan Huu Cap. From brainwaves to brain scans: A robust neural network for eeg-to-fmri synthesis. arXiv preprint, 2025.

[11] Zhou Wang, Alan C Bovik, Hamid R Sheikh, and Eero P Simoncelli. Image quality assessment: from error visibility to structural similarity. IEEE Transactions on Image Processing, 13(4):600–612, 2004.

[12] Alain Hore and Djemel Ziou. Image quality metrics: Psnr vs. ssim. pages 2366–2369, 2010.

[13] Emily J Allen, Ghislain St-Yves, Yihan Wu, Jesse L Breedlove, Jacob S Prince, Logan T Dowdle, Matthias Nau, Brad Caron, Franco Pestilli, Ian Charest, et al. A massive 7t fmri dataset to bridge cognitive neuroscience and artificial intelligence. Nature neuroscience, 25(1):116–126, 2022.

[14] Jonathan Ho, Ajay Jain, and Pieter Abbeel. Denoising diffusion probabilistic models. Advances in neural information processing systems, 33:6840–6851, 2020.

[15] Jascha Sohl-Dickstein, Eric Weiss, Niru Maheswaranathan, and Surya Ganguli. Deep unsupervised learning using nonequilibrium thermodynamics. International conference on machine learning, pages 2256–2265, 2015.

[16] Dustin Podell, Zion English, Kyle Lacey, Andreas Blattmann, Tim Dockhorn, Jonas Müller, Joe Penna, and Robin Rombach. Sdxl: Improving latent diffusion models for high-resolution image synthesis. arXiv preprint arXiv:2307.01952, 2023.

[17] Wenyi Hong, Ming Ding, Wendi Zheng, Xinghan Liu, and Jie Tang. Cogvideo: Large-scale pretraining for text-to-video generation via transformers. arXiv preprint arXiv:2205.15868, 2022.

[18] Team Wan. Wan: Open and advanced large-scale video generative models. arXiv preprint arXiv:2503.20314, 2025.

[19] Jianxiong Gao, Zhaoxi Chen, Xian Liu, Jianfeng Feng, Chenyang Si, Yanwei Fu, Yu Qiao, and Ziwei Liu. Longvie: Multimodal-guided controllable ultra-long video generation, 2025.
[20] Jianxiong Gao, Zhaoxi Chen, Xian Liu, Junhao Zhuang, Chengming Xu, Jianfeng Feng, Yu Qiao, Yanwei Fu, Chenyang Si, and Ziwei Liu. Longvie 2: Multimodal controllable ultra-long video world model, 2025.

[21] Zijiao Chen, Jiaxin Qing, Tiange Xiang, Wan Lin Yue, and Juan Helen Zhou. Seeing beyond the brain: Masked modeling conditioned diffusion model for human vision decoding. In arXiv, 2022.

[22] Zijiao Chen, Jiaxin Qing, and Juan Helen Zhou. Cinematic mindscapes: High-quality video reconstruction from brain activity. Advances in Neural Information Processing Systems, 36:24841–24858, 2023.

[23] Jianxiong Gao, Yuqian Fu, Yun Wang, Xuelin Qian, Jianfeng Feng, and Yanwei Fu. Mind-3d: Reconstruct high-quality 3d objects in human brain. In European Conference on Computer Vision, pages 312–329. Springer, 2024.

[24] Jianxiong Gao, Yanwei Fu, Yuqian Fu, Yun Wang, Xuelin Qian, and Jianfeng Feng. MinD-3D++: Advancing fMRI-Based 3D Reconstruction With High-Quality Textured Mesh Generation and a Comprehensive Dataset. IEEE Transactions on Pattern Analysis & Machine Intelligence, 47(12):11802–11816, 2025.

[25] Jianxiong Gao, Yichang Liu, Baofeng Yang, Jianfeng Feng, and Yanwei Fu. Cinebrain: A large-scale multi-modal brain dataset during naturalistic audiovisual narrative processing. arXiv preprint arXiv:2503.06940, 2025.

[26] Jiaming Song, Chenlin Meng, and Stefano Ermon. Denoising diffusion implicit models. In International Conference on Learning Representations, 2021.

S1. Data Preprocessing

For the CineBrain dataset, the fMRI preprocessing follows the CineBrain protocol and is performed using the standard fMRIPrep pipeline. The main steps include motion correction, susceptibility distortion correction, slice-timing correction, co-registration to the T1-weighted structural image, and normalization to the fsLR-32k grayordinate space defined by the Human Connectome Project (HCP).
After preprocessing, each fMRI frame is represented by 91282 grayordinates, consisting of 32492 cortical vertices per hemisphere and 26298 subcortical gray-matter voxels covering the thalamus, striatum, hippocampus, and other deep gray structures.

EEG data are acquired using an MRI-compatible 64-channel cap at a sampling rate of 1000 Hz, with simultaneous recording of ECG signals. Synchronization between EEG and fMRI is ensured by logging the exact fMRI TR timings during acquisition. EEG preprocessing follows the CineBrain protocol, with modifications to accommodate our recording configuration. A multi-step artifact removal procedure is applied to suppress scanner-induced and physiological noise while preserving neural activity. The preprocessing pipeline includes bandpass filtering between 0.1 Hz and 75 Hz to remove baseline drift and muscle artifacts, and a 50 Hz notch filter to attenuate powerline interference. ECG artifacts are first reduced using QRS-based correction methods, followed by independent component analysis (ICA) to isolate and remove residual artifacts. The recorded ECG signals are further used to refine artifact rejection through correlation-based adaptive adjustment, resulting in clean EEG data suitable for subsequent analysis.

S2. Subject-wise Dynamic fMRI Reconstruction

To complement the averaged results reported in the main paper, we provide a full breakdown of dynamic fMRI reconstruction performance for all six subjects in the CineBrain dataset. Tables S1, S2, and S3 report the Mean Squared Error (MSE), Pearson correlation coefficient (r), and cosine similarity across three spatial regions: the whole brain (91282 vertices), the primary visual cortex V1 (8405 vertices), and the combined visual and auditory cortex V1+A1 (18946 vertices). Results are provided under three temporal window lengths of 3, 10, and 30 frames.

Furthermore, we report subject-wise performance for the InterRecon task.
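The three metrics reported in these subject-wise tables can be computed per frame as in the sketch below. It assumes that predictions and targets are arrays of shape (frames, vertices) and that the ± values are standard deviations over frames. The paper does not state how the spread is aggregated, so that choice, the toy data, and the function names (`frame_metrics`, `summarize`) are assumptions made for illustration.

```python
import numpy as np

def frame_metrics(pred, target):
    """Per-frame MSE, Pearson r, and cosine similarity.

    pred, target: arrays of shape (num_frames, num_vertices).
    Each metric is computed across vertices, one value per frame.
    """
    mse = np.mean((pred - target) ** 2, axis=1)

    # Pearson r is the cosine similarity of the mean-centered frames.
    pc = pred - pred.mean(axis=1, keepdims=True)
    tc = target - target.mean(axis=1, keepdims=True)
    r = np.sum(pc * tc, axis=1) / (
        np.linalg.norm(pc, axis=1) * np.linalg.norm(tc, axis=1)
    )

    cos = np.sum(pred * target, axis=1) / (
        np.linalg.norm(pred, axis=1) * np.linalg.norm(target, axis=1)
    )
    return mse, r, cos

def summarize(values):
    """Mean and standard deviation over frames (assumed aggregation)."""
    return float(np.mean(values)), float(np.std(values))

rng = np.random.default_rng(0)
target = rng.normal(size=(4, 100))                # toy ground-truth frames
pred = target + 0.1 * rng.normal(size=(4, 100))   # toy reconstruction
mse, r, cos = frame_metrics(pred, target)
mse_mean, mse_std = summarize(mse)
```

A region-specific score such as V1 would restrict both arrays to the corresponding columns before calling `frame_metrics`, for example `frame_metrics(pred[:, idx], target[:, idx])` with a hypothetical index array `idx` for that region.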
While the main paper provides results for Subject 1, here we include results for all six subjects.

Table S1. Subject-wise dynamic fMRI reconstruction performance (3-frame).

| Subject | MSE | r | Cos |
|---|---|---|---|
| sub1 | 0.272 ± 0.07 | 0.839 ± 0.04 | 0.852 ± 0.03 |
| sub2 | 0.189 ± 0.03 | 0.865 ± 0.03 | 0.890 ± 0.03 |
| sub3 | 0.285 ± 0.03 | 0.843 ± 0.06 | 0.850 ± 0.06 |
| sub4 | 0.346 ± 0.09 | 0.781 ± 0.08 | 0.815 ± 0.06 |
| sub5 | 0.288 ± 0.08 | 0.812 ± 0.04 | 0.839 ± 0.05 |
| sub6 | 0.314 ± 0.149 | 0.794 ± 0.06 | 0.834 ± 0.06 |
| avg | 0.282 | 0.822 | 0.847 |

Table S2. Subject-wise dynamic fMRI reconstruction performance (10-frame).

| Subject | MSE | r | Cos |
|---|---|---|---|
| sub1 | 0.271 ± 0.05 | 0.840 ± 0.03 | 0.853 ± 0.03 |
| sub2 | 0.188 ± 0.02 | 0.865 ± 0.03 | 0.890 ± 0.03 |
| sub3 | 0.284 ± 0.09 | 0.843 ± 0.04 | 0.850 ± 0.04 |
| sub4 | 0.342 ± 0.19 | 0.780 ± 0.07 | 0.816 ± 0.06 |
| sub5 | 0.275 ± 0.05 | 0.819 ± 0.03 | 0.846 ± 0.05 |
| sub6 | 0.303 ± 0.11 | 0.797 ± 0.04 | 0.838 ± 0.04 |
| avg | 0.277 | 0.824 | 0.849 |

Table S3. Subject-wise dynamic fMRI reconstruction performance (30-frame).

| Subject | MSE | r | Cos |
|---|---|---|---|
| sub1 | 0.283 ± 0.03 | 0.832 ± 0.02 | 0.846 ± 0.02 |
| sub2 | 0.196 ± 0.01 | 0.857 ± 0.02 | 0.883 ± 0.02 |
| sub3 | 0.290 ± 0.07 | 0.841 ± 0.03 | 0.849 ± 0.04 |
| sub4 | 0.344 ± 0.10 | 0.769 ± 0.04 | 0.807 ± 0.04 |
| sub5 | 0.280 ± 0.04 | 0.814 ± 0.02 | 0.842 ± 0.04 |
| sub6 | 0.294 ± 0.104 | 0.802 ± 0.03 | 0.841 ± 0.04 |
| avg | 0.281 | 0.819 | 0.845 |

S3. Additional Video Reconstruction Results

As shown in Figures S1 and S2, we include additional visual comparisons of the original videos, the videos decoded from real fMRI signals, and those decoded from the reconstructed fMRI. These supplementary results provide further support for the quantitative and qualitative analyses discussed in the main manuscript.

Figure S1. Functional validation through visual decoding. Comparison among the original stimulus video frames, Video (GT); the frames decoded from ground-truth fMRI, Video (Real fMRI); and the frames decoded from our reconstructed fMRI, Video (Pred. fMRI), using the CineSync-f decoder. These three-way visual comparisons further illustrate the preservation of semantic content in the reconstructed fMRI.

Figure S2. Functional validation through visual decoding. Comparison among the original stimulus video frames, Video (GT); the frames decoded from ground-truth fMRI, Video (Real fMRI); and the frames decoded from our reconstructed fMRI, Video (Pred. fMRI), using the CineSync-f decoder. These three-way visual comparisons further illustrate the preservation of semantic content in the reconstructed fMRI.

Across diverse scenes and character configurations, the videos decoded from our predicted fMRI closely match those decoded from real fMRI. As shown in the figures, the decoding results based on reconstructed fMRI faithfully recover the overall scene layout, camera viewpoint, and background structure, while also capturing key aspects of the characters' appearance, posture, interactions, and contextual semantics. Whether in indoor conversations, multi-person interactions, or rapidly changing scenes, the semantic content decoded from predicted fMRI is nearly equivalent to that obtained from real fMRI, indicating that our reconstructed fMRI successfully preserves the functional information required for neural visual decoding. In these varied scenarios, the EEG-driven fMRI reconstructions exhibit stable, high-level semantic representations that can be effectively read out by the visual decoder, yielding video outputs that are comparable to those produced using real fMRI.

Table S4. Ablation on latent dimensionality.

| Latent dim | LinearAE reconstruction MSE ↓ | EEG-to-fMRI MSE ↓ | EEG-to-fMRI r ↑ | EEG-to-fMRI Cos ↑ |
|---|---|---|---|---|
| 512 | 0.0045 | 0.288 | 0.814 | 0.830 |
| 1024 | 0.0043 | 0.282 | 0.822 | 0.847 |
| 2048 | 0.0042 | 0.285 | 0.820 | 0.843 |
This functional consistency further demonstrates that the reconstructed fMRI carries meaningful representational value and can serve as a reliable substitute for real fMRI in visual cognition analyses.

S4. Additional Ablation on Latent Dimensionality

To further examine whether the linear autoencoder introduces a bottleneck effect, we perform additional ablation experiments with latent dimensionalities of 512, 1024, and 2048. As shown in Table S4, the downstream EEG-to-fMRI reconstruction performance remains stable across different latent dimensions (MSE between 0.282 and 0.288, r between 0.814 and 0.822), indicating that the proposed method is robust to this design choice and that the linear autoencoder is not the dominant limiting factor.
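The kind of latent-dimension sweep described in this section can be illustrated on toy data. The sketch below is not the paper's autoencoder, whose training procedure is not specified here. It assumes a closed-form stand-in instead: the linear autoencoder with minimum reconstruction MSE for a given latent size is obtained from the top principal directions of the centered data. The synthetic data, the latent sizes, and the function names (`fit_linear_autoencoder`, `reconstruct`) are hypothetical.

```python
import numpy as np

def fit_linear_autoencoder(X, latent_dim):
    """Closed-form linear autoencoder via SVD (PCA) of the centered data.

    Returns the data mean and a (num_features, latent_dim) matrix W whose
    columns are orthonormal; encoding is (X - mean) @ W, decoding is Z @ W.T.
    """
    mean = X.mean(axis=0)
    _, _, Vt = np.linalg.svd(X - mean, full_matrices=False)
    W = Vt[:latent_dim].T
    return mean, W

def reconstruct(X, mean, W):
    """Encode to the latent space and decode back to the input space."""
    Z = (X - mean) @ W
    return Z @ W.T + mean

rng = np.random.default_rng(0)
# Toy "frames": a rank-10 signal in 64 dimensions plus small noise.
signal = rng.normal(size=(200, 10)) @ rng.normal(size=(10, 64))
X = signal + 0.05 * rng.normal(size=(200, 64))

errors = {}
for k in (4, 8, 16):
    mean, W = fit_linear_autoencoder(X, k)
    errors[k] = float(np.mean((reconstruct(X, mean, W) - X) ** 2))
```

In this toy setting the reconstruction error drops sharply until the latent size reaches the rank of the underlying signal and changes little afterwards, which is the qualitative pattern one would look for when checking whether a linear bottleneck limits downstream performance.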
