Mixed-signal implementation of feedback-control optimizer for single-layer Spiking Neural Networks

On-chip learning is key to scalable and adaptive neuromorphic systems, yet existing training methods are either difficult to implement in hardware or overly restrictive. However, recent studies show that feedback-control optimizers can enable express…

Authors: Jonathan Haag, Christian Metzner, Dmitrii Zendrikov

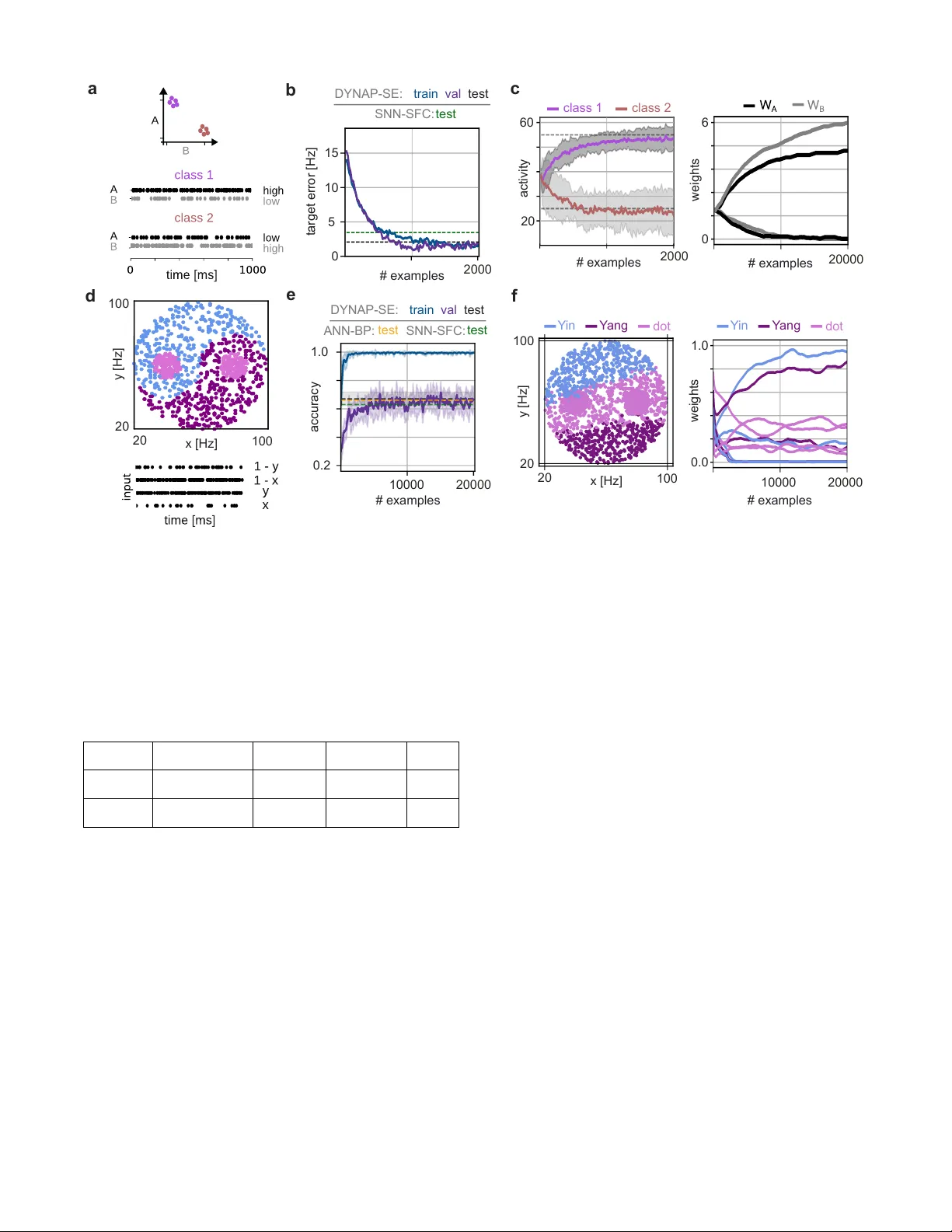

Jonathan Haag∗†, Christian Metzner∗†, Dmitrii Zendrikov†, Giacomo Indiveri†, Senior Member, IEEE, Benjamin Grewe†‡, Chiara De Luca†§¶, and Matteo Saponati†¶

∗ equal contribution, ¶ equal contribution
† Institute of Neuroinformatics, University of Zürich and ETH Zürich, Zürich, Switzerland
‡ AI Center, ETH, Zürich, Switzerland
§ Digital Society Initiative, University of Zurich, Switzerland

Abstract: On-chip learning is key to scalable and adaptive neuromorphic systems, yet existing training methods are either difficult to implement in hardware or overly restrictive. However, recent studies show that feedback-control optimizers can enable expressive, on-chip training of neuromorphic devices. In this work, we present a proof-of-concept implementation of such feedback-control optimizers on a mixed-signal neuromorphic processor. We assess the proposed approach in an In-The-Loop (ITL) training setup on both a binary classification task and the nonlinear Yin–Yang problem, demonstrating on-chip training that matches the performance of numerical simulations and gradient-based baselines. Our results highlight the feasibility of feedback-driven, online learning under realistic mixed-signal constraints, and represent a co-design approach toward embedding such rules directly in silicon for autonomous and adaptive neuromorphic computing.

Index Terms: online learning, spiking neural networks, control theory, neuromorphic computing, mixed-signal devices

I. INTRODUCTION

Spiking Neural Networks (SNNs) are increasingly studied as a biologically inspired and hardware-efficient alternative to artificial neural networks (ANNs). Their event-driven dynamics make them particularly well-suited for neuromorphic systems, which exploit mixed-signal circuits to emulate spiking neurons in real time [1, 2].
A major challenge, however, remains the development of scalable and hardware-compatible learning rules. Conventional approaches such as Backpropagation through time (BPTT) [3] achieve high accuracy in software but are inherently non-local: they require information from the entire network (non-local in space) and must process sequences in batches while storing activity over time (non-local in time). These requirements make direct deployment on neuromorphic substrates highly impractical.

In contrast, recent work has focused on local and online learning rules. Online learning refers to weight updates that are triggered continuously by the occurrence of individual spikes, without waiting for an entire batch or full sequence to be processed. This property not only reduces memory requirements but also allows learning to proceed in real time. The importance extends beyond algorithmic elegance: it is critical for enabling subject-specific adaptation, online fine-tuning, and task-dependent optimization in practical deployments. Saponati et al. [4] introduced the spike-based feedback control algorithm, which encodes error signals directly in spike trains and drives local synaptic plasticity. Such rules represent a promising route to neuromorphic learning, as they avoid dependence on global error information and enable continuous updates using signals naturally available on hardware. However, deploying such learning rules on neuromorphic substrates remains non-trivial. Mixed-signal devices exhibit mismatch, noise, and quantization constraints, while internal state variables are only partially observable, making neuromorphic hardware both a demanding and realistic testbed for the robustness of spike-based learning methods.

In this work, we use the DYNAP-SE neuromorphic processor [2] to provide a proof of concept of the spike-based feedback control algorithm.
The DYNAP-SE processor is a mixed-signal platform in which analog circuits exploit device physics to emulate spiking neuron dynamics. The chip consists of multiple cores of Adaptive-Exponential Integrate-and-Fire (AdExp-I&F) neurons interconnected via asynchronous address-event routing, operating in real time and supporting plastic synaptic connections. To co-design an on-chip implementation of such an algorithm, we employ a computer-in-the-loop training procedure, in which a host computer computes weight updates and reconfigures synapses while the chip executes network dynamics. This approach allows us to assess the algorithm under realistic hardware constraints, while paving the way toward future neuromorphic systems that embed the learning rule directly in silicon.

II. METHODS

A. Spiking feedback-control optimizer

We adopt the feedback-control optimizer introduced in [4] to train single-layer SNNs on neuromorphic devices. The architecture is composed of one Leaky Integrate-and-Fire (LIF) output neuron per class, with two additional controller neurons per output (one positive and one negative). These neurons receive target and output spike trains and recurrently project back to the output neuron (Fig. 1).

Fig. 1. Schematic of the computational primitive. One output neuron (black) receives inputs (black arrow) and sends output (blue arrows) to the two corresponding positive (purple) and negative (cyan) control neurons. Arrows and circles indicate excitation and inhibition, respectively.

By construction, the positive control neuron increases the activity of the output neuron when the target rate exceeds the actual firing rate, while the negative control neuron decreases it when the opposite holds. Together, they implement a proportional-integral spiking controller that encodes the error between the target and the output activity directly into spike-based feedback currents.
The recurrent feedback currents provide a local learning signal to update synaptic weights online according to

$$w_t = w_{t-1} + \eta \, I^{\mathrm{fb}}_t \, s^{\mathrm{in}}_t, \tag{1}$$

where $s^{\mathrm{in}}_t$ denotes the presynaptic input spikes, $I^{\mathrm{fb}}_t$ the feedback current generated by the controller and received by the neuron at timestep $t$, and $\eta$ the learning rate. This update rule is local, online, and hardware compatible, making it well-suited for implementation on mixed-signal neuromorphic chips.

B. The DYNAP-SE chip

We test the feedback-control optimizer with the DYNAP-SE neuromorphic processor, a multi-core asynchronous mixed-signal system optimized for real-time emulation of spiking neural dynamics [5]. The device comprises four processing cores, each embedding 256 current-mode silicon neurons [6, 7] that implement adaptive exponential integrate-and-fire (AdExp-I&F) models [8].

Every neuron supports up to 64 programmable synaptic inputs, configured through Content Addressable Memory (CAM) blocks that store the addresses of presynaptic sources. Synapses can be set as excitatory (positive weight) or inhibitory (negative weight), and operate with fast or slow dynamics. Because the analog circuits implementing the synapses are inherently noisy and nonlinear, we do not rely on analog weight values; instead, we encode synaptic strength by varying the number of parallel synaptic connections between neural units. This effectively discretizes synaptic weights to a maximum resolution of 6 bits.

Neurons within a core share global parameters, including leak constants, time constants, and refractory periods, and each neuron provides up to 1024 fan-out connections. Inter-core and intra-core communication is realized through an Address-Event Representation (AER) protocol [9], which guarantees microsecond-level timing precision under event-driven, high-load conditions.
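As a rough illustration of the connection-count encoding described above, the sketch below maps floating-point weights onto integer numbers of parallel connections plus a synapse type. The function name, the `step` parameter (the weight carried by a single connection), and the proportional rescaling applied when the 64-input budget is exceeded are assumptions made for illustration; they are not taken from the DYNAP-SE toolchain or from the authors' code.

```python
def quantize_to_connections(weights, step, fan_in=64):
    """Map float weights onto (connection_count, is_excitatory) pairs.

    weights : float weights of all synapses onto one postsynaptic neuron
    step    : weight carried by one connection (hypothetical parameter)
    fan_in  : maximum number of synaptic inputs per neuron (64 in the text)
    """
    # Magnitude becomes a number of parallel connections.
    counts = [round(abs(w) / step) for w in weights]
    total = sum(counts)
    if total > fan_in:
        # Assumed policy: shrink all counts proportionally to fit the budget.
        counts = [c * fan_in // total for c in counts]
    # Sign becomes the synapse type (True = excitatory, False = inhibitory).
    types = [w >= 0 for w in weights]
    return list(zip(counts, types))


example = quantize_to_connections([0.26, -1.04, 0.0], step=0.25)
# example == [(1, True), (4, False), (0, True)]
```

With 64 available inputs per neuron, a single synapse can take at most 64 distinct non-zero strengths, which is consistent with the 6-bit resolution quoted above (2^6 = 64).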
In the high-input regime and with adaptation disabled, the underlying circuit dynamics of an AdExp-I&F neuron reduce to

$$\tau \frac{d}{dt} I_{\mathrm{mem}} + I_{\mathrm{mem}} \approx \frac{I_{\mathrm{in}} \, I_{\mathrm{gain}}}{I_{\tau}} + \frac{I_{a} \, I_{\mathrm{mem}}}{I_{\tau}},$$

where $I_{\mathrm{mem}}$ denotes the current representing the membrane potential, $\tau$ is the effective membrane time constant, and the second term on the right-hand side corresponds to the positive feedback loop characteristic of the AdExp-I&F model.

Due to device mismatch, AdExp-I&F neurons can exhibit variability within and across cores and are sensitive to signal noise. To address this, we use populations of 10 AdExp-I&F neurons to represent each neural unit. Each unit's activity is the average firing rate across the entire population. This population-based representation reduces sensitivity to neuron-to-neuron variability and noise fluctuations.

C. In-the-loop training

We evaluate the feedback-control algorithm by training a DYNAP-SE processor on-chip with an In-The-Loop (ITL) training setup. In this configuration, the chip is interfaced with a host computer via USB and controlled through the Samna Python library [10]. The host is responsible for the overall experiment orchestration (sending input spikes from the dataset, sending target spikes for classification, calculating the weight updates, and so on), while the DYNAP-SE performs the forward pass in real time.

Each training iteration proceeds as depicted in Fig. 2a. First, inputs and target firing rates are converted to spike trains and sent to the chip. The network dynamics are implemented on-chip, and the output spikes are collected asynchronously through the AER interface. After a fixed time window of duration $T$, the recorded spike times are transferred to the host. The host estimates the feedback current from the activity of the control neurons and calculates floating-point weight updates according to the learning rule in (1).
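The host-side part of one ITL iteration can be sketched as follows. This is a minimal sketch under stated assumptions: the feedback is estimated as the difference between the population-averaged rates of the positive and negative control neurons over one window, and (1) is accumulated over the window by multiplying with the input spike count, which is exact only if the feedback is treated as constant within the window. All function names are hypothetical, and no Samna calls are shown.

```python
def population_rate(spike_counts, window_s):
    """Average firing rate (Hz) across the neurons of one population."""
    return sum(spike_counts) / (len(spike_counts) * window_s)


def feedback_estimate(pos_counts, neg_counts, window_s):
    """Feedback estimate: positive minus negative control-neuron rate (Hz)."""
    return population_rate(pos_counts, window_s) - population_rate(neg_counts, window_s)


def update_weights(weights, input_counts, feedback, lr):
    """Window-level form of rule (1): w <- w + lr * I_fb * (number of input spikes)."""
    return [w + lr * feedback * n for w, n in zip(weights, input_counts)]


# Example: 10 neurons per control population, one 0.5 s recording window.
fb = feedback_estimate([3] * 10, [1] * 10, window_s=0.5)  # positive: output too low
new_w = update_weights([1.0, 2.0], input_counts=[10, 0], feedback=fb, lr=0.01)
```

Only inputs that actually spiked during the window change their weight, which reflects the locality of the rule: an input with zero spikes contributes a zero update regardless of the feedback.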
The updated weights are then quantized to the resolution supported by the hardware synapses and mapped back to the connection matrix of the DYNAP-SE. This procedure requires only adding or removing discrete synaptic connections; however, if a weight changes sign, the synapse type must also be switched from excitatory to inhibitory or vice versa, requiring careful calibration.

Because the DYNAP-SE runs continuously in real time, the duration of each training step directly corresponds to the wall-clock time (i.e., recording activities over $T$ milliseconds requires $T$ milliseconds of measurement). While this limits throughput, it ensures that the learning rule is tested under realistic hardware constraints. This ITL approach thus provides a practical framework to assess the functionality and robustness of spike-based learning rules on mixed-signal neuromorphic substrates, before moving towards fully embedded on-chip adaptation.

D. Datasets

We test the optimizer with two benchmark datasets, both following the definitions in [4]. The first is a binary classification task for single-layer SNNs. Each example consists of two input spike trains: in class A, the first input neuron fires at a high rate while the second fires at a lower rate, and the opposite is true for class B inputs. Input examples are presented for fixed-duration windows, after which synaptic weights are updated.

Fig. 2. a) In-the-loop (ITL) training setup. b) Left: Calibration of input and target spike generation with recorder neurons, before and after calibration. Middle: Frequency-frequency curves of calibrated DYNAP-SE neurons for different synaptic weights (see legend). Right: Relation between error (output activity minus target activity) and the total feedback (activity of the control neurons) in Hz.

The second dataset is the Yin–Yang benchmark [11], a non-linearly separable, three-class problem. Each sample belongs to the Yin, Yang, or Dot region of the symbol and is encoded by four input features $(x, y, 1-x, 1-y)$. These coordinates are mapped to firing rates and converted to Poisson spike trains, as described in [4].

In both cases, the output layer includes one neuron per class (two neurons for binary classification, three neurons for Yin–Yang), whose firing rates are modulated by the control neurons to reach high target activity for their corresponding class inputs and low activity for all others. As in the binary task, each training example is presented once for a fixed duration, and separate training, validation, and test sets are generated randomly to ensure robustness of the evaluation.

III. RESULTS

A. Network calibration on the DYNAP-SE

Accurate evaluation of the feedback control algorithm requires calibration of neuron, synapse, and controller parameters on the DYNAP-SE. Due to analog variability and limited observability of the system, we calibrate the neuron activities through targeted experiments, summarized in Fig. 2b.

Since FPGA-based virtual neurons cannot be monitored directly, we first calibrated the input and target spike generation using recorder neurons. In particular, we route the output of the virtual neurons through the recorder neurons present on-chip and correct the mapping to significantly reduce the mismatch between desired and measured activities (Fig. 2b, left). Second, we measure the synaptic transfer characteristics by driving pairs of neurons with controlled presynaptic firing rates and varying synaptic weight.
The resulting frequency–frequency curves (Fig. 2b, middle) confirm that larger weights reliably increase postsynaptic rates, while highlighting the practical upper bound imposed by the 64-input fan-in constraint. We use this information to choose the effective weight range used during training. Finally, we tune the controller biases to align the differential activity of positive and negative control neurons with the target error signal. After calibration, the feedback signal $r_{\mathrm{fb}} = r_{+} - r_{-}$ linearly tracks the difference between output and target activity, i.e., the error signal (Fig. 2b, right). This step is critical to ensure that the feedback current used in learning reliably encodes the error magnitude and sign, up to a scaling factor that can be compensated by the learning rate.

Overall, these calibration procedures establish stable operating conditions on the DYNAP-SE for robust ITL training.

B. Binary classification dataset

We first evaluate the feedback-control optimizer on the binary classification task (Fig. 3a). The absolute difference between the output and the target activity (i.e., the target error) decreases steadily during ITL training (Fig. 3b). After training, the hardware implementation achieves 100% accuracy and a 2.1 Hz target error, closely matching the corresponding numerical simulations (Table I). Over the course of training, the network activity without the feedback drive approaches the target activities for both class 1 and class 2 (Fig. 3c, left). As the system learns to reach the targets without active feedback, the synaptic weights are adjusted incrementally, gradually diverging from their constant initialization (Fig. 3c, right).

C. Non-linear Yin–Yang dataset

Next, we evaluate the optimizer on the Yin–Yang benchmark, a three-class problem requiring non-linear decision boundaries (see Fig. 3d and Section II-D).
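As an illustration of the input encoding described in Section II-D, the sketch below turns one Yin–Yang sample into four firing rates and draws Poisson spike trains from them. The linear mapping from coordinates in [0, 1] to rates between 20 and 100 Hz is an assumption (the text states only that coordinates are mapped to firing rates in that range), and the function names are hypothetical.

```python
import random


def features_to_rates(x, y, r_min=20.0, r_max=100.0):
    """Map a point (x, y) in [0, 1]^2 to four input rates in Hz.

    Assumes a linear mapping of the features (x, y, 1 - x, 1 - y).
    """
    features = (x, y, 1.0 - x, 1.0 - y)
    return [r_min + f * (r_max - r_min) for f in features]


def poisson_spike_train(rate_hz, duration_s, rng):
    """Spike times of a homogeneous Poisson process, drawn from
    exponentially distributed inter-spike intervals."""
    times = []
    t = rng.expovariate(rate_hz)
    while t < duration_s:
        times.append(t)
        t += rng.expovariate(rate_hz)
    return times


rng = random.Random(0)  # fixed seed for reproducibility
rates = features_to_rates(0.25, 0.75)
trains = [poisson_spike_train(r, duration_s=1.0, rng=rng) for r in rates]
```

Including the complementary features 1 − x and 1 − y means every sample drives at least two inputs at or above the mid-range rate, so no region of the symbol is represented only by low input activity.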
The network consists of four input neurons representing the dot coordinates $(x, y, 1-x, 1-y)$ and three output neurons. Input firing rates ranged from 20 to 100 Hz, while target neurons were driven at 20 Hz for the correct class and 2 Hz otherwise. Figure 3e shows the training and validation accuracy during ITL training of the DYNAP-SE. The hardware implementation achieves 67% accuracy at test time, closely matching the numerical simulations (63%) and the ANN baseline (66%) for a single linear layer, as reported in [4]. We estimated the power consumption during the inference phase on the DYNAP-SE chip [2] for each experiment, obtaining values on the order of 10–100 µW. Table I summarizes these results. Figure 3f shows test inference (without active feedback), revealing a clear separation of the three regions. Furthermore, the synaptic weights of the different output neurons diverge from their random Gaussian initialization and stabilize after ∼10k training examples (Figure 3f, right).

Overall, the results on both classification tasks demonstrate successful training of single-layer SNNs directly on neuromorphic hardware using the spike-based learning rule of the feedback-control algorithm.

Fig. 3. Successful ITL training with the DYNAP-SE. a) Binary classification task. b) Target error during ITL training and comparison with numerical simulations. SNN-SFC stands for Spiking Neural Network - Spiking Feedback Control. c) Left: Activity of the output neuron during ITL training when given inputs from class 1 and 2 (see legend). Right: Dynamics of the input weights. d) Poisson encoding of the Yin–Yang dataset. e) Same as b for classification accuracy. ANN-BP stands for Artificial Neural Network - Backpropagation. f) Left: Network inference at test time. Right: Dynamics of the input weights.

TABLE I. Test-time accuracy for a standard single-layer ANN: 100% on binary classification, 66% on Yin–Yang [4]. Estimated power consumption on DYNAP-SE during inference.

| dataset | test-time metric | numerical sim. | DYNAP-SE | power [µW] |
|---|---|---|---|---|
| Binary | accuracy [%] | 100 | 100 | 41 |
| | target error [Hz] | 3.9 | 2.1 | |
| Yin–Yang | accuracy [%] | 63 | 67 | 189 |
| | target error [Hz] | 7.2 | 6.1 | |

IV. CONCLUSIONS

In this work, we demonstrated the implementation of a spike-based feedback control learning rule on the DYNAP-SE neuromorphic processor. Using an ITL setup, we were able to test the algorithm directly on mixed-signal hardware, accounting for device mismatch, noise, and limited synaptic resolution. The results on both binary and Yin–Yang classification tasks show that our approach achieves performance comparable to software simulations and gradient-based baselines, while operating fully in real time. The continuous, event-driven dynamics of the DYNAP-SE allowed weight updates to be applied rapidly and seamlessly during training, highlighting the suitability of the platform for exploring hardware–algorithm co-design. Because the learning rule is not yet implemented directly in silicon, a host computer was required to calculate weight updates and reconfigure synapses. Nonetheless, this computer-in-the-loop scheme enabled systematic evaluation under realistic hardware constraints and established a path toward embedded on-chip learning.
Extending this framework to multi-layer networks requires rules for learning the feedback weights to hidden layers themselves, in addition to the rule introduced here for learning feedforward weights. Backpropagation-free frameworks such as [12, 13] provide useful starting points. Furthermore, future work will include the design of custom neuromorphic processors embedding the feedback control rule natively in silicon. Such developments would eliminate the need for external supervision, paving the way for scalable and autonomous online learning in SNNs.

ACKNOWLEDGMENT

M.S. and J.H. acknowledge the ETH Zürich Postdoctoral Fellowship (nr. 23-2 FEL-042). C.D.L and J.H. were supported by the DSI at University of Zurich (grant no. G-95017-01-12). G.I. was supported by the HORIZON EUROPE EIC Pathfinder Grant ELEGANCE (Grant No. 101161114) and received funding from the Swiss State Secretariat for Education, Research and Innovation (SERI). B.G. was supported by the Swiss National Science Foundation (CRSII5-173721, 315230 189251) and ETH project funding (24-2 ETH-032).

REFERENCES

[1] Elisabetta Chicca, Fabio Stefanini, Chiara Bartolozzi, and Giacomo Indiveri. "Neuromorphic Electronic Circuits for Building Autonomous Cognitive Systems". In: Proceedings of the IEEE 102.9 (Sept. 2014), pp. 1367–1388. ISSN: 1558-2256. DOI: 10.1109/JPROC.2014.2313954. (Visited on 11/04/2024).
[2] Saber Moradi, Ning Qiao, Fabio Stefanini, and Giacomo Indiveri. "A Scalable Multicore Architecture With Heterogeneous Memory Structures for Dynamic Neuromorphic Asynchronous Processors (DYNAPs)". In: IEEE Transactions on Biomedical Circuits and Systems 12.1 (Feb. 2018), pp. 106–122. ISSN: 1940-9990. DOI: 10.1109/TBCAS.2017.2759700. (Visited on 11/16/2023).
[3] Sander M. Bohte, Joost N. Kok, and Han La Poutré. "Error-Backpropagation in Temporally Encoded Networks of Spiking Neurons". In: Neurocomputing 48.1 (Oct. 2002), pp. 17–37. ISSN: 0925-2312. DOI: 10.1016/S0925-2312(01)00658-0. (Visited on 11/16/2023).
[4] Matteo Saponati, Chiara De Luca, Giacomo Indiveri, and Benjamin Grewe. "A feedback control optimizer for online and hardware-aware training of Spiking Neural Networks". In: 2025 Neuro Inspired Computational Elements (NICE). IEEE. 2025, pp. 1–10.
[5] S. Moradi, N. Qiao, F. Stefanini, and G. Indiveri. "A Scalable Multicore Architecture With Heterogeneous Memory Structures for Dynamic Neuromorphic Asynchronous Processors (DYNAPs)". In: IEEE Transactions on Biomedical Circuits and Systems 12.1 (Feb. 2018), pp. 106–122. DOI: 10.1109/TBCAS.2017.2759700.
[6] P. Livi and G. Indiveri. "A current-mode conductance-based silicon neuron for Address-Event neuromorphic systems". In: International Symposium on Circuits and Systems, (ISCAS). IEEE. May 2009, pp. 2898–2901. DOI: 10.1109/ISCAS.2009.5118408.
[7] E. Chicca, F. Stefanini, C. Bartolozzi, and G. Indiveri. "Neuromorphic electronic circuits for building autonomous cognitive systems". In: Proceedings of the IEEE 102.9 (Sept. 2014), pp. 1367–1388. ISSN: 0018-9219. DOI: 10.1109/JPROC.2014.2313954.
[8] Romain Brette and Wulfram Gerstner. "Adaptive exponential integrate-and-fire model as an effective description of neuronal activity". In: Journal of Neurophysiology 94.5 (2005), pp. 3637–3642. DOI: 10.1152/jn.00686.2005.
[9] S.R. Deiss, R.J. Douglas, and A.M. Whatley. "A Pulse-Coded Communications Infrastructure for Neuromorphic Systems". In: Pulsed Neural Networks. Ed. by W. Maass and C.M. Bishop. MIT Press, 1998. Chap. 6, pp. 157–78. DOI: 10.7551/mitpress/5704.003.0011.
[10] SynSense. Samna: SynSense Samna Public Package. https://pypi.org/project/samna/. Version 0.47.1, License: Apache-2.0. Requires at least Python 3.9. Released 12 June 2025. 2025.
[11] Laura Kriener, Julian Göltz, and Mihai A. Petrovici. The Yin-Yang Dataset. Jan. 2022. DOI: 10.48550/arXiv.2102.08211. (Visited on 06/10/2024).
[12] Alexander Meulemans et al. "Credit Assignment in Neural Networks through Deep Feedback Control". In: Advances in Neural Information Processing Systems. Vol. 34. Curran Associates, Inc., 2021, pp. 4674–4687. (Visited on 07/10/2023).
[13] Alexander G. Ororbia, Ankur Mali, Daniel Kifer, and C. Lee Giles. "Backpropagation-Free Deep Learning with Recursive Local Representation Alignment". In: Proceedings of the AAAI Conference on Artificial Intelligence 37.8 (June 2023), pp. 9327–9335. ISSN: 2374-3468. DOI: 10.1609/aaai.v37i8.26118. (Visited on 03/13/2024).
