ACAVCaps: Enabling large-scale training for fine-grained and diverse audio understanding

General audio understanding is a fundamental goal for large audio-language models, with audio captioning serving as a cornerstone task for their development. However, progress in this domain is hindered by existing datasets, which lack the scale and …

Authors: Yadong Niu, Tianzi Wang, Heinrich Dinkel

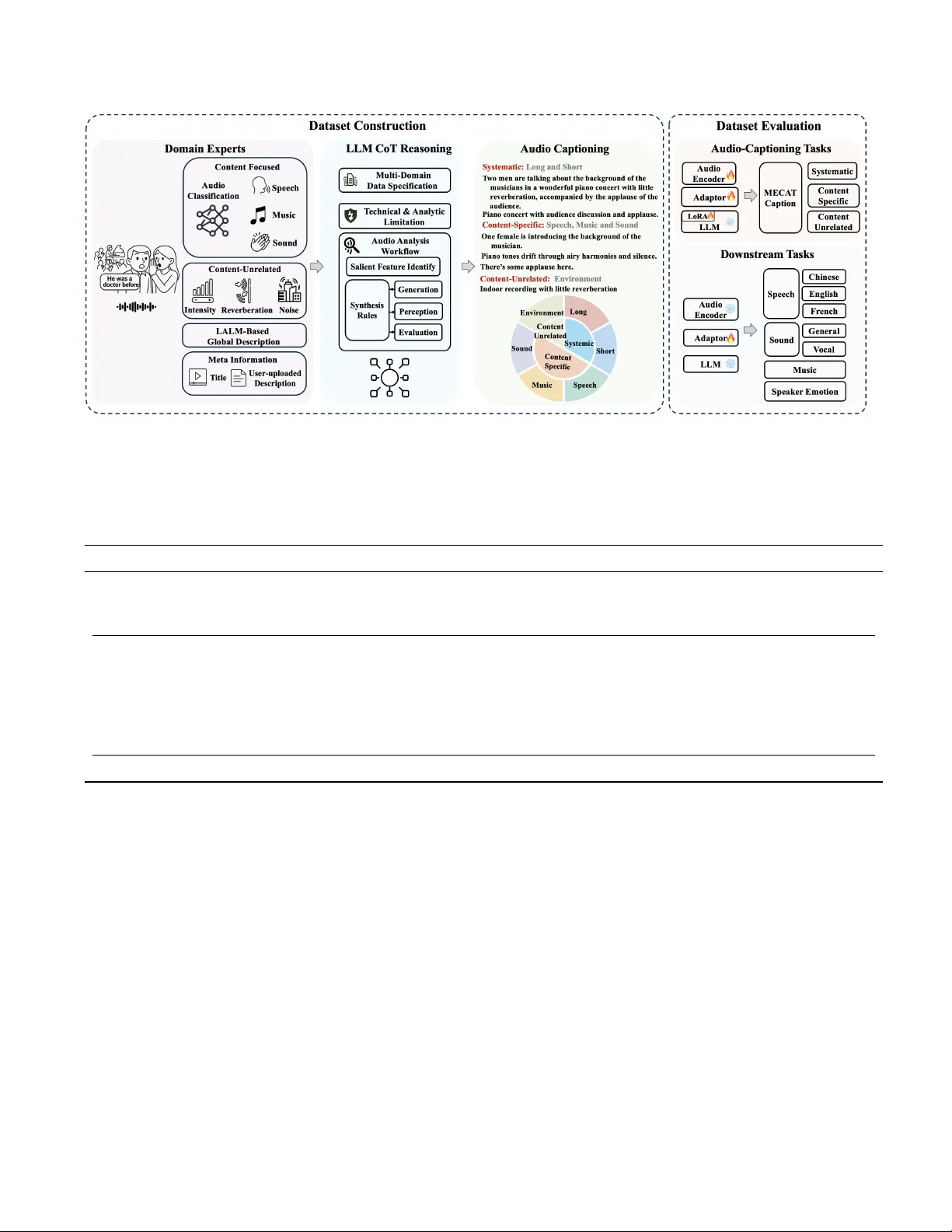

A CA VCAPS: ENABLING LARGE-SCALE TRAINING FOR FINE-GRAINED AND DIVERSE A UDIO UNDERST ANDING Y adong Niu 1 , T ianzi W ang 1,2 , Heinrich Dink el 1 , Xingwei Sun 1 , Jiahao Zhou 1 , Gang Li 1 , Jizhong Liu 1 , J unbo Zhang 1 , Jian Luan 1 1 MiLM Plus, Xiaomi Inc, Beijing, China 2 The Chinese Uni versity of Hong K ong, Hong K ong, China ABSTRA CT General audio understanding is a fundamental goal for large audio-language models, with audio captioning serving as a cornerstone task for their de velopment. Howe ver , progress in this domain is hindered by existing datasets, which lack the scale and descripti ve granularity required to train truly versatile models. T o address this gap, we introduce A CA V - Caps, a ne w lar ge-scale, fine-grained, and multi-faceted audio captioning dataset. Derived from the A CA V100M collection, A CA VCaps is constructed using a multi-expert pipeline that analyzes audio from diverse perspecti ves—including speech, music, and acoustic properties—which are then synthesized into rich, detailed descriptions by a large language model. Experimental results demonstrate that models pre-trained on A CA VCaps exhibit substantially stronger generalization ca- pabilities on various downstream tasks compared to those trained on other leading captioning datasets. The dataset is av aiable at https://github.com/xiaomi-research/aca vcaps. Index T erms — audio captioning, audio understanding, large audio language model 1. INTR ODUCTION The ability to comprehend complex acoustic en vironments is a fundamental goal in the pursuit of general artificial intel- ligence. Large Audio-Language Models (LALMs) hav e re- cently emerged as a promising paradigm for this task, aim- ing to build models with a deep and versatile understanding of sound. A cornerstone for developing such models is the task of audio captioning—generating rich, human-readable descriptions of acoustic content, which serves as a powerful bridge between the audio and text modalities. Despite their rapid progress, the performance of current LALMs on diverse, real-world audio understanding tasks re- mains constrained. W e argue that this limitation stems not from the models themselves, but from the data they are trained on. Existing audio captioning datasets suf fer from sev eral critical issues: (I) Scarcity of high-Fidelity data at scale. Cre- ating large-scale datasets with accurate and detailed descrip- tions is inherently challenging. Manual annotation is costly and dif ficult to scale; (II) Homogeneous Sources and Rigid Annotations. Most large-scale datasets are confined to lim- ited domains (e.g., AudioSet) and characterized by a single, stylistic pattern that lacks the linguistic variety found in the real world; (III) Lack of Descriptiv e Granularity . Captions are often too generic (e.g., “a man is speaking”) and fail to provide the discriminati ve acoustic features needed to distin- guish between nuanced auditory events. Effecti ve audio-text alignment is crucial for LALMs, which requires a training dataset that provides a fine-grained mapping from audio to descriptiv e text. High-quality , detailed captions enable this, allowing models to learn generalizable representations for di- verse tasks. W e introduce A CA VCaps, a large-scale, fine-grained au- dio captioning dataset deriv ed from A CA V100M [1]. T o cre- ate detailed captions, we analyze audio with specialized ex- pert models and synthesize their outputs using a Chain-of- Thought (CoT) enhanced lar ge language model (LLM). This pipeline produces richly detailed descriptions to train more capable audio models. 2. RELA TED WORKS The advancement of general audio understanding is intrinsi- cally linked to the quality and diversity of training datasets. The field has e volv ed from smaller , manually annotated cor- pora to large-scale, automatically generated ones, yet signif- icant challenges related to data scale, descriptiv e granularity , and source limitations persist across all major audio domains. In the domain of general sound e vents, early foundational datasets like AudioCaps [2] and Clotho [3] were created through intensi ve manual annotation. While providing high- quality human descriptions, their data scale is inherently limited (typically a fe w thousand samples), and their captions often lack fine-grained detail, focusing on generic, e vent- lev el descriptions. T o address the scale issue, a new wa ve of datasets was created using automated pipelines. These in- clude W avCaps [7], AudioSetCaps [10], and Auto-A CD [8], which scaled up to millions of audio-caption pairs. How- ev er , this increase in scale came at the cost of descriptive quality . The data sources for these automated methods are Fig. 1 . Data construction and e valuation frameo wrk. T able 1 . Comparison of A CA VCaps with existed caption datasets. The Unique T okens column reports the total number of unique tokens within each dataset, as counted by the Qwen3 tokenizer . † MP-LLM: Multiple Experts Models and LLM; ‡ Multi-Domain: This includes speech, music and sound-e vents ( ⋄ denotes that domain were not elaborated in detail); § Extended Multi-Domain: This includes speech, music, sound-ev ents, combinations thereof, and silence. Labeling Dataset Duration (h) Samples Unique T okens Domain Source Manual AudioCaps [2] 135 50k 5.5k Multi-Domain ‡ , ⋄ AudioSet Clotho [3] 24 3.8k 5.5k Multi-Domain ‡ , ⋄ FreeSound SongDescriber [4] 12 0.4k 2.4k Music MTG-Jamendo LLM MusicCaps [5] 7 4.6k 4.6k Music AudioSet LPMusicCaps [6] 127 21.6k 5.1k Music Audioset, MSD W avCaps [7] 1.8k 0.4M 23.1k Multi-Domain ‡ , ⋄ AudioSet Auto-A CD [8] 5.2k 1.9M 20.3k Multi-Domain ‡ , ⋄ AudioSet Sound-V eCaps [9] 4.5k 1.6M 42.7k Multi-Domain ‡ , ⋄ AudioSet AudioSetCaps [10] 5.6k 2.0M 20.9k Multi-Domain ‡ , ⋄ AudioSet MP-LLM † A CA VCaps (Ours) 13.0k 4.7M 76.7k Extended Multi-Domain § A CA V100M often a limiting factor; for instance, W avCaps refines web- crawled text which is often coarse, while Auto-ACD and Sound-VECaps [9] rely on paired video data, restricting their applicability to audio-only contexts. Consequently , despite their size, these datasets often fail to resolv e the core problem of descriptiv e granularity . The music domain sho ws a similar trajectory . Manu- ally annotated datasets like MusicCaps [5] and SongDe- scriber [11] of fer rich, detailed captions but are limited in scale. In response, automatically labeled datasets such as LP- MusicCaps [6] hav e emerged, lev eraging LLMs to generate captions from existing musical databases. While larger , their descriptiv e granularity is often constrained by the richness of the source metadata, which may not contain the nuanced details of instrumentation, mood, and te xture that a deep acoustic analysis could provide. For speech, large-scale datasets ha ve historically focused on tasks like automatic speech recognition (ASR), with their annotations capturing lexical content (transcripts) rather than descriptiv e captions of the acoustic scene. This leaves a sig- nificant gap, as the holistic description of a speech event - including tone, emotion, and environment — is crucial for general audio intelligence. Thus, the current landscape of audio captioning datasets presents a trade-off between the high descriptive quality of small, manually-annotated datasets and the coarse granular- ity of larger , automatically-generated ones. This highlights a need for a resource that unifies large scale with fine-grained, acoustically-grounded descriptions across all major audio do- mains—sound events, music, and speech. A summary of our proposed dataset in comparison to existing ones can be seen in T able 1. T able 2 . Performance of audio captioning models pre-trained on v arious datasets, e valuated on the MECA T -Caption benchmark. The final score is a weighted average of three main categories: systematic, Content-Specific, and Content-Unrelated. F or all metrics, a higher score indicates better performance. Notably , a ’Pure’ sample contains only one content type (e.g., only speech), while a ’Mixed’ sample contains a combination of two or three types (i.e., speech, music, and sound ev ents). † Combined refers to the combination of AudioSetCaps, Auto-A CD, W avCaps, and Sound-VECaps. T raining Dataset Systematic Content-Related Content-Unrelated Score Long Short Speech Music Sound Envi ronment Pure Mixed Pure Mix ed Pure Mixed AudioSetCaps [10] 52.4 52.0 30.2 31.4 44.3 30.9 52.4 21.6 15.4 37.4 Auto-A CD [8] 47.3 50.0 29.1 31.0 26.9 21.9 49.5 18.9 11.0 32.8 W avCaps [7] 47.3 50.9 27.3 30.1 15.9 19.4 46.5 20.0 9.2 31.4 Sound-V eCaps [9] 47.0 49.7 29.1 30.3 27.2 21.9 49.8 18.7 11.4 32.8 Combined † 52.2 54.1 30.2 32.2 45.4 23.3 52.7 20.2 11.1 36.6 A CA VCaps 76.6 75.7 64.2 64.9 60.5 41.1 59.5 28.0 34.8 60.9 3. A CA VCAPS D A T A CONSTR UCTION The data construction pipeline is adapted from the methodol- ogy established in our prior work [12], where a comprehen- siv e description of the expert models and LLM prompts can be found. In this section, we provide a brief ov ervie w of this pipeline. As illustrated in Figure 1, the multi-stage process is designed to capture a rich, multi-faceted understanding of each audio clip, beginning with analysis by a suite of special- ized expert models and culminating in a final synthesis stage by a LLM. 3.1. Multi-Expert Annotation The initial analysis stage is designed to gather a compre- hensiv e set of features from four key sources to inform the final caption generation. The primary source is a content- related analysis pipeline: a CED-Base model [13] first clas- sifies the audio to predict AudioSet [14] labels, which then routes the clip to specialized modules for speech (perform- ing ASR and extracting speaker attributes), music (analyzing attributes like tempo and mood and separating vocals), or sound ev ents (using the initial labels). This is supplemented by a content-unrelated analysis that universally characterizes acoustic properties such as signal intensity (RMS), recording quality , and reverberation. T o provide further semantic con- text, we also generate a baseline description using a LALM and extract any original metadata, such as titles or tags, from the source file. T ogether , these structured analyses and raw metadata form the complete input for the final synthesis stage. 3.2. LLM-CoT Reasoning The final stage le verages a LLM (Deepseek-R1 [15]) to syn- thesize the disparate outputs from the multi-expert analysis in conjunction with the original file metadata. Employing a Chain-of-Thought (CoT) prompting strategy , the LLM rea- sons over the collected evidence to resolve inconsistencies, infer relationships, and distill the most salient information. T o ensure descriptiv e di versity , this process yields a final set of annotations where, for each identified acoustic scene or ev ent, the LLM generates three semantically consistent yet stylistically v aried captions. These are complemented by cor - responding question-answer pairs, and all generated items are appended with a confidence score. 4. EXPERIMENTS AND RESUL TS T o comprehensiv ely ev aluate our proposed dataset, A CA V - Caps, we designed a series of experiments to assess the quality of the audio representations learned from it. Specif- ically , all models share a unified architecture consisting of a Dasheng-Base audio encoder [16], a lightweight MLP adapter , and a Qwen3-0.6B decoder [17]. Implementation Details All models were trained on eight GPUs. W e used the AdamW8bit optimizer with a learning rate of 1 × 10 − 4 and a weight decay of 0.01 with a batch size of 16. The training strategies, illustrated in Figure 1, differed based on the ev aluation task. For audio captioning, the audio encoder and MLP adapter were jointly trained while the LLM was fine-tuned with LoRA. Con versely , to assess do wnstream generalization, both the audio encoder and LLM were frozen, leaving only the MLP adapter trainable. 4.1. Direct Perf ormance on A udio Captioning T o assess direct audio captioning performance, we conducted a comprehensiv e ev aluation using the MECA T -Caption bench- mark [12]. This benchmark provides a multi-faceted analy- sis of captioning quality across systematic, content-specific and content-unrelated categories, where performance is mea- sured using the discriminati ve-enhanced audio text ev aluation (D A TE) score, a metric that rewards descripti ve specificity in addition to semantic similarity . T able 3 . Performance on downstream tasks. A model is pretrained on each respective training dataset. Then, for each task, we freeze the model and only optimize the adapter . For all speech tasks, lower is better , while for all other tasks higher is better . † Combined refers to the combination of AudioSetCaps, Auto-A CD, W avCaps, and Sound-VECaps. T raining Dataset Speech ↓ Sound ↑ Music ↑ Other ↑ AISHELL-2 LibriSpeech Common V oice General V ocal Instrument Emotion Android IOS MIC Clean Other French VGGSound V ocalSound NSynth IEMOCAP AudioSetCaps 82.7 77.8 81.7 51.6 70.2 84.7 22.4 91.4 67.0 17.6 Auto-A CD 89.1 78.2 88.6 54.6 76.5 85.7 22.5 90.2 46.1 24.1 W avCaps 83.2 74.2 77.9 54.3 74.0 85.2 21.2 91.5 69.1 19.9 Sound-VECaps 87.3 79.5 87.9 51.8 70.1 85.6 22.9 90.8 45.0 20.3 Combined † 84.2 76.4 82.3 41.5 59.4 83.0 34.6 92.6 44.0 19.8 A CA VCaps 58.3 56.5 57.1 19.7 33.7 50.0 20.4 92.1 64.7 28.9 The results, presented in T able 2, demonstrate the clear superiority of the model trained on A CA VCaps. It achie ves an ov erall D A TE score of 60.9, significantly outperforming mod- els trained on other large-scale datasets. This validates that the superior detail fostered by A CA VCaps directly translates into an enhanced ability to generate fine-grained captions. 4.2. Analysis of Generalization Perf ormance The unique token counts in T able 1 offer a static measure of each dataset’ s information richness. This section presents a more functional and dynamic ev aluation, analyzing how that richness translates to the generalization capabilities of pre-trained models. Our ev aluation is premised on the hy- pothesis that a dataset with greater informational breadth is key to learning more transferable representations. T o validate this, we measure the downstream performance of models pre-trained on each dataset by fine-tuning them across four distinct and representativ e sub-domains of au- dio: speech content, sound events, music information, and paralinguistic attributes. For speech, we assess phonetic and linguistic understanding via multilingual ASR across Chi- nese (AISHELL-2 [18]), English (LibriSpeech [19]), and French (Common V oice [20]). For sound ev ents, we test en- vironmental awareness on a general classification benchmark (VGGSound [21]) and the ability to distinguish non-speech human sounds (V ocalSound [22]). In music, we gauge analyt- ical proficiency through a fine-grained instrument recognition task (NSynth [23]). Finally , to probe the understanding of paralinguistic attrib utes, we e v aluate on a speech emotion recognition task (IEMOCAP [24]). This comprehensive se- lection allo ws us to holistically ev aluate the quality of the general-purpose representations that each dataset helps to cultiv ate. The results in table 3 substantiate our hypothesis. No- tably , while the ’Combined’ baseline comprises a larger sample size (6.0M vs. 4.7M), the A CA VCaps-trained model consistently exhibits superior performance across div erse downstream tasks. This performance gap is primarily at- tributed to informational density rather than sheer scale: despite having fe wer audio-text pairs, ACA VCaps possesses a significantly higher unique token count (76.7K) compared to the Combined set (47.6K). Such lexical richness facili- tates more precise audio-text alignment, fostering robust and generalizable representations. These findings illustrate that A CA VCaps achiev es competitiv e or ev en superior results ov er larger aggregated datasets, reinforcing the premise that semantic complexity and data quality outweigh raw sample volume in audio-language pre-training. ” 5. SUMMAR Y Progress in general audio understanding has been hampered by datasets limited in scale, scope, and descriptive detail. This paper addresses this bottleneck by introducing A CA VCaps, a large-scale audio captioning dataset designed to be com- prehensiv e in content and di verse in its descripti ve angles. Generated via a sophisticated pipeline, A CA VCaps provides a nov el resource for training next-generation audio models. Our experiments confirm that models trained on A CA V - Caps e xhibit superior performance. They not only e xcel at complex audio captioning tasks but also demonstrate strong generalization, successfully transferring to down- stream speech, music, and sound event analysis tasks with significant impro vements. This validates our core hypothesis: large-scale, comprehensiv e, and richly described datasets are crucial for dev eloping robust and versatile audio representa- tions. 6. REFERENCES [1] Sangho Lee, Jiwan Chung, Y oungjae Y u, Gunhee Kim, Thomas Breuel, Gal Chechik, and Y ale Song, “ Acav100m: Au- tomatic curation of large-scale datasets for audio-visual video representation learning, ” in Pr oceedings of the IEEE/CVF In- ternational Confer ence on Computer V ision , 2021, pp. 10274– 10284. [2] Chris Dongjoo Kim, Byeongchang Kim, Hyunmin Lee, and Gunhee Kim, “ Audiocaps: Generating captions for audios in the wild, ” in Pr oceedings of the 2019 Confer ence of the North American Chapter of the Association for Computational Lin- guistics , 2019, pp. 119–132. [3] K onstantinos Drossos, Samuel Lipping, and Tuomas V irtanen, “Clotho: an audio captioning dataset, ” in Pr oceedings of the IEEE International Conference on Acoustics, Speech and Sig- nal Pr ocessing (ICASSP) . IEEE, 2020, pp. 736–740. [4] Ilaria Manco, Benno W eck, Seungheon Doh, Minz W on, Y ix- iao Zhang, Dmitry Bogdanov , Y usong W u, Ke Chen, Philip T ovstogan, Emmanouil Benetos, et al., “The song describer dataset: a corpus of audio captions for music-and-language ev aluation, ” arXiv pr eprint arXiv:2311.10057 , 2023. [5] Andrea Agostinelli, T imo I Denk, Zal ´ an Borsos, Jesse En- gel, Mauro V erzetti, Antoine Caillon, Qingqing Huang, Aren Jansen, Adam Roberts, Marco T agliasacchi, et al., “Musiclm: Generating music from te xt, ” arXiv pr eprint arXiv:2301.11325 , 2023. [6] SeungHeon Doh, Keunwoo Choi, Jongpil Lee, and Juhan Nam, “Lp-musiccaps: Llm-based pseudo music captioning, ” arXiv pr eprint arXiv:2307.16372 , 2023. [7] Xinhao Mei, Chutong Meng, Haohe Liu, Qiuqiang K ong, T om Ko, Chengqi Zhao, Mark D Plumbley , Y uexian Zou, and W enwu W ang, “W avcaps: A chatgpt-assisted weakly-labelled audio captioning dataset for audio-language multimodal re- search, ” IEEE/A CM T ransactions on A udio, Speec h, and Lan- guage Pr ocessing , vol. 32, pp. 3339–3354, 2024. [8] Luoyi Sun, Xuenan Xu, Mengyue W u, and W eidi Xie, “ Auto- acd: A large-scale dataset for audio-language representation learning, ” in Pr oceedings of the 32nd ACM International Con- fer ence on Multimedia , 2024, pp. 5025–5034. [9] Y i Y uan, Dongya Jia, Xiaobin Zhuang, Y uanzhe Chen, Zhuo Chen, Y uping W ang, Y uxuan W ang, Xubo Liu, Xiyuan Kang, Mark D Plumbley , et al., “Sound-v ecaps: Improving audio generation with visually enhanced captions, ” in Pr oceedings of the IEEE International Confer ence on Acoustics, Speech and Signal Pr ocessing (ICASSP) . IEEE, 2025, pp. 1–5. [10] Jisheng Bai, Haohe Liu, Mou W ang, Dongyuan Shi, W enwu W ang, Mark D Plumbley , W oon-Seng Gan, and Jianfeng Chen, “ Audiosetcaps: An enriched audio-caption dataset using auto- mated generation pipeline with large audio and language mod- els, ” IEEE T ransactions on Audio, Speech and Language Pro- cessing , vol. 33, pp. 2817–2829, 2025. [11] Ilaria Manco, Benno W eck, Seungheon Doh, Minz W on, Y ix- iao Zhang, Dmitry Bogdanov , Y usong W u, Ke Chen, Philip T ovstogan, Emmanouil Benetos, et al., “The song describer dataset: a corpus of audio captions for music-and-language ev aluation, ” arXiv pr eprint arXiv:2311.10057 , 2023. [12] Y adong Niu, Tianzi W ang, Heinrich Dinkel, Xingwei Sun, Jiahao Zhou, Gang Li, Jizhong Liu, Xunying Liu, Junbo Zhang, and Jian Luan, “Mecat: A multi-experts constructed benchmark for fine-grained audio understanding tasks, ” arXiv pr eprint arXiv:2507.23511 , 2025. [13] Heinrich Dinkel, Y ongqing W ang, Zhiyong Y an, Junbo Zhang, and Y ujun W ang, “Ced: Consistent ensemble distillation for audio tagging, ” in Pr oceedings of the IEEE International Con- fer ence on Acoustics, Speech and Signal Pr ocessing (ICASSP) . IEEE, 2024, pp. 291–295. [14] Jort F Gemmeke, Daniel PW Ellis, Dylan Freedman, Aren Jansen, W ade Lawrence, R Channing Moore, Manoj Plakal, and Marvin Ritter , “ Audio set: An ontology and human-labeled dataset for audio e vents, ” in Pr oceedings of the IEEE Interna- tional Confer ence on Acoustics, Speech and Signal Pr ocessing (ICASSP) . IEEE, 2017, pp. 776–780. [15] Daya Guo, Dejian Y ang, Haowei Zhang, Junxiao Song, Ruoyu Zhang, Runxin Xu, Qihao Zhu, Shirong Ma, Peiyi W ang, Xiao Bi, et al., “Deepseek-r1: Incentivizing reasoning ca- pability in llms via reinforcement learning, ” arXiv pr eprint arXiv:2501.12948 , 2025. [16] Heinrich Dinkel, Zhiyong Y an, Y ongqing W ang, Junbo Zhang, Y ujun W ang, and Bin W ang, “Scaling up masked audio en- coder learning for general audio classification, ” in Pr oceed- ings of the 25th Interspeech Conference (interspeech) , 2024, pp. 547–551. [17] Qwen T eam, “Qwen3 technical report, ” 2025. [18] Jiayu Du, Xingyu Na, Xuechen Liu, and Hui Bu, “ Aishell- 2: Transforming mandarin asr research into industrial scale, ” arXiv pr eprint arXiv:1808.10583 , 2018. [19] V assil Panayotov , Guoguo Chen, Daniel Pove y , and Sanjeev Khudanpur , “Librispeech: an asr corpus based on public domain audio books, ” in Pr oceedings of the IEEE Interna- tional Confer ence on Acoustics, Speech and Signal Pr ocessing (ICASSP) . IEEE, 2015, pp. 5206–5210. [20] Rosana Ardila, Megan Branson, Kelly Davis, Michael K ohler , Josh Meyer , Michael Henretty , Reuben Morais, Lindsay Saun- ders, Francis T yers, and Gregor W eber , “Common voice: A massively-multilingual speech corpus, ” in Pr oceedings of the T welfth Language Resour ces and Evaluation Confer ence , 2020, pp. 4218–4222. [21] Honglie Chen, W eidi Xie, Andrea V edaldi, and Andrew Zis- serman, “Vggsound: A large-scale audio-visual dataset, ” in Pr oceedings of the IEEE International Confer ence on Acous- tics, Speech and Signal Pr ocessing (ICASSP) . IEEE, 2020, pp. 721–725. [22] Y uan Gong, Jin Y u, and James Glass, “V ocalsound: A dataset for improving human vocal sounds recognition, ” in Pr o- ceedings of the IEEE International Conference on Acoustics, Speech and Signal Pr ocessing (ICASSP) . IEEE, 2022, pp. 151– 155. [23] Jesse Engel, Cinjon Resnick, Adam Roberts, Sander Dieleman, Mohammad Norouzi, Douglas Eck, and Karen Simonyan, “Neural audio synthesis of musical notes with wav enet au- toencoders, ” in International conference on machine learning . PMLR, 2017, pp. 1068–1077. [24] Carlos Busso, Murtaza Bulut, Chi-Chun Lee, Abe Kazemzadeh, Emily Mower , Samuel Kim, Jeannette N Chang, Sungbok Lee, and Shrikanth S Narayanan, “Iemo- cap: Interactiv e emotional dyadic motion capture database, ” in Language r esour ces and evaluation , 2008, v ol. 42, pp. 335–359.

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment