Blind Quality Enhancement for G-PCC Compressed Dynamic Point Clouds

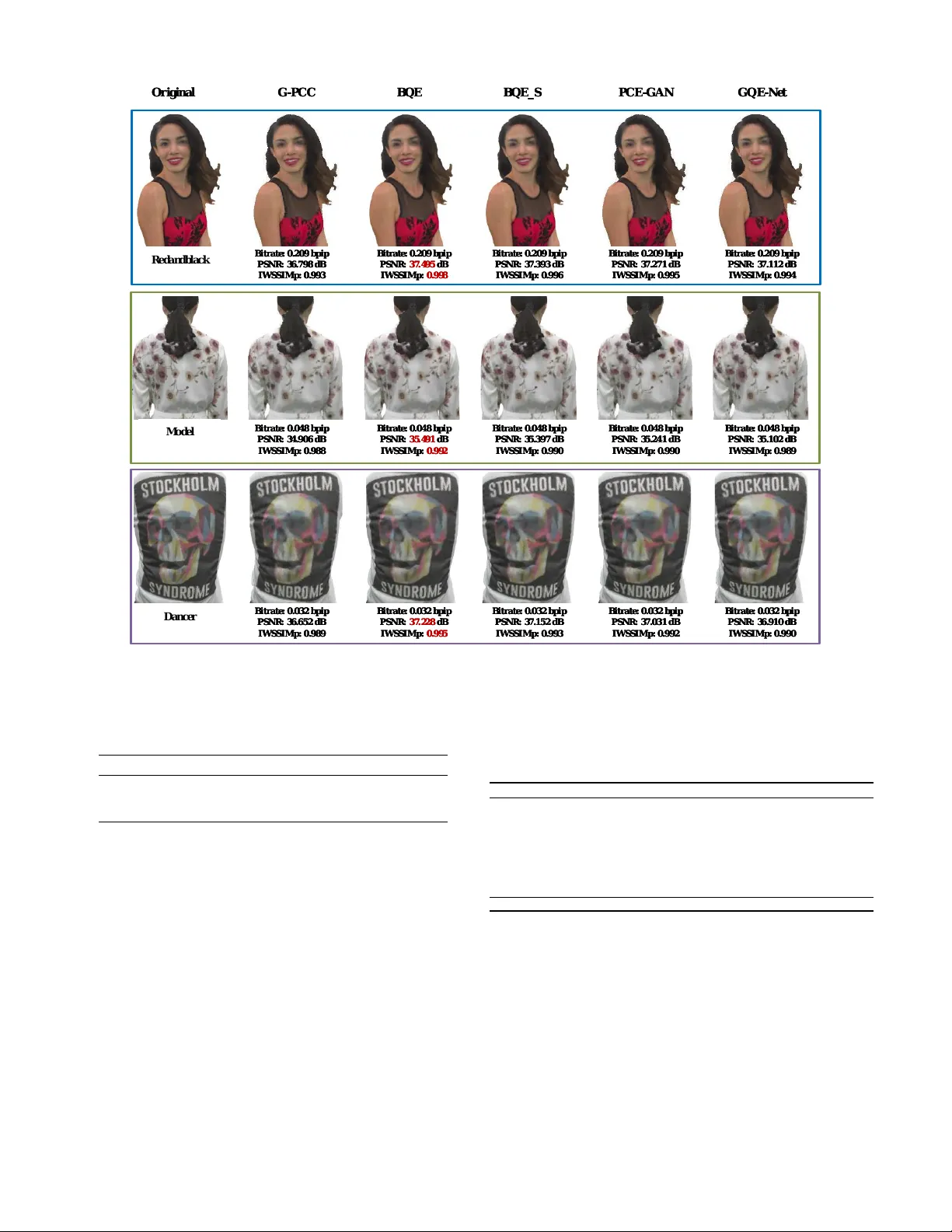

Point cloud compression often introduces noticeable reconstruction artifacts, which makes quality enhancement necessary. Existing approaches typically assume prior knowledge of the distortion level and train multiple models with identical architectur…

Authors: Tian Guo, Hui Yuan, Chang Sun