Analyzing animal movement using deep learning

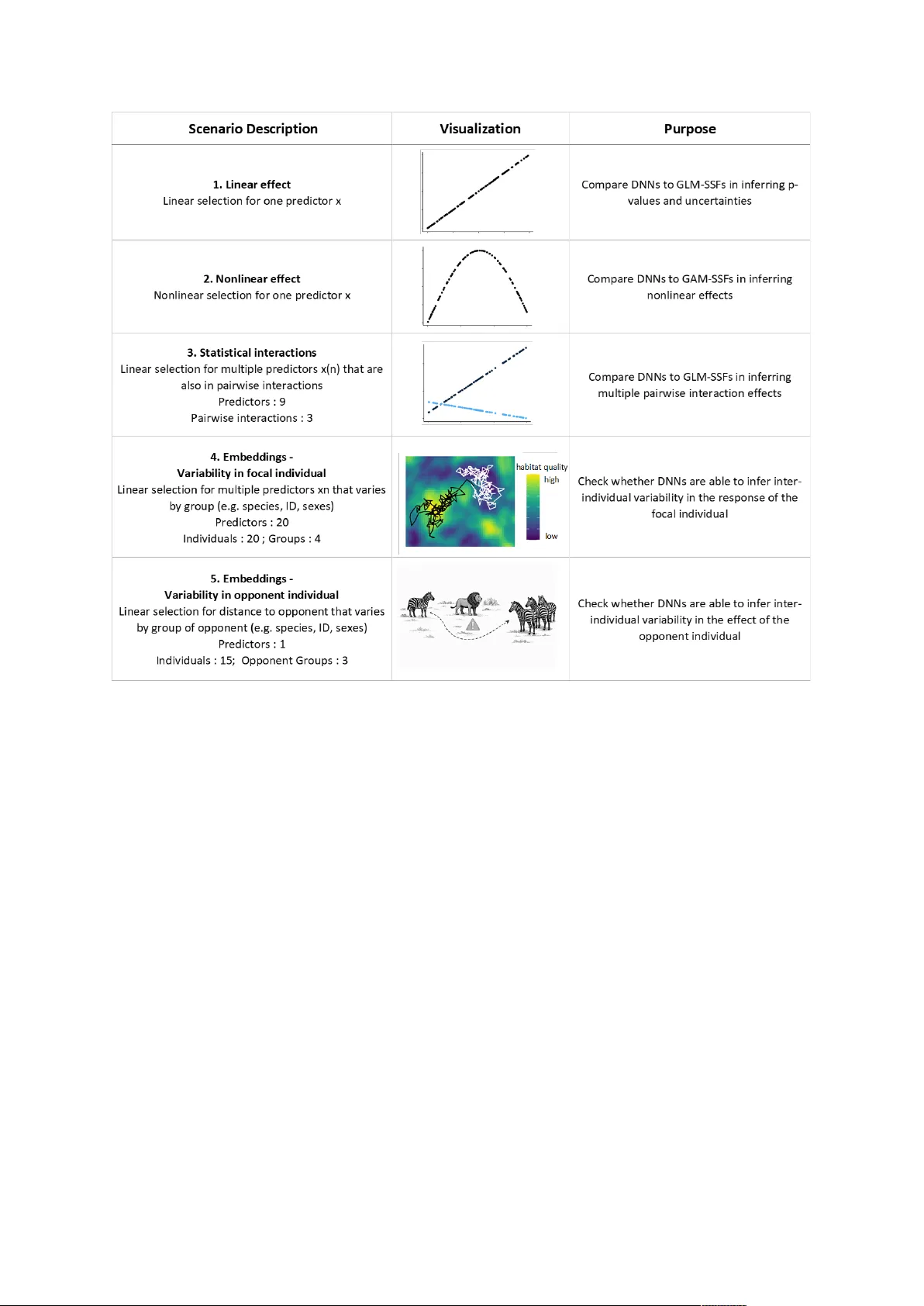

Understanding how animals move through heterogeneous landscapes is central to ecology and conservation. In this context, step selection functions (SSFs) have emerged as the main statistical framework to analyze how biotic and abiotic predictors influ…

Authors: Thibault Fronville, Maximilian Pichler, Johannes Signer