High-Fidelity Face Content Recovery via Tamper-Resilient Versatile Watermarking

The proliferation of AIGC-driven face manipulation and deepfakes poses severe threats to media provenance, integrity, and copyright protection. Prior versatile watermarking systems typically rely on embedding explicit localization payloads, which int…

Authors: Peipeng Yu, Jinfeng Xie, Chengfu Ou

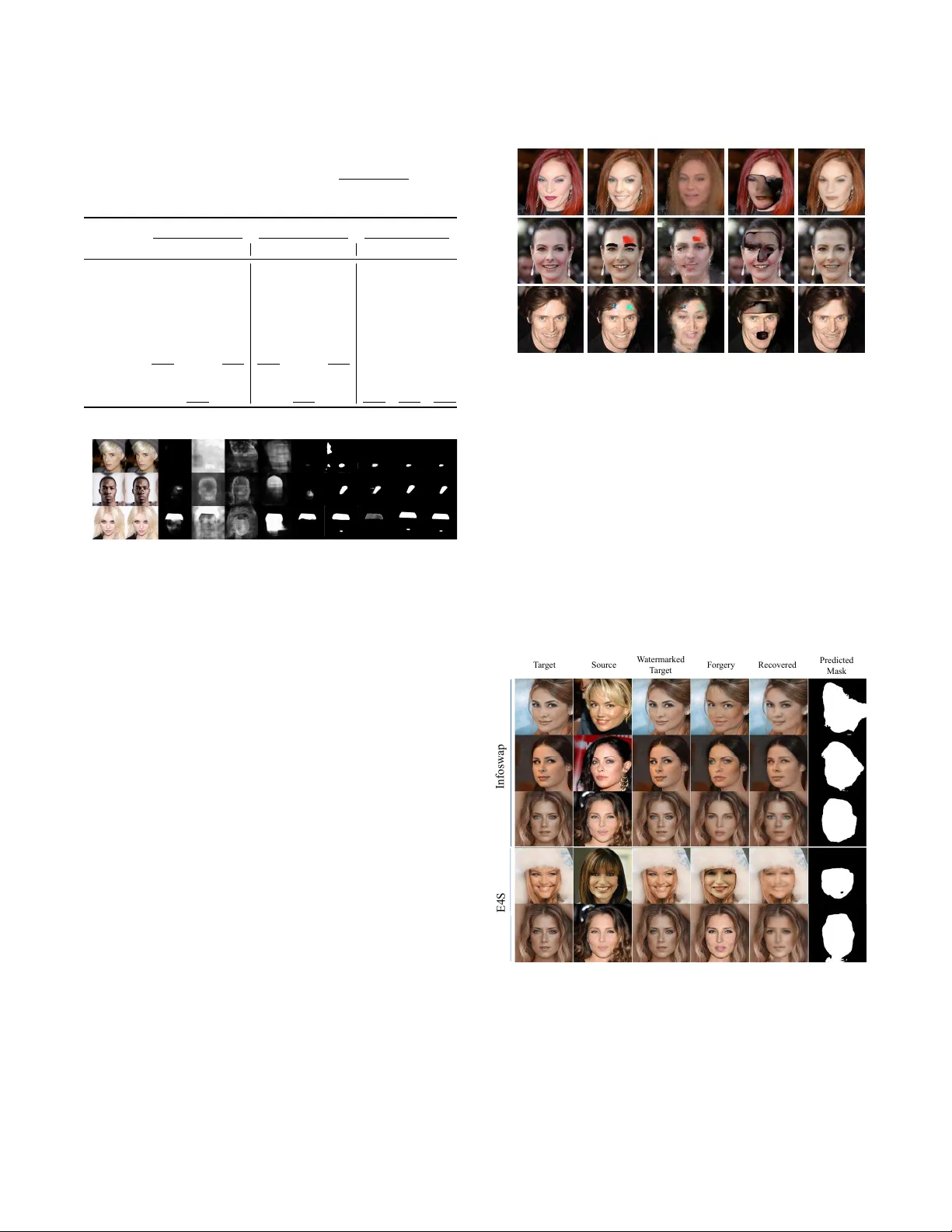

High-Fidelity Face Content Recovery via T amper-Resilient V ersatile W atermarking Peipeng Y u ∗ ypp865@163.com Jinan University Guangzhou, Guangdong, China Jinfeng Xie Jinan University Guangzhou, Guangdong, China Chengfu Ou Jinan University Guangzhou, Guangdong, China Xiaoyu Zhou Jinan University Guangzhou, Guangdong, China Jianwei Fei University of Macau Macau, China Y unshu Dai Sun Y at-sen University Guangzhou, Guangdong, China Zhihua Xia Jinan University Guangzhou, Guangdong, China Chip Hong Chang Nanyang T echnological University Singapore, Singapore Abstract The proliferation of AIGC-driven face manipulation and deepfakes poses severe threats to media prov enance, integrity , and copyright protection. Prior versatile watermarking systems typically rely on emb edding explicit localization payloads, which introduces a delity–functionality trade-o: larger localization signals degrade visual quality and often reduce decoding robustness under strong generative edits. Moreover , existing methods rarely support con- tent recovery , limiting their forensic value when original evidence must be reconstructed. T o address these challenges, we present V eriFi, a versatile watermarking framework that unies copyright protection, pixel-level manipulation localization, and high-delity face content recovery . V eriFi makes three key contributions: (1) it embeds a compact semantic latent watermark that ser ves as an content-preserving prior , enabling faithful restoration even af- ter sever e manipulations; (2) it achieves ne-grained localization without emb edding lo calization-specic artifacts by corr elating image features with decoded prov enance signals; and (3) it intro- duces an AIGC attack simulator that combines latent-space mixing with seamless blending to improve robustness to realistic deepfake pipelines. Extensive experiments on Celeb A-HQ and FFHQ show that V eriFi consistently outperforms strong baselines in watermark robustness, localization accuracy , and recovery quality , providing a practical and veriable defense for deepfake forensics. CCS Concepts • Security and privacy → Trust frameworks ; A uthentication . Permission to make digital or hard copies of all or part of this work for personal or classroom use is granted without fee provided that copies are not made or distributed for prot or commercial advantage and that copies bear this notice and the full citation on the rst page. Copyrights for components of this work owned by others than the author(s) must be honor ed. Abstracting with credit is permitted. T o copy otherwise, or republish, to post on servers or to redistribute to lists, r equires prior specic permission and /or a fe e. Request permissions from permissions@acm.org. Conference acronym ’XX, W oodstock, N Y © 2018 Copyright held by the owner/author(s). Publication rights licensed to A CM. ACM ISBN 978-1-4503-XXXX -X/2018/06 https://doi.org/XXXXXXX.XXXXXXX Ke y words AIGC, versatile watermarking, content recov ery , manipulation lo- calization, media forensics A CM Reference Format: Peipeng Yu, Jinfeng Xie, Chengfu Ou, Xiaoyu Zhou, Jianwei Fei, Yunshu Dai, Zhihua Xia, and Chip Hong Chang. 2018. High-Fidelity Face Content Recovery via T amper-Resilient V ersatile W atermarking. In Proceedings of Make sure to enter the correct conference title from your rights conrmation email (Conference acronym ’XX) . ACM, New Y ork, N Y , USA, 10 pages. https: //doi.org/XXXXXXX.XXXXXXX 1 Introduction Recent advances in articial intelligence-generated content (AIGC) have enable d highly realistic face synthesis and accessible deep- fakes. While these techniques support creative applications, they also introduce signicant risks, including misinformation, copy- right infringement, and loss of content authenticity [ 28 ]. As genera- tive models evolv e, passiv e detection methods are increasingly inef- fective against sophisticated and unseen deepfakes [ 11 , 22 , 23 ]. This highlights the need for proactive, veriable defenses that remain robust under AIGC manipulations and provide reliable forensic evidence. Digital watermarking provides a proactive mechanism for es- tablishing media provenance and safeguarding integrity [ 32 , 39 ]. Robust watermarks are designed to r emain decodable under b enign transformations and malicious edits (e.g., deepfake generation and splicing) [ 25 , 27 , 29 ], whereas fragile watermarks intentionally re- act to modications, enabling sensitive tamper detection [ 1 , 16 , 26 ]. Building upon these paradigms, recent versatile frame works (e.g., EditGuard [ 37 ], OmniGuard [ 38 ], StableGuard [ 31 ], and W AM [ 20 ]) seek to unify copyright protection with manipulation localization. Howev er , EditGuard and OmniGuar d rely on high-capacity local- ization payloads, which increases embe dding distortion and can weaken copyright robustness. In contrast, StableGuard and W AM largely dep end on passive detectors, which often exhibit limited localization accuracy under unseen AIGC edits. Critically , existing versatile watermarking methods do not support recovery of the original content, substantially limiting their forensic utility when faithful reconstruction is required for evidence preservation. How Conference acronym ’XX, June 03–05, 2018, W oodstock, N Y Trovato et al. Use r s Atta c k e r s J u d ge AI GC 1011 10… Co p y r i gh t V e r if ic a t io n 1011 10… F o rg e ry L oca l i z a t i on F a k e F a c e R e c o v e r y R ec o v er F ak e S o ci a l N e t wo r k I nj e c t i o n D i s to rti o n F o r e ns i c s Ve r i Fi Figure 1: O verview of our V eriFi framework. Our versatile watermarking architecture simultane ously achieves robust copyright protection, precise forger y localization, and high- delity fake face recovery , providing comprehensive forensic capabilities essential for real-world deepfake defense scenar- ios. to escap e the delity–functionality trade-o between copyright protection and tamper localization, while enabling high-delity content recovery , remains an open challenge. T o address these limitations, we propose V eriFi , a unied proac- tive framework that simultaneously supports (i) robust copyright protection, (ii) pixel-level tamper localization, and (iii) high-delity facial content recovery via tamper-resilient v ersatile watermarking. Unlike prior versatile methods that rely on separate localization payloads and thus compr omise b etween visual quality and function- ality , V eriFi introduces a novel watermark-conditioned localization network that detects manipulations by analyzing spatial inconsis- tencies in the decoded copyright signal, eliminating the nee d for additional localization watermarks. Moreover , V eriFi incorporates a compact semantic recovery watermark that encodes facial content, providing a strong prior to accurately reconstruct the original face even after sever e manipulation. As illustrated in Figure 1 , given an original image 𝐼 𝑜𝑟 𝑖 , our unied watermark enco der emb eds both a copyright code 𝑤 𝑐𝑜 𝑝 and a compact recovery representation to produce a protected image 𝐼 𝑝𝑟 𝑜 for public distribution. If the image undergoes AIGC-based manipulations, resulting in a forged im- age 𝐼 𝑒𝑑 𝑖 𝑡 , V eriFi enables three core functionalities: (1) extraction of the embedded copyright code ˆ 𝑤 𝑐𝑜 𝑝 for provenance verication; (2) watermark-guided forgery localization, leveraging ˆ 𝑤 𝑐𝑜 𝑝 to achieve accurate pixel-level manipulation mapping; and (3) recovery of the original facial content by decoding the recovery watermark, yielding a high-delity reconstruction ˆ 𝐼 𝑜𝑟 𝑖 . Our main contributions are summarized as follows: T able 1: Functional comparison of representative proactive watermarking and forensic methods. C: copyright protection; L: tamper localization; R: content recovery . Method V enue Category C L R SepMark MM’23 Face-aware robust watermarking ✓ – – LampMark MM’24 Face-aware robust watermarking ✓ – – Fake T agger MM’21 Face-aware robust watermarking ✓ – – AdaIFL CVPR’24 Passive localization – ✓ – HiFi-Net ICCV’23 Passive localization – ✓ – Imuge+ CVPR’23 Proactive localization and recovery – ✓ ✓ DFREC Ar xiv’25 Passive localization and recovery – ✓ ✓ EditGuard CVPR’24 V ersatile watermarking ✓ ✓ – OmniGuard CVPR’25 V ersatile watermarking ✓ ✓ – StableGuard NIPS’25 V ersatile watermarking ✓ ✓ – W AM AAAI’25 V ersatile watermarking ✓ ✓ – V eriFi (ours) – V ersatile watermarking ✓ ✓ ✓ • W e present V eriFi , a tamper-resilient versatile watermarking framework that unies robust copyright protection, pixel- level manipulation localization, and high-delity face con- tent recovery . W e design a watermark-guided recovery net- work that leverages a compact semantic watermark as a prior , enabling faithful restoration even after sever e AIGC manipulations. • W e design a watermark-guide d localization network that leverages the decoded copyright signal as a spatial prior , en- abling accurate tamper mapping without embedding localization- specic payloads and thus avoiding the delity–functionality trade-o. • W e introduce an AIGC attack simulator that jointly models latent mixing, editing, and Poisson blending to emulate r e- alistic deepfake perturbations during training, substantially enhancing watermark robustness. • Extensive experiments on CelebA -HQ and FFHQ show that V eriFi surpasses strong baselines in watermark r obustness, localization accuracy , and recovery quality , establishing a new benchmark for proactive deepfake forensics. 2 Related W orks 2.1 Forgery Localization and Recovery Methods Image forger y localization seeks to identify tamp ered regions at the pixel level across diverse manipulation typ es [ 9 , 11 , 13 , 36 , 40 ]. Representative methods such as MVSS-Net [ 30 ], CA T -Net [ 8 ], and HiFi-Net [ 4 ] leverage multi-branch architectures, frequency artifacts, and hierarchical attention to improve localization accuracy . Howev er , these approaches ar e easily overtted to specic datasets and do not support content recovery . DFREC [ 35 ] extend passive localization to recovery , but remain vulnerable to distribution shifts and complex AIGC manipulations, limiting real-w orld robustness. In contrast, we propose a unied proactive framework that embeds robust watermarks prior to content distribution, which enables reliable tamper localization. Additionally , by simulating complex AIGC attacks, we further enhance watermark e xtraction r obustness in real-world applications. High-Fidelity Face Content Recovery via T amper-Resilient V ersatile W atermarking Conference acronym ’XX, June 03–05, 2018, W oodstock, N Y F or ge r y L oc at or M od u le I m age B le ndin g L ate nt M ix ing \ T a m p er - R es i l i en t Ve r sa t i l e W at e r m ar k i n g Fr a m e wo r k W a t e r m a r k - G u i d e d D e ep f a k e R ec o v er y AI G C At t a c k S im u lat or D e e p f a k e R e c o v e r y M o d u l e M odul a t i on P a tc h E mb e d E nc o de r M u lti - he a d A tte n ti o n N o rm N o rm M u lti - he a d A t t e nt i on × T ok e n D e c ode r Do w n Sa m pl e = ( 1 ) ( , ) + 101 1 10… 101 1 10… \ W a t e r m a r k - G u i d e d F o rge ry L o ca to r P a t c h E m be d S e g H e ad Wat e r m ar k E n c od e r 101 1 10… S S S S T ra n s f o rm e r L a y er U ps a m pl e M erg e S im ila r it y C a lc u la t io n C on c at S Figure 2: O ver view of our V eriFi. (1) Unied Recovery-Copyright W atermark Emb edder 𝐸𝑛𝑐 inserts an ownership code 𝑤 𝑐𝑜 𝑝 and a compact facial signature 𝑧 𝑓 𝑎𝑐𝑒 . (2) AIGC Attack Simulator performs Latent Mixing and Poisson blending to mimic realistic deepfake attacks during training. (3) W atermark Extractor 𝐷 𝑒 𝑐 recovers ˆ 𝑤 𝑐𝑜 𝑝 and ˆ 𝑧 𝑓 𝑎𝑐𝑒 , produces an content proxy ˆ 𝐼 𝑏 , and uses ˆ 𝑤 𝑐𝑜 𝑝 to guide the Forger y Locator to predict the manipulation map ˆ 𝑀 . (4) W atermark-Guided Deepfake Recovery Network uses a dual-stream Transformer with spatially gated cross-attention to fuse the e dited image, ˆ 𝐼 𝑏 , and ˆ 𝑀 for selective restoration. 2.2 Proactive W atermarking Methods Proactive watermarking methods embed imperceptible signals into images prior to distribution, enabling reliable provenance tracing and post-hoc forgery detection [ 25 , 27 , 29 ]. As summarized in T a- ble 1 , existing proactive watermarking approaches e xhibit distinct technical focuses and design paradigms. Early de ep watermarking methods, such as HiDDeN [ 42 ] and StegaStamp [ 24 ], primarily tar- get copyright protection, without support for tamper localization or content recovery . Face-aware watermarking methods, includ- ing SepMark [ 29 ] and LampMark [ 27 ], are specically designed to enhance robustness against facial manipulations, but remain limited to copyright verication. Imuge+ [ 33 ] extends proactive watermarking to tamper detection and content recovery by intro- ducing trivial p erturbations, yet do es not address cop yright authen- tication. Recent versatile frameworks, such as EditGuar d [ 37 ] and OmniGuard [ 38 ], unify copyright protection and tamper localiza- tion, but require embe dding high-capacity localization payloads, which can degrade visual delity and compromise robustness. Sta- bleGuard [ 31 ] and W AM [ 20 ] further utilize passive locators to avoid visual artifacts, but often exhibit limited generalization. Over- all, existing proactiv e watermarking solutions are constrained by inherent trade-os among visual delity , adversarial robustness, and forensic functionality . In this work, we introduce a unied framework that integrates watermark-guided localization and re- covery to jointly achieve cop yright protection, pixel-level tamper localization, and high-delity content recovery . This design ad- dresses key limitations of prior methods and sets a new benchmark for robust, versatile image forensics. 3 Proposed Method 3.1 Motivation Existing deepfake watermarking methods suer from two core limitations: (1) a trade-o b etween copyright pr otection and precise tamper localization, and (2) a lack of unied frameworks supporting both authentication and content recovery . Our approach addresses these gaps based on two key insights: Insight 1: T ampering-induced watermark degradation is a strong localization prior . Any content manipulation, such as face swapping, ine vitably corrupts the embedded watermark signal Conference acronym ’XX, June 03–05, 2018, W oodstock, N Y Trovato et al. within the altered regions. This creates a spatial inconsistency: tampered areas exhibit a damage d or absent watermark, while untampered areas retain the original, intact signal. By identifying where the watermark fails to de code correctly , we can precisely and robustly localize the manipulation. Insight 2: Semantic watermarking enables face recovery via compact latent embe dding. A naive solution for content recovery is to emb ed the original image (or its pixel-level representation) directly as a watermark. Ho wever , this approach requires a high- capacity watermark, which inevitably introduces signicant visual distortion and severely compr omises robustness to manipulations. Instead, we draw inspiration fr om recent advances in V ariational A utoencoder (V AE)-base d image compression, which demonstrate that facial content can be faithfully represented by low-dimensional latent codes. By embedding a compact semantic latent as the recov- ery watermark, we enable high-delity face content reconstruction while preserving imperceptibility and watermark resilience. 3.2 Overall Architecture Building on these insights, we introduce V eriFi, a unied and tamp er- resilient watermarking framework for proactiv e deepfake defense. V eriFi integrates copyright protection, pixel-level tamper local- ization, and high-delity face content recovery within a single framework. As illustrated in Figure 2 , V eriFi comprises four tightly integrated modules that work synergistically to achieve robust and versatile forensics. At its core, the Unied Recovery-Copyright W atermark (URW) Embe dder and Extractor (detailed in the Ap- pendix.) jointly encode and de code two signals: a discrete 𝑛 -bit ownership code 𝑤 𝑐𝑜 𝑝 for reliable copyright authentication, and a compact continuous facial latent 𝑧 𝑓 𝑎𝑐𝑒 , derived from a pretrained variational auto encoder (V AE), which serves as a semantic prior for high-delity content recov ery . The URW Embedder 𝐸𝑛𝑐 is re- sponsible for embedding these signals into the image in a visually imperceptible and perturbation-resilient manner , while the URW Extractor 𝐷 𝑒 𝑐 is optimized to r ecover both watermarks from images that may have undergone various manipulations. T o further enhance robustness against sophisticated attacks, V er- iFi incorporates an AIGC Attack Simulator (Sec. 3.5 ) during train- ing, which emulates realistic deepfake p erturbations by combining latent mixing, editing and image blending. This simulation strat- egy exposes the watermarking network to a diverse range of chal- lenging manipulations, thereby impr oving its generalization and resilience. For tamp er lo calization, V eriFi employs a W atermark- Guided Forgery Lo calization Network (Sec. 3.3 ) that leverages the decoded watermark as a spatial prior . By fusing semantic features from the potentially manipulated image with watermark-derived cues, this network generates a dense manipulation probability map ˆ M , enabling precise and robust lo calization of altered regions. In parallel, the W atermark-Guide d Deepfake Recovery Network (Se c. 3.4 ) utilizes the recover ed facial signature ˆ 𝑧 𝑓 𝑎𝑐𝑒 and the predicted manipulation map ˆ M to guide a dual-stream Transformer-based reconstructor 𝑅 . Through cross-attention and adaptive modulation, the recovery network selectively restores tamper ed regions while preserving authentic content elsewhere , resulting in high-delity reconstruction of the original image. 3.3 W atermark-Guided Forgery Localization T ampering-induced degradation of emb edded watermarks pro- vides a strong spatial prior for manipulation detection. W e propose a watermark-guided forgery lo calization network that explicitly aligns visual features with watermark-derived signals, enabling ro- bust and precise identication of manipulated r egions. Our network adopts a dual-branch Swin-Unet architecture. The image branch extracts multi-scale semantic features from the potentially manipu- lated input, while the watermark branch enco des spatially aligned features decoded from the recovered watermark. At each of 𝑆 hi- erarchical scales, both branches produce feature maps, which ar e projected to a common channel dimension 𝐶 𝑠 using 1 × 1 convolu- tions to facilitate direct comparison. T o quantify the consistency between the two streams, we com- pute a p er-pixel cosine similarity at each spatial lo cation ( 𝑥 , 𝑦 ) , yielding a scale-specic similarity map 𝑆 𝑠 : 𝑆 𝑠 ( 𝑥 , 𝑦 ) = ⟨F ( 𝑠 ) img ( 𝑥 , 𝑦 ) , F ( 𝑠 ) wm ( 𝑥 , 𝑦 ) ⟩ ∥ F ( 𝑠 ) img ( 𝑥 , 𝑦 ) ∥ 2 · ∥ F ( 𝑠 ) wm ( 𝑥 , 𝑦 ) ∥ 2 , (1) where F ( 𝑠 ) img ∈ R 𝐶 𝑠 × 𝐻 𝑠 × 𝑊 𝑠 and F ( 𝑠 ) wm ∈ R 𝐶 𝑠 × 𝐻 𝑠 × 𝑊 𝑠 denote the image and watermark feature maps at scale 𝑠 , and ⟨· , ·⟩ is the channel- wise inner product. After that, the similarity maps { 𝑆 1 , . . . , 𝑆 𝑆 } are upsampled to the input resolution and aggregated by a lightweight fusion head: F sim = 𝑔 Concat Up ( 𝑆 1 ) , . . . , Up ( 𝑆 𝑆 ) , (2) where Up ( ·) denotes spatial upsampling and 𝑔 ( ·) is a stack of convo- lutional layers with normalization, that produces a dense evidence map. Finally , we fuse the model-driven decoder evidence and the watermark-aligned evidence using a learnable weight 𝛼 to obtain the nal manipulation probability map ˆ M . 3.4 W atermark-Guided Deepfake Recovery The URW Emb edder encodes a compact facial latent ( 𝑧 𝑓 𝑎𝑐𝑒 ) that remains decodable after strong manipulations, serving as a seman- tic prior for recovery . A coarse face proxy is reconstructe d from ˆ 𝑧 𝑓 𝑎𝑐𝑒 via a pretrained V AE decoder . Howev er , direct reconstruction from this proxy is typically over-smoothed and lacks detail. T o overcome this, w e intr oduce a watermark-guided recov ery network that adaptively fuses spatial features from the manipulated image with semantic priors from the watermark, enabling high-delity restoration of the original face. As illustrate d in Figure 2 , the recovery network receives four inputs: (1) the manipulated image 𝐼 𝑚 𝑒𝑑 𝑖 𝑡 (masked according to the predicted forger y map), (2) a coarse content proxy ˆ 𝐼 𝑏 = 𝐷 V AE ( ˆ 𝑧 𝑓 𝑎𝑐𝑒 ) reconstructed from the deco ded semantic watermark, (3) the de- coded facial watermark ˆ 𝑧 𝑓 𝑎𝑐𝑒 , and (4) the predicted manipulation mask ˆ 𝑀 . The proxy ˆ 𝐼 𝑏 provides structural and content-consistent cues, complementing the corrupted observation 𝐼 𝑚 𝑒𝑑 𝑖 𝑡 . W e concate- nate ˆ 𝐼 𝑏 with 𝐼 𝑚 𝑒𝑑 𝑖 𝑡 and apply a patch embedding to obtain image to- kens, which are then processed by a stack of 𝑁 Transformer blocks for feature fusion and recovery . In parallel, the decoded watermark ˆ 𝑧 𝑓 𝑎𝑐𝑒 is encoded into a set of semantic tokens via a dedicated wa- termark encoder . T o ensure that semantic priors are injected only into manipulated regions, the predicted mask ˆ 𝑀 is downsampled High-Fidelity Face Content Recovery via T amper-Resilient V ersatile W atermarking Conference acronym ’XX, June 03–05, 2018, W oodstock, N Y V AE D eco d er V AE E n co d er La te n t m i xi ng P oi s s on Bl e ndi ng Ra ndom D e gra da t i on V A E D eco d er V A E E n co d er L at e nt m ix in g Figure 3: O verview of the proposed AIGC Attack Simulator . The simulator models AIGC edits via latent feature grafting and seamless image blending, enabling robust watermark training. to the token resolution and used as a spatial gating mechanism during cross-attention. Formally , the gated cross-attention at each Transformer block is dened as: 𝑅 = G ( ˆ 𝑀 ) ⊙ ℎ A 𝜁 ( 𝑇 𝑥 ) , 𝜁 ( 𝑇 𝑤𝑚 ) , 𝜁 ( 𝑇 𝑤𝑚 ) , (3) where G ( ·) is a spatial gating operator , A ( ·) denotes cross-attention, and 𝜁 ( · ) is layer normalization. This mechanism restricts watermark- guided feature fusion to predicted tampered regions, preventing semantic leakage into authentic areas. After 𝑁 Transformer layers, a lightweight decoder reconstructs the nal recovered image ˆ 𝐼 𝑜𝑟 𝑖 . 3.5 AIGC Attack Simulator State-of-the-art deepfake generation pipelines typically consist of two main stages: (1) content synthesis in the latent space, and (2) image-space blending. Most existing watermarking methods primarily address post-blending perturbations, but often neglect the feature-level distortions introduced during generative synthe- sis [ 38 ]. T o bridge this gap, we propose an AIGC Attack Simulator that jointly models both latent-space and image-space manipula- tions, thereby exposing the watermarking network to a broader spectrum of realistic and challenging attack scenarios during train- ing. Importantly , the simulator is used only during training to enhance robustness and is not required at infer ence time. Our ap- proach does not assume any prior knowledge of the spe cic AIGC tools used at test time. Latent Mixing. T o emulate the feature blending process inherent in modern deepfake generators, we design a Latent Mixing Module, simulating the latent-space manipulations commonly performed by AIGC models. As depicte d in Figure 3 , given a watermarked image I 𝑝𝑟 𝑜 , we rst select a source face I 𝑠𝑟 𝑐 with similar facial landmarks [ 21 , 34 ], and then extract their latent representations z 𝑝𝑟 𝑜 , z 𝑠𝑟 𝑐 ∈ R 𝐶 × 𝐻 × 𝑊 using a pretrained V AE Encoder . W e then ran- domly sample a binary channel mask M 𝑐 ∈ { 0 , 1 } 𝐶 , and construct the mixed latent code as z 𝑔𝑒𝑛 = M 𝑐 ⊙ z 𝑠𝑟 𝑐 + ( 1 − M 𝑐 ) ⊙ z 𝑝𝑟 𝑜 , (4) where ⊙ denotes element-wise multiplication broadcasted across spatial dimensions. The resulting z 𝑔𝑒𝑛 is decoded by the V AE De- coder to obtain the generated image I 𝑔𝑒𝑛 . Such images naturally incorporate model-spe cic features and characteristics from other images, thereby more accurately simulating the AIGC editing pro- cess. Image Blending. T o further simulate realistic face replacement, we employ Poisson blending [ 17 ] to seamlessly integrate the source face I 𝑠𝑟 𝑐 into the generated image I 𝑔𝑒𝑛 . Specically , following the protocol of LVLM-DFD [ 34 ], we generate random facial masks M based on detected facial landmarks to dene the blending region. The nal manipulated image I 𝑒𝑑 𝑖 𝑡 is then produced by solving the Poisson equation within the masked region, ensuring smooth and natural transitions between the synthesized face and the original background. After blending, w e apply random degradations (e .g., JPEG compression, Gaussian noise) to further enhance robustness. 3.6 Training Objectives W atermark Emb e dding. T o guarantee visual imperceptibility , we constrain both pixel-level distortion and p erceptual discrepancy between the original image I 𝑜𝑟 𝑖 and the watermarked image I 𝑤𝑚 : L embed = ∥ I 𝑤𝑚 − I 𝑜𝑟 𝑖 ∥ 2 2 + ℓ ∥ 𝜙 ℓ ( I 𝑤𝑚 ) − 𝜙 ℓ ( I 𝑜𝑟 𝑖 ) ∥ 1 , (5) where 𝜙 ℓ denotes features extracted from the ℓ -th layer of a pre- trained VGG-19 netw ork. Message Decoding. T o ensure robust recov ery of both the own- ership code 𝑤 𝑐𝑜 𝑝 and the facial signature 𝑧 𝑓 𝑎𝑐𝑒 from manipulated images, we jointly optimize: L decode = BCE ( ˆ 𝑤 𝑐𝑜 𝑝 , 𝑤 𝑐𝑜 𝑝 ) + ∥ ˆ 𝑧 𝑓 𝑎𝑐𝑒 − 𝑧 𝑓 𝑎𝑐𝑒 ∥ 2 2 , (6) where ˆ 𝑤 𝑐𝑜 𝑝 and ˆ 𝑧 𝑓 𝑎𝑐𝑒 are the decoded ownership code and facial signature from the manipulated image, respectively . Forgery Localization. Manipulation localization is supervise d us- ing the Dice Loss [ 15 ], which directly optimizes the overlap between the predicted manipulation map ˆ M and the ground-truth mask M ∗ . The localization loss is dened as L loc . Guided Recovery . Restoration delity is enforced by constraining the reconstructed image ˆ I 𝑜𝑟 𝑖 to match the original I 𝑜𝑟 𝑖 in both pixel and perceptual domains: L rec = ∥ ˆ I 𝑜𝑟 𝑖 − I 𝑜𝑟 𝑖 ∥ 1 + ℓ ∥ 𝜙 ℓ ( ˆ I 𝑜𝑟 𝑖 ) − 𝜙 ℓ ( I 𝑜𝑟 𝑖 ) ∥ 1 . (7) Overall Objective. The complete training objective is formulated as: L total = L embed + 𝜆 1 L decode + 𝜆 2 L loc + 𝜆 3 L rec , (8) where 𝜆 𝑖 are balancing coecients. 4 Experiments 4.1 Experimental Settings Datasets. W e evaluate our method on two widely-adopted high- quality datasets: CelebA -HQ [ 6 ] and FFHQ [ 7 ]. CelebA -HQ com- prises 30,000 high-resolution celebrity images. W e follow the ocial data split protocol for training, validation, and testing. FFHQ con- tains 70,000 high-delity face images with substantial diversity in ethnicity , age, gender , and background comple xity . W e partition the dataset into 65,000/4,000/1,000 images for training/validation/testing respectively . All images are resized to 256 × 256 pixels for training and evaluation. Conference acronym ’XX, June 03–05, 2018, W oodstock, N Y Trovato et al. T able 2: T amper-localization results on CelebA -HQ (1,000 images). Metrics: F1 / AUC / mIoU (higher is better). Best and second-b est per column are b old and underlined , respec- tively . Method SD Inpainting HD-painter Splicing F1 AUC mIoU F1 AUC mIoU F1 AUC mIoU CA T -NET 0.066 0.714 0.427 0.107 0.727 0.447 0.490 0.876 0.659 PSCC-NET 0.310 0.702 0.275 0.335 0.691 0.245 0.362 0.727 0.290 HiFi-Net 0.115 0.742 0.430 0.396 0.815 0.567 0.169 0.757 0.458 NCL-IML 0.085 0.518 0.423 0.087 0.516 0.421 0.040 0.503 0.406 MVSS-NET 0.262 0.843 0.484 0.378 0.866 0.540 0.639 0.935 0.707 Imuge+ 0.414 0.854 0.580 0.699 0.943 0.764 0.719 0.926 0.792 EditGuard 0.862 0.894 0.864 0.849 0.869 0.818 0.867 0.895 0.869 OmniGuard 0.869 0.983 0.880 0.914 0.995 0.908 0.804 0.980 0.817 StableGuard 0.686 0.482 0.548 0.707 0.384 0.570 0.997 1.000 0.994 W AM 0.495 0.787 0.492 0.174 0.503 0.315 0.782 0.921 0.707 V eriFi (Ours) 0.946 0.975 0.941 0.975 0.975 0.939 0.989 0.995 0.985 HD-Painter SD- Inpaint Blending Original Tamper C AT -Net PSCC- NET HiFi-Net MVSS- NET Imuge + EditGuard OmniGuard GT Ours Figure 4: Visual comparison of tamper localization results across dierent methods. Implementation Details. W e use Adam optimizer with a learn- ing rate of 2 × 10 − 4 and batch size 8. The loss weights are set as 𝜆 1 = 1 . 0 , 𝜆 2 = 1 . 0 , and 𝜆 3 = 2 . 0 . All experiments are conducted on four NVIDIA RTX 3090 GP Us. W e use SD Inpainting [ 19 ], HD- painter [ 14 ], E4S [ 12 ], InfoSwap [ 3 ], and MaskFaceGAN [ 18 ] as the AIGC attack models to evaluate performance under diverse manip- ulation scenarios. Additional implementation details are pro vided in Appendix. Baselines. W e compare our method against state-of-the-art deep watermarking, tamper localization and image recovery methods, including: SepMark [ 29 ], TrustMark [ 2 ], EditGuard [ 37 ], Robust- Wide [ 5 ], OmniGuard [ 38 ], StableGuard [ 31 ], W AM [ 20 ], CA T - NET [ 8 ], PSCC-Net [ 10 ], HiFi-Net [ 4 ], NCL-IML [ 41 ], Imuge+ [ 33 ], and DFREC [ 35 ]. All baselines are implemented using their ocial codebases and pretrained weights. Evaluation Metrics. Imperceptibility is evaluated by Peak Signal- to-Noise Ratio (PSNR ↑ ) and Structural Similarity Index (SSIM ↑ ). W a- termark robustness is quantied by Bit Accuracy ↑ (%), the fraction of correctly decoded bits under each attack. Tamper localization is assessed using F1-score ↑ , Area Under the Curve (AUC ↑ ), and mean Intersection-over-Union (mIoU ↑ ). Recovery quality is measured by PSNR rec ↑ / SSIM rec ↑ , and Fréchet Inception Distance (FID ↓ ). 4.2 Comparison with Localization Methods W e evaluate tamper-localization performance on 1,000 CelebA -HQ images under three representativ e AIGC-based forgery typ es: SD Inpainting, HD-painter and face Splicing. Comparisons include re- cent passive detectors (CA T -NET , PSCC-Net, HiFi-Net, NCL-IML) Original T ampered Ours Splicing SD Inpaint HD Inpaint Imuge DFREC Figure 5: Qualitative comparison of face recovery under SD Inpainting/HD-painter and Splicing. and active watermarking and recovery-aware baselines (EditGuard, OmniGuard, Imuge+, StableGuard, W AM). T able 2 reports F1, AUC and mIoU for each tampering scenario. Passive methods exhibit low sensitivity to subtle generative alterations, while several ac- tive baselines struggle under AIGC-induced perturbations. Notably , V eriFi attains F1 scores of 0.946, 0.975, and 0.989 for SD Inpainting, HD-painter , and Splicing, respe ctively , signicantly outp erforming prior works. Qualitative examples in Figure 4 corroborate these quantitative ndings and illustrate clearer boundaries for V eriFi compared to prior work. Additional experiments on FFHQ are pro- vided in the Appendix. Both quantitative and qualitative results consistently demonstrate V eriFi’s superior tamper-localization ca- pabilities across diverse datasets and manipulation types. I n f o s wap E4 S T ar g et S our c e W at er m ar k ed T ar g et F o rg e r y R eco v er ed P r ed i ct ed Ma sk Figure 6: Additional qualitative comparison of face recovery under InfoSwap/E4S on CelebA -HQ. 4.3 Comparison with Face Recovery Methods W e comprehensiv ely evaluate face recovery performance by b ench- marking V eriFi against state-of-the-art methods, including Imuge+ High-Fidelity Face Content Recovery via T amper-Resilient V ersatile W atermarking Conference acronym ’XX, June 03–05, 2018, W oodstock, N Y T able 3: Face recovery performance under six representative tamp ering types. Higher PSNR rec / SSIM rec indicate better delity; lower FID indicates higher realism. Attack types are grouped by generation paradigm: Diusion-based (SD Inpainting, HD- painter), GAN-based (E4S, InfoSwap, MaskFaceGAN), and Traditional (Splicing). Best results per column are bold. Method Dataset Diusion-based GAN-based Traditional SD Inpainting HD-painter E4S InfoSwap MaskFaceGAN Splicing PSNR rec SSIM rec FID PSNR rec SSIM rec FID PSNR rec SSIM rec FID PSNR rec SSIM rec FID PSNR rec SSIM rec FID PSNR rec SSIM rec FID Imuge+ CelebA -HQ 21.49 0.741 29.60 16.91 0.753 118.07 18.52 0.625 129.94 17.69 0.674 68.41 19.65 0.622 105.23 27.07 0.906 34.52 DFREC CelebA -HQ 21.14 0.666 71.68 18.36 0.691 107.15 17.74 0.608 38.57 21.11 0.628 38.51 18.63 0.624 91.03 19.64 0.661 59.95 V eriFi (Ours) CelebA-HQ 31.05 0.894 39.25 28.34 0.892 45.68 25.12 0.839 26.71 27.33 0.866 18.82 25.73 0.845 41.18 31.06 0.921 21.51 Imuge+ FFHQ 16.46 0.745 94.05 19.54 0.697 47.13 11.28 0.542 121.56 15.32 0.592 78.10 18.34 0.619 63.57 29.52 0.891 31.48 DFREC FFHQ 20.90 0.656 73.03 10.47 0.342 199.65 16.64 0.577 50.04 20.33 0.598 49.32 17.19 0.575 127.08 17.26 0.606 71.49 V eriFi (Ours) FFHQ 30.61 0.905 20.21 26.84 0.853 29.83 21.1 0.788 45.36 24.25 0.766 38.84 25.19 0.866 38.20 31.60 0.937 23.56 W at er m ar k ed T ar g et F o rg e r y R eco v er ed P r ed i ct ed Ma sk Ma sk Figure 7: Additional qualitative comparison of face recovery under SD Inpainting on CelebA -HQ. [ 33 ] and DFREC [ 35 ], on the Celeb A-HQ dataset. As summarized in T able 3 , V eriFi consistently achieves the best results across all tampering types and datasets, with substantial margins over prior works. V eriFi attains 29.50 dB PSNR and 0.904 SSIM on CelebA -HQ under SD Inpainting, outperforming Imuge+ and DFREC. Similar trends are observed for HD-painter and splicing, as well as on FFHQ, demonstrating the robustness of our approach. Qualitative r esults in Figure 5 6 , 7 , and 8 further highlight that V eriFi can faithfully reconstruct ne-grained facial details, even under challenging and large-area tampering. In contrast, existing methods often produce visible artifacts or fail to recover occluded regions. Notably , V eriFi maintains high visual quality and low FID , indicating that the r e- covered faces are not only visually plausible but also semantically consistent with the original content. S our c e W at er m ar k ed T ar g et F o rg e r y R eco v er ed P r ed i ct ed Ma sk Ma sk Figure 8: Additional qualitative comparison of face recovery under Splicing on CelebA -HQ. 4.4 Comparison with Deep W atermarking W e compare V eriFi against eight state-of-the-art deep-watermarking methods [ 2 , 5 , 20 , 27 , 29 , 31 , 37 , 38 ]. Table 4 summarizes watermarked- image delity and watermark extraction accuracy under representa- tive AIGC edits and common degradations on CelebA -HQ. Quanti- tatively , V eriFi attains a favorable delity–robustness balance with 42.53 dB PSNR and 0.948 SSIM, while achieving the highest mean extraction accuracy (97.70%). In particular , V eriFi yields the best per- formance on MaskFaceGAN and perfect extraction on ColorJitter and Gaussian Noise. It also maintains consistently strong robust- ness against AIGC manipulations, demonstrating resilience to both generative edits and conventional degradations. Additional qualita- tive comparisons, extended generalization experiments on FFHQ and ImageNet, and robustness evaluations under diverse degra- dations are provided in the App endix. Colle ctively , these results further substantiate that V eriFi achieves state-of-the-art r obustness and copyright protection, while maintaining high visual delity and imperceptibility . Conference acronym ’XX, June 03–05, 2018, W oodstock, N Y Trovato et al. T able 4: Quantitative comparison on CelebA -HQ . W e report watermarked-image delity (PSNR in dB / SSIM) and watermark extraction accuracy (%) under representative AIGC manipulations and common degradations. The best results are in bold, and the second best are underlined. Method PSNR SSIM GAN-based (%) Diusion-based (%) Common degradations (%) A vg. (%) InfoSwap E4S MaskFaceGAN HD-painter SD Inpainting JPEG ColorJitter Gaussian Noise TrustMark [ 2 ] 47.94 0.955 65.03 68.76 53.01 90.22 87.21 96.31 98.96 99.81 82.41 SepMark [ 29 ] 38.67 0.928 92.01 88.49 95.02 98.83 98.35 99.99 99.98 100.00 96.58 LampMark [ 27 ] 44.05 0.971 89.21 74.80 65.61 83.73 89.79 84.13 84.50 84.88 82.08 Robust- Wide [ 5 ] 43.74 0.952 86.31 86.48 86.90 97.20 96.09 96.45 97.15 97.43 93.00 EditGuard [ 37 ] 32.24 0.758 48.92 77.34 78.56 82.07 91.39 79.55 95.07 93.19 80.76 OmniGuard [ 38 ] 42.08 0.947 72.32 73.66 79.80 76.77 84.33 92.33 92.13 92.29 82.95 StableGuard [ 31 ] 31.81 0.848 50.08 87.93 89.16 88.13 95.58 59.78 99.93 63.61 79.28 W AM [ 20 ] 43.47 0.950 99.64 99.87 92.97 77.60 93.34 100.00 59.64 99.99 90.38 V eriFi (Ours) 42.53 0.948 99.60 90.51 95.62 98.72 97.29 99.82 100.00 100.00 97.70 T able 5: Ablation of AIGC attack-simulator components. Bit extraction accuracy (%) under four representative attacks on 1,000 CelebA -HQ images. “A vg. ” is the mean over the four attacks. Method E4S SimSwap SD Inpainting InfoSwap A vg. Without augmentation 50.97 53.04 63.69 54.11 55.45 With noise augmentation 57.77 66.63 79.27 73.26 69.23 With blending 85.47 76.34 87.95 84.16 83.48 Blending + latent mixing (Ours) 94.25 77.52 86.45 87.90 86.53 4.5 Analysis and Ablation Studies Ablation on AIGC Attack Simulation. W e quantify the indi- vidual and combined eects of the simulator components, namely noise augmentation, image blending, and latent space mixing, by training four variants: (i) without augmentation, (ii) with noise aug- mentation, (iii) with image blending, and (iv) with both blending and latent space mixing, which constitutes the full model referred to as Ours. T able 5 reports bit extraction accuracy (%) on 1,000 CelebA - HQ images under four representative attacks. The results show that both image blending and latent space mixing are r equired to realistically simulate AIGC attacks and to obtain robust watermark extraction. Ablation on Similarity Computation for Lo calization. W e eval- uate the eect of augmenting the localization head with a similarity branch in T able 6 . Replacing segmentation-only supervision with an additional similarity branch improves F1 from 0.912 to 0.946, AUC fr om 0.966 to 0.975, and mIoU from 0.842 to 0.941, demonstrat- ing that watermark-guided similarity cues substantially enhance localization accuracy . W e further compare two pairing strategies during training: forming pairs from original (genuine) images and their watermarked counterparts, and forming pairs by concatenat- ing unrelated images with watermarked images. T raining with a mixture of genuine and synthetic comp osite pairs yields the largest improvement in localization performance. Ablation on W atermark Guidance for Recovery . W e evaluate the impact of V AE-based face latent dimensionality (256, 576, 1024) on recovery . Latents are extracted by resizing the input to dierent resolutions before V AE encoding, thus controlling embe dding size. T able 6: Ablation: localization similarity metric and super vi- sion on CelebA -HQ (SD Inpainting). Setting (Factor) F1 AUC mIoU Design Seg-only (no similarity branch) 0.912 0.966 0.842 Seg + Similarity (ours) 0.946 0.975 0.941 Supervision Original-only (genuine pairs) 0.881 0.939 0.808 Orig. + Synthetic (ours) 0.946 0.975 0.941 T able 7: Ablation on face-representation guidance. Evalu- ation on CelebA -HQ under SD Inpainting (1,000 images). PSNR rec in dB; Bit Acc. denotes watermark extraction accu- racy; latency is per-image decode time on one RTX 3090. Guidance PSNR rec SSIM rec Bit Acc. (%) Latency (ms) 256-D embedding 23.15 0.709 97.54 49.77 576-D embedding 25.83 0.843 96.06 51.15 1024-D embedding 31.05 0.894 97.29 53.57 As shown in Table 7 , higher-dimensional latents yield improved PSNR rec and SSIM rec with negligible eect on watermark extrac- tion accuracy and inference latency . This demonstrates that richer semantic priors enhance recov er y delity without compromising robustness or eciency . Eciency Analysis. W e analyze the computational eciency of V eriFi by measuring the number of mo del parameters, oating-p oint operations (FLOPs), and inference latency for each component on a single RTX 3090 GP U. Table 8 summarizes these metrics. The total model size is approximately 157.12 million parameters, with a cumulative FLOP count of 384.97 billion. The end-to-end inference latency for watermark embedding, extraction, tamper lo calization, and face recovery is 82.75 milliseconds per image, indicating that V eriFi is suitable for real-time or near-real-time applications in practical scenarios. High-Fidelity Face Content Recovery via T amper-Resilient V ersatile W atermarking Conference acronym ’XX, June 03–05, 2018, W oodstock, N Y T able 8: Eciency analysis of V eriFi on a single RTX 3090 GP U. Component Params (M) FLOPs (G) Latency (ms) W atermark Embedder 70.88 67.35 18.41 W atermark Extractor 15.03 231.43 29.27 T amper Locator 37.14 25.44 14.71 Face Recovery Network 34.07 60.75 20.36 T otal 157.12 384.97 82.75 5 Conclusion W e propose V eriFi, a unied and versatile watermarking framework that enables robust copyright tracing, pr ecise tamper localization, and high-delity facial recovery . By jointly emb edding ownership signatures and facial r epresentations, V eriFi demonstrates strong resistance against both pixel-domain and AIGC-based attacks. Ex- tensive experiments on CelebA -HQ and FFHQ validate the sup eri- ority of our approach in terms of extraction accuracy , localization precision, and recovery quality . Our method provides a compre- hensive solution for protecting and verifying digital face images in the era of advanced generative models. Future work may explore extending this framework to video content and other modalities. References [1] T anya Koohpayeh Araghi, David Megías, Victor Garcia-Font, Minoru Kurib- ayashi, and W ojciech Mazurczyk. 2024. Disinformation detection and source tracking using semi-fragile watermarking and blockchain. In Proceedings of the 2024 European Interdisciplinary Cyb ersecurity Conference . 136–143. [2] T u Bui, Shruti Agarwal, and John Collomosse. 2025. TrustMark: Robust W ater- marking and W atermark Remo val for Arbitrar y Resolution Images. In Proceedings of the IEEE/CVF International Conference on Computer Vision . 18629–18639. [3] Gege Gao, Huaibo Huang, Chaoyou Fu, Zhaoyang Li, and Ran He. 2021. In- formation Bottleneck Disentanglement for Identity Swapping. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR) . 3404–3413. [4] Xiao Guo, Xiaohong Liu, Zhiyuan Ren, Ste ven Grosz, Iacopo Masi, and Xiaoming Liu. 2023. Hierarchical ne-grained image forgery dete ction and localization. In Proceedings of the IEEE/CVF conference on computer vision and pattern recognition . 3155–3165. [5] Runyi Hu, Jie Zhang, Ting Xu, Jiwei Li, and Tianwei Zhang. 2024. Robust-wide: Robust watermarking against instruction-driven image editing. In European Conference on Computer Vision . Springer , 20–37. [6] T ero Karras, Timo Aila, Samuli Laine, and Jaakko Lehtinen. 2018. Progressive Growing of GANs for Improved Quality , Stability , and V ariation. In International Conference on Learning Representations (ICLR) . [7] T ero Karras, Samuli Laine, and Timo Aila. 2019. A Style-Based Generator Architec- ture for Generative Adversarial Networks. In IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR) . [8] Myung-Joon K won, In-Jae Yu, Seung-Hun Nam, and Heung-K yu Lee. 2021. CA T - Net: Compression artifact tracing network for detection and localization of image splicing. In Proceedings of the IEEE/CVF winter conference on applications of computer vision . 375–384. [9] Y uxi Li, Fuyuan Cheng, W angbo Yu, Guangshuo W ang, Guibo Luo, and Yuesh- eng Zhu. 2024. Adai: Adaptive image forgery localization via a dynamic and importance-aware transformer network. In European Conference on Computer Vision . Springer , 477–493. [10] Xiaohong Liu, Y aojie Liu, Jun Chen, and Xiaoming Liu. 2022. PSCC-Net: Progres- sive spatio-channel correlation network for image manipulation detection and localization. IEEE Transactions on Circuits and Systems for Vide o T echnology 32, 11 (2022), 7505–7517. [11] Y aqi Liu, Shuhuan Chen, Haichao Shi, Xiao- Yu Zhang, Song Xiao, and Qiang Cai. 2025. MUN: Image Forgery Localization Based on M 3 Encoder and UN Decoder . In Proceedings of the AAAI Conference on Articial Intelligence , V ol. 39. 5685–5693. [12] Zhian Liu, Maomao Li, Y ong Zhang, Cairong W ang, Qi Zhang, Jue Wang, and Y ongwei Nie. 2023. Fine-grained face swapping via regional gan inversion. In Proceedings of the IEEE/CVF conference on computer vision and pattern recognition . 8578–8587. [13] Zijie Lou, Gang Cao, Kun Guo, Lifang Yu, and Shaowei W eng. 2025. Exploring multi-view pixel contrast for general and robust image forgery localization. IEEE Transactions on Information Forensics and Security (2025). [14] Hayk Manukyan, Andranik Sargsyan, Barsegh Atanyan, Zhangyang W ang, Shant Navasardyan, and Humphrey Shi. 2023. HD-Painter: High-Resolution and Prompt- Faithful T ext-Guided Image Inpainting with Diusion Models. arXiv preprint arXiv:2312.14091 (2023). [15] Fausto Milletari, Nassir Navab, and Seyed- Ahmad Ahmadi. 2016. V-Net: Fully Convolutional Neural Networks for V olumetric Me dical Image Segmentation. In 2016 Fourth International Conference on 3D Vision (3D V) . 565–571. doi:10.1109/ 3D V .2016.79 [16] Paarth Neekhara, Shehzeen Hussain, Xinqiao Zhang, Ke Huang, Julian McAule y , and Farinaz Koushanfar . 2024. Facesigns: Semi-fragile watermarks for media authentication. ACM Transactions on Multimedia Computing, Communications and A pplications 20, 11 (2024), 1–21. [17] Patrick Pérez, Michel Gangnet, and Andrew Blake. 2003. Poisson image editing. In ACM Transactions on Graphics (TOG) , V ol. 22. ACM, 313–318. [18] Martin Pernuš, Vitomir Štruc, and Simon Dobrišek. 2023. MaskFaceGAN: High- Resolution Face Editing With Masked GAN Latent Co de Optimization. IEEE Transactions on Image Processing 32 (2023), 5893–5908. doi:10.1109/TIP.2023. 3326675 [19] Robin Rombach, Andreas Blattmann, Dominik Lorenz, Patrick Esser , and Björn Ommer . 2022. High-resolution image synthesis with latent diusion models. In Proceedings of the IEEE/CVF conference on computer vision and pattern recognition . 10684–10695. [20] T om Sander , Pierre Fernandez, Alain Durmus, T e ddy Furon, and Matthijs Douze. 2025. W atermark anything with localized messages. In International Conference on Learning Representations-ICLR 2025 . [21] Kaede Shiohara and T oshihiko Y amasaki. 2022. Detecting Deepfakes with Self- Blended Images. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition . 18720–18729. [22] Chuangchuang T an, Y ao Zhao, Shikui W ei, Guanghua Gu, Ping Liu, and Yunchao W ei. 2024. Frequency-aware de epfake detection: Improving generalizability through frequency space domain learning. In Proceedings of the AAAI Conference on A rticial Intelligence , V ol. 38. 5052–5060. [23] Chuangchuang T an, Y ao Zhao, Shikui W ei, Guanghua Gu, Ping Liu, and Yunchao W ei. 2024. Rethinking the up-sampling operations in cnn-based generative network for generalizable deepfake detection. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition . 28130–28139. [24] Matthew T ancik, Ben Mildenhall, and Ren Ng. 2020. Stegastamp: Invisible hy- perlinks in physical photographs. In Proceedings of the IEEE/CVF conference on computer vision and pattern recognition . 2117–2126. [25] Run W ang, Felix Juefei-Xu, Meng Luo, Yang Liu, and Lina W ang. 2021. Faketagger: Robust safeguards against deepfake dissemination via provenance tracking. In Proceedings of the 29th ACM international conference on multimedia . 3546–3555. [26] Tianyi W ang, Harry Cheng, Ming-Hui Liu, and Mohan K ankanhalli. 2025. Fractal- forensics: Proactive deepfake detection and localization via fractal watermarks. In Proceedings of the 33rd ACM International Conference on Multimedia . 7210–7219. [27] Tianyi W ang, Meng xiao Huang, Harry Cheng, Xiao Zhang, and Zhiqi Shen. 2024. Lampmark: Proactive deepfake detection via training-free landmark per- ceptual watermarks. In Proceedings of the 32nd ACM International Conference on Multimedia . 10515–10524. [28] Tianyi W ang, Xin Liao, Kam Pui Chow , Xiao dong Lin, and Yinglong W ang. 2024. Deepfake Detection: A Comprehensive Survey from the Reliability Perspective. 57, 3, Article 58 (2024), 35 pages. doi:10.1145/3699710 [29] Xiaoshuai Wu, Xin Liao, and Bo Ou. 2023. Sepmark: Deep separable watermarking for unied source tracing and deepfake dete ction. In Proceedings of the 31st ACM International Conference on Multimedia . 1190–1201. [30] Chao Y ang, Zhiyu W ang, Huawei Shen, Huizhou Li, and Bin Jiang. 2021. Multi- Modality Image Manipulation Detection. In 2021 IEEE International Conference on Multimedia and Exp o (ICME) . 1–6. doi:10.1109/ICME51207.2021.9428232 [31] Haoxin Y ang, Bangzhen Liu, Xuemiao Xu, Cheng Xu, Yuyang Y u, Zikai Huang, Yi W ang, and Shengfeng He. 2025. StableGuard: To wards Unie d Copyright Protection and T amper Lo calization in Latent Diusion Models. Advances in Neural Information Processing Systems (2025). [32] Zijin Y ang, Kai Zeng, K ejiang Chen, Han Fang, W eiming Zhang, and Nenghai Y u. 2024. Gaussian shading: Provable performance-lossless image watermarking for diusion models. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition . 12162–12171. [33] Qichao Ying, Hang Zhou, Zhenxing Qian, Sheng Li, and Xinpeng Zhang. 2023. Learning to immunize images for tamper lo calization and self-recovery . IEEE Transactions on Pattern A nalysis and Machine Intelligence 45, 11 (2023), 13814– 13830. [34] Peipeng Y u, Jianwei Fei, Hui Gao, Xuan Feng, Zhihua Xia, and Chip Hong Chang. 2025. Unlocking the Capabilities of Large Vision-Language Models for Generaliz- able and Explainable Deepfake Detection. In Forty-second International Conference on Machine Learning . Conference acronym ’XX, June 03–05, 2018, W oodstock, N Y Trovato et al. [35] Peipeng Yu, Hui Gao, Jianwei Fei, Zhitao Huang, Zhihua Xia, and Chip-Hong Chang. 2025. DFREC: DeepFake Identity Recovery Based on Identity-aware Masked Autoencoder . arXiv: 2412.07260 [cs.CV] [36] Li Zhang, Mingliang Xu, Dong Li, Jianming Du, and Rujing Wang. 2024. Cat- mullrom splines-based regression for image forgery localization. In Proceedings of the AAAI Conference on Articial Intelligence , V ol. 38. 7196–7204. [37] Xuanyu Zhang, Runyi Li, Jiwen Y u, Y oumin Xu, W eiqi Li, and Jian Zhang. 2024. Editguard: V ersatile image watermarking for tamper lo calization and copyright protection. In Proceedings of the IEEE/CVF conference on computer vision and pattern recognition . 11964–11974. [38] Xuanyu Zhang, Zecheng Tang, Zhipei Xu, Runyi Li, Y oumin Xu, Bin Chen, Feng Gao, and Jian Zhang. 2025. Omniguard: Hybrid manipulation localization via augmented versatile deep image watermarking. In Proceedings of the Computer Vision and Pattern Recognition Conference . 3008–3018. [39] Xuandong Zhao, Kexun Zhang, Zihao Su, Saastha Vasan, Ilya Grishchenko, Christopher Kruegel, Giovanni Vigna, Y u-Xiang W ang, and Lei Li. 2024. In- visible image watermarks are provably removable using generative ai. Advances in neural information processing systems 37 (2024), 8643–8672. [40] Jizhe Zhou, Xiaochen Ma, Xia Du, Ahmed Y . Alhammadi, and W entao Feng. 2023. Pre- Training-Free Image Manipulation Localization through Non-Mutually Exclusive Contrastive Learning. In Proce edings of the IEEE/CVF International Conference on Computer Vision (ICCV) . 22346–22356. [41] Jizhe Zhou, Xiaochen Ma, Xia Du, Ahmed Y Alhammadi, and W entao Feng. 2023. Pre-training-free image manipulation localization through non-mutually exclu- sive contrastive learning. In Proceedings of the IEEE/CVF international conference on computer vision . 22346–22356. [42] Jiren Zhu, Russell Kaplan, Justin Johnson, and Li Fei-Fei. 2018. Hidden: Hiding data with deep networks. In Proceedings of the European conference on computer vision (ECCV) . 657–672. Received 20 February 2007; revised 12 March 2009; accepted 5 June 2009

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment