Joint Source-Channel-Check Coding with HARQ for Reliable Semantic Communications

Semantic communication has emerged as a promising paradigm for improving transmission efficiency and task-level reliability, yet most existing reliability-enhancement approaches rely on retransmission strategies driven by semantic fidelity checking t…

Authors: Boyuan Li, Shuoyao Wang, Suzhi Bi

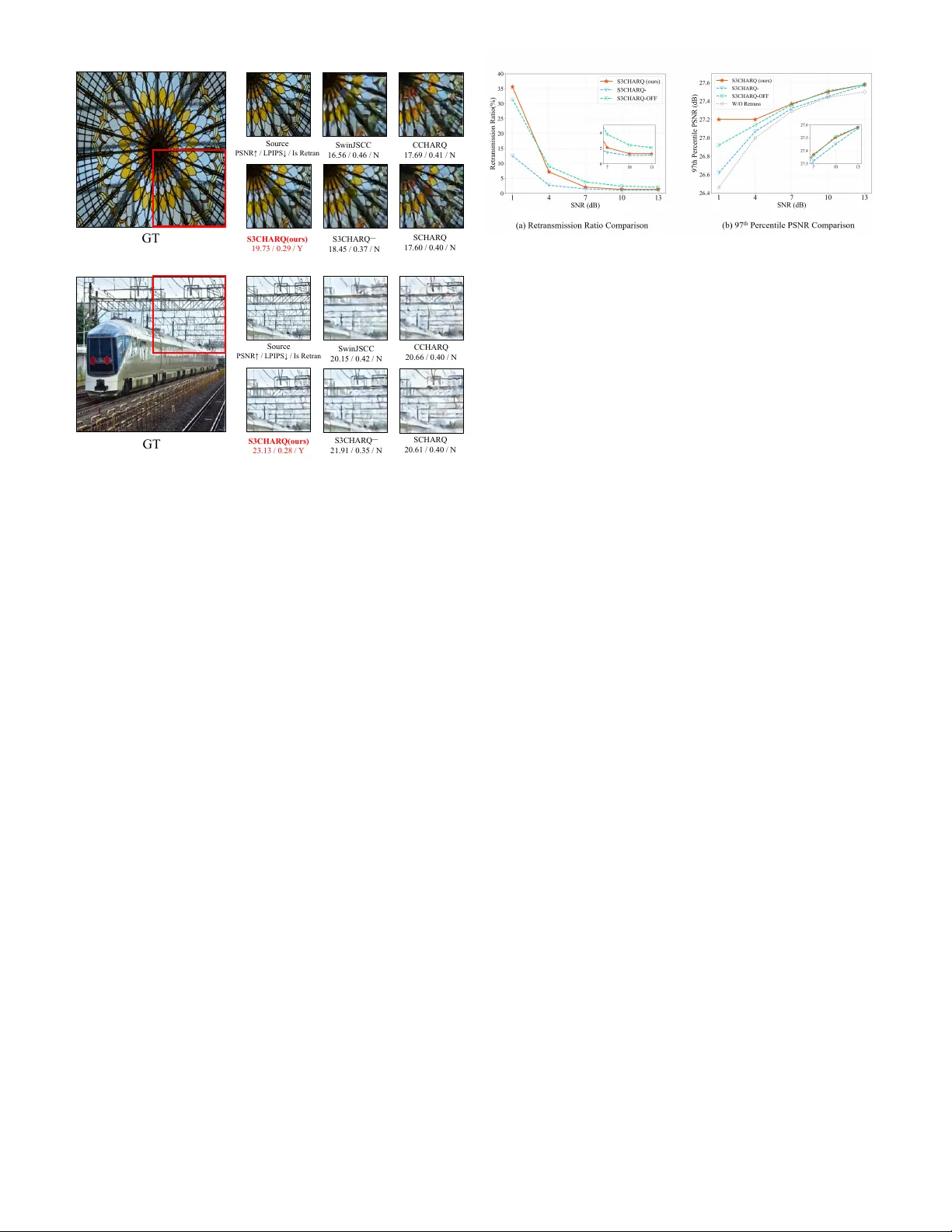

Joint Source-Channel-Check Coding with HARQ for Reliable Semantic Communications Boyuan Li, Shuoyao W ang, Senior Member , IEEE , Suzhi Bi, Senior Member , IEEE , Liping Qian, Senior Member , IEEE , and Y unlong Cai, Senior Member , IEEE Abstract — Semantic communication has emerged as a promis- ing paradigm for impro ving transmission efficiency and task-lev el reliability , yet most existing reliability-enhancement approaches rely on retransmission strategies driven by semantic fidelity checking that requir e additional check codewords solely for retransmission triggering, thereby incurring substantial commu- nication o verhead. In this paper , we propose S3CHARQ, a J oint Source-Channel-Check Coding framework with h ybrid automatic repeat request (HARQ) that fundamentally rethinks the role of check codewords in semantic communications. By integrating the check codeword into the joint source–channel coding (JSCC) process, S3CHARQ enables joint source–channel–check coding (JS3C), allowing the check codeword to simultaneously support semantic fidelity verification and reconstruction enhancement. At the transmitter , a semantic fidelity-aware check encoder embeds auxiliary r econstruction information into the check codeword. At the r eceiver , the JSCC and check codewords are jointly decoded by a JS3C decoder , while the check codeword is additionally exploited for perceptual quality estimation. Moreo ver , because retransmission decisions are necessarily based on imperfect semantic quality estimation in the absence of ground-truth reconstruction, estimation errors are unavoidable and fundamen- tally limit the effectiveness of rule-based decision schemes. T o over come this limitation, we develop a reinf or cement learning (RL)-based retransmission decision module that enables adap- tive, sample-level retransmission decisions, effectively balancing reco very and refinement information under dynamic channel conditions. Experimental results demonstrate that compared with existing HARQ-based semantic communication systems, the proposed S3CHARQ framework achie ves a 2 . 36 dB improvement in the 97th percentile PSNR, as well as a 37 . 45% reduction in outage probability . Index T erms —Semantic communication, image transmission, HARQ, reliable transmission. I . I N T RO D U C T I O N W ith the ev olution of sixth-generation (6G) communication technologies, immersiv e real-time multimedia applications, characterized by high throughput requirements, stringent la- tency constraints, and ultra-high reliability demands, ha ve attracted wide attention. Under existing netw ork architectures, the traditional bit-lev el communication paradigm struggles to simultaneously satisfy these requirements. By bridging artifi- cial intelligence (AI) applications with the physical commu- nication layer , semantic communication achiev es highly com- pressed transmission while preserving task-relev ant semantic B. Li, S. W ang, and S. Bi are with the Colle ge of Electronic and Information Engineering, Shenzhen University , Shenzhen, China. Liping Qian is with the Colle ge of Information Engineering, Zhejiang Uni versity of T echnology , Hangzhou, China. Y . Cai is with the College of Information Science and Electronic Engineering, Zhejiang Uni versity , Hangzhou, China. fidelity [1]. Lev eraging advances in deep learning, semantic communication (SemComm) systems often use deep neural networks (DNNs) for joint source-channel coding (JSCC) to extract and encode semantic information from various types of sources, such as text [2] and images [3]–[5]. Although SemComm systems exhibit inherent robustness to channel noise, systematic designs that ensure end-to-end transmission reliability remain limited, especially for next- generation services such as the metaverse and extended reality . Fully guaranteeing reliable semantic information delivery in SemComm systems therefore remains an open challenge. Existing studies have explored this issue from different per- spectiv es, including robust feature extraction networks [6]–[8], flexible task-offloading mechanisms [9], and large language model (LLM)-based post-processing to recov er corrupted se- mantic features [10], all aiming to enhance semantic transmis- sion reliability . While the aforementioned schemes ef fecti vely enhance transmission robustness, feature extraction and encoding in SemComm systems are still primarily conducted at the ap- plication and physical (PHY) layers. The modern wireless systems rely on HARQ, a cross-layer mechanism spanning both PHY and MA C layers—has prov en highly effecti ve in improving transmission reliability , particularly in static or low- mobility scenarios where the channel remains approximately constant ov er the round-trip time (R TT) of retransmissions. Under such conditions, HARQ enables efficient error recovery through retransmission and combining with limited redun- dancy , making it an attractive reliability-enhancement mecha- nism for SemComm systems. Motiv ated by these advantages, sev eral recent studies hav e explored the integration of HARQ into SemComm systems to improve transmission reliabil- ity . Howe v er , existing HARQ-enabled SemComm approaches largely rely on con v entional fidelity checking mechanisms that are designed to guarantee strict bit-wise fidelity . These mechanisms are fundamentally mismatched with the inherent tolerance of semantic features to bit errors. Therefore, to fully exploit the potential of HARQ in SemComm systems, two fundamental challenges must be addressed. • Question 1: How to accurately assess the impact of channel noise at the semantic-level, rather than at the bit-level? • Question 2: How to jointly optimize r etransmission str ate- gies and encoding scheme, impr oving transmission r elia- bility without sacrificing communication efficiency? T o address Question 1, se veral prior works hav e developed task and modality specific validation schemes. By defining thresholds on perceptual quality metrics, these approaches estimate reconstruction quality at the receiv er without re- quiring access to the original source, and use the estimated quality to guide retransmission decisions. For example, [11] and [12] introduced additional reconstruction quality predic- tors to estimate the Peak Signal-to-Noise Ratio (PSNR) [12] and the Structural Similarity Index (SSIM), respectively , and determine whether further retransmissions are required. T o address Question 2, several recent approaches have explored adapti ve retransmission strategies with variable code- word lengths to reduce retransmission o verhead. For instance, [11] proposed a retransmission scheme CCHARQ, which uses deep reinforcement learning to adaptively adjust the retransmission code length based on SSIM predictions, thereby balancing reconstruction quality and retransmission overhead. In contrast, SCBHARQ [13] introduces feature-lev el negati ve acknowledgment (NAK) signaling to selecti v ely retransmit dif- ferent semantic features by comparing the semantic similarity between receiv ed noisy features and reference features. Despite these advances, existing studies on encoding and retransmission in semantic communication still exhibit se v eral remarkable limitations: i) Due to the heterogeneous optimiza- tion goals of error detection and semantic decoding, most current approaches treat these two processes separately , which fails to explore the potential redundancy between semantic chec k-coding and semantic sour ce-c hannel coding . ii) For the retransmission decision-making, existing threshold-based schemes are vulnerable to prediction err ors of r econstruction quality estimators , sev erely degrading the reliability of the ov erall system. iii) W ith respect to the retransmission process itself, retransmissions serve a dual role: on the one hand, they introduce redundancy to mitigate channel noise; on the other hand, they can con v e y refinement information, func- tioning similarly to enhancement layers in scalable coding. Howe v er , existing retransmission strategies typically rely on binary A CK/N AK signaling, enabling only coarse retransmis- sion decisions. As a result, they lead to static retransmission mechanisms that cannot adapt to the instantaneous distortion lev el of the receiv ed signal, limiting the system’ s ability to effecti v ely balance noise r ecovery and pr ogr essive refinement . T o address these challenges, in this paper , we propose a Joint Source-Channel-Check Coding (JS3C) mechanism, which leverages the redundancy inherent in semantic error detection (SED) to improve image reconstruction performance. Based on JS3C, we further integrate a condition-aware re- transmission strategy and optimized retransmission encoding to constitute Source-Channel-Check Coding Hybrid Automatic Repeat reQuest (S3CHARQ). The proposed framework en- ables more reliable retransmissions and achiev es remarkable reconstruction performance under limited retransmission op- portunities. The main contributions of this paper are summa- rized as follows: • W e propose S3CHARQ, a novel HARQ mechanism that jointly optimizes source coding, channel coding, check coding, as well as retransmission coding for SemComm. W e propose the JS3C principle, which enables uni- fied optimization of transmission encoding and semantic checking within a single transmission round. Overall, the proposed S3CHARQ frame work enables both high- efficienc y and high-reliability semantic communications, ev en over noisy wireless channels. • Joint Sour ce-Channel-Checking Codecs : W e propose a joint source–channel–check coding (JS3C) design that integrates semantic checking into the JSCC process, enabling unified optimization of source–channel coding and check coding. At the transmitter , a semantic fidelity- aware (SF A) check encoder generates compact check codew ords conditioned on encoded semantic features, such that they con ve y both semantic fidelity verification and auxiliary reconstruction information. At the recei v er , the check and JSCC codewords are jointly decoded by a cooperative-a ware JSCC (CA-JSCC) decoder to enhance reconstruction quality , while the check code- word is simultaneously exploited by an LPIPS-based estimator for perceptual quality assessment. Furthermore, a multi-stage training strategy grounded in information bottleneck theory is adopted to ensure that the check codew ord carries complementary semantic information without compromising robustness. • Condition-aware Retransmission Strate gy : T o cope with prediction errors in receiv er-side quality estimation, we design a condition-aware retransmission decision agent based on the Proximal Policy Optimization (PPO) algo- rithm. By dynamically adjusting the aggressiv eness of retransmission based on check codew ord, instantaneous channel conditions, and the uncertainty in quality esti- mation, the proposed agent enables more accurate and robust retransmission decisions under varying distortion lev els and channel impairments. • Adaptive Recovery-Refinement Re-encoding : T o mitigate unnecessary redundancy during retransmissions, we in- corporate quality estimation feedback into the retransmis- sion encoder and design an entropy optimizer that dynam- ically adapts redundancy lev els for error recovery , while allocating residual bandwidth to refinement information for progressiv e quality improvement. Experiments results validate the superiority of the proposed S3CHARQ. For transmission reliability , S3CHARQ achie ves av erage 37.45% reduction on outage probability , as well as a 2 . 36 dB improv ement in 97th percentile PSNR, significantly superior compared with existing HARQ-based semantic com- munication system. In Section IV, we formulate the problem and describe the proposed system in detail. Simulation results are presented in Section V, and conclusions are drawn in Section VI. I I . R E L A T E D W O R K S A. Semantic Communication W ith the rapid advancement of deep learning techniques, extensi ve research has been conducted based on the principle of joint source-channel coding (JSCC) schemes. By leveraging deep neural network (DNN) for semantic feature extraction and representation, JSCC provides an efficient method to address massiv e data transmission demands while achieving significantly lower reconstruction error [14]. In recent years, SemComm has been widely studied for text [15], [16], image [17], [18], and video transmission [19], [20], demonstrating superior communication performance compared with con- ventional approaches. Furthermore, follow-up studies hav e continued to improve average transmission performance by incorporating more powerful neural network backbones [4] and introducing variational information bottleneck principles during training [21]. As a result, SemComm is increasingly regarded as a promising and effecti ve paradigm for next- generation communication systems. Despite these remarkable achiev ements, most existing ap- proaches primarily focus on improving av erage performance, while relativ ely few studies explicitly address optimization ov er the long-tail distribution. Training methods based on av erage-case sampling are inherently limited in their ability to improv e the lo wer performance bound of SemComm systems. Consequently , in practical deployments, these approaches of- ten exhibit pronounced performance fluctuations, failing to re- liably satisfy the stringent requirements on worst-case quality and reliability . B. Reliable Semantic Communication For SemComm systems, achieving reliable transmission is challenging for two reasons. First, optimizing average re- construction quality alone cannot guarantee the lower bound performance, which is critical for ensuring transmission relia- bility , especially in worst-case scenarios [22]. Second, reliabil- ity mechanisms originally designed for bit-lev el communica- tions, such as check coding and validation, are fundamentally mismatched with the semantic paradigm, which emphasizes semantic-lev el fidelity rather than strict bit-wise correctness [23]. According to classical error control strategies, exist- ing reliability-oriented SemComm approaches can be broadly categorized into two main classes: forward error correction (FEC)-based methods and hybrid automatic repeat request (HARQ)-based methods. 1) FEC-based r eliable semantic communication: T o en- able reliable semantic communication, several studies hav e drawn inspiration from conv entional Internet communication protocols (e.g. TCP and R TCP), seeking to adapt and ex- tend bit-le vel reliability mechanisms to the reliable semantic domain. FEC-based methods aim to enhance the robustness of semantic representations by extracting more expressiv e and noise-resilient features, thereby enabling the receiv er to automatically reco ver errors without requiring retransmissions [16], [24], [25]. For instance, [24] models semantic noise and channel impairments during neural network training, enabling the JSCC encoder to learn robust semantic features, which automatically handling channel noise and semantic distortion at the receiv er without additional retransmission rounds. Unfortunately , FEC-based schemes typically adopt pre- defined redundancy that is fixed across different channel real- izations. As a result, they often fail to guarantee transmission reliability and reconstruction quality [26], especially in time- varying and heterogeneous wireless channel conditions. 2) HARQ-based r eliable semantic communication: Accord- ingly , sev eral studies have explored HARQ-based methods to achiev e reliable semantic communication. T o address the mismatch between bit-lev el error validation and the error - tolerant nature of semantic features, many works have pro- posed task-oriented v alidation schemes. For instance, [11] triggers retransmission based on estimated SSIM, and adap- tiv ely adjusts retransmission overhead based on the predicted reconstruction quality without requiring access to the original image. T o enable more feature-le vel retransmission decisions, [13] executes validation directly at the semantic-feature lev el by comparing recei ved features with reference features stored in a semantic codebook, thereby triggering retransmissions based on feature similarity rather than sample-le vel perceptual quality . For multi-modal scenario with multiple performance metrics, [27] jointly considers quality indicators from both text and images, making retransmission decisions based on a combination of perceptual image quality and semantic text consistency . Meanwhile, to better exploit corrupted semantic features instead of discarding them, some studies ha ve introduced joint decoding strategies. By combining multiple receptions among different transmission rounds, these methods ef fectively ex- ploit the information contained in noisy features, thereby enhancing data utilization efficienc y and overall transmission reliability [11], [28], [29]. By integrating semantic-level val- idation with joint decoding into the HARQ framew ork, these methods can reduce unnecessary retransmissions caused by bit-lev el errors. Despite these advances, most existing HARQ-based Sem- Comm systems rely on predefined thresholds to determine whether retransmission is required. Selecting and adapting such thresholds to current transmission conditions is challeng- ing, as it in volv es a delicate trade-off between communication efficienc y and reconstruction quality . In dynamic wireless en vironments, where channel conditions and noise levels v ary frequently , fixed-threshold schemes struggle to adapt effec- tiv ely , often resulting in either excessi ve retransmissions or insufficient error control. Moreover , quality estimates derived from corrupted received signals may deviate significantly from the true reconstruction quality , leading to unnecessary or insufficient retransmissions, further degrading overall system performance. C. Reinfor cement Learning in Semantic Communication Facing the dif ficulty of accurately quantifying semantic distortion and reconstruction performance, some studies have turned to reinforcement learning (RL) for SemComm. RL- based approaches learn adaptiv e policies through continuous interaction with the environment, ef fectively supporting pro- gressiv e transmission [11] and cross-layer joint optimization [30], of fering a promising approach for addressing the chal- lenges in SemComm systems. Moreover , by training agents to learn optimal transmission strategies that adapt to v arying channel conditions and noise levels, RL-based methods are particularly well suited for decision-making under dynamic and uncertain wireless en vironments [31]. Considering the inherent limitations of static retransmission strategies in HARQ-based semantic communication systems, recent studies have explored the integration of RL techniques to dev elop adaptive retransmission mechanisms [32]–[34]. For instance, to jointly optimize energy consumption and data fidelity , [32] formulates semantic communication as a Markov decision process (MDP) and employs a deep Q- network (DQN) agent to determine the optimal number of retransmissions for each sample based on current channel con- dition, energy consumption, and data reliability . By designing appropriate rew ard functions, RL-based methods enable the agent to learn policies that effecti vely balance the trade-off between communication efficiency and transmission reliability . Howe ver , when retransmission decisions are formulated as a sequential decision-making problem, system performance becomes highly dependent on ho w the optimization objecti ves are encoded in the rew ard function. Under di verse channel conditions and heterogeneous reliability requirements, design- ing rew ard functions that accurately reflect retransmission effecti veness and enable robust policy con vergence remains a challenging open problem. I I I . S Y S T E M M O D E L A. System Model As shown in Fig. 1, we consider a single-user semantic communication (SemComm) system for image transmission. W ithout loss of generality , the input of the JS3C system is an image p ∈ R c × h × w , where c , h , and w denote the number of channels, height, and width of the image, respectiv ely . The receiv er outputs a reconstructed image e p ∈ R c × h × w . In this work, we regard semantic transmissions whose reconstruction quality falls below a certain lev el as unreliable services, which necessitate retransmission to guarantee task- lev el reliability . Therefore, accurate quality ev aluation of the initially reconstructed samples is essential for triggering re- transmissions. Ho wever , in semantic communication systems, the receiv er has no access to the original content, rendering exact quality ev aluation fundamentally infeasible. T o address this fundamental limitation, we introduce a performance es- timation module that predicts the quality score based on the recei ved check codeword and channel-related information. The estimated score serves as a surrogate quality indicator, enabling retransmission decision-making in the absence of reference images. 1) T ransmitter: As shown in Fig. 1, the transmitter consists of a JSCC encoder , an adaptive masking module, and an SF A check encoder . The semantic encoder extracts high-lev el features through a learned function f en :: R c × h × w → R K × 1 , Fig. 1: The framew ork of proposed JS3C. where K denotes the number of encoded symbols. Briefly , the semantic encoding function is giv en by x = f en ( p , ϕ en ) , (1) where x ∈ R K × 1 is the encoded semantic features and ϕ en denotes the trainable parameters of the semantic encoder . T o enable the encoded features to adapt to varying com- munication overhead constraints while preserving robustness to noise under time-v arying channel conditions, we intro- duce an adaptiv e masking module. This module dynamically generates binary mask m ∈ R K × 1 based on compression ratio R and channel SNR, thereby adjusting the effecti ve length of transmitted semantic features. Specifically , for a target compression ratio R ∈ [0 , 1] , the number of non- zero elements in the masked feature vector is defined as K mask = ⌈ K · R ⌉ , where ⌈·⌉ indicates operation of rounding up. The adaptiv e masking module then modulates and sorts the encoded features, generating the element m i in mask sequence m through the following formulation: m i = ( 1 , i ≤ K mask . 0 , else . (2) The masked feature z ∈ R K mask × 1 is giv en by z = x ⊙ m, (3) where ⊙ indicates the hadmard product. Moreo ver , in order to generate verification redundancy for performance estimation, the proposed SF A check encoder compresses the encoded features via a learned mapping g com :: R K × 1 → R k × 1 , where k denotes the number of compressed symbols. The compression process is expressed as x com = g com ( x , R, r , θ com ) , (4) where x com ∈ R k × 1 indicates the encoded verification feature, r denotes the channel state information (CSI) of the physical channel, and θ com denotes the optimizable parameters. For analytical simplicity , the CSI considered throughout this paper is specified by the signal-to-noise ratio (SNR) of the physical channel. After po wer normalization, the normalized signal z ∈ R K mask × 1 and z com ∈ R k × 1 are transmitted ov er the physical channel for image reconstruction and performance estimation, respectiv ely . 2) Physical Channel: When the encoded signals are trans- mitted ov er the physical channel, channel impairments such as noise and multipath fading may distort the transmitted signals. Therefore, the received signals could be different from their transmitted signals. For example, the receiv ed signal e z ∈ R K mask × 1 corresponding to the transmitted signal z is giv en by e z = hz + n , (5) where h denotes the channel gain and n denotes the additiv e channel noise.. 3) Receiver: After receiving the noisy signals, the receiv er reconstructs the source image using the CA-JSCC decoder and estimates the reconstruction quality via an LPIPS esti- mator . For the CA-JSCC decoder, the receiv ed verification features e z com and the receiv ed masked features e z are jointly decoded. The corresponding decoding function is defined as f de :: R ( K mask + k ) × 1 → R c × h × w , and the reconstructed image e p ∈ R c × h × w is obtained as e p = f de ( e x com , e z , SNR , ϕ de ) . (6) T o enable retransmission decisions without access to the original source image, the receiver employs a perceptual quality estimator that provides a surrogate quality score based on the received signals. While con ventional metrics such as PSNR and SSIM are widely used for performance e valuation, they are either unbounded or exhibit limited sensitivity in low- quality re gimes, making them unsuitable as reliable triggers for retransmission decisions in semantic communication systems. In this work, we adopt LPIPS as a perceptual quality score for guiding retransmission decisions, as it better reflects semantic fidelity and perceptual degradation under sev ere channel impairments. Importantly , LPIPS is used solely as a decision signal rather than an optimization target. In Section V , the effecti veness of the proposed frame work is primarily validated using PSNR and subjectiv e visual quality . This further indicates that the performance gains are not achieved by explicitly optimizing for PSNR, but instead arise from a more faithful preserv ation of semantic information. In particular , by jointly using the verification redundancy e x com , recei ved feature e z , channel condition r and compression ratio R , the receiver estimates the LPIPS score as LPIPS est = g est ( e z , e x com , r , R, θ est ) , (7) where LPIPS est denotes the estimated LPIPS value of recon- structed image and the θ est indicates the trainable parameters of the LPIPS estimator . B. Retransmission Strate gy At the recei ver , due to the inherent estimation inaccuracies of neural networks, perfectly quality estimation is generally infeasible. Moreover , a key challenge in reliable semantic communication lies in maximizing the coverage of retrans- mission decisions for severely corrupted images under biased reconstruction, which significantly limits the effecti veness of Fig. 2: The proposed network architecture of retransmission. con ventional retransmission strategies. T o address this chal- lenge, we incorporate reinforcement learning and design a PPO-based agent to enable condition-aware retransmission decisions by jointly considering the noise le vel, compression ratio, and estimated reconstruction quality . As shown in Fig. 1, the adaptive retransmission agent at the receiver collects channel conditions and recei ved verification codew ords as state information and mak es retransmission decisions at the sample lev el. When retransmission is requested, the agent sends an N AK signal to trigger the retransmission procedure. T o further meet the reliability requirements of image transmission, we integrate the proposed RL-based retransmission agent into the initial transmission framework sho wn in Fig. 1, together with JS3C, thereby forming the proposed S3CHARQ scheme. This framew ork supports an adaptiv e recovery-refinement retrans- mission process, and the o verall system architecture is depicted in the Fig. 2. As shown in Fig. 2, upon receiving a N AK signal from the receiver , the transmitter initiates a retransmission process. Specifically , the transmitter performs re-encoding based on the estimated perceptual quality LPIPS est and the original encoded features x to generate retransmission features x sec . During the retransmission phase, a new mask ratio R 2 is applied, and the original features are first re-encoded into x 2 . Subsequently , an entropy optimizer is employed to further refine the features, yielding x sec . After adaptive masking and SF A-Check encoding, we obtain the corresponding masked feature z sec and corresponding v erification redundancy x com , 2 . The entropy optimizing function is expressed as x sec = g eo ( x 2 , x , θ eo ) , (8) where x sec denotes the optimized features for retransmission, and θ eo indicates the trainable parameters of the entropy optimizer . For the check-encoding procedure of x com , 2 , we incorporate the estimated quality LPIPS est into the encoding procedure, enabling adjustment based on the feedback from the initial reconstruction. The corresponding compressing function is expressed as x com , 2 = g com , 2 ( x , x sec , R 2 , r , LPIPS est , θ com , 2 ) , (9) where θ sec , 2 indicates the trainable parameters of the SF A- check encoder in the retransmission phase. At the receiver , the CA-JSCC decoder executes joint decoding based on e x com , e z , e x com , 2 , e z sec and the channel condition r to reconstruct the image e p 2 . The joint reconstruction procedure is expressed as e p 2 = f de , 2 ( e x com , e x com , 2 , e z , e z sec , SNR , ϕ de , 2 ) , (10) where ϕ de , 2 denotes the trainable parameters of the CA- JSCC decoder for the retransmission round. For analysis simplicity , we assume that only a single retransmission is permitted and that the channel SNR remains constant dur- ing the retransmission process. Since the LPIPS prediction of retransmission remains av ailable after retransmission, the proposed framew ork can be naturally extended to support mul- tiple retransmission rounds, thereby accommodating diverse requirements and channel conditions. I V . N E T W O R K A R C H I T E C T U R E In this section, the specific design of proposed system model is explained. A. System Arc hitectur e As mentioned above, we adopt the design of SwinJSCC in [4] as the basic JSCC encoder and decoder architecture. The foundation model is trained end to end using an MSE loss to enable image reconstruction and adaptation to v arying channel conditions via the mask ratio. At the transmitter side, the SwinJSCC encoder E Swin ( · ) encodes the source image p into the JSCC-encoded feature x as x = E Swin ( P p ( p ) , P R ( R ) , P snr (SNR) , ϕ en ) , (11) where p denotes the input image, P p ( · ) represents the patch embedding and feature projection of the image, while P R ( · ) and P snr ( · ) denote linear projection and embedding layer that embed the compression ratio and channel SNR into the latent feature space, respectively . E Swin ( · ) is implemented by a hierarchical Swin T ransformer encoder , and ϕ en denotes the trainable parameters of the semantic encoder . Following [35], the system can be modeled as a Markov chain Y → X → Z → e Z → e Y , (12) where the source image p is defined as Y , the encoded feature x as X , and the compressed feature x com as Z . After transmission over the wireless channel and decoding, the recei ved signal e z com is e Z , and the reconstructed image e p is e Y . 1) SF A Check Encoder: As shown in Fig. 3(a), the Semantic-Fidelity-A ware(SF A) check encoder consists of com- pression and reparameterization blocks. Through the SF A check encoder , the encoded feature x is further compressed to x com , which retains partial semantic information while preserving its inherent error detection capability . First, the mean µ com and v ariance σ com of the compressed feature x com are generated by the SF A check encoder as ( µ com , σ com ) = h com ( x , R, r , θ com ) , (13) where h com ( · ) denotes the SF A check encoder , parameterized by θ com , which takes the encoded feature x , the target 𝒙 C o nv+ R eLU C A Blo ck C o nv +ReLU C o nv + R eLU C o nv + R eLU C o nv + R eLU C A Blo ck F l a tten F C + R eLU F C + R eLU FC 𝐒𝐍𝐑 , 𝐑 FC FC T a nh Si g m o i d 𝝈 𝒄𝒐𝒎 𝝁 𝒄𝒐𝒎 R epa ra m eteri za ti o n 𝒙 𝒄𝒐𝒎 J SC C enco der F l a tten C o nca tena te RFCB RFCB FC Si g m o i d 𝐒 𝐍𝐑 , 𝐑 , 𝒛 𝒄 𝒐 𝒎 𝒑 𝐋𝐏 𝐈 𝐏 𝐒 𝒆 𝒔 𝒕 𝒑 , 𝒑 (a) SF A che ck en co d er (b ) L PIPS E s t i m at o r Fig. 3: The architecture of SF A check encoder and LPIPS estimator . compression ratio R , and the mask ratio r as inputs to generate µ com and σ com . The compressed feature x com is then obtained through the reparameterization trick as x com = µ com + σ com ⊙ ϵ , (14) where ϵ is sampled from a standard normal distribution N (0 , I ) . It is crucial for a codec to achieve high decoding perfor- mance while minimizing communication ov erhead. Howe ver , these objecti ves are inherently conflicting: Improving decoding performance typically requires transmitting more symbols, whereas reducing communication overhead degrades noise robustness and thus performance. Information Bottleneck(IB) theory [36] provides a principled way to balance this tradeof f. For one-to-one communication, the objectiv e function can be formulated as L IB ( ϕ, θ ) = − I ( Z ; Y ) + β I ( Z ; X ) , (15) where I ( Z ; Y ) indicates the mutual information between the latent feature Z and the decoding output Y , which is highly related to decoding performance, and I ( Z ; X ) represents the mutual information between the source feature X and Z , encouraging reduced communication overhead. Specifically , the mutual information I ( Z ; Y ) can be written as I ( Z ; Y ) = Z p ( y , z ) log q θ ( y | z )d y d z + Z p ( y , z ) log p ( y | z ) q θ ( y | z ) d y d z + H ( Y ) . (16) Follo wing the variational information bottleneck analysis in [35], [37], and [38], I ( Z ; Y ) can be optimized by minimizing the MSE loss between the reconstructed image e p and the source image p . The mutual information I ( Z ; X ) can be formulated as I ( Z ; X ) = Z p ( z , x ) log p ϕ ( z | x ) q ( z ) d x d z + Z p ( z , x ) log p ( z ) q ( z ) d x d z . (17) Therefore, I ( Z ; X ) consists of two KL-div ergence for- mulas, D KL ( p ϕ ( z | x ) || q ( z )) and D KL ( p ( z ) || q ( z )) . Since D KL ( p ( z ) || q ( z )) ⩾ 0 , the first term dominates and, based on the Markov chain in (12), can be written as D KL ( p θ com ( x com | x ) ∥ p ( x com )) . Follo wing [37], the variational marginal distribution p ( x com ) is modeled as a centered isotropic Gaussian distri- bution N ( x com | 0 , I ) . Therefore, the KL di vergence can be simplified to the following form D KL ( p θ com ( x com | x ) ∥ p ( x com )) = X µ 2 com + σ 2 com − 1 2 − log σ com . (18) 2) CA-JSCC Decoder & LPIPS Estimator: At the receiver , to realize efficient joint decoding and quality estimation, we design a Cooperati ve-A ware JSCC (CA-JSCC) decoder . Fol- lowing (10), the network architecture of CA-JSCC is defined as e p = D Swin ( P x ( e x com ) , P z ( e z ) , P snr (SNR) , ϕ de ) , (19) where P x ( · ) , P z ( · ) , and P snr ( · ) are learnable linear layers that map heterogeneous inputs into a unified latent space for feature alignment. D Swin ( · ) denotes the decoder composed of stacked Swin Transformer blocks [4], parameterized by ϕ de , which jointly exploits spatial correlations and cross-feature dependencies to reconstruct the target image e p . For LPIPS estimation, we define an LPIPS estimator as shown in Fig. 3(b), which is trained by minimizing the MSE loss between the ground-truth LPIPS and its estimated value. Considering the coupled nature of LPIPS estimation, SF A check coding, and CA-JSCC decoding. we jointly optimize the decoding procedure and performance estimation stages. Specifically , the pre viously trained SwinJSCC encoder is frozen, while the SF A check encoder , CA-JSCC decoder, and LPIPS estimator are trained jointly using an Information Bottleneck-based loss function defined as L = 1 B B X i =1 || (LPIPS( p , e p ) , LPIPS est ) || 2 2 + L IB , (20) where L IB is giv en by L IB = 1 B B X i =1 d ( p , e p ) + γ D K L ( p θ com ( x com | x ) ∥ p ( x com )) . (21) Here, γ is the weight of the KL div ergence term in the loss function. 3) Entr opy Optimizer: As shown in Fig. 2, following the design philosophy of the SF A check encoder, we adapt the basic system to the retransmission scenario by reducing the homogeneity between the encoded features used in the initial transmission and the retransmission. T o this end, we design an Entropy Optimizer , whose basic structure is illustrated in Fig. 4. In addition, to enable the SF A check encoder , CA-JSCC decoder and LPIPS estimator to fully exploit ef fective informa- tion for encoding, decoding, and quality estimation, we retrain Con v1d+ RELU Con v1d+ RELU Con v1d FC FC T anh FC S igmoid 𝝈 se c 𝝁 𝒔𝒆𝒄 𝒙 𝒙 𝟐 FC+R ELU FC R epa ra m eteri za ti o n 𝒙 𝒔𝒆 𝒄 Fig. 4: The architecture of the entropy optimizer . FC FC FC+L ay er Norm ReLU Con cat enate FC+L ay er Norm ReLU FC+R eLU FC+R eLU FC FC 𝐒𝐍𝐑 FC FC 𝐑𝐚 𝐭𝐢𝐨 𝐋𝐏 𝐈 𝐏 𝐒 𝐭𝐡 𝐋𝐏 𝐈 𝐏 𝐒 𝐩𝐫 𝐞 𝐝 𝐋𝐏 𝐈 𝐏 𝐒 𝐝𝐢𝐟 𝐟 𝒙 𝐜𝐨 𝐦 Retr ans mission Acti o n State V alue Estimatio n Fe atur e Extrac tor Actor Netw o rk Critic Netw o rk Fig. 5: The architecture of the Adaptiv e Retransmission Agent. the corresponding modules. Since the retransmission modules operate independently of the first-round transmission, the basic system is frozen, and a loss function similar to (21) is used to jointly train these modules. B. Adaptive Retransmission Agent As discussed in Section III-B, we adopt a PPO-based rein- forcement learning agent to jointly optimize the actor and critic networks, which is sho wn in Fig. 5. The goal of PPO agent is to learn a policy π θ po , parameterized by θ po , and a v alue function V π θ po ϕ po , parameterized by ϕ po , that approximates the expected cumulativ e rew ard. For action selection, the adapti ve retransmission agent collects channel conditions and sample- lev el features for representation extraction. The actor network outputs the retransmission decision, while the critic network estimates the corresponding state value, jointly guiding policy learning and con vergence. 1) State and Action F ormulation: T o comprehensi vely char - acterize both the channel conditions and the feature distribu- tion of the current sample, the for i-th image p i we define the input state s i as s i = { SNR , R , LPIPS th , LPIPS est , LPIPS diff , ˜ x com } , (22) where LPIPS diff denotes the deviation between the estimated LPIPS LPIPS est and the target threshold LPIPS th , which is calculated as LPIPS diff = LPIPS est − LPIPS th . (23) Giv en the differences in scale and dimensionality among these state variables, we group them according to their physical meanings and processing each group through independent linear layers for latent-space projection and dimensional align- ment. The resulting latent representations are concatenated and then further processed to extract high-lev el features. W ithin the actor network, these features are used to generate the retransmission decision. For the current sample p i , the action space A i is defined as: A i = { 0 , 1 } , (24) where the two actions correspond to accepting the current transmission result or requesting a retransmission, respectively . Based on the actor’ s output, the receiver sends a NAK signal to the transmitter to trigger retransmission encoding and transmission. 2) Rewar d Design: For reward function design, when mul- tiple optimization objectiv es, e.g. reconstruction quality and transmission overhead, are highly coupled and characterized by heterogeneous scales, achieving effecti ve joint optimization is challenging. Most existing studies adopt weighted reward functions to balance the impact of various optimization ob- jectiv es on policy conv ergence. Ho wever , improper weight selection in such weighted-sum designs can severely hinder policy learning and conv ergence, making it difficult to obtain an effecti ve and robust policy . T o address this issue, we adopt a sparse reward design. By constructing rew ard signals based on the retransmission results and the retransmission decisions, the proposed approach en- ables policy learning to directly target transmission reliability while enhancing policy robustness. Transmitted samples are categorized according to whether the reconstructed quality meets the target threshold and whether retransmission effec- tiv ely improv es quality . Rew ards are then assigned based on the corresponding category , encouraging the agent accurately identify severely corrupted images while minimizing ov erall retransmission ov erhead. For the current image p i , the rew ard associated with action a i is defined as r i = 10 . 0 , LPIPS( p, ˜ p ) > LPIPS th , LPIPS( p, ˜ p 2 ) ≤ LPIPS th , a i = 1 0 . 5 , LPIPS( p, ˜ p ) ≤ LPIPS th , a i = 0 − 0 . 5 , LPIPS( p, ˜ p ) > LPIPS th , LPIPS( p, ˜ p 2 ) > LPIPS th , a i = 1 − 5 . 0 , LPIPS( p, ˜ p ) > LPIPS th , a i = 0 − 1 . 0 , LPIPS( p, ˜ p ) ≤ LPIPS th , a i = 1 . (25) The re ward is determined jointly by the reconstruction quality before retransmission, the reconstruction quality after retrans- mission, and the agent’ s action. Specifically , higher rewards are assigned when retransmission successfully improv es sam- ples that violate the LPIPS threshold, whereas penalties are imposed for redundant or ineffecti ve retransmission, as well as for failing to request retransmission for severely corrupted images. 3) Critic Network Optimization: The critic network is employed to approximate the state-value function under the current policy π θ po , which is defined as V π θ po ϕ po ( s t ) = E π θ po " ∞ X k =0 γ k r t + k s t # , (26) where ϕ po denotes the parameters of the critic network. T o train the critic, the temporal-difference (TD) error is first defined as δ t = r t + γ (1 − d t ) V ϕ po ( s t +1 ) − V ϕ po ( s t ) , (27) where d t ∈ { 0 , 1 } indicates whether the episode terminates at time step t . Based on the Generalized Adv antage Estimation (GAE) framew ork, the advantage function is computed as ˆ A t = ∞ X l =0 ( γ λ ) l δ t + l , (28) where λ ∈ [0 , 1] controls the bias-variance trade-off. Accord- ingly , the regression target for the critic network is giv en by ˆ R t = ˆ A t + V ϕ po ( s t ) , (29) which serves as an estimate of the empirical return. The critic is trained by minimizing the MSE loss between the predicted state value and the regression target L critic ( ϕ po ) = E s t ∼ π θ po V ϕ po ( s t ) − ˆ R t 2 . (30) Optimizing this loss yields a low-v ariance, bias-controlled estimate of the state-value function. V . E X P E R I M E N T R E S U LT S In this section, we e valuate the proposed S3CHARQ frame- work in terms of image reconstruction performance and trans- mission reliability . A. Experiment Settings 1) Datasets: In the simulation experiments, we ev alu- ate both lo w-resolution and high-resolution image datasets to comprehensively validate the generality of the proposed S3CHARQ framew ork under div erse transmission configu- rations. It is worth noting that, due to the discrepancies in LPIPS estimation accuracy between training and testing datasets, we deliberately adopt an additional dataset during reinforcement training. Directly training the PPO agent on the original training set makes it difficult to capture the true deviation distribution, leading to performance degradation during deployment. For low-resolution image e xperiments, we use the CIF AR-10 image dataset as the experimental data for training and testing the encoders and decoders, while the CIF AR-100 test set is employed to train the PPO algorithm. Both CIF AR-10 and CIF AR-100 consist of 32 × 32 RGB images, with 50,000 training samples and 10,000 test samples. For the high-resolution image experiments, to accelerate training, we follow prior work in the selection of training dataset [12]. Specifically , the ImageNet validation set is used T ABLE I: Neural Network Architecture of S3CHARQ Network Layers for Low Resolution Layers for High Resolution Backbone SwinJSCC [4] 32 × 32 SwinJSCC 256 × 256 SF A-Check Encoder FC, 256 → 48 5 × 5 CNN, 256, stride=1 × 4 5 × 5 CNN, 64, stride=1 AF block [12] × 2 FC × 3 , 1024 → 256 → 64 FC, 320 → 48 5 × 5 CNN, 256, stride=1 × 4 5 × 5 CNN, 256, stride=1 AF block × 2 FC × 3 , 3072 → 1024 → 256 LPIPS Estimator FC, 256 → 48 FC-ResBlock [12], 3456 → 3072 FC-ResBlock, 3072 → 3072 FC, 3072 → 1 Sigmoid FC, 320 → 48 FC-ResBlock, 12672 → 3072 FC-ResBlock, 3072 → 3072 FC, 3072 → 1 Sigmoid T emporal Entropy Model FC × 2 , 512 Con v1D, 128 × 3 FC × 2 , 320 Con v1D, 256 → 128 → 256 CA-JSCC Decoder FC, 257 → 256 SwinJSCC Decoder FC, 321 → 320 SwinJSCC Decoder to train the encoders and decoders, as it contains 50,000 high- resolution images with diverse content. The DIV2K dataset, consisting of 800 training images and 100 test images, is em- ployed to training and testing the PPO algorithm, respecti vely . 2) Benchmarks: T o comprehensively demonstrate the su- periority of the proposed S3CHARQ algorithm, we introduce the follo wing benchmark methods for comparati ve e valuation. • SwinJSCC [4]: The baseline framew ork of S3CHARQ, which represents an open-source JSCC approach for wireless image transmission without retransmission. • CCHARQ [11]: A classic HARQ-based semantic retrans- mission system, which uses a single JSCC encoder for both the initial transmission and retransmission when required, and an SSIM estimator for quality prediction. T o reduce transmission overhead, a DDQN network is em- ployed to guide the progressiv e transmission of encoded features under a predefined SSIM threshold. • SCHARQ [29]: Another HARQ-based system that adopts multiple encoder-decoder pairs to independently optimize the initial transmission and retransmission. For reliability validation, SCHARQ follows con ventional CRC-based checking procedure used in bit-le vel communications. When retransmission is required, the retransmission en- coder generates features with the same code length as the initial transmission. At the receiver , SCHARQ performs joint decoding using all receiv ed features. • S3CHARQ − : A variant of the S3CHARQ framew ork, where the PPO-based retransmission decision module is replaced by the same rule-based strategy used in CCHARQ. 3) Implementation Details: T o ensure a fair comparison under an identical estimation metric. For CCHARQ and SCHARQ, we replace the original SSIM estimator and CRC- based checking procedure with the same LPIPS estimator in S3CHARQ. For threshold-based retransmission schemes (CCHARQ, SCHARQ, and S3CHARQ − ), to mitigate vari- ations in retransmission ratios caused by distribution mis- matches between ground-truth and estimated LPIPS, we adopt a scaled LPIPS threshold to better align the retransmission ratios. Moreover , to match sample-level transmission overhead across all benchmarks, we use the same compression ratio for the initial transmission and the same code length for the retransmission stage. Performance is ev aluated using average PSNR and 97th 1 -percentile PSNR; for perceptual quality , we report average LPIPS and 97th-percentile LPIPS, along with outage probability 2 to access transmission reliability . During training, we uniformly sample the SNR from { 0 , 1 , 4 , 7 , 10 , 13 } , while the compression ratio is also uni- formly sampled from [0.1, 1.0] for low resolution datasets and from [1/48, 1/6] for high resolution datasets. The check codew ord length is set to 64 for low-resolution and 256 for high-resolution datasets, which is substantially smaller than the ov erheads of the transmitted JSCC codeword. All benchmark models are trained 1600 epochs and 200 epochs for low- resolution and high-resolution datasets respectively , using the Adam optimizer whose learning rate is set to 0.0001. For γ in (21), we set it 0.0001 to balance multiple optimization objectiv es. Specifically , for neural network hyperparameters, we strictly follow the configurations in [4] and [11] for each benchmark. The parameters of the S3CHARQ modules are summarized in T ABLE I. For training the adapti ve retransmis- sion agent, the linear layer used for input-state preprocessing is set to a dimension of 64, and the agent is trained for 200 epochs using the Adam optimizer with a learning rate of 0.0001 until policy con vergence. All experiments are con- ducted on an NVIDIA R TX4090 in Ubuntu 20.04 L TS with Intel(R) Xeon(R) Platinum 8336C CPU @ 2.30GHz under 2.1.2 PyT orch en vironment. B. V alidation Results 1) Experiment Results on Low-Resolution Datasets: For the low-resolution datasets, we conduct experiments under three representativ e transmission settings: Setting 1: R = R 2 = 1 / 8 under an A WGN channel; Setting 2: R = R 2 = 1 / 6 under an A WGN channel, and Setting 3: R = 1 / 8 , R 2 = 1 / 8 under a Rayleigh fading channel. Regarding the retransmission threshold, we select the 90th percentile of the ground-truth LPIPS values on the test set to align the retransmission ratio across methods. Fig. 6-Fig. 8 illustrate the performance of different methods under each transmission setting. As shown in Fig. 6(a)-(c), S3CHARQ achie ves av erage PSNR gains of 4 . 12 dB and 6 . 44 dB ov er SCHARQ and CCHARQ, respectively . Com- pared with S3CHARQ − , the proposed S3CHARQ attains an additional 0 . 46 dB PSNR gain at SNR = 1dB , demonstrating its improved transmission efficienc y . In terms of transmis- sion reliability , S3CHARQ consistently achie ves lo wer outage probability across all SNR conditions. As shown in Fig. 6(f), S3CHARQ achie ves a 97th-percentile PSNR of 27 . 20 dB at SNR = 1dB , outperforming the variant withput RL agent by 0 . 58 dB. Under Setting 1, S3CHARQ achiev es a minimum outage probability of 3 . 38 %, which is significantly lower than that of SCHARQ ( 95 . 30 %) and CCHARQ ( 60 . 67 %). 1 97% of the total samples achiev e better performance than it. 2 The proportion of samples whose decoded images still fail to meet the predefined LPIPS threshold. (a) A v erag e PSN R (b ) 9 7 th Percen t i l e PS N R (c) O u t ag e Pro b ab i l i t y (d ) A v erag e L PIPS (e) 9 7 th Percen t i l e L PIPS (f) PSN R D i s t ri b u t i o n at SN R=1 d B Fig. 6: Performance Comparison on CIF AR10 with R = R 2 = 1 / 8 under an A WGN channel. (a) A v erag e PSN R (b ) 9 7 th Percen t i l e PS N R (c) O u t ag e Pro b ab i l i t y Fig. 7: Performance Comparison on CIF AR10, with R = R 2 = 1 / 6 under an A WGN channel. (a) A v erag e PSN R (b ) 9 7 th Percen t i l e PS N R (c) O u t ag e Pro b ab i l i t y Fig. 8: Performance Comparison on CIF AR10, with R = R 2 = 1 / 8 under a Rayleigh channel. Compared with S3CHARQ − , S3CHARQ further reduces the outage probability by 0 . 85 % ov erall and by 3 . 53% at SNR = 1dB . On 97th-percentile PSNR performance, as sho wn in Fig. 6(f), S3CHARQ achiev es 27 . 20 dB at SNR = 1dB , outperforming v ariant without RL Agent by 0 . 58 dB. For perceptual quality , as shown in Fig. 6(d)-(e), S3CHARQ achiev es the best LPIPS performance aming all methods, with an av erage LPIPS of 0 . 041 and a 97th-percentile LPIPS of 0 . 064 . These results clearly demonstrate the effecti veness of the proposed S3CHARQ framework in improving both transmission efficienc y and reliability . Similarly , as sho wn in Fig. 7, S3CHARQ continues to demonstrate superior performance at lower compression ra- tios in terms of average PSNR, 97th-percentile PSNR, and outage probability . For instance, S3CHARQ achie ves an out- age probability of only 2 . 50% , outperforming S3CHARQ − ( 4 . 02% ), CCHARQ ( 53 . 23% ), and SCHARQ ( 91 . 17% ). As the transmission ov erhead constraint is relaxed, the advantage of S3CHARQ in transmission reliability becomes ev en more pro- nounced. These results further demonstrate the ef fectiveness of jointly optimizing the encoding and check coding procedures in improving both transmission ef ficiency and reliability . For experiments under Rayleigh fading channels, as shown in Fig. 8, at SNR = 13dB condition, CCHARQ achie ves gains of 0 . 15 dB in A verage PSNR and 0 . 26 dB in 97th- percentile PSNR, compared with S3CHARQ. Unlike the A WGN case, even at relatively high av erage SNR, instanta- neous fading-induced fluctuations introduce severe noise inter- ference to the transmitted features. Compared with CCHARQ, which encodes features once and transmits them progressiv ely , S3CHARQ re-encodes features during retransmission based on feedback, which may further propagate transmission errors under fading conditions, leading to slightly degraded av erage and tail PSNR performance. Overall, under the same compres- sion ratio and retransmission code length, S3CHARQ achieves superior PSNR and LPIPS performance in most settings compared with other benchmarks, indicating higher encoding (a) A v erag e PSN R (b ) 9 7 th Percen t i l e PS N R (c) Ou t ag e Pro b ab i l i t y (d ) A v erag e L PIPS (e) 9 7 th Percen t i l e L PIPS (f) PSN R D i s t ri b u t i o n at SN R=1 d B Fig. 9: Performance Comparison on DIV2K, where R = R 2 = 1 / 48 under an A WGN channel. (a) A v erag e PSN R (b ) 9 7 th Percen t i l e PS N R (c) O u t ag e Pro b ab i l i t y Fig. 10: Performance Comparison on DIV2K, where R = R 2 = 1 / 36 under an A WGN channel. efficienc y . In terms of transmission reliability , S3CHARQ con- sistently maintains lower outage probability than competing schemes, effecti vely ensuring a lower bound on reconstruction quality . Moreover , the gains in efficienc y and reliability remain consistent across both low- and high-SNR regimes, confirming that S3CHARQ enables more refined and reliable transmission encoding. 2) Experiment Results on High-Resolution Datasets: Simi- lar to the low-resolution experiments, for high-resolution ev al- uation, we consider two representativ e compression settings, namely Setting 1: R = R 2 = 1 / 48 under an A WGN channel, and Setting 2: R = R 2 = 1 / 36 under an A WGN channel. Specifically , during training and validation, all original images are resized to a standard resolution of 256 × 256. This resizing ensures a consistent input size for the JSCC encoder across all benchmarks and improves training efficienc y . As shown in Figs. 9 and 10, S3CHARQ outperforms all benchmark methods under most SNR conditions, demonstrat- ing stable and superior performance across multiple metrics, including av erage PSNR, 97th-percentile PSNR, outage proba- bility , as well as av erage and 97th-percentile LPIPS. It is worth noting that under Setting 1, although CCHARQ and SCHARQ achiev e 0 . 34 dB higher av erage PSNR than S3CHARQ at SNR = 13dB , S3CHARQ still provides significant gains in tail performance, deli vering impro vements of 1 . 12 dB and 1 . 59 dB in 97th-percentile PSNR over CCHARQ and S3CHARQ, respectiv ely . For perceptual quality , as shown in Fig. 9(d)- (e), S3CHARQ achieves an av erage LPIPS of 0 . 255 and a 97th-percentile LPIPS of 0 . 356 , significantly outperforming CCHARQ ( 0 . 310 on av erage, 0 . 413 at the 97th-percentile) and SCHARQ ( 0 . 312 on av erage, 0 . 415 at the 97th-percentile). For experiments conducted under Setting 2, as illustrated in Fig. 10, S3CHARQ maintains stable performance advantages with less strigent compression and retransmission overhead constraints. Compared with other benchmarks, S3CHARQ achiev es an outage probability of 1 . 40% , demonstrating its strong capability in ensuring transmission reliability . These results highlight the effecti veness of optimizing information redundancy across different coding procedures to improve reliability . Moreov er, as communication resources increase, the performance gains enabled for this optimization become even more pronounced. 3) V isualization Results: As shown in Fig. 11, to fur- ther illustrate the effecti veness of the proposed S3CHARQ, we present a set of qualitative visualization results on the DIV2K dataset. The visual comparisons are conducted under an A WGN channel with SNR = 4dB , a compression ratio of GT GT Sourc e P SNR ↑ / L P I P S ↓ / I s R etr an S3C H A R Q ( ours) 19.73 / 0.29 / Y Sw i nJ SC C 16.56 / 0.46 / N S3C H A R Q — 18.45 / 0.37 / N CCHARQ 17.69 / 0.41 / N SC H A R Q 17.60 / 0.40 / N Sourc e P SNR ↑ / L P I P S ↓ / I s R etr an S3C H A R Q ( ours) 23.13 / 0.28 / Y S3C H A R Q — 21.9 1 / 0.35 / N Sw i nJ SC C 20.15 / 0.42 / N CCHARQ 20.66 / 0.40 / N SC H A R Q 20.61 / 0.40 / N Fig. 11: V isualization results on DIV2K. R = R 2 = 1 / 48 , and a scaled retransmission threshold set to 0 . 339 . As observed from the visual results, after the first trans- mission round, the reconstruction quality remains well below the preset LPIPS threshold. All rule-based benchmarks fail to detect this perceptual degradation due to estimation errors between the predicted and true LPIPS values. For exam- ple, for the first image, CCHARQ decides not to retransmit based on an estimated LPIPS of 0 . 331 , while SCHARQ and S3CHARQ − yield estimates of 0.329 and 0.289, respectively . In contrast, instead of relying solely on the estimated LPIPS, S3CHARQ makes retransmission decisions by jointly consid- ering sample-level features and transmission conditions, in- cluding SNR, compression ratio, and LPIPS estimation. Unlik e rule-based mechanisms, it can sensitiv ely identify sev erely corrupted samples under adverse channel conditions and ac- curately request retransmission, thereby effecti vely improving reconstruction quality . This observation not only confirms the benefit of retransmission for enhancing perceptual quality but also highlights the critical role of RL-based strategy in improving the reliability and accuracy of retransmission decisions. C. Ablation Study on Adaptive Retransmission Agent For retransmission decision-making, the core objective is to balance additional communication overhead against reliabil- ity guarantees. In rule-based methods, achieving an optimal balance through manually selected retransmission thresholds or ratios is inherently difficult. T o address this, we conduct an ablation study in which a brute-force search is used to determine a rule-based retransmission threshold that matches Fig. 12: Ablation Study on CIF AR10 under an A WGN channel. the retransmission ratio learned by the RL agent under a balanced overhead-reliability tradeoff. This variant is referred to as S3CHARQ − OFF . As shown in Fig. 12, the retransmission ratio of the of- fline rule-based scheme is largely determined by the LPIPS distribution of samples after the initial transmission, resulting in relatively uniform retransmission behavior across different SNRs. This lack of adaptivity leads to inefficient retransmis- sion resource allocation. In contrast, S3CHARQ dynamically adjusts retransmission decisions according to channel condi- tions. For instance, under low-SNR conditions, S3CHARQ tends to trigger retransmission for samples with estimated LPIPS values close to the threshold, mitigating misjudgments caused by estimation errors. When the SNR is higher , it adopts more conservati ve retransmission decisions to reduce communication overhead. At low SNR (1 dB), S3CHARQ achiev es a 0 . 28 dB gain in 97th-percentile PSNR over the offline scheme, while under high-SNR conditions, it maintains comparable reconstruction quality with consistently fewer re- transmissions. These results highlight that by lev eraging rein- forcement learning, S3CHARQ enables sample-lev el, context- aware retransmission decisions. Such adaptive beha vior effec- tiv ely compensates for estimation inaccuracies and achiev es a more fav orable tradeoff between transmission efficiency and reliability than rule-based threshold schemes. V I . C O N C L U S I O N In this paper , we proposed S3CHARQ, a semantic commu- nication system with an adaptiv e retransmission mechanism for image transmission. T o jointly optimize semantic encoding and check coding, we dev eloped JS3C, which enables collabo- rativ e decoding by lev eraging both encoded features and check codew ords. T o address the con vergence challenges arising from multiple optimization objectives, we designed a training strategy based on information bottleneck theory , allowing joint optimization of verification and decoding processes and effecti vely improving reconstruction quality . Furthermore, we introduced a PPO-based adaptive retransmission agent that makes sample-lev el retransmission decisions based on cur- rent channel conditions and estimated reconstruction quality , thereby mitigating the impact of LPIPS estimation errors on system reliability . Extensi ve experiments demonstrated that the proposed S3CHARQ significantly outperforms the existing schemes in terms of PSNR, LPIPS, and outage probability . Especially under a 1/8 retransmission ratio and an A WGN channel with SNR = 1dB , S3CHARQ achieves an outage probability of only 3.38%, substantially outperforming base- line HARQ schemes. These results clearly demonstrate the effecti veness of S3CHARQ in enhancing both transmission efficienc y and reliability . R E F E R E N C E S [1] D. G ¨ und ¨ uz, Z. Qin, I. E. Aguerri, H. S. Dhillon, Z. Y ang, A. Y ener, K. K. W ong, and C.-B. Chae, “Beyond transmitting bits: Context, seman- tics, and task-oriented communications, ” IEEE J. Sel. Ar eas Commun. , vol. 41, pp. 5–41, Jan 2023. [2] H. Xie, Z. Qin, G. Y . Li, and B.-H. Juang, “Deep learning enabled semantic communication systems, ” IEEE T rans. Signal Pr ocess. , vol. 69, pp. 2663–2675, Apr 2021. [3] E. Bourtsoulatze, D. B. Kurka, and D. G ¨ und ¨ uz, “Deep joint source- channel coding for wireless image transmission, ” in ICASSP 2019 - 2019 IEEE International Conference on Acoustics, Speech and Signal Pr ocessing (ICASSP) , pp. 4774–4778, 2019. [4] K. Y ang, S. W ang, J. Dai, X. Qin, K. Niu, and P . Zhang, “Swinjscc: T aming swin transformer for deep joint source-channel coding, ” IEEE T rans. Cogn. Commun. Netw . , vol. 11, pp. 90–104, Feb 2025. [5] M. Gong, S. W ang, and S. Bi, “ Alternative multi-phase training with mask attack for digital semantic communications, ” in 2024 IEEE/CIC In- ternational Conference on Communications in China (ICCC) , pp. 1621– 1626, 2024. [6] P . Luo, H. Zhao, K. Cao, Y . Liu, Y . Zhang, and J. W ei, “Emotion-aided semantic communication system for reliable semantic recovery under low SNR, ” IEEE Commun. Lett. , vol. 28, pp. 503–507, Mar 2024. [7] Y . Zhang, Y . Zhang, H. Zhao, P . Luo, K. T an, J. Deng, and J. W ei, “T opic enhanced semantic communication system for reliable semantic recovery , ” IEEE T rans. Cogn. Commun. Netw . , vol. 12, pp. 2814–2828, Jun 2026. [8] Q. Zeng, Z. W ang, Y . Zhou, H. W u, L. Y ang, and K. Huang, “Knowledge-based ultra-low-latency semantic communications for robotic edge intelligence, ” IEEE T rans. Commun. , vol. 73, pp. 4925– 4940, Jul 2025. [9] M. Zheng, H. Y ang, S. Liu, K. Lin, L. Xiao, and Z. Han, “Reliable semantic communication with qoe-driv en resource scheduling for U A V- assisted MEC, ” IEEE Tr ans. V eh. T echnol. , vol. 74, pp. 11484–11489, Jul 2025. [10] M. Chen, Z. Sun, X. He, L. W ang, and A. Al-Dulaimi, “Llm-based semantic communication: The way from task-originated to general, ” IEEE W irel. Commun. Lett. , vol. 14, pp. 3029–3033, Oct 2025. [11] H. Liu, M.-M. Zhao, M. Lei, L. Li, Y . Cai, and M.-J. Zhao, “ Adaptiv e HARQ design for semantic image transmission, ” in 2024 IEEE 100th V ehicular T echnology Confer ence (VTC2024-F all) , pp. 1–6, 2024. [12] W . Zhang, H. Zhang, H. Ma, H. Shao, N. W ang, and V . C. M. Leung, “Predictiv e and adaptiv e deep coding for wireless image transmission in semantic communication, ” IEEE Tr ans. Wir el. Commun. , vol. 22, pp. 5486–5501, Aug 2023. [13] G. Liang, X. Zhang, J. Zhang, Y . Sun, Q. Cui, and X. T ao, “Semantic codebook-based HARQ for wireless image transmission, ” IEEE Tr ans. Commun. , vol. 73, pp. 14332–14346, Dec 2025. [14] J. Dai, P . Zhang, K. Niu, S. W ang, Z. Si, and X. Qin, “Communication beyond transmitting bits: Semantics-guided source and channel coding, ” IEEE W irel. Commun. , vol. 30, pp. 170–177, Aug 2023. [15] B. T ang, Q. Li, L. Huang, and Y . Y in, “T ext semantic communication systems with sentence-lev el semantic fidelity , ” in 2023 IEEE Wir eless Communications and Networking Confer ence (WCNC) , pp. 1–6, 2023. [16] X. Peng, Z. Qin, X. T ao, J. Lu, and L. Hanzo, “ A robust semantic text communication system, ” IEEE T rans. W irel. Commun. , vol. 23, pp. 11372–11385, Sep 2024. [17] E. Erdemir , T .-Y . T ung, P . L. Dragotti, and D. G ¨ und ¨ uz, “Generative joint source-channel coding for semantic image transmission, ” IEEE J. Sel. Ar eas Commun. , vol. 41, pp. 2645–2657, Aug 2023. [18] M. Gong, S. W ang, S. Bi, Y . Wu, and L. Qian, “Digital semantic communications: An alternating multi-phase training strategy with mask attack, ” IEEE T rans. W irel. Commun. , v ol. 25, pp. 4452–4466, 2026. [19] P . Jiang, C.-K. W en, S. Jin, and G. Y . Li, “W ireless semantic communi- cations for video conferencing, ” IEEE J. Sel. Ar eas Commun. , vol. 41, pp. 230–244, Jan 2023. [20] Z. Zhang, Q. Y ang, S. He, and J. Chen, “Deep learning enabled semantic communication systems for video transmission, ” in 2023 IEEE 98th V ehicular T echnology Confer ence (VTC2023-F all) , pp. 1–5, 2023. [21] J. Shao, Y . Mao, and J. Zhang, “Learning task-oriented communication for edge inference: An information bottleneck approach, ” IEEE J . Sel. Ar eas Commun. , vol. 40, pp. 197–211, Jan 2022. [22] S. Choi, J. Oh, T . S. Do, S. Hong, J. Kim, and S. Cho, “ A survey on noise detection and correction in semantic communication, ” in 2025 Sixteenth International Conference on Ubiquitous and Future Networks (ICUFN) , pp. 23–25, 2025. [23] N. Kalimuthu, “Goal-oriented semantic communication: A foundational enabler for ultra-reliable lo w-latency communication in 6G-enabled in- ternet of things, ” International Journal of Emerging T rends in Computer Science and Information T ec hnology , pp. 140–148, 2025. [24] Q. Hu, G. Zhang, Z. Qin, Y . Cai, G. Y u, and G. Y . Li, “Robust semantic communications against semantic noise, ” in 2022 IEEE 96th V ehicular T echnology Confer ence (VTC2022-F all) , pp. 1–6, 2022. [25] H. Niu, L. W ang, Z. Lu, K. Du, and X. W en, “Deep learning enabled video semantic transmission against multi-dimensional noise, ” in 2023 IEEE Globecom W orkshops (GC Wkshps) , pp. 1267–1272, 2023. [26] Y . Li, X. W ang, Z. Shi, and Y . Fu, “Semantic HARQ for intelligent transportation systems: Joint source-channel coding-powered reliable retransmissions, ” arXiv pr eprint arXiv:2504.14615 , 2025. [27] X. W ang, X. Xie, M. Li, and Z. Liu, “Diffusion-aided bandwidth- efficient semantic communication with adapti ve requests, ” arXiv pr eprint arXiv:2510.26442 , 2025. [28] X. Li, S. Bi, S. W ang, X. Li, and Y .-J. A. Zhang, “Digital semantic device-edge co-inference with task-oriented arq, ” IEEE T ransactions on V ehicular T echnology , vol. 73, no. 9, pp. 13986–13990, 2024. [29] P . Jiang, C.-K. W en, S. Jin, and G. Y . Li, “Deep source-channel coding for sentence semantic transmission with HARQ, ” IEEE T rans. Commun. , vol. 70, pp. 5225–5240, Aug 2022. [30] L. W ang, W . Wu, F . Zhou, Z. Qin, and Q. W u, “Cross-layer security for semantic communications: Metrics and optimization, ” IEEE T rans. V eh. T echnol. , vol. 74, pp. 13179–13183, Aug 2025. [31] K. Sun, X. Li, X.-H. Lin, and S. Bi, “LSTM-enabled scalable semantic retransmission for de vice-edge co-inference systems, ” in 2025 IEEE/CIC International Conference on Communications in China (ICCC) , pp. 1–6, 2025. [32] J. Huang, J. Jiao, Y . W ang, R. Lu, and Q. Zhang, “Semantic-empowered utility loss of information transmission policy in satellite-integrated internet, ” in IEEE INFOCOM 2024-IEEE Conference on Computer Communications W orkshops (INFOCOM WKSHPS) , pp. 1–6, 2024. [33] K. Lu, R. Li, X. Chen, Z. Zhao, and H. Zhang, “Reinforcement learning-powered semantic communication via semantic similarity , ” arXiv preprint arXiv:2108.12121 , 2021. [34] G. Liu, Y . Liu, R. Zhang, H. Du, D. Niyato, Z. Xiong, S. Sun, and A. Jamalipour , “W ireless agentic ai with retrie val-augmented multimodal semantic perception, ” arXiv preprint , 2025. [35] M. Gong, S. W ang, F . Y e, and S. Bi, “Compression before fusion: Broadcast semantic communication system for heterogeneous tasks, ” IEEE T rans. W ireless Commun. , vol. 23, pp. 19428–19443, Dec 2024. [36] A. A. Alemi, I. Fischer , J. V . Dillon, and K. Murphy , “Deep variational information bottleneck, ” arXiv preprint , 2019. [37] J. Shao, Y . Mao, and J. Zhang, “T ask-oriented communication for mul- tidevice cooperative edge inference, ” IEEE Tr ans. W ireless Commun. , vol. 22, pp. 73–87, Jan 2023. [38] Y . Pei and X. Hou, “Learning representations in reinforcement learning : An information bottleneck approach, ” arXiv preprint , 2019.

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment