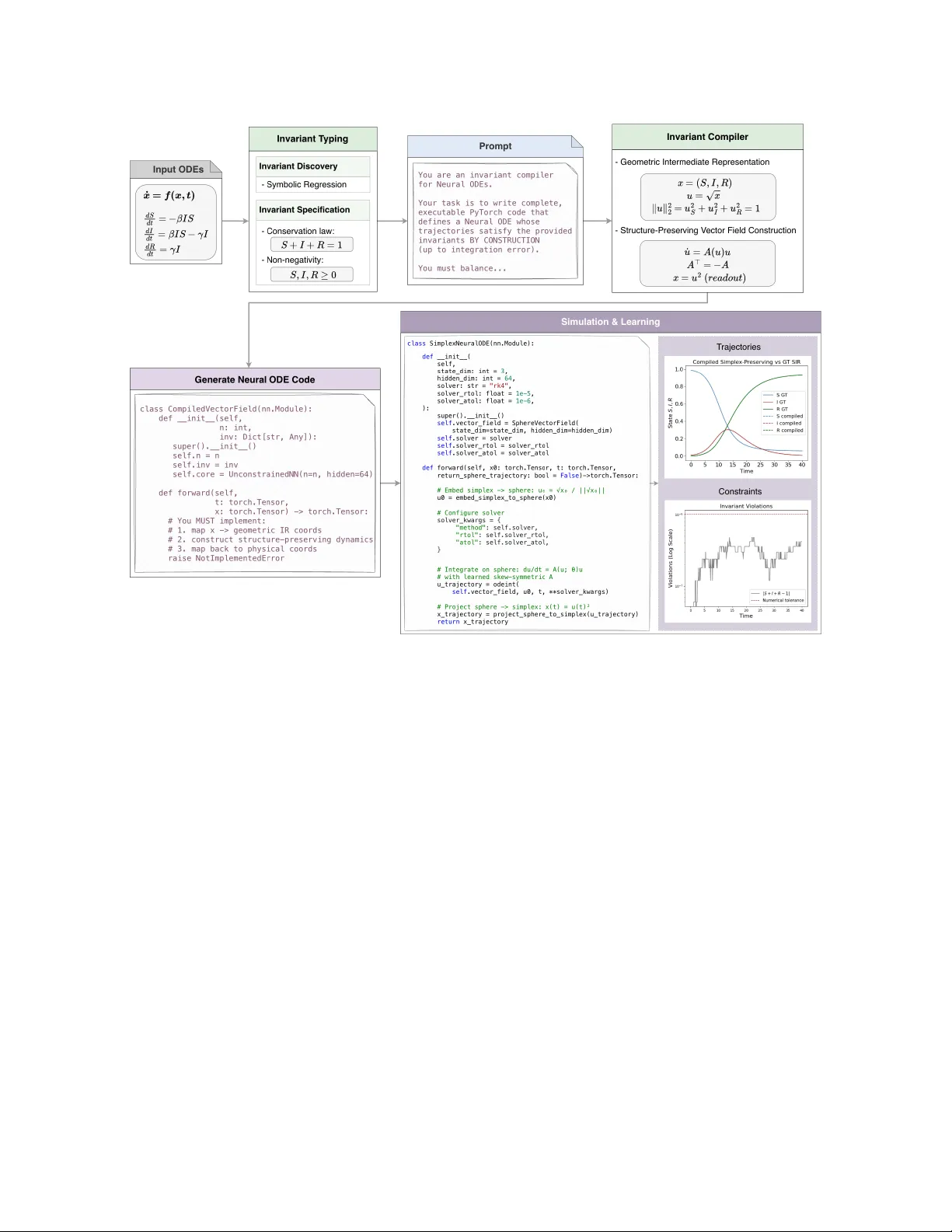

An Invariant Compiler for Neural ODEs in AI-Accelerated Scientific Simulation

Neural ODEs are increasingly used as continuous-time models for scientific and sensor data, but unconstrained neural ODEs can drift and violate domain invariants (e.g., conservation laws), yielding physically implausible solutions. In turn, this can …
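To make the drift claim concrete, here is a minimal sketch. It is not the authors' invariant compiler, whose details are not given here. Several assumptions are made for illustration. A hand-written harmonic-oscillator vector field stands in for a trained neural ODE. Explicit Euler is the integrator. The fix shown is a generic post-step projection onto the energy level set, a standard technique swapped in to illustrate the idea. All function names (`f`, `energy`, `euler_step`, `project_to_energy`, `integrate`) are hypothetical.

```python
import math


def f(state):
    """Hypothetical stand-in for a learned vector field: a frictionless
    harmonic oscillator (dx/dt = v, dv/dt = -x). A trained neural ODE
    would replace this function."""
    x, v = state
    return (v, -x)


def energy(state):
    """Invariant of the true dynamics: E = (x^2 + v^2) / 2."""
    x, v = state
    return 0.5 * (x * x + v * v)


def euler_step(state, h):
    """One explicit Euler step; nothing here preserves the invariant."""
    dx, dv = f(state)
    return (state[0] + h * dx, state[1] + h * dv)


def project_to_energy(state, target):
    """Rescale the state radially so that energy(state) == target.
    This simple projection is valid only because E is a homogeneous quadratic."""
    e = energy(state)
    if e == 0.0:
        return state
    s = math.sqrt(target / e)
    return (state[0] * s, state[1] * s)


def integrate(state, h, steps, project=False):
    """Integrate with Euler; optionally project back onto the initial energy level."""
    e0 = energy(state)
    for _ in range(steps):
        state = euler_step(state, h)
        if project:
            state = project_to_energy(state, e0)
    return state


if __name__ == "__main__":
    s0 = (1.0, 0.0)
    free = integrate(s0, h=0.1, steps=100)
    fixed = integrate(s0, h=0.1, steps=100, project=True)
    print(f"initial energy:  {energy(s0):.4f}")
    print(f"unconstrained:   {energy(free):.4f}")
    print(f"with projection: {energy(fixed):.4f}")
```

For this vector field, each Euler step multiplies x² + v² by exactly (1 + h²). With h = 0.1, the unconstrained energy therefore grows by a factor of 1.01¹⁰⁰ ≈ 2.70 over 100 steps, while the projected run stays at its initial value. The radial rescaling works only because E is quadratic. A general invariant would need an iterative projection, for example Newton steps along the invariant's gradient.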

Authors: Fangzhou Yu, Yiqi Su, Ray Lee