Unveiling Hidden Convexity in Deep Learning: A Sparse Signal Processing Perspective

Deep neural networks (DNNs), particularly those using Rectified Linear Unit (ReLU) activation functions, have achieved remarkable success across diverse machine learning tasks, including image recognition, audio processing, and language modeling. Des…

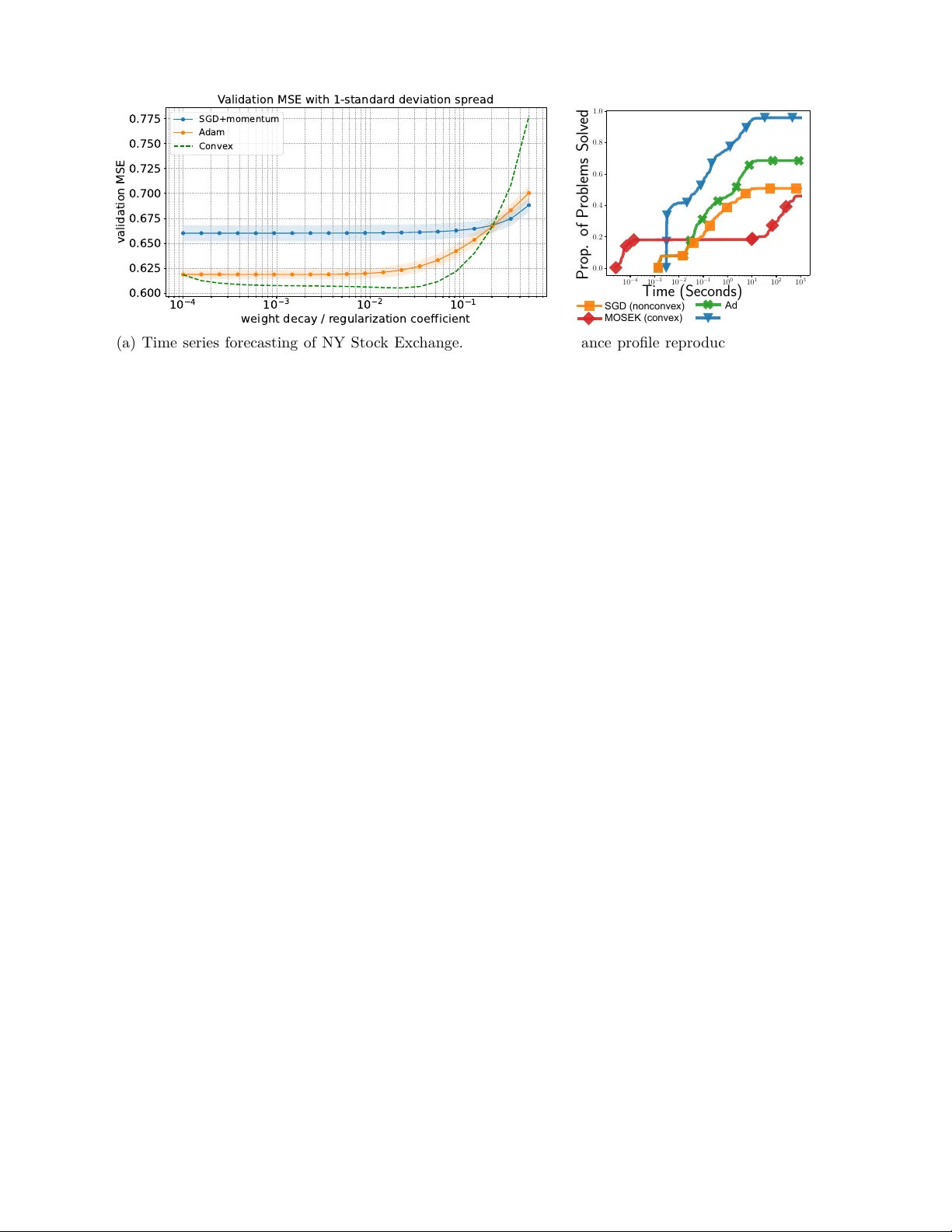

Authors: Emi Zeger, Mert Pilanci