Rethinking Masking Strategies for Masked Prediction-based Audio Self-supervised Learning

Since the introduction of Masked Autoencoders, various improvements to masking techniques have been explored. In this paper, we rethink masking strategies for audio representation learning using masked prediction-based self-supervised learning (SSL) …

Authors: Daisuke Niizumi, Daiki Takeuchi, Masahiro Yasuda

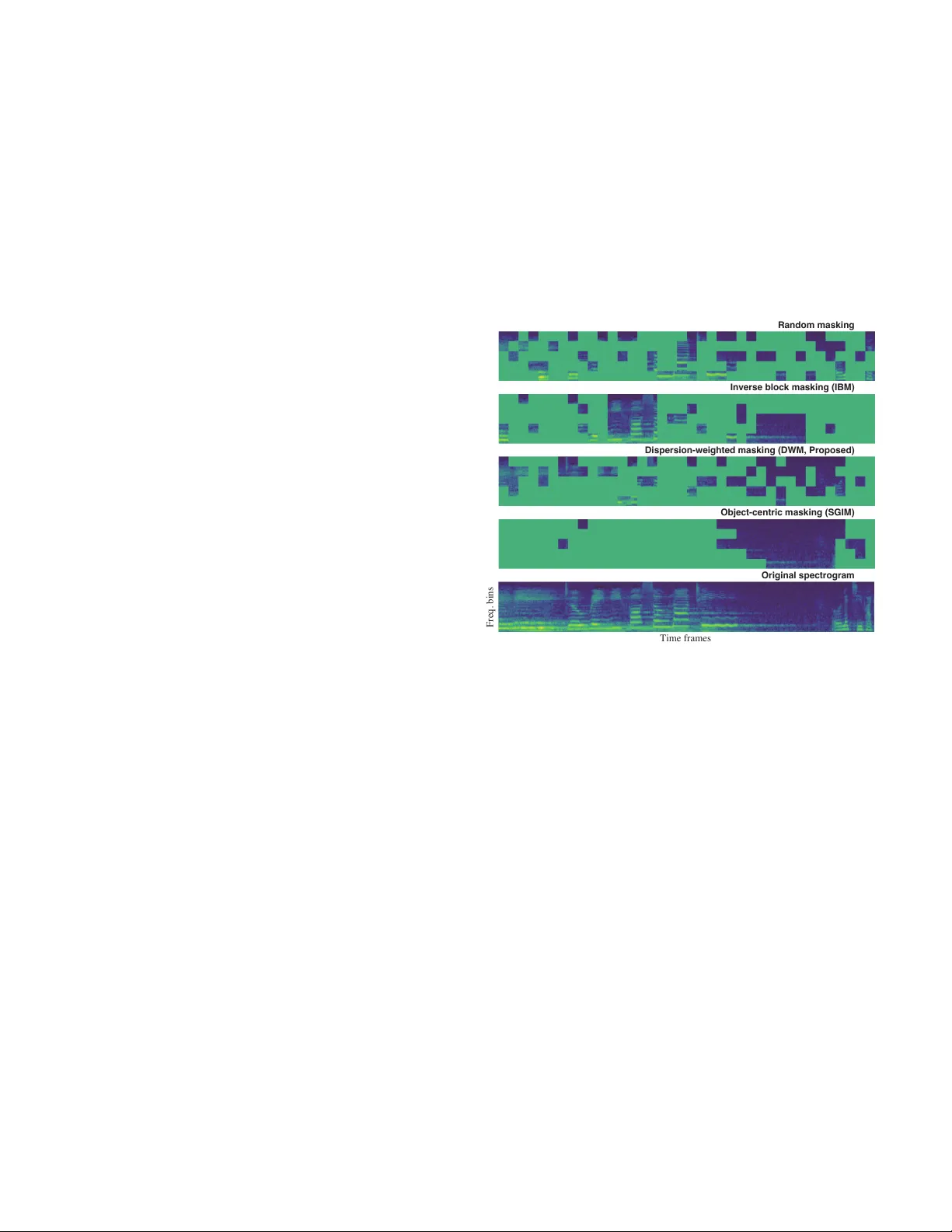

Rethinking Masking Strate gies for Masked Prediction-based Audio Self-supervised Learning Daisuke Niizumi † ‡ ¶ , Daiki T akeuchi ‡ , Masahiro Y asuda ‡ , Binh Thien Nguyen ‡ , Noboru Harada ‡ , and Nobutaka Ono † † T okyo Metr opolitan University , T okyo, Japan ‡ NTT Inc. , Atsugi, Japan ¶ daisukelab .cs@gmail.com Abstract —Since the introduction of Mask ed A utoencoders, var- ious impro vements to masking techniques hav e been explored. In this paper , we rethink masking strategies f or audio representation learning using masked pr ediction-based self-supervised learning (SSL) on general audio spectrograms. While r ecent informed masking techniques ha ve attracted attention, we observe that they incur substantial computational overhead. Motivated by this observation, we pr opose dispersion-weighted masking (D WM), a lightweight masking strategy that leverages the spectral sparsity inherent in the frequency structure of audio content. Our experiments show that inv erse block masking, commonly used in recent SSL frameworks, improves audio event understanding performance while introducing a trade-off in generalization. The proposed D WM alleviates these limitations and computational complexity , leading to consistent performance improvements. This work pro vides practical guidance on masking strategy de- sign for masked prediction-based audio repr esentation learning. Index T erms —masking strategy , masked autoencoders, spec- trogram, audio representation learning I . I N T RO D U C T I O N Masked Autoencoders (MAE) [1] have had a significant impact on representation learning through masked prediction- based self-supervised learning (SSL), initially in the image domain, and have subsequently influenced v arious studies on general-purpose audio representations [2]–[4]. While the original MAE demonstrated the ef fectiv eness of fully random masking, subsequent work in the image domain showed that more structured strategies can lead to improved representa- tions, such as block masking in BEiT [5] and in verse block masking (IBM) in data2vec 2.0 [6]. In addition to masking strategies that rely purely on randomness regardless of data content, several recent studies have explored informed mask- ing approaches [7], [8], which exploit attention patterns or patch-lev el representations deriv ed from pretrained models or from the model itself during training. In the audio domain, many existing methods largely follo w the random masking strategy introduced in MAE, whereas recent SSL approaches hav e reported performance gains by adopting IBM [9], [10]. Ho wev er , in these studies, the contri- butions of masking strategies are often entangled with other training components, and evaluations are typically conducted This work w as partially supported by JST Strategic International Collabo- rativ e Research Program (SICORP), Grant Number JPMJSC2306, Japan. Freq. bins Time frames Original spectrogram Object-centric masking (SGIM) Dispersion-weighted masking (DWM, Proposed) Inverse block masking (IBM) Random masking Fig. 1. Masking examples on an audio spectrogram (hint ratio r h = 0 . 0 ). Object-centric masking (SGIM) almost entirely covers audio e vents (objects), whereas the proposed DWM applies dispersion-weighted random masking based on patch-wise spectral variability , yielding masking patterns that loosely align with object-centric behavior . on a limited set of downstream tasks. As a result, the role of masking strategy design in masked prediction-based audio representation learning remains insuf ficiently understood, es- pecially in terms of generalization performance, leaving room for further inv estigation into alternative masking strategies. In this study , we inv estigate how v arious masking strate gies, ranging from random masking to informed masking, con- tribute to masked prediction-based representation learning for general audio (e.g., environmental sound, speech, and music) using spectrogram inputs. Using three masked prediction- based SSL frame works, we conduct a comparati ve analysis of random masking, IBM, informed masking, and the proposed dispersion-weighted masking (DWM), as illustrated in Fig. 1. D WM is motiv ated by the observation that typical audio ev ents do not occupy all frequency components uniformly , resulting in blank or lo w-energy regions in spectrogram frequency bins. By leveraging this property , DWM assigns higher masking probabilities to regions with lar ger disper- sion, thereby incorporating spectral structure into the masking process. Compared to pre vious informed masking approaches that require non-ne gligible computational o verhead to identify candidate regions, DWM provides a simple and lightweight algorithm, enabling a spectrogram-friendly and efficient form of informed masking. Our experimental results provide insights into ho w dif ferent masking strategies af fect representation learning. While IBM facilitates the learning of representations that are well suited for audio ev ent semantics, it degrades performance on other downstream tasks, resulting in an overall trade-of f. Informed masking approaches, on the other hand, incur non-negligible computational costs due to the need to compute masking candidates using the model during training. In contrast, the proposed DWM improves performance on audio ev ent under- standing without increasing computational complexity , while suppressing performance degradation on other tasks and pre- serving generalization performance. The findings of this study , together with the proposed D WM, are applicable to a broad range of masked prediction- based audio representation learning methods and contribute to masking strategy design in the following ways: • W e clarify the impact of masking strategies on audio spectrogram-based representation learning, highlighting their effects across different downstream tasks. • W e propose D WM, a lightweight masking strategy tai- lored to audio spectrograms, which leverages dispersion patterns with minimal additional computational cost. W e publicly release our code 1 to support reproducibility and to facilitate further adv ances in mask ed prediction-based audio representation learning. I I . P R E L I M I NA RY W e introduce the background and prior work necessary to frame our study on masking strategies for masked prediction- based representation learning in this section. A. Masked prediction-based r epr esentation learning for audio spectr ograms Masked prediction-based representation learning operates by di viding an input into visible and masked patches, denoted as x v and x m , respecti vely . An encoder f ( · ) processes only the visible patches to produce latent representations z v = f ( x v ) . A predictor g ( · ) then infers the masked part of the input as ˆ z m = g (concat( z v , m )) , where m denotes mask tokens concatenated at the masked positions. The form of the prediction target ˆ z m and the role of the predictor g ( · ) depend on the specific method. For example, in MAE [1], ˆ z m corresponds to the reconstructed input signal in the masked regions, and g ( · ) acts as a reconstruction decoder . In Masked Modeling Duo (M2D) [11], also used in our experiments, ˆ z m represents latent representations of the masked regions, and g ( · ) serves as a predictor in the representation space. 1 https://github .com/onolab- tmu/audio ssl masking For audio spectrograms, MAE-AST [2], MSM-MAE [3], Audio-MAE [4], and CED [12] are MAE-based methods. Prior to MAE, SSAST [13] also learns representations us- ing a reconstruction-based objectiv e. In contrast, M2D [11], A TST [14], EA T [9], and AaPE [10] learn by predicting masked patch representations, while BEA Ts [15] predicts tokenized labels. M2D-CLAP [16] further extends M2D by combining masked prediction with contrastive language-audio pre-training (CLAP), enabling the model to directly learn semantic information from natural language descriptions of audio. Among these methods, most adopt a random masking strategy . More recently , EA T and AaPE hav e demonstrated performance improvements by le veraging IBM. B. Masking strate gies Among existing masking strategies, we consider random masking, inv erse block masking (IBM) [6], and self-guided informed masking (SGIM) [8] in our e valuation. a) Random Masking: In MAE, sev eral masking v ariants, such as block and grid masking, were in vestigated, and random masking was found to be the most effecti ve, enabling higher masking ratios while maintaining strong performance. b) In verse Block Masking: IBM starts with a complete mask ov er all patches and iterativ ely restores original content in block-shaped re gions, which may o verlap, until the number of masked embeddings matches a target masking ratio. As a result, visible patches remain in contiguous block regions; howe ver , the selection of these regions is performed randomly and independently of the input content, making IBM a content- agnostic masking strategy . EA T [9] extends IBM to audio spectrograms and provides empirical evidence of its utility for audio representation learning. c) Self-Guided Informed Masking: SGIM, proposed in SG-MAE [8], is an object-centric masking strate gy that applies masks to estimated object-centric regions. SGIM assigns an object relev ance score S i to each of the N input patches, where i denotes the patch index, and determines masked and visible patches based on their ranking. A hint ratio is used to randomly replace a subset of masked patches with visible ones to control task dif ficulty , and is gradually annealed tow ard zero through scheduling, as in [7]. T o compute these relev ance scores, SGIM performs a Nor- malized Cut [17]-based partitioning using pairwise similarities between patch features [18]. Specifically , it applies an eigen- value decomposition to the similarity matrix constructed from patch representations, and the resulting partition is used to ev aluate the relev ance score of each patch. Howe ver , this procedure in volv es eigen value decomposition, whose computational complexity generally scales as O ( N 3 ) . This high computational cost poses a challenge to training ef- ficiency in self-supervised pre-training, where a large number of patches and batch-wise processing are required. In our preliminary implementation based on M2D using four A100 GPUs, random masking required approximately 7 minutes per epoch, whereas SGIM incurred a substantially higher cost of approximately 35 minutes, resulting in roughly a fivefold slo wdown. Due to this computational overhead, ap- plying SGIM broadly across masked prediction-based learning framew orks can be impractical. These limitations motiv ate the need for a lightweight al- ternativ e that retains the benefits of informed masking while remaining computationally efficient. I I I . P RO P O S E D M A S K I N G M E T H O D : DW M D WM aims to approximate informed masking tailored to audio spectrograms while maintaining low computational cost. As illustrated by the object-centric masking e xample produced by SGIM in Fig. 1 (Object-centric masking), patches corre- sponding to spectrally sparse regions are often assigned as visible in SGIM. Unlike images, audio spectrograms naturally contain blank regions when certain frequency components are absent. Based on this observation, DWM is moti vated by the idea that the dispersion of a spectrogram patch can serve as a proxy for the amount of information related to audio e vents contained in that patch. As shown in Algorithm 1, D WM operates on an input patch sequence X and first estimates patch-wise importance using mean absolute deviation (MAD) as a dispersion metric. The MAD of a patch x i is defined as MAD ( x i ) = 1 n P j | x i,j − ¯ x i | , where n is the number of elements in the patch and ¯ x i is the patch mean, and ϵ is a small constant to pre vent division by zero. The resulting dispersion values are con verted into sampling probabilities, which are used to sample an initial set of masked patches M 0 in a weighted manner . From M 0 , a subset of patches is randomly selected according to the hint ratio and returned to the visible set. T o preserv e the target mask ratio, the same number of patches is then randomly selected from the visible set and reassigned as masked. As a result, patches with higher dispersion are probabilisti- cally more likely to be masked, while the hint-based exchange process prevents the masking task from becoming o verly diffi- cult. Although D WM does not explicitly model object-centric regions, it assigns lower masking probability to spectrally sparse patches, which partially aligns its masking behavior with the effects observed in SGIM for audio spectrograms, without expensi ve computations. Similar to SGIM, the hint ratio r h is determined by a scheduling strategy . Follo wing SemMAE [7], r h is updated based on training progress as r h = 1 . 0 − epoch total epochs γ , (1) where γ is a scheduling parameter . I V . E X P E R I M E N T S W e in vestigated the effects of different masking strategies on masked prediction-based representation learning for gen- eral audio, focusing on both task-specific performance and generalization across di verse do wnstream tasks. Learned repre- sentations were ev aluated through linear ev aluation and fine- tuning on benchmarks used in M2D [11], and the proposed Algorithm 1 Dispersion-weighted Masking (D WM) Input: Input sequence X , mask ratio r m , hint ratio r h . Output: V isible indices V final , Masked indices M final . 1: # Step 1: Setup parameters 2: L ← T otal number of patches 3: N keep ← ⌊ L · (1 − r m ) ⌋ , N mask ← L − N keep 4: N hint ← ⌊ N mask · r h ⌋ 5: # Step 2: Importance estimation 6: for each patch x i in X do 7: ω i ← MAD ( x i ) { Calculate mean absolute deviation } 8: end for 9: P ( i ) ← ( ω i + ϵ ) / P ( ω j + ϵ ) { Sampling probability } 10: # Step 3: Initial weighted sampling 11: M 0 ← Sample ( { 1 , . . . , L } , N mask , prob = P ) 12: V 0 ← { 1 , . . . , L } \ M 0 13: # Step 4: Hint-based exchang e pr ocess 14: H ← RandomSelect ( M 0 , N hint ) { Select hints } 15: V temp ← V 0 ∪ H 16: C ← RandomSelect ( V temp , N hint ) { Maintain N keep } 17: # Step 5: F inal index sets 18: V final ← V temp \ C 19: M final ← { 1 , . . . , L } \ V final method was further analyzed via ablation studies on hint ratio scheduling. Linear ev aluation focuses on the linear separability of frozen representations across di verse downstream tasks and also serves as an indicator of generalization. Fine-tuning ev al- uates end-to-end performance under task-specific optimization, reflecting practical ef fectiveness on standard benchmarks. Our ablation studies e xamined the sensiti vity of DWM to hint ratio scheduling, providing insight into its design behavior . W e ev aluated three masking strategies, random masking, IBM, and the proposed D WM, by integrating each into masked prediction-based SSL frame works. SGIM was excluded from ev aluation due to its significant computational ov erhead, which renders lar ge-scale pre-training impractical in our e xperimental setup. Experiments were conducted using a series of three SSL framew orks: MSM-MAE [3], which adapts MAE to audio spectrograms; M2D [11], which learns by predicting masked patch representations; and M2D-CLAP [16], which extends M2D by incorporating semantic supervision from audio-text alignment. These frameworks share most of the encoder ar- chitecture and training pipeline, differing primarily in their learning objecti ves, which makes them well suited for a controlled comparison in our experiments. A. Experimental Setup All pre-training settings followed those in the base SSL methods, including a masking ratio of 0.75 for MSM-MAE and 0.7 for M2D and M2D-CLAP . W e pre-trained two models per masking strate gy for MSM-MAE and M2D, while only one model per strate gy was trained for M2D-CLAP due to resource constraints. For DWM, the hint scheduling parameter γ was T ABLE I L I NE A R E V A L UATI O N R E S U L T S ( % ) W I T H 9 5 % C I C OM PA RI N G D I FF E RE N T M A S KI N G S T R A T E G I ES . En v . sound tasks Speech tasks Music tasks Masking strate gy ESC-50 US8K SPCV2 VC1 VF CRM-D GTZAN NSynth Surge A vg. (MSM-MAE variants) Random 89.2 ± 0.4 87.2 ± 0.2 96.1 ± 0.1 73.4 ± 0.4 97.9 ± 0.1 71.3 ± 0.2 80.3 ± 1.0 74.6 ± 0.4 43.2 ± 0.2 79.2 IBM [6] 90.1 ± 0.3 88.2 ± 0.4 95.4 ± 0.1 65.1 ± 0.5 97.7 ± 0.1 70.9 ± 0.6 80.5 ± 2.3 74.2 ± 0.6 42.8 ± 0.1 78.3 vs. random [pp] +0.9 +1.0 -0.7 -8.3 -0.2 -0.4 +0.2 -0.4 -0.4 -0.9 DWM (proposed) 89.9 ± 0.7 87.4 ± 0.1 95.9 ± 0.1 72.5 ± 0.7 97.8 ± 0.1 70.9 ± 0.3 81.8 ± 1.1 75.5 ± 0.1 43.3 ± 0.3 79.5 vs. random [pp] +0.7 +0.2 -0.2 -0.9 -0.1 -0.4 +1.5 +0.9 +0.1 +0.3 (M2D variants) Random 91.3 ± 0.6 87.6 ± 0.2 96.0 ± 0.1 73.4 ± 0.2 98.3 ± 0.0 73.0 ± 0.7 84.1 ± 2.7 75.7 ± 0.1 42.1 ± 0.2 80.2 IBM [6] 92.8 ± 0.3 88.2 ± 0.2 95.8 ± 0.1 69.1 ± 0.3 98.3 ± 0.0 73.2 ± 0.7 85.3 ± 2.0 75.5 ± 0.6 42.5 ± 0.2 80.1 vs. random [pp] +1.5 +0.6 -0.2 -4.3 0.0 +0.2 +1.2 -0.2 +0.4 -0.1 DWM (proposed) 91.9 ± 0.3 87.9 ± 0.2 96.1 ± 0.1 72.8 ± 0.4 98.4 ± 0.0 73.0 ± 0.8 85.1 ± 1.2 75.4 ± 0.5 42.0 ± 0.3 80.3 vs. random [pp] +0.6 +0.3 +0.1 -0.6 +0.1 0.0 +1.0 -0.3 -0.1 +0.1 (M2D-CLAP variants) Random 95.2 ± 0.3 89.5 ± 0.2 95.6 ± 0.1 68.6 ± 0.5 98.1 ± 0.1 73.4 ± 0.7 86.6 ± 1.6 76.5 ± 0.4 42.2 ± 0.3 80.6 IBM [6] 95.8 ± 0.2 89.5 ± 0.2 95.2 ± 0.1 65.7 ± 0.2 97.9 ± 0.1 73.6 ± 0.6 86.4 ± 0.9 76.5 ± 0.6 42.5 ± 0.2 80.3 vs. random [pp] +0.6 0.0 -0.4 -2.9 -0.2 +0.2 -0.2 0.0 +0.3 -0.3 DWM (proposed) 95.6 ± 1.0 90.2 ± 0.4 95.5 ± 0.1 67.9 ± 0.5 98.0 ± 0.0 73.2 ± 1.8 87.7 ± 4.3 75.2 ± 0.4 43.0 ± 0.2 80.7 vs. random [pp] +0.4 +0.7 -0.1 -0.7 -0.1 -0.2 +1.1 -1.3 +0.8 +0.1 (Refer ence: Results of prior methods evaluated in this work) BEA Ts [15] (Random) 86.9 ± 1.4 84.8 ± 0.1 89.4 ± 0.1 41.4 ± 0.7 94.1 ± 0.3 64.7 ± 0.8 72.6 ± 4.3 75.9 ± 0.2 39.3 ± 0.4 72.1 EA T [9] (IBM) 85.6 ± 0.4 81.7 ± 0.3 81.5 ± 0.4 39.6 ± 0.5 92.6 ± 0.0 64.9 ± 2.8 73.7 ± 0.5 71.9 ± 0.1 39.0 ± 0.3 70.0 set to 2, coinciding with the v alue reported in SemMAE [7]. The hint ratio was initialized at 1.0 and gradually annealed to zero, as illustrated in Fig. 2. For IBM, we followed EA T [9] for the block size (i.e., 5 × 5 ). All ev aluation settings followed those in [3], [11], [16], [19], including the e valuation platform (EV AR 2 ) and the do wn- stream classification tasks spanning en vironmental sound, speech, and music. For each model and downstream task, we conducted three runs and report the resulting statistics as the final results. When two models are pre-trained, this results in six ev aluations per pre-training setting. En vironmental sound tasks include AudioSet [20], ESC- 50 [21], UrbanSound8K [22] (US8K); speech tasks include Speech Commands V2 [23] (SPCV2), V oxCeleb1 [24] (VC1), V oxForge [25] (VF), and CREMA-D [26] (CRM-D); and music tasks include GTZAN [27], NSynth [28], and the Pitch Audio Dataset [29] (Surge). AudioSet is ev aluated under two settings: AS2M using the full 2M training samples and AS20K using 21K samples from the balanced training set. Surge is a pitch classification task ov er 88 MIDI notes. All tasks are formulated as classification problems, and performance is reported in terms of accuracy or mean a verage precision (mAP). B. Comparison of Masking Strate gies in Linear Evaluation W ith the linear ev aluation setup, we compared masking strategies by assessing the linear separability of frozen rep- resentations across downstream tasks, which also serv es as an indicator of representation generalization. 2 https://github .com/nttcslab/ev al- audio- repr T ABLE II F I NE - T U NI N G R E S ULT S W I T H 9 5% C I C O MPA R I NG M AS K I N G S T RATE G I E S . Masking AS2M AS20K ESC-50 SPCV2 VC1 strategy mAP mAP acc(%) acc(%) acc(%) (MAE variants) Random 47.4 ± 0.1 37.9 ± 0.0 95.4 ± 0.1 98.4 ± 0.0 96.6 ± 0.2 IBM [6] 47.4 ± 0.1 38.6 ± 0.1 95.4 ± 0.3 98.3 ± 0.0 95.4 ± 0.5 diff.[pp] 0.0 +0.7 0.0 -0.1 -1.2 DWM 47.5 ± 0.1 38.3 ± 0.1 95.5 ± 0.2 98.4 ± 0.1 96.4 ± 0.2 diff.[pp] +0.1 +0.4 +0.1 0.0 -0.2 (M2D variants) Random 47.8 ± 0.1 38.6 ± 0.1 96.0 ± 0.2 98.4 ± 0.1 96.3 ± 0.2 IBM [6] 47.9 ± 0.2 39.3 ± 0.1 96.2 ± 0.2 98.4 ± 0.0 95.4 ± 0.7 diff.[pp] +0.1 +0.7 +0.2 0.0 -0.9 DWM 47.8 ± 0.0 38.9 ± 0.0 96.0 ± 0.2 98.4 ± 0.1 96.1 ± 0.2 diff.[pp] 0.0 +0.3 0.0 0.0 -0.2 (M2D-CLAP variants) Random 49.0 ± 0.1 42.1 ± 0.0 97.8 ± 0.1 98.4 ± 0.0 95.5 ± 0.3 IBM [6] 49.1 ± 0.1 42.3 ± 0.1 98.0 ± 0.1 98.4 ± 0.1 94.2 ± 0.1 diff.[pp] +0.1 +0.2 +0.2 0.0 -1.3 DWM 49.0 ± 0.2 41.8 ± 0.1 97.8 ± 0.1 98.5 ± 0.0 95.1 ± 0.4 diff.[pp] 0.0 -0.3 0.0 +0.1 -0.4 (Refer ence: P erformance reported in prior studies) BEA Ts [15] 48.0 38.3 95.6 98.3 - EA T [9] 48.6 40.2 95.9 98.3 - The results in T able I indicate that both IBM and D WM influence downstream task performance consistently across different base SSL frameworks. Their effects vary by task domain: performance improvements are generally observed for environmental sound tasks, whereas degradations tend to appear in speech tasks. For music tasks, the effect differs across tasks: performance improv es for genre classification (GTZAN) but degrades for instrument classification (NSynth). T ABLE III A B LAT IO N O F H IN T R ATI O S C H E DU L I N G I N DW M : L I N EA R E V A L UAT IO N R E S U L T S ( % ) W I T H 9 5 % C I . En v . sound tasks Speech tasks Music tasks Scheduling ESC-50 US8K SPCV2 VC1 VF CRM-D GTZAN NSynth Sur ge A vg. Baseline MSM-MAE 89.2 ± 0.4 87.2 ± 0.2 96.1 ± 0.1 73.4 ± 0.4 97.9 ± 0.1 71.3 ± 0.2 80.3 ± 1.0 74.6 ± 0.4 43.2 ± 0.2 79.2 Hint ratio r h =0.0 89.9 ± 1.1 87.3 ± 0.7 95.5 ± 0.1 69.1 ± 0.1 97.7 ± 0.0 70.4 ± 0.6 83.4 ± 4.5 75.4 ± 0.2 42.5 ± 0.9 79.0 Hint ratio r h =0.5 87.4 ± 0.9 86.3 ± 0.6 96.0 ± 0.2 71.8 ± 0.5 97.6 ± 0.3 70.5 ± 0.9 82.1 ± 3.1 74.3 ± 0.4 42.5 ± 0.5 78.7 Schedule γ =1 88.1 ± 0.2 87.3 ± 0.3 96.2 ± 0.2 70.0 ± 0.5 97.7 ± 0.2 71.4 ± 0.9 82.0 ± 6.4 76.0 ± 0.2 42.6 ± 0.7 79.0 Schedule γ =2 89.9 ± 0.7 87.4 ± 0.1 95.9 ± 0.1 72.5 ± 0.7 97.8 ± 0.1 70.9 ± 0.3 81.8 ± 1.1 75.5 ± 0.1 43.3 ± 0.3 79.5 Schedule γ =4 89.5 ± 0.8 87.3 ± 0.3 95.9 ± 0.2 73.4 ± 0.5 98.0 ± 0.1 69.9 ± 1.9 81.7 ± 4.5 74.7 ± 0.2 43.1 ± 0.4 79.3 Schedule γ =8 89.5 ± 2.7 87.2 ± 0.6 96.2 ± 0.0 73.4 ± 0.3 98.0 ± 0.2 70.1 ± 2.0 82.5 ± 5.8 72.5 ± 0.7 42.9 ± 0.4 79.2 Although the variance across tasks is non-negligible, IBM ov erall exhibits a larger impact on performance compared to D WM. IBM particularly improves performance on en vironmental sound tasks (ESC-50 and US8K) by approximately 1 pp, which is consistent with the performance gains on AudioSet reported in EA T that employs IBM. In contrast, notable performance degradation is observed for speak er identification on VC1, with drops of up to 8.3 pp in MSM-MAE and 4.3 pp in M2D. This suggests that IBM may suppress fine-grained speaker -specific cues that are crucial for tasks requiring sen- sitivity to subtle vocal differences. D WM exhibits relatively smaller gains on ESC-50 and US8K compared to IBM, while being characterized by notably smaller performance degradation on speech tasks. Although the improv ements are modest, D WM consistently improv es performance on ESC-50, US8K, and GTZAN, contributing to ov erall performance gains across tasks. Performance degra- dation on speech tasks is largely mitigated, with the drop on VC1 remaining within 1 pp, which is substantially smaller than that observed with IBM. Overall, these results suggest that D WM modestly improves performance while better preserving generalization across diverse downstream tasks. C. Comparison of Masking Strate gies in F ine-tuning In fine-tuning comparisons shown in T able II, the impact of dif ferent masking strategies is generally smaller than that observed in linear ev aluation; nevertheless, similar trends can still be identified, with performance improvements on en vironmental sound tasks and notable degradation on VC1. IBM yields little gain on AS2M but more consistent gains on AS20K, where training data are limited, suggesting that it facilitates learning representations that generalize across div erse patterns of audio events. Conv ersely , the substantial performance drop on speaker identification (VC1) indicates a trade-off with the fine-grained discriminativ e information required for this task. Compared to IBM, DWM exhibits more moderate per- formance changes across tasks, while consistently reducing degradation on VC1, in line with the observations from linear ev aluation. On AS20K, DWM generally maintains fa- vorable performance for MSM-MAE and M2D, indicating improv ed performance with preserved generalization. In con- trast, AS20K performance decreases for M2D-CLAP; although Fig. 2. Hint ratio r h schedules over training epochs for ablation settings. based on limited runs due to computational constraints, it suggests that the effecti veness of DWM may be sensitiv e to training conditions when competing for high-end performance. D. Ablations on D WM Hint Ratio Scheduling W e conducted an ablation study on the scheduling of the hint ratio r h in DWM, which is designed to prev ent the prediction task from becoming ov erly difficult in the early stages of training. As illustrated in Fig. 2, we either fixed r h to a constant value or v aried the scheduling parameter γ , and e valuated the resulting MSM-MAE models using linear ev aluation. The results are summarized in T able III. When r h was fixed to 0.0, some tasks showed improv ed performance; howe ver , a substantial degradation was observ ed on VC1. When r h was fix ed to 0.5, performance impro vements were observ ed only on GTZAN, while de gradation was e vident on the remaining tasks. In contrast, scheduling r h using different v alues of γ re- sulted in more stable behavior . From γ = 2 , degradation on VC1 was effecti vely mitigated, and with γ = 8 , performance became nearly comparable to that of random masking. Con- sistent with observations from SG-MAE [8] and SemMAE [7], these results highlight the importance of appropriately scheduling task difficulty during training, which also holds for DWM. E. Summary of Experiments The experiments reveal several insights into the role of masking strategies. First, masking strategies substantially in- fluence downstream performance across tasks, e ven when the underlying SSL framework is fixed, indicating that masking design is a non-tri vial factor in masked prediction-based representation learning. Second, while IBM can yield notable gains on en vironmental sound tasks, it tends to incur a trade- off with fine-grained discriminativ e tasks such as speaker identification, leading to degraded generalization. In contrast, D WM achiev es more moderate yet consistent improv ements across tasks, while sho wing less performance degradation on speech-related tasks. Finally , the ablation results highlight the importance of appropriately scheduling task difficulty via the hint ratio, demonstrating that controlled masking progression is critical for stable and generalizable representation learning. V . C O N C L U S I O N In this work, we in vestigated the role of masking strate- gies in masked prediction-based self-supervised learning for general audio using spectrogram inputs. Through comparisons across multiple SSL framew orks and downstream tasks, we showed that masking strategies substantially influence both task performance and generalization. Our analysis re vealed that IBM substantially improves en vi- ronmental sound performance under a limited-data condition, while reducing generalization, particularly to speech tasks. In our e xperiments, informed masking approaches such as SGIM exhibited non-negligible computational ov erhead. Motiv ated by these observations, we proposed DWM, a lightweight alternativ e that exploits dispersion characteristics inherent to audio spectrograms. Experimental results demonstrated that DWM yields modest but consistent performance improv ements while largely main- taining performance on speech tasks, thereby better preserving generalization across div erse downstream tasks. In addition, ablation studies highlighted the importance of scheduling the hint ratio to control task difficulty during training for stable and effecti ve representation learning. Overall, our findings provide empirical insights into mask- ing strategy design and suggest that lightweight, spectrogram- aware masking can serv e as a practical alternati ve to computa- tionally intensiv e informed masking approaches. Future work includes exploring dispersion metrics beyond MAD that better capture semantically relevant content, ev aluating DWM with varying patch sizes and larger -scale datasets, and extending comparisons to computationally intensive methods such as SGIM under matched budgets. R E F E R E N C E S [1] K. He, X. Chen, S. Xie, Y . Li, P . Doll ´ ar , and R. Girshick, “Masked Autoencoders Are Scalable V ision Learners, ” in Proc. CVPR , Jun. 2022, pp. 16 000–16 009. [2] A. Baade, P . Peng, and D. Harwath, “MAE-AST : Masked Autoencoding Audio Spectrogram Transformer, ” in Proc. Interspeech , 2022, pp. 2438– 2442. [3] D. Niizumi, D. T akeuchi, Y . Ohishi, N. Harada, and K. Kashino, “Masked Spectrogram Modeling using Masked Autoencoders for Learn- ing General-purpose Audio Representation, ” in Proc. HEAR: Holistic Evaluation of A udio Repr esentations (NeurIPS 2021 Competition) , vol. 166, 2022, pp. 1–24. [4] P .-Y . Huang, H. Xu, J. Li, A. Bae vski, M. Auli, W . Galuba, F . Metze, and C. Feichtenhofer, “Masked autoencoders that listen, ” in Proc. NeurIPS , vol. 35, 2022, pp. 28 708–28 720. [5] H. Bao, L. Dong, S. Piao, and F . W ei, “BEiT: BER T pre-training of image transformers, ” in Pr oc. ICLR , 2022. [6] A. Baevski, A. Babu, W .-N. Hsu, and M. Auli, “Efficient self-supervised learning with contextualized tar get representations for vision, speech and language, ” in Pr oc. ICML , 23–29 Jul 2023, pp. 1416–1429. [7] G. Li, H. Zheng, D. Liu, C. W ang, B. Su, and C. Zheng, “SemMAE: Semantic-guided masking for learning masked autoencoders, ” in Proc. NeurIPS , S. K oyejo, S. Mohamed, A. Agarwal, D. Belgrave, K. Cho, and A. Oh, Eds., vol. 35. Curran Associates, Inc., 2022, pp. 14 290–14 302. [8] J. Shin, I. Lee, J. Lee, and J. Lee, “Self-guided masked autoencoder, ” in Pr oc. NeurIPS , A. Globerson, L. Mackey , D. Belgrav e, A. Fan, U. Paquet, J. T omczak, and C. Zhang, Eds., vol. 37. Curran Associates, Inc., 2024, pp. 58 929–58 954. [9] W . Chen, Y . Liang, Z. Ma, Z. Zheng, and X. Chen, “EA T: Self- supervised pre-training with efficient audio transformer , ” in Proc. IJCAI , 8 2024, pp. 3807–3815. [10] K. Y amamoto and K. Okusa, “AaPE: Aliasing-aware patch embed- ding for self-supervised audio representation learning, ” arXiv pr eprint arXiv:2512.03637 , 2025. [11] D. Niizumi, D. T akeuchi, Y . Ohishi, N. Harada, and K. Kashino, “Masked Modeling Duo: T owards a Univ ersal Audio Pre-Training Framew ork, ” IEEE/ACM Tr ans. Audio, Speech, Language Process. , vol. 32, pp. 2391–2406, 2024. [12] H. Dinkel, Y . W ang, Z. Y an, J. Zhang, and Y . W ang, “CED: Consistent ensemble distillation for audio tagging, ” in Pr oc. ICASSP , 2024. [13] Y . Gong, C.-I. Lai, Y .-A. Chung, and J. Glass, “SSAST : Self-Supervised Audio Spectrogram Transformer, ” in Proc. AAAI , vol. 36, No. 10, 2022, pp. 10 699–10 709. [14] X. Li, N. Shao, and X. Li, “Self-Supervised Audio T eacher-Student T ransformer for Both Clip-Lev el and Frame-Level T asks, ” IEEE/ACM T rans. Audio, Speech, Language Pr ocess. , vol. 32, pp. 1336–1351, 2024. [15] S. Chen, Y . W u, C. W ang, S. Liu, D. T ompkins, Z. Chen, and F . W ei, “BEA Ts: Audio Pre-Training with Acoustic T okenizers, ” in Pr oc. ICML , 2023, pp. 5178–5193. [16] D. Niizumi, D. T akeuchi, M. Y asuda, B. Thien Nguyen, Y . Ohishi, and N. Harada, “M2D-CLAP: Exploring general-purpose audio-language representations beyond clap, ” IEEE Access , vol. 13, pp. 163 313– 163 330, 2025. [17] J. Shi and J. Malik, “Normalized cuts and image segmentation, ” IEEE T rans. P attern Anal. Mach. Intell. , vol. 22, no. 8, pp. 888–905, 2000. [18] Y . W ang, X. Shen, S. X. Hu, Y . Y uan, J. L. Crowley , and D. V aufreydaz, “Self-supervised transformers for unsupervised object discovery using normalized cut, ” in Pr oc. CVPR , Jun. 2022, pp. 14 523–14 533. [19] D. Niizumi, D. T akeuchi, Y . Ohishi, N. Harada, and K. Kashino, “BYOL for Audio: Exploring Pre-trained General-purpose Audio Representa- tions, ” IEEE/A CM T rans. Audio, Speech, Language Process. , vol. 31, p. 137–151, 2023. [20] J. F . Gemmeke, D. P . W . Ellis, D. Freedman, A. Jansen, W . Lawrence, R. C. Moore, M. Plakal, and M. Ritter , “ Audio set: An ontology and human-labeled dataset for audio events, ” in Pr oc. ICASSP , 2017, pp. 776–780. [21] K. J. Piczak, “ESC: Dataset for Environmental Sound Classification, ” in Pr oc. ACM-MM , Oct. 2015, pp. 1015–1018. [22] J. Salamon, C. Jacoby , and J. P . Bello, “ A dataset and taxonomy for urban sound research, ” in Pr oc. A CM-MM , Nov . 2014, pp. 1041–1044. [23] P . W arden, “Speech Commands: A Dataset for Limited-V ocabulary Speech Recognition, ” arXiv pr eprint arXiv::1804.03209 , Apr . 2018. [24] A. Nagrani, J. S. Chung, and A. Zisserman, “V oxceleb: A large-scale speaker identification dataset, ” in Pr oc. Interspeech , 2017, pp. 2616– 2620. [25] K. MacLean, “V oxforge, ” 2018, available: http://www .voxforge.org/ home. [26] H. Cao, D. G. Cooper , M. K. Keutmann, R. C. Gur, A. Nenkova, and R. V erma, “Crema-d: Cro wd-sourced emotional multimodal actors dataset, ” IEEE T rans. Affective Comput. , vol. 5, no. 4, 2014. [27] G. Tzanetakis and P . Cook, “Musical genre classification of audio signals, ” IEEE Speech Audio Pr ocess. , vol. 10, no. 5, 2002. [28] J. Engel, C. Resnick, A. Roberts, S. Dieleman, M. Norouzi, D. Eck, and K. Simon yan, “Neural audio synthesis of musical notes with Wa veNet autoencoders, ” in Pr oc. ICML , 2017, pp. 1068–1077. [29] J. T urian, J. Shier , G. Tzanetakis, K. McNally , and M. Henry , “One billion audio sounds from GPU-enabled modular synthesis, ” in Proc. D AFx2020 , Sep. 2021, pp. 222–229. A P P E N D I X A N OT E S O N T H E D E S I G N O F D I S P E R S I O N - W E I G H T E D M A S K I N G Audio e vents typically excite specific frequency bands, pro- ducing patches with high spectral variability in active regions and low variability in silent or tonal regions. MAD directly captures this variability: a high MAD value indicates that the patch contains a mixture of active and inactiv e frequency components, which correlates with the presence of informative spectral content. This property makes MAD a computationally efficient proxy for patch-level information density without re- quiring explicit object detection or eigenv alue decomposition. W e attribute the balanced cross-task behavior of DWM to its probabilistic nature: unlike IBM, which deterministically unmasks contiguous blocks, DWM distributes masking proba- bility across all patches proportionally to their dispersion. This preserves a degree of randomness that maintains sensitivity to fine-grained features (e.g., speaker characteristics in VC1), while still biasing the masking tow ard spectrally informativ e regions that benefit event-related tasks. A P P E N D I X B L I M I TA T I O N S O F M A D A S A D I S P E R S I O N M E T R I C One limitation of using MAD as a dispersion metric is that it measures within-patch v ariability rather than absolute ener gy or semantic relev ance. Consequently , a patch containing a strong but spectrally uniform signal (e.g., a sustained pure tone) may recei ve a low dispersion score despite carrying meaningful content. Con versely , patches with high v ariability due to noise may be overweighted. Inv estigating alternativ e or complementary importance metrics remains an avenue for future work.

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment