DAK-UCB: Diversity-Aware Prompt Routing for LLMs and Generative Models

The expansion of generative AI and LLM services underscores the growing need for adaptive mechanisms to select an appropriate available model to respond to a user's prompts. Recent works have proposed offline and online learning formulations to ident…

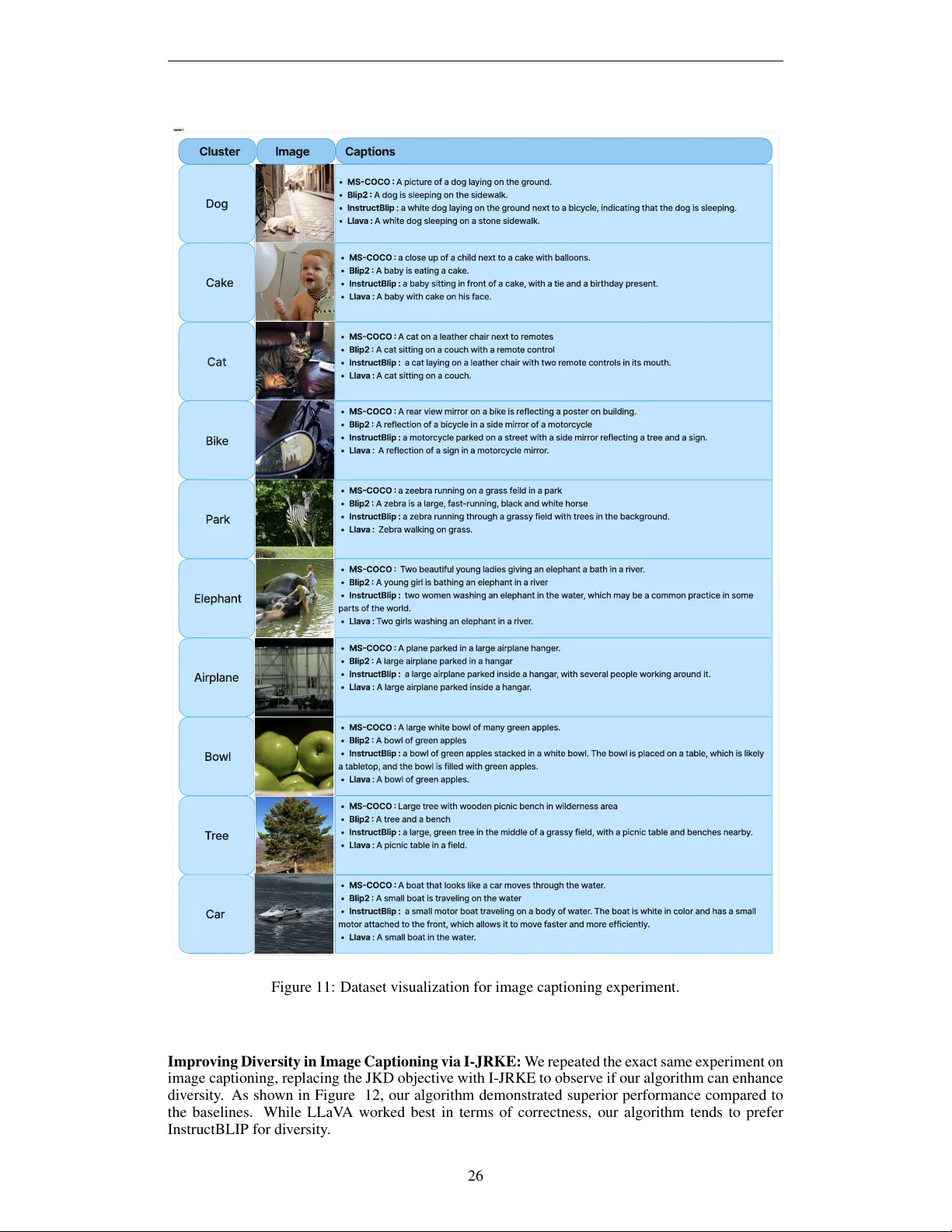

Authors: Donya Jafari, Farzan Farnia