Morph: A Motion-free Physics Optimization Framework for Human Motion Generation

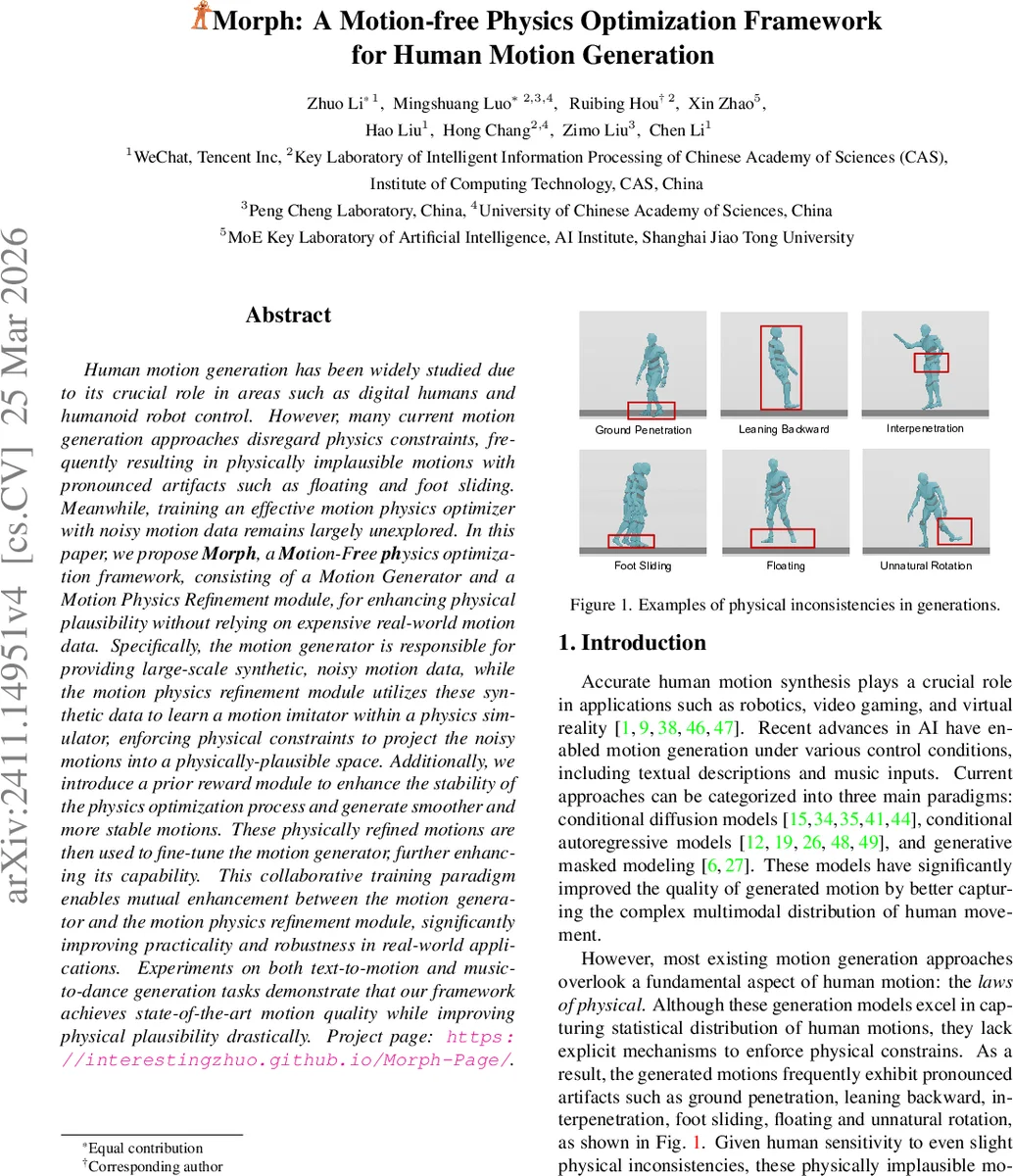

Human motion generation has been widely studied due to its crucial role in areas such as digital humans and humanoid robot control. However, many current motion generation approaches disregard physics constraints, frequently resulting in physically implausible motions with pronounced artifacts such as floating and foot sliding. Meanwhile, training an effective motion physics optimizer with noisy motion data remains largely unexplored. In this paper, we propose **Morph**, a **Mo**tion-F**r**ee **ph**ysics optimization framework, consisting of a Motion Generator and a Motion Physics Refinement module, for enhancing physical plausibility without relying on expensive real-world motion data. Specifically, the motion generator is responsible for providing large-scale synthetic, noisy motion data, while the motion physics refinement module utilizes these synthetic data to learn a motion imitator within a physics simulator, enforcing physical constraints to project the noisy motions into a physically-plausible space. Additionally, we introduce a prior reward module to enhance the stability of the physics optimization process and generate smoother and more stable motions. These physically refined motions are then used to fine-tune the motion generator, further enhancing its capability. This collaborative training paradigm enables mutual enhancement between the motion generator and the motion physics refinement module, significantly improving practicality and robustness in real-world applications. Experiments on both text-to-motion and music-to-dance generation tasks demonstrate that our framework achieves state-of-the-art motion quality while improving physical plausibility drastically. Project page: https://interestingzhuo.github.io/Morph-Page/.

💡 Research Summary

Morph introduces a novel, data‑efficient framework for improving the physical plausibility of human motion generation without relying on real motion‑capture datasets. The system consists of two loosely coupled components: a Motion Generator (MG) and a Motion Physics Refinement (MPR) module. MG can be any existing conditional motion synthesis model—diffusion‑based (e.g., MDM), autoregressive (e.g., T2M‑GPT), or masked‑model (e.g., MoMask). It is used to produce a large corpus of synthetic, noisy motions conditioned on text or music. These motions typically violate basic physical laws, exhibiting artifacts such as foot‑sliding, floating, ground penetration, and unnatural joint rotations.

MPR addresses these violations by learning a motion imitator that operates inside a physics simulator (e.g., MuJoCo or PhysX). The imitator is trained with Proximal Policy Optimization (PPO) to generate control actions (PD target joint angles) that drive the simulated character toward the noisy reference motion. The state fed to the policy includes the next reference pose and the residuals between reference and simulated poses for rotation, position, velocity, and angular velocity, enabling the policy to focus on correcting specific discrepancies.
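To make the policy input and the control signal more concrete, here is a minimal sketch under stated assumptions. The `Pose` container, the function names, and the gains `kp`/`kd` are hypothetical and not taken from the paper; rotation residuals are computed by plain subtraction, which only makes sense for a simplified angle representation. The PD law shown is the standard proportional-derivative form, not necessarily the exact controller the authors use.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Pose:
    """Hypothetical flattened pose features for one frame."""
    rot: List[float]      # joint rotations (simplified angle representation)
    pos: List[float]      # joint positions
    vel: List[float]      # joint linear velocities
    ang_vel: List[float]  # joint angular velocities


def _residual(ref: List[float], sim: List[float]) -> List[float]:
    # Element-wise difference between reference and simulated features.
    return [r - s for r, s in zip(ref, sim)]


def build_observation(ref_next: Pose, sim: Pose) -> List[float]:
    """Concatenate the next reference pose with the four residual groups."""
    return (
        ref_next.rot + ref_next.pos
        + _residual(ref_next.rot, sim.rot)
        + _residual(ref_next.pos, sim.pos)
        + _residual(ref_next.vel, sim.vel)
        + _residual(ref_next.ang_vel, sim.ang_vel)
    )


def pd_torque(target: List[float], q: List[float], qd: List[float],
              kp: float = 300.0, kd: float = 30.0) -> List[float]:
    """Standard PD law: the policy outputs `target`, the controller the torque."""
    return [kp * (t - a) - kd * v for t, a, v in zip(target, q, qd)]
```

In this sketch the policy would map `build_observation(...)` to PD target angles, and `pd_torque` would convert those targets into joint torques applied in the simulator at each control step.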

The reward function combines three terms: (1) a Mimic Reward that exponentially penalizes differences in joint rotation, position, velocity, and angular velocity; (2) an Energy Penalty that discourages excessive torques and angular velocities; and (3) a Prior Reward derived from a lightweight Motion VAE trained on the same synthetic data. The VAE learns the distribution of the generated motions and provides a smooth, differentiable estimate of how “human‑like” a simulated pose is, thereby mitigating the mechanical feel that pure physics‑based imitation can produce.
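The three reward terms can be sketched as follows. This is an illustrative reading of the description above, not the authors' implementation: every weight and sharpness constant is a hypothetical placeholder, and the prior reward is modelled here as an exponential of a VAE reconstruction error, which is an assumption about how the "human-likeness" estimate could be turned into a scalar.

```python
import math
from typing import Dict, Sequence, Tuple

# Hypothetical (weight, sharpness) pairs per feature group; illustration only.
MIMIC_TERMS: Dict[str, Tuple[float, float]] = {
    "rot": (0.5, 2.0),
    "pos": (0.3, 10.0),
    "vel": (0.1, 0.1),
    "ang_vel": (0.1, 0.1),
}


def squared_error(a: Sequence[float], b: Sequence[float]) -> float:
    return sum((x - y) ** 2 for x, y in zip(a, b))


def mimic_reward(ref: Dict[str, Sequence[float]],
                 sim: Dict[str, Sequence[float]]) -> float:
    """Weighted sum of exp(-k * error) terms; equals 1.0 for a perfect match."""
    return sum(
        w * math.exp(-k * squared_error(ref[name], sim[name]))
        for name, (w, k) in MIMIC_TERMS.items()
    )


def energy_penalty(torques: Sequence[float], ang_vels: Sequence[float],
                   scale: float = 1e-4) -> float:
    """Non-positive term that grows in magnitude with |torque * angular velocity|."""
    return -scale * sum(abs(t * w) for t, w in zip(torques, ang_vels))


def prior_reward(vae_recon_error: float, k: float = 1.0) -> float:
    """Assumed form: poses the VAE reconstructs well score close to 1."""
    return math.exp(-k * vae_recon_error)


def total_reward(ref, sim, torques, ang_vels, vae_recon_error,
                 w_mimic: float = 0.6, w_prior: float = 0.4) -> float:
    return (w_mimic * mimic_reward(ref, sim)
            + w_prior * prior_reward(vae_recon_error)
            + energy_penalty(torques, ang_vels))
```

The exponential shape keeps each tracking term bounded in (0, 1], so a large error in one feature group cannot dominate the total reward.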

After the MPR module has been trained, it generates physically refined motion sequences. These refined motions are then used to fine‑tune the original MG. This second stage aligns the generator’s parameters with a data distribution that already respects physical constraints, allowing the MG to output plausible motions directly at inference time, without invoking the physics simulator again.
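The overall flow of the two stages can be sketched as a small driver function. All names here (`collaborative_training`, `train_imitator`, `finetune`, `rounds`) are hypothetical, and the toy stand-ins at the bottom only mimic the data flow: the "imitator" simply clamps negative heights to the ground, which is a placeholder for the simulator-based refinement, not a model of it.

```python
from typing import Callable, Dict, List, Sequence

Motion = List[float]                      # toy motion: one height value per frame
Generator = Callable[[str], Motion]       # condition (e.g. text prompt) -> motion
Refiner = Callable[[Motion], Motion]      # noisy motion -> refined motion


def collaborative_training(
    generate: Generator,
    train_imitator: Callable[[Sequence[Motion]], Refiner],
    finetune: Callable[[Generator, Sequence[str], Sequence[Motion]], Generator],
    conditions: Sequence[str],
    rounds: int = 1,                      # hypothetical knob, not from the paper
) -> Generator:
    for _ in range(rounds):
        # Stage 1: sample noisy synthetic motions and train the imitator on them.
        noisy = [generate(c) for c in conditions]
        refine = train_imitator(noisy)
        # Stage 2: refine the motions, then fine-tune the generator on the result.
        refined = [refine(m) for m in noisy]
        generate = finetune(generate, conditions, refined)
    return generate


# Toy stand-ins that only illustrate the data flow.
def toy_generate(condition: str) -> Motion:
    return [-0.1, 0.2, 0.3]               # first frame penetrates the ground


def toy_train_imitator(noisy: Sequence[Motion]) -> Refiner:
    return lambda motion: [max(h, 0.0) for h in motion]


def toy_finetune(gen: Generator, conds: Sequence[str],
                 refined: Sequence[Motion]) -> Generator:
    table: Dict[str, Motion] = dict(zip(conds, refined))
    return lambda condition: table[condition]
```

Calling `collaborative_training(toy_generate, toy_train_imitator, toy_finetune, ["walk"])` returns a generator whose outputs no longer need the refinement step, mirroring the claim that the simulator is not invoked at inference time.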

The authors evaluate Morph on two tasks, text‑to‑motion and music‑to‑dance, using three representative generators. Quantitative metrics (FID, diversity, and physical error rates such as foot‑sliding, floating, and inter‑penetration) show a consistent reduction of physical errors by more than 70% across all settings, while maintaining or slightly improving visual quality. Compared with prior methods that embed physics into diffusion steps (PhysDiff, ReinDiffuse), Morph achieves comparable physical realism with far lower inference cost because the physics refinement is performed offline during training.
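For intuition about the physical error metrics, the following is a simplified sketch of how penetration, floating, and foot sliding could be measured. These definitions are assumptions made for illustration (z-up coordinates, a flat ground plane, a hypothetical `contact_height` threshold) and may differ from the exact formulas used in the paper's evaluation.

```python
import math
from typing import Sequence, Tuple


def penetration(lowest_heights: Sequence[float], ground: float = 0.0) -> float:
    """Mean depth of the body's lowest point below the ground plane, per frame."""
    return sum(max(ground - h, 0.0) for h in lowest_heights) / len(lowest_heights)


def floating(lowest_heights: Sequence[float], ground: float = 0.0) -> float:
    """Mean height of the body's lowest point above the ground plane, per frame."""
    return sum(max(h - ground, 0.0) for h in lowest_heights) / len(lowest_heights)


def foot_sliding(foot_xyz: Sequence[Tuple[float, float, float]],
                 contact_height: float = 0.05) -> float:
    """Mean horizontal foot displacement between consecutive in-contact frames."""
    total, count = 0.0, 0
    for (x0, y0, z0), (x1, y1, z1) in zip(foot_xyz, foot_xyz[1:]):
        if z0 <= contact_height and z1 <= contact_height:
            total += math.hypot(x1 - x0, y1 - y0)
            count += 1
    return total / count if count else 0.0
```

All three values are zero for a motion whose lowest point rests exactly on the ground and whose feet stay fixed while in contact, so lower is better.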

Key contributions include: (1) a motion‑free approach that eliminates the need for large real‑world motion datasets; (2) a reinforcement‑learning based imitator augmented by a VAE prior, which jointly enforces physical constraints and human‑like dynamics; (3) a model‑agnostic, two‑stage collaborative training pipeline that can be attached to any conditional motion generator; and (4) extensive experiments demonstrating state‑of‑the‑art physical plausibility and generation quality.

Limitations are acknowledged: the initial training of the PPO policy inside the physics simulator is computationally intensive, and the quality of the synthetic noisy data influences the efficiency of MPR learning. The current implementation focuses on single‑character motions; extending to multi‑character interaction or object manipulation will require additional research. Future work may explore lighter simulators, model‑free RL techniques, or automated noise‑filtering mechanisms to further reduce training cost and broaden applicability.
