The Order Is The Message

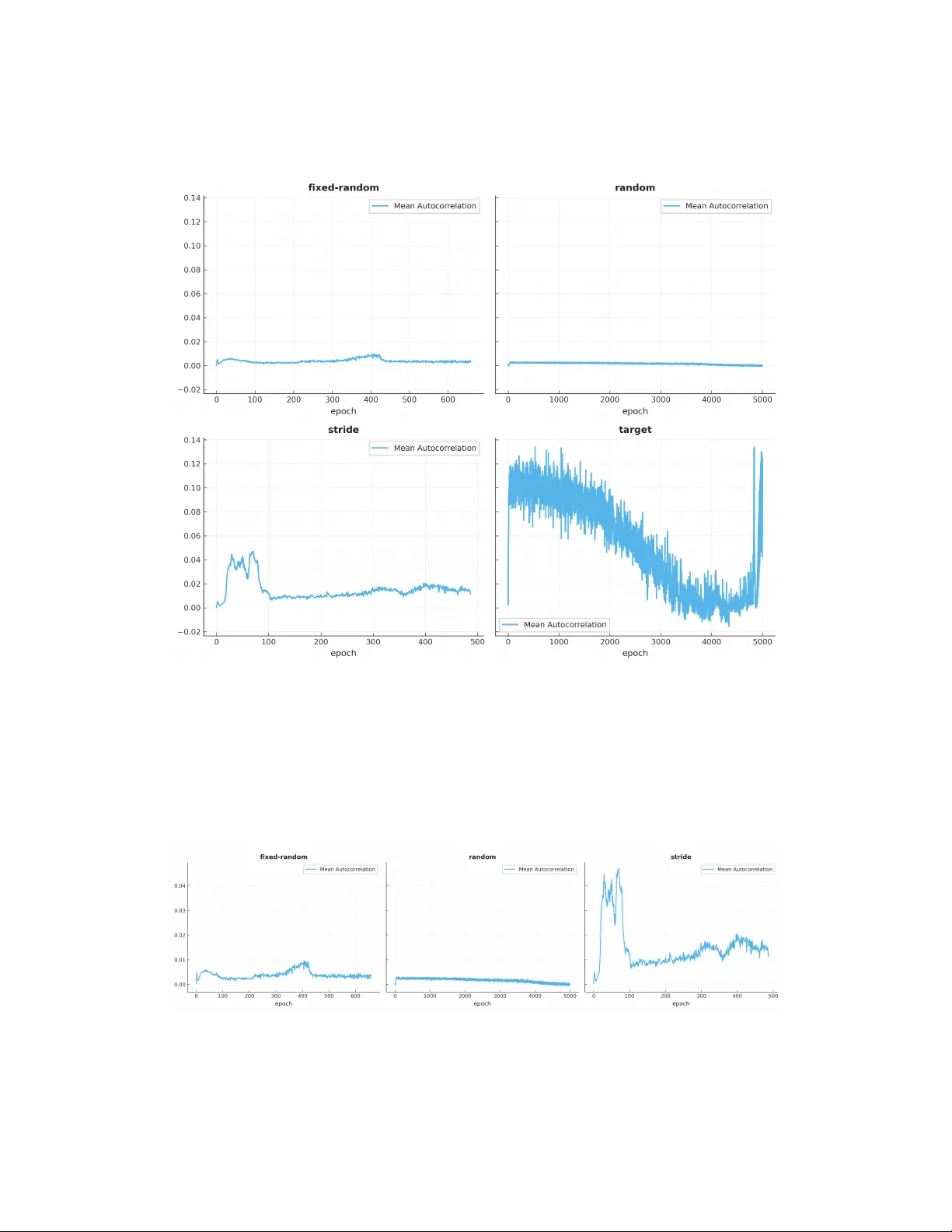

In a controlled experiment on modular arithmetic ($p = 9973$), varying only example ordering while holding all else constant, two fixed-ordering strategies achieve 99.5\% test accuracy by epochs 487 and 659 respectively from a training set comprising…

Authors: Jordan LeDoux