Data-driven online control for real-time optimal economic dispatch and temperature regulation in district heating systems

District heating systems (DHSs) require coordinated economic dispatch and temperature regulation under uncertain operating conditions. Existing DHS operation strategies often rely on disturbance forecasts and nominal models, so their economic and the…

Authors: Xinyi Yi, Ioannis Lestas

Highlights

• Optimality conditions are embedded into augmented DHS dynamics.
• A data-driven controller is developed for real-time DHS operation.
• Adaptive online updates improve learning and closed-loop performance.
• The framework guarantees controller optimality and closed-loop convergence.
• Validation on an industrial-park DHS shows stable near-optimal operation.

Xinyi Yi^a, Ioannis Lestas^{a,∗}

^a Department of Engineering, University of Cambridge, Trumpington Street, Cambridge, CB2 1PZ, United Kingdom

ARTICLE INFO

Keywords: District heating systems; Economic dispatch; Temperature regulation; Online data-driven control; Performance guarantees

ABSTRACT

District heating systems (DHSs) require coordinated economic dispatch and temperature regulation under uncertain operating conditions. Existing DHS operation strategies often rely on disturbance forecasts and nominal models, so their economic and thermal performance may degrade when predictive information or model knowledge is inaccurate. This paper develops a data-driven online control framework for DHS operation by embedding steady-state economic optimality conditions into the temperature dynamics, so that the closed-loop system converges to the economically optimal operating point without relying on disturbance forecasts. Based on this formulation, we develop a Data-Enabled Policy Optimization (DeePO)-based online learning controller and incorporate Adaptive Moment Estimation (ADAM) to improve closed-loop performance. We further establish convergence and performance guarantees for the resulting closed-loop system.
Simulations on an industrial-park DHS in Northern China show that the proposed method achieves stable near-optimal operation and strong empirical robustness to both static and time-varying model mismatch under practical disturbance conditions.

1. Introduction

Heating systems account for a significant share of global energy consumption and greenhouse gas emissions. Improving the operational efficiency and flexibility of district heating systems (DHSs) is therefore important for low-carbon energy transitions. Widely deployed in China, Russia, and Europe, DHSs distribute thermal energy through large-scale pipeline networks. Their increasing integration with renewable and waste-heat sources reduces reliance on fossil fuels, but also increases operational uncertainty and coordination complexity [15].

In practical DHS operation, coordinating economic dispatch and temperature regulation remains challenging under demand uncertainty and model mismatch. Existing forecast-based and model-based control strategies can perform well when disturbance predictions and nominal models are accurate, but their performance may degrade under uncertain heat demand and changing operating conditions. This motivates a closer examination of temperature regulation for DHSs under uncertainty.

Although temperature regulation has been widely studied in buildings [3], DHSs differ substantially in system structure, control objectives, and operating conditions. Compared with building heating control, DHS regulation requires coordinated heat generation, transport, and allocation over large-scale networks, where control performance depends on network interconnections and thermal transport dynamics [1, 16]. This motivates the development of control frameworks for DHSs that can maintain economically efficient and thermally stable operation under uncertainty.

∗ Corresponding author. xy343@cam.ac.uk (X. Yi); icl20@cam.ac.uk (I. Lestas). ORCID(s): 0000-0003-1797-6280 (X. Yi)

1.1. Forecast-reliant and model-based DHS operation

In practice, DHS operation often combines forecast-based setpoint scheduling with real-time setpoint tracking [1, 16]. However, forecast errors and model mismatch can degrade both thermal and economic performance under uncertain heat demand [6, 10]. Model predictive control (MPC) partially alleviates this issue through receding-horizon re-optimization [11]. Related DHS operation frameworks have been developed using improved load forecasting methods [8, 24] together with control-oriented or reduced-order thermal models [14, 19, 28] to improve thermal and economic performance. However, their practical performance remains sensitive to forecast quality and model accuracy, especially when heat demand varies and model mismatch is present.

1.2. Data-driven enhancements within MPC-based DHS operation

Building on these MPC frameworks, recent studies have further introduced data-driven components to mitigate the impact of model mismatch in DHS operation. However, rather than removing the reliance on model knowledge, these methods are still largely developed within MPC frameworks. For example, recent works have explored physics-informed neural network models for predictive control [7] and AI-based approaches for steam system modeling from a graph perspective [27]. Nevertheless, these approaches remain prediction-reliant and model-reliant: their performance still depends on the quality of the predictive model and its associated information, while formal closed-loop guarantees under prediction errors and model mismatch remain limited.
These limitations motivate a shift from prediction-reliant MPC frameworks towards online data-driven control frameworks that can support economically consistent and reliable DHS operation under uncertainty while providing stronger closed-loop guarantees. Feedback-based optimization controllers [9] provide an alternative, but their fast-optimization assumption may be restrictive for slow thermal dynamics. Our previous linear quadratic regulator (LQR) framework [26] guarantees convergence to the economically optimal operating point under unknown deterministic disturbances, but does not account for stochastic demand variations or renewable-heat fluctuations encountered in practical operation.

Xinyi Yi and Ioannis Lestas: Preprint submitted to Elsevier

1.3. Online data-driven control opportunities for real-time economic dispatch and temperature regulation in DHSs

Data-driven LQR provides a promising framework for online DHS control when accurate system models are difficult to maintain and strong closed-loop guarantees are desired. Existing approaches broadly fall into two categories: indirect methods, which first identify system dynamics and then solve a certainty-equivalent (CE) control problem, and direct methods, which learn the control policy directly from data. For large-scale DHSs under uncertainty and model mismatch, direct methods are particularly attractive because they reduce reliance on repeated model identification and can adapt more naturally to closed-loop operating data. Representative direct data-driven LQR methods include gradient-based policy updates [17, 22] and Data-Enabled Policy Optimization (DeePO) [29].
Gradient-based methods often require relatively long data trajectories to estimate gradients or value functions accurately, which can limit online sample efficiency. By contrast, DeePO constructs a covariance-based surrogate objective directly from closed-loop data, making it well suited to online adaptation from a single operating trajectory. This is particularly relevant for DHS operation, where repeated restarts, multiple independent experiments, or extensive exploration are typically impractical.

Despite these advantages, existing DeePO studies have mainly been validated on low-dimensional benchmark systems, and its online implementation can still be sensitive to stochastic disturbances, time-correlated data, and surrogate bias under model mismatch. To improve performance in this setting, we incorporate Adaptive Moment Estimation (ADAM) [13] into the DeePO update. Although ADAM is widely used to accelerate gradient-based learning [4, 12], its convergence properties for LQR policy learning do not follow directly from existing general-purpose results, because the cost depends implicitly on Lyapunov equations. This motivates an ADAM-enhanced DeePO framework for optimal real-time economic dispatch and temperature regulation in DHSs under demand uncertainty and model mismatch.

1.4. Contributions and paper organization

The main contributions of this work are threefold:

• Economically consistent online control formulation for DHS operation. We develop a data-driven online control framework for real-time optimal economic dispatch and temperature regulation in DHSs. Steady-state economic optimality conditions are embedded into the DHS temperature dynamics, yielding an augmented regulation problem whose closed-loop equilibrium coincides with the economically optimal operating point, without relying on disturbance forecasts for control design or real-time operation.
• Online data-driven control with convergence and stability guarantees. Based on Data-Enabled Policy Optimization (DeePO), we develop an online data-driven controller for DHSs under stochastic disturbances and model mismatch, and establish convergence to an optimal control policy, together with closed-loop stability guarantees.

• ADAM-enhanced online policy learning with improved closed-loop performance. We incorporate Adaptive Moment Estimation (ADAM) into the DeePO update to improve closed-loop performance, and establish convergence guarantees for the resulting ADAM-enhanced scheme in large-scale DHS control.

The remainder of the paper is organized as follows. Section 2 presents the DHS model. Sections 3 and 4 develop the augmented LQR framework and the DeePO-based online controller design. Section 5 presents simulation results on an industrial-scale DHS with model mismatch and time-varying parameter perturbations. Section 6 concludes the paper.

2. District heating system (DHS) model

2.1. Temperature dynamics of DHSs

District heating system (DHS) temperature dynamics can be modeled at different fidelity levels. High-fidelity partial differential equation (PDE) models capture spatiotemporal heat transport in detail, but are often impractical for real-time optimization and learning-based control because of their high dimensionality and nonlinear structure [21]. Reduced-order models that emphasize aggregate heat transport and node-level mixing are therefore more suitable for scalable network-level control [1, 14, 16, 18]. Representative DHS pipeline models are summarized in Table 1. We therefore adopt a control-oriented DHS temperature model based on energy conservation and mass-flow mixing, which yields a low-dimensional state-space representation while preserving the network topology and interconnection structure.
Table 1
Comparison of representative DHS pipeline models. Here, $T_i^k$ denotes the outlet temperature of the $i$th pipe segment at time step $k$, $\mathbf{T}_{\mathrm{in}}$ and $\mathbf{T}$ denote the inlet and outlet temperature vectors, respectively, $\mathbf{v}$ is the diagonal flow-velocity matrix, $T_a$ is the ambient temperature, and $\rho$, $C_p$, $\boldsymbol{\lambda}$, $A$, and $\mathbf{V}$ denote the fluid density, specific heat capacity, heat-transfer coefficient, pipe cross-sectional area, and pipe volume, respectively. $Q_i^k$ is the heat loss in the $i$th pipe segment at time step $k$.

Reference | Model
[21] | $\dfrac{T_i^{k+1} - T_i^k}{\Delta t} = -v\,\dfrac{T_i^k - T_{i-1}^k}{\Delta x} - \dfrac{Q_i^k}{\rho C_p A\,\Delta x}$
[1] | $\rho C_p \mathbf{V}\dot{\mathbf{T}} = \rho C_p A\,\mathbf{v}\,(\mathbf{T}_{\mathrm{in}} - \mathbf{T}) + \boldsymbol{\lambda}\,(T_a - \mathbf{T})$
[14, 16, 18] | $\rho C_p \mathbf{V}\dot{\mathbf{T}} = \rho C_p A\,\mathbf{v}\,(\mathbf{T}_{\mathrm{in}} - \mathbf{T})$

Let $\mathcal{E}$ denote the set of edges (heat exchangers and pipelines) and $\mathcal{N}$ the set of nodes (storage tanks). The edge dynamics in (1a) capture the dependence of outlet temperatures on inlet–outlet temperature differences, while the node dynamics in (1b) describe the balance between variations in stored thermal energy and the net inflow from adjacent edges, where $T_j^E$ is the outlet temperature of edge $j$ and $T_k^N$ is the temperature of node $k$:

$$\rho C_p V_j^E \dot{T}_j^E = \rho C_p q_j^E\,(T_k^N - T_j^E) + h_j^G - h_j^L, \quad j \in \mathcal{E},\ k \in \mathcal{N}, \tag{1a}$$

$$\rho C_p V_k^N \dot{T}_k^N = \sum_{j \in \mathcal{T}_k} \rho C_p q_j^E\,(T_j^E - T_k^N), \quad k \in \mathcal{N}. \tag{1b}$$

Here $h_j^G$ and $h_j^L$ denote the heat-source and heat-load powers at edge $j$, respectively, and $\mathcal{T}_k$ is the set of edges whose outlets are node $k$. The parameters $V_j^E$ and $V_k^N$ represent edge and node volumes, $q_j^E$ is the mass-flow rate, and $C_p$ and $\rho$ are the specific heat capacity and density of water. The edge set $\mathcal{E}$ is partitioned into the heat producer set $\mathcal{E}^G$, the heat load set $\mathcal{E}^L$, and the pipeline set $\mathcal{E}^P$. For an edge $j \in \mathcal{E}^G$, we have $h_j^L = 0$. For an edge $j \in \mathcal{E}^L$, we have $h_j^G = 0$ and $h_j^L$ is prescribed. For an edge $j \in \mathcal{E}^P$, we have $h_j^G = 0$ and $h_j^L = 0$.

Equations (1a)–(1b) can be compactly expressed in matrix form as¹
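$$\rho C_p \mathbf{V}\dot{\mathbf{T}} = -\rho C_p \mathbf{A}_h \mathbf{T} + \rho C_p \begin{bmatrix} \mathbf{h}^G \\ -\mathbf{h}^L \\ \mathbf{0} \end{bmatrix}, \tag{2a}$$

$$\mathbf{V}\dot{\mathbf{T}} = -\mathbf{A}_h \mathbf{T} + \begin{bmatrix} \mathbf{h}^G \\ -\mathbf{h}^L \\ \mathbf{0} \end{bmatrix}, \tag{2b}$$

$$\dot{\mathbf{T}} = -\mathbf{A}_1 \mathbf{T} + \mathbf{B}_1 \mathbf{h}^G - \mathbf{B}_2 \mathbf{h}^L, \tag{2c}$$

where $\mathbf{h}^G$ and $\mathbf{h}^L$ denote the production and load vectors, respectively, scaled by $1/(\rho C_p)$, and

$$\mathbf{A}_1 = \mathbf{V}^{-1}\mathbf{A}_h, \quad \mathbf{B}_1 = \mathbf{V}^{-1}\begin{bmatrix} \mathbf{I} \\ \mathbf{0} \\ \mathbf{0} \end{bmatrix}, \quad \mathbf{B}_2 = \mathbf{V}^{-1}\begin{bmatrix} \mathbf{0} \\ \mathbf{I} \\ \mathbf{0} \end{bmatrix}.$$

The matrix $\mathbf{A}_h$ is defined as

$$\mathbf{A}_h = \begin{bmatrix} \mathrm{diag}(\mathbf{q}_E) & -\mathrm{diag}(\mathbf{q}_E)\,\mathbf{B}_{sh}^\top \\ -\mathbf{B}_{th}\,\mathrm{diag}(\mathbf{q}_E) & \mathrm{diag}(\mathbf{B}_{th}\mathbf{q}_E) \end{bmatrix}.$$

$\mathbf{A}_h$ is a constant matrix for a given mass flow vector $\mathbf{q}_E$, where $\mathbf{B}_{th} = \tfrac{1}{2}(|\mathbf{B}_h| + \mathbf{B}_h)$ and $\mathbf{B}_{sh} = \tfrac{1}{2}(|\mathbf{B}_h| - \mathbf{B}_h)$, $\mathbf{B}_h$ is the incidence matrix of the DHS, and $|\mathbf{B}_h|$ denotes the elementwise absolute value of $\mathbf{B}_h$ [18].

¹ $\mathbf{A}_h$ satisfies $\mathbf{A}_h \mathbf{1} = \mathbf{0}$, $\mathbf{1}^\top \mathbf{A}_h = \mathbf{0}$, and $\mathbf{A}_h + \mathbf{A}_h^\top \succeq \mathbf{0}$ with a simple zero eigenvalue, hence it can be regarded as a Kirchhoff matrix of the heating network. Therefore, the null space of $\mathbf{A}_h$ and $\mathbf{A}_1$ is $\{\alpha \mathbf{1}\}$, where $\alpha \in \mathbb{R}$.

2.2. Steady-state optimization of DHSs

Given the steady-state heat demand $\bar{\mathbf{h}}^L$, the steady-state DHS operation is characterized by the following two optimization problems. Problem E1 determines the economically optimal heat generation, while problem E2 selects the corresponding temperature profile by minimizing temperature deviation over the equilibrium set.

$$\text{E1:} \quad \min_{\mathbf{h}^G \in \mathbb{R}^{n_G},\ \mathbf{T} \in \mathbb{R}^{n_T}} \ \tfrac{1}{2}\,(\mathbf{h}^G)^\top \mathbf{F}_G\, \mathbf{h}^G, \tag{3a}$$

$$\text{s.t.} \quad \mathbf{A}_1 \mathbf{T} = \mathbf{B}_1 \mathbf{h}^G - \mathbf{B}_2 \bar{\mathbf{h}}^L, \tag{3b}$$

where $\mathbf{F}_G = \mathrm{diag}(c_j) \succ \mathbf{0}$ collects the producer cost coefficients $c_j$ for $j \in \mathcal{E}^G$. E1 admits a unique optimizer $\mathbf{h}^{G\star}$. The associated equilibrium temperature set is $\mathbf{T} = \mathbf{A}_1^\dagger(\mathbf{B}_1 \mathbf{h}^{G\star} - \mathbf{B}_2 \bar{\mathbf{h}}^L) + \alpha\mathbf{1}$, $\alpha \in \mathbb{R}$, where $\mathbf{A}_1^\dagger$ denotes the Moore–Penrose pseudoinverse. Problem E2 then determines $\mathbf{T}^\star$ by minimizing the temperature-deviation cost over this set.

$$\text{E2:} \quad \min_{\alpha \in \mathbb{R},\ \mathbf{T} \in \mathbb{R}^{n_T}} \ \tfrac{1}{2}\,\mathbf{T}^\top \mathbf{F}_D\, \mathbf{T}, \tag{4a}$$

$$\text{s.t.} \quad \mathbf{T} = \mathbf{A}_1^\dagger(\mathbf{B}_1 \mathbf{h}^{G\star} - \mathbf{B}_2 \bar{\mathbf{h}}^L) + \alpha\mathbf{1}, \tag{4b}$$

where $\mathbf{F}_D = \mathrm{diag}(d_k) \succ \mathbf{0}$, and $d_k$ represents the temperature deviation cost coefficient at node $k$.

The following result characterizes the steady-state optimality conditions that link the DHS equilibrium to problems E1 and E2.

Theorem 1.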
If the DHS (2c) achieves an equilibrium at $\mathbf{T}^\star$ and $\mathbf{h}^{G\star}$, and satisfies $\mathbf{F}_M \mathbf{h}^{G\star} = \mathbf{0}$ and $\mathbf{1}^\top \mathbf{F}_D \mathbf{T}^\star = 0$, where $\mathbf{F}_M \in \mathbb{R}^{(n_G - 1) \times n_G}$ is defined by

$$\mathbf{F}_M = \begin{bmatrix} c_1 & -c_2 & 0 & \cdots & 0 \\ 0 & c_2 & -c_3 & \cdots & 0 \\ \vdots & \vdots & \ddots & \ddots & \vdots \\ 0 & 0 & \cdots & c_{n_G - 1} & -c_{n_G} \end{bmatrix},$$

then it uniquely solves the optimization problems E1 and E2.

The proof is deferred to Appendix A.

3. LQR problem formulation

In this paper, $\|\cdot\|$ denotes the Euclidean norm, $\|\cdot\|_2$ the induced 2-norm, $\|\cdot\|_F$ the Frobenius norm, $\|\cdot\|_*$ the nuclear norm, and $\|\cdot\|_{\mathcal{H}_2}$ the $\mathcal{H}_2$ norm. The notation $\langle \mathbf{A}, \mathbf{B} \rangle := \mathrm{Tr}(\mathbf{A}^\top \mathbf{B})$ denotes the Frobenius inner product.

3.1. Discrete-time temperature dynamics

To facilitate controller design and simulation, we discretize the continuous-time dynamics in (2c). For simplicity, we use Euler discretization with sampling interval $\tau$. The controller developed in the remainder of the paper is not restricted to this choice and can be applied with any discretization method that yields discrete-time dynamics with a constant sampling interval. The resulting discrete-time DHS model is

$$\mathbf{T}_{k+1} = (\mathbf{I} - \tau\mathbf{A}_1)\mathbf{T}_k + \tau\mathbf{B}_1 \mathbf{h}^G_k - \tau\mathbf{B}_2(\mathbf{h}^{L,\mathrm{con}}_k + \mathbf{h}^{L,\mathrm{sto}}_k) = \mathbf{A}_T \mathbf{T}_k + \mathbf{B}_T \mathbf{h}^G_k + \mathbf{B}_{LT}\mathbf{h}^{L,\mathrm{con}}_k + \mathbf{B}_{LT}\mathbf{h}^{L,\mathrm{sto}}_k, \tag{5}$$

where the heat load $\mathbf{h}^L_k$ is decomposed into a slow-varying component $\mathbf{h}^{L,\mathrm{con}}_k$ and a fast-varying stochastic component $\mathbf{h}^{L,\mathrm{sto}}_k$.

3.2. Output and error definition

We aim to design a temperature regulator that ensures convergence to the optimal equilibrium point $(\mathbf{T}^\star, \mathbf{h}^{G\star})$ defined by the solutions of E1 and E2. To achieve this, we introduce an error signal whose convergence to zero guarantees satisfaction of the corresponding optimality conditions. Specifically, the error definition is constructed directly from the E1–E2 optimality conditions in Theorem 1, with $\mathbf{e}_k$ having the same dimension as $\mathbf{h}^G_k$:

$$\mathbf{e}_k = \begin{bmatrix} \mathbf{0} \\ \mathbf{1}^\top \mathbf{F}_D \end{bmatrix}\mathbf{T}_k + \begin{bmatrix} \mathbf{F}_M \\ \mathbf{0} \end{bmatrix}\mathbf{h}^G_k = \mathbf{C}_T \mathbf{T}_k + \mathbf{D}_T \mathbf{h}^G_k. \tag{6}$$

3.3. Augmented dynamics

To achieve both temperature regulation and economically consistent steady-state operation, we design the controller so that the optimality error satisfies $\mathbb{E}[\mathbf{e}_k] \to \mathbf{0}$ and the heat-generation increment satisfies $\mathbb{E}[\mathbf{h}^G_k - \mathbf{h}^G_{k-1}] \to \mathbf{0}$ at equilibrium in expectation. This motivates the augmented state

$$\mathbf{x}_{k+1} = \begin{bmatrix} \mathbf{T}_{k+1} - \mathbf{T}_k \\ \mathbf{e}_k \end{bmatrix},$$

and the resulting augmented dynamics are described as follows:

$$\mathbf{x}_{k+1} = \begin{bmatrix} \mathbf{A}_T & \mathbf{0} \\ \mathbf{C}_T & \mathbf{I} \end{bmatrix}\mathbf{x}_k + \begin{bmatrix} \mathbf{B}_T \\ \mathbf{D}_T \end{bmatrix}\mathbf{u}_k + \begin{bmatrix} \mathbf{B}_{LT} \\ \mathbf{0} \end{bmatrix}\mathbf{w}_k = \mathbf{A}\mathbf{x}_k + \mathbf{B}\mathbf{u}_k + \mathbf{B}_w \mathbf{w}_k, \tag{7a}$$

$$\mathbf{u}_k = \mathbf{h}^G_k - \mathbf{h}^G_{k-1}, \quad \mathbf{w}_k = \mathbf{h}^{L,\mathrm{sto}}_k - \mathbf{h}^{L,\mathrm{sto}}_{k-1}, \tag{7b}$$

$$\mathbf{e}_k = \begin{bmatrix} \mathbf{C}_T & \mathbf{I} \end{bmatrix}\mathbf{x}_k + \mathbf{D}_T \mathbf{u}_k = \mathbf{C}\mathbf{x}_k + \mathbf{D}\mathbf{u}_k. \tag{7c}$$

Assumption 1. The pair $(\mathbf{A}, \mathbf{B})$ in (7) is controllable.

Assumption 2 (Bounded disturbances with finite covariance). The disturbance sequence $\{\mathbf{w}_k\}$ is zero-mean and uniformly bounded. Specifically, there exists a constant $\delta > 0$ such that $\|\mathbf{w}_k\| \le \delta$ for all $k$. Moreover, the disturbance has a finite, time-invariant covariance matrix, i.e., $\mathbb{E}[\mathbf{w}_k] = \mathbf{0}$, $\mathbb{E}[\mathbf{w}_k \mathbf{w}_k^\top] = \mathbf{U}_{\mathrm{dis}} \succeq \mathbf{0}$, where $\mathbf{U}_{\mathrm{dis}}$ is finite.

Definition 1. The augmented system (7) has an input dimension of $m = n_G$ and a state dimension of $n = n_T + n_G$.

Proposition 1 (Convergence to the optimal operating point under a stabilizing feedback law). Consider the original DHS (2c) and its augmented representation in (7) under the state-feedback law $\mathbf{u}_k = \mathbf{K}\mathbf{x}_k$, where $\mathbf{K}$ is stabilizing. If $\mathbb{E}[\mathbf{x}_k] \to \mathbf{0}$, $\mathbb{E}[\mathbf{u}_k] \to \mathbf{0}$, and $\mathbb{E}[\mathbf{e}_k] \to \mathbf{0}$, then the original DHS converges in expectation to its optimal equilibrium $(\mathbf{T}^\star, \mathbf{h}^{G\star})$ defined in (3)–(4). Moreover, under Assumption 2, the closed-loop state is mean-square bounded, i.e., $\sup_k \mathbb{E}\|\mathbf{x}_k\|^2 < \infty$; in fact, $\mathbb{E}[\mathbf{x}_k \mathbf{x}_k^\top]$ converges to a unique stationary covariance that scales with the disturbance covariance level.

PROOF. From $\mathbb{E}[\mathbf{u}_k] = \mathbb{E}[\mathbf{h}^G_k - \mathbf{h}^G_{k-1}] = \mathbf{0}$ it follows that $\mathbb{E}[\mathbf{h}^G_k]$ is constant for $k$ sufficiently large.
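Together with $\mathbb{E}[\mathbf{x}_k] \to \mathbf{0}$ and $\mathbb{E}[\mathbf{e}_k] \to \mathbf{0}$, the expected steady state of the original DHS satisfies the KKT-based optimality conditions characterized in Theorem 1; hence the original DHS converges in expectation to $(\mathbf{T}^\star, \mathbf{h}^{G\star})$. Since $\mathbf{K}$ is stabilizing, the closed-loop matrix satisfies $\rho(\mathbf{A} + \mathbf{B}\mathbf{K}) < 1$, where $\rho(\cdot)$ denotes the spectral radius. With zero-mean disturbances of finite covariance, the standard discrete-time Lyapunov argument implies mean-square boundedness and convergence of $\mathbb{E}[\mathbf{x}_k \mathbf{x}_k^\top]$ to the stationary covariance.

3.4. Model-based LQR temperature regulator

3.4.1. Stationary distribution of $\mathbf{x}_k$

To support online learning, we inject a bounded exploration signal with magnitude $\sigma > 0$. Let $\{\mathbf{w}_{e,k}\}$ be a zero-mean bounded sequence with $\mathbb{E}[\mathbf{w}_{e,k}] = \mathbf{0}$ and $\mathbb{E}[\mathbf{w}_{e,k}\mathbf{w}_{e,k}^\top] = \mathbf{I}$. The control input is $\mathbf{u}_k = \mathbf{K}\mathbf{x}_k + \sigma\mathbf{w}_{e,k}$, yielding the closed-loop dynamics $\mathbf{x}_{k+1} = (\mathbf{A} + \mathbf{B}\mathbf{K})\mathbf{x}_k + \boldsymbol{\epsilon}_k$, where $\boldsymbol{\epsilon}_k := \mathbf{B}_w \mathbf{w}_k + \sigma\mathbf{B}\mathbf{w}_{e,k}$. Under Assumption 2, $\{\mathbf{w}_k\}$ and $\{\mathbf{w}_{e,k}\}$ are zero-mean and independent, so $\boldsymbol{\epsilon}_k$ is zero-mean with covariance $\mathbb{E}[\boldsymbol{\epsilon}_k \boldsymbol{\epsilon}_k^\top] = \mathbf{B}_w \mathbf{U}_{\mathrm{dis}} \mathbf{B}_w^\top + \sigma^2 \mathbf{B}\mathbf{B}^\top =: \mathbf{U}_\epsilon \succeq \mathbf{0}$. Since both sequences are bounded, $\{\boldsymbol{\epsilon}_k\}$ is also bounded. For $\rho(\mathbf{A} + \mathbf{B}\mathbf{K}) < 1$, the closed-loop state process admits a stationary covariance $\mathbf{U}_K$, which is the unique positive semidefinite solution to

$$\mathbf{U}_K = \mathbf{U}_\epsilon + (\mathbf{A} + \mathbf{B}\mathbf{K})\,\mathbf{U}_K\,(\mathbf{A} + \mathbf{B}\mathbf{K})^\top, \tag{8a}$$

$$\mathbf{U}_K = \sum_{i=0}^{\infty} (\mathbf{A} + \mathbf{B}\mathbf{K})^i\, \mathbf{U}_\epsilon\, \big[(\mathbf{A} + \mathbf{B}\mathbf{K})^i\big]^\top. \tag{8b}$$

This is a standard result for stable discrete-time stochastic linear systems [30].

3.4.2. Cost function

To quantify long-run regulation performance, we define the performance output as

$$\mathbf{z}_k = \begin{bmatrix} \mathbf{Q}^{1/2}\mathbf{x}_k \\ \mathbf{R}^{1/2}\mathbf{u}_k \end{bmatrix},$$

where $\mathbf{Q} \succ \mathbf{0}$ and $\mathbf{R} \succ \mathbf{0}$ weight state deviations and control effort, respectively. We consider the feedback law $\mathbf{u}_k = \mathbf{K}\mathbf{x}_k$. Under Assumption 2 and a stabilizing gain $\mathbf{K}$ satisfying $\rho(\mathbf{A} + \mathbf{B}\mathbf{K}) < 1$, the closed-loop system admits a unique stationary covariance.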
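As a numerical illustration of (8a)–(8b), the sketch below computes the stationary covariance of a small closed-loop system by iterating the Lyapunov recursion, whose iterates are the partial sums of the series in (8b). The two-state matrices, the hand-picked gain, and the function name `stationary_covariance` are hypothetical choices for illustration only; they are not a DHS model and do not come from the paper.

```python
import numpy as np


def stationary_covariance(A_cl, U_eps, tol=1e-12, max_iter=100_000):
    """Fixed-point iteration U <- U_eps + A_cl U A_cl^T.

    Starting from U = U_eps, the k-th iterate equals the k-th partial sum of
    the series in (8b), so the limit solves the Lyapunov equation (8a).
    """
    rho = np.max(np.abs(np.linalg.eigvals(A_cl)))
    if rho >= 1.0:
        raise ValueError("closed-loop matrix must satisfy rho(A + B K) < 1")
    U = U_eps.copy()
    for _ in range(max_iter):
        U_next = U_eps + A_cl @ U @ A_cl.T
        if np.linalg.norm(U_next - U) < tol:
            return U_next
        U = U_next
    raise RuntimeError("Lyapunov iteration did not converge")


# Hypothetical two-state example (illustrative numbers, not a DHS model).
A = np.array([[1.0, 0.1], [0.0, 1.0]])
B = np.array([[0.0], [0.1]])
K = np.array([[-5.0, -6.0]])   # hand-picked stabilizing gain
B_w = np.eye(2)                # disturbance input matrix
U_dis = 0.01 * np.eye(2)       # disturbance covariance
sigma = 0.1                    # exploration magnitude

A_cl = A + B @ K
# Aggregated noise covariance: B_w U_dis B_w^T + sigma^2 B B^T.
U_eps = B_w @ U_dis @ B_w.T + sigma**2 * (B @ B.T)
U_K = stationary_covariance(A_cl, U_eps)

# Residual of (8a); it should be close to zero.
residual = np.linalg.norm(U_K - U_eps - A_cl @ U_K @ A_cl.T)
print("rho(A + BK) =", np.max(np.abs(np.linalg.eigvals(A_cl))))
print("Lyapunov residual =", residual)
```

For larger systems a dedicated Lyapunov solver would normally replace the plain iteration; the loop is used here only because it mirrors the series (8b) directly.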
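The corresponding $\mathcal{H}_2$ cost of the closed-loop map $\mathcal{T}(\mathbf{K})$ from the disturbance channel $\mathbf{B}_w$ to $\mathbf{z}$ is

$$J(\mathbf{K}) := \|\mathcal{T}(\mathbf{K})\|_{\mathcal{H}_2}^2 = \mathrm{Tr}\big((\mathbf{Q} + \mathbf{K}^\top \mathbf{R}\mathbf{K})\,\mathbf{U}_K\big), \tag{9a}$$

$$J(\mathbf{K}) = \mathrm{Tr}\big(\mathbf{P}_K \mathbf{U}_\epsilon\big), \tag{9b}$$

where $\mathbf{P}_K \succeq \mathbf{0}$ is the unique solution to

$$\mathbf{P}_K = \mathbf{Q} + \mathbf{K}^\top \mathbf{R}\mathbf{K} + (\mathbf{A} + \mathbf{B}\mathbf{K})^\top \mathbf{P}_K (\mathbf{A} + \mathbf{B}\mathbf{K}). \tag{10}$$

Equivalently, $J(\mathbf{K})$ is the infinite-horizon long-run average quadratic cost $J(\mathbf{K}) = \lim_{T \to \infty} \frac{1}{T}\sum_{k=0}^{T-1} \mathbb{E}[\mathbf{z}_k^\top \mathbf{z}_k]$.

3.4.3. Model-based LQR

When the system matrices $(\mathbf{A}, \mathbf{B})$ are known and the pair is stabilizable, the infinite-horizon discrete-time LQR problem admits the optimal state-feedback solution

$$\mathbf{K}^\star = -(\mathbf{R} + \mathbf{B}^\top \mathbf{P}^\star \mathbf{B})^{-1}\mathbf{B}^\top \mathbf{P}^\star \mathbf{A}, \tag{11}$$

where $\mathbf{P}^\star \succeq \mathbf{0}$ is the stabilizing solution to the discrete-time algebraic Riccati equation. This standard model-based solution serves as a benchmark for the subsequent data-driven controller design [2].

3.4.4. Indirect certainty-equivalence (CE) LQR

As a benchmark data-driven approach, CE first identifies a model from data and then solves the corresponding LQR problem as if the identified dynamics were exact. Consider a trajectory of length $t$ generated under a stabilizing policy $\mathbf{K}_0$, and define

$$\begin{aligned} \mathbf{X}_0 &:= [\mathbf{x}_0\ \mathbf{x}_1\ \cdots\ \mathbf{x}_{t-1}] \in \mathbb{R}^{n \times t}, & \mathbf{U}_0 &:= [\mathbf{u}_0\ \mathbf{u}_1\ \cdots\ \mathbf{u}_{t-1}] \in \mathbb{R}^{m \times t}, \\ \mathbf{X}_1 &:= [\mathbf{x}_1\ \mathbf{x}_2\ \cdots\ \mathbf{x}_t] \in \mathbb{R}^{n \times t}, & \mathbf{W}_0 &:= [\mathbf{w}_0\ \mathbf{w}_1\ \cdots\ \mathbf{w}_{t-1}] \in \mathbb{R}^{n \times t}. \end{aligned} \tag{12}$$

Let $\mathbf{D}_0 := \begin{bmatrix} \mathbf{U}_0 \\ \mathbf{X}_0 \end{bmatrix} = [\mathbf{d}_0\ \mathbf{d}_1\ \cdots\ \mathbf{d}_{t-1}]$ and $\boldsymbol{\Phi} := \frac{1}{t}\mathbf{D}_0 \mathbf{D}_0^\top$, so that $\mathbf{X}_1 = \mathbf{A}\mathbf{X}_0 + \mathbf{B}\mathbf{U}_0 + \mathbf{W}_0$.

Assumption 3 (Persistent excitation). The input sequence $\{\mathbf{u}_k\}$ is persistently exciting of order $n + m$, so that the data matrix $\mathbf{D}_0$ has full row rank $n + m$.

Under Assumption 3, the least-squares estimate is

$$[\hat{\mathbf{B}}, \hat{\mathbf{A}}] = \bar{\mathbf{X}}_1 \boldsymbol{\Phi}^{-1}, \quad \bar{\mathbf{X}}_1 := \tfrac{1}{t}\mathbf{X}_1 \mathbf{D}_0^\top. \tag{13}$$

The aggregated-disturbance covariance is estimated by

$$\hat{\boldsymbol{\varepsilon}}_0 = \mathbf{X}_1 - (\hat{\mathbf{A}} + \hat{\mathbf{B}}\mathbf{K}_0)\mathbf{X}_0, \quad \hat{\mathbf{U}}_\epsilon = \tfrac{1}{t}\hat{\boldsymbol{\varepsilon}}_0 \hat{\boldsymbol{\varepsilon}}_0^\top. \tag{14}$$

Fixing $\hat{\mathbf{U}}_\epsilon$ from the initial batch, the CE LQR problem is

$$\min_{\mathbf{K},\ \mathbf{U}_K} \ \hat{J}(\mathbf{K}) = \mathrm{Tr}\big((\mathbf{Q} + \mathbf{K}^\top \mathbf{R}\mathbf{K})\,\mathbf{U}_K\big), \tag{15a}$$

$$\text{s.t.} \quad \mathbf{U}_K = \hat{\mathbf{U}}_\epsilon + (\hat{\mathbf{A}} + \hat{\mathbf{B}}\mathbf{K})\,\mathbf{U}_K\,(\hat{\mathbf{A}} + \hat{\mathbf{B}}\mathbf{K})^\top. \tag{15b}$$

Remark 1 (Role of estimating $\mathbf{U}_\epsilon$). Although the optimal controller is theoretically independent of $\mathbf{U}_\epsilon$, estimating $\mathbf{U}_\epsilon$ improves the physical relevance of covariance-weighted learning in DHS applications, where disturbances may be anisotropic and state-correlated.

4. Data-enabled policy optimization (DeePO)

4.1. Covariance parameterization

Assumption 4 (Uniform boundedness of signals). There exist positive constants $\bar{x} > 0$, $\bar{u} > 0$, and $\bar{d} > 0$ such that $\|\mathbf{x}_k\| \le \bar{x}$, $\|\mathbf{u}_k\| \le \bar{u}$, and $\|\mathbf{d}_k\| \le \bar{d}$ for all $k \in \mathbb{N}$.

To obtain a data-enabled parameterization of the LQR problem, define $\bar{\mathbf{U}}_0 := \frac{1}{t}\mathbf{U}_0 \mathbf{D}_0^\top$, $\bar{\mathbf{X}}_0 := \frac{1}{t}\mathbf{X}_0 \mathbf{D}_0^\top$, $\bar{\mathbf{X}}_1 := \frac{1}{t}\mathbf{X}_1 \mathbf{D}_0^\top$, and $\bar{\mathbf{W}}_0 := \frac{1}{t}\mathbf{W}_0 \mathbf{D}_0^\top$. Then $\bar{\mathbf{X}}_1 = \mathbf{A}\bar{\mathbf{X}}_0 + \mathbf{B}\bar{\mathbf{U}}_0 + \bar{\mathbf{W}}_0$. Under Assumption 3, there exists a unique matrix $\mathbf{V} \in \mathbb{R}^{(n+m) \times n}$ such that

$$\begin{bmatrix} \mathbf{K} \\ \mathbf{I} \end{bmatrix} = \boldsymbol{\Phi}\mathbf{V} = \begin{bmatrix} \bar{\mathbf{U}}_0 \mathbf{V} \\ \bar{\mathbf{X}}_0 \mathbf{V} \end{bmatrix}.$$

Hence, the closed-loop matrix can be written as $\mathbf{A} + \mathbf{B}\mathbf{K} = (\bar{\mathbf{X}}_1 - \bar{\mathbf{W}}_0)\mathbf{V}$. Since $\{\mathbf{d}_k\}$ is uniformly bounded and $\{\mathbf{w}_k\}$ is zero-mean with finite covariance, $\|\bar{\mathbf{W}}_0\|_2 \to 0$ almost surely as $t \to \infty$. Thus, for large samples, $\mathbf{A} + \mathbf{B}\mathbf{K} \approx \bar{\mathbf{X}}_1 \mathbf{V}$. The covariance estimate is

$$\hat{\boldsymbol{\varepsilon}}_0 = \mathbf{X}_1 - \bar{\mathbf{X}}_1 \mathbf{V}\mathbf{X}_0, \quad \hat{\mathbf{U}}_\epsilon = \tfrac{1}{t}\hat{\boldsymbol{\varepsilon}}_0 \hat{\boldsymbol{\varepsilon}}_0^\top. \tag{16}$$

Using $\begin{bmatrix} \mathbf{K} \\ \mathbf{I} \end{bmatrix} = \boldsymbol{\Phi}\mathbf{V}$ and $\mathbf{K} = \bar{\mathbf{U}}_0 \mathbf{V}$, the CE-LQR problem in (15) can be rewritten in terms of $\mathbf{V}$ as

$$\min_{\mathbf{V},\ \mathbf{U}} \ \hat{J}(\mathbf{V}) = \mathrm{Tr}\big((\mathbf{Q} + \mathbf{V}^\top \bar{\mathbf{U}}_0^\top \mathbf{R}\bar{\mathbf{U}}_0 \mathbf{V})\,\mathbf{U}\big), \tag{17a}$$

$$\text{s.t.} \quad \mathbf{U} = \hat{\mathbf{U}}_\epsilon + (\bar{\mathbf{X}}_1 \mathbf{V})\,\mathbf{U}\,(\bar{\mathbf{X}}_1 \mathbf{V})^\top, \tag{17b}$$

$$\bar{\mathbf{X}}_0 \mathbf{V} = \mathbf{I}. \tag{17c}$$

Let $\mathbf{V} := \boldsymbol{\Phi}^{-1}\begin{bmatrix} \mathbf{K} \\ \mathbf{I} \end{bmatrix}$. Then (15) and (17) are equivalent, and the optimal gain is recovered as $\mathbf{K} = \bar{\mathbf{U}}_0 \mathbf{V}$ [29].

4.2. DeePO implementation

Assumption 5 (Feasibility of covariance parameterization). The covariance parameterization of the LQR problem in (17) admits a nonempty feasible set. Specifically, there exist constants $\zeta \in (0, 1)$ and $\kappa > 0$ such that

$$\mathcal{S} := \big\{\mathbf{V} \ \big|\ \bar{\mathbf{X}}_0 \mathbf{V} = \mathbf{I},\ \rho(\bar{\mathbf{X}}_1 \mathbf{V}) \le 1 - \zeta,\ \|\bar{\mathbf{U}}_0 \mathbf{V}\|_2 \le \kappa \big\}$$

is nonempty.

Theorem 2. Consider (17). Assume that $\mathbf{V}$ satisfies $\bar{\mathbf{X}}_0 \mathbf{V} = \mathbf{I}$ and that $\mathbf{U}$ is the unique solution of (17b) associated with $\mathbf{V}$. Then the gradient of $\hat{J}(\mathbf{V})$ in (17a) is

$$\nabla_{\mathbf{V}} \hat{J}(\mathbf{V}) = 2\big(\bar{\mathbf{U}}_0^\top \mathbf{R}\bar{\mathbf{U}}_0 + \bar{\mathbf{X}}_1^\top \mathbf{P}\bar{\mathbf{X}}_1\big)\mathbf{V}\mathbf{U}. \tag{18}$$

The proof is deferred to Appendix B.

4.2.1. Rank-1 gradient-descent implementation of DeePO

In the adaptive control setting, we collect online closed-loop data $(\mathbf{x}_t, \mathbf{u}_t, \mathbf{x}_{t+1})$ at each time step $t$, which are used to form the data matrices $(\mathbf{X}_{0,t+1}, \mathbf{U}_{0,t+1}, \mathbf{X}_{1,t+1})$. These data enable a single projected gradient-descent update of the parameterized policy at time $t$. The updated policy is then applied to the system, and the procedure is repeated iteratively. The method is summarized in Algorithm 1.

Algorithm 1: Rank-1 GD DeePO
Input: Initial stabilizing policy $\mathbf{K}_{t_0}$, stepsize $\eta$, and offline data $(\mathbf{X}_{0,t_0}, \mathbf{U}_{0,t_0}, \mathbf{X}_{1,t_0})$.
1 for $t = t_0, t_0 + 1, \ldots$ do
2   Apply $\mathbf{u}_t = \mathbf{K}_t \mathbf{x}_t$ and observe $\mathbf{x}_{t+1}$;
3   Form $\boldsymbol{\phi}_t := \begin{bmatrix} \mathbf{u}_t \\ \mathbf{x}_t \end{bmatrix}$;
4   Update $\bar{\mathbf{X}}_{0,t+1}$, $\bar{\mathbf{U}}_{0,t+1}$, $\bar{\mathbf{X}}_{1,t+1}$ recursively;
5   Update $\boldsymbol{\Phi}_{t+1} = \dfrac{t\,\boldsymbol{\Phi}_t + \boldsymbol{\phi}_t \boldsymbol{\phi}_t^\top}{t + 1}$ and compute $\boldsymbol{\Phi}_{t+1}^{-1}$;
6   Compute $\mathbf{V}_{t+1} = \boldsymbol{\Phi}_{t+1}^{-1}\begin{bmatrix} \mathbf{K}_t \\ \mathbf{I} \end{bmatrix}$;
7   Perform one-step projected GD:
    $$\mathbf{V}'_{t+1} = \mathbf{V}_{t+1} - \eta\,\boldsymbol{\Pi}_{\bar{\mathbf{X}}_{0,t+1}} \nabla \hat{J}_{t+1}(\mathbf{V}_{t+1}); \tag{19}$$
8   Update the control gain:
    $$\mathbf{K}_{t+1} = \bar{\mathbf{U}}_{0,t+1}\mathbf{V}'_{t+1}. \tag{20}$$

Assumption 6 (Persistency of excitation for online DeePO). For all $t \ge t_0$, the input sequence $\{\mathbf{u}_k\}_{k=0}^{t-1}$ provides a uniform level of excitation. Specifically, the input matrix $\mathbf{U}_{0,t} := [\mathbf{u}_0, \ldots, \mathbf{u}_{t-1}]$ is persistently exciting of order $n + 1$ with excitation level $\gamma\sqrt{t(n+1)}$, in the sense that the associated Hankel matrix

$$\mathcal{H}_{n+1}(\mathbf{U}_{0,t}) = \begin{bmatrix} \mathbf{u}_0 & \mathbf{u}_1 & \cdots & \mathbf{u}_{t-n-1} \\ \mathbf{u}_1 & \mathbf{u}_2 & \cdots & \mathbf{u}_{t-n} \\ \vdots & \vdots & \ddots & \vdots \\ \mathbf{u}_n & \mathbf{u}_{n+1} & \cdots & \mathbf{u}_{t-1} \end{bmatrix}$$

satisfies $\sigma_{\min}\big(\mathcal{H}_{n+1}(\mathbf{U}_{0,t})\big) \ge \gamma\sqrt{t(n+1)}$ for some constant $\gamma > 0$ independent of $t$.

Remark 2. Assumption 6 ensures that sufficiently informative data are available for covariance estimation and online policy updates, and together with Assumption 2 determines the SNR used in the subsequent analysis [29].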
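The excitation condition in Assumption 6 can be checked numerically on recorded inputs. The sketch below builds the block Hankel matrix of depth $n + 1$ and normalizes its smallest singular value by $\sqrt{t(n+1)}$, which gives an empirical estimate of the excitation level $\gamma$. The helper names `block_hankel` and `excitation_level` and the test signals are illustrative assumptions, not part of the paper or of any library.

```python
import numpy as np


def block_hankel(U, depth):
    """Stack `depth` shifted copies of the m x t input matrix U row-wise."""
    m, t = U.shape
    cols = t - depth + 1
    if cols < 1:
        raise ValueError("need at least `depth` input samples")
    return np.vstack([U[:, i:i + cols] for i in range(depth)])


def excitation_level(U, n):
    """Empirical gamma: sigma_min(H_{n+1}(U)) / sqrt(t (n + 1))."""
    t = U.shape[1]
    H = block_hankel(U, n + 1)
    if H.shape[0] > H.shape[1]:
        return 0.0  # too few columns for full row rank
    sigma_min = np.linalg.svd(H, compute_uv=False)[-1]
    return sigma_min / np.sqrt(t * (n + 1))


# Hypothetical scalar-input data with state dimension n = 2.
n = 2
rng = np.random.default_rng(0)
U_random = rng.standard_normal((1, 200))   # rich, noise-like input
U_constant = np.ones((1, 200))             # constant input, not exciting

H = block_hankel(U_random, n + 1)
gamma_random = excitation_level(U_random, n)
gamma_constant = excitation_level(U_constant, n)
print("Hankel shape:", H.shape)
print("gamma (random input):  ", gamma_random)
print("gamma (constant input):", gamma_constant)
```

A constant input yields a rank-one Hankel matrix and therefore an excitation level near zero, which is why an exploration signal is added to the feedback input during online learning.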
The signal-to-noise ratio (SNR) is defined as $\mathrm{SNR} := \gamma/\delta$, where $\gamma$ and $\delta$ are defined in Assumptions 6 and 2, respectively.

The sample covariance matrices are updated recursively. Let $\boldsymbol{\phi}_t := \begin{bmatrix} \mathbf{u}_t \\ \mathbf{x}_t \end{bmatrix}$. Then, for example,

$$\bar{\mathbf{X}}_{0,t+1} = \frac{t}{t+1}\bar{\mathbf{X}}_{0,t} + \frac{1}{t+1}\mathbf{x}_t \boldsymbol{\phi}_t^\top,$$

and similarly for $\bar{\mathbf{U}}_{0,t+1}$ and $\bar{\mathbf{X}}_{1,t+1}$. The sample covariance matrix satisfies $\boldsymbol{\Phi}_{t+1} = \dfrac{t\,\boldsymbol{\Phi}_t + \boldsymbol{\phi}_t \boldsymbol{\phi}_t^\top}{t+1}$. By the Sherman–Morrison formula [20], $\boldsymbol{\Phi}_{t+1}^{-1}$ is calculated as

$$\boldsymbol{\Phi}_{t+1}^{-1} = \frac{t+1}{t}\left(\boldsymbol{\Phi}_t^{-1} - \frac{\boldsymbol{\Phi}_t^{-1}\boldsymbol{\phi}_t \boldsymbol{\phi}_t^\top \boldsymbol{\Phi}_t^{-1}}{t + \boldsymbol{\phi}_t^\top \boldsymbol{\Phi}_t^{-1}\boldsymbol{\phi}_t}\right).$$

The projection operator $\boldsymbol{\Pi}_{\bar{\mathbf{X}}_{0,t+1}}$ in (19) denotes the orthogonal projection onto the tangent space of the affine constraint $\bar{\mathbf{X}}_{0,t+1}\mathbf{V} = \mathbf{I}$. It preserves the equality constraint (17c) and can be implemented either by the closed-form projector $\mathbf{I} - \bar{\mathbf{X}}_{0,t+1}^\top(\bar{\mathbf{X}}_{0,t+1}\bar{\mathbf{X}}_{0,t+1}^\top)^{-1}\bar{\mathbf{X}}_{0,t+1}$ or via a null-space parameterization.

For any gain $\mathbf{K}$, let $J(\mathbf{K})$ denote the true steady-state cost and $\hat{J}(\mathbf{K})$ its covariance-estimated counterpart. In the lifted formulation, let $\hat{J}(\mathbf{V})$ denote the corresponding objective in (17). We use the optimality gap $J(\mathbf{K}_t) - J^\star$ to quantify the convergence of the controller. Accordingly, define the regret

$$\mathrm{Regret}_T := \frac{1}{T}\sum_{t=t_0}^{t_0+T-1}\big(J(\mathbf{K}_t) - J^\star\big). \tag{21}$$

This regret measures the average steady-state performance gap between the online controller and the optimal steady-state policy. Let $\mathbf{e}_{\mathrm{noise}} := \mathbf{U}_\epsilon - \hat{\mathbf{U}}_\epsilon$ denote the covariance estimation error induced by finite data. The optimality gap in (21) can be decomposed as

$$J(\mathbf{K}_t) - J^\star = \underbrace{J(\mathbf{K}_t) - \hat{J}(\mathbf{K}_t)}_{\text{noise mismatch}} + \underbrace{\hat{J}(\mathbf{K}_t) - \hat{J}^\star}_{\text{CE regret}} + \underbrace{\hat{J}^\star - J^\star}_{\text{optimal bias}}, \tag{22}$$

where the noise mismatch is $J(\mathbf{K}_t) - \hat{J}(\mathbf{K}_t) = \mathrm{Tr}(\mathbf{P}_{K_t}\mathbf{e}_{\mathrm{noise}})$ and the optimal bias is $\hat{J}^\star - J^\star = -\mathrm{Tr}(\mathbf{P}^\star \mathbf{e}_{\mathrm{noise}})$; the sign follows from the definition of $\mathbf{e}_{\mathrm{noise}}$ and does not affect the magnitude bounds below.

Lemma 1. Under Assumptions 1–6, let Algorithm 1 run for $T := t - t_0 + 1$ steps. There exist positive constants $\nu_i > 0$, $i \in \{1, 2, 3, 4\}$, depending on $(\bar{x}, \bar{u}, \|\mathbf{R}\|_2, \underline{\sigma}(\mathbf{Q}), \underline{\sigma}(\mathbf{R}), J^\star)$, such that, if the stepsize satisfies $\eta \in (0, \nu_1]$ and $\mathrm{SNR} \ge \nu_2$, then

$$\frac{1}{T}\sum_{t=t_0}^{t_0+T-1}\big(\hat{J}(\mathbf{K}_t) - \hat{J}^\star\big) \le \frac{\nu_3}{\sqrt{T}} + \nu_4\,\mathrm{SNR}^{-1/2}. \tag{23}$$

The proof follows the framework of Theorem 2 in [29], which relies on projected gradient dominance and local smoothness of the objective over the feasible set. In our setting, replacing the unit disturbance covariance with a bounded covariance estimate $\hat{\mathbf{U}}_\epsilon$ does not change the proof structure; it only rescales the associated constants. Since our focus is on DHS modeling and covariance-aware robustification, the full derivation is omitted for brevity.

Lemma 2. Under Assumption 5, there exists a constant $\bar{P}_{\max,2} > 0$ such that for all $\mathbf{V} \in \mathcal{S}$ with $\mathbf{K} = \bar{\mathbf{U}}_0 \mathbf{V}$, the Lyapunov solution $\mathbf{P}_K$ in (10) satisfies $\|\mathbf{P}_K\|_2 \le \bar{P}_{\max,2}$. Consequently, $\|\mathbf{P}_K\|_* \le n\|\mathbf{P}_K\|_2 \le n\bar{P}_{\max,2} =: \bar{P}_{\max}$, uniformly for all $\mathbf{V} \in \mathcal{S}$.

The proof is deferred to Appendix C.

Assumption 7 (Covariance estimation mismatch). We assume that the mismatch noise is bounded in 2-norm: $\|\mathbf{e}_{\mathrm{noise}}\|_2 \le \varepsilon_{\mathrm{noise}}$.

Theorem 3. Suppose Assumptions 1–7 hold, and let Algorithm 1 run for $T := t - t_0 + 1$ iterations given offline data $(\mathbf{X}_{0,t_0}, \mathbf{U}_{0,t_0}, \mathbf{X}_{1,t_0})$. There exist positive constants $\nu_i > 0$, $i \in \{1, 2, 3, 4\}$, depending on $(\bar{x}, \bar{u}, \|\mathbf{R}\|_2, \underline{\sigma}(\mathbf{Q}), \underline{\sigma}(\mathbf{R}), J^\star)$, such that, if the stepsize satisfies $\eta \in (0, \nu_1]$ and $\mathrm{SNR} \ge \nu_2$, the regret satisfies

$$\mathrm{Regret}_T \le \frac{\nu_3}{\sqrt{T}} + \nu_4\,\mathrm{SNR}^{-1/2} + 2\bar{P}_{\max}\,\varepsilon_{\mathrm{noise}}. \tag{24}$$

The proof is deferred to Appendix D.

4.2.2. ADAM–GD implementation of DeePO

To improve the performance of online policy updates, we replace the lifted-variable standard gradient step in (19) with an ADAM-style preconditioned update (25)–(26), while keeping the same online recursive covariance updates as in DeePO. The resulting ADAM–DeePO procedure is summarized in Algorithm 2. Here, the ADAM moments $\mathbf{m}_t$, $\mathbf{v}_t$, $\hat{\mathbf{m}}_t$, $\hat{\mathbf{v}}_t$ have the same dimension as $\mathbf{V}$, namely $\mathbb{R}^{(n+m) \times n}$.
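A minimal sketch of the ADAM-style step (25) followed by the affine projection (26) is given below, assuming the gradient has already been computed. The function names, the hyperparameter defaults, and the small matrices are hypothetical illustrations; the paper's tuned values are not assumed here. The counter `k` plays the role of $t - t_0 + 1$ in the bias corrections.

```python
import numpy as np


def adam_step(V, g, m, v, k, eta=1e-2, beta1=0.9, beta2=0.999, eps=1e-8):
    """One elementwise ADAM update of V; k >= 1 counts updates so far."""
    m = beta1 * m + (1.0 - beta1) * g          # first moment
    v = beta2 * v + (1.0 - beta2) * g * g      # second moment (elementwise)
    m_hat = m / (1.0 - beta1 ** k)             # bias-corrected first moment
    v_hat = v / (1.0 - beta2 ** k)             # bias-corrected second moment
    V_tilde = V - eta * m_hat / (np.sqrt(v_hat) + eps)
    return V_tilde, m, v


def project_affine(V_tilde, X0_bar):
    """Project onto {V : X0_bar V = I}, assuming X0_bar has full row rank."""
    n = X0_bar.shape[0]
    gram = X0_bar @ X0_bar.T
    residual = np.eye(n) - X0_bar @ V_tilde
    return V_tilde + X0_bar.T @ np.linalg.solve(gram, residual)


# Hypothetical sizes: n = 2 states, m = 1 input, so V is (n + m) x n.
X0_bar = np.array([[1.0, 0.0, 2.0],
                   [0.0, 1.0, 1.0]])
V = np.array([[0.5, 0.0],
              [0.0, 0.5],
              [0.1, -0.2]])
g = np.array([[2.0, -0.5],
              [1.0, -3.0],
              [0.25, 4.0]])                    # stand-in for the gradient (18)
m0 = np.zeros_like(V)
v0 = np.zeros_like(V)
eta = 1e-2

V_tilde, m1, v1 = adam_step(V, g, m0, v0, k=1, eta=eta)
V_proj = project_affine(V_tilde, X0_bar)
print("first-step move:\n", V - V_tilde)
print("constraint check X0_bar @ V_proj:\n", X0_bar @ V_proj)
```

With zero initial moments, the first bias-corrected step moves every entry by approximately `eta` in the direction opposite to the sign of its gradient, which illustrates the elementwise normalization that distinguishes the ADAM update from the plain gradient step in (19).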
In (25e), all nonlinearities act elementwise: $\sqrt{\hat{\mathbf{v}}_{t+1}}$ is taken entrywise, and $\hat{\mathbf{m}}_{t+1}/(\sqrt{\hat{\mathbf{v}}_{t+1}} + \epsilon)$ denotes elementwise division. Accordingly, $\mathbf{D}_{t+1} := \sqrt{\hat{\mathbf{v}}_{t+1}} + \epsilon$ is used only for elementwise scaling.

Assumption 8. Let $\mathbf{D}_t := \sqrt{\hat{\mathbf{v}}_t} + \epsilon$ denote the elementwise ADAM scaling matrix. There exist constants $0 < d_{\min} \le d_{\max} < \infty$ such that, for all entries $(i, j)$ and all $t$, $d_{\min} \le (\mathbf{D}_t)_{ij}^{-1} \le d_{\max}$.

Assumption 9 (Effective stepsize schedule). Let $\tilde{\eta}_t := \eta_t/(1 - \beta_1^{t - t_0 + 1})$ denote the bias-corrected effective stepsize. Assume that $\{\tilde{\eta}_t\}$ is positive, nonincreasing, and uniformly bounded, i.e., $0 < \tilde{\eta}_t \le \tilde{\eta}_{\max}$ for all $t$. Moreover, the stepsizes are chosen such that the cumulative stepsize grows sublinearly with the time horizon, satisfying

$$c_1\sqrt{T} \le \sum_{t=t_0}^{t_0+T-1}\tilde{\eta}_t \le c_2\sqrt{T},$$

for some constants $c_1, c_2 > 0$ and all $T \ge 1$.

Theorem 4. Under Assumptions 1–9, let Algorithm 2 run for $T := t - t_0 + 1$ steps. Then there exist constants $\nu_1, \nu_2, \nu_3, \nu_4 > 0$, depending on $(\bar{x}, \bar{u}, \|\mathbf{R}\|_2, \underline{\sigma}(\mathbf{Q}), \underline{\sigma}(\mathbf{R}), J^\star)$, such that, if $\tilde{\eta}_{\max} \in (0, \nu_1]$ and $\mathrm{SNR} \ge \nu_2$, then

$$\mathrm{Regret}_T \le \frac{\nu_3}{\sqrt{T}} + \nu_4\,\mathrm{SNR}^{-1/2} + 2\bar{P}_{\max}\,\varepsilon_{\mathrm{noise}}. \tag{27}$$

The proof is deferred to Appendix E.

In practice, the proposed update improves online learning performance without sacrificing performance guarantees. Moreover, Assumption 2 is adopted for analytical clarity; extending the result to light-tailed stochastic disturbances is left for future work.

5. Simulation

This section evaluates the proposed method from both methodological and application-oriented perspectives. We first use a standard three-dimensional benchmark [29] to isolate the effects of disturbance-covariance estimation and ADAM-based updates under stochastic excitation. We then assess the method on an industrial-park DHS in Northern China [25]. In the DHS study, the system model is further subjected to static and time-varying parameter perturbations to emulate practical uncertainty in mass flow rates and heat-transfer characteristics.
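Overall, the results show that the proposed method achieves stable near-optimal DHS operation and improved robustness under realistic model mismatch and stochastic disturbances.

Algorithm 2: ADAM–DeePO for Direct Adaptive LQR Policy Learning
Input: Initial stabilizing gain $\boldsymbol{K}_{t_0}$, $\boldsymbol{m}_{t_0} = \boldsymbol{0}$, $\boldsymbol{v}_{t_0} = \boldsymbol{0}$; initial stepsize $\eta_0 > 0$ (and a stepsize schedule $\{\eta_t\}_{t \ge 0}$); ADAM hyperparameters $\beta_1, \beta_2 \in (0, 1)$ and $\epsilon > 0$; offline data $(\boldsymbol{X}_{0,t_0}, \boldsymbol{U}_{0,t_0}, \boldsymbol{X}_{1,t_0})$.
1 for $t = t_0, t_0 + 1, \ldots$ do
2   Apply control $\boldsymbol{u}_t = \boldsymbol{K}_t \boldsymbol{x}_t$ and observe $\boldsymbol{x}_{t+1}$;
3   Form $\boldsymbol{\phi}_t := [\boldsymbol{u}_t^\top, \boldsymbol{x}_t^\top]^\top$;
4   Update $\boldsymbol{X}_{0,t+1}$, $\boldsymbol{U}_{0,t+1}$, $\boldsymbol{X}_{1,t+1}$ recursively;
5   Update $\boldsymbol{\Phi}_{t+1} = \frac{t}{t+1} \boldsymbol{\Phi}_t + \frac{1}{t+1} \boldsymbol{\phi}_t \boldsymbol{\phi}_t^\top$ and compute $\boldsymbol{\Phi}_{t+1}^{-1}$;
6   Given $\boldsymbol{K}_t$, compute the lifted representation $\boldsymbol{V}_{t+1} = \boldsymbol{\Phi}_{t+1}^{-1} \begin{bmatrix} \boldsymbol{K}_t \\ \boldsymbol{I} \end{bmatrix}$;
7   Compute the stochastic gradient $\boldsymbol{g}_{t+1} := \nabla J_{t+1}(\boldsymbol{V}_{t+1})$;
8   ADAM moment updates:
      $\boldsymbol{m}_{t+1} = \beta_1 \boldsymbol{m}_t + (1 - \beta_1)\, \boldsymbol{g}_{t+1}$, (25a)
      $\boldsymbol{v}_{t+1} = \beta_2 \boldsymbol{v}_t + (1 - \beta_2)\, \boldsymbol{g}_{t+1} \odot \boldsymbol{g}_{t+1}$, (25b)
      $\hat{\boldsymbol{m}}_{t+1} = \boldsymbol{m}_{t+1} / (1 - \beta_1^{t - t_0 + 1})$, (25c)
      $\hat{\boldsymbol{v}}_{t+1} = \boldsymbol{v}_{t+1} / (1 - \beta_2^{t - t_0 + 1})$, (25d)
      $\tilde{\boldsymbol{V}}_{t+1} = \boldsymbol{V}_{t+1} - \eta_t\, \hat{\boldsymbol{m}}_{t+1} \oslash (\sqrt{\hat{\boldsymbol{v}}_{t+1}} + \epsilon) = \boldsymbol{V}_{t+1} - \alpha_t\, \boldsymbol{m}_{t+1} \oslash \boldsymbol{D}_{t+1}$, (25e)
    where $\alpha_t := \eta_t / (1 - \beta_1^{t - t_0 + 1})$ and $\boldsymbol{D}_{t+1} := \sqrt{\hat{\boldsymbol{v}}_{t+1}} + \epsilon$ (elementwise).
9   Affine projection to satisfy $\boldsymbol{X}_{0,t+1} \boldsymbol{V} = \boldsymbol{I}$:
      $\boldsymbol{V}'_{t+1} = \tilde{\boldsymbol{V}}_{t+1} + \boldsymbol{X}_{0,t+1}^\top (\boldsymbol{X}_{0,t+1} \boldsymbol{X}_{0,t+1}^\top)^{-1} (\boldsymbol{I} - \boldsymbol{X}_{0,t+1} \tilde{\boldsymbol{V}}_{t+1})$. (26)
10  Update control gain: $\boldsymbol{K}_{t+1} = \boldsymbol{U}_{0,t+1} \boldsymbol{V}'_{t+1}$.

5.1. Mechanism validation on a three-dimensional benchmark system

This benchmark is not intended to represent the scale of industrial DHSs. Rather, it serves as a low-dimensional testbed for isolating two key mechanisms of the proposed approach, namely disturbance-covariance estimation and adaptive gradient updates, before moving to the industrial DHS case. We consider the marginally unstable Laplacian system from [5, 29]:

$$\boldsymbol{A} = \begin{bmatrix} 1.01 & 0.01 & 0 \\ 0.01 & 1.01 & 0.01 \\ 0 & 0.01 & 1.01 \end{bmatrix}.$$

The state and control weighting matrices are $\boldsymbol{Q} = \boldsymbol{R} = \boldsymbol{I}_3$. To evaluate DeePO under stochastic disturbances, we consider Gaussian process noise $\boldsymbol{w}_t \sim \mathcal{N}(0, \tfrac{1}{100} \boldsymbol{I}_3)$ and apply a PE input $\boldsymbol{u}_t = \boldsymbol{K}_t \boldsymbol{x}_t + \boldsymbol{v}_t$, where $\boldsymbol{v}_t \sim \mathcal{N}(0, \boldsymbol{I}_3)$, yielding $\mathrm{SNR} \in [0, 5]$ as in [29].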
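To make the benchmark concrete, the sketch below builds the matrix $\boldsymbol{A}$ with $\boldsymbol{Q} = \boldsymbol{R} = \boldsymbol{I}_3$, confirms that the open-loop system is slightly unstable, and computes a model-based LQR gain as a reference. It is an illustrative sketch only: the identity input matrix `B` is an assumption (the benchmark text here does not fix it), and the helper names `lqr_gain` and `spectral_radius` are hypothetical. The gain uses the sign convention $\boldsymbol{u} = \boldsymbol{K}\boldsymbol{x}$.

```python
import numpy as np

# Benchmark dynamics and weights as stated in the text.
A = np.array([[1.01, 0.01, 0.00],
              [0.01, 1.01, 0.01],
              [0.00, 0.01, 1.01]])
B = np.eye(3)  # assumption: identity input matrix
Q = np.eye(3)
R = np.eye(3)

def lqr_gain(A, B, Q, R, iters=5000, tol=1e-12):
    """Iterate the discrete-time Riccati recursion to a fixed point.
    Returns (K, P) with the convention u = K x."""
    P = Q.copy()
    for _ in range(iters):
        G = np.linalg.solve(R + B.T @ P @ B, B.T @ P @ A)
        P_next = Q + A.T @ P @ (A - B @ G)
        converged = np.max(np.abs(P_next - P)) < tol
        P = P_next
        if converged:
            break
    K = -np.linalg.solve(R + B.T @ P @ B, B.T @ P @ A)
    return K, P

def spectral_radius(M):
    """Largest eigenvalue magnitude of a square matrix."""
    return float(np.max(np.abs(np.linalg.eigvals(M))))

K, P = lqr_gain(A, B, Q, R)
rho_open = spectral_radius(A)            # slightly above 1: open loop is unstable
rho_closed = spectral_radius(A + B @ K)  # below 1: the LQR gain stabilizes the system
```

A gain computed this way plays the role of the model-based reference against which a data-driven policy can be compared; designing it for a scaled copy of `A` gives a mismatched but still stabilizing initial controller.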
The DeePO algorithm is initialized using an LQR controller designed for a perturbed model $\boldsymbol{A}_{\mathrm{design}} = (1 + \delta) \boldsymbol{A}$, where $\delta$ denotes the percentage model mismatch, and a 50-step warm-start dataset is collected.

5.1.1. Effect of disturbance–covariance estimation

To assess the effect of disturbance–covariance estimation, we test four input matrices with increasing coupling levels: decoupled

$$\boldsymbol{B}_1 = \begin{bmatrix} 1 & 0 & 0 \\ 0 & 1 & 0 \\ 0 & 0 & 1 \end{bmatrix},$$

moderately coupled

$$\boldsymbol{B}_2 = \begin{bmatrix} 1 & 1 & 0 \\ 0 & 1 & 0 \\ 0 & 0 & 1 \end{bmatrix},$$

strongly coupled

$$\boldsymbol{B}_3 = \begin{bmatrix} 1 & 1 & 0.5 \\ 0 & 1 & 0 \\ 0 & 0 & 1 \end{bmatrix},$$

and highly coupled

$$\boldsymbol{B}_4 = \begin{bmatrix} 1 & 1 & 1 \\ 0 & 1 & 0 \\ 0 & 0 & 1 \end{bmatrix}.$$

A 10% model perturbation is used to initialize a stabilizing gain $\boldsymbol{K}$. We compare DeePO with $\boldsymbol{U} = \boldsymbol{I}$ against DeePO using the estimated disturbance covariance $\boldsymbol{U}$ across all four input matrices, as shown in Figure 1. Although the optimal LQR gain $\boldsymbol{K}$ is theoretically independent of $\boldsymbol{U}$, the surrogate gradient used by DeePO depends on the closed-loop covariance. Consequently, assuming $\boldsymbol{U} = \boldsymbol{I}$ distorts the effective cost landscape when disturbances are anisotropic. In contrast, using the estimated $\boldsymbol{U}$ provides more accurate gradient directions and leads to smoother and more stable convergence. This advantage becomes increasingly pronounced from $\boldsymbol{B}_1$ to $\boldsymbol{B}_4$ as the disturbance coupling strength increases.

Figure 1: Effect of disturbance-covariance estimation on DeePO convergence.

5.1.2. Comparison with zeroth-order policy optimization (ZO-PO)

We further compare DeePO with classical zeroth-order policy optimization (ZO-PO) on the highly coupled case $\boldsymbol{B}_4$, using the same initial stabilizing controller and disturbance level ($0.01$). ZO-PO estimates gradients using two-point finite differences, whereas DeePO updates the policy directly from covariance information in closed-loop data. As shown in Figure 2(a), DeePO converges smoothly and rapidly, whereas ZO-PO converges more slowly and exhibits larger fluctuations due to noisy gradient estimates. The sample-efficiency comparison in Figure 2(b) further highlights this difference: each ZO-PO update requires roughly 3000 samples, whereas DeePO uses only one. Under the same sample budget, DeePO reaches near-optimal performance several orders of magnitude sooner, indicating substantially higher sample efficiency and stronger robustness to stochastic noise.

Figure 2: Comparison of convergence behavior and sample efficiency between DeePO and ZO-PO.

5.2. Industrial DHS in Northern China

To evaluate the proposed method in a realistic setting, we consider an industrial-park DHS in Northern China with three producers and eight loads [25], yielding an augmented system with $n = 22$ states and $m = 11$ inputs. The data are collected with a sampling interval of 0.1 s. We apply Gaussian process noise $\boldsymbol{w}_t = 0.0042\, \boldsymbol{v}^{(w)}_t$ (kW) and exploration noise $\boldsymbol{w}_{e,t} = 0.1\, \boldsymbol{v}^{(e)}_t$ (kW), where $\boldsymbol{v}^{(w)}_t, \boldsymbol{v}^{(e)}_t \sim \mathcal{N}(\boldsymbol{0}, \boldsymbol{I}_{11})$ are independent. The former captures stochastic demand fluctuations and unmodeled thermal effects, while the latter provides excitation for online learning.

We compare GD-DeePO and ADAM-DeePO under model mismatch. The nominal system is

$$\boldsymbol{A}_{\mathrm{nominal}} = \begin{bmatrix} \boldsymbol{I} - \boldsymbol{A}_1 & \boldsymbol{0} \\ \boldsymbol{C} & \boldsymbol{I} \end{bmatrix},$$

whereas the perturbed real model is

$$\boldsymbol{A}_{\mathrm{real}} = \begin{bmatrix} \boldsymbol{I} - (1 + \delta) \boldsymbol{A}_1 & \boldsymbol{0} \\ \boldsymbol{C} & \boldsymbol{I} \end{bmatrix},$$

with $\delta$ denoting the percentage model mismatch. A positive $\delta$ corresponds to faster thermal dynamics, whereas a negative $\delta$ corresponds to slower thermal dynamics. The controller is initialized using the LQR solution of the nominal model, after which DeePO updates the feedback gain $\boldsymbol{K}$ online from closed-loop data. Both algorithms are run for 10,000 iterations.

Beyond static model mismatch, we also consider time-varying dynamics.
Specifically, the thermal time-scale perturbation $\delta$ is augmented by a zero-mean bounded time-varying component $\Delta(t)$, yielding the effective perturbation $\delta (1 + \Delta(t))$. This emulates variations in operating conditions such as changes in mass flow rates and heat-transfer characteristics, and results in a linear time-varying system whose dynamics deviate persistently from the nominal model used for controller initialization.

5.2.1. Near-optimal and stable DHS operation

With a model mismatch of 20% and a constant heat demand of 10 MW, the final relative cost error $(J(\boldsymbol{K}) - J^\star)/J^\star$ achieved by ADAM-DeePO is as low as $7.543 \times 10^{-3}$, indicating near-optimal closed-loop operation of the learned feedback gain $\boldsymbol{K}$. This result shows that the proposed data-driven controller can maintain near-optimal operating performance in a high-dimensional industrial DHS without requiring an exact system model.

Figure 3: Temperature evolution under ADAM-DeePO.

Figure 4: Optimality error evolution under ADAM-DeePO.

Figure 5: Heat generation under ADAM-DeePO.

The closed-loop trajectories in Figures 3–5 further illustrate the operating behavior of the learned controller. The augmented formulation drives the optimality error $\boldsymbol{e}$ to zero, implying convergence to the economically optimal operating point. Meanwhile, the temperature state $\boldsymbol{T}$ and the heat-generation input $\boldsymbol{h}$ remain bounded and fluctuate only mildly around their steady-state values under stochastic disturbances and persistent excitation. These results show that the proposed method can simultaneously maintain stable temperature regulation and economically efficient heat allocation in a large-scale DHS under uncertainty.

5.2.2. Value of online data-driven control under model mismatch and stochastic disturbances

To illustrate the value of online data-driven control, we compare ADAM-DeePO with a nominal forecast-based MPC baseline under the same 20% model mismatch and the same stochastic heat-demand disturbance. The MPC controller is implemented in receding-horizon fashion using the nominal model, whereas the physical process evolves according to the mismatched model $\boldsymbol{A}_{\mathrm{real}}$. Figures 6 and 7 show that nominal MPC yields smooth heat-generation trajectories, but does not drive the optimality error to zero under model mismatch. At least one error component settles at a nonzero steady-state value, indicating convergence to a biased operating point rather than the economically optimal one. By contrast, Figures 4 and 5 show that ADAM-DeePO drives the optimality error close to zero while maintaining bounded heat-generation trajectories. These results suggest that the proposed online data-driven controller is better able to recover the economically optimal operating point under model mismatch, because it updates the control policy directly from closed-loop data rather than relying solely on nominal predictions in real-time operation.

Figure 6: Heat generation trajectories under forecast-based nominal MPC with 20% model mismatch.

Figure 7: Optimality error evolution under forecast-based nominal MPC with 20% model mismatch.

5.2.3. Comparison of ADAM-DeePO and GD-DeePO

Tables 2 and 3 report the relative operating-cost errors under different levels of static model mismatch. Both GD-DeePO and ADAM-DeePO converge to near-optimal solutions, but ADAM-DeePO consistently achieves lower operating-cost error, with improvements (IMP) of up to 48.71%. This indicates that adaptive moment-based scaling improves online learning and leads to better closed-loop performance under model uncertainty.

Table 2: Relative operating-cost error under static model mismatch (moderate model variations).

|      | -15%     | 15%      | -20%     | 20%      |
|------|----------|----------|----------|----------|
| GD   | 3.423e-2 | 1.673e-2 | 3.849e-2 | 1.470e-2 |
| ADAM | 2.695e-2 | 9.563e-3 | 3.118e-2 | 7.543e-3 |
| IMP  | 21.27%   | 42.84%   | 18.99%   | 48.71%   |

Table 3: Relative operating-cost error under static model mismatch (mild model variations).

|      | -1%      | 1%       | -2%      | 2%       |
|------|----------|----------|----------|----------|
| GD   | 2.468e-2 | 2.354e-2 | 2.527e-2 | 2.299e-2 |
| ADAM | 1.746e-2 | 1.633e-2 | 1.805e-2 | 1.579e-2 |
| IMP  | 29.25%   | 30.63%   | 28.57%   | 31.32%   |

5.2.4. Robustness to time-varying thermal dynamics

Comparing Tables 2 and 4, we observe that mild time-varying perturbations around a fixed 20% model mismatch improve the operating-cost performance of both GD-DeePO and ADAM-DeePO. Small zero-mean variations provide additional excitation, partially mitigating the bias introduced by static model mismatch and improving online adaptation. Under these conditions, ADAM-DeePO achieves better performance through more stable policy updates. As the perturbation magnitude increases, however, the effective dynamics move further away from the nominal linear model, reducing the benefit of adaptive gradient scaling. As a result, the performance gap between ADAM-DeePO and GD-DeePO becomes smaller at larger perturbation levels.

Table 4: Relative operating-cost error under time-varying thermal-model perturbations ($\delta = 20\%$).

|      | 10%      | 30%      | 50%      | 80%      |
|------|----------|----------|----------|----------|
| GD   | 1.780e-3 | 3.302e-3 | 1.190e-2 | 3.795e-2 |
| ADAM | 3.334e-4 | 1.857e-3 | 1.046e-2 | 3.651e-2 |
| IMP  | 81.27%   | 43.76%   | 12.10%   | 3.79%    |

6. Conclusion

This paper develops a data-driven online control framework for DHSs by embedding steady-state economic optimality conditions into the system dynamics. Based on DeePO, the resulting controller enables online learning of near-optimal regulation policies under stochastic disturbances and model mismatch, while providing convergence to an optimal control policy, together with closed-loop stability guarantees.
An ADAM-enhanced variant is further developed to improve the performance of online policy updates. Simulations on an industrial-scale DHS show that the proposed method achieves stable near-optimal operation and strong empirical robustness to both static and time-varying model perturbations under different heat-demand disturbance conditions. These results suggest that the proposed data-driven online control is a promising approach for practical DHS operation, especially in large-scale systems where accurate models are difficult to maintain, disturbance forecasts may be unreliable, and strong closed-loop guarantees are desired.

CRediT authorship contribution statement

Xinyi Yi: Conceptualization, Methodology, Software, Validation, Formal analysis, Visualization, Writing – original draft, Writing – review & editing. Ioannis Lestas: Supervision, Formal analysis, Writing – review & editing.

Declaration of competing interest

The authors declare that they have no known competing financial interests or personal relationships that could have appeared to influence the work reported in this paper.

A. Proof of Theorem 1

The Lagrangian for E1 is given by

$$\mathcal{L} = \tfrac{1}{2} \boldsymbol{h}_G^\top \boldsymbol{F}_G \boldsymbol{h}_G + \boldsymbol{\mu}^\top (-\boldsymbol{A}_1 \boldsymbol{T} + \boldsymbol{B}_1 \boldsymbol{h}_G - \boldsymbol{B}_2 \boldsymbol{h}_L),$$

where $\boldsymbol{\mu}$ is the dual variable associated with (3b). The KKT conditions yield

$$\frac{\partial \mathcal{L}}{\partial \boldsymbol{h}_G} = \boldsymbol{F}_G \boldsymbol{h}_G + \boldsymbol{B}_1^\top \boldsymbol{\mu} = \boldsymbol{0}, \qquad \frac{\partial \mathcal{L}}{\partial \boldsymbol{T}} = -\boldsymbol{A}_1^\top \boldsymbol{\mu} = \boldsymbol{0},$$

where $\boldsymbol{\mu}$ is a scalar multiple of $\boldsymbol{1}$. The conditions can be rewritten as $\boldsymbol{F}_G \boldsymbol{h}_G^\star$ being a scalar multiple of $\boldsymbol{1}$, so that $[\boldsymbol{F}_G \boldsymbol{h}_G^\star]_i - [\boldsymbol{F}_G \boldsymbol{h}_G^\star]_j = 0$ for any pair of producers $i, j$, establishing $\boldsymbol{F}_M \boldsymbol{h}_G^\star = \boldsymbol{0}$ as the optimality condition for the unique solution $\boldsymbol{h}_G^\star$.

The Lagrangian for E2 is

$$\mathcal{L}(\boldsymbol{T}, \gamma, \boldsymbol{\lambda}) = \tfrac{1}{2} \boldsymbol{T}^\top \boldsymbol{F}_D \boldsymbol{T} + \boldsymbol{\lambda}^\top \left( \boldsymbol{T} - \boldsymbol{A}_1^\dagger (\boldsymbol{B}_1 \boldsymbol{h}_G^\star - \boldsymbol{B}_2 \boldsymbol{h}_L) - \gamma \boldsymbol{1} \right).$$

The KKT conditions for E2 are (4b) together with

$$\frac{\partial \mathcal{L}}{\partial \boldsymbol{T}} = \boldsymbol{F}_D \boldsymbol{T} + \boldsymbol{\lambda} = \boldsymbol{0}, \qquad \frac{\partial \mathcal{L}}{\partial \gamma} = -\boldsymbol{\lambda}^\top \boldsymbol{1} = 0,$$

which gives $\boldsymbol{1}^\top \boldsymbol{F}_D \boldsymbol{T}^\star = 0$.
The optimality condition (4b) ensures that $\boldsymbol{T}^\star$ satisfies the optimality condition of E1. Given that $\boldsymbol{F}_D$ is positive definite, E2 constitutes a convex optimization problem over $\boldsymbol{T}$, thereby confirming that $\boldsymbol{T}^\star$ is unique and that these optimality conditions are both necessary and sufficient.

B. Proof of Theorem 2

The proof follows the same differential argument as Lemma 2 in DeePO [29]. In particular, using $J(\boldsymbol{V}) = \mathrm{Tr}\big((\boldsymbol{Q} + \boldsymbol{V}^\top \boldsymbol{U}_0^\top \boldsymbol{R} \boldsymbol{U}_0 \boldsymbol{V}) \boldsymbol{U}\big) = \mathrm{Tr}(\boldsymbol{P} \boldsymbol{U})$, the same recursive differentiation of $\boldsymbol{P}$ as in the DeePO proof yields $\mathrm{d}J = 2\, \mathrm{Tr}(\boldsymbol{U} \boldsymbol{E}^\top \mathrm{d}\boldsymbol{V})$, where $\boldsymbol{E} = (\boldsymbol{U}_0^\top \boldsymbol{R} \boldsymbol{U}_0 + \boldsymbol{X}_1^\top \boldsymbol{P} \boldsymbol{X}_1) \boldsymbol{V}$. Hence,

$$\nabla_{\boldsymbol{V}} J(\boldsymbol{V}) = 2 \boldsymbol{E} \boldsymbol{U} = 2 (\boldsymbol{U}_0^\top \boldsymbol{R} \boldsymbol{U}_0 + \boldsymbol{X}_1^\top \boldsymbol{P} \boldsymbol{X}_1) \boldsymbol{V} \boldsymbol{U}.$$

The linear constraint (17c) is enforced separately by projection in the algorithmic update and therefore does not appear explicitly in the gradient expression.

C. Proof of Lemma 2

Fix $\boldsymbol{V} \in \mathcal{S}$ and let $\boldsymbol{A}_{\boldsymbol{K}} := \boldsymbol{X}_1 \boldsymbol{V}$. By Assumption 5, $\rho(\boldsymbol{A}_{\boldsymbol{K}}) \le 1 - \zeta$ and $\|\boldsymbol{K}\|_2 \le \bar{k}$. The Lyapunov equation

$$\boldsymbol{P}_{\boldsymbol{K}} = \sum_{k=0}^{\infty} (\boldsymbol{A}_{\boldsymbol{K}}^\top)^k (\boldsymbol{Q} + \boldsymbol{K}^\top \boldsymbol{R} \boldsymbol{K}) (\boldsymbol{A}_{\boldsymbol{K}})^k$$

implies

$$\|\boldsymbol{P}_{\boldsymbol{K}}\|_2 \le \sum_{k=0}^{\infty} \|\boldsymbol{A}_{\boldsymbol{K}}^k\|_2^2 \left( \|\boldsymbol{Q}\|_2 + \|\boldsymbol{K}\|_2^2 \|\boldsymbol{R}\|_2 \right) \le \sum_{k=0}^{\infty} \|\boldsymbol{A}_{\boldsymbol{K}}^k\|_2^2 \left( \|\boldsymbol{Q}\|_2 + \bar{k}^2 \|\boldsymbol{R}\|_2 \right).$$

Since $\rho(\boldsymbol{A}_{\boldsymbol{K}}) \le 1 - \zeta$ uniformly on $\mathcal{S}$, the series $\sum_{k \ge 0} \|\boldsymbol{A}_{\boldsymbol{K}}^k\|_2^2$ is uniformly bounded, hence $\|\boldsymbol{P}_{\boldsymbol{K}}\|_2 \le p_{\max,2}$ for all $\boldsymbol{V} \in \mathcal{S}$.

D. Proof of Theorem 3

By Assumption 7, we have $\|\boldsymbol{e}_{\mathrm{noise}}\|_2 \le \bar{e}_{\mathrm{noise}}$. Moreover, with the definition of $p_{\max}$, by Hölder's inequality for Schatten norms [23, (1.174)], it holds that

$$J(\boldsymbol{K}) - \hat{J}(\boldsymbol{K}) = \mathrm{Tr}(\boldsymbol{P} \boldsymbol{e}_{\mathrm{noise}}) \le \|\boldsymbol{P}\| \, \|\boldsymbol{e}_{\mathrm{noise}}\|_2 \le p_{\max}\, \bar{e}_{\mathrm{noise}}.$$

Similarly,

$$\hat{J}^\star - J^\star = \mathrm{Tr}(\boldsymbol{P} \boldsymbol{e}_{\mathrm{noise}}) \le \|\boldsymbol{P}\| \, \|\boldsymbol{e}_{\mathrm{noise}}\|_2 \le p_{\max}\, \bar{e}_{\mathrm{noise}}.$$

Thus,

$$\frac{1}{T} \sum_{t = t_0}^{t_0 + T - 1} \left( J(\boldsymbol{K}_t) - \hat{J}(\boldsymbol{K}_t) + \hat{J}^\star - J^\star \right) \le 2\, p_{\max}\, \bar{e}_{\mathrm{noise}}.$$

Combined with (22) and (23), we obtain (24).

E. Proof of Theorem 4

The proof follows the same descent-based argument as the GD-DeePO regret analysis in Theorem 3, with the standard gradient step replaced by the ADAM-preconditioned update. By the local smoothness property of $J$ in DeePO [29], the one-step change of $J$ along the ADAM update direction satisfies

$$J(\boldsymbol{V}_{t+1}) - J(\boldsymbol{V}_t) \le -\alpha_t \left\langle \nabla J(\boldsymbol{V}_t),\, \boldsymbol{D}_t^{-1} \odot \boldsymbol{m}_t \right\rangle + \frac{l(J(\boldsymbol{V}_t))\, \alpha_t^2}{2} \left\| \boldsymbol{D}_t^{-1} \odot \boldsymbol{m}_t \right\|^2. \tag{28}$$

Under Assumptions 8–9, Lemma 22 in [31] implies that the preconditioned ADAM direction remains sufficiently aligned with the true gradient and has bounded magnitude relative to it. Hence, there exist constants $\kappa_1, \kappa_2 > 0$ such that

$$\left\langle \nabla J(\boldsymbol{V}_t),\, \boldsymbol{D}_t^{-1} \odot \boldsymbol{m}_t \right\rangle \ge \kappa_1 \|\nabla J(\boldsymbol{V}_t)\|^2, \qquad \left\| \boldsymbol{D}_t^{-1} \odot \boldsymbol{m}_t \right\|^2 \le \kappa_2 \|\nabla J(\boldsymbol{V}_t)\|^2. \tag{29}$$

Substituting (29) into (28) gives

$$J(\boldsymbol{V}_{t+1}) - J(\boldsymbol{V}_t) \le -\left( \kappa_1 \alpha_t - \frac{l(J(\boldsymbol{V}_t))\, \kappa_2\, \alpha_t^2}{2} \right) \|\nabla J(\boldsymbol{V}_t)\|^2. \tag{30}$$

By absorbing $\kappa_1$ and $\kappa_2$ into the projected gradient-dominance modulus and smoothness constant, (30) yields the ADAM analogue of the one-step descent inequality used in the GD-DeePO analysis. The remainder of the regret proof then follows exactly as in the proof of Theorem 3, together with the covariance-mismatch term $2\, p_{\max}\, \bar{e}_{\mathrm{noise}}$. This gives (27).

Data availability

The data and code that support the findings of this study are available from the corresponding author upon reasonable request.

References

[1] Ahmed, S., Machado, J.E., Cucuzzella, M., Scherpen, J.M., 2023. Control-oriented modeling and passivity analysis of thermal dynamics in a multi-producer district heating system. IFAC-PapersOnLine 56, 175–180.
[2] Anderson, B.D., Moore, J.B., 2007. Optimal control: linear quadratic methods. Courier Corporation.
[3] Cholewa, T., Siuta-Olcha, A., Smolarz, A., Muryjas, P., Wolszczak, P., Guz, Ł., Bocian, M., Balaras, C.A., 2022. An easy and widely applicable forecast control for heating systems in existing and new buildings: First field experiences. Journal of Cleaner Production 352, 131605.
[4] Cui, L., Jiang, Z.P., Kolm, P.N., Macqueron, G.G., 2025. A fully data-driven value iteration for stochastic lqr: Convergence, robustness and stability. arXiv preprint arXiv:2505.02970.
[5] Dean, S., Mania, H., Matni, N., Recht, B., Tu, S., 2020. On the sample complexity of the linear quadratic regulator. Foundations of Computational Mathematics 20, 633–679.
[6] Frison, L., Gölzhäuser, S., Bitterling, M., Kramer, W., 2024. Evaluating different artificial neural network forecasting approaches for optimizing district heating network operation. Energy 307, 132745.
[7] de Giuli, L.B., La Bella, A., Scattolini, R., 2024. Physics-informed neural network modeling and predictive control of district heating systems. IEEE Transactions on Control Systems Technology 32, 1182–1195.
[8] Guo, C., Zhang, J., Yuan, H., Yuan, Y., Wang, H., Mei, N., 2024. Informer-based model predictive control framework considering group controlled hydraulic balance model to improve the precision of client heat load control in district heating system. Applied Energy 373, 123951.
[9] Hauswirth, A., He, Z., Bolognani, S., Hug, G., Dörfler, F., 2024. Optimization algorithms as robust feedback controllers. Annual Reviews in Control 57, 100941.
[10] Jansen, J., Jorissen, F., Helsen, L., 2024a. Effect of prediction uncertainties on the performance of a white-box model predictive controller for district heating networks. Energy and Buildings 319, 114520.
[11] Jansen, J., Jorissen, F., Helsen, L., 2024b. Mixed-integer non-linear model predictive control of district heating networks. Applied Energy 361, 122874.
[12] Kim, Y., Kim, Y., Kim, M., Cho, N., 2025. Neural policy iteration for stochastic optimal control: A physics-informed approach. arXiv preprint arXiv:2508.01718.
[13] Kingma, D.P., 2014. Adam: A method for stochastic optimization. arXiv preprint arXiv:1412.6980.
[14] La Bella, A., Del Corno, A., 2023. Optimal management and data-based predictive control of district heating systems: The novate milanese experimental case-study. Control Engineering Practice 132, 105429.
[15] Liu, S., Guo, Y., Wagner, F., Liu, H., Cui, R.Y., Mauzerall, D.L., 2024. Diversifying heat sources in china's urban district heating systems will reduce risk of carbon lock-in. Nature Energy 9, 1021–1031.
[16] Machado, J.E., Ferguson, J., Cucuzzella, M., Scherpen, J.M., 2022. Decentralized temperature and storage volume control in multiproducer district heating. IEEE Control Systems Letters 7, 413–418.
[17] Mohammadi, H., Zare, A., Soltanolkotabi, M., Jovanović, M.R., 2021. Convergence and sample complexity of gradient methods for the model-free linear–quadratic regulator problem. IEEE Transactions on Automatic Control 67, 2435–2450.
[18] Qin, X., Lestas, I., 2024. Frequency control and power sharing in combined heat and power networks, in: 2024 IEEE 63rd Conference on Decision and Control (CDC), IEEE. pp. 5771–5776.
[19] Saloux, E., Candanedo, J.A., 2021. Model-based predictive control to minimize primary energy use in a solar district heating system with seasonal thermal energy storage. Applied Energy 291, 116840.
[20] Sherman, J., Morrison, W.J., 1950. Adjustment of an inverse matrix corresponding to a change in one element of a given matrix. The Annals of Mathematical Statistics 21, 124–127.
[21] Simonsson, J., 2021. Towards efficient modeling and simulation of district energy systems. Ph.D. thesis. Luleå University of Technology.
[22] Tu, S.L., 2019. Sample complexity bounds for the linear quadratic regulator. Ph.D. thesis. University of California, Berkeley.
[23] Watrous, J., 2018. The theory of quantum information. Cambridge university press.
[24] Wei, Z., Tien, P.W., Calautit, J., Darkwa, J., Worall, M., Boukhanouf, R., 2024. Investigation of a model predictive control (mpc) strategy for seasonal thermochemical energy storage systems in district heating networks. Applied Energy 376, 124164.
[25] Yi, X., Guo, Y., Sun, H., Qin, X., Wu, Q., 2023. Energy-grade double pricing for combined heat and power systems. IEEE Transactions on Power Systems.
[26] Yi, X., Lestas, I., 2025. Optimal energy-sharing and temperature regulation in district heating systems. IFAC-PapersOnLine 59, 67–72.
[27] Yuan, C., Lin, X., 2025. Graph-temporal convolutional network for steam heating network simulation considering dynamic characteristics. Energy, 137567.
[28] Zhang, Z., Zhou, X., Du, H., Cui, P., 2023. A new model predictive control approach integrating physical and data-driven modelling for improved energy performance of district heating substations. Energy and Buildings 301, 113688.
[29] Zhao, F., Dörfler, F., Chiuso, A., You, K., 2025. Data-enabled policy optimization for direct adaptive learning of the lqr. IEEE Transactions on Automatic Control.
[30] Zhou, K., Doyle, J.C., Glover, K., et al., 1996. Robust and optimal control. volume 40. Prentice hall New Jersey.
[31] Zou, F., Shen, L., Jie, Z., Zhang, W., Liu, W., 2019. A sufficient condition for convergences of adam and rmsprop, in: Proceedings of the IEEE/CVF Conference on computer vision and pattern recognition, pp. 11127–11135.

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

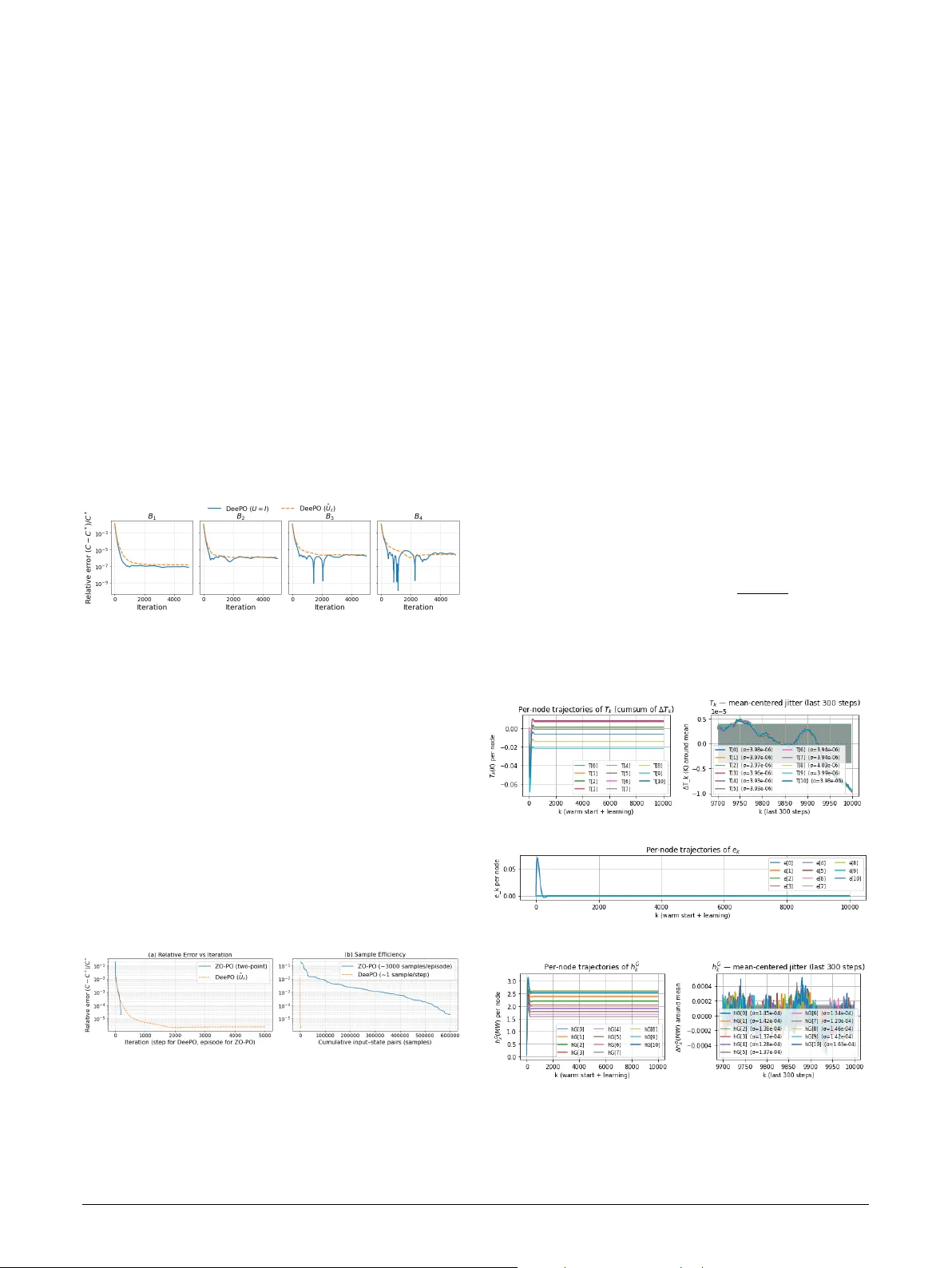

Leave a Comment