Autoregressive Guidance of Deep Spatially Selective Filters using Bayesian Tracking for Efficient Extraction of Moving Speakers

Authors: Jakob Kienegger, Timo Gerkmann

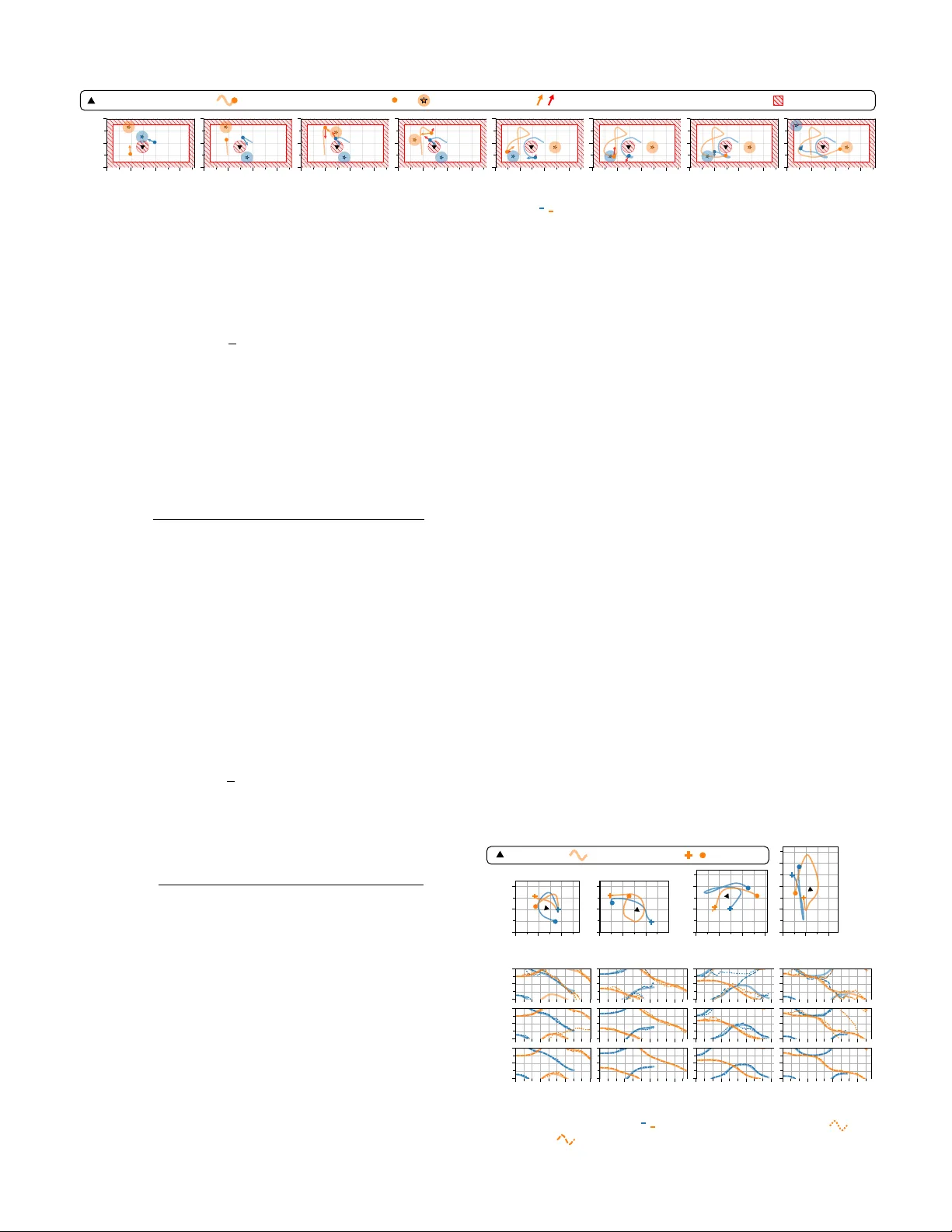

JOURNAL OF LATEX CLASS FILES, VOL. XX, NO. X, MONTH XXXX

Jakob Kienegger, Student Member, IEEE, and Timo Gerkmann, Senior Member, IEEE

Abstract: Deep spatially selective filters achieve high-quality enhancement with real-time capable architectures for stationary speakers of known directions. To retain this level of performance in dynamic scenarios when only the speakers' initial directions are given, accurate, yet computationally lightweight tracking algorithms become necessary. Assuming a frame-wise causal processing style, temporal feedback allows for leveraging the enhanced speech signal to improve tracking performance. In this work, we investigate strategies to incorporate the enhanced signal into lightweight tracking algorithms and autoregressively guide deep spatial filters. Our proposed Bayesian tracking algorithms are compatible with arbitrary deep spatial filters. To increase the realism of simulated trajectories during development and evaluation, we propose and publish a novel dataset based on the social force model. Results validate that the autoregressive incorporation significantly improves the accuracy of our Bayesian trackers, resulting in superior enhancement with no or only negligibly increased computational overhead. Real-world recordings complement these findings and demonstrate the generalizability of our methods to unseen, challenging acoustic conditions.

Index Terms: Multichannel speaker extraction, direction of arrival (DoA) estimation, moving speakers, Bayesian tracking.

I. INTRODUCTION

Speech enhancement aims to improve the quality and intelligibility of a recorded speech signal by removing noise and reverberation.
In a scenario with multiple speakers, such as the cocktail party problem [1], additional, overlapping speech signals of other competing speakers represent a particularly challenging noise type due to their similar and non-stationary statistical properties. If these interferences are of a similar level as the desired target speaker, an ambiguity arises as to whom to enhance and whom to suppress. Target speaker extraction (TSE) solves this problem by utilizing additional information, referred to as cues, to distinguish the desired from competing speakers. Conditioned on one or multiple cues, recent advances in neural network (NN)-driven methods demonstrate exceptional speech enhancement performance under ever more challenging conditions, see [2] for an overview.

This work was supported by the Deutsche Forschungsgemeinschaft (DFG, German Research Foundation) under Grant 508337379. Computational resources were provided by the Regional Computer Center (RRZ) of the University of Hamburg and the Erlangen National High Performance Computing Center (NHR@FAU) under Project f104ac. NHR is funded by the Federal Government and the State of Bavaria. Hardware at NHR@FAU and RRZ received partial DFG funding under Grants 440719683 and 498394658.

The authors are with the Signal Processing Group, Department of Informatics, University of Hamburg, 22527 Hamburg, Germany (e-mail: jakob.kienegger@uni-hamburg.de; timo.gerkmann@uni-hamburg.de).

When recordings from a microphone array are available, the target speaker's position provides an effective cue for speech enhancement. Leveraging this information, a spatially selective filter (SSF) can be steered toward the desired location to extract the corresponding speech signal. In practice, this cue is commonly restricted to the target's azimuth orientation relative to the microphone array, referred to as the direction of arrival (DoA) [3], [4].
For stationary and directionally distinct target speakers, deep non-linear SSFs can achieve high spatial selectivity [5], resulting in strong interference suppression. Consequently, when provided with accurate DoA information, recently proposed SSFs demonstrate state-of-the-art enhancement performance while retaining computationally lightweight NN architectures [3], [4], [6], [7], [8], [9], [10].

Highly constrained recording setups, such as a seated conference meeting with a centrally placed microphone array [11], may justify the assumption of stationary and directionally distinct speaker locations. However, more general settings like the dinner party scenario considered in [12] clearly violate these assumptions. The resulting time-varying signal-to-noise ratios (SNRs) due to changing speaker-to-array distances and directionally ambiguous constellations, e.g., crossing speakers, significantly increase the difficulty of the enhancement task. While deep SSFs are capable of resolving such ambiguities by utilizing temporal context to learn the target's temporal-spectral characteristics [13], the need for precise directional guidance poses an additional challenge. Since continuous knowledge of the target speaker's DoA throughout the recording, referred to as strong guidance, is in general unavailable, weakly guided TSE relies only on the initial direction and incorporates a tracking algorithm to automate the steering of the SSF [13], [14], [15]. However, accurate tracking of a moving target speaker under difficult acoustic conditions typically requires resource-intensive NNs [16], [17], [18], [19], [20], increasing the computational burden of the TSE pipeline.

While most speech enhancement systems operate offline, increasing demand in telecommunications, assistive technologies, and consumer electronics drives research toward real-time solutions [21], [22], [23].
Typically implemented as frame-wise causal versions of offline methods, these approaches suffer from a fundamental disadvantage, as they are restricted to current and past data during processing [24], [25]. However, recent works show that their sequential nature can also benefit the enhancement performance. An autoregressive (AR) NN architecture with the processed signal as feedback facilitates improved exploitation of the temporal correlations of speech to preserve waveform continuity [26], [27], [28]. Pseudo-AR training strategies allow these methods to maintain parallelizability while generalizing to frame-wise inference.

Instead of leveraging autoregression within the speech enhancement architecture, we have previously proposed to incorporate the processed speech signal for tracking, resulting in an AR-guided, or self-steering, SSF [14], [15]. While we focused on a neural tracker in [15], our work in [14] demonstrated how the estimates of a slightly adapted SSF architecture can effectively compensate for the limited modeling capabilities of a lightweight, statistics-based algorithm. In this work, we further develop our approach from [14] by exploring different strategies to incorporate the SSF into Bayesian tracking frameworks. Specifically, we propose modified filtering formulations for the widely used Kalman [29] and particle filter [30] algorithms. To improve the realism of simulated speaker trajectories during development and evaluation, we publish a novel synthetic dataset based on the social force motion model [31]. Results demonstrate that the AR incorporation of the processed speech signal consistently increases tracking accuracy, yielding significantly improved enhancement performance. A detailed analysis demonstrates the generalization capabilities of our methods to real-world recordings in unseen, challenging conditions.
The remainder of this paper is organized as follows. Section II formulates the problem and introduces the notation, and Sec. III introduces steerable SSFs and Bayesian estimators for tracking. Our proposed Bayesian tracking formulations for AR guidance are presented in Section IV. Section V introduces our novel synthetic dataset, followed by an overview of the experimental setup in Sec. VI. Performance and generalization capabilities are discussed in Sec. VII.

II. PROBLEM DEFINITION

We consider a noisy and reverberant recording environment captured by a planar omni-directional microphone array with $M$ channels. The multichannel observation at the $m$-th microphone $y_m$ is modeled as the sum of the anechoic target speech signal $s_m$ and noise $v_m$, where $v_m$ comprises interfering speech, environmental and measurement noise, and the reverberant components of the target speech. In the short-time Fourier transform (STFT) domain, which we denote by capital letters, the multichannel observation can be written as

$$\mathbf{Y}_{tk} = \mathbf{S}_{tk} + \mathbf{V}_{tk} \in \mathbb{C}^M, \tag{1}$$

with $t$ and $k$ indexing frame and frequency bins, respectively, and vectorization (indicated in boldface) conducted over the $M$ microphone channels. In this work, we aim to reconstruct the anechoic target speech at a predefined reference microphone, denoted by $S_{tk}$. Under far-field conditions, amplitude differences across microphones are negligible, and the remaining inter-channel time delays w.r.t. the reference microphone can be modeled using a steering vector $\mathbf{d}_{tk}$ [32, Sec. 3.1], giving

$$\mathbf{Y}_{tk} = \mathbf{d}_{tk} S_{tk} + \mathbf{V}_{tk}. \tag{2}$$

For a planar array with microphones at a similar height as the target speaker, the steering vector can be approximated as depending only on the target's azimuth direction $\theta_t$, i.e.,

$$\mathbf{d}_{tk} \approx \mathbf{d}_k(\theta_t). \tag{3}$$

Consequently, we refer to $\theta_t$ as the direction of arrival (DoA) throughout this work, implicitly excluding elevation.

III. STEERING SPATIAL FILTERS

A. Strongly Guided Target Speaker Extraction

Spatially selective filters (SSFs) exploit positional information to extract a sound source originating from a designated direction. In this work, we follow the common convention of using only the target speaker's azimuth DoA $\theta_t$ for guidance [3], [4]. When the DoA is known throughout the entire recording, the SSF can be directly employed for target speaker extraction (TSE) by continuously steering it toward the target speaker, a scenario we refer to as strong guidance.

In a frame-wise causal STFT-domain processing pipeline, the SSF has access to the current and all previous broadband multichannel observations $\mathbf{Y}_{1:t}$ together with the DoAs $\theta_{1:t}$ for computing the speech estimate $\hat{S}_{tk}$. However, for online inference, re-evaluating all prior input values for each new frame $t$ becomes computationally intractable. Instead, temporal context can be embedded into a hidden state $\mathbf{z}_{t-1}$, yielding a sequential processing style which, conditioned on $\mathbf{z}_{t-1}$, solely depends on the current multichannel observation $\mathbf{Y}_t$ and DoA $\theta_t$,

$$\hat{S}_{tk} = \mathcal{F}_k(\mathbf{Y}_t, \theta_t \mid \mathbf{z}_{t-1}), \tag{4}$$

with $\mathcal{F}_k$ denoting the SSF. Updating the hidden state $\mathbf{z}_t$ frame by frame yields a computationally efficient formulation suitable for real-time speech enhancement [21], [22], [23].

B. Bayesian Tracking for Weakly Guided Speaker Extraction

The dependency of strongly guided TSE on continuous ground-truth directional cues greatly limits practical applicability. Weakly guided TSE [13] relaxes this constraint and solely relies on the target speaker's initial DoA $\theta_0$. To continue using an SSF for enhancement, a target speaker tracking (TST) algorithm must be incorporated to replace the continuous oracle guidance with DoA estimates $\hat\theta_t$ based on $\theta_0$. When tracking solely relies on the noisy observations $\mathbf{Y}_{1:t}$, the TST and SSF algorithms can be directly concatenated, as shown in Fig. 1a.
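The far-field model in (2) and (3) reduces the spatial information about the target to phase shifts encoded in the steering vector. As a minimal sketch, assuming a uniform circular array of $M = 3$ microphones with 10 cm diameter (as in Sec. V-A), the first microphone as reference, a speed of sound of 343 m/s, an STFT size of $K = 512$ at 16 kHz, and the convention that a microphone reached earlier by $\tau$ seconds carries the phase factor $e^{j\omega\tau}$, $\mathbf{d}_k(\theta)$ could be computed as follows. The helper names `mic_positions` and `steering_vector` are hypothetical; this is an illustration, not the authors' implementation.

```python
import cmath
import math

SPEED_OF_SOUND = 343.0   # m/s (assumed)
FS = 16_000              # sampling rate in Hz (Sec. V-A)
K = 512                  # STFT length: 32 ms at 16 kHz (Sec. V-A)


def mic_positions(num_mics=3, diameter=0.10):
    """Planar (x, y) positions of a uniform circular array centred at the origin."""
    r = diameter / 2
    return [(r * math.cos(2 * math.pi * m / num_mics),
             r * math.sin(2 * math.pi * m / num_mics)) for m in range(num_mics)]


def steering_vector(theta, k, mics, ref=0):
    """Far-field steering vector d_k(theta) relative to microphone `ref`.

    theta: azimuth DoA in radians; k: frequency-bin index (0..K/2).
    A plane wave from azimuth theta reaches microphone m earlier than the
    reference by (p_m - p_ref) . u / c seconds, u being the unit vector
    pointing toward the source.
    """
    omega = 2 * math.pi * k * FS / K          # angular frequency of bin k
    u = (math.cos(theta), math.sin(theta))
    d = []
    for (x, y) in mics:
        dx, dy = x - mics[ref][0], y - mics[ref][1]
        advance = (dx * u[0] + dy * u[1]) / SPEED_OF_SOUND   # time advance in s
        d.append(cmath.exp(1j * omega * advance))            # phase only: |d_m| = 1
    return d
```

Because all entries have unit magnitude, the sketch mirrors the assumption that amplitude differences across microphones are negligible; the opposite sign convention would simply conjugate the vector.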
In this work, we focus on recursive Bayesian filters for TST, which model the posterior $p(\theta_t \mid \mathbf{Y}_{1:t}, \theta_0)$, referred to as the filtering distribution [33]. The DoA is inferred via a central tendency measure of the filtering distribution, e.g., the mean, yielding the minimum mean squared error (MMSE) estimate

$$\hat\theta_t = \mathbb{E}\{\theta_t \mid \mathbf{Y}_{1:t}, \theta_0\}. \tag{5}$$

Recursive Bayesian filters rely on a generative state-space model which specifies how the state $\theta_t$ evolves over time and generates the observations $\mathbf{Y}_{1:t}$. Assuming Markov properties [33, Sec. 4.1], the state transition is fully specified by $p(\theta_t \mid \theta_{t-1})$, and the observation $\mathbf{Y}_t$ is conditionally independent of all past states and observations given the current state $\theta_t$. This allows the filtering distribution to be updated recursively,

$$p(\theta_t \mid \mathbf{Y}_{1:t}, \theta_0) \propto p(\mathbf{Y}_t \mid \theta_t)\, p(\theta_t \mid \mathbf{Y}_{1:t-1}, \theta_0), \tag{6}$$

via the likelihood $p(\mathbf{Y}_t \mid \theta_t)$ and the predictive distribution (prior), written as a function of the state transition $p(\theta_t \mid \theta_{t-1})$,

$$p(\theta_t \mid \mathbf{Y}_{1:t-1}, \theta_0) = \int p(\theta_t \mid \theta_{t-1})\, p(\theta_{t-1} \mid \mathbf{Y}_{1:t-1}, \theta_0)\, \mathrm{d}\theta_{t-1}. \tag{7}$$

[Fig. 1 block diagrams. (a) Concatenation of TST and SSF: the TST block receives $\mathbf{Y}_t$ and $\theta_0$ and passes $\hat\theta_t$ to the SSF, which outputs $\hat{S}_t$. (b) Modify TST for AR use of processed speech: a one-frame delay ($z^{-1}$) additionally feeds $\hat{S}_{t-1}$ back to the TST. (c) Multichannel (MIMO) SSF for MIMO-AR: the TST is driven by the delayed multichannel SSF output instead of $\mathbf{Y}_t$.]

Fig. 1. Weakly guided speaker extraction using target speaker tracking (TST) to estimate the target's direction $\theta_t$ from starting direction $\theta_0$ and guide a spatially selective filter (SSF) for enhancement. We propose an autoregressive (AR) integration of the processed speech for improved guidance in (b) and (c).

Kalman Filter: The Kalman filter (KF) [33, Sec. 4.3], [34] is a recursive Bayesian filter defined for a linear-Gaussian state-space model. Given this condition, both the filtering and predictive distributions in (6) and (7) remain Gaussian, yielding a tractable recursion while providing the optimal MMSE estimate via (5). In tracking applications, the state-transition model is often extended by first- or higher-order derivatives to enforce smooth trajectories [35]. In this work, we adopt a white-noise acceleration model [36], [37], which assumes linear dynamics for the DoA $\theta_t$ and the azimuth velocity $\dot\theta_t$,

$$\begin{bmatrix}\theta_t \\ \dot\theta_t\end{bmatrix} = \begin{bmatrix}1 & \Delta T \\ 0 & 1\end{bmatrix}\begin{bmatrix}\theta_{t-1} \\ \dot\theta_{t-1}\end{bmatrix} + \begin{bmatrix}\Delta T^2/2 \\ \Delta T\end{bmatrix}\nu_t, \qquad \nu_t \sim \mathcal{N}(0, \sigma_\nu^2). \tag{8}$$

With one-dimensional process noise $\nu_t$, the joint state transition $p(\theta_t, \dot\theta_t \mid \theta_{t-1}, \dot\theta_{t-1})$ is Gaussian but degenerate (rank-deficient). Eliminating $\nu_t$ yields $\theta_t = \theta_{t-1} + \frac{\Delta T}{2}(\dot\theta_t + \dot\theta_{t-1})$, i.e., a deterministic link between $\theta_t$ and $\dot\theta_t$, reducing the two-dimensional model to only one effective degree of freedom.

While in commonly used signal models, e.g., (2), the DoA $\theta_t$ enters non-linearly via the steering vector $\mathbf{d}_{tk}$, the KF requires a linear relationship between the STFT coefficients $\mathbf{Y}_t$ and $\theta_t$. To fulfill this property, Traa et al. [29], [38, Sec. 4.1.2] utilize the DoA estimate $\Phi_t(\mathbf{Y}_t)$ and implicitly assume it is a sufficient statistic of $\mathbf{Y}_t$ regarding $\theta_t$. In particular, $\Phi_t$ is the aggregation of narrow-band DoA estimates $\phi_{tk}(\mathbf{Y}_{tk})$, which minimize the linear-phase least-squares (LS) error between the corresponding direct-path and noisy inter-channel phase differences (IPDs) across all microphone pairs, see, e.g., [39].
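Returning to the white-noise acceleration model in (8), its degeneracy can be made concrete in a few lines. The plain-Python sketch below builds the transition matrix and the process-noise covariance $\mathbf{Q} = \sigma_\nu^2 \mathbf{g}\mathbf{g}^T$ implied by the scalar noise $\nu_t$ (with $\mathbf{g}$ the noise gain vector in (8)), performs one Kalman prediction step, and samples a trajectory on which the deterministic link $\theta_t = \theta_{t-1} + \frac{\Delta T}{2}(\dot\theta_t + \dot\theta_{t-1})$ holds exactly. The frame shift $\Delta T = 16$ ms (the hop size of Sec. V-A), the value of $\sigma_\nu$, and all helper names are assumptions; angle wrapping and the measurement update are deliberately left out.

```python
import random

DT = 0.016        # frame shift Delta T in s (assumed: the 16 ms hop of Sec. V-A)
SIGMA_NU = 0.5    # standard deviation of nu_t in rad/s^2 (assumed)

# Transition of (8): [theta, theta_dot]_t = F [theta, theta_dot]_{t-1} + G * nu_t
F = [[1.0, DT],
     [0.0, 1.0]]
G = [DT ** 2 / 2, DT]

# Process-noise covariance Q = sigma_nu^2 * G G^T, which has rank one
Q = [[SIGMA_NU ** 2 * G[i] * G[j] for j in range(2)] for i in range(2)]


def matmul(a, b):
    """Product of two small matrices given as nested lists."""
    return [[sum(a[i][m] * b[m][j] for m in range(len(b)))
             for j in range(len(b[0]))] for i in range(len(a))]


def transpose(a):
    return [list(row) for row in zip(*a)]


def kf_predict(mean, cov):
    """Kalman prediction: mean <- F mean, cov <- F cov F^T + Q."""
    new_mean = [F[0][0] * mean[0] + F[0][1] * mean[1],
                F[1][0] * mean[0] + F[1][1] * mean[1]]
    fpf = matmul(matmul(F, cov), transpose(F))
    new_cov = [[fpf[i][j] + Q[i][j] for j in range(2)] for i in range(2)]
    return new_mean, new_cov


def simulate(num_frames, theta0=0.0, seed=0):
    """Sample (theta_t, theta_dot_t) from (8), starting at rest (theta_dot_0 = 0)."""
    rng = random.Random(seed)
    states = [(theta0, 0.0)]
    for _ in range(num_frames):
        theta, theta_dot = states[-1]
        nu = rng.gauss(0.0, SIGMA_NU)
        states.append((theta + DT * theta_dot + G[0] * nu,
                       theta_dot + G[1] * nu))
    return states
```

The determinant of `Q` vanishes up to rounding, which is the rank deficiency mentioned above; every pair of consecutive states returned by `simulate` satisfies the deterministic link, so the model has only one effective degree of freedom.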
Given equal inter-channel spacings, such as in a uniform circular array of three microphones, the sufficient statistic $\Phi_t$ can be expressed as

$$\Phi_t = \arg \sum_{k=1}^{K/2} g_k\, e^{j\phi_{tk}}, \qquad g_k = \begin{cases} 1, & k \le K_A \\ 0, & \text{else} \end{cases} \tag{9}$$

with $p(\mathbf{Y}_t \mid \theta_t) \propto p(\Phi_t \mid \theta_t)$ as a function of $\theta_t$. The weights $g_k$ exclude frequency bins above $K_A$, which suffer from spatial aliasing [39]. However, due to the inherent circularity, this results in the wrapped Gaussian state-space model [40, Sec. 3.5.7]

$$\Phi_t \mid \theta_t \sim \mathcal{WN}(\theta_t, \sigma_\Phi^2). \tag{10}$$

To maintain tractability, Traa et al. use mode matching to project the wrapped Gaussian back to an ordinary Gaussian.

Particle Filter: Instead of relying on linear-Gaussian assumptions, the particle filter (PF) [41], [42] approximates the filtering distribution using a weighted set of samples, known as particles. Specifically, the PF models $p(\theta_{1:t} \mid \mathbf{Y}_{1:t}, \theta_0)$, i.e., the joint filtering distribution of the DoA sequence $\theta_{1:t}$, factorizing as

$$p(\theta_{1:t} \mid \mathbf{Y}_{1:t}, \theta_0) \propto p(\mathbf{Y}_{1:t} \mid \theta_{0:t})\, p(\theta_{1:t} \mid \theta_0). \tag{11}$$

The bootstrap filter [33, Alg. 7.5], [41] is a variant of the PF representing the joint filtering distribution using Monte Carlo samples $\theta^n_{1:t}$ [33, Sec. 2.5] from the joint prior $p(\theta_{1:t} \mid \theta_0)$,

$$p(\theta_{1:t} \mid \mathbf{Y}_{1:t}, \theta_0) \approx \sum_{n=1}^{N} w^n_t\, \delta(\theta_{1:t} - \theta^n_{1:t}), \tag{12}$$

with $\delta(\cdot)$ denoting the Dirac delta function and normalized weights $w^n_t$. The filtering distribution $p(\theta_t \mid \mathbf{Y}_{1:t}, \theta_0)$ is trivially obtained via marginalization. Under Markov assumptions, the joint prior in (11) factorizes, enabling sequential sampling of $\theta^n_t$ from $p(\theta_t \mid \theta^n_{t-1})$ and recursive weight updates via

$$w^n_t \propto \prod_{t'=1}^{t} p(\mathbf{Y}_{t'} \mid \theta^n_{t'}) \propto p(\mathbf{Y}_t \mid \theta^n_t)\, w^n_{t-1}. \tag{13}$$

This multiplicative recursion can lead to weight degeneracy, resulting in near-zero weights for the majority of particles.
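The multiplicative recursion in (13) is commonly evaluated in the log domain to avoid numerical underflow. The sketch below uses hypothetical helpers and a toy Gaussian log-likelihood in place of the observation models of this paper: it keeps 50 particles at fixed DoA offsets, applies the same per-frame likelihood repeatedly without moving or resampling the particles, and tracks the effective number of particles $1/\sum_n (w^n_t)^2$, the degeneracy measure formalized in (14).

```python
import math
import random


def normalize_log_weights(log_w):
    """Normalized weights from unnormalized log-weights (log-sum-exp trick)."""
    m = max(log_w)
    w = [math.exp(lw - m) for lw in log_w]
    total = sum(w)
    return [x / total for x in w]


def effective_sample_size(w):
    """Effective number of particles for normalized weights w."""
    return 1.0 / sum(x * x for x in w)


def weight_recursion(log_lik_per_frame, num_particles):
    """Apply w_t ∝ p(Y_t | theta_t) w_{t-1} frame by frame, without resampling.

    log_lik_per_frame: list over frames of per-particle log-likelihoods.
    Returns the effective sample size after each frame.
    """
    log_w = [math.log(1.0 / num_particles)] * num_particles   # uniform start
    ess = []
    for log_lik in log_lik_per_frame:
        log_w = [lw + ll for lw, ll in zip(log_w, log_lik)]
        ess.append(effective_sample_size(normalize_log_weights(log_w)))
    return ess


# Toy example (assumed values): 50 particles with fixed DoA offsets to the truth,
# scored by a Gaussian log-likelihood with a hypothetical 10-degree spread.
rng = random.Random(1)
N = 50
offsets = [rng.gauss(0.0, math.radians(20)) for _ in range(N)]
frame_loglik = [-0.5 * (o / math.radians(10)) ** 2 for o in offsets]
ess_trace = weight_recursion([frame_loglik] * 20, N)
```

Since the particles are never moved or resampled here, `ess_trace` shrinks from frame to frame, which illustrates why the adaptive resampling described next is needed.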
Using the effective number of particles $N^{(\mathrm{eff})}_t$ as an indicator,

$$N^{(\mathrm{eff})}_t = \frac{1}{\sum_{n=1}^{N} (w^n_t)^2}, \tag{14}$$

adaptive resampling schemes [33, Sec. 7.4], [42] counter weight degeneracy by resampling particles according to the weights $w^n_t$ if $N^{(\mathrm{eff})}_t$ falls below a threshold. Since $\theta_t$ is embedded in the multidimensional Gaussian state-space in (8), simulating $p(\theta_t \mid \theta_{t-1})$ is in general achieved via Rao-Blackwellization [33, Sec. 7.5]. However, in this case the degeneracy of the state-space allows for sampling particles $\theta^n_t$ directly from $p(\theta_t \mid \theta^n_{t-1}, \dot\theta^n_{t-1})$ using the recursive relationship

$$\dot\theta^n_t \mid \theta^n_{1:t}, \theta_0 = \frac{2}{\Delta T}\left(\theta^n_t - \theta^n_{t-1}\right) - \dot\theta^n_{t-1}, \tag{15}$$

where we set the initial angular velocity $\dot\theta_0$ to zero.

Given that the PF does not require Gaussianity, we may now use a directional distribution to model the circular nature of the DoA estimation problem. Under far-field conditions, amplitude differences between microphones are negligible, and the IPDs of the normalized STFT coefficients $\bar{\mathbf{Y}}_{tk} = \mathbf{Y}_{tk}/\|\mathbf{Y}_{tk}\|$ contain all spatial information. Thus, we assume sufficiency for estimating the DoA $\theta_t$ and model $\bar{\mathbf{Y}}_{tk}$ via the complex Watson distribution [40, Sec. 14.7], [43], [44], which is invariant to global phase shifts, centered at the steering vector $\mathbf{d}_{tk}/\sqrt{M}$ with concentration $\kappa$. For independent STFT bins, this gives

$$\bar{\mathbf{Y}}_{tk} \mid \theta_t \sim \mathcal{CW}\!\left(\mathbf{d}_{tk}/\sqrt{M}, \kappa\right) \tag{16}$$

with $p(\mathbf{Y}_t \mid \theta_t) \propto p(\bar{\mathbf{Y}}_t \mid \theta_t)$ as a function of $\theta_t$, which, together with the state-transition model in (8), defines the PF's recursive approximation of the filtering distribution $p(\theta_t \mid \mathbf{Y}_{1:t}, \theta_0)$.

IV. AUTOREGRESSIVELY GUIDED SPATIAL FILTERS

By including an independent upstream target speaker tracking (TST) algorithm, the concatenative target speaker extraction (TSE) pipeline shown in Fig. 1a may appear to be the natural approach to automate the steering of a spatially selective filter (SSF). However, in a frame-wise causal and sequential processing framework, the previously enhanced speech signal $\hat{S}_{t-1}$ is available at frame $t$ and can be incorporated to improve extraction. In the resulting AR pipeline, $\hat{S}_{t-1}$ can serve as an auxiliary guide for enhancement [26], [27], [28], to improve tracking performance [14], or both [15]. Extending our conference paper [14], in this work we aim to increase the tracking accuracy of Kalman and particle filters while retaining minimal computational overhead. The processed speech signal is either additionally incorporated into the Bayesian filtering formulations, as shown in Fig. 1b, or directly used to replace the noisy observation $\mathbf{Y}_t$, see Fig. 1c.

A. Extended Bayesian Filtering Formulations

To include the enhanced speech from the SSF in the presented Bayesian tracking algorithms, we extend the generative framework by introducing the clean speech signals $S_{1:t-1}$ as latent observations that complement $\mathbf{Y}_{1:t}$ for estimating the DoA $\theta_t$. However, without a matched speech signal at frame $t$, the noisy STFT coefficients $\mathbf{Y}_t$ are less informative for tracking, since the target's spectral characteristics cannot be exploited. We therefore omit $\mathbf{Y}_t$ and solely rely on the predictive distribution $p(\theta_t \mid \mathbf{Y}_{1:t-1}, S_{1:t-1}, \theta_0)$ for DoA estimation, yielding

$$\hat\theta_t = \mathbb{E}\{\theta_t \mid \mathbf{Y}_{1:t-1}, S_{1:t-1}, \theta_0\}. \tag{17}$$

During inference, we use the enhanced STFT segments $\hat{S}_{1:t-1}$ as a plug-in approximation for samples of the clean speech $S_{1:t-1}$. While this neglects processing degradation from the SSF, we demonstrate that a high tracking accuracy can be achieved.
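Compared with the concatenative pipeline of Fig. 1a, the essential change is the ordering within each frame: the DoA for frame $t$ is taken from the predictive distribution in (17), and only afterwards are $\mathbf{Y}_t$ and the enhanced output fed back to the tracker. The skeleton below sketches this MISO-AR data flow with a hypothetical `Tracker` interface and an arbitrary `ssf` callable, where each frame is abstracted to a single list of $M$ complex coefficients; it does not implement the Bayesian filters or the SSF themselves.

```python
from typing import Callable, List, Protocol, Sequence, Tuple


class Tracker(Protocol):
    """Hypothetical interface of a Bayesian DoA tracker used for AR guidance."""

    def predict(self) -> float:
        """DoA estimate for the current frame from data up to frame t-1, cf. (17)."""
        ...

    def update(self, y_t: Sequence[complex], s_hat_t: complex) -> None:
        """Absorb the noisy frame and the SSF output for the next prediction."""
        ...


Filter = Callable[[Sequence[complex], float], complex]


def ar_guided_extraction(frames: Sequence[Sequence[complex]],
                         tracker: Tracker,
                         ssf: Filter) -> List[Tuple[float, complex]]:
    """Frame-wise MISO-AR loop in the spirit of Fig. 1b.

    The tracker never sees Y_t or S_hat_t before predicting theta_hat_t, so the
    feedback acts with a delay of one frame (the z^-1 block in Fig. 1b).
    """
    outputs = []
    for y_t in frames:
        theta_t = tracker.predict()       # relies on Y_{1:t-1} and S_hat_{1:t-1} only
        s_hat_t = ssf(y_t, theta_t)       # steer the spatial filter toward theta_t
        tracker.update(y_t, s_hat_t)      # feedback, effective from frame t+1 onwards
        outputs.append((theta_t, s_hat_t))
    return outputs
```

For the MIMO-AR variant of Fig. 1c, `ssf` would instead return a multichannel estimate, and `update` would receive only that estimate rather than the pair of noisy frame and single-channel output.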
Kalman Filter: Assuming independence between the single-channel clean speech signal $S_t$ and the DoA $\theta_t$, the predictive distribution can be further factorized, resulting in the recursion

$$p(\theta_t \mid \mathbf{Y}_{1:t-1}, S_{1:t-1}, \theta_0) \propto \int p(\theta_t \mid \theta_{t-1})\, p(\mathbf{Y}_{t-1} \mid S_{t-1}, \theta_{t-1})\, p(\theta_{t-1} \mid \mathbf{Y}_{1:t-2}, S_{1:t-2}, \theta_0)\, \mathrm{d}\theta_{t-1}. \tag{18}$$

To enforce linear-Gaussianity of the likelihood $p(\mathbf{Y}_t \mid S_t, \theta_t)$ for tractability, we follow Traa et al. and employ the DoA estimator $\Phi_t$ in (9) as a sufficient statistic for $\theta_t$. However, instead of uniformly aggregating the narrow-band DoA estimates $\phi_{tk}$ as done in (9), we propose incorporating $S_t$ to emphasize frequency bins dominated by the target speaker. This gives

$$\Phi_t = \arg \sum_{k=1}^{K/2} g_{tk}\, e^{j\phi_{tk}}, \qquad g_{tk} = \begin{cases} |S_{tk}|^2, & k \le K_A \\ 0, & \text{else} \end{cases} \tag{19}$$

with $p(\mathbf{Y}_t \mid S_t, \theta_t) \propto p(\Phi_t \mid S_t, \theta_t)$ as a function of $\theta_t$. Nevertheless, as with the KF of Traa et al. in Sec. III-B, the linear-phase DoA estimates $\phi_{tk}$ conceptually limit the efficiency of incorporating the enhanced speech, since only the bandwidth below the spatial aliasing frequency bin $K_A$ can be utilized. Our subsequent PF algorithm does not share this limitation.

Algorithm 1: Proposed bootstrap particle filter (PF) for autoregressive (AR) incorporation of SSF estimates (MISO-AR).
Require: DoA $\theta_0$, noise covariance $\hat{\mathbf{R}}_0$, and threshold $\tau^{(\mathrm{eff})}$
1: Initialize $\{\theta^n_0, \dot\theta^n_0, w^n_0\} \leftarrow \{\theta_0, 0, 1/N\}$
2: for $t = 1, 2, 3, \ldots$ do
3:   if $t > 1$ then
4:     Obtain measurements $\mathbf{Y}_{t-1}$, $\hat{S}_{t-1}$
5:     Update noise covariance matrix $\hat{\mathbf{R}}_{t-1}$ using (25)
6:     Update weights $\tilde{w}_{t-1}$ using (22)
7:     Normalize weights $w^n_{t-1} \leftarrow \tilde{w}^n_{t-1} / \sum_{n'} \tilde{w}^{n'}_{t-1}$
8:     Compute effective sample size $N^{(\mathrm{eff})}_{t-1}$ using (14)
9:     if $N^{(\mathrm{eff})}_{t-1} < \tau^{(\mathrm{eff})} N$ then
10:      Resample $n' \sim \mathrm{Categorical}(w^n_{t-1})$
11:      Set $\{\theta^n_{t-1}, \dot\theta^n_{t-1}, w^n_{t-1}\} \leftarrow \{\theta^{n'}_{t-1}, \dot\theta^{n'}_{t-1}, 1/N\}$
12:    end if
13:  end if
14:  Sample DoA particles $\theta^n_t \sim p(\theta_t \mid \theta^n_{t-1}, \dot\theta^n_{t-1})$
15:  Update velocities $\dot\theta^n_t$ using (15)
16:  Estimate DoA $\hat\theta_t \leftarrow \arg \sum_n w^n_{t-1} e^{j\theta^n_t}$
17: end for

Particle Filter: To obtain a bootstrap PF formulation for computing the predictive mean in (17), we approximate the joint predictive distribution $p(\theta_{1:t} \mid \mathbf{Y}_{1:t-1}, S_{1:t-1}, \theta_0)$ via Monte Carlo sampling. Assuming that the speech $S_t$ is independent of the DoA $\theta_t$, the joint predictive distribution factorizes as

$$p(\theta_{1:t} \mid \mathbf{Y}_{1:t-1}, S_{1:t-1}, \theta_0) \propto p(\mathbf{Y}_{1:t-1} \mid S_{1:t-1}, \theta_{0:t})\, p(\theta_{1:t} \mid \theta_0). \tag{20}$$

Under Markov properties, the Monte Carlo approximation of the predictive distribution after marginalization yields

$$p(\theta_t \mid \mathbf{Y}_{1:t-1}, S_{1:t-1}, \theta_0) \approx \sum_{n=1}^{N} w^n_{t-1}\, \delta(\theta_t - \theta^n_t), \tag{21}$$

with recursively sampled particles $\theta^n_t$ (Sec. III-B) and weights

$$w^n_t \propto p(\mathbf{Y}_t \mid S_t, \theta^n_t)\, w^n_{t-1}. \tag{22}$$

Since the PF is not constrained to a linear relationship between the observation $\mathbf{Y}_t$ and the DoA $\theta_t$, we use the generative model in (2), which encodes $\theta_t$ via the steering vector $\mathbf{d}_{tk}$. Assuming that the noise STFT coefficients $\mathbf{V}_{tk}$ are uncorrelated across frequency [45] and follow a zero-mean, proper complex Gaussian distribution [46, Sec. 2.3.1] with covariance $\mathbf{R}_{tk}$ results in the likelihood

$$\mathbf{Y}_{tk} \mid S_{tk}, \theta_t \sim \mathcal{CN}(\mathbf{d}_{tk} S_{tk}, \mathbf{R}_{tk}). \tag{23}$$

During inference, we use the noise estimate $\hat{\mathbf{V}}_{tk}$, defined as

$$\hat{\mathbf{V}}_{tk} = \mathbf{Y}_{tk} - \mathbf{d}_k(\hat\theta_t)\, \hat{S}_{tk}, \tag{24}$$

to recursively estimate the time-varying noise covariance matrix $\mathbf{R}_t$ using the exponential moving average (EMA)

$$\hat{\mathbf{R}}_t = \left(1 - \alpha^{(\mathrm{EMA})}\right) \hat{\mathbf{V}}_t \hat{\mathbf{V}}_t^H + \alpha^{(\mathrm{EMA})}\, \hat{\mathbf{R}}_{t-1}. \tag{25}$$

Alg. 1 summarizes the proposed incorporation of speech estimates into the PF, using the generic bootstrap filter of Lehmann et al. [47, Alg. 1] as the foundational framework.

B. Multiple-Input and Multiple-Output (MIMO) Spatial Filters

Instead of modifying the Bayesian filtering formulations to incorporate the enhanced speech signal as an additional observation, it can also be used as a replacement for the noisy measurement $\mathbf{Y}_t$. However, the original SSF formulation in (4), which is multiple-input and single-output (MISO) with respect to the channel dimension, yields a single-channel speech estimate $\hat{S}_t$ without spatial information and is therefore uninformative for tracking on its own. Motivated by recent works reporting accurate localization in stationary scenarios [48], [49], [50], we propose to extend the MISO SSF in (4) to a multiple-input and multiple-output (MIMO) formulation by estimating the target's full direct-path propagated speech signal $\hat{\mathbf{S}}_{tk}$ [14],

$$\hat{\mathbf{S}}_{tk} = \mathcal{F}_k(\mathbf{Y}_t, \theta_t \mid \mathbf{z}_{t-1}). \tag{26}$$

Given that the final layer of a deep SSF is typically linear [3], [4], the MIMO extension affects the model complexity only marginally for reasonably sized arrays. However, enforcing spatial cue preservation in the speech estimates introduces additional challenges for enhancement. With the signal model in (1) implying that the direct-path speech $\mathbf{S}_t$ captures all information in $\mathbf{Y}_t$ about the DoA $\theta_t$, we can drop the additional conditioning,

$$p(\theta_t \mid \mathbf{Y}_{1:t-1}, \mathbf{S}_{1:t-1}, \theta_0) = p(\theta_t \mid \mathbf{S}_{1:t-1}, \theta_0), \tag{27}$$

and use the predictive prior $p(\theta_t \mid \mathbf{S}_{1:t-1}, \theta_0)$ for estimation,

$$\hat\theta_t = \mathbb{E}\{\theta_t \mid \mathbf{S}_{1:t-1}, \theta_0\}. \tag{28}$$

By retaining the same generative model, $\mathbf{Y}_t$ can be directly substituted by $\mathbf{S}_t$ in the filtering formulations of Sec. III-B. Similar to Sec. IV-A, we use the enhanced signals $\hat{\mathbf{S}}_{1:t-1}$ as a plug-in approximation for $\mathbf{S}_{1:t-1}$ during inference. Figure 1c presents the resulting AR TSE pipeline, which we denote as MIMO-AR, as opposed to MISO-AR from Sec. IV-A and Fig. 1b.

V. DATASET

A. Acoustic Dataset Parametrization

To facilitate development and evaluation under controlled acoustic conditions, we generate a synthetic dataset of noisy and reverberant recordings containing two moving speakers. In particular, we use utterances from the LibriSpeech corpus [51] and pair them according to Libri2Mix [52]. For spatialization, we simulate room impulse responses (RIRs) for shoe-box shaped rooms via gpuRIR [53], a GPU-accelerated implementation of the image method [54]. We parameterize each acoustic scenario according to the randomized setup of Tesch et al. [3], using reverberation times between 0.2 s and 0.5 s and a circular three-microphone array with 10 cm diameter. To encourage movement around the array, we place it within the central 20 % of the room while increasing the range of room widths and lengths to values between 4 m and 8 m. Speakers are initially separated by at least 15° in azimuth and move along trajectories in the horizontal plane at a constant height during each recording. The temporal discretization of the trajectories is aligned with the STFT parametrization, for which we use a square-root Hann window of length 32 ms and a 16 ms hop size at 16 kHz. While our focus is speaker extraction, we add spatially diffuse, spectrally white, stationary Gaussian noise [55] at 20–30 dB SNR to improve robustness to mild additive interference.

B. Social Force Motion Model

While gpuRIR [53] enables efficient simulation of moving speakers, realistic motion models are essential to ensure generalization of data-driven tracking and enhancement to real-world recordings. A common approach samples start and end points within the simulation boundaries and connects them via linear trajectories at constant velocity [56], [57], optionally with sinusoidal modulation [16]. However, finite path lengths couple speaker velocity to room size and recording duration.
Circular trajectories [13], [14], [58] avoid this issue, but enforce an unrealistic fixed array distance. To overcome these limitations, we propose adopting the social force model of Helbing et al. [31], originally introduced in the context of environmentally aware pedestrian dynamics, to simulate speaker movement in enclosed acoustic scenarios. Via a Newtonian formulation, smooth trajectories of arbitrary length and velocity profile can be generated that satisfy environmental constraints. In particular, Newton's second law of motion [59, Eq. 1.3] is employed to couple the positions $\mathbf{r}_i$ of all speakers $i \in \mathcal{I}$ to the unit-mass driving forces $\mathbf{f}^{(\mathrm{D})}_i$ and repulsive forces $\mathbf{f}^{(\mathrm{R})}_i$ through the differential equation

$$\dot{\mathbf{v}}_i = \mathbf{f}^{(\mathrm{D})}_i + \mathbf{f}^{(\mathrm{R})}_i, \qquad \mathbf{v}_i = \dot{\mathbf{r}}_i. \tag{29}$$

The driving force $\mathbf{f}^{(\mathrm{D})}_i$ represents the $i$-th speaker's desire to move toward a fictitious goal $\mathbf{r}^{(\mathrm{D})}_i$ at a desired velocity $\mathbf{v}^{(\mathrm{D})}_i$, with the relaxation time $\tau$ influencing the acceleration behavior,

$$\mathbf{f}^{(\mathrm{D})}_i = \frac{1}{\tau}\left(\mathbf{v}^{(\mathrm{D})}_i - \mathbf{v}_i\right), \qquad \mathbf{v}^{(\mathrm{D})}_i = \frac{\mathbf{r}^{(\mathrm{D})}_i - \mathbf{r}_i}{\left\|\mathbf{r}^{(\mathrm{D})}_i - \mathbf{r}_i\right\|}\, v^{(\mathrm{D})}_i. \tag{30}$$

We sample the driving speed $v^{(\mathrm{D})}_i$ from a Gaussian distribution with mean 1.34 m/s and standard deviation 0.26 m/s (clamped at zero) [31], which corresponds to typical walking speeds [60]. The fictitious goal $\mathbf{r}^{(\mathrm{D})}_i$ is randomly initialized and resampled when the speaker comes within 0.5 m, with the relaxation time $\tau = 1$ s enforcing smooth directional changes.

While the driving force $\mathbf{f}^{(\mathrm{D})}_i$ guides each speaker along an intended path, the repulsive force $\mathbf{f}^{(\mathrm{R})}_i$ in (29) incorporates environmental constraints and thereby shapes trajectories to ensure physical feasibility. We decompose $\mathbf{f}^{(\mathrm{R})}_i$ into boundary forces from the walls $\mathbf{f}^{(\mathrm{W})}_i$ and the microphone array $\mathbf{f}^{(\mathrm{A})}_i$, and inter-speaker forces $\mathbf{f}^{(\mathrm{S})}_i$ that preserve comfortable distances,

$$\mathbf{f}^{(\mathrm{R})}_i = \mathbf{f}^{(\mathrm{W})}_i + \mathbf{f}^{(\mathrm{A})}_i + \mathbf{f}^{(\mathrm{S})}_i. \tag{31}$$

We model the wall forces $\mathbf{f}^{(\mathrm{W})}_i$ as the negative sum of gradients of per-wall repulsive, exponential potentials $U^{(\mathrm{W})}_{iw}$ [31],

$$\mathbf{f}^{(\mathrm{W})}_i = -\sum_{w=1}^{4} \nabla_{\mathbf{r}_i} U^{(\mathrm{W})}_{iw}, \qquad U^{(\mathrm{W})}_{iw} = A^{(\mathrm{W})}_i\, e^{-d^{(\mathrm{W})}_{iw}/B^{(\mathrm{W})}}, \tag{32}$$

where the distance $d^{(\mathrm{W})}_{iw} = \|\mathbf{r}_i - \mathbf{r}^{(\mathrm{W})}_{iw}\|$ and $\mathbf{r}^{(\mathrm{W})}_{iw}$ denotes the point on wall $w$ closest to the speaker's position $\mathbf{r}_i$. To parametrize $A^{(\mathrm{W})}_i$ for maintaining a minimum distance $\varepsilon^{(\mathrm{W})}$ to the walls, we consider the limiting case of a head-on approach at speed $\|\mathbf{v}_i\| = v^{(\mathrm{D})}_i$. Specifically, we equate the speaker's kinetic energy [59, Eq. 1.3] to the work of the wall force for deceleration. This is approximated by an infinite deceleration path to $\varepsilon^{(\mathrm{W})}$, justified by the small exponential scale $B^{(\mathrm{W})}$ of 0.2 m [31]. Since integrating the wall force component toward the $w$-th wall cancels the gradient, the work amounts to the $w$-th potential $U^{(\mathrm{W})}_{iw}$ in (32) at $\varepsilon^{(\mathrm{W})}$. Minding the sign convention, the resulting equality can be solved for $A^{(\mathrm{W})}_i$, leading to

$$A^{(\mathrm{W})}_i = \frac{1}{2}\left(v^{(\mathrm{D})}_i\right)^2 e^{\varepsilon^{(\mathrm{W})}/B^{(\mathrm{W})}}, \tag{33}$$

which we parametrize according to a minimum distance $\varepsilon^{(\mathrm{W})}$ of 0.5 m.

[Fig. 2 panels: snapshots of two simulated speaker trajectories at 0.0 s, 1.5 s, 2.5 s, 3.5 s, 6.5 s, 7.5 s, 8.5 s, and 9.5 s; axes: room width [m] and length [m]; legend: microphone array position $\mathbf{r}^{(\mathrm{A})}$, trajectory, speaker position $\mathbf{r}_i$, driving goal $\mathbf{r}^{(\mathrm{D})}_i$, driving force $\mathbf{f}^{(\mathrm{D})}_i$, repulsive force $\mathbf{f}^{(\mathrm{R})}_i$, boundary region.]

Fig. 2. Social force motion model adapted from [31] to simulate planar two-speaker trajectories in an enclosed room. An underlying Newtonian formulation enforces smooth motion patterns while satisfying boundary constraints. Dataset generation code and further visualizations are available online¹.

Interaction forces from the microphone array $\mathbf{f}^{(\mathrm{A})}_i$ and other speakers $\mathbf{f}^{(\mathrm{S})}_i$ are modeled by repulsive potentials with elliptical contours, inducing realistic evasion maneuvers for point-like obstacles.
For the microphone array, this gives

$$\mathbf{f}_i^{(A)} = -\nabla_{\mathbf{r}_i} U_i^{(A)}, \qquad U_i^{(A)} = A_i^{(A)}\, e^{-2 b_i^{(A)}/B^{(A)}}, \tag{34}$$

with the semi-minor axis $b_i^{(A)}$ of the equipotential lines defined as

$$2 b_i^{(A)} = \sqrt{\left(\|\mathbf{d}_i^{(A)}\| + \|\mathbf{d}_i^{(A)} + \Delta t\, \mathbf{v}_i\|\right)^2 - \left(\Delta t\, \|\mathbf{v}_i\|\right)^2}, \tag{35}$$

the distance vector $\mathbf{d}_i^{(A)} = \mathbf{r}_i - \mathbf{r}^{(A)}$, and the array center $\mathbf{r}^{(A)}$. Thus, the non-central focal point of the ellipse is shifted toward the $i$-th speaker in proportion to its velocity $\mathbf{v}_i$, with factor $\Delta t = 2$ s, causing earlier interaction for fast, head-on approaches. To maintain far-field conditions, we parametrize $A_i^{(A)}$ to ensure a minimum distance $\varepsilon^{(A)}$ of 0.5 m between speaker and array. However, since the semi-minor axis in (35) depends on both position and velocity, the previous energy-based method becomes intractable. Instead, we adopt a quasi-static approximation ($\mathbf{v}_i = \mathbf{0}$), which is guaranteed to overestimate the deceleration force. Following the same derivation as for (33) yields

$$A_i^{(A)} = \frac{1}{2}\left(v_i^{(D)}\right)^2 e^{2\varepsilon^{(A)}/B^{(A)}}. \tag{36}$$

While the array is a stationary obstacle, the interfering speakers are moving. Accordingly, we employ the modified definition of repulsive potentials in [61], which uses the speaker velocity difference $\mathbf{v}_{ij} = \mathbf{v}_i - \mathbf{v}_j$ to orient the semi-minor axis $b_{ij}^{(S)}$,

$$2 b_{ij}^{(S)} = \sqrt{\left(\|\mathbf{d}_{ij}\| + \|\mathbf{d}_{ij} + \Delta t\, \mathbf{v}_{ij}\|\right)^2 - \left(\Delta t\, \|\mathbf{v}_{ij}\|\right)^2}, \tag{37}$$

which, after accumulation over all interfering speakers, results in the inter-speaker force

$$\mathbf{f}_i^{(S)} = -\sum_{j \in \mathcal{I} \setminus \{i\}} \nabla_{\mathbf{r}_i} U_{ij}^{(S)}, \qquad U_{ij}^{(S)} = A^{(S)}\, e^{-2 b_{ij}^{(S)}/B^{(S)}}. \tag{38}$$

For parametrization, we adopt the originally proposed values of $A^{(S)} = 2.1$ m²/s² and $B^{(S)} = 0.3$ m from [31]. During simulation, we solve the resulting nonlinear, coupled differential equations for the speakers' positions $\mathbf{r}_i$ in (29) using Euler's method. Figure 2 illustrates how the interplay between driving and repulsive forces determines the speakers' movement patterns. Further trajectories are shown in Fig. 3, with additional visualizations and dataset generation code available online¹.
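The force terms in (29)–(38) can be sketched in NumPy as follows. This is a minimal illustration under stated assumptions, not the published dataset code: all function names are hypothetical, walls are assumed to be straight (so the gradient of the wall distance is the outward unit normal), the gradients of the elliptical potentials are taken numerically for brevity, $\mathbf{d}$ is assumed to be the offset from the obstacle to speaker $i$, $B^{(A)}$ (not stated in this section) is left as an argument, and "Euler's method" is read as the explicit variant.

```python
import numpy as np

TAU = 1.0           # relaxation time tau [s], as stated in the text
DT_LOOKAHEAD = 2.0  # look-ahead factor Delta t in (35) and (37) [s]

def sample_desired_speed(rng, mean=1.34, std=0.26):
    """Desired walking speed v_i^(D) ~ N(1.34, 0.26^2) m/s, clamped at zero."""
    return max(rng.normal(mean, std), 0.0)

def driving_force(r, v, goal, speed, tau=TAU):
    """Eq. (30): relax the velocity toward `speed` along the unit vector to the goal."""
    direction = goal - r
    return (speed * direction / np.linalg.norm(direction) - v) / tau

def amplitude(speed, eps, scale, exponent_factor=1.0):
    """Eqs. (33)/(36): A = 0.5 * speed^2 * exp(k * eps / B), k = 1 (walls) or 2 (array)."""
    return 0.5 * speed**2 * np.exp(exponent_factor * eps / scale)

def wall_force(r, wall_point, amp, scale=0.2):
    """Eq. (32) for one straight wall: -grad of A exp(-||r - r_w|| / B), pointing away from the wall."""
    diff = r - wall_point
    dist = np.linalg.norm(diff)
    return (amp / scale) * np.exp(-dist / scale) * diff / dist

def twice_semi_minor(d, v_rel, dt=DT_LOOKAHEAD):
    """Eqs. (35)/(37): 2b. The root argument is non-negative by the triangle inequality."""
    s = np.linalg.norm(d) + np.linalg.norm(d + dt * v_rel)
    return np.sqrt(np.maximum(s**2 - (dt * np.linalg.norm(v_rel))**2, 0.0))

def elliptical_force(d, v_rel, amp, scale, h=1e-6):
    """Eqs. (34)/(38): -grad of A exp(-2b/B) w.r.t. r_i (equal to the gradient w.r.t. d),
    approximated by central differences with the velocity held fixed."""
    def potential(x):
        return amp * np.exp(-twice_semi_minor(x, v_rel) / scale)
    force = np.zeros_like(d, dtype=float)
    for k in range(d.size):
        step = np.zeros_like(force)
        step[k] = h
        force[k] = -(potential(d + step) - potential(d - step)) / (2.0 * h)
    return force

def euler_step(r, v, force, dt):
    """One explicit Euler step of (29) with unit mass: r' = v, v' = f."""
    return r + dt * v, v + dt * force
```

For a vanishing relative velocity, `twice_semi_minor` reduces to $2\|\mathbf{d}\|$, which is exactly the quasi-static case behind (36); `amplitude(..., exponent_factor=2.0)` then satisfies $\frac{1}{2}(v_i^{(D)})^2 = A_i^{(A)} e^{-2\varepsilon^{(A)}/B^{(A)}}$ at the minimum distance.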
¹ https://github.com/sp-uhh/autoregressive-spatial-filters

VI. EXPERIMENTAL SETUP

A. Model and Algorithm Parametrization

Spatially Selective Filter: We employ SpatialNet [62] as a deep, non-linear multichannel speech enhancement architecture. SpatialNet demonstrates exceptional spatial filtering capabilities by utilizing repeated narrow- and wideband processing modules. Specifically, we employ its frame-wise causal version using Mamba blocks for narrowband processing [63], [64] and the steering mechanism from [65, Fig. 5]. In total, this amounts to a computational cost of 18.8 GMACs/s and 1.74 M parameters; the MIMO extension, using the microphone array and STFT configuration of Sec. V-A, adds fewer than 500 parameters and about 800 kMACs/s per kHz of bandwidth.

Target Speaker Tracking: In the concatenative, weakly guided case, we use the Wrapped KF and the Bootstrap PF from Sec. III-B for tracking, with generic algorithmic implementations found in [29, Alg. 1] and [47, Alg. 1], respectively. Our proposed modifications in Sec. IV can be incorporated by changing the order of the prediction and update steps and modifying the likelihood definitions, as demonstrated for the Bootstrap PF in Alg. 1. The complexity of the Wrapped KF is mainly governed by the IPD computation and the LS operation in the linear-phase DoA estimators in (9) and (19). Since the spatial aliasing frequency of the circular three-microphone array (Sec. V-A) is as low as 2 kHz, which limits the usable bandwidth, the computational load amounts to only approximately 300 kMACs/s. Likewise, the cost of the Bootstrap PF is dominated by the likelihood evaluation, which has to be performed for each of the N = 50 particles, yielding about 2.5 MMACs/s for both the Watson and the Gaussian likelihoods in (16) and (23), respectively.
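For orientation, one cycle of a generic bootstrap particle filter for a single azimuth can be sketched as below. This is a hedged illustration, not the paper's Alg. 1: the von Mises likelihood around one measured DoA is an assumed stand-in for the Watson and Gaussian likelihoods in (16) and (23), which evaluate full spatial features; systematic resampling, the random-walk process noise, and the concentration `kappa` are illustrative choices; and the prediction-before-update order shown is the concatenative one, not the reordered AR variant.

```python
import numpy as np

def wrap(angle):
    """Wrap angles in radians to [-pi, pi)."""
    return (angle + np.pi) % (2.0 * np.pi) - np.pi

def systematic_resample(weights, rng):
    """Systematic resampling: one uniform offset, N evenly spaced positions."""
    n = weights.size
    positions = (rng.random() + np.arange(n)) / n
    cumulative = np.cumsum(weights)
    cumulative[-1] = 1.0  # guard against floating-point round-off
    return np.searchsorted(cumulative, positions)

def bootstrap_pf_step(particles, measured_doa, rng,
                      process_std=np.deg2rad(5.0), kappa=20.0):
    """Generic predict -> weight -> resample cycle for a single azimuth (radians)."""
    # Prediction: wrapped random-walk motion model (illustrative process noise).
    particles = wrap(particles + rng.normal(0.0, process_std, particles.size))
    # Update: von Mises likelihood around one measured DoA (stand-in for (16)/(23)).
    log_w = kappa * np.cos(particles - measured_doa)
    weights = np.exp(log_w - log_w.max())  # subtract max for numerical stability
    weights /= weights.sum()
    # Circular weighted mean as the DoA estimate.
    estimate = np.angle(np.sum(weights * np.exp(1j * particles)))
    # Resampling: draw N particles proportionally to their weights.
    return particles[systematic_resample(weights, rng)], estimate

# Usage: N = 50 particles initialized around an initial DoA of 30 degrees,
# tracking a constant synthetic measurement at 40 degrees.
rng = np.random.default_rng(0)
particles = wrap(np.deg2rad(30.0) + rng.normal(0.0, 0.1, 50))
for _ in range(20):
    particles, estimate = bootstrap_pf_step(particles, np.deg2rad(40.0), rng)
print(f"estimate: {np.rad2deg(estimate):.1f} deg")
```

Since the likelihood is evaluated once per particle and frame, its cost scales linearly with N, which is consistent with the likelihood dominating the complexity figures quoted above.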
Fig. 3. Sample two-speaker trajectories using the Wrapped KF and the Bootstrap PF for tracking in (top to bottom) the concatenative (Concat, Fig. 1a) and our autoregressive (MISO-AR: Fig. 1b, MIMO-AR: Fig. 1c) configurations. Panels show the room layout (room width and length [m]) with the microphone array and the oracle trajectories (start/stop marked), and the DoA [°] over time [s].

B. Training and Optimization Details

To ensure a robust interplay between tracking (TST) and enhancement (SSF) while minimizing NN training overhead, we adopt a multi-stage optimization strategy, which has led to significant performance improvements in prior work [13].

Pretraining: In the pretraining stage, we train the deep SSF SpatialNet in a strongly guided setup (oracle DoA), adopting the joint time- and frequency-domain loss $\mathcal{L}^{(\mathrm{MISO})}$ from [3],

$$\mathcal{L}^{(\mathrm{MISO})}(s, \hat{s}) = \alpha^{(\ell_1)} \|s - \hat{s}\|_1 + \left\| |S| - |\hat{S}| \right\|_1. \tag{39}$$

The $\ell_1$ norms are computed over the temporal waveform and the STFT time-frequency bins, respectively, with $\alpha^{(\ell_1)} = 10$ balancing both domains [3]. For the MIMO extension in (26), $\mathcal{L}^{(\mathrm{MISO})}$ is averaged across all microphone channels, yielding

$$\mathcal{L}^{(\mathrm{MIMO})}(\mathbf{s}, \hat{\mathbf{s}}) = \frac{1}{M} \sum_{m=1}^{M} \mathcal{L}^{(\mathrm{MISO})}(s_m, \hat{s}_m). \tag{40}$$

Pretraining runs for 50 epochs using the Adam optimizer with an initial learning rate of $10^{-3}$, which is reduced by exponential decay with a factor of 0.955.
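The pretraining objectives (39) and (40) can be illustrated with a small NumPy sketch. It is not the training code (which would run inside an autodiff framework); it assumes the $\ell_1$ norms are plain sums and uses an illustrative Hann-window STFT (512-point frames, hop 128) rather than the configuration of Sec. V-A. The helper `learning_rate` additionally assumes that the 0.955 decay is applied once per epoch, which the text does not state explicitly.

```python
import numpy as np

def stft_magnitude(x, n_fft=512, hop=128):
    """|STFT| with a Hann window; n_fft and hop are illustrative, not the Sec. V-A values."""
    window = np.hanning(n_fft)
    n_frames = 1 + (len(x) - n_fft) // hop
    frames = np.stack([x[t * hop : t * hop + n_fft] * window for t in range(n_frames)])
    return np.abs(np.fft.rfft(frames, axis=-1))

def loss_miso(s, s_hat, alpha=10.0):
    """Eq. (39): alpha * ||s - s_hat||_1 + || |S| - |S_hat| ||_1 (l1 norms as plain sums)."""
    time_term = np.sum(np.abs(s - s_hat))
    freq_term = np.sum(np.abs(stft_magnitude(s) - stft_magnitude(s_hat)))
    return alpha * time_term + freq_term

def loss_mimo(s, s_hat, alpha=10.0):
    """Eq. (40): mean of (39) over the M channels; s and s_hat have shape (M, samples)."""
    return float(np.mean([loss_miso(s[m], s_hat[m], alpha) for m in range(s.shape[0])]))

def learning_rate(epoch, lr0=1e-3, gamma=0.955):
    """Exponential decay, assuming one decay step per epoch."""
    return lr0 * gamma**epoch
```

Under the per-epoch assumption, $0.955^{50} \approx 0.100$, so the pretraining learning rate would end close to $10^{-4}$, the value used for fine-tuning.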
Fine-tuning: After pretraining, we fine-tune SpatialNet with the DoA estimates of the weakly guided Bayesian TST algorithms. Fine-tuning continues with a reduced learning rate of $10^{-4}$ for 20 additional epochs. To avoid the inherent non-parallelizability of the AR TSE pipelines, we adapt the pseudo-AR training strategy used in [28], [66] to our setup. Specifically, non-AR (open-loop) tracking results, which are based on noisy measurements at the start of training and later incorporate the enhanced speech, are stored during each epoch and used for SSF guidance in the following epoch, thereby emulating AR (closed-loop) inference. Although the AR tracking algorithms inherently depend on the SSF performance, which evolves during fine-tuning, fixing their parameters after the first epoch and then performing a final parameter sweep proved sufficient (see Fig. 4a). To preserve spatial cues in the estimates of SpatialNet-MIMO, we incorporate the IPD loss $\mathcal{L}^{(\mathrm{IPD})}$ [50],

$$\mathcal{L}^{(\mathrm{MIMO\text{-}AR})}(\mathbf{s}, \hat{\mathbf{s}}) = \alpha^{(\mathrm{IPD})}\, \mathcal{L}^{(\mathrm{IPD})}(\mathbf{s}, \hat{\mathbf{s}}) + \mathcal{L}^{(\mathrm{MIMO})}(\mathbf{s}, \hat{\mathbf{s}}), \tag{41}$$

with $\alpha^{(\mathrm{IPD})}$ balancing both optimization objectives. Fine-tuning SpatialNet-MIMO for different values of $\alpha^{(\mathrm{IPD})}$, as shown in Fig. 4b, demonstrates that stronger spatial cue preservation consistently improves tracking in terms of mean angular error (MAE). However, increasing $\alpha^{(\mathrm{IPD})}$ de-emphasizes the signal reconstruction loss $\mathcal{L}^{(\mathrm{MIMO})}$ in (41), resulting in a trade-off between more precise guidance and SSF performance, with a distinct optimum for closed-loop enhancement (PESQ).

(a) Parameter sweep during fine-tuning: MAE [°] (lower is better) over fine-tuning epochs 1 to 20 and the variance ratio $\sigma_\xi^2/\sigma_\nu^2$ between $10^{-3}$ and $10^{-1}$.
(b) Influence of the IPD loss in (41): PESQ (higher is better) and MAE [°] (lower is better) over $\alpha^{(\mathrm{IPD})}$ between $10^{-4}$ and $10^{-1}$.

Fig. 4. Closed-loop (AR) parameter optimization on the validation set using the Wrapped KF for TST and SpatialNet-MIMO as SSF (MIMO-AR, Fig. 1c).

TABLE I
CONCATENATIVE AND AUTOREGRESSIVE (AR) EXTRACTION METHODS

| ID | Tracking | MIMO | AR | ACC [%] ↑ | MAE [°] ↓ | PESQ ↑ | ESTOI [%] ↑ |
|---|---|---|---|---|---|---|---|
| (0) | − | − | − | − | − | 1.10 ± .06 | 41.8 ± .3 |
| (1) | Oracle | ✗ | − | − | − | 2.14 ± .01 | 81.6 ± .2 |
| (2) | Oracle | ✓ | − | − | − | 2.14 ± .01 | 81.4 ± .2 |
| (3) | Wrapped KF | ✗ | ✗ | 33.2 ± .3 | 32.67 ± .36 | 1.89 ± .01 | 77.6 ± .2 |
| (4) | Wrapped KF | ✗ | ✓ | 47.1 ± .3 | 17.94 ± .27 | 1.94 ± .01 | 78.3 ± .2 |
| (5) | Wrapped KF | ✓ | ✓ | 86.4 ± .3 | 6.65 ± .18 | 1.98 ± .01 | 79.8 ± .2 |
| (6) | Bootstrap PF | ✗ | ✗ | 56.2 ± .4 | 21.65 ± .37 | 1.93 ± .01 | 78.2 ± .2 |
| (7) | Bootstrap PF | ✗ | ✓ | 87.6 ± .3 | 6.47 ± .19 | 2.04 ± .01 | 80.4 ± .2 |
| (8) | Bootstrap PF | ✓ | ✓ | 86.6 ± .4 | 8.07 ± .25 | 1.98 ± .01 | 79.9 ± .2 |

Reported values are sample means with 95% confidence intervals.

VII. EVALUATION

During evaluation, we utilize our synthetic dataset from Sec. V together with real-world recordings, providing a detailed analysis in a controlled acoustic scenario as well as a test of generalization to unseen acoustic conditions.

A. Spatially Guided Extraction of Moving Speakers

Table I summarizes the results of all presented target speaker extraction (TSE) pipelines using SpatialNet as spatially selective filter (SSF). Since the synthetic dataset provides ground-truth speaker trajectories and speech signals, we employ intrusive metrics during evaluation. Specifically, we report the utterance-wise MAE and accuracy (ACC) with a 10° threshold [19], [20] to assess tracking performance, as well as PESQ [67] and ESTOI [68] as measures of perceptual speech quality and intelligibility, respectively.
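Utterance-wise MAE and ACC both rest on an azimuth error that respects the circular wrap-around at ±180°. A minimal sketch, assuming frame-wise azimuth estimates in degrees and that ACC counts the share of frames with an error of at most 10° (the exact inclusivity of the threshold is an assumption); the per-utterance values would then be averaged over the test set.

```python
import numpy as np

def angular_error_deg(est_deg, ref_deg):
    """Absolute azimuth error in degrees, wrapped to [0, 180]."""
    diff = (np.asarray(est_deg, dtype=float) - np.asarray(ref_deg, dtype=float) + 180.0) % 360.0 - 180.0
    return np.abs(diff)

def utterance_mae_acc(est_deg, ref_deg, threshold_deg=10.0):
    """Frame-averaged MAE [deg] and ACC [%] (share of frames within the threshold) for one utterance."""
    err = angular_error_deg(est_deg, ref_deg)
    return float(err.mean()), float(100.0 * np.mean(err <= threshold_deg))

# Usage: an estimate of 350 deg against a reference of 10 deg is a 20 deg error, not 340 deg.
mae, acc = utterance_mae_acc([0.0, 5.0, 30.0], [0.0, 0.0, 0.0])
```

Without the wrapping step, estimates that straddle the ±180° boundary would be penalized with errors of up to 360°, inflating the MAE.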
Under strong guidance (oracle DoA), the MIMO extension of SpatialNet (2) shows only negligible degradation in intelligibility while matching the perceptual quality of the initial MISO implementation (1), resulting in comparable starting conditions for all weakly guided methods after pretraining. After subsequent fine-tuning with the Bayesian trackers from Sec. III-B, the enhancement performance of the concatenative TSE pipeline (Concat, Fig. 1a) drops significantly due to imprecise guidance, with the more accurate Bootstrap PF (6) outperforming the Wrapped KF (3). With an MAE above 30°, the latter performs particularly poorly, reflecting the limited modeling capacity of the KF's linear-Gaussian state-space model on top of its bandwidth constraint due to spatial aliasing. By autoregressively incorporating the processed speech into the filtering formulations (MISO-AR, Fig. 1b), spurious modes of interfering speakers can be suppressed in the underlying statistical models, increasing robustness for both Bayesian trackers (4, 7). This becomes especially evident for closely spaced or crossing speakers, as shown in the example trajectories in Fig. 3 and on our project page². Incorporating the multichannel estimates of SpatialNet (MIMO-AR, Fig. 1c) can further amplify this effect, achieving superior tracking and enhancement for the Wrapped KF (5). Nevertheless, the SSF guided by our proposed MISO-AR formulation of the Bootstrap PF (7) in Alg. 1 achieves the best performance overall, emphasizing the potential of accurate guidance without enforcing spatial cue preservation.

² https://sp-uhh.github.io/autoregressive-spatial-filters/

Fig. 5. Computational cost (MACs) and tracking performance (MAE [°], lower is better; ACC [%], higher is better) of our Bayesian filters (Wrapped KF, Bootstrap PF) in the Concat, MISO-AR, and MIMO-AR configurations relative to DNNs (SELDnet, CNN/LSTM). Cost: Wrapped KF 0.3 MMACs/s (1.8 MMACs/s with the MIMO-SSF extension), Bootstrap PF 2.5 MMACs/s (8.7 MMACs/s with the MIMO-SSF extension), SELDnet 70 MMACs/s, CNN/LSTM 830 MMACs/s.

B. Comparison with Deep Neural Tracking Methods

To contextualize the performance of the Bayesian filters, we compare against data-driven methods for target speaker tracking (TST). As a strong reference, we use the CNN/LSTM architecture from our prior work [13], which we adapted from [17]. Additionally, we include SELDnet [69] as a low-complexity baseline, following [15], [20]. To adapt it to our setup, we incorporate the modifications for causality according to [70], condition the GRU layers of SELDnet on the initial DoA [3], [13], and use only the GCC-PHAT input features [69] due to the array's compact size. Figure 5 presents the tracking performance and computational complexity of all Bayesian and data-driven tracking methods. In their original formulation (Concat), the Bayesian filters Wrapped KF and Bootstrap PF are greatly outperformed by the neural trackers. However, autoregressively incorporating SpatialNet as SSF into our proposed reformulation of the Bootstrap PF (MISO-AR), as well as into both Bayesian filters with the SSF-MIMO extension (MIMO-AR), achieves performance competitive with the data-driven methods. Most notably, with the same SSF, our Bootstrap PF in MISO-AR configuration consistently outperforms SELDnet at less than a tenth of its computational cost.

C. Influence of Speaker Motion Patterns on Enhancement

While prior work demonstrated the necessity of training a deep SSF with moving speakers for robust speech enhancement under dynamic conditions [13], the role of the motion patterns remains unclear. For further analysis, we cross-evaluate SpatialNet as a strongly guided SSF trained on different speaker trajectories. Specifically, we retain the acoustic setup of Sec.
V-A while varying the speaker motion between stationary, circular [13], [14], and our social force model (Sec. V-B). As a benchmark, we use real-world trajectories from Task 4 of the LOCATA Challenge [71] with a stationary array and two moving speakers. Figure 6 presents the results in terms of perceptual quality (PESQ) and intelligibility (ESTOI). Although all datasets share the same span of room dimensions, the distribution of speaker-array distances differs between them, yielding different input SNRs [72], ranging from −5.7 dB (circular) to −7.6 dB (LOCATA). As expected, SpatialNet trained on stationary speakers performs poorly across all motion types. However, owing to its constant speaker-array distances, training on the circular dataset also results in significant enhancement degradation when evaluated on other movement patterns. Only SpatialNet trained on our proposed social force model remains robust across all datasets, while achieving a PESQ gain of about 0.3 on the LOCATA trajectories, underlining the importance of motion diversity.

Fig. 6. Generalization from training to inference under mismatched speaker trajectories using SpatialNet as SSF with strong guidance (ground-truth DoA). Values are PESQ / ESTOI [%] (higher is better); rows denote training trajectories, columns denote test trajectories.

| Training \ Test | stationary | circular | proposed | LOCATA |
|---|---|---|---|---|
| unprocessed | 1.09 / 40.2 | 1.10 / 44.4 | 1.10 / 41.8 | 1.10 / 40.9 |
| stationary | 2.16 / 82.1 | 1.63 / 73.9 | 1.50 / 69.0 | 1.70 / 73.2 |
| circular | 2.09 / 80.2 | 2.27 / 84.4 | 1.68 / 75.3 | 1.67 / 74.1 |
| proposed | 2.05 / 79.4 | 2.08 / 82.1 | 2.14 / 81.6 | 2.02 / 79.3 |

D. Generalization and Robustness in Real-World Recordings

Recording Setup: To assess generalizability and robustness in real-world settings, we include recordings from a variable-acoustics listening room measuring 9.5 m × 5.1 m × 2.4 m with the same centered microphone array as in Sec. V-A.
Each recording features two male non-native English speakers reading Rainbow Passage segments [73] while walking through the room. We adopt the circular trajectories introduced in [14], which yield motion patterns suitable for evaluating tracking performance without ground-truth positional data. Specifically, the speakers start from opposite ends at roughly 1 m and 2 m distance from the array, traverse to the other end of the room, and return over the duration of their prompt. We test three acoustic conditions with reverberation times of 200 ms, 350 ms, and 800 ms. Each configuration includes three 10–20 s recordings, yielding nine two-speaker mixtures in total. Videos of the recording setup are available on our project page².

Target Speaker Tracking: Figure 7 visualizes the tracking results using the Wrapped KF and the Bootstrap PF for our 10 s listening room recordings under varying acoustic conditions. The Watson likelihood from (16), shown as a background reference, clearly exposes the smearing effect of high reverberation on spatial features, which increases tracking difficulty. Consistent with the synthetic dataset, the Bayesian filters in their original formulation (top row) yield inaccurate tracking results, especially with increasing reverberation. In contrast, the AR versions using SSF estimates (MISO-AR, center row, and MIMO-AR, bottom row) robustly resolve both speaker crossings. For a quantitative evaluation, we approximate the speakers' circular motion patterns with piecewise-linear azimuth trajectories. After segmenting the duration of each recording into four parts, we compute the fraction of estimates on the expected array side (shaded areas in Fig. 7), termed regional accuracy (Re-ACC), which is particularly sensitive to speaker confusions after directional crossings. The results in Fig. 8 show that both Bayesian filters in the MIMO-AR configuration and the MISO-AR Bootstrap PF consistently retain robust tracking accuracy, demonstrating generalizability to real-world recordings under unseen acoustic conditions.

Fig. 7. Two-speaker DoA tracking (DoA [°] over time [s], 0–10 s) under increasing reverberation (left to right: T60 = 200 ms, 350 ms, 800 ms) with (top to bottom) the concatenative (Fig. 1a) and our autoregressive (MISO-AR: Fig. 1b, MIMO-AR: Fig. 1c) methods using the Wrapped KF and the Bootstrap PF. Shaded areas indicate the regions used for the Re-ACC computation.

Fig. 8. Tracking (Re-ACC [%], higher is better) and enhancement (NISQA, higher is better; WER [%], lower is better) performance of the weakly guided TSE pipelines (Concat, MISO-AR, MIMO-AR) with the Wrapped KF and the Bootstrap PF. Unprocessed recordings yield a sample mean of 1.82 NISQA and 81.4% WER.

Target Speaker Extraction: To evaluate perceptual quality without ground-truth speech signals, we employ NISQA [74], a data-driven, non-intrusive estimator of the subjective mean opinion score. For intelligibility, we leverage transcriptions of a downstream automatic speech recognition (ASR) system and compute the word error rate (WER) against the Rainbow Passage reference segments. Specifically, we utilize the ASR model QuartzNet15x5Base-En [75], which is very sensitive to signal distortions as it is trained only on clean and telephony speech. Figure 8 presents the enhancement results obtained from the listening room recordings using the Bayesian trackers for guidance.
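The two quantities used for the real recordings can be sketched as follows. The text does not define the "expected array side" precisely, so `regional_accuracy` is hypothetical: it assumes the two sides are the azimuth half-planes (0°, 180°) and (−180°, 0°), one expected side per frame derived from the piecewise-linear reference, and the shaded segments given as a boolean mask. The WER part is the standard word-level edit distance; the lower-casing and whitespace tokenization are simplifying assumptions, not necessarily the normalization applied to the QuartzNet transcripts.

```python
import numpy as np

def regional_accuracy(est_deg, expected_side, mask):
    """Hypothetical Re-ACC [%]: share of masked frames whose azimuth lies on the expected side.

    expected_side: +1 for azimuths in (0, 180), -1 for (-180, 0) (assumed convention).
    mask: boolean array selecting frames inside the evaluated (shaded) segments."""
    est = (np.asarray(est_deg, dtype=float) + 180.0) % 360.0 - 180.0
    on_side = np.sign(est) == np.asarray(expected_side)
    return float(100.0 * np.mean(on_side[np.asarray(mask, dtype=bool)]))

def word_error_rate(reference, hypothesis):
    """WER [%] = (substitutions + deletions + insertions) / number of reference words."""
    ref, hyp = reference.lower().split(), hypothesis.lower().split()
    prev = list(range(len(hyp) + 1))  # edit distances for an empty reference prefix
    for i, ref_word in enumerate(ref, start=1):
        curr = [i] + [0] * len(hyp)
        for j, hyp_word in enumerate(hyp, start=1):
            curr[j] = min(prev[j] + 1,                           # deletion
                          curr[j - 1] + 1,                       # insertion
                          prev[j - 1] + (ref_word != hyp_word))  # substitution or match
        prev = curr
    return 100.0 * prev[-1] / len(ref)

# Usage: one deletion ("the") and one insertion ("raindrops") over four reference words.
wer = word_error_rate("when the sunlight strikes", "when sunlight strikes raindrops")
```

Because insertions are counted, the WER can exceed 100% for heavily distorted inputs, which is one reason the unprocessed recordings reach a WER as high as 81.4%.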
Both perceptual quality (NISQA) and intelligibility (WER) show how the increased tracking accuracy of the AR methods, particularly the MIMO-AR configurations and the MISO-AR Bootstrap PF, translates into superior enhancement, consistent with the trend on the synthetic data in Table I. Listening tests, provided on our project page², indicate that performance differences are most pronounced at the end of the recordings. Without reliable guidance, the non-AR approaches must retain speaker characteristics over time and eventually suffer from signal distortions and speaker leakage. The accurate tracking provided by our AR methods prevents this degradation and yields robust enhancement throughout long-form audio recordings.

VIII. CONCLUSION

Based on our conference paper [14], we investigated how to improve lightweight Bayesian tracking by autoregressively (AR) incorporating the processed speech signal of a deep spatially selective filter (SSF). On top of the multichannel (MIMO) SSF extension from [14], we developed novel Bayesian filtering formulations that integrate the enhanced speech without modifying the SSF. To enable development with realistic motion patterns, we released a synthetic dataset based on the social force motion model, which yields superior generalization to real-world trajectories. A detailed analysis on our synthetic dataset demonstrates significant tracking improvements for our AR Bayesian methods with no or only negligible additional overhead, achieving accuracy competitive with neural methods of much greater complexity. Real-world recordings complement these findings, with the performance gains of our autoregressive methods generalizing to challenging, unseen acoustic conditions.

REFERENCES

[1] E. C. Cherry, "Some experiments on the recognition of speech, with one and two ears," J. Acoust. Soc. Am., vol. 25, 1953.
[2] K. Zmolikova, M. Delcroix, T. Ochiai, K. Kinoshita, J. Černocký, and D. Yu, "Neural target speech extraction: An overview," IEEE Signal Proc. Magazine, vol. 40, 2023.
[3] K. Tesch and T. Gerkmann, "Multi-channel speech separation using spatially selective deep non-linear filters," IEEE/ACM TASLP, vol. 32, 2024.
[4] A. Bohlender, A. Spriet, W. Tirry, and N. Madhu, "Spatially selective speaker separation using a DNN with a location dependent feature extraction," IEEE/ACM TASLP, vol. 32, 2024.
[5] K. Tesch and T. Gerkmann, "Insights into deep non-linear filters for improved multi-channel speech enhancement," IEEE/ACM TASLP, vol. 31, 2023.
[6] K. Jing, W. Zhang, and Y. Gao, "End-to-end DOA-guided speech extraction in noisy multi-talker scenarios," in Interspeech, 2025.
[7] A. Pandey, S. Lee, J. Azcarreta, D. Wong, and B. Xu, "All neural low-latency directional speech extraction," in Interspeech, 2024.
[8] R. Gu and Y. Luo, "ReZero: Region-customizable sound extraction," IEEE/ACM TASLP, 2024.
[9] D. Choi and J.-W. Choi, "Multichannel-to-multichannel target sound extraction using direction and timestamp clues," in IEEE ICASSP, 2025.
[10] D.-J. A. Padilla, N. L. Westhausen, S. Vivekananthan, and B. T. Meyer, "Location-aware target speaker extraction for hearing aids," in Interspeech, 2025.
[11] Z. Chen, T. Yoshioka, L. Lu, T. Zhou, Z. Meng, Y. Luo, J. Wu, X. Xiao, and J. Li, "Continuous speech separation: Dataset and analysis," in IEEE ICASSP, 2020.
[12] J. Barker, S. Watanabe, E. Vincent, and J. Trmal, "The fifth 'CHiME' speech separation and recognition challenge: Dataset, task and baselines," in Interspeech, 2018.
[13] J. Kienegger and T. Gerkmann, "Steering deep non-linear spatially selective filters for weakly guided extraction of moving speakers in dynamic scenarios," in Interspeech, 2025.
[14] J. Kienegger, A. Mannanova, H. Fang, and T. Gerkmann, "Self-steering deep non-linear spatially selective filters for efficient extraction of moving speakers under weak guidance," in IEEE WASPAA, 2025.
[15] J. Kienegger and T. Gerkmann, "Adaptive rotary steering with joint autoregression for robust extraction of closely moving speakers in dynamic scenarios," in IEEE ICASSP, 2026.
[16] D. Diaz-Guerra, A. Miguel, and J. R. Beltran, "Robust sound source tracking using SRP-PHAT and 3D convolutional neural networks," IEEE/ACM TASLP, vol. 29, 2021.
[17] A. Bohlender, A. Spriet, W. Tirry, and N. Madhu, "Exploiting temporal context in CNN based multisource DoA estimation," IEEE/ACM TASLP, vol. 29, 2021.
[18] B. Yang, H. Liu, and X. Li, "SRP-DNN: Learning direct-path phase difference for multiple moving sound source localization," in IEEE ICASSP, 2022.
[19] Y. Wang, B. Yang, and X. Li, "FN-SSL: Full-band and narrow-band fusion for sound source localization," in Interspeech, 2023.
[20] Y. Xiao and R. K. Das, "TF-Mamba: A time-frequency network for sound source localization," in Interspeech, 2025.
[21] K. Tan and D. Wang, "A convolutional recurrent neural network for real-time speech enhancement," in Interspeech, 2018.
[22] A. Défossez, G. Synnaeve, and Y. Adi, "Real time speech enhancement in the waveform domain," in Interspeech, 2020.
[23] S. Braun, H. Gamper, C. K. Reddy, and I. Tashev, "Towards efficient models for real-time deep noise suppression," in IEEE ICASSP, 2021.
[24] Y. Luo and N. Mesgarani, "Conv-TasNet: Surpassing ideal time–frequency magnitude masking for speech separation," IEEE/ACM TASLP, vol. 27, 2019.
[25] R. Chao, W.-H. Cheng, M. L. Quatra, S. M. Siniscalchi, C.-H. H. Yang, S.-W. Fu, and Y. Tsao, "An investigation of incorporating Mamba for speech enhancement," in IEEE Spoken Language Tech. Workshop, 2024.
[26] P. Andreev, N. Babaev, A. Saginbaev, I. Shchekotov, and A. Alanov, "Iterative autoregression: A novel trick to improve your low-latency speech enhancement model," in Interspeech, 2023.
[27] Z. Pan, G. Wichern, F. G. Germain, K. Saijo, and J. Le Roux, "PARIS: Pseudo-autoregressive siamese training for online speech separation," in Interspeech, 2024.
[28] P. Shen, X. Zhang, and Z.-Q. Wang, "ARiSE: Auto-regressive multi-channel speech enhancement," in Interspeech, 2025.
[29] J. Traa and P. Smaragdis, "A wrapped Kalman filter for azimuthal speaker tracking," IEEE Signal Proc. Letters, vol. 20, 2013.
[30] D. Ward, E. Lehmann, and R. Williamson, "Particle filtering algorithms for tracking an acoustic source in a reverberant environment," IEEE Trans. on Speech and Audio Proc., vol. 11, 2003.
[31] D. Helbing and P. Molnar, "Social force model for pedestrian dynamics," Physical Review E, vol. 51, 1995.
[32] J. Benesty, G. Huang, J. Chen, and N. Pan, Microphone Arrays. Springer, 2024.
[33] S. Särkkä, Bayesian Filtering and Smoothing. Cambridge University Press, 2013.
[34] R. E. Kalman, "A new approach to linear filtering and prediction problems," Journal of Basic Engineering, vol. 82, 1960.
[35] X. Rong Li and V. Jilkov, "Survey of maneuvering target tracking. Part I. Dynamic models," IEEE Trans. on Aerospace and Electronic Systems, vol. 39, 2003.
[36] X. Zhong and A. B. Premkumar, "Particle filtering approaches for multiple acoustic source detection and 2-D direction of arrival estimation using a single acoustic vector sensor," IEEE Trans. on Signal Proc., vol. 60, 2012.
[37] F. Dong, L. Xu, and X. Li, "Particle filter algorithm for DoA tracking using co-prime array," IEEE Comm. Letters, vol. 24, 2020.
[38] J. Traa, "Multichannel source separation and tracking with phase differences by random sample consensus," Master's thesis, University of Illinois at Urbana-Champaign, 2013.
[39] O. Thiergart, W. Huang, and E. A. Habets, "A low complexity weighted least squares narrowband DOA estimator for arbitrary array geometries," in IEEE ICASSP, 2016.
[40] K. V. Mardia and P. E. Jupp, Directional Statistics. John Wiley & Sons, 2000.
[41] N. Gordon, D. Salmond, and A. Smith, "Novel approach to nonlinear/non-Gaussian Bayesian state estimation," IEE Proc. F, vol. 140, 1993.
[42] M. Arulampalam, S. Maskell, N. Gordon, and T. Clapp, "A tutorial on particle filters for online nonlinear/non-Gaussian Bayesian tracking," IEEE Trans. on Signal Proc., vol. 50, 2002.
[43] L. Drude, F. Jacob, and R. Haeb-Umbach, "DOA-estimation based on a complex Watson kernel method," in EUSIPCO, 2015.
[44] Z. Wang, J. Li, and Y. Yan, "Target speaker localization based on the complex Watson mixture model and time-frequency selection neural network," Applied Sciences, vol. 8, 2018.
[45] R. C. Hendriks, R. Heusdens, U. Kjems, and J. Jensen, "On optimal multichannel mean-squared error estimators for speech enhancement," IEEE Signal Proc. Letters, vol. 16, 2009.
[46] P. J. Schreier and L. L. Scharf, Statistical Signal Processing of Complex-Valued Data: The Theory of Improper and Noncircular Signals. Cambridge University Press, 2010.
[47] E. A. Lehmann and A. M. Johansson, "Particle filter with integrated voice activity detection for acoustic source tracking," EURASIP J. on Adv. in Signal Proc., vol. 2007, 2006.
[48] G. Li, W. Xue, W. Liu, J. Yi, and J. Tao, "GCC-Speaker: Target speaker localization with optimal speaker-dependent weighting in multi-speaker scenarios," in IEEE ICASSP, 2023.
[49] Y. Chen, X. Qian, Z. Pan, K. Chen, and H. Li, "LocSelect: Target speaker localization with an auditory selective hearing mechanism," in IEEE ICASSP, 2024.
[50] S. S. Battula, H. Taherian, A. Pandey, D. Wong, B. Xu, and D. Wang, "Robust frame-level speaker localization in reverberant and noisy environments by exploiting phase difference losses," in IEEE ICASSP, 2025.
[51] V. Panayotov, G. Chen, D. Povey, and S. Khudanpur, "Librispeech: An ASR corpus based on public domain audio books," in IEEE ICASSP, 2015.
[52] J. Cosentino, M. Pariente, S. Cornell, A. Deleforge, and E. Vincent, "LibriMix: An open-source dataset for generalizable speech separation," 2020. [Online]. Available: https://arxiv.org/abs/2005.11262
[53] D. Diaz-Guerra, A. Miguel, and J. R. Beltrán, "gpuRIR: A Python library for room impulse response simulation with GPU acceleration," Multimedia Tools and Applications, vol. 80, 2018.
[54] J. Allen and D. Berkley, "Image method for efficiently simulating small-room acoustics," J. Acoust. Soc. Am., vol. 65, 1979.
[55] E. Habets and S. Gannot, "Generating sensor signals in isotropic noise fields," J. Acoust. Soc. Am., vol. 122, 2007.
[56] T. Ochiai, M. Delcroix, T. Nakatani, and S. Araki, "Mask-based neural beamforming for moving speakers with self-attention-based tracking," IEEE/ACM TASLP, vol. 31, 2023.
[57] M. Tammen, T. Ochiai, M. Delcroix, T. Nakatani, S. Araki, and S. Doclo, "Array geometry-robust attention-based neural beamformer for moving speakers," in Interspeech, 2024.
[58] J. Rusrus, S. Shirmohammadi, and M. Bouchard, "Characterization of moving sound sources direction-of-arrival estimation using different deep learning architectures," IEEE TIM, vol. 72, 2023.
[59] H. Goldstein, J. Safko, and C. Poole, Classical Mechanics. Addison-Wesley, 2002.
[60] E. M. Murtagh, J. L. Mair, E. Aguiar, C. Tudor-Locke, and M. H. Murphy, "Outdoor walking speeds of apparently healthy adults: A systematic review and meta-analysis," Sports Medicine, vol. 51, 2021.
[61] A. Johansson, D. Helbing, and P. K. Shukla, "Specification of the social force pedestrian model by evolutionary adjustment to video tracking data," Advances in Complex Systems, vol. 10, 2007.
[62] C. Quan and X. Li, "SpatialNet: Extensively learning spatial information for multichannel joint speech separation, denoising and dereverberation," IEEE/ACM TASLP, vol. 32, 2024.
[63] A. Gu and T. Dao, "Mamba: Linear-time sequence modeling with selective state spaces," in First Conference on Language Modeling, 2024.
[64] C. Quan and X. Li, "Multichannel long-term streaming neural speech enhancement for static and moving speakers," IEEE Signal Proc. Letters, vol. 31, 2024.
[65] D. Wu, X. Wu, and T. Qu, "Leveraging sound source trajectories for universal sound separation," IEEE/ACM TASLP, 2025.
[66] Z.-Q. Wang and D. Wang, "Recurrent deep stacking networks for supervised speech separation," in IEEE ICASSP, 2017.
[67] A. Rix, J. Beerends, M. Hollier, and A. Hekstra, "Perceptual evaluation of speech quality (PESQ)-a new method for speech quality assessment of telephone networks and codecs," in IEEE ICASSP, 2001.
[68] J. Jensen and C. H. Taal, "An algorithm for predicting the intelligibility of speech masked by modulated noise maskers," IEEE/ACM TASLP, vol. 24, 2016.
[69] S. Adavanne, A. Politis, J. Nikunen, and T. Virtanen, "Sound event localization and detection of overlapping sources using convolutional recurrent neural networks," IEEE J. Sel. Topics Signal Proc., vol. 13, 2019.
[70] M. Yasuda, S. Saito, A. Nakayama, and N. Harada, "6DoF SELD: Sound event localization and detection using microphones and motion tracking sensors on self-motioning human," in IEEE ICASSP, 2024.
[71] C. Evers, H. W. Löllmann, H. Mellmann, A. Schmidt, H. Barfuss, P. A. Naylor, and W. Kellermann, "The LOCATA challenge: Acoustic source localization and tracking," IEEE/ACM TASLP, vol. 28, 2020.
[72] J. L. Roux, S. Wisdom, H. Erdogan, and J. R. Hershey, "SDR – Half-baked or well done?" in IEEE ICASSP, 2019.
[73] G. Fairbanks, Voice and Articulation Drillbook. Harper, 1960.
[74] G. Mittag, B. Naderi, A. Chehadi, and S. Möller, "NISQA: A deep CNN-self-attention model for multidimensional speech quality prediction with crowdsourced datasets," in Interspeech, 2021.
[75] O. Kuchaiev, J. Li, H. Nguyen, O. Hrinchuk, R. Leary, B. Ginsburg, S. Kriman, S. Beliaev, V. Lavrukhin, J. Cook, P. Castonguay, M. Popova, J. Huang, and J. M. Cohen, "NeMo: A toolkit for building AI applications using neural modules," 2019.

Jakob Kienegger (Student Member, IEEE) received the B.Sc. degree in Electrical Engineering from the OWL University of Applied Sciences, Lemgo, Germany, in 2021, and the M.Sc. degree from the University of Paderborn, Paderborn, Germany, in 2024. He is currently with the Signal Processing Research Group, University of Hamburg, Hamburg, Germany, under the supervision of Prof. Timo Gerkmann. His research interests include statistical signal processing and machine learning applied to sound source localization and multichannel speech enhancement.

Timo Gerkmann (Senior Member, IEEE) is a professor with the University of Hamburg, Hamburg, Germany, where he is the head of the Signal Processing Research Group. He has previously held positions with Technicolor Research & Innovation, University of Oldenburg, Oldenburg, Germany, KTH Royal Institute of Technology, Stockholm, Sweden, Ruhr-Universität Bochum, Bochum, Germany, and Siemens Corporate Research, Princeton, NJ, USA. His research interests include statistical signal processing and machine learning for speech and audio applied to communication devices, hearing instruments, audio-visual media, and human-machine interfaces. He received the VDE ITG award in 2022.
