Adaptive Gaussian Process Search for Simulation-Based Sample Size Estimation in Clinical Prediction Models: Validation of the pmsims R Package

Background: Determining an adequate sample size is essential for developing reliable and generalisable clinical prediction models, yet practical guidance on selecting appropriate methods remains limited. Existing analytical and simulation-based appro…

Authors: Oyebayo Ridwan Olaniran, Diana Shamsutdinova, Sarah Markham

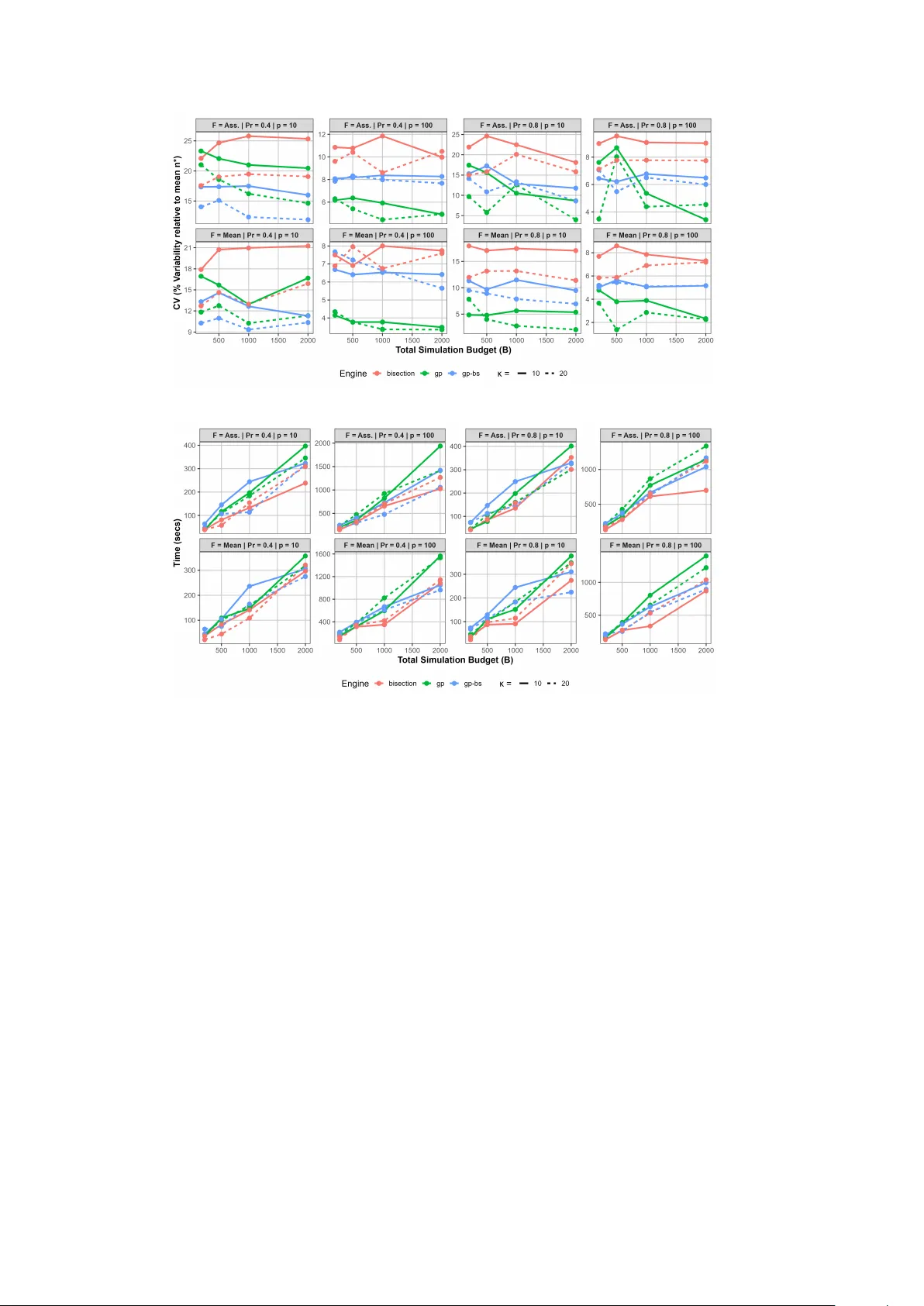

Adaptiv e Gaussian Pro cess Searc h for Sim ulation-Based Sample Size Estimation in Clinical Prediction Mo dels: V alidation of the pmsims R P ac k age Oy ebay o Ridw an Olaniran 1,2* , Diana Shamsutdinov a 1,2 , Sarah Markham 1 , F elix Zimmer 1 , Daniel Stahl 1,2 , Gordon F orb es 1,2 † , Ew an Carr 1 † 1* Departmen t of Biostatistics and Health Informatics, King’s College London, London, United Kingdom. 2 NIHR Biomedical Researc h Cen tre, Maudsley NHS T rust, London, United Kingdom. *Corresp onding author(s). E-mail(s): ridwan.olaniran@k cl.ac.uk ; † These authors con tributed equally to this work. Abstract Bac kground Determining an adequate sample size is essen tial for developing reliable and generalisable clinical prediction mo dels, y et practical guidance on selecting appropriate sample size calculation metho ds remains limited. Existing analyt- ical and simulation-based to ols imp ose restrictive assumptions and fo cus on mean-based criteria. W e present and v alidate pmsims , an R pack age that uses Gaussian pro cess (GP) surrogate mo delling to pro vide a flexible and efficient sim ulation-based framew ork for sample size determination across a broad range of prediction mo delling con texts. Metho ds W e conducted a comprehensiv e simulation study with t wo aims. Aim 1 compared three searc h engines implemented in pmsims , a GP surrogate-based adaptive pro cedure ( gp ), a deterministic bisection method ( bisection ), and a hybrid GP- bisection approac h ( gp-bs ), across binary , contin uous, and surviv al outcomes. Scenarios v aried outcome prev alence or even t rate, predictor dimensionalit y ( p = { 10 , 100 } ), target p erformance metric (discrimination and calibration 1 slop e), aggregation criterion (mean vs 80% assurance), and total sim ulation budget ( B = 200 − 2000 ). Each scenario was replicated 100 times; estimator stabilit y w as assessed via the co efficient of v ariation (CV). Aim 2 b enc h- mark ed the b est-performing pmsims engine against pmsampsize (analytical) and samplesizedev (simulation-based) across a wider range of realistic scenarios, ev aluating recommended sample sizes, computational time, and achiev ed mo del p erformance on indep enden t v alidation datasets of 30,000 observ ations. Results The GP-based searc h engine consistently yielded the most stable sample size estimates (low est CV) across all outcome types, ranking highest in 9/12 outcome-aggregation metric configurations. Its adv antage was most pronounced in low-signal, high-dimensional settings, and was accentuated with κ = 20 replications p er ev aluation and a budget of B ≈ 1000 , after which gains were minimal. In b enc hmark comparisons, pmsims (mean) achiev ed p erformance deviations within ± 1% of the presp ecified target across binary , contin uous, and surviv al outcomes, comparable to samplesizedev and substan tially outp erform- ing pmsampsize in high-discrimination settings (deviations up to − 9 . 84% ). Conclusions The pmsims pack age, using the GP-based searc h engine with κ ≥ 20 replica- tions per ev aluation and a budget of B ≈ 1000 , provides a computationally efficien t and flexible framew ork for principled sample size planning in clinical pre- diction mo delling. It reliably ac hieves p erformance targets across a wide range of scenarios while requiring fewer model ev aluations than non-adaptive simula- tion approaches, offering a comp elling alternative to b oth analytical formulae and exhaustive simulation-based searc h. Keyw ords: Sample size planning, prediction modelling, Gaussian pro cess, adaptive simulation, discrimination, calibration, assurance, R pac k age 1 In tro duction Determining an adequate sample size is an essential prerequisite for dev eloping stable, reliable, and generalisable clinical prediction mo dels. Developmen t samples that are to o small can pro duce ov erfitted mo dels with p o or calibration and reduced discrimi- nation when applied to new data, undermining clinical utility and p oten tially causing harm in decision-making con texts [ 1 ]. In prediction mo delling, sample size estimation seeks the minimum developmen t sample size needed for mo del p erformance to meet or exceed a presp ecified threshold on a c hosen metric [ 2 ]. T argets can b e defined using either a ‘mean’ criterion, where exp ected performance exceeds the threshold, or an ‘assurance’ criterion, where p erfor- mance exceeds the threshold with high probability (e.g., 80%). The assurance approac h explicitly accoun ts for training-sample v ariability and is therefore more robust to uncertain ty in mo del dev elopment [ 3 , 4 ]. 2 Analytic to ols ha ve b een developed to estimate minimum sample sizes for tradi- tional regression-based prediction mo dels. F or example, the pmsampsize R library and Stata mo dules target an acceptable degree of o verfitting (via shrink age) or ensure suffi- cien tly precise estimation of the av erage outcome in the population [ 5 ]. Ho w ever, these approac hes are limited to linear or generalised linear mo dels estimated using maxi- m um likelihoo d and are not generalisable to a wider class of machine learning mo dels or alternative estimation metho ds such as p enalised maximum lik eliho o d. Moreov er, they rely on assumptions that are often unmet in practice. In particular, they typi- cally presume a correctly sp ecified linear predictor, normally distributed cov ariates, and prop erties of maximum likelihoo d estimation—assumptions that can b e violated in real-world clinical data c haracterised by non-linearities, interactions, and complex correlation structures [ 6 ]. A further limitation of existing analytical metho ds is that they fo cus on the ‘mean’ criterion, which do es not capture v ariability in p erformance arising from finite training samples. Consequently , a sample size that meets a mean target may still imply a substantial probability of underp erformance in the target p opulation—risk not captured by closed-form formulae. Simulation studies hav e also sho wn analytic approac hes may underestimate the required sample size when mo del strength is high (C-statistic ≥ 0.8) [ 7 ]. T o address the limitations of analytic approac hes, sim ulation-based sample size to ols ha ve been developed. samplesizedev is a simulation-based R pac k age that estimates sample sizes for binary and surviv al outcomes, quantifying v ariability in mo del p erformance metrics and supp orting probability-based assessmen t of ac hiev- ing target p erformance across training samples (the ‘assurance’ criterion) [ 4 ]. More recen tly , Ba yesian approaches ha ve also b een prop osed to support probability-based (assurance-t yp e) sample size criteria for prediction mo delling [ 3 , 4 , 8 ]. samplesizedev only supp orts logistic and Cox regression mo dels, simulates predictors indep endently from standard normal distributions, and is not readily extendable to other mo dels or data generators. Other approaches to sample size determination include le arning curve metho ds , whic h extrap olate model p erformance from pilot data; and hybrid appr o aches , in which estimated learning curv es are summarised as approximate analytical formu- lae parametrised by the characteristics of the datasets used to derive them. These approac hes are reviewed in [ 2 ]. Despite these adv ances, there is a lack of practical, accessible to ols for assurance- t yp e sample size determination accommo dating diverse mo del sp ecifications and realistic data-generating mechanisms. This gap presents researc hers with an unhelp- ful choice: rely on simplified approaches that ma y not reflect the complexities of the in tended application or omit sample size justifications altogether. Consistent with this, systematic reviews show that most prediction mo delling studies do not rep ort an explicit sample size justification [ 9 ]. In this pap er, w e presen t pmsims , an R pack age that provides a flexible sim ulation- based framework for estimating minimum sample sizes for developing clinical pre- diction mo dels. The pack age addresses tw o core limitations of existing analytical approac hes: limited flexibility in mo del and data-generating sp ecifications, and the computational burden of na ¨ ıv e sim ulation-based searches ov er large sample size spaces [ 2 ]. 3 The pmsims pack age incorp orates tw o key innov ations. First, it uses Gaussian pro- cess (GP) surrogate mo delling of the sample size–p erformance relationship [ 10 , 11 ]. Rather than exhaustively ev aluating every candidate sample size via computation- ally exp ensiv e mo del fitting, the GP-based engine uses a Gaussian process surrogate mo del to approximate p erformance as a function of sample size. It iteratively selects the next sample sizes to ev aluate b y choosing p oin ts where the curren t Gaussian pro- cess shows the highest p osterior uncertain ty (or where an acquisition function that balances predicted performance and uncertaint y is maximised), runs a limited num- b er of Mon te Carlo simulations at those p oin ts, up dates the GP p osterior with the new results, and repeats until the surrogate provides a smooth, reliable appro ximation of the entire p erformance curve [ 2 ]. This approach, grounded in Ba yesian optimisa- tion principles, dramatically reduces the n umber of required mo del fits compared to traditional grid search or bisection algorithms [ 10 ]. Second, pmsims pro vides a flex- ible framework for sample size calculations across any mo del type, data-generating mec hanism, and p erformance metric of in terest. This generalit y mo ves b ey ond the nar- ro wer f o cus of existing to ols, enabling researchers to tailor calculations to their specific prediction mo delling con text rather than fitting their problem to av ailable softw are. This study presents the comprehensiv e v alidation of the pmsims pac k age (ver- sion 0.5.0) through tw o complementary ob jectives. First, w e ev aluate the p erformance and computational efficiency of the GP-based engine against traditional simulation- based search algorithms. Second, w e assess empirical agreement b et ween sample size estimates from pmsims and those from established analytical and sim ulation-based to ols, specifically pmsampsize (implementing the Riley et al. metho dology for logistic, linear and surviv al outcomes) [ 12 ] and samplesizedev (a simulation-based approach motiv ated b y limitations of analytical form ulae in certain scenarios) [ 7 ], across a broad sp ectrum of realistic prediction mo delling scenarios. 2 The pmsims w orkflo w The pmsims pack age implements a flexible sim ulation framew ork for sample size estimation, described b y three components: (i) a data-generating process, (ii) a mo del- fitting pro cedure, and (iii) a p erformance metric. These components are com bined with a search engine to estimate the minimum sample size n that satisfies a presp ecified p erformance criterion, either on a v erage (the ‘mean’ criterion) or with a sp ecified lev el of certain ty (the ‘assurance’ criterion). F urther details of the conceptual framework are provided in [ 2 ]. Although pmsims supp orts arbitrary com binations of data-generating pro cesses, mo del-fitting pro cedures, and p erformance criteria, we restricted the scop e of this v alidation study to enable direct comparison with existing sample size tools. Specifi- cally , w e focused on binary , con tinuous, and surviv al outcomes; simple data-generating pro cesses; and logistic regression, linear regression, and prop ortional hazards mo d- els. These are defined formally below and are provided in the pack age as pre-defined functions. 4 2.1 Data-generating pro cess Let X = ( X 1 , X 2 , . . . , X p ) b e a p -dimensional vector of predictors, where p = p signal + p noise . The data-generating process is defined by the join t distribution P ( X , Y ), where the outcome Y is generated conditionally on X . F or eac h outcome type, the linear predictor η = X T β + β 0 is constructed, where β contains co efficien ts for the signal predictors (all set to a common v alue β signal ) and zeros for noise predictors. This structure reflects standard assumptions in sim ulation studies for ev aluating prediction models [ 13 ]. The current v ersion of the pmsims pac k age (0.5.0) pro vides three data-generating pro cesses: Binary outcomes Y ∼ Bernoulli( π ), where π = logit − 1 ( η ). The baseline probability π 0 (when X = 0 ) is controlled by the intercept β 0 , which is tuned along with β signal to achiev e a sp ecified outcome prev alence and dis- crimination, key drivers of required sample size in binary prediction mo dels [ 5 ]. Con tinuous outcomes Y ∼ N ( η , σ 2 ), with σ 2 = 1. The signal strength β signal is tuned to ac hieve a target large-sample R 2 , a common measure of explained v ariance in contin uous prediction settings [ 14 ]. Surviv al outcomes Ev ent times T ∼ Exp onen tial( λ ), where λ = λ 0 exp( η ). Righ t- censoring is introduced at a time p oin t t c suc h that the censoring rate matches a sp ecified v alue. The linear predictor co efficien ts are tuned to achiev e a target large-sample C-index, the most widely used discrimination measure for time-to-ev ent prediction mo dels [ 15 , 16 ]. The predictors X j are sim ulated as standardised contin uous normal v ariables, reflecting common design choices in methodological simulation studies [ 1 ]. These data- generating processe s are implemented in ternally within the pack age, with separate routines handling binary , contin uous, and surviv al outcomes, resp ectiv ely . 2.1.1 Data generator tuning for contin uous outcomes The parameters go verning each data-generating process are tuned to ac hiev e tw o user- sp ecified targets: (i) the desired outcome prev alence in the p opulation, and (ii) the maxim um achiev able p erformance (e.g., C-statistic for binary/surviv al, R 2 for con- tin uous) of a correctly sp ecified mo del, as advocated in principled sim ulation-based ev aluations [ 7 , 17 ]. It should b e noted that these tuning strategies are implemented sp ecifically for the default prediction models (logistic, linear, and Cox regression); researc hers wishing to emplo y alternative mo del classes would need to dev elop cor- resp onding tuning functions tailored to those mo dels, which remains an av en ue for future developmen t. The contin uous outcome tuning function in the pac k age analytically determines the common co efficient β signal for all signal predictors. Given a target large-sample 5 R 2 , num b er of candidate features p , and num b er of noise features p noise , it calculates β signal = s R 2 ( p signal · (1 − R 2 )) , where p signal = p − p noise is the n um b er of true signal predictors. This deriv ation follows directly from the relationship b et ween regression co efficien ts, predictor v ariance, and explained v ariance in linear mo dels [ 18 ]. This ensures that a linear regression mo del fitted to a v ery large sample will achiev e the exp ected R 2 . 2.1.2 Data generator tuning for binary outcomes The tuning of the signal co efficien t to match the required p erformance targets follows an approach similar to that of [ 19 ]. A numerical optimisation pro cedure is employ ed to jointly calibrate the distribution of the linear predictor η = X T β + β 0 , finding the mean µ and v ariance σ 2 of η that simultaneously satisfy the target outcome prev alence π 0 and target large-sample C-statistic. The optimisation minimises the squared error b et w een estimated and target v alues: min µ,σ 2 h ( ˆ C − C target ) 2 + ( ˆ π − π target ) 2 i , where ˆ π = E [logit − 1 ( η )] and ˆ C is computed via numerical integration of the biv ariate normal distribution of the linear predictor for cases and controls, consistent with the binormal mo del for the R OC curve [ 19 ]. Once σ 2 is determined, the common signal co efficien t is set as β signal = σ √ p signal , ensuring that the v ariance of the linear predictor matches the optimised σ 2 . 2.1.3 Data generator tuning for surviv al outcomes The surviv al outcome tuning function p erforms optimisation to find parameters for a prop ortional hazards mo del. It searches for the log of the baseline hazard log( λ ) and the log of the standard deviation of the linear predictor log( σ ) that minimises the squared error b et w een the actual ev ent rate (1 − censoring rate) and C-index and their resp ectiv e targets. The optimisation uses simulated surviv al data (with exp onen tial ev ent times) to ev aluate the ob jective function, building on established links b etw een the linear predictor v ariance and concordance in prop ortional hazards mo dels [ 19 ]. The resulting σ is then used to set β signal analogously to the binary case. 2.2 Mo del-fitting pro cedure Giv en a training dataset D train = { ( X i , Y i ) } n i =1 , a prediction mo del f ( X ; ˆ θ ) is fitted. The pack age supports logistic regression for binary outcomes, linear regression for contin uous outcomes, and Cox prop ortional hazards 6 mo dels for surviv al outcomes as default mo del classes. The mo del-fitting function M returns a fitted mo del ob ject ˆ f , which is then used to generate predictions on an indep enden t v alidation set. 2.3 Mo del ev aluation and p erformance metrics Mo del p erformance is ev aluated on a large, indep enden t v alidation set D v al of size n v al to approximate the exp ected p erformance in the p opulation. Let ˆ π i denote the predicted probability (or risk score) for the i -th observ ation. The pack age supp orts m ultiple metrics: Discrimination F or binary outcomes, the area under the ROC curve (AUC) is com- puted. F or surviv al outcomes, Harrell’s C-index is used. F or contin uous outcomes, the out-of-sample R 2 is calculated as [ 14 ] R 2 = 1 − MSE V ar( Y ) . Calibr ation The calibration slop e γ is estimated b y regressing the observed outcome on the linear predictor (or log-o dds) of the predictions. A slop e of 1 indicates p erfect calibration. 2.4 Sample size searc h engines In this study , we compare three searc h engines to find the minimum sample size n ∗ suc h that the exp ected p erformance E [ G ( n )] (or a sp ecified quantile thereof ) exceeds a target τ . Let S ( n ) denote the sim ulation process: generate training data of size n , fit the mo del, and compute the p erformance metric G on the v alidation set. 2.4.1 Gaussian pro cess search The primary searc h engine in pmsims is a Gaussian pro cess (GP)-based adaptiv e pro- cedure implemented via the mlpwr pac k age [ 10 ]. This approach treats the relationship b et w een sample size and mo del p erformance as an unknown smo oth function, g ( n ) = F { G ( n ) } , and uses GP surrogate mo delling to em ulate the function using a limited num b er of sim ulation ev aluations. 7 Algorithm 1 Gaussian Pro cess–Based Sample Size Search ( gp Engine) Require: Data generating function D ( · ), mo del fitting function M ( · ), p erformance metric G ( · ) Require: T arget p erformance τ , aggregation criterion (mean or assurance) Require: Ev aluation budget B , replications p er sample size κ 1: Compute heuristic starting b ounds [ n (0) min , n (0) max ] based on outcome t yp e and n umber of predictors p 2: Refine b ounds via adaptiv e searc h using B 0 pilot replications, yielding [ n min , n max ] 3: Generate a fixed indep enden t test dataset D test ← D ( n test ) 4: Initialise GP with n k sample sizes drawn within [ n min , n max ] 5: for each initial sample size n k do 6: Sim ulate training data D train ← D ( n k ) 7: Fit mo del ˆ f ← M ( D train ) 8: Ev aluate p erformance g ← G ( D test , ˆ f ) 9: end for 10: Fit GP surrogate to observ ed ( n, g ) pairs 11: while ev aluation budget B not exhausted do 12: Select next candidate n via GP acquisition rule, targeting smallest n ac hieving τ 13: Estimate aggregated p erformance via κ rep eated sim ulations: 14: if criterion is mean then 15: ˆ g ( n ) ← 1 κ P κ j =1 G ( D test , M ( D j ( n ))) 16: else if criterion is assurance then 17: ˆ g ( n ) ← Q 0 . 20 G ( D test , M ( D j ( n ))) 18: end if 19: Up date GP surrogate with new ( n, ˆ g ( n )) observ ation 20: end while 21: return Estimated minimum sample size n ∗ suc h that ˆ g ( n ∗ ) ≥ τ Algorithm ( 1 ) b egins b y determining a data-driven lo wer bound on the sample size using outcome-sp ecific heuristics informed by the num b er of predictors and, where relev an t, the outcome prev alence or censoring rate. A small set of initial sample sizes { n 1 , . . . , n k } is then ev aluated to initialise the GP surrogate. A t each iteration, the GP guides the selection of a new candidate sample size, targeting the smallest n at whic h the desired p erformance threshold τ is achiev ed. Mo del p erformance is aggregated either by its mean (mean-based criterion) or by a low er quan tile of its sampling distribution (assurance criterion). F or the assurance criterion, the target is the 20th p ercen tile of the p erformance distribution across train- ing sets, estimated empirically across simulation replications at eac h candidate n . In the GP-based engines, simulation noise is explicitly mo delled using a b o otstrap-based v ariance estimator, and the search contin ues until the sim ulation budget is exhausted. 8 2.4.2 Bisection searc h ( bisection ) The bisection search uses a classical deterministic bisection algorithm. This engine main tains an interv al [ n low , n high ] that brack ets the unkno wn minimum sample size n ∗ . At each iteration, the algorithm ev aluates mo del p erformance at the midp oin t n mid = n low + n high 2 . Multiple simulation replicates are generated at n mid , and p erformance is aggregated using either the mean-based or assurance criterion. If the aggregated p erformance estimate ˆ g ( n mid ) ≥ τ , the upper b ound is up dated to n mid ; otherwise, the low er bound is up dated. The in terv al is rep eatedly halved until its width falls b elo w a predefined tolerance or the sim ulation budget is exhausted. Algorithm 2 Bisection-Based Sample Size Search ( bisection Engine) Require: Data generating function D ( · ), mo del fitting function M ( · ), p erformance metric G ( · ) Require: T arget p erformance τ , aggregation criterion (mean or assurance) Require: Ev aluation budget B , replications p er sample size κ 1: Compute heuristic starting b ounds [ n (0) min , n (0) max ] based on outcome t yp e and n umber of predictors p 2: Refine b ounds via adaptiv e searc h using B 0 pilot replications, yielding [ n min , n max ] 3: Generate a fixed indep enden t test dataset D test ← D ( n test ) 4: Set maximum iterations T ← ⌊ B /κ ⌋ 5: Ev aluate aggregated p erformance at b ounds: ˆ g ( n min ) and ˆ g ( n max ) 6: while iteration t < T do 7: Set n mid ← ⌊ ( n min + n max ) / 2 ⌋ 8: Sim ulate κ training datasets D train ← D j ( n mid ) , j = 1 , . . . , κ 9: Fit mo del ˆ f j ← M ( D train ,j ) and ev aluate G ( D test , ˆ f j ) for each replicate 10: Aggregate p erformance: 11: if criterion is mean then 12: ˆ g ( n mid ) ← 1 κ P κ j =1 G ( D test , ˆ f j ) 13: else if criterion is assurance then 14: ˆ g ( n mid ) ← Q 0 . 20 G ( D test , ˆ f j ) 15: end if 16: if ˆ g ( n mid ) ≥ τ then 17: n max ← n mid , ˆ g ( n max ) ← ˆ g ( n mid ) 18: else 19: n min ← n mid , ˆ g ( n min ) ← ˆ g ( n mid ) 20: end if 21: t ← t + 1 22: end while 23: return Estimated minimum sample size n ∗ = n max 9 2.4.3 Hybrid GP–bisection search ( gp-bs ) The hybrid engine ( gp bs ) com bines bisection and Gaussian pro cess surrogate mo d- elling in a three-stage strategy . First, adaptive b ound estimation is p erformed as in the other engines. Second, a coarse bisection search is run with a fixed budget of T = ⌊ 0 . 2 × B ⌋ replications to rapidly narro w the search in terv al to [ n ∗ min , n ∗ max ], reduc- ing the risk of p oor GP initialisation that can o ccur when the search space is wide or the p erformance function is highly v ariable. The refined interv al is then passed to the GP search stage, whic h conducts an adaptiv e, mo del-guided searc h within these tigh ter b ounds. This engine may b e particularly useful in scenarios with high p erformance v ariance or complex data-generating mec hanisms. Algorithm 3 Hybrid Bisection–Gaussian Pro cess Search ( gp-bs Engine) Require: Data generating function D ( · ), mo del fitting function M ( · ), p erformance metric G ( · ) Require: T arget p erformance τ , aggregation criterion (mean or assurance) Require: Ev aluation budget B , replications p er sample size κ % Stage 1: A daptive b ound estimation 1: Compute heuristic starting b ounds [ n (0) min , n (0) max ] based on outcome t yp e and n umber of predictors p 2: Refine b ounds via adaptiv e searc h using B 0 pilot replications, yielding [ n min , n max ] 3: Generate a fixed indep enden t test dataset D test ← D ( n test ) % Stage 2: Co arse bise ction se ar ch 4: Run bisection algorithm ( 2 ) within [ n min , n max ] using a fixed budget of T = ⌊ 0 . 2 × B ⌋ total replications, yielding bisection history H = { ( n t , ˆ g ( n t )) } T t =1 % Stage 3: R efine d GP se ar ch 5: Deriv e refined GP b ounds [ n ∗ min , n ∗ max ] from H 6: Initialise GP with k start sets drawn within [ n ∗ min , n ∗ max ] 7: while ev aluation budget B not exhausted do 8: Select next candidate n via GP acquisition rule, targeting smallest n ac hieving τ 9: Sim ulate κ training datasets D train ← D j ( n ) , j = 1 , . . . , κ 10: Fit mo del ˆ f j ← M ( D train ,j ) and ev aluate G ( D test , ˆ f j ) for each replicate 11: Aggregate p erformance: 12: if criterion is mean then 13: ˆ g ( n ) ← 1 κ P κ j =1 G ( D test , ˆ f j ) 14: else if criterion is assurance then 15: ˆ g ( n ) ← Q 0 . 20 G ( D test , ˆ f j ) 16: end if 17: Up date GP surrogate with new ( n, ˆ g ( n )) observ ation 18: end while 19: return Estimated minimum sample size n ∗ suc h that ˆ g ( n ∗ ) ≥ τ 10 2.5 Adaptiv e starting v alue searc h for pmsims engines All three engines share a common first stage: an adaptive pro cedure that automatically determines the sample size b ounds [ n min , n max ] supplied to the main searc h algorithm (as described in Sections 2.4.1 – 2.4.3 ). The goal is to find tw o sample sizes n min and n max suc h that ˆ G ( n min ) ≤ τ ≤ ˆ G ( n max ) , where τ is the user-specified target p erformance. This stage uses a fixed pilot budget of B 0 replications (distinct from the main ev aluation budget B ), allocated as κ = ⌊ B 0 /K ⌋ replications p er candidate sample size, where K is the maximum n umber of iterations. A tolerance δ = 0 . 0001 is applied to a void unnecessary iterations when the p erformance estimate is already sufficien tly close to τ . 2.5.1 Initialisation 1. Generate a fixed test set D test ← D ( n test ). 2. Set k ← 1, n (1) ← n 0 , where n 0 is a heuristic starting v alue based on outcome type and num b er of predictors p . 3. Compute ˆ G (1) using κ replications. 4. Determine the direction of searc h: direction ← ( “up” if ˆ G (1) < τ , “do wn” if ˆ G (1) ≥ τ . 2.5.2 Iterativ e search While k < K : 1. Propose a new candidate sample size: n ( k +1) ← ( 2 n ( k ) if direction = “up”, ⌊ n ( k ) / 2 ⌋ if direction = “down”. If n ( k +1) = n ( k ) , the algorithm terminates. 2. Compute ˆ G ( k +1) using κ fresh replications. 3. Update b ounds: - If direction = “up”: - If ˆ G ( k +1) ≥ τ − δ : set n max ← n ( k +1) and stop. - Otherwise: set n min ← n ( k +1) and contin ue. - If direction = “down”: - If ˆ G ( k +1) ≤ τ + δ : set n min ← n ( k +1) and stop. - Otherwise: set n max ← n ( k +1) and contin ue. Set k ← k + 1 and rep eat. 11 The doubling and halving strategy lo cates a brack et around τ without requiring prior knowledge of the sample size–p erformance relationship. The pro cedure is inten- tionally conserv ativ e, using few replications p er candidate to keep the pilot cost low. The metho d assumes that the p erformance metric is monotonically non-decreasing in n ; if the metric is non-monotonic, the algorithm ma y fail to brack et the target correctly , and man ual sp ecification of [ n min , n max ] is recommended. 3 Sim ulation scenarios for pmsims pac k age v alidation This simulation study had tw o aims, following standard op erating simulation design principles [ 20 ]. Aim 1 ev aluated the statistical p erformance and computational effi- ciency of the Gaussian pro cess–based adaptive search gp engine, b enc hmarking it against tw o reference engines: its hybrid ( gp-bs ) and a bisection search ( bisection ). Aim 2 b enchmark ed pmsims , using the engine identified as b est-p erforming in Aim 1, against tw o existing approaches: pmsampsize [ 5 ] and samplesizedev [ 7 ]. 3.1 Aim 1: Comparison of pmsims search engines T o systematically compare the p erformance of the three search engines ( gp , bisection , and gp-bs ), we conducted an extensive sim ulation study cov ering a range of realistic prediction mo delling scenarios, consisten t with b est-practice guidance for sim ulation-based methodological research [ 21 , 22 ]. The scenarios were v aried along five k ey dimensions: (1) outcome type, (2) num b er of candidate predictors, (3) outcome distribution or mo del strength, (4) target p erformance metric and threshold, and (5) total simulation budget. F or each outcome t yp e—binary , contin uous, and surviv al—w e defined a set of base scenarios reflecting common characteristics encoun tered in clini- cal prediction research [ 5 , 23 ]. Eac h scenario w as indep endently replicated 100 times to ensure robust estimation of the precision and stability of the sample size estimates pro duced by each engine, in line with recommendations for Monte Carlo precision assessmen t [ 22 ]. The levels of sp ecific factors for each outcome type are summarised in T able 1 . F or binary outcomes, we considered tw o even t prev alences (5% and 20%) and tw o target metrics: the C-statistic (A UC) with a target set 0.05 b elo w the large-sample v alue and the calibration slop e with a target of 0.9. F or contin uous outcomes , we v aried the exp ected large-sample R 2 (0.2 and 0.7) and similarly targeted either R 2 or a calibration slop e of 0.9. F or surviv al outcomes, we considered tw o even t rates (40% and 80%) with targets on the C-index or the calibration slop e (0.9). Across all outcome types, we examined b oth a mo dest (10) and a larger (100) n umber of candidate predictors, with no noise predictors. The total simulation bud- get B was v aried from 200 to 2 , 000 to assess how algorithmic efficiency scales with a v ailable computational resources. The budget was allo cated in blo c ks of κ ∈ { 10 , 20 } indep enden t simulation replicates p er candidate sample size. 12 T able 1 : Simulation scenarios for the comparative ev aluation of search engines. Outcome p Mo del strength T arget metric T arget v alue Binary 10, 100 Prev alence = 0.05, C = 0 . 80 A UC 0.75 Prev alence = 0.05, C = 0 . 80 Calibration slop e 0.90 Prev alence = 0.20, C = 0 . 80 A UC 0.75 Prev alence = 0.20, C = 0 . 80 Calibration slop e 0.90 Con tinuous 10, 100 R 2 = 0 . 20 R 2 0.15 R 2 = 0 . 20 Calibration slop e 0.90 R 2 = 0 . 70 R 2 0.65 R 2 = 0 . 70 Calibration slop e 0.90 Surviv al 10, 100 Ev ent rate = 0.40, C = 0 . 80 C-index 0.75 Ev ent rate = 0.40, C = 0 . 80 Calibration slop e 0.90 Ev ent rate = 0.80, C = 0 . 80 C-index 0.75 Ev ent rate = 0.80, C = 0 . 80 Calibration slop e 0.90 3.2 Aim 2: Benc hmark comparison of pmsims with pmsampsize and samplesizedev T o b enc hmark the simulation-based approac h implemen ted in pmsims against existing metho ds, we compared the sample size estimates from the b est-p erforming engine ( gp ) with those provided b y pmsampsize (for binary and contin uous outcomes) and samplesizedev (for binary and surviv al outcomes). W e ev aluated a range of realistic prediction-mo delling scenarios, systematically v arying the mo del strength, outcome prev alence (or even t rate), num b er of predictors, and target p erformance metric. F or binary outcomes, we considered large-sample C-statistics (AUC) of 0.8 and 0.9; outcome prev alences of 5% and 20%; and predictor counts ranging from 5 to 100 in seven steps (5, 10, 20, 40, 60, 80, 100). F or contin uous outcomes, the large-sample R 2 w as set at 0.2, 0.5, and 0.7, with the same range of predictor counts. F or surviv al outcomes, w e used large-sample C-indices of 0.7, 0.8, and 0.9; ev en t rates of 40%, 50%, and 80%; and the same predictor counts. Within each scenario, tw o target metrics w ere examined: a discrimination metric set to 0.05 b elo w the large-sample v alue, and the calibration slop e set to 0.90. The gp engine was run with a fixed simulation budget of 1,000 total mo del ev aluations, using 20 replications per candidate sample size, under b oth mean-based and assurance-based criteria (80% assurance). F or each scenario, we recorded the recommended sample size for eac h metho d and the corresp onding computational time. T o assess v alidit y , w e generated an indep endent v alidation dataset of 30,000 observ ations and ev aluated the achiev ed p erformance of a model developed on a sample of the recommended size. Eac h scenario was rep eated 100 times. The complete set of ev aluated scenarios is summarised in T able 2 . Note: F or binary and surviv al outcomes, the large-sample performance is the exp ected C-statistic (AUC) or C-index, resp ectiv ely . The target for discrimination metrics is set 0.05 b elo w this v alue to reflect a practically acceptable margin for finite-s ample optimism [ 23 ]. The calibration-slop e target is 0.90, representing a commonly accepted 13 T able 2 : Simulation scenarios for comparing pmsims ( gp engine) with existing sample size to ols ( pmsampsize , samplesizedev ). All scenarios were ev aluated for b oth mean- based and assurance-based criteria (80% assurance). Outcome type Large-sample performance Prev alence / even t rate T arget metric T arget v alue Binary C-statistic = 0 . 8 , 0 . 9 0.05, 0.20 AUC | C − 0 . 05 | C-statistic = 0 . 8 , 0 . 9 0.05, 0.20 Calibration slop e 0.90 Contin uous R 2 = 0 . 2 , 0 . 5 , 0 . 7 – R 2 | R 2 − 0 . 05 | R 2 = 0 . 2 , 0 . 5 , 0 . 7 – Calibration slop e 0.90 Surviv al C-index = 0 . 7 , 0 . 8 , 0 . 9 0.40, 0.50, 0.80 C-index | C − 0 . 05 | C-index = 0 . 7 , 0 . 8 , 0 . 9 0.40, 0.50, 0.80 Calibration slop e 0.90 threshold for go o d calibration in clinical prediction models [ 1 ]. All predictors are con tinuous and truly asso ciated with the outcome. 3.3 Sim ulation estimands In each simulation scenario, the p erformance of the sample size determination pro- cedures is summarised using Mon te Carlo estimands computed across S = 100 indep enden t simulation runs [ 21 , 22 ]. W e computed the mean ˆ n ∗ and standard devia- tion σ ˆ n ∗ of the estimated minim um sample sizes across runs, as well as the co efficien t of v ariation, CV = σ ˆ n ∗ ˆ n ∗ × 100% , whic h provides a scale-free measure of relative disp ersion and is used to assess estimator stability across simulation replicates [ 21 ]. F or Aim 2, p erformance is ev aluated based on the achieve d mo del p erformanc e when fitting a mo del using the sample size recommended by each metho d. Let d P erf ( s ) denote the performance estimated on an indep enden t v alidation dataset in replicate s , and let T arget denote the corresp onding target p erformance v alue. Performance deviation is defined as Deviation ( s ) (%) = d P erf ( s ) − T arget T arget × 100 . 4 Results 4.1 Aim 1: Comparison of searc h engines Binary outcomes Figure 1 compares precision (co efficien t of v ariation; CV) and computational time for the three search pro cedures across the calibration slop e metric under v arying design parameters: num b er of simulation replicates p er ev aluation ( κ = 10 , 20), outcome prev alence (0 . 05 , 0 . 20), num b er of predictors ( p = 10 , 100), and total simulation budget ( B = 200–2000). Increasing the n umber of replicates p er ev aluation to 20 substantially reduced the CV for the gp engine. Across all remaining conditions, gp consistently 14 pro duced lo wer and more stable CV estimates than bisection and gp-bs , with the adv an tage most pronounced in the low-prev alence setting. Performance impro vemen ts w ere more consistent under mean-aggregation, which yielded smo other conv ergence tra jectories. On av erage, with κ = 20, CV plateaued at a total simulation budget of appro ximately B = 1000 under b oth aggregation schemes, indicating that B = 1000 constitutes an efficien t low er b ound. Comparable patterns were obtained for the AUC metric (Figure S1 , Additional File 1). A t B = 1000 (T able 3 ), gp consistently achiev ed the lo west CV for AUC estimation, most notably in challenging settings c haracterised by low prev alence (0 . 05) and high dimensionality ( p = 100). F or calibration slop e estimation, gp-bs o ccasionally outp erformed the other metho ds under assurance-based aggregation in lo w-prev alence scenarios, though gp generally delivered competitive p erformance under mean aggregation. 15 (a) Co efficien t of V ariation (CV) of sample size estimates relative to mean n ∗ . (b) Computational time required to estimate minimum sample size n ∗ . Fig. 1 : Aim 1 (Binary outcome): Comparison of CV and computational time across searc h engines gp , bisection and gp-bs for calibration slop e metric under v arying n umber of simulation replicates p er ev aluation ( κ = 10 , 20), prev alence (0 . 05 , 0 . 20), n umber of predictors ( p = 10 , 100) and total simulation budget ( B = 200–2000). 16 T able 3 : Estimated minim um sample size ( ˆ n ∗ ) and stabilit y (CV) for binary outcomes using assurance aggregation (20% quan tile) and mean aggregation, stratified by target metric, ev ent prev alence and n um b er of predictors ( p ), at a fixed total budget B = 1000 and κ = 20. Results are av eraged ov er 100 simulation replicates. Metric Prev alence p Engine Mean ˆ n ∗ CV Assurance Mean agg. Assurance Mean agg. bisection 444 320 17.05 11.79 gp 431 315 13.39 3.01 10 gp-bs 428 308 11.72 10.11 bisection 3671 3190 11.67 8.17 gp 3691 3168 5.43 1.52 0.05 100 gp-bs 3650 3127 10.08 9.90 bisection 152 111 12.68 10.89 gp 154 110 4.51 2.91 10 gp-bs 152 110 6.17 7.03 bisection 1311 1145 7.43 6.76 gp 1307 1138 2.61 0.82 AUC 0.2 100 gp-bs 1289 1145 6.09 6.35 bisection 3778 1641 35.72 30.30 gp 3515 1566 27.62 24.94 10 gp-bs 3577 1596 29.20 23.37 bisection 23654 18314 19.94 26.38 gp 23955 17958 6.53 14.82 0.05 100 gp-bs 22669 18879 18.28 25.52 bisection 1389 594 26.20 16.48 gp 1311 592 20.11 8.57 10 gp-bs 1325 583 19.03 12.86 bisection 8704 6696 18.50 14.39 gp 8654 6432 11.15 2.19 Calib. Slop e 0.2 100 gp-bs 8308 6595 15.98 11.44 Con tin uous outcomes Results for contin uous outcomes were consistent with the binary findings. The gp engine consistently pro duced the low est and most stable CV across all exp erimen tal conditions, with the adv antage particularly pronounced in high-dimensional settings ( p = 100) and under lo w-signal conditions ( R 2 = 0 . 2), where gp yielded CV reductions of up to 70–80% relative to bisection and gp-bs . With κ = 20, CV plateaued at appro ximately B = 1000. Figures S2 and S3 (Additional File 1) display CV and computational time for the calibration slop e and R 2 metrics resp ectively , and the full n umerical results are rep orted in T able 4 . 17 T able 4 : Estimated minimum sample size ( ˆ n ∗ ) and stability (CV) for contin uous outcomes using assurance aggregation (20% quan tile) and mean aggregation, stratified b y target metric, large-sample R 2 , and num ber of predictors ( p ), at B = 1000 and κ = 20. Results are av eraged ov er 100 simulation replicates. Metric R 2 p Engine Mean ˆ n ∗ CV Assurance Mean agg. Assurance Mean agg. R 2 bisection 243 191 11.20 10.46 gp 237 191 6.65 3.62 10 gp-bs 242 188 8.51 6.71 bisection 1934 1741 7.04 8.33 gp 1954 1721 1.85 3.46 0.2 100 gp-bs 1907 1735 7.97 7.18 bisection 99 77 8.56 11.31 gp 100 79 2.52 6.22 10 gp-bs 99 78 6.17 6.37 bisection 786 710 6.30 6.26 gp 785 707 0.86 0.61 0.7 100 gp-bs 778 711 5.72 5.08 Calib. Slop e bisection 86 190 17.56 8.03 gp 91 191 10.21 3.55 10 gp-bs 84 187 12.02 7.01 bisection 4676 1741 12.59 8.50 gp 4530 1732 6.30 4.34 0.2 100 gp-bs 4594 1745 12.54 8.46 bisection 598 77 7.51 7.71 gp 582 78 3.71 1.81 10 gp-bs 580 77 5.13 5.28 bisection 572 715 8.56 5.94 gp 568 706 5.35 0.49 0.7 100 gp-bs 583 714 4.33 5.50 Surviv al outcomes Figure 2 compares sample size precision (CV) and computational time for the cali- bration slop e metric across design configurations: κ ∈ { 10 , 20 } , even t rate (0 . 4 , 0 . 8), p ∈ { 10 , 100 } , and B = 200–2000. The gp engine consistently yielded low er and more stable CV v alues than b oth bisection and gp-bs , with the relative adv antage most mark ed in the more challenging low-ev en t-rate scenarios (0 . 4). Performance improv e- men ts w ere more systematic under mean-based aggregation. CV v alues approached a plateau at B ≈ 1000 for κ = 20 under b oth aggregation schemes. The corresp onding results for the C-index metric are rep orted in Figure S4 (Additional File 1). 18 (a) Co efficien t of V ariation (CV) of sample size estimates relative to mean n ∗ . (b) Computational time required to estimate minimum sample size n ∗ . Fig. 2 : Aim 1 (Surviv al outcome): Comparison of CV and computational time across searc h engines gp , bisection and gp-bs for calibration slop e metric under v arying ( κ = 10 , 20), even t rate (0 . 4 , 0 . 8), num b er of predictors ( p = 10 , 100) and total simu- lation budget ( B = 200–2000). Under B = 1000 (T able 5 ), gp consistently ac hieved the lo west CV for C-index estimation, often by a substantial margin, with the adv an tage most eviden t in low- ev ent-rate (0 . 4), high-dimensional ( p = 100) scenarios. The only exception arose under assurance-based aggregation with p = 10 and even t rate 0 . 4, where gp-bs pro duced a slightly smaller CV. Calibration slop e estimation was more demanding, yet gp still 19 consisten tly ac hieved the low est CV across all scenarios. The bisection pro cedure systematically underp erformed under nearly all ev aluated conditions. T able 5 : Estimated minim um sample size ˆ n ∗ and stability (CV) for surviv al outcomes using assurance aggregation (20% quan tile) and mean aggregation, stratified by target metric, even t rate and num b er of predictors ( p ), at B = 1000 and κ = 20. Results are a veraged o ver 100 simulation replicates. Metric Ev ent rate p Engine Mean ˆ n ∗ CV Assurance Mean agg. Assurance Mean agg. C-index bisection 60 47 11.74 10.80 gp 62 48 8.48 4.77 10 gp-bs 62 48 6.77 4.82 bisection 540 489 4.55 3.58 gp 543 491 2.38 0.47 0.4 100 gp-bs 541 491 3.22 2.44 bisection 38 31 12.05 10.71 gp 41 32 4.69 4.43 1 0 gp-bs 40 32 4.78 3.65 bisection 334 304 5.04 3.12 gp 334 304 0.86 0.48 0.8 100 gp-bs 334 304 2.39 1.97 Calib. Slop e bisection 486 268 25.79 20.96 gp 479 270 21.00 12.94 10 gp-bs 471 267 17.49 12.67 bisection 3524 2992 11.84 8.00 gp 3403 2990 5.92 3.78 0.4 100 gp-bs 3504 2941 8.37 6.53 bisection 328 178 22.46 17.43 gp 324 171 10.53 5.63 10 gp-bs 315 175 12.86 11.48 bisection 2277 1863 9.07 7.85 gp 2198 1846 5.36 3.88 0.8 100 gp-bs 2225 1878 6.78 5.06 Ov erall ranking T able 6 summarises the comparativ e performance of the three search algorithms across all 12 outcome–aggregation–metric configurations. gp ac hieves the low est mean rank in 11 of the 12 configurations and is ov erall the b est-p erforming search engine, achieving a p erfect av erage rank of 1.00 in nine configurations. The sole exception arises for the calibration slop e under binary outcome with assurance-based aggregation, where gp-bs attains the b est rank. The bisection pro cedure is ranked last or tied for last in every configuration, consistent with the inefficiency of deterministic bisection in sto c hastic optimisation settings. 20 T able 6 : Average rank of search engines across outcomes, aggregation metho ds, and target metrics at B = 1000. Low er a verage rank indicates b etter ov erall p erformance (CV). Outcome Aggregation metho d Metric bisection gp gp-bs Assurance AUC 3.00 1.00 2.00 Binary Calib. Slop e 2.75 1.75 1.50 Mean AUC 3.00 1.00 2.00 Calib. Slop e 2.75 1.25 2.00 Assurance R 2 2.75 1.00 2.25 Contin uous Calib. Slop e 3.00 1.00 2.00 Mean R 2 2.75 1.00 2.25 Calib. Slop e 3.00 1.00 2.00 Assurance C-index 2.50 1.25 2.25 Surviv al Calib. Slop e 2.75 1.00 2.25 Mean C-index 3.00 1.00 2.00 Calib. Slop e 2.50 1.00 2.50 4.2 Aim 2: Benc hmark comparison Binary outcome T able 7 rep orts the percentage deviation of ac hieved p erformance from target for binary outcome mo dels with p = 20. pmsims (mean) and samplesizedev exhibited the most stable p erformance, with deviations typically within ± 1% of the target. pmsampsize generally suggested the smallest sample sizes; although it o ccasionally ac hieved p erformance close to the target, it sho wed substantial negative deviations in sev eral scenarios, most pronounced at the highest target discrimination (AUC 0 . 9), where deviations reached − 7 . 24% (prev alence 5%) and − 9 . 84% (prev alence 20%). pmsims (assurance) recommended the largest sample sizes and pro duced consistently minor negativ e deviations (ranging from − 1 . 05% to − 2 . 00%). Supplementary figures sho wing minimum sample size requirements, co efficient of v ariation, computational time, and ac hieved calibration p erformance across all predictor coun ts and scenarios are provided in Figures S5 – S8 (Additional File 1). 21 T able 7 : Comparison of sample size requirements and achiev ed p erformance devi- ations for binary outcomes across tw o prev alence levels (0.05, 0.20) and tw o target large-sample AUC v alues (0.8, 0.9), with p = 20. P erformance ev aluated for calibration slop e. Metric Prev alence Large-sample AU C Engine ˆ n ∗ ˆ G ( ˆ n ∗ ) % Deviation pmsims (assurance) 5562 0.882 -2.00 0.05 0.8 pmsims (mean) 3403 0.906 0.70 pmsampsize 2788 0.888 -1.34 samplesizedev 3346 0.903 0.34 pmsims (assurance) 4080 0.890 -1.16 0.9 pmsims (mean) 2352 0.911 1.18 pmsampsize 1303 0.835 -7.24 samplesizedev 2331 0.908 0.91 Calib. slop e pmsims (assurance) 2024 0.884 -1.75 0.20 0.8 pmsims (mean) 1239 0.898 -0.25 pmsampsize 882 0.863 -4.14 samplesizedev 1245 0.902 0.21 pmsims (assurance) 1674 0.891 -1.05 0.9 pmsims (mean) 986 0.898 -0.24 pmsampsize 509 0.811 -9.84 samplesizedev 1005 0.904 0.42 Con tin uous outcome T able 8 presents the p ercentage deviation of observed p erformance from presp ecified targets for contin uous outcome mo dels with p = 20. pmsims (mean) achiev ed high precision, with deviations within ± 0 . 09% at R 2 = 0 . 5 and 0 . 7, and a mo dest p ositiv e deviation of +0 . 98% at R 2 = 0 . 2. The assurance-based v ariant produced sligh t negative deviations ( − 0 . 12% to − 0 . 42%). In contrast, pmsampsize show ed systematic p ositiv e deviations that increased strongly with target R 2 : from +0 . 93% at R 2 = 0 . 2 to +7 . 53% at R 2 = 0 . 7, reflecting the fact that in higher-signal settings the pmsampsize sample size is not driven by shrink age, resulting in calibration slop es that exceed the target. Supplemen tary figures are provided in Figures S9 – S12 (Additional File 1). 22 T able 8 : Comparison of sample size requirements and achiev ed p erformance devia- tions for con tinuous outcomes across three target R 2 lev els (0.2, 0.5, 0.7), with p = 20. P erformance ev aluated for calibration slop e. Metric Large-sample R 2 Engine ˆ n ∗ ˆ G ( ˆ n ∗ ) % Deviation pmsims (assurance) 1196 0.897 -0.39 0.2 pmsims (mean) 702 0.909 0.98 pmsampsize 716 0.908 0.93 pmsims (assurance) 320 0.899 -0.12 Calib. slop e 0.5 pmsims (mean) 189 0.899 -0.09 pmsampsize 254 0.928 3.09 pmsims (assurance) 151 0.896 -0.42 0.7 pmsims (mean) 95 0.900 0.03 pmsampsize 254 0.968 7.53 Surviv al outcome T able 9 compares the sample size requirements and achiev ed calibration slop e devia- tions for surviv al outcome mo dels with p = 20. All three metho ds achiev ed calibration slop es close to the target of 0 . 90 across the full range of ev en t rates and target C-index v alues. pmsims (mean) pro duced the smallest deviations ov erall, consistently within ± 0 . 87% across all scenarios. pmsims (assurance) sho wed mo dest negative deviations, ranging from − 0 . 41% to − 1 . 47%, reflecting its conserv ativ e sample size recommen- dations. samplesizedev p erformed comparably at ev ent rates of 0 . 4 and 0 . 5, with deviations b et ween − 0 . 94% and +3 . 19%, though p ositive deviations at C -index = 0 . 9 (up to +3 . 19% at ev ent rate 0 . 4 and +2 . 78% at even t rate 0 . 5) suggest mild ov er- estimation of the required sample size in high-discrimination settings. At even t rate 0 . 8, all three metho ds p erformed w ell, with deviations within ± 1 . 67%. Supplementary figures are provided in Figures S13 – S16 (Additional File 1). 23 T able 9 : Comparison of sample size requirements and achiev ed p erformance devia- tions for surviv al outcomes across three even t rates (0.4, 0.5, 0.8) and three target C -index v alues (0.7, 0.8, 0.9), with p = 20. Performance ev aluated for calibration slop e. Metric Ev ent rate Large-sample C -index Engine ˆ n ∗ ˆ G ( ˆ n ∗ ) % Deviation pmsims (assurance) 1569 0.888 -1.34 0.7 pmsims (mean) 963 0.896 -0.41 samplesizedev 925 0.892 -0.94 pmsims (assurance) 889 0.891 -1.03 0.4 0.8 pmsims (mean) 584 0.908 0.87 samplesizedev 544 0.895 -0.52 pmsims (assurance) 680 0.893 -0.79 0.9 pmsims (mean) 478 0.901 0.16 samplesizedev 656 0.929 3.19 pmsims (assurance) 1283 0.887 -1.47 0.7 pmsims (mean) 808 0.902 0.26 samplesizedev 802 0.899 -0.11 pmsims (assurance) 756 0.894 -0.72 Calib. slop e 0.5 0.8 pmsims (mean) 493 0.898 -0.21 samplesizedev 472 0.897 -0.33 pmsims (assurance) 590 0.896 -0.41 0.9 pmsims (mean) 408 0.903 0.33 samplesizedev 535 0.925 2.78 pmsims (assurance) 919 0.891 -1.01 0.7 pmsims (mean) 607 0.901 0.10 samplesizedev 604 0.899 -0.11 pmsims (assurance) 574 0.901 0.12 0.8 0.8 pmsims (mean) 353 0.895 -0.60 samplesizedev 374 0.901 0.11 pmsims (assurance) 436 0.896 -0.48 0.9 pmsims (mean) 292 0.901 0.15 samplesizedev 340 0.915 1.67 5 Discussion Researc hers dev eloping clinical prediction mo dels face the critical challenge of justify- ing their c hosen sample sizes, yet practical guidance on which sample size calculation metho d to use remains limited. In this study , we ev aluated three simulation-based searc h algorithms implemen ted in the pmsims pack age— gp (Gaussian pro cess-based), a deterministic bisection pro cedure, and a hybrid gp-bs approac h—for estimating the minim um developmen t sample size required for prediction mo dels with binary , contin- uous, and time-to-ev ent outcomes. W e assessed each metho d under b oth mean-based and assurance-based criteria across a wide range of scenarios v arying outcome prev a- lence or even t rate, predictor dimensionality , and target p erformance metrics. W e also b enc hmark ed the b est-p erforming metho d against established R pack ages for sample size calculation. 24 5.1 Sim ulation findings and recommendations In all scenarios, the GP-based gp searc h engine produced the most precise estimates of the minimum sample size (low est CV) when compared with a classical bisection searc h and the hybrid gp-bs approac h. The adv antage was esp ecially large in c halleng- ing scenarios characterised by lo w signal (lo w R 2 or low even t prev alence) and high dimensionalit y . This finding aligns with recent metho dological adv ances in sto c has- tic ro ot-finding, where adaptiv e sampling metho ds like the Probabilistic Bisection Algorithm maintain near-optimal con v ergence rates even in low-signal regimes [ 24 , 25 ]. Increasing the n umber of simulation replicates p er candidate sample size ( κ ) sub- stan tially stabilised the surrogate model up dates and reduced the CV of the estimated n ∗ ; κ = 20 (rather than κ = 10) yielded mark edly low er CV for gp . A total sim u- lation budget of roughly B ≈ 1000 w as an efficient trade-off b et w een precision and cost, with only mo dest p erformance impro vemen ts b ey ond this threshold. This effi- ciency gain reflects the sequential nature of the GP-based search, whic h automatically concen trates sim ulation effort in regions where greater precision is needed [ 25 ]. As exp ected, the assurance criterion pro duced more conserv ative recommended sample sizes than mean-based targets. gp pro duced reliable assurance-based recom- mendations but required higher simulation effort to ac hieve comparable precision to mean-based estimates. The hybrid gp-bs sometimes achiev ed marginally b etter precision than gp for calibration targets under assurance in limited binary settings, reflecting the b enefit of a coarse deterministic range-finding step when the p erformance curv e is particularly noisy . The deterministic bisection search consistently underp erformed, requiring many more mo del ev aluations to achiev e comparable stability . This supp orts the view that deterministic interv al halving is not well suited to stochastic sample size searc hes unless a very large ev aluation budget is a v ailable [ 25 ]. When compared with pmsampsize (analytical) and samplesizedev (simulation- based), the pmsims gp engine pro duced recommended sample sizes that achiev ed the presp ecified discrimination and calibration targets when ev aluated on large indep en- den t v alidation sets. Analytical form ulae can b e sensitiv e to modelling assumptions and ma y under- or o verestimate the required sample sizes in some high mo del strength set- tings [ 5 ], whereas simulation-based approaches can more directly reflect p erformance v ariabilit y under the assumed data-generating mechanism. Our findings suggest that the GP-based adaptiv e searc h implemen ted in gp offers a compelling middle ground: it ac hieves the precision of sim ulation-based approaches while maintaining comparable sample efficiency through sequen tial learning. 5.2 Limitations and implications for future research Our v alidation study fo cused on data-generating mechanisms with contin uous predic- tors and seven levels of mo del dimensionality ( p = 5 , . . . , 100), where all candidate predictors corresponded to true signal v ariables. This design does not fully cap- ture real-world complexities such as mixtures of discrete and contin uous cov ariates, 25 correlated noise features, missing data, or heavy-tailed distributions. Additional data- generating mec hanisms incorp orating realistic correlation structures and explicit noise predictors will be required to more fully c haracterise the generalisability of the findings. The sp ecification of the starting v alue can influence numerical stability . Analytical approac hes suc h as pmsampsize use closed-form form ulae and are insensitive to start- ing v alues. By contrast, simulation-based pro cedures can b e sensitiv e to their initial searc h region. The pmsims gp engine uses adaptiv e, data-driv en starting v alues (see subsection 2.5 ) to fo cus the search on informative regions of the sample size space; early iterations may b e unstable when the p erformance curve is flat or m ultimo dal. 6 Conclusion The pmsims pac k age uses a GP-based gp search engine to pro vide a computation- ally efficient and flexible framew ork for principled sample size planning in clinical prediction mo delling. Our ev aluation shows that adaptive surrogate-based searc h sub- stan tially outperforms deterministic bisection approac hes and ac hieves comparable p erformance relativ e to established analytical and simulation-based to ols while requir- ing few er ev aluations. With pragmatic adaptive starting limits, sufficien t replication p er ev aluation ( κ ≥ 20), and transparent rep orting of uncertaint y around the esti- mated minim um n , simulation-based planning can impro ve the reliability of prediction mo dels developed in practice. F uture work should prioritise: (1) more robust surrogate mo delling under non- monotonic or highly v ariable p erformance curv es, (2) improv ed assurance-based estimation to reduce simul ation budgets for quantile targets, and (3) broader v alida- tion across more realistic data-generating mechanisms and mo del classes, including correlated cov ariates, noise predictors, missing data, and predictive uncertaint y . Supplemen tary information. Additional File 1 contains supplementary figures for the Aim 1 and Aim 2 results, including CV and computational time plots for the AUC metric (binary), R 2 and calibration slop e metrics (contin uous), and C-index metric (surviv al) from the search engine comparisons, as w ell as minimum sample size requirements, co efficien t of v ariation, computational time, and ac hiev ed cali- bration performance figures from the benchmark comparisons with pmsampsize and samplesizedev for binary , contin uous, and surviv al outcomes. Ac knowledgemen ts. W e are grateful to the Advisory Group of the pmsims pro ject for their sustained guidance and supp ort throughout the developmen t of this w ork. W e ac knowledge the con tributions of Dr Nick Cummins (King’s College London, UK) and Dr Joie Ensor (Universit y of Birmingham, UK), whose academic insights strengthened b oth the conceptual and metho dological foundations of the pro ject. W e also extend our thanks to public represen tatives Katherine Barrett, Emma Shellard, and Aurora T o disco, whose lived exp erience and thoughtful engagement help ed ensure that the dev elopment of the pac k age remained grounded in real-w orld needs and user priorities. Their combined exp ertise significantly enriched the direction and clarity of this w ork. 26 Declarations F unding. The pmsims pro ject, including this study , is funded by the NIHR Research for Patien t Benefit programme (NIHR206858). The views expressed are those of the authors and not necessarily those of the NIHR or the Department of Health and So cial Care. ORO is supp orted by NIHR206858 at King’s College London. This study is part-funded b y the National Institute for Health and Care Researc h (NIHR) Maudsley Biomedical Researc h Cen tre (BRC). DSt, DSh, GF, and EC receiv ed financial support from the National Institute for Health Researc h (NIHR) Biomedical Researc h Centre at South London and Maudsley NHS F oundation T rust (NIHR203318) and King’s College London. Comp eting interests. The authors declare that they hav e no comp eting in terests. Ethics appro v al and consent to participate. Not applicable. This study inv olved no h uman participants, human data, or animal sub jects; all data were generated by computer simulation. Consen t for publication. Not applicable. Data a v ailabilit y . No empirical datasets were generated or analysed in this study . All sim ulation results are repro ducible using the pmsims R pack age (version 0.5.0) with the simulation scenarios and parameter settings describ ed in the Metho ds section. Materials a v ailabilit y . Not applicable. Co de av ailability . The pmsims R pack age (version 0.5.0) is freely a v ailable on GitHub at https://gith ub.com/pmsims- pack age/pmsims/ . All simulation co de required to repro duce the results presented in this paper is av ailable from the corresp onding author up on request. Author contributions. ORO, GF, and EC designed the simulation study . ORO conducted the sim ulation study , analysed the results, and drafted the manuscript. OR O, DSh, FZ, GF, and EC developed the pmsims pac k age. DSh, SM, FZ, DSt, GF, and EC contributed to the conceptualisation and study design, interpretation of results, and critical revision of the manuscript. GF and EC provided o versigh t of the pro ject. All authors read and approv ed the final manuscript. 27 App endix A Additional File 1: Supplemen tary Figures Aim 1 Supplemen tary Figures (a) Co efficien t of V ariation (CV) of sample size estimates relative to mean n ∗ . (b) Computational time required to estimate minimum sample size n ∗ . Fig. S1 : Aim 1 (Binary outcome): Comparison of CV and computational time across searc h engines gp , bisection and gp-bs for AUC metric under v arying ( κ = 10 , 20), prev alence (0 . 05 , 0 . 20), n um b er of predictors ( p = 10 , 100) and total simulation budget ( B = 200–2000). 28 (a) Co efficien t of V ariation (CV) of sample size estimates relative to mean n ∗ . (b) Computational time required to estimate minimum sample size n ∗ . Fig. S2 : Aim 1 (Contin uous outcome): Comparison of CV and computational time across search engines gp , bisection and gp-bs for calibration slop e metric under v arying ( κ = 10 , 20), R 2 = 0 . 2 , 0 . 7, num b er of predictors ( p = 10 , 100) and total sim ulation budget ( B = 200–2000). 29 (a) Co efficien t of V ariation (CV) of sample size estimates relative to mean n ∗ . (b) Computational time required to estimate minimum sample size n ∗ . Fig. S3 : Aim 1 (Contin uous outcome): Comparison of CV and computational time across searc h engines gp , bisection and gp-bs for R 2 metric under v arying ( κ = 10 , 20), R 2 = 0 . 2 , 0 . 7, n umber of predictors ( p = 10 , 100) and total simulation budget ( B = 200–2000). 30 (a) Co efficien t of V ariation (CV) of sample size estimates relative to mean n ∗ . (b) Computational time required to estimate minimum sample size n ∗ . Fig. S4 : Aim 1 (Surviv al outcome): Comparison of CV and computational time across searc h engines gp , bisection and gp-bs for C-index metric under v arying ( κ = 10 , 20), ev ent rate (0 . 4 , 0 . 8), num b er of predictors ( p = 10 , 100) and total simulation budget ( B = 200–2000). 31 Aim 2 Supplemen tary Figures Fig. S5 : Minim um sample size requirements recommended by different engines as a function of the num b er of predictors, stratified by outcome prev alence and large- sample AUC for binary outcome mo dels targeting a calibration slop e of 0 . 90. 32 Fig. S6 : Comparison of relative co efficien t of v ariation in recommended sample sizes across sample size determination engines as a function of the n umber of candidate pre- dictors, stratified by outcome prev alence and large-sample AUC in binary prediction mo dels. 33 Fig. S7 : Computational time required by each sample size determination engine as a function of the num b er of predictors, stratified by outcome prev alence and large- sample AUC in binary prediction mo dels. 34 Fig. S8 : Achiev ed calibration slop e at the recommended sample size for eac h engine as a function of the num be r of predictors, stratified by outcome prev alence and large- sample AUC in binary prediction mo dels (dashed line indicates target slop e of 0 . 90). 35 Fig. S9 : Minim um sample size requirements recommended by different engines as a function of the n umber of predictors, stratified by large-sample R 2 for contin uous outcome mo dels targeting a calibration slop e of 0 . 90. 36 Fig. S10 : Comparison of relative coefficient of v ariation in recommended sample sizes across sample size determination engines as a function of the num b er of candidate predictors, stratified by large-sample R 2 in contin uous prediction mo dels. 37 Fig. S11 : Computational time required by eac h sample size determination engine as a function of the num b er of predictors, stratified by large-sample R 2 in contin uous prediction mo dels. 38 Fig. S12 : Achiev ed calibration slop e at the recommended sample size for each engine as a function of the n umber of predictors, stratified b y large-sample R 2 (dashed line indicates target slop e of 0 . 90). 39 Fig. S13 : Minimum sample size requirements recommended by differen t engines as a function of the num ber of predictors, stratified by even t rate and large-sample C - index for surviv al outcome mo dels targeting a calibration slop e of 0 . 90. 40 Fig. S14 : Comparison of relative coefficient of v ariation in recommended sample sizes across sample size determination engines as a function of the num b er of candidate predictors, stratified by even t rate and large-sample C -index in surviv al prediction mo dels. 41 Fig. S15 : Computational time required by eac h sample size determination engine as a function of the num ber of predictors, stratified by even t rate and large-sample C - index in surviv al prediction mo dels. 42 Fig. S16 : Achiev ed calibration slop e at the recommended sample size for each engine as a function of the num b er of predictors, stratified by ev ent rate and large-sample C -index in surviv al prediction mo dels (dashed line indicates target slop e of 0 . 90). References [1] V an Calster, B., Nieb o er, D., V ergouw e, Y., De Co c k, B., Pencina, M.J., Steyer- b erg, E.W.: A calibration hierarc hy for risk mo dels was defined: from utopia to empirical data. Journal of clinical epidemiology 74 , 167–176 (2016) [2] Shamsutdino v a, D., Zimmer, F., Olaniran, O.R., Markham, S., Stahl, D., F orb es, G., Carr, E.: Sample size calculations for developing clinical prediction mo dels: Ov erview and pmsims r pack age. arXiv preprin t arXiv:2602.23507 (2026) [3] Sadatsafa vi, M., Gustafson, P ., Seta yeshgar, S., Wynan ts, L., Riley , R.D.: Ba yesian sample size calculations for external v alidation studies of risk prediction mo dels. arXiv preprint arXiv:2504.15923 (2025) [4] P a vlou, M., Omar, R.Z., Am bler, G.: Sample size calculations for the developmen t 43 of risk prediction mo dels that account for p erformance v ariability . arXiv preprint arXiv:2509.14028 (2025) [5] Riley , R.D., Ensor, J., Snell, K.I., Harrell, F.E., Martin, G.P ., Reitsma, J.B., Mo ons, K.G., Collins, G., V an Smeden, M.: Calculating the sample size required for developing a clinical prediction mo del. Bmj 368 (2020) [6] Ploeg, T., Austin, P .C., Stey erb erg, E.W.: Mo dern modelling techniques are data h ungry: a simulation study for predicting dichotomous endp oin ts. BMC medical researc h metho dology 14 (1), 137 (2014) [7] P a vlou, M., Ambler, G., Qu, C., Seaman, S.R., White, I.R., Omar, R.Z.: An ev aluation of sample size requirements for developing risk prediction mo dels with binary outcomes. BMC Medical Research Metho dology 24 (1), 146 (2024) [8] Riley , R.D., Whittle, R., Sadatsafa vi, M., Martin, G.P ., P ate, A., Collins, G.S., Ensor, J.: A general sample size framework for dev eloping or up dating a clinical prediction mo del. arXiv preprint arXiv:2504.18730 (2025) [9] Dhiman, P ., Ma, J., Qi, C., Bullock, G., Sergean t, J.C., Riley , R.D., Collins, G.S.: Sample size requiremen ts are not b eing considered in studies developing predic- tion mo dels for binary outcomes: a systematic review. BMC Medical Research Metho dology 23 (1), 188 (2023) [10] Zimmer, F., Henninger, M., Deb elak, R.: Sample size planning for complex study designs: A tutorial for the mlpwr pack age. Beha vior researc h metho ds 56 (5), 5246–5263 (2024) [11] Wilson, D.T., Ho op er, R., Brown, J., F arrin, A.J., W alwyn, R.E.: Efficient and flexible sim ulation-based sample size determination for clinical trials with m ultiple design parameters. Statistical metho ds in medical research 30 (3), 799–815 (2021) [12] P ate, A., Riley , R.D., Collins, G.S., V an Smeden, M., V an Calster, B., Ensor, J., Martin, G.P .: Minim um sample size for developing a multiv ariable predic- tion mo del using multinomial logistic regression. Statistical metho ds in medical researc h 32 (3), 555–571 (2023) [13] V an Calster, B., McLernon, D.J., V an Smeden, M., Wynants, L., Steyerberg, E.W.: Calibration: the achilles heel of predictiv e analytics. BMC medicine 17 (1), 230 (2019) [14] Ha wink el, S., W aegeman, W., Maere, S.: Out-of-sample r 2: estimation and inference. The American Statistician 78 (1), 15–25 (2024) [15] Harrell Jr, F.E., Lee, K.L., Mark, D.B.: Multiv ariable prognostic mo dels: issues in developing mo dels, ev aluating assumptions and adequacy , and measuring and reducing errors. Statistics in medicine 15 (4), 361–387 (1996) 44 [16] Uno, H., Cai, T., Pencina, M.J., D’Agostino, R.B., W ei, L.-J.: On the c-statistics for ev aluating ov erall adequacy of risk prediction procedures with censored surviv al data. Statistics in medicine 30 (10), 1105–1117 (2011) [17] Riley , R.D., Snell, K.I., Ensor, J., Burke, D.L., Harrell Jr, F.E., Mo ons, K.G., Collins, G.S.: Minimum sample size for developing a m ultiv ariable prediction mo del: P art i–contin uous outcomes. Statistics in medicine 38 (7), 1262–1275 (2019) [18] Kutner, M.H.: Applied linear statistical mo dels. (2005) [19] P a vlou, M., Qu, C., Omar, R.Z., Seaman, S.R., Steyerberg, E.W., White, I.R., Am bler, G.: Estimation of required sample size for external v alidation of risk mo dels for binary outcomes. Statistical metho ds in medical researc h 30 (10), 2187– 2206 (2021) [20] Moons, K.G., Kengne, A.P ., Grobbee, D.E., Ro yston, P ., V ergouw e, Y., Alt- man, D.G., W o o dw ard, M.: Risk prediction mo dels: Ii. external v alidation, mo del up dating, and impact assessment. Heart 98 (9), 691–698 (2012) [21] Burton, A., Altman, D.G., Royston, P ., Holder, R.L.: The design of simulation studies in medical statistics. Statistics in medicine 25 (24), 4279–4292 (2006) [22] Morris, T.P ., White, I.R., Crowther, M.J.: Using simulation studies to ev aluate statistical metho ds. Statistics in medicine 38 (11), 2074–2102 (2019) [23] Stey erb erg, E.W., Vick ers, A.J., Co ok, N.R., Gerds, T., Gonen, M., Obucho wski, N., P encina, M.J., Kattan, M.W.: Assessing the p erformance of prediction mo dels: a framework for traditional and no vel measures. Epidemiology 21 (1), 128–138 (2010) [24] Chalmers, R.P .: Solving v ariables with monte carlo simulation exp erimen ts: A sto c hastic ro ot-solving approach. Psychological Metho ds (2024) [25] Y u, Y., Banerjee, M., Ritov, Y.: The ro ot finding problem revisited: Beyond the robbins-monro pro cedure. arXiv preprint arXiv:2508.17591 (2025) 45

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment