Kinetic Langevin Splitting Schemes for Constrained Sampling

Constrained sampling is an important and challenging task in computational statistics: generating samples from a distribution subject to given constraints. Many kinds of algorithms target this task, ranging from general Mar…

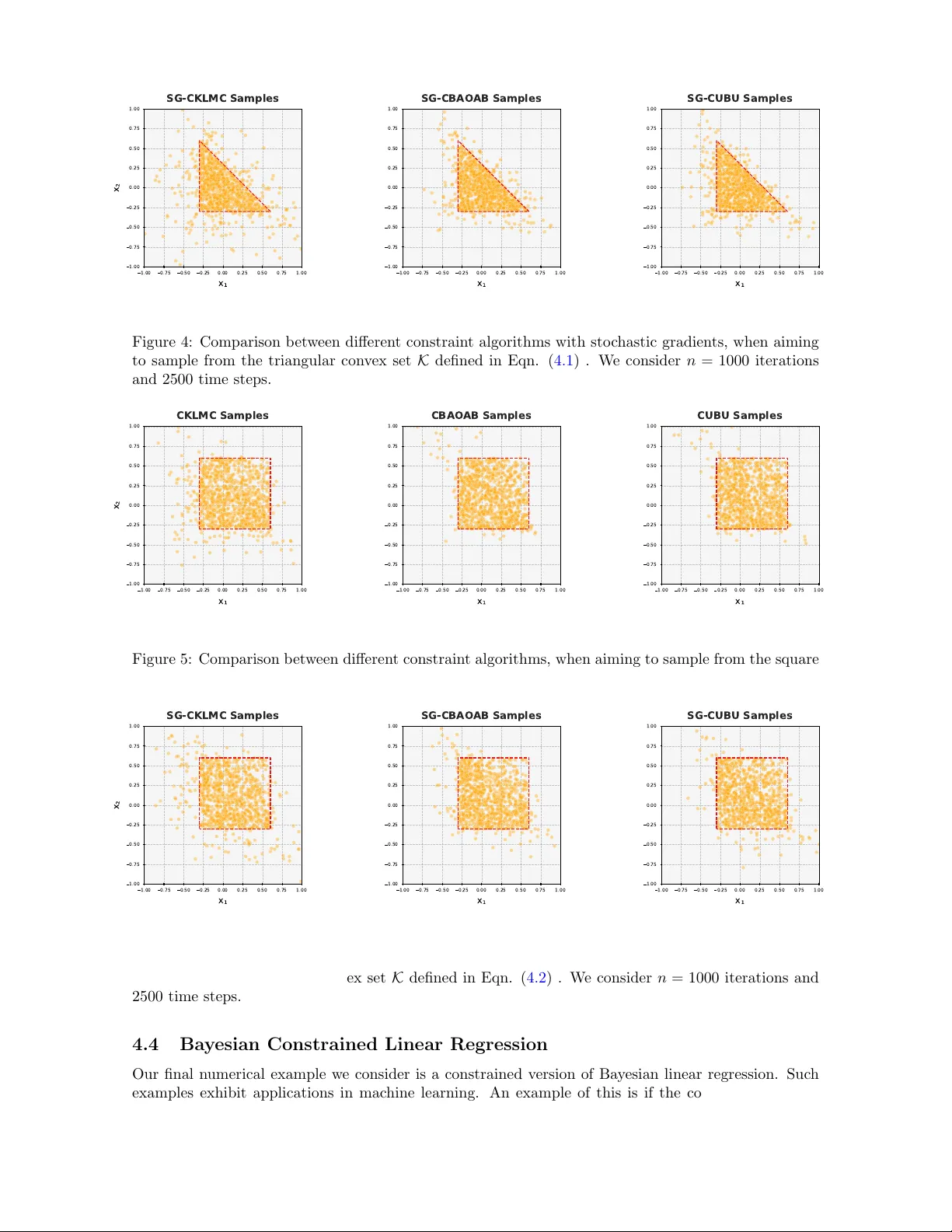

Authors: Neil K. Chada, Lu Yu